Oskar von Stryk

Athena: An Autonomous Open-Hardware Tracked Rescue Robot Platform

Feb 23, 2026Abstract:In disaster response and situation assessment, robots have great potential in reducing the risks to the safety and health of first responders. As the situations encountered and the required capabilities of the robots deployed in such missions differ wildly and are often not known in advance, heterogeneous fleets of robots are needed to cover a wide range of mission requirements. While UAVs can quickly survey the mission environment, their ability to carry heavy payloads such as sensors and manipulators is limited. UGVs can carry required payloads to assess and manipulate the mission environment, but need to be able to deal with difficult and unstructured terrain such as rubble and stairs. The ability of tracked platforms with articulated arms (flippers) to reconfigure their geometry makes them particularly effective for navigating challenging terrain. In this paper, we present Athena, an open-hardware rescue ground robot research platform with four individually reconfigurable flippers and a reliable low-cost remote emergency stop (E-Stop) solution. A novel mounting solution using an industrial PU belt and tooth inserts allows the replacement and testing of different track profiles. The manipulator with a maximum reach of 1.54m can be used to operate doors, valves, and other objects of interest. Full CAD & PCB files, as well as all low-level software, are released as open-source contributions.

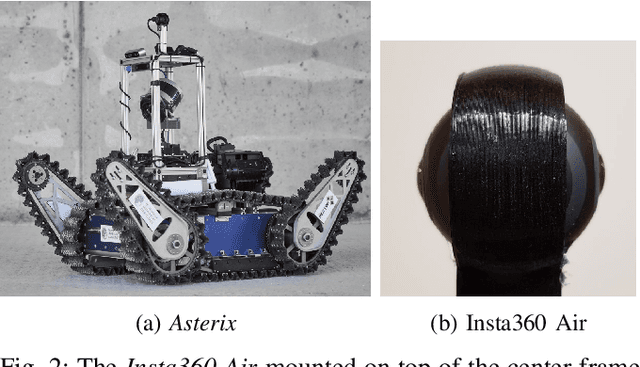

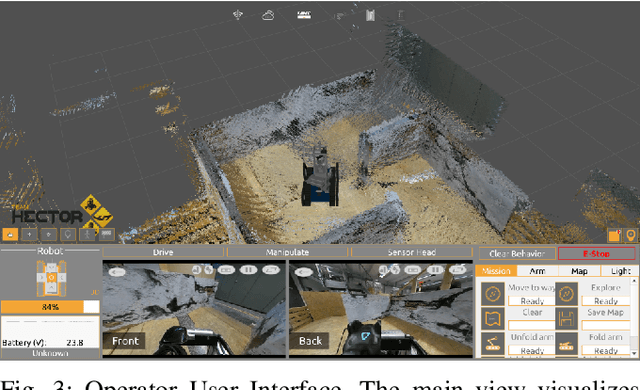

Hector UI: A Flexible Human-Robot User Interface for (Semi-)Autonomous Rescue and Inspection Robots

Apr 28, 2025

Abstract:The remote human operator's user interface (UI) is an important link to make the robot an efficient extension of the operator's perception and action. In rescue applications, several studies have investigated the design of operator interfaces based on observations during major robotics competitions or field deployments. Based on this research, guidelines for good interface design were empirically identified. The investigations on the UIs of teams participating in competitions are often based on external observations during UI application, which may miss some relevant requirements for UI flexibility. In this work, we present an open-source and flexibly configurable user interface based on established guidelines and its exemplary use for wheeled, tracked, and walking robots. We explain the design decisions and cover the insights we have gained during its highly successful applications in multiple robotics competitions and evaluations. The presented UI can also be adapted for other robots with little effort and is available as open source.

Efficient Dynamic LiDAR Odometry for Mobile Robots with Structured Point Clouds

Nov 27, 2024Abstract:We propose a real-time dynamic LiDAR odometry pipeline for mobile robots in Urban Search and Rescue (USAR) scenarios. Existing approaches to dynamic object detection often rely on pretrained learned networks or computationally expensive volumetric maps. To enhance efficiency on computationally limited robots, we reuse data between the odometry and detection module. Utilizing a range image segmentation technique and a novel residual-based heuristic, our method distinguishes dynamic from static objects before integrating them into the point cloud map. The approach demonstrates robust object tracking and improved map accuracy in environments with numerous dynamic objects. Even highly non-rigid objects, such as running humans, are accurately detected at point level without prior downsampling of the point cloud and hence, without loss of information. Evaluation on simulated and real-world data validates its computational efficiency. Compared to a state-of-the-art volumetric method, our approach shows comparable detection performance at a fraction of the processing time, adding only 14 ms to the odometry module for dynamic object detection and tracking. The implementation and a new real-world dataset are available as open-source for further research.

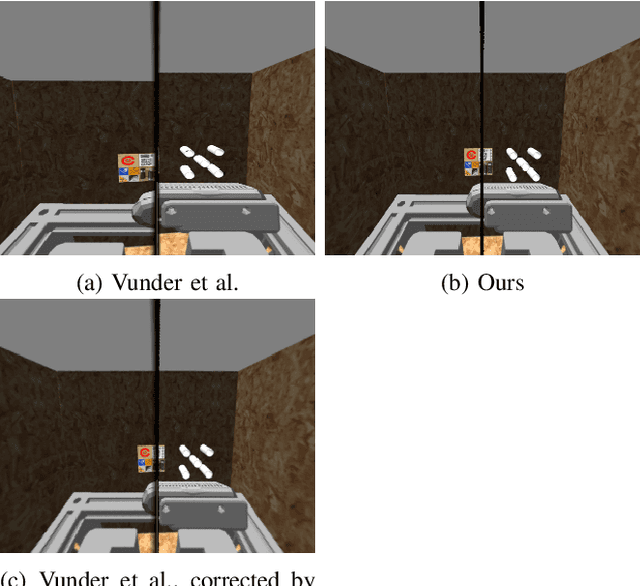

Accurate Pose Prediction on Signed Distance Fields for Mobile Ground Robots in Rough Terrain

May 03, 2024

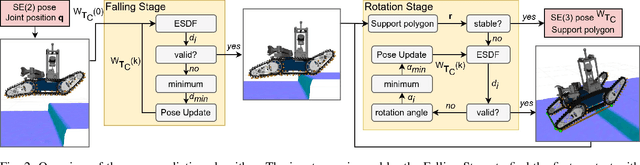

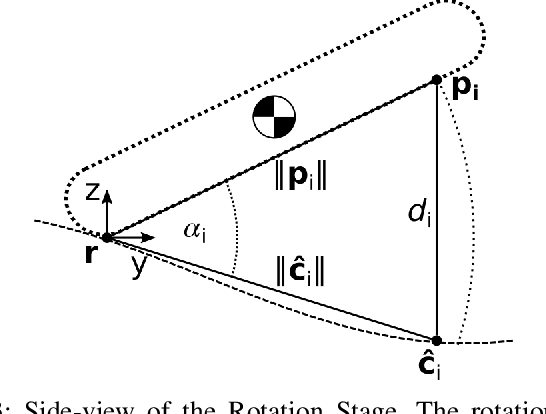

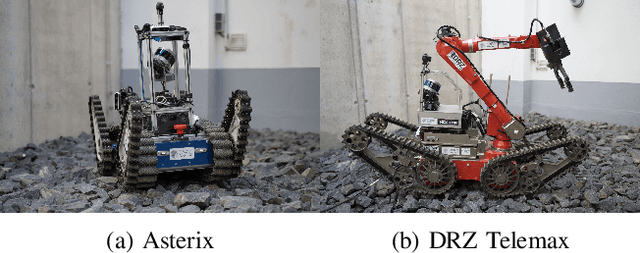

Abstract:Autonomous locomotion for mobile ground robots in unstructured environments such as waypoint navigation or flipper control requires a sufficiently accurate prediction of the robot-terrain interaction. Heuristics like occupancy grids or traversability maps are widely used but limit actions available to robots with active flippers as joint positions are not taken into account. We present a novel iterative geometric method to predict the 3D pose of mobile ground robots with active flippers on uneven ground with high accuracy and online planning capabilities. This is achieved by utilizing the ability of signed distance fields to represent surfaces with sub-voxel accuracy. The effectiveness of the presented approach is demonstrated on two different tracked robots in simulation and on a real platform. Compared to a tracking system as ground truth, our method predicts the robot position and orientation with an average accuracy of 3.11 cm and 3.91{\deg}, outperforming a recent heightmap-based approach. The implementation is made available as an open-source ROS package.

* Published in: 2023 IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR). Video: https://youtu.be/3kHDxPnEtHM

Clustering of Motion Trajectories by a Distance Measure Based on Semantic Features

Apr 26, 2024

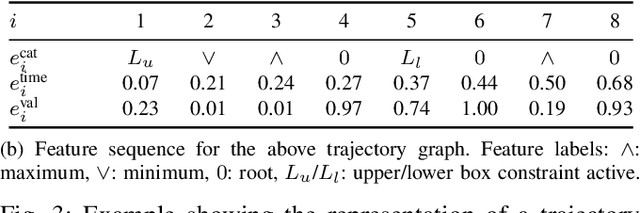

Abstract:Clustering of motion trajectories is highly relevant for human-robot interactions as it allows the anticipation of human motions, fast reaction to those, as well as the recognition of explicit gestures. Further, it allows automated analysis of recorded motion data. Many clustering algorithms for trajectories build upon distance metrics that are based on pointwise Euclidean distances. However, our work indicates that focusing on salient characteristics is often sufficient. We present a novel distance measure for motion plans consisting of state and control trajectories that is based on a compressed representation built from their main features. This approach allows a flexible choice of feature classes relevant to the respective task. The distance measure is used in agglomerative hierarchical clustering. We compare our method with the widely used dynamic time warping algorithm on test sets of motion plans for the Furuta pendulum and the Manutec robot arm and on real-world data from a human motion dataset. The proposed method demonstrates slight advantages in clustering and strong advantages in runtime, especially for long trajectories.

* Published in: 2023 IEEE-RAS 22nd International Conference on Humanoid Robots (Humanoids). Code available at: https://github.com/cztuda/semantic-feature-clustering

Emergency Response Person Localization and Vital Sign Estimation Using a Semi-Autonomous Robot Mounted SFCW Radar

May 25, 2023

Abstract:The large number and scale of natural and man-made disasters have led to an urgent demand for technologies that enhance the safety and efficiency of search and rescue teams. Semi-autonomous rescue robots are beneficial, especially when searching inaccessible terrains, or dangerous environments, such as collapsed infrastructures. For search and rescue missions in degraded visual conditions or non-line of sight scenarios, radar-based approaches may contribute to acquire valuable, and otherwise unavailable information. This article presents a complete signal processing chain for radar-based multi-person detection, 2D-MUSIC localization and breathing frequency estimation. The proposed method shows promising results on a challenging emergency response dataset that we collected using a semi-autonomous robot equipped with a commercially available through-wall radar system. The dataset is composed of 62 scenarios of various difficulty levels with up to five persons captured in different postures, angles and ranges including wooden and stone obstacles that block the radar line of sight. Ground truth data for reference locations, respiration, electrocardiogram, and acceleration signals are included. The full emergency response benchmark data set as well as all codes to reproduce our results, are publicly available at https://doi.org/10.21227/4bzd-jm32.

3D Coverage Path Planning for Efficient Construction Progress Monitoring

Feb 02, 2023

Abstract:On construction sites, progress must be monitored continuously to ensure that the current state corresponds to the planned state in order to increase efficiency, safety and detect construction defects at an early stage. Autonomous mobile robots can document the state of construction with high data quality and consistency. However, finding a path that fully covers the construction site is a challenging task as it can be large, slowly changing over time, and contain dynamic objects. Existing approaches are either exploration approaches that require a long time to explore the entire building, object scanning approaches that are not suitable for large and complex buildings, or planning approaches that only consider 2D coverage. In this paper, we present a novel approach for planning an efficient 3D path for progress monitoring on large construction sites with multiple levels. By making use of an existing 3D model we ensure that all surfaces of the building are covered by the sensor payload such as a 360-degree camera or a lidar. This enables the consistent and reliable monitoring of construction site progress with an autonomous ground robot. We demonstrate the effectiveness of the proposed planner on an artificial and a real building model, showing that much shorter paths and better coverage are achieved than with a traditional exploration planner.

* Published in: 2022 IEEE International Symposium on Safety, Security, and Rescue Robotics (SSRR)

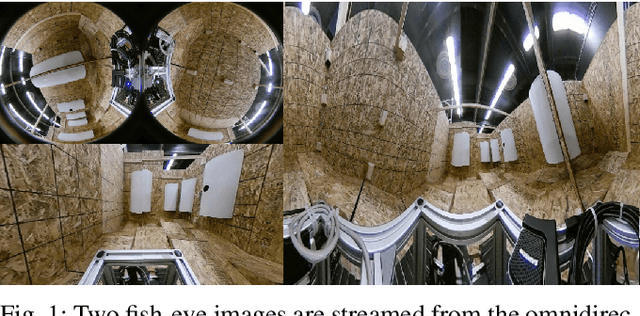

A Flexible Framework for Virtual Omnidirectional Vision to Improve Operator Situation Awareness

Feb 01, 2023

Abstract:During teleoperation of a mobile robot, providing good operator situation awareness is a major concern as a single mistake can lead to mission failure. Camera streams are widely used for teleoperation but offer limited field-of-view. In this paper, we present a flexible framework for virtual projections to increase situation awareness based on a novel method to fuse multiple cameras mounted anywhere on the robot. Moreover, we propose a complementary approach to improve scene understanding by fusing camera images and geometric 3D Lidar data to obtain a colorized point cloud. The implementation on a compact omnidirectional camera reduces system complexity considerably and solves multiple use-cases on a much smaller footprint compared to traditional approaches such as actuated pan-tilt units. Finally, we demonstrate the generality of the approach by application to the multi-camera system of the Boston Dynamics Spot. The software implementation is available as open-source ROS packages on the project page https://tu-darmstadt-ros-pkg.github.io/omnidirectional_vision.

* Accepted to European Conference on Mobile Robots (ECMR) 2021. Video link: https://youtu.be/7pocpdsMxOM Project page: https://tu-darmstadt-ros-pkg.github.io/omnidirectional_vision

Lessons from Robot-Assisted Disaster Response Deployments by the German Rescue Robotics Center Task Force

Dec 19, 2022Abstract:Earthquakes, fire, and floods often cause structural collapses of buildings. The inspection of damaged buildings poses a high risk for emergency forces or is even impossible, though. We present three recent selected missions of the Robotics Task Force of the German Rescue Robotics Center, where both ground and aerial robots were used to explore destroyed buildings. We describe and reflect the missions as well as the lessons learned that have resulted from them. In order to make robots from research laboratories fit for real operations, realistic test environments were set up for outdoor and indoor use and tested in regular exercises by researchers and emergency forces. Based on this experience, the robots and their control software were significantly improved. Furthermore, top teams of researchers and first responders were formed, each with realistic assessments of the operational and practical suitability of robotic systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge