Jan Razlaw

Team NimbRo's UGV Solution for Autonomous Wall Building and Fire Fighting at MBZIRC 2020

May 27, 2021

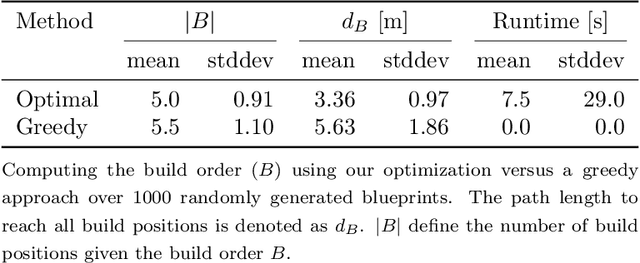

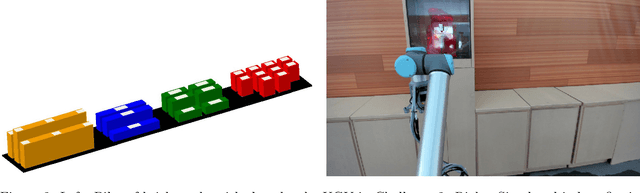

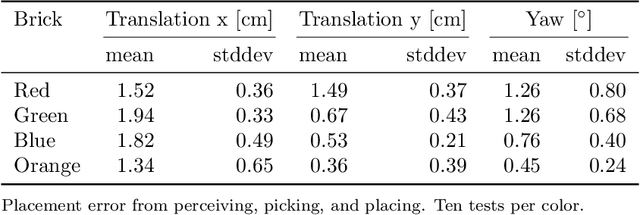

Abstract: Autonomous robotic systems for various applications including transport, mobile manipulation, and disaster response are becoming increasingly complex. Evaluating and analyzing such systems is challenging. Robotic competitions are designed to benchmark complete robotic systems on complex state-of-the-art tasks. Participants compete in defined scenarios under equal conditions. We present our UGV solution developed for the Mohamed Bin Zayed International Robotics Challenge 2020. Our hardware and software components for the challenge tasks of wall building and fire fighting are integrated into a fully autonomous system. The robot consists of a wheeled omnidirectional base, a 6 DoF manipulator arm equipped with a magnetic gripper, a highly efficient storage system to transport box-shaped objects, and a water spraying system to fight fires. The robot perceives its environment using 3D LiDAR as well as RGB and thermal camera-based perception modules. It is capable of picking box-shaped objects and constructing a pre-defined wall structure, as well as detecting and localizing heat sources in order to extinguish potential fires. A high-level planner solves the challenge tasks using the robot skills. We analyze and discuss our successful participation during the MBZIRC 2020 finals, present further experiments, and provide insights into the lessons we learned.

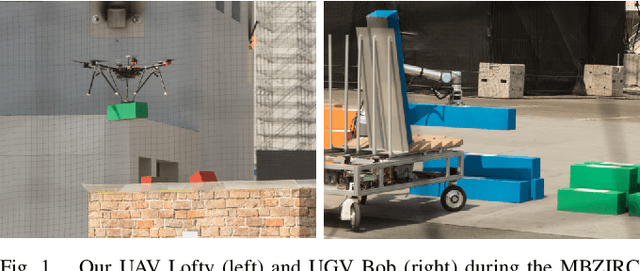

Autonomous Wall Building with a UGV-UAV Team at MBZIRC 2020

Nov 03, 2020

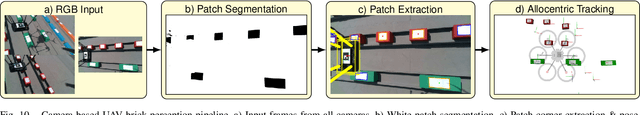

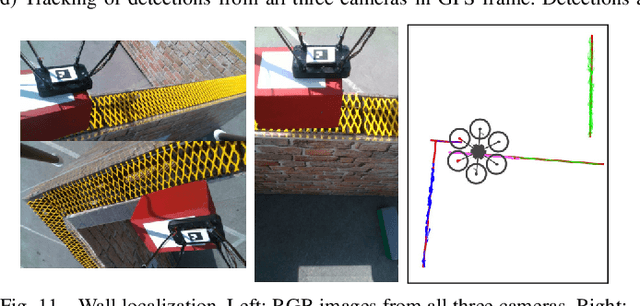

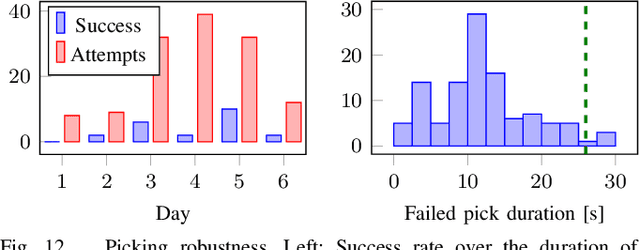

Abstract: Constructing large structures with robots is a challenging task with many potential applications that requires mobile manipulation capabilities. We present two systems for autonomous wall building that we developed for the Mohamed Bin Zayed International Robotics Challenge 2020. Both systems autonomously perceive their environment, find bricks, and build a predefined wall structure. While the UGV uses a 3D LiDAR-based perception system which measures brick poses with high precision, the UAV employs a real-time camera-based system for visual servoing. We report results and insights from our successful participation at the MBZIRC 2020 Finals and from additional lab experiments, and discuss the lessons learned from the competition.

Detection and Tracking of Small Objects in Sparse 3D Laser Range Data

Mar 14, 2019

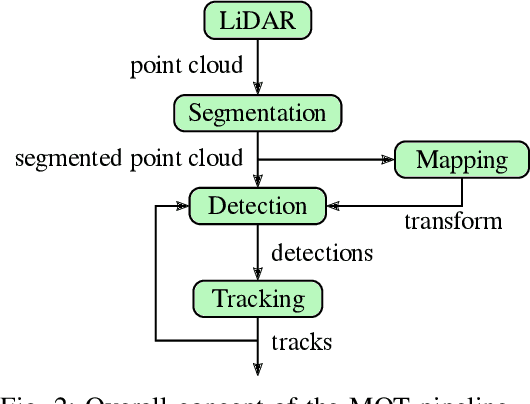

Abstract: Detection and tracking of dynamic objects is a key feature for autonomous behavior in a continuously changing environment. With the increasing popularity and capability of micro aerial vehicles (MAVs), efficient algorithms have to be utilized to enable multi-object tracking on limited hardware and data provided by lightweight sensors. We present a novel segmentation approach based on a combination of median filters and an efficient pipeline for detection and tracking of small objects within sparse point clouds generated by a Velodyne VLP-16 sensor. We achieve real-time performance on a single core of our MAV hardware by exploiting the inherent structure of the data. Our approach is evaluated on simulated and real scans of indoor and outdoor environments, obtaining results comparable to the state of the art. Additionally, we provide an application for filtering the dynamic and mapping the static part of the data, generating further insights into the performance of the pipeline on unlabeled data.

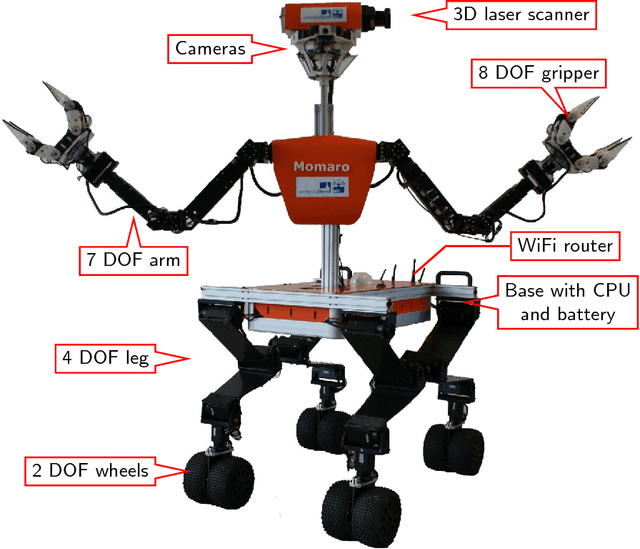

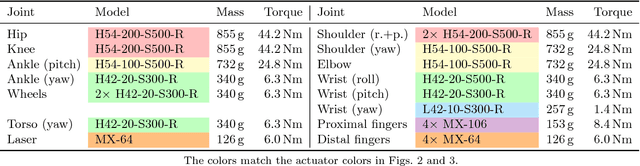

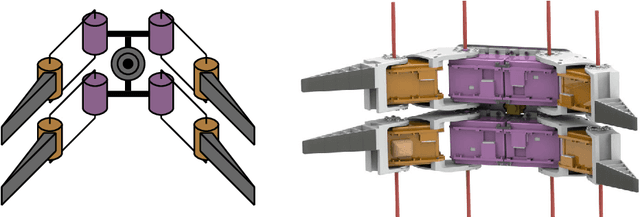

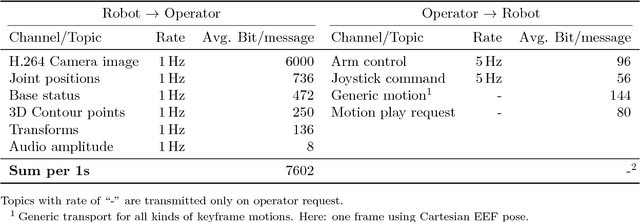

NimbRo Rescue: Solving Disaster-Response Tasks through Mobile Manipulation Robot Momaro

Oct 15, 2018

Abstract: Robots that solve complex tasks in environments too dangerous for humans to enter are desperately needed, e.g., for search and rescue applications. We describe our mobile manipulation robot Momaro, with which we participated successfully in the DARPA Robotics Challenge. It features a unique locomotion design with four legs ending in steerable wheels, which allows it both to drive omnidirectionally and to step over obstacles or climb. Furthermore, we present advanced communication and teleoperation approaches, which include immersive 3D visualization and 6D tracking of operator head and arm motions. The proposed system is evaluated in the DARPA Robotics Challenge, the DLR SpaceBot Cup Qualification, and lab experiments. We also discuss the lessons learned from the competitions.

Team NimbRo at MBZIRC 2017: Autonomous Valve Stem Turning using a Wrench

Oct 06, 2018

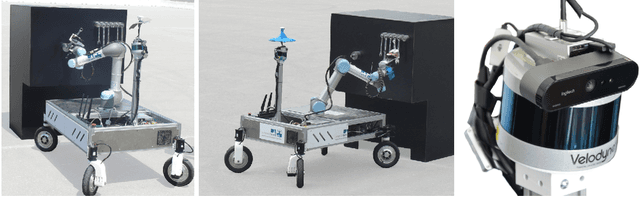

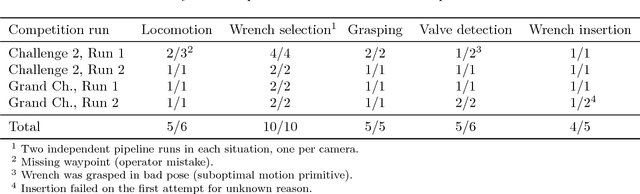

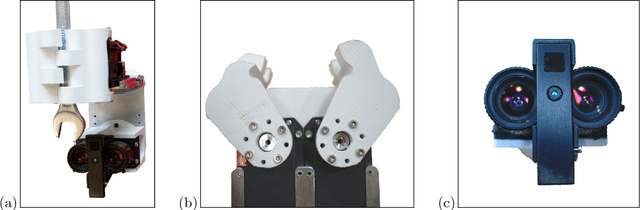

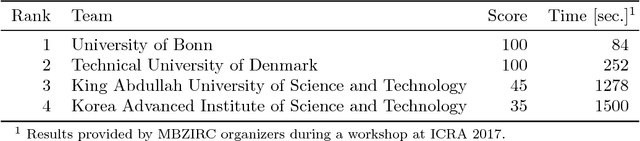

Abstract: The Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2017 has defined ambitious new benchmarks to advance the state of the art in autonomous operation of ground-based and flying robots. In this article, we describe our winning entry to MBZIRC Challenge 2: the mobile manipulation robot Mario. It is capable of autonomously solving a valve manipulation task using a wrench tool that it detects, grasps, and finally employs to turn a valve stem. Mario's omnidirectional base allows both fast locomotion and precise close approach to the manipulation panel. We describe an efficient detector for medium-sized objects in 3D laser scans and apply it to detect the manipulation panel. An object detection architecture based on deep neural networks is used to find and select the correct tool from grayscale images. Parametrized motion primitives are adapted online to percepts of the tool and valve stem in order to turn the stem. We report in detail on our winning performance at the challenge and discuss lessons learned.
