Evgenii Kruzhkov

OMCL: Open-vocabulary Monte Carlo Localization

Dec 17, 2025

Abstract: Robust robot localization is an important prerequisite for navigation planning. If the environment map was created from different sensors, robot measurements must be robustly associated with map features. In this work, we extend Monte Carlo Localization using vision-language features. These open-vocabulary features enable robust computation of the likelihood of visual observations, given a camera pose and a 3D map created from posed RGB-D images or aligned point clouds. The abstract vision-language features make it possible to associate observations and map elements from different modalities. Global localization can be initialized by natural language descriptions of the objects present in the vicinity of locations. We evaluate our approach using Matterport3D and Replica for indoor scenes and demonstrate generalization on SemanticKITTI for outdoor scenes.
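The measurement update described in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the use of mean cosine similarity as a log-likelihood, and the `temperature` parameter are all assumptions made for illustration. It only shows how a particle filter might reweight pose hypotheses by comparing observed vision-language features with the features a map would predict at each particle's pose.

```python
import numpy as np


def cosine_similarity(a, b):
    """Cosine similarity along the last axis (inputs broadcast against each other)."""
    a = a / np.linalg.norm(a, axis=-1, keepdims=True)
    b = b / np.linalg.norm(b, axis=-1, keepdims=True)
    return np.sum(a * b, axis=-1)


def update_weights(weights, observed, predicted, temperature=0.1):
    """Reweight particles by feature agreement (hypothetical likelihood model).

    weights:   (P,)      prior particle weights, all > 0
    observed:  (K, D)    features extracted from the current camera image
    predicted: (P, K, D) features the map predicts for each particle's pose
    """
    sim = cosine_similarity(observed[None, :, :], predicted)  # shape (P, K)
    # Assumption: mean similarity scaled by a temperature acts as a log-likelihood.
    log_w = np.log(weights) + sim.mean(axis=1) / temperature
    log_w -= log_w.max()  # subtract the maximum for numerical stability
    w = np.exp(log_w)
    return w / w.sum()


def systematic_resample(weights, rng):
    """Return particle indices drawn by systematic (low-variance) resampling."""
    n = len(weights)
    positions = (rng.random() + np.arange(n)) / n
    cumulative = np.cumsum(weights)
    cumulative[-1] = 1.0  # guard against floating-point round-off
    return np.searchsorted(cumulative, positions)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    observed = rng.normal(size=(4, 8))
    predicted = rng.normal(size=(3, 4, 8))
    predicted[1] = observed  # particle 1 sits at the "true" pose in this toy setup
    weights = update_weights(np.full(3, 1.0 / 3.0), observed, predicted)
    print(weights, systematic_resample(weights, rng))
```

In this toy setup the particle whose predicted features match the observation receives the largest weight, and resampling concentrates the particle set around it. A real system would obtain `predicted` by rendering or querying the 3D feature map at each particle's camera pose.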

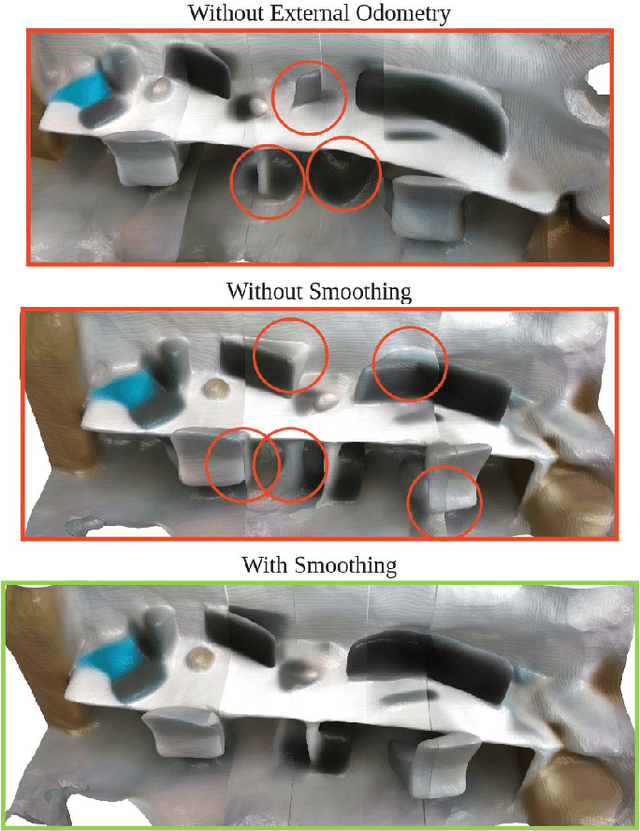

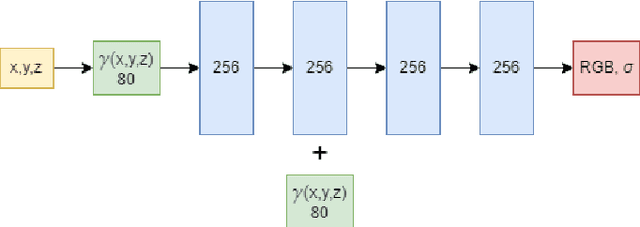

LiLMaps: Learnable Implicit Language Maps

Jan 08, 2025

Abstract: One of the current trends in robotics is to employ large language models (LLMs) to provide non-predefined command execution and natural human-robot interaction. It is useful to have an environment map together with its language representation, which can be further utilized by LLMs. Such a comprehensive scene representation enables numerous ways of interaction with the map for autonomously operating robots. In this work, we present an approach that enhances incremental implicit mapping through the integration of vision-language features. Specifically, we (i) propose a decoder optimization technique for implicit language maps which can be used when new objects appear in the scene, and (ii) address the problem of inconsistent vision-language predictions between different viewing positions. Our experiments demonstrate the effectiveness of LiLMaps and solid improvements in performance.

Hierarchical Pose Estimation and Mapping with Multi-Scale Neural Feature Fields

Dec 30, 2024

Abstract: Robotic applications require a comprehensive understanding of the scene. In recent years, neural fields-based approaches that parameterize the entire environment have become popular. These approaches are promising due to their continuous nature and their ability to learn scene priors. However, the use of neural fields in robotics becomes challenging when dealing with unknown sensor poses and sequential measurements. This paper focuses on the problem of sensor pose estimation for large-scale neural implicit SLAM. We investigate implicit mapping from a probabilistic perspective and propose hierarchical pose estimation with a corresponding neural network architecture. Our method is well-suited for large-scale implicit map representations. The proposed approach operates on consecutive outdoor LiDAR scans and achieves accurate pose estimation, while maintaining stable mapping quality for both short and long trajectories. We built our method on a structured and sparse implicit representation suitable for large-scale reconstruction and evaluated it using the KITTI and MaiCity datasets. Our approach outperforms the baseline in terms of mapping with unknown poses and achieves state-of-the-art localization accuracy.

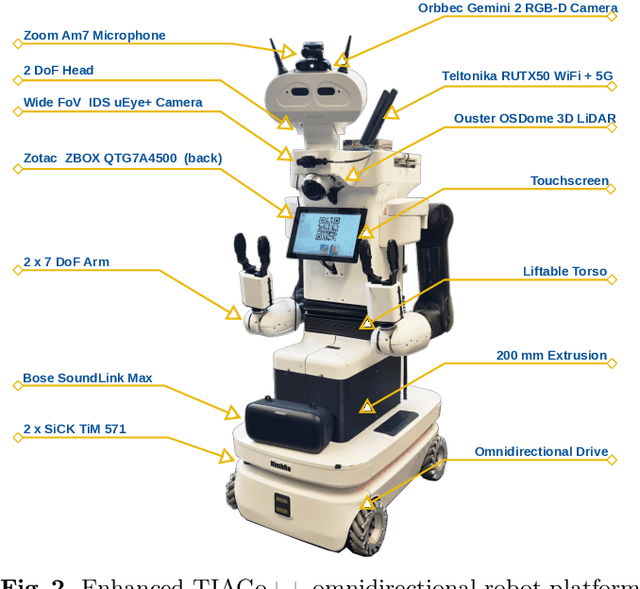

RoboCup@Home 2024 OPL Winner NimbRo: Anthropomorphic Service Robots using Foundation Models for Perception and Planning

Dec 19, 2024

Abstract: We present the approaches and contributions of the winning team NimbRo@Home at the RoboCup@Home 2024 competition in the Open Platform League held in Eindhoven, NL. Further, we describe our hardware setup and give an overview of the results for the task stages and the final demonstration. For this year's competition, we put a special emphasis on open-vocabulary object segmentation and grasping approaches that overcome the labeling overhead of supervised vision approaches, commonly used in RoboCup@Home. We successfully demonstrated that we can segment and grasp non-labeled objects by text descriptions. Further, we extensively employed LLMs for natural language understanding and task planning. Throughout the competition, our approaches showed robustness and generalization capabilities. A video of our performance can be found online.

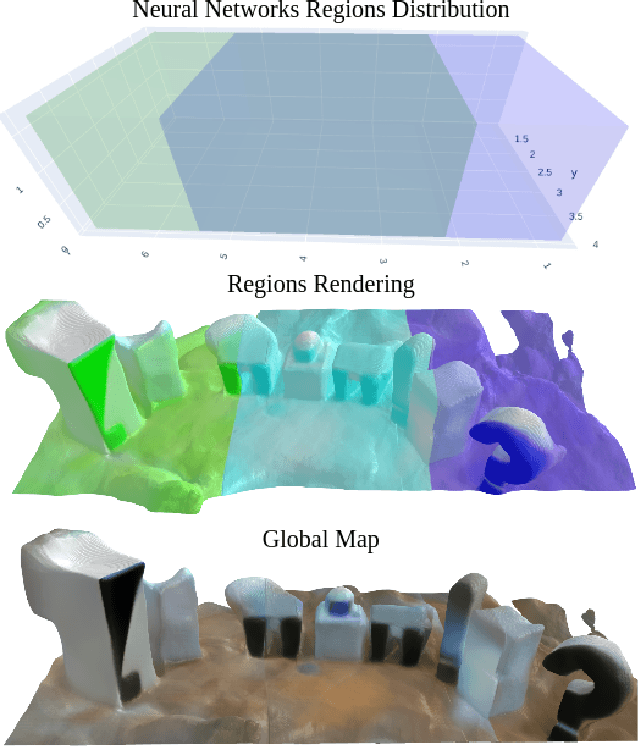

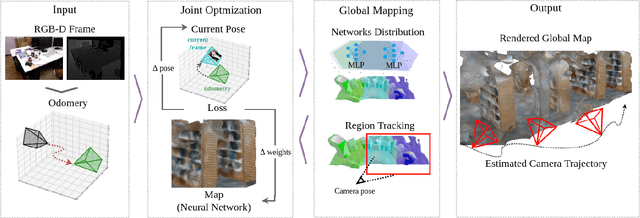

MeSLAM: Memory Efficient SLAM based on Neural Fields

Sep 19, 2022

Abstract: Existing Simultaneous Localization and Mapping (SLAM) approaches are limited in their scalability due to growing map size in long-term robot operation. Moreover, processing such maps for localization and planning tasks increases the computational resources required onboard. To address the problem of memory consumption in long-term operation, we develop a novel real-time SLAM algorithm, MeSLAM, that is based on a neural field implicit map representation. It combines the proposed global mapping strategy, including neural network distribution and region tracking, with an external odometry system. As a result, the algorithm is able to efficiently train multiple networks representing different map regions and track poses accurately in large-scale environments. Experimental results show that the accuracy of the proposed approach is comparable to the state-of-the-art methods (on average, 6.6 cm on TUM RGB-D sequences) and outperforms the baseline, iMAP$^*$. Moreover, the proposed SLAM approach provides the most compact maps without distorting details (1.9 MB to store 57 m$^3$) among the state-of-the-art SLAM approaches.
