Delong Zhu

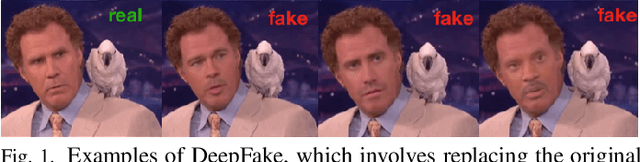

Celeb-DF++: A Large-scale Challenging Video DeepFake Benchmark for Generalizable Forensics

Jul 24, 2025Abstract:The rapid advancement of AI technologies has significantly increased the diversity of DeepFake videos circulating online, posing a pressing challenge for \textit{generalizable forensics}, \ie, detecting a wide range of unseen DeepFake types using a single model. Addressing this challenge requires datasets that are not only large-scale but also rich in forgery diversity. However, most existing datasets, despite their scale, include only a limited variety of forgery types, making them insufficient for developing generalizable detection methods. Therefore, we build upon our earlier Celeb-DF dataset and introduce {Celeb-DF++}, a new large-scale and challenging video DeepFake benchmark dedicated to the generalizable forensics challenge. Celeb-DF++ covers three commonly encountered forgery scenarios: Face-swap (FS), Face-reenactment (FR), and Talking-face (TF). Each scenario contains a substantial number of high-quality forged videos, generated using a total of 22 various recent DeepFake methods. These methods differ in terms of architectures, generation pipelines, and targeted facial regions, covering the most prevalent DeepFake cases witnessed in the wild. We also introduce evaluation protocols for measuring the generalizability of 24 recent detection methods, highlighting the limitations of existing detection methods and the difficulty of our new dataset.

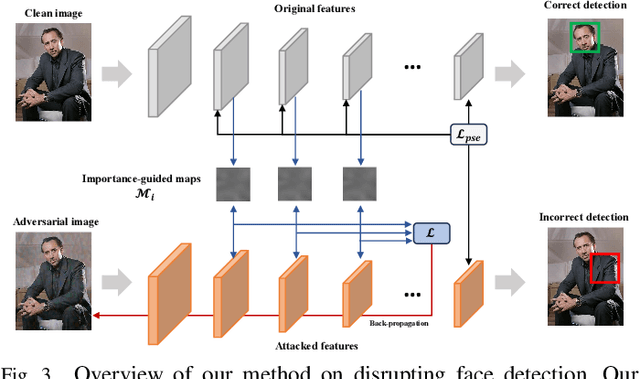

Hiding Faces in Plain Sight: Defending DeepFakes by Disrupting Face Detection

Dec 02, 2024

Abstract:This paper investigates the feasibility of a proactive DeepFake defense framework, {\em FacePosion}, to prevent individuals from becoming victims of DeepFake videos by sabotaging face detection. The motivation stems from the reliance of most DeepFake methods on face detectors to automatically extract victim faces from videos for training or synthesis (testing). Once the face detectors malfunction, the extracted faces will be distorted or incorrect, subsequently disrupting the training or synthesis of the DeepFake model. To achieve this, we adapt various adversarial attacks with a dedicated design for this purpose and thoroughly analyze their feasibility. Based on FacePoison, we introduce {\em VideoFacePoison}, a strategy that propagates FacePoison across video frames rather than applying them individually to each frame. This strategy can largely reduce the computational overhead while retaining the favorable attack performance. Our method is validated on five face detectors, and extensive experiments against eleven different DeepFake models demonstrate the effectiveness of disrupting face detectors to hinder DeepFake generation.

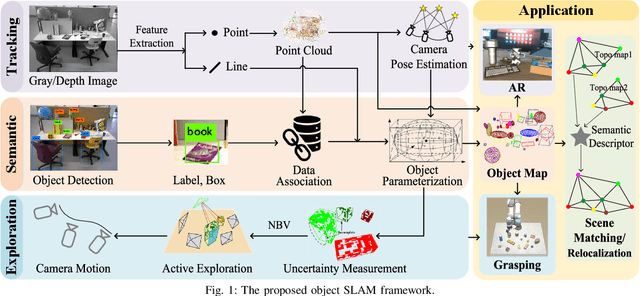

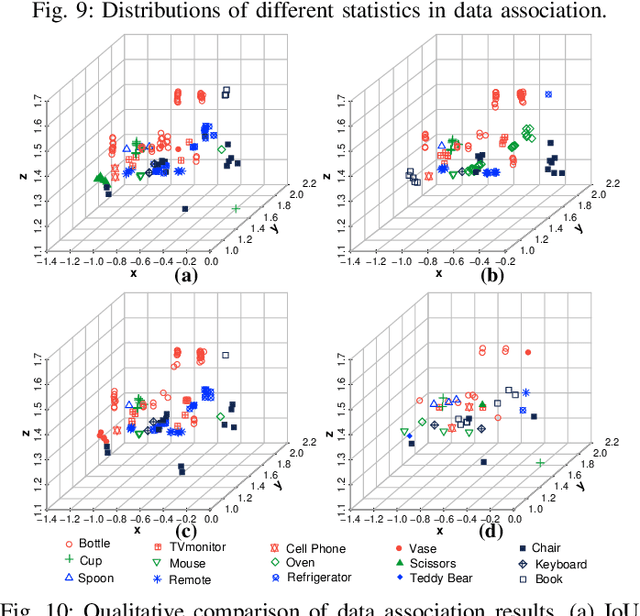

An Object SLAM Framework for Association, Mapping, and High-Level Tasks

May 12, 2023

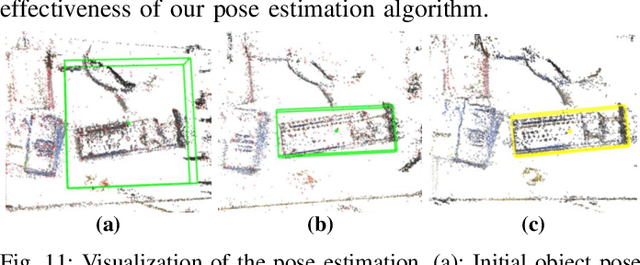

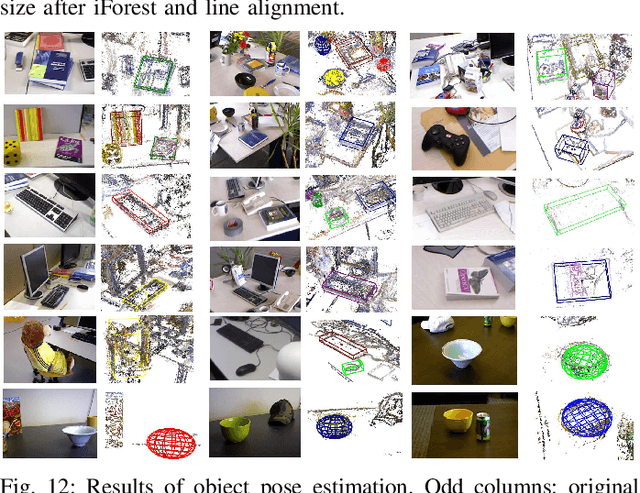

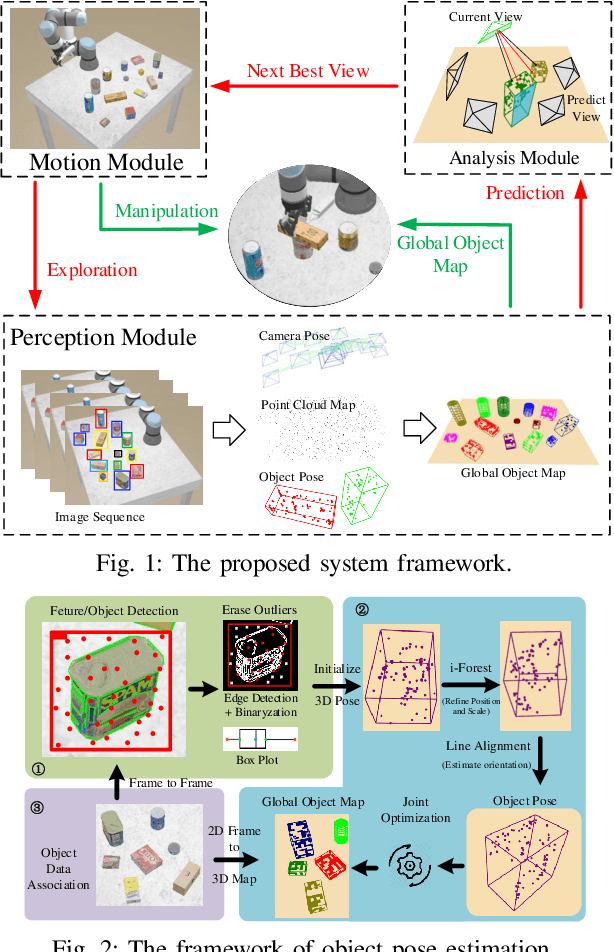

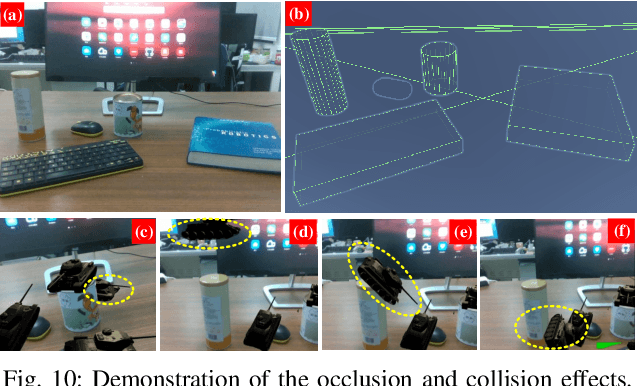

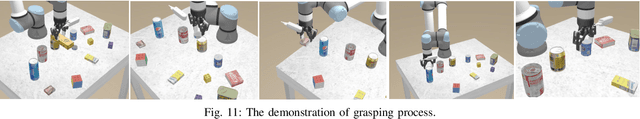

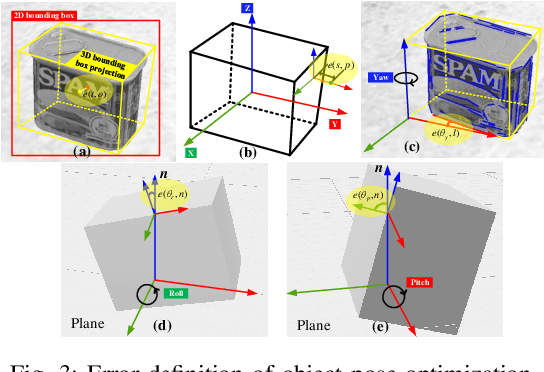

Abstract:Object SLAM is considered increasingly significant for robot high-level perception and decision-making. Existing studies fall short in terms of data association, object representation, and semantic mapping and frequently rely on additional assumptions, limiting their performance. In this paper, we present a comprehensive object SLAM framework that focuses on object-based perception and object-oriented robot tasks. First, we propose an ensemble data association approach for associating objects in complicated conditions by incorporating parametric and nonparametric statistic testing. In addition, we suggest an outlier-robust centroid and scale estimation algorithm for modeling objects based on the iForest and line alignment. Then a lightweight and object-oriented map is represented by estimated general object models. Taking into consideration the semantic invariance of objects, we convert the object map to a topological map to provide semantic descriptors to enable multi-map matching. Finally, we suggest an object-driven active exploration strategy to achieve autonomous mapping in the grasping scenario. A range of public datasets and real-world results in mapping, augmented reality, scene matching, relocalization, and robotic manipulation have been used to evaluate the proposed object SLAM framework for its efficient performance.

A Survey on Deep-Learning Approaches for Vehicle Trajectory Prediction in Autonomous Driving

Oct 29, 2021

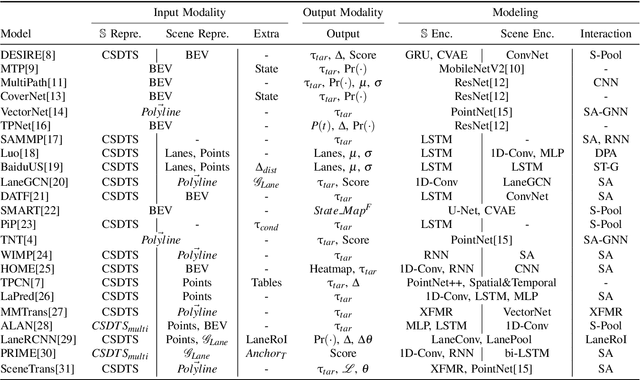

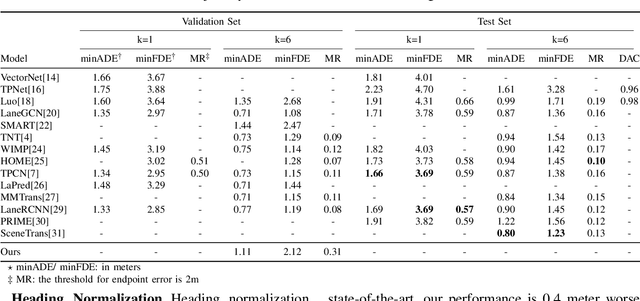

Abstract:With the rapid development of machine learning, autonomous driving has become a hot issue, making urgent demands for more intelligent perception and planning systems. Self-driving cars can avoid traffic crashes with precisely predicted future trajectories of surrounding vehicles. In this work, we review and categorize existing learning-based trajectory forecasting methods from perspectives of representation, modeling, and learning. Moreover, we make our implementation of Target-driveN Trajectory Prediction publicly available at https://github.com/Henry1iu/TNT-Trajectory-Predition, demonstrating its outstanding performance whereas its original codes are withheld. Enlightenment is expected for researchers seeking to improve trajectory prediction performance based on the achievement we have made.

VDB-EDT: An Efficient Euclidean Distance Transform Algorithm Based on VDB Data Structure

May 10, 2021

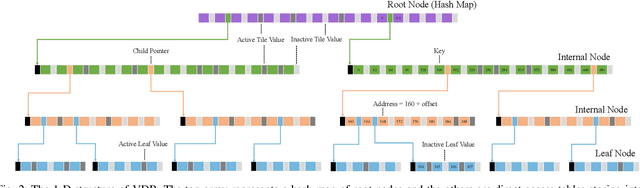

Abstract:This paper presents a fundamental algorithm, called VDB-EDT, for Euclidean distance transform (EDT) based on the VDB data structure. The algorithm executes on grid maps and generates the corresponding distance field for recording distance information against obstacles, which forms the basis of numerous motion planning algorithms. The contributions of this work mainly lie in three folds. Firstly, we propose a novel algorithm that can facilitate distance transform procedures by optimizing the scheduling priorities of transform functions, which significantly improves the running speed of conventional EDT algorithms. Secondly, we for the first time introduce the memory-efficient VDB data structure, a customed B+ tree, to represent the distance field hierarchically. Benefiting from the special index and caching mechanism, VDB shows a fast (average \textit{O}(1)) random access speed, and thus is very suitable for the frequent neighbor-searching operations in EDT. Moreover, regarding the small scale of existing datasets, we release a large-scale dataset captured from subterranean environments to benchmark EDT algorithms. Extensive experiments on the released dataset and publicly available datasets show that VDB-EDT can reduce memory consumption by about 30%-85%, depending on the sparsity of the environment, while maintaining a competitive running speed with the fastest array-based implementation. The experiments also show that VDB-EDT can significantly outperform the state-of-the-art EDT algorithm in both runtime and memory efficiency, which strongly demonstrates the advantages of our proposed method. The released dataset and source code are available on https://github.com/zhudelong/VDB-EDT.

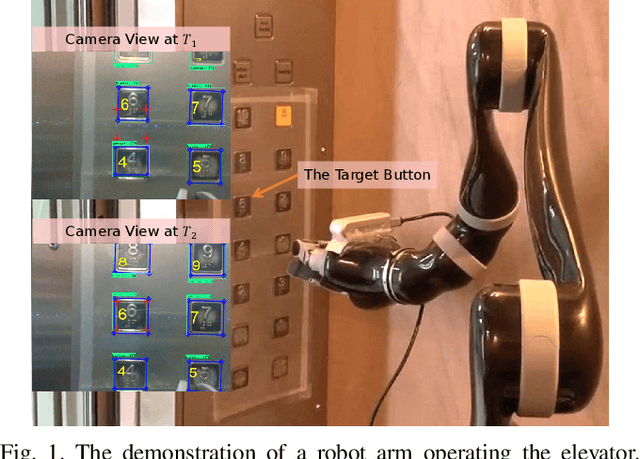

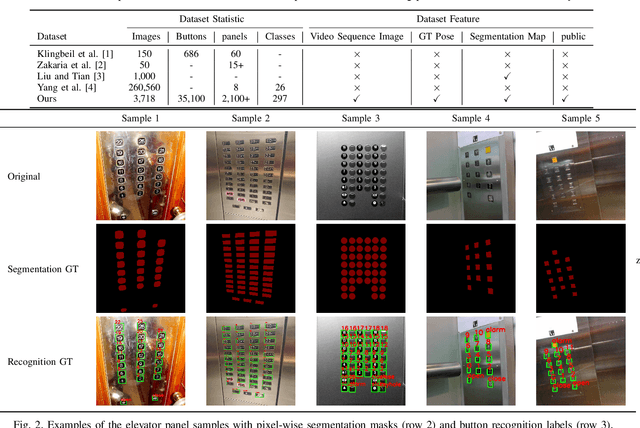

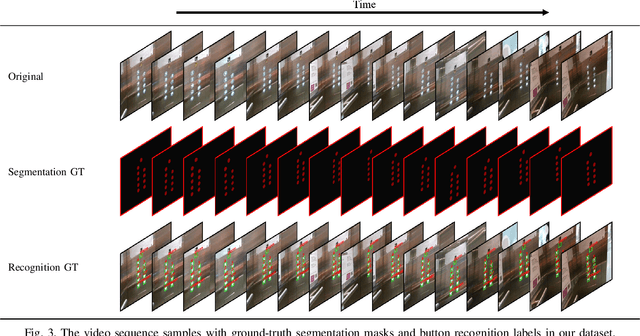

A Large-Scale Dataset for Benchmarking Elevator Button Segmentation and Character Recognition

Mar 22, 2021

Abstract:Human activities are hugely restricted by COVID-19, recently. Robots that can conduct inter-floor navigation attract much public attention, since they can substitute human workers to conduct the service work. However, current robots either depend on human assistance or elevator retrofitting, and fully autonomous inter-floor navigation is still not available. As the very first step of inter-floor navigation, elevator button segmentation and recognition hold an important position. Therefore, we release the first large-scale publicly available elevator panel dataset in this work, containing 3,718 panel images with 35,100 button labels, to facilitate more powerful algorithms on autonomous elevator operation. Together with the dataset, a number of deep learning based implementations for button segmentation and recognition are also released to benchmark future methods in the community. The dataset will be available at \url{https://github.com/zhudelong/elevator_button_recognition

Accurate and Robust Scale Recovery for Monocular Visual Odometry Based on Plane Geometry

Jan 15, 2021

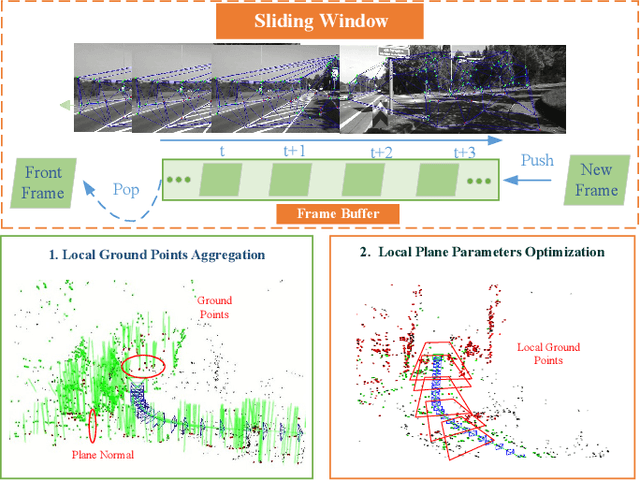

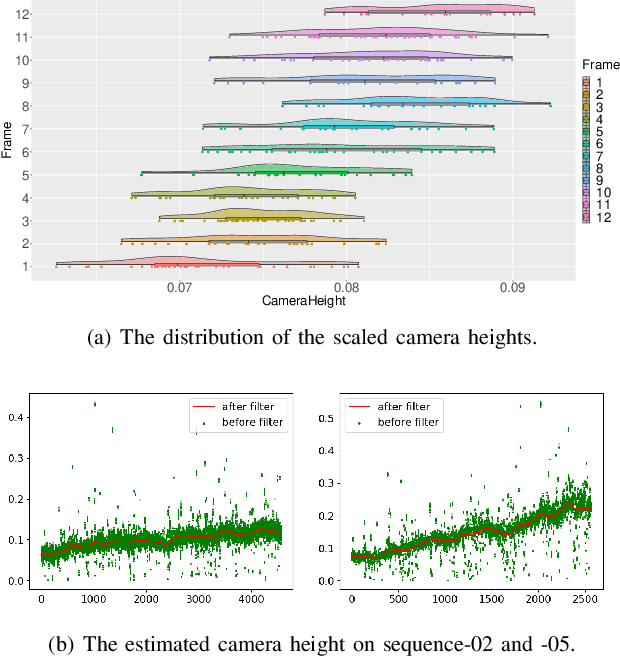

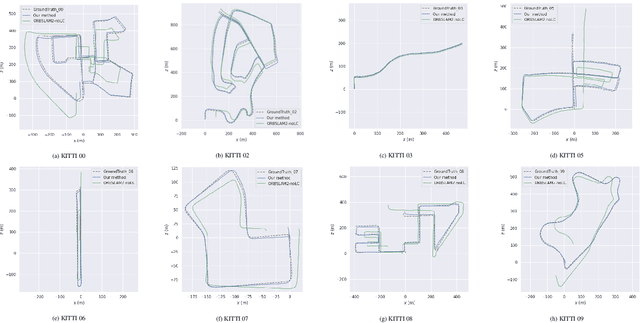

Abstract:Scale ambiguity is a fundamental problem in monocular visual odometry. Typical solutions include loop closure detection and environment information mining. For applications like self-driving cars, loop closure is not always available, hence mining prior knowledge from the environment becomes a more promising approach. In this paper, with the assumption of a constant height of the camera above the ground, we develop a light-weight scale recovery framework leveraging an accurate and robust estimation of the ground plane. The framework includes a ground point extraction algorithm for selecting high-quality points on the ground plane, and a ground point aggregation algorithm for joining the extracted ground points in a local sliding window. Based on the aggregated data, the scale is finally recovered by solving a least-squares problem using a RANSAC-based optimizer. Sufficient data and robust optimizer enable a highly accurate scale recovery. Experiments on the KITTI dataset show that the proposed framework can achieve state-of-the-art accuracy in terms of translation errors, while maintaining competitive performance on the rotation error. Due to the light-weight design, our framework also demonstrates a high frequency of 20Hz on the dataset.

Object-Driven Active Mapping for More Accurate Object Pose Estimation and Robotic Grasping

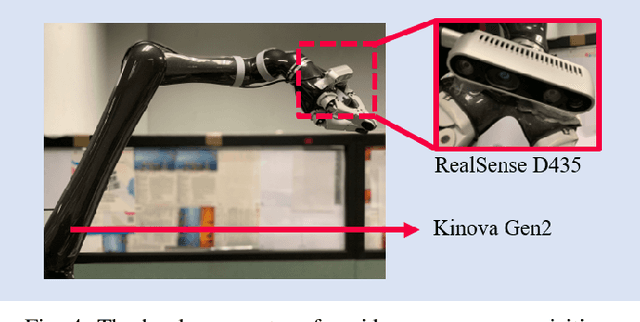

Dec 03, 2020

Abstract:This paper presents the first active object mapping framework for complex robotic grasping tasks. The framework is built on an object SLAM system integrated with a simultaneous multi-object pose estimation process. Aiming to reduce the observation uncertainty on target objects and increase their pose estimation accuracy, we also design an object-driven exploration strategy to guide the object mapping process. By combining the mapping module and the exploration strategy, an accurate object map that is compatible with robotic grasping can be generated. Quantitative evaluations also show that the proposed framework has a very high mapping accuracy. Manipulation experiments, including object grasping, object placement, and the augmented reality, significantly demonstrate the effectiveness and advantages of our proposed framework.

Search-Based Online Trajectory Planning for Car-like Robots in Highly Dynamic Environments

Nov 07, 2020

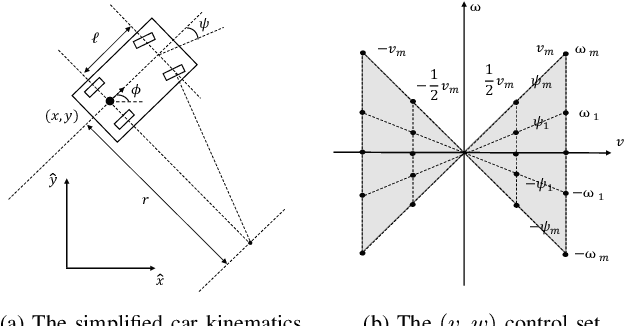

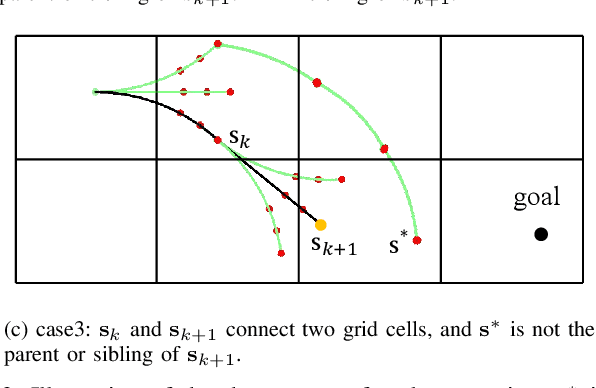

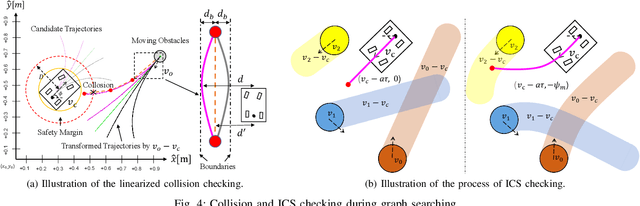

Abstract:This paper presents a search-based partial motion planner to generate dynamically feasible trajectories for car-like robots in highly dynamic environments. The planner searches for smooth, safe, and near-time-optimal trajectories by exploring a state graph built on motion primitives, which are generated by discretizing the time dimension and the control space. To enable fast online planning, we first propose an efficient path searching algorithm based on the aggregation and pruning of motion primitives. We then propose a fast collision checking algorithm that takes into account the motions of moving obstacles. The algorithm linearizes relative motions between the robot and obstacles and then checks collisions by comparing a point-line distance. Benefiting from the fast searching and collision checking algorithms, the planner can effectively and safely explore the state-time space to generate near-time-optimal solutions. The results through extensive experiments show that the proposed method can generate feasible trajectories within milliseconds while maintaining a higher success rate than up-to-date methods, which significantly demonstrates its advantages.

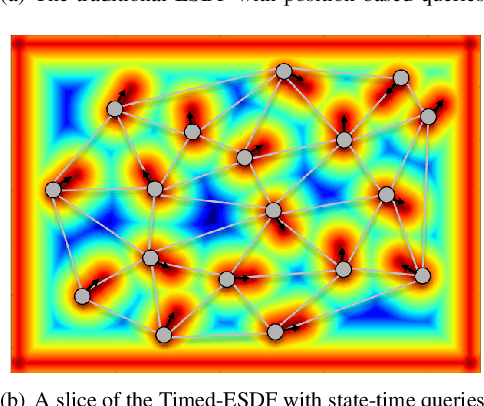

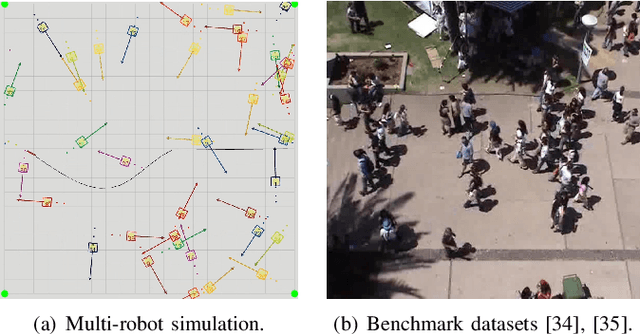

Online State-Time Trajectory Planning Using Timed-ESDF in Highly Dynamic Environments

Oct 29, 2020

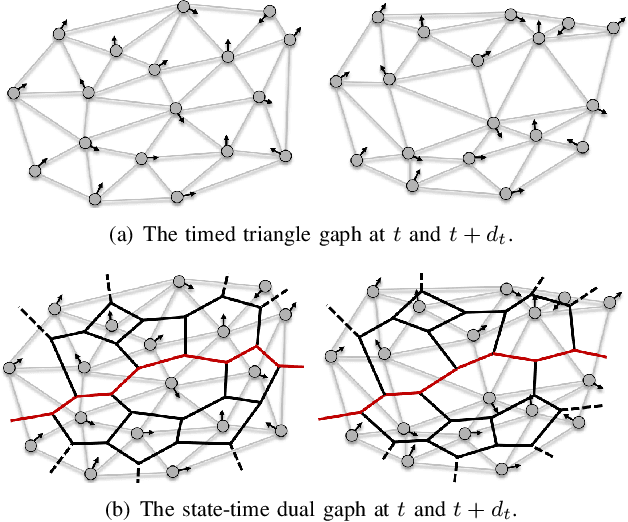

Abstract:Online state-time trajectory planning in highly dynamic environments remains an unsolved problem due to the unpredictable motions of moving obstacles and the curse of dimensionality from the state-time space. Existing state-time planners are typically implemented based on randomized sampling approaches or path searching on discretized state graph. The smoothness, path clearance, and planning efficiency of these planners are usually not satisfying. In this work, we propose a gradient-based planner over the state-time space for online trajectory generation in highly dynamic environments. To enable the gradient-based optimization, we propose a Timed-ESDT that supports distance and gradient queries with state-time keys. Based on the Timed-ESDT, we also define a smooth prior and an obstacle likelihood function that is compatible with the state-time space. The trajectory planning is then formulated to a MAP problem and solved by an efficient numerical optimizer. Moreover, to improve the optimality of the planner, we also define a state-time graph and then conduct path searching on it to find a better initialization for the optimizer. By integrating the graph searching, the planning quality is significantly improved. Experiment results on simulated and benchmark datasets show that our planner can outperform the state-of-the-art methods, demonstrating its significant advantages over the traditional ones.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge