Alex Zhou

A Multi-Robot Platform for Robotic Triage Combining Onboard Sensing and Foundation Models

Dec 09, 2025

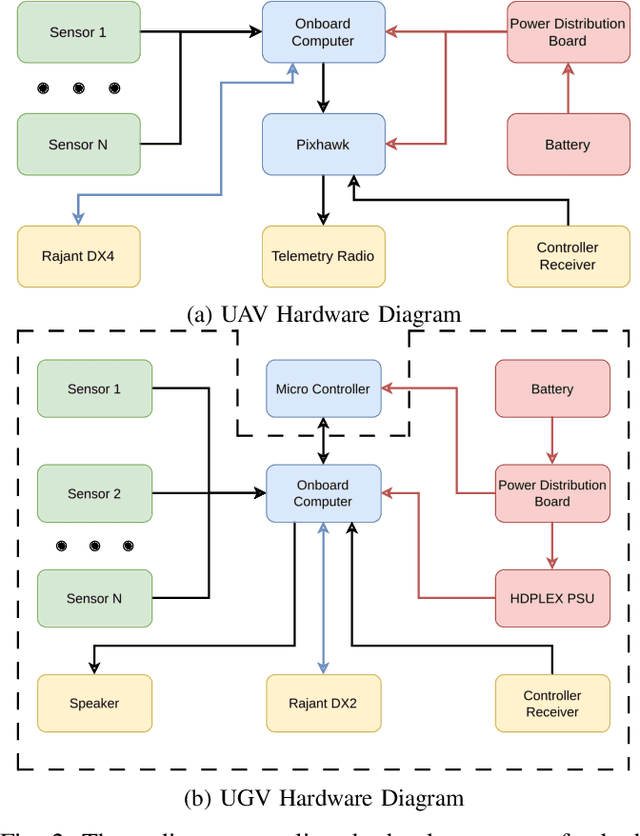

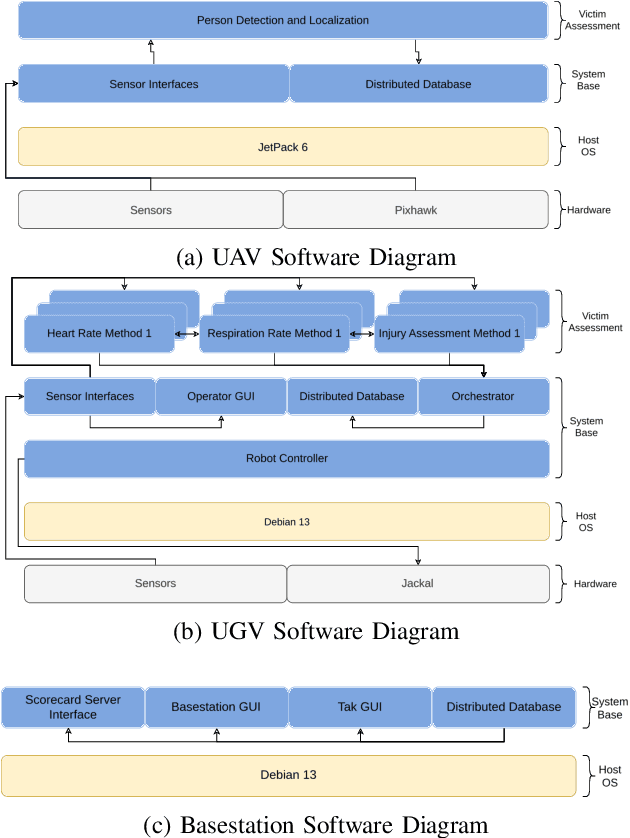

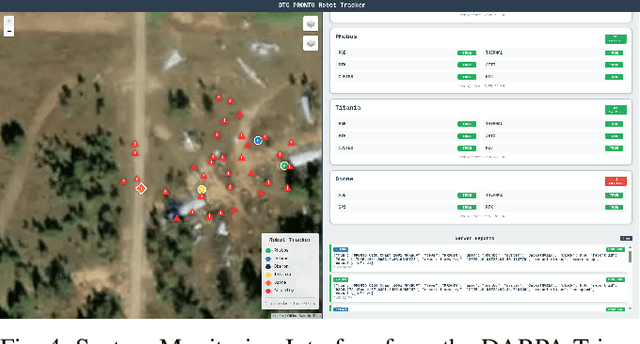

Abstract:This report presents a heterogeneous robotic system designed for remote primary triage in mass-casualty incidents (MCIs). The system employs a coordinated air-ground team of unmanned aerial vehicles (UAVs) and unmanned ground vehicles (UGVs) to locate victims, assess their injuries, and prioritize medical assistance without risking the lives of first responders. The UAV identify and provide overhead views of casualties, while UGVs equipped with specialized sensors measure vital signs and detect and localize physical injuries. Unlike previous work that focused on exploration or limited medical evaluation, this system addresses the complete triage process: victim localization, vital sign measurement, injury severity classification, mental status assessment, and data consolidation for first responders. Developed as part of the DARPA Triage Challenge, this approach demonstrates how multi-robot systems can augment human capabilities in disaster response scenarios to maximize lives saved.

SEER: Safe Efficient Exploration for Aerial Robots using Learning to Predict Information Gain

Sep 22, 2022

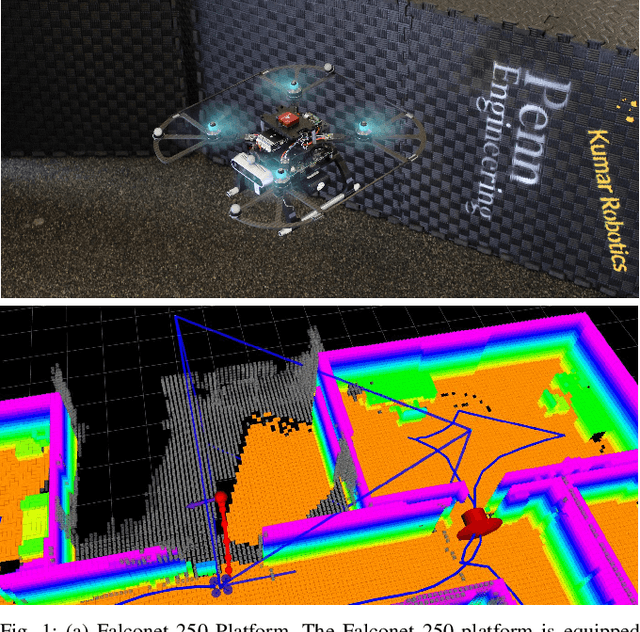

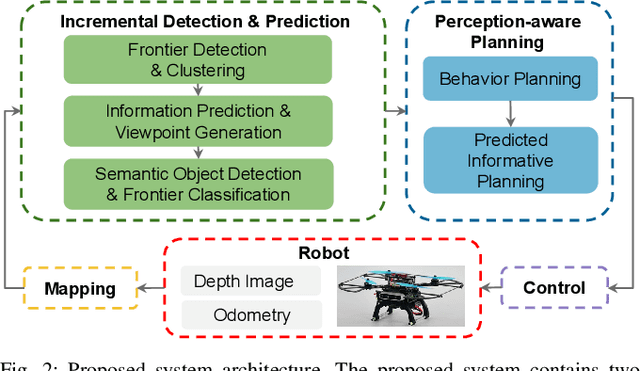

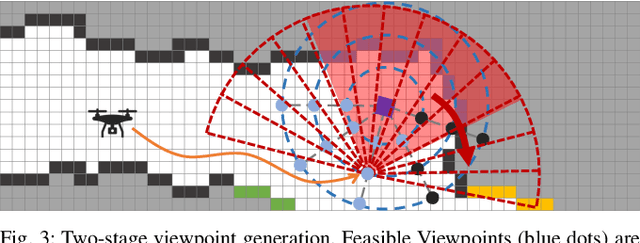

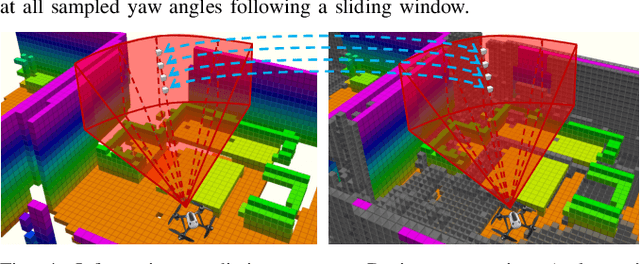

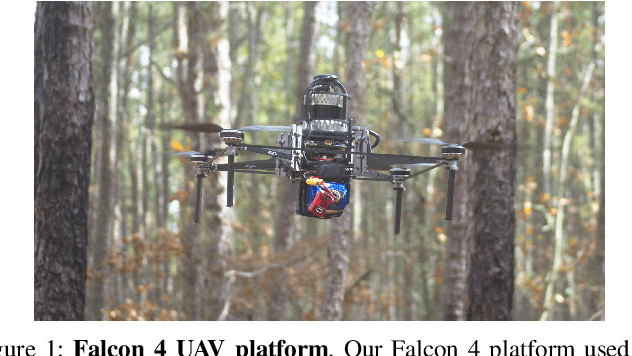

Abstract:We address the problem of efficient 3-D exploration in indoor environments for micro aerial vehicles with limited sensing capabilities and payload/power constraints. We develop an indoor exploration framework that uses learning to predict the occupancy of unseen areas, extracts semantic features, samples viewpoints to predict information gains for different exploration goals, and plans informative trajectories to enable safe and smart exploration. Extensive experimentation in simulated and real-world environments shows the proposed approach outperforms the state-of-the-art exploration framework by 24% in terms of the total path length in a structured indoor environment and with a higher success rate during exploration.

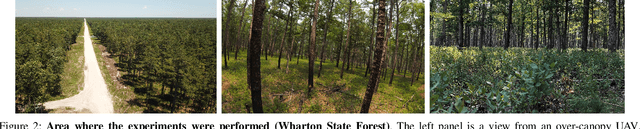

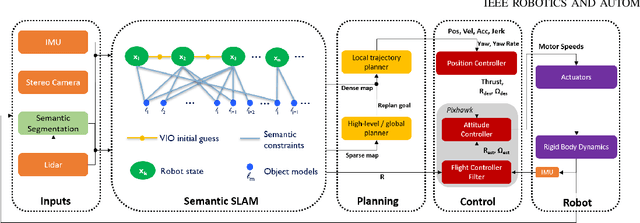

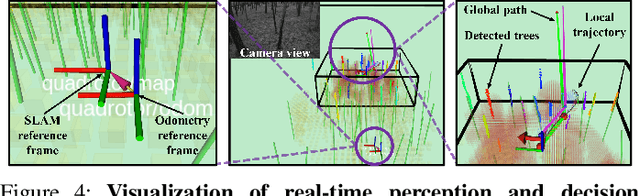

Large-scale Autonomous Flight with Real-time Semantic SLAM under Dense Forest Canopy

Sep 19, 2021

Abstract:In this letter, we propose an integrated autonomous flight and semantic SLAM system that can perform long-range missions and real-time semantic mapping in highly cluttered, unstructured, and GPS-denied under-canopy environments. First, tree trunks and ground planes are detected from LIDAR scans. We use a neural network and an instance extraction algorithm to enable semantic segmentation in real time onboard the UAV. Second, detected tree trunk instances are modeled as cylinders and associated across the whole LIDAR sequence. This semantic data association constraints both robot poses as well as trunk landmark models. The output of semantic SLAM is used in state estimation, planning, and control algorithms in real time. The global planner relies on a sparse map to plan the shortest path to the global goal, and the local trajectory planner uses a small but finely discretized robot-centric map to plan a dynamically feasible and collision-free trajectory to the local goal. Both the global path and local trajectory lead to drift-corrected goals, thus helping the UAV execute its mission accurately and safely.

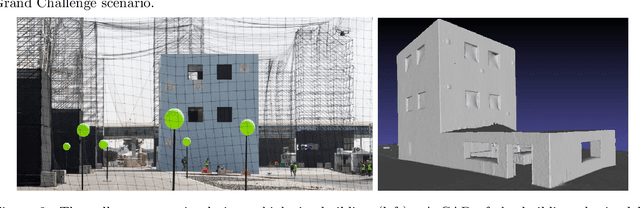

Design and Deployment of an Autonomous Unmanned Ground Vehicle for Urban Firefighting Scenarios

Jul 08, 2021

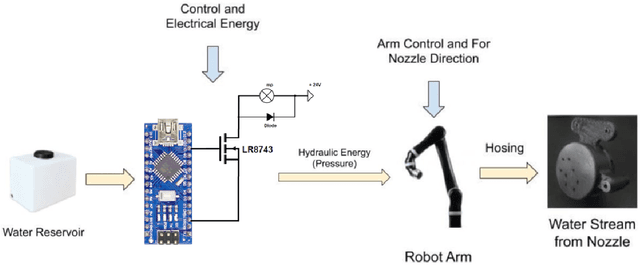

Abstract:Autonomous mobile robots have the potential to solve missions that are either too complex or dangerous to be accomplished by humans. In this paper, we address the design and autonomous deployment of a ground vehicle equipped with a robotic arm for urban firefighting scenarios. We describe the hardware design and algorithm approaches for autonomous navigation, planning, fire source identification and abatement in unstructured urban scenarios. The approach employs on-board sensors for autonomous navigation and thermal camera information for source identification. A custom electro{mechanical pump is responsible to eject water for fire abatement. The proposed approach is validated through several experiments, where we show the ability to identify and abate a sample heat source in a building. The whole system was developed and deployed during the Mohamed Bin Zayed International Robotics Challenge (MBZIRC) 2020, for Challenge No. 3 Fire Fighting Inside a High-Rise Building and during the Grand Challenge where our approach scored the highest number of points among all UGV solutions and was instrumental to win the first place.

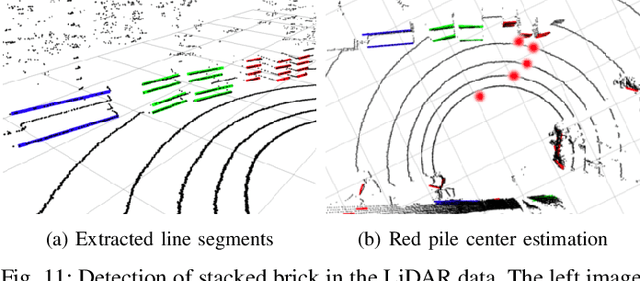

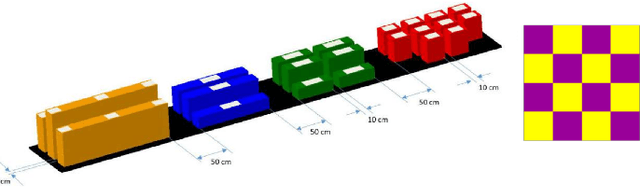

Mobile Manipulator for Autonomous Localization, Grasping and Precise Placement of Construction Material in a Semi-structured Environment

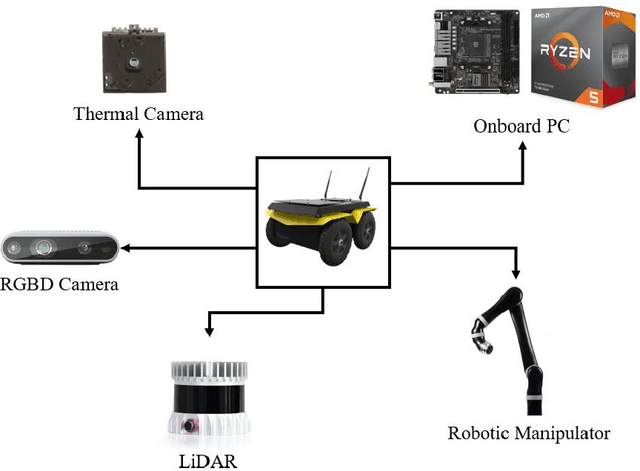

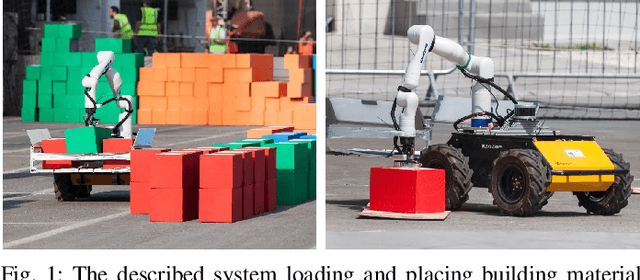

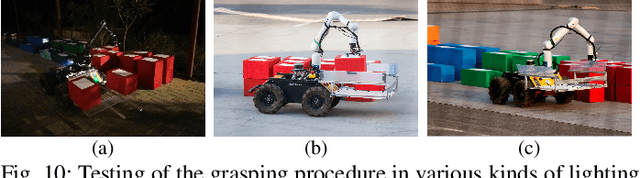

Nov 16, 2020

Abstract:Mobile manipulators have the potential to revolutionize modern agriculture, logistics and manufacturing. In this work, we present the design of a ground-based mobile manipulator for automated structure assembly. The proposed system is capable of autonomous localization, grasping, transportation and deployment of construction material in a semi-structured environment. Special effort was put into making the system invariant to lighting changes, and not reliant on external positioning systems. Therefore, the presented system is self-contained and capable of operating in outdoor and indoor conditions alike. Finally, we present means to extend the perceptive radius of the vehicle by using it in cooperation with an autonomous drone, which provides aerial reconnaissance. Performance of the proposed system has been evaluated in a series of experiments conducted in real-world conditions.

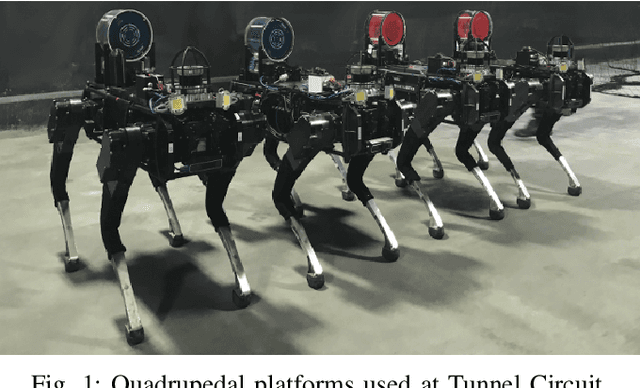

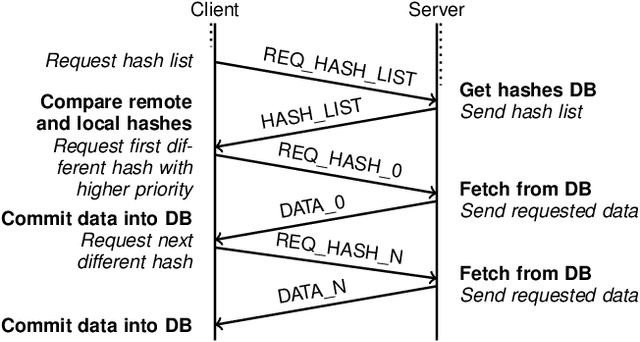

Mine Tunnel Exploration using Multiple Quadrupedal Robots

Sep 20, 2019

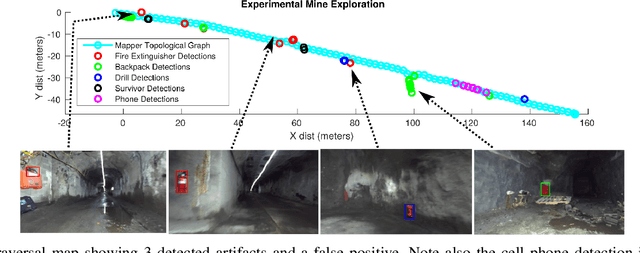

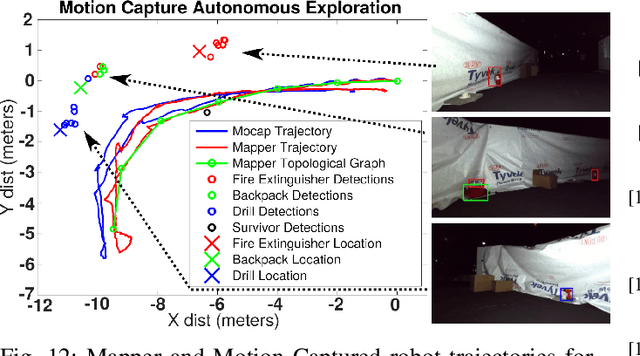

Abstract:Robotic exploration of underground environments is a particularly challenging problem due to communication, endurance, and traversability constraints which necessitate high degrees of autonomy and agility. These challenges are further enhanced by the need to minimize human intervention for practical applications. While legged robots have the ability to traverse extremely challenging terrain, they also engender further inherent challenges for planning, estimation, and control. In this work, we describe a fully autonomous system for multi-robot mine exploration and mapping using legged quadrupeds, as well as a distributed database mesh networking system for reporting data. In addition, we show results from the DARPA Subterranean Challenge (SubT) Tunnel Circuit demonstrating localization of artifacts after traversals of hundreds of meters. To our knowledge, these experiments represent the first fully autonomous exploration of an unknown GNSS-denied environment undertaken by legged robots.

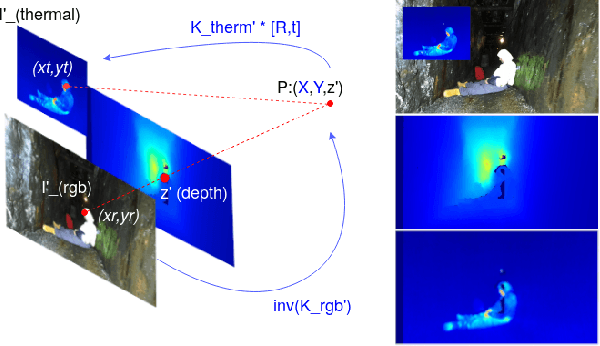

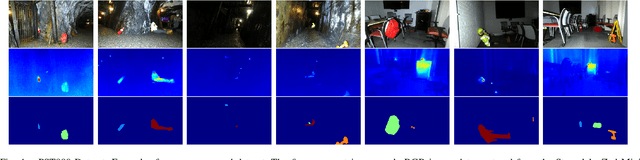

PST900: RGB-Thermal Calibration, Dataset and Segmentation Network

Sep 20, 2019

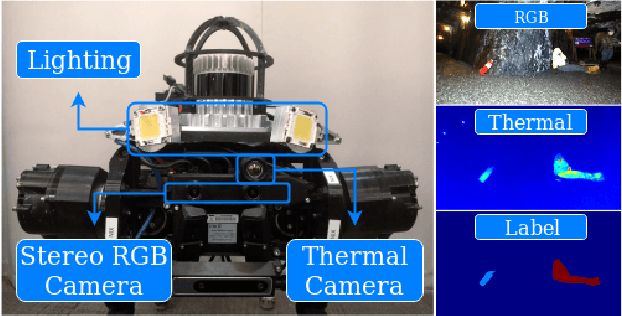

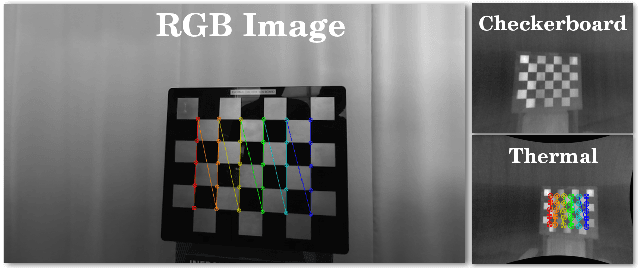

Abstract:In this work we propose long wave infrared (LWIR) imagery as a viable supporting modality for semantic segmentation using learning-based techniques. We first address the problem of RGB-thermal camera calibration by proposing a passive calibration target and procedure that is both portable and easy to use. Second, we present PST900, a dataset of 894 synchronized and calibrated RGB and Thermal image pairs with per pixel human annotations across four distinct classes from the DARPA Subterranean Challenge. Lastly, we propose a CNN architecture for fast semantic segmentation that combines both RGB and Thermal imagery in a way that leverages RGB imagery independently. We compare our method against the state-of-the-art and show that our method outperforms them in our dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge