Zhichao Zhang

A Unified Fractional Spectral Framework for Spatiotemporal Graph Signals: Bi-Fractional Transform and Geodesic Coupling

Mar 02, 2026

Abstract:Graph signal processing extends spectral analysis to data supported on irregular domains. Existing fractional transforms for two-dimensional graph signals, including the two-dimensional graph fractional Fourier transform (GFRFT), typically impose a shared fractional order across dimensions, which limits adaptivity to heterogeneous spatiotemporal spectra. To address this limitation, we propose the two-dimensional graph bi-fractional Fourier transform, which assigns independent fractional orders to the factor graphs of a Cartesian product, enabling decoupled spectral control while preserving separability, unitarity, and invertibility. To further resolve the basis ambiguity in temporal fractional analysis, we develop a geodesic-coupled GFRFT by constructing a coupling path along the principal geodesic on the unitary manifold, thereby unifying graph-induced and discrete temporal bases with guaranteed unitarity and a closed-form inverse. Building on these transforms, we derive a differentiable Wiener-type filtering framework with a hybrid optimization strategy: the fractional orders are learned end-to-end from data, while the coupling parameter is fixed as a structural regularizer. Experiments on real-world time-varying graph datasets and dynamic image restoration tasks demonstrate consistent gains over state-of-the-art fractional transforms and competitive learning-based baselines.
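The idea of assigning independent fractional orders to the two factor graphs can be illustrated with a minimal NumPy sketch. It is not the paper's implementation: it assumes each GFT matrix is the conjugate transpose of the eigenvector matrix of a symmetric weight matrix, takes fractional powers through an eigendecomposition with the principal branch of the phase, and uses hypothetical helper names (`gft_matrix`, `fractional_power`, `bi_fractional_transform`). The geodesic coupling step is not covered.

```python
import numpy as np

def gft_matrix(weights):
    """Unitary GFT matrix F = V^H built from a symmetric weight matrix (assumption)."""
    _, V = np.linalg.eigh(weights)
    return V.conj().T

def fractional_power(F, order):
    """F**order via eigendecomposition, using the principal branch of each phase."""
    lam, P = np.linalg.eig(F)
    phases = np.exp(1j * order * np.angle(lam))
    return P @ np.diag(phases) @ np.linalg.inv(P)

def bi_fractional_transform(X, F1, F2, a, b):
    """Order a acts along axis 0 (first graph), order b along axis 1 (second graph)."""
    return fractional_power(F1, a) @ X @ fractional_power(F2, b).T

def random_symmetric(n, rng):
    """Random symmetric weight matrix with zero diagonal (toy graph)."""
    W = rng.random((n, n))
    W = (W + W.T) / 2
    np.fill_diagonal(W, 0.0)
    return W

rng = np.random.default_rng(0)
F1 = gft_matrix(random_symmetric(5, rng))
F2 = gft_matrix(random_symmetric(4, rng))
X = rng.standard_normal((5, 4))  # signal on the 5 x 4 product graph

Y = bi_fractional_transform(X, F1, F2, a=0.3, b=0.8)
# Negating both orders inverts the transform.
X_rec = bi_fractional_transform(Y, F1, F2, a=-0.3, b=-0.8)
```

Because the two orders act on different axes, the same map can be written as a Kronecker product of the two fractional matrices applied to the column-stacked signal, which is where separability comes from in this sketch.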

DWAFM: Dynamic Weighted Graph Structure Embedding Integrated with Attention and Frequency-Domain MLPs for Traffic Forecasting

Mar 01, 2026

Abstract:Accurate traffic prediction is a key task for intelligent transportation systems. The core difficulty lies in accurately modeling the complex spatial-temporal dependencies in traffic data. In recent years, improvements in network architecture have failed to bring significant performance enhancements, while embedding technology has shown great potential. However, existing embedding methods often ignore graph structure information or rely solely on static graph structures, making it difficult to effectively capture the dynamic associations between nodes that evolve over time. To address this issue, this letter proposes a novel dynamic weighted graph structure (DWGS) embedding method, which relies on a graph structure that reflects how the strength of the associations between nodes changes over time. By first combining the DWGS embedding with the spatial-temporal adaptive embedding, as well as the temporal embedding and feature embedding, and then integrating attention and frequency-domain multi-layer perceptrons (MLPs), we design a novel traffic prediction model, termed the DWGS embedding integrated with attention and frequency-domain MLPs (DWAFM). Experiments on five real-world traffic datasets show that DWAFM achieves better prediction performance than several state-of-the-art methods.

FGFRFT: Fast Graph Fractional Fourier Transform via Fourier Series Approximation

Feb 24, 2026

Abstract:The graph fractional Fourier transform (GFRFT) generalizes the graph Fourier transform (GFT) but suffers from a significant computational bottleneck: determining the optimal transform order requires expensive eigendecomposition and matrix multiplication, leading to $O(N^3)$ complexity. To address this issue, we propose a fast GFRFT (FGFRFT) algorithm for unitary GFT matrices based on Fourier series approximation and an efficient caching strategy. FGFRFT reduces the complexity of generating transform matrices to $O(2LN^2)$ while preserving differentiability, thereby enabling adaptive order learning. We validate the algorithm through theoretical analysis, approximation accuracy tests, and order learning experiments. Furthermore, we demonstrate its practical efficacy for image and point cloud denoising and present the fractional specformer, which integrates the FGFRFT into the specformer architecture. This integration enables the model to overcome the limitations of a fixed GFT basis and learn optimal fractional orders for complex data. Experimental results confirm that the proposed algorithm significantly accelerates computation and achieves superior performance compared with the GFRFT.
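One plausible reading of "Fourier series approximation plus caching" can be sketched as follows; the paper's actual construction may differ. For a unitary $F$ with eigenvalues $e^{i\theta}$, the function $e^{ia\theta}$ on $(-\pi, \pi)$ has Fourier coefficients $\mathrm{sinc}(a-k)$, so $F^a \approx \sum_{k=-L}^{L} \mathrm{sinc}(a-k)\,F^k$. The integer powers are computed once and cached, and each new order only costs a weighted sum of $2L+1$ matrices. The function names and the truncation length `L` are assumptions for illustration.

```python
import numpy as np

def cache_integer_powers(F, L):
    """Precompute F**k for k = -L..L once, using F**-1 = F^H for unitary F."""
    n = F.shape[0]
    Fh = F.conj().T
    powers = {0: np.eye(n, dtype=complex)}
    for k in range(1, L + 1):
        powers[k] = powers[k - 1] @ F
        powers[-k] = powers[-(k - 1)] @ Fh
    return powers

def approx_fractional_power(powers, order):
    """Truncated Fourier series: sum_k sinc(order - k) * F**k over cached powers."""
    ks = sorted(powers)
    coeffs = np.sinc(order - np.array(ks))  # np.sinc is sin(pi x) / (pi x)
    return sum(c * powers[k] for c, k in zip(coeffs, ks))

# Toy unitary GFT matrix from a random symmetric weight matrix.
rng = np.random.default_rng(1)
W = rng.random((6, 6))
W = (W + W.T) / 2
_, V = np.linalg.eigh(W)
F = V.conj().T

powers = cache_integer_powers(F, L=8)          # one-off cost
F_half = approx_fractional_power(powers, 0.5)  # cheap for every new order
```

The coefficients are smooth functions of the order, so the approximation stays differentiable with respect to it. The series converges slowly when eigenvalues lie close to $-1$, so accuracy in this sketch depends strongly on `L` and on the spectrum of $F$.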

ELIQ: A Label-Free Framework for Quality Assessment of Evolving AI-Generated Images

Feb 03, 2026

Abstract:Generative text-to-image models are advancing at an unprecedented pace, continuously shifting the perceptual quality ceiling and rendering previously collected labels unreliable for newer generations. To address this, we present ELIQ, a Label-free Framework for Quality Assessment of Evolving AI-generated Images. Specifically, ELIQ focuses on visual quality and prompt-image alignment and automatically constructs positive and aspect-specific negative pairs that cover both conventional distortions and AIGC-specific distortion modes, enabling transferable supervision without human annotations. Building on these pairs, ELIQ adapts a pre-trained multimodal model into a quality-aware critic via instruction tuning and predicts two-dimensional quality using lightweight gated fusion and a Quality Query Transformer. Experiments across multiple benchmarks demonstrate that ELIQ consistently outperforms existing label-free methods, generalizes from AI-generated content (AIGC) to user-generated content (UGC) scenarios without modification, and paves the way for scalable and label-free quality assessment under continuously evolving generative models. The code will be released upon publication.

Decoupling Perception and Calibration: Label-Efficient Image Quality Assessment Framework

Jan 28, 2026

Abstract:Recent multimodal large language models (MLLMs) have demonstrated strong capabilities in image quality assessment (IQA) tasks. However, adapting such large-scale models is computationally expensive and still relies on substantial Mean Opinion Score (MOS) annotations. We argue that for MLLM-based IQA, the core bottleneck lies not in the quality perception capacity of MLLMs, but in MOS scale calibration. Therefore, we propose LEAF, a Label-Efficient Image Quality Assessment Framework that distills perceptual quality priors from an MLLM teacher into a lightweight student regressor, enabling MOS calibration with minimal human supervision. Specifically, the teacher conducts dense supervision through point-wise judgments and pair-wise preferences, with an estimate of decision reliability. Guided by these signals, the student learns the teacher's quality perception patterns through joint distillation and is calibrated on a small MOS subset to align with human annotations. Experiments on both user-generated and AI-generated IQA benchmarks demonstrate that our method significantly reduces the need for human annotations while maintaining strong MOS-aligned correlations, making lightweight IQA practical under limited annotation budgets.

JFRFFNet: A Data-Model Co-Driven Graph Signal Denoising Model with Partial Prior Information

Sep 11, 2025

Abstract:Wiener filtering in the joint time-vertex fractional Fourier transform (JFRFT) domain has shown high effectiveness in denoising time-varying graph signals. Traditional filtering models use grid search to determine the transform-order pair and compute filter coefficients, while learnable ones employ gradient-descent strategies to optimize them; both require complete prior information of graph signals. To overcome this shortcoming, this letter proposes a data-model co-driven denoising approach, termed neural-network-aided joint time-vertex fractional Fourier filtering (JFRFFNet), which embeds the JFRFT-domain Wiener filter model into a neural network and updates the transform-order pair and filter coefficients through a data-driven approach. This design enables effective denoising using only partial prior information. Experiments demonstrate that JFRFFNet achieves significant improvements in output signal-to-noise ratio compared with some state-of-the-art methods.

VQualA 2025 Challenge on Visual Quality Comparison for Large Multimodal Models: Methods and Results

Sep 11, 2025

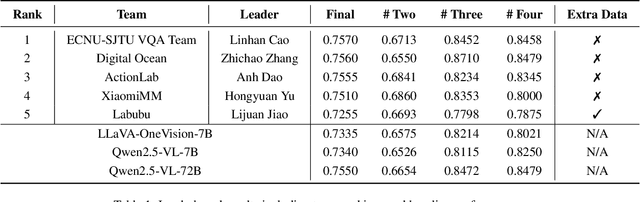

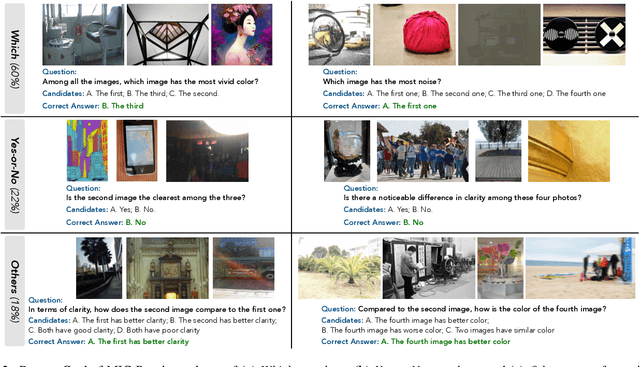

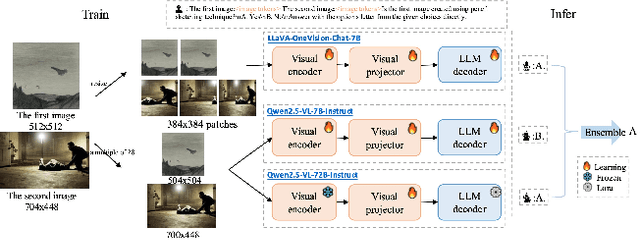

Abstract:This paper presents a summary of the VQualA 2025 Challenge on Visual Quality Comparison for Large Multimodal Models (LMMs), hosted as part of the ICCV 2025 Workshop on Visual Quality Assessment. The challenge aims to evaluate and enhance the ability of state-of-the-art LMMs to perform open-ended and detailed reasoning about visual quality differences across multiple images. To this end, the competition introduces a novel benchmark comprising thousands of coarse-to-fine grained visual quality comparison tasks, spanning single images, pairs, and multi-image groups. Each task requires models to provide accurate quality judgments. The competition emphasizes holistic evaluation protocols, including 2AFC-based binary preference and multi-choice questions (MCQs). Around 100 participants submitted entries, with five models demonstrating the emerging capabilities of instruction-tuned LMMs on quality assessment. This challenge marks a significant step toward open-domain visual quality reasoning and comparison and serves as a catalyst for future research on interpretable and human-aligned quality evaluation systems.

Multiple-Parameter Graph Fractional Fourier Transform: Theory and Applications

Jul 31, 2025

Abstract:The graph fractional Fourier transform (GFRFT) applies a single global fractional order to all graph frequencies, which restricts its adaptability to diverse signal characteristics across the spectral domain. To address this limitation, in this paper, we propose two types of multiple-parameter GFRFTs (MPGFRFTs) and establish their corresponding theoretical frameworks. We design a spectral compression strategy tailored for ultra-low compression ratios, effectively preserving essential information even under extreme dimensionality reduction. To enhance flexibility, we introduce a learnable order vector scheme that enables adaptive compression and denoising, demonstrating strong performance on both graph signals and images. We explore the application of MPGFRFTs to image encryption and decryption. Experimental results validate the versatility and superior performance of the proposed MPGFRFT framework across various graph signal processing tasks.
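The contrast between a single global order and an order vector can be made concrete with a small NumPy sketch. It shows only one natural way to attach a separate order to each eigenvalue of a unitary GFT matrix; whether this coincides with either of the paper's two MPGFRFT types is an assumption, and `multi_order_power` is a hypothetical name. The pairing of orders with eigenvalues follows whatever ordering `np.linalg.eig` returns.

```python
import numpy as np

def multi_order_power(F, orders):
    """Raise the k-th eigenvalue of F to its own order orders[k] (principal branch)."""
    lam, P = np.linalg.eig(F)
    orders = np.broadcast_to(np.asarray(orders, dtype=float), lam.shape)
    phases = np.exp(1j * orders * np.angle(lam))
    return P @ np.diag(phases) @ np.linalg.inv(P)

# Toy unitary GFT matrix from a random symmetric weight matrix.
rng = np.random.default_rng(2)
W = rng.random((6, 6))
W = (W + W.T) / 2
_, V = np.linalg.eigh(W)
F = V.conj().T

orders = np.linspace(0.2, 0.9, 6)           # one order per graph frequency
F_multi = multi_order_power(F, orders)
F_single = multi_order_power(F, 0.5)        # scalar order: the ordinary GFRFT case
F_inverse = multi_order_power(F, -orders)   # negated order vector undoes the transform
```

In a learnable scheme the entries of `orders` would be trainable parameters; here they are fixed values chosen only for illustration.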

NTIRE 2025 challenge on Text to Image Generation Model Quality Assessment

May 22, 2025

Abstract:This paper reports on the NTIRE 2025 challenge on Text to Image (T2I) generation model quality assessment, which will be held in conjunction with the New Trends in Image Restoration and Enhancement Workshop (NTIRE) at CVPR 2025. The aim of this challenge is to address the fine-grained quality assessment of text-to-image generation models. This challenge evaluates text-to-image models from two aspects: image-text alignment and image structural distortion detection, and is divided into the alignment track and the structure track. The alignment track uses the EvalMuse-40K, which contains around 40K AI-Generated Images (AIGIs) generated by 20 popular generative models. The alignment track has a total of 371 registered participants. A total of 1,883 submissions were received in the development phase, and 507 submissions were received in the test phase. Finally, 12 participating teams submitted their models and fact sheets. The structure track uses the EvalMuse-Structure, which contains 10,000 AI-Generated Images (AIGIs) with corresponding structural distortion masks. A total of 211 participants have registered in the structure track. A total of 1,155 submissions were received in the development phase, and 487 submissions were received in the test phase. Finally, 8 participating teams submitted their models and fact sheets. Almost all methods have achieved better results than baseline methods, and the winning methods in both tracks have demonstrated superior prediction performance on T2I model quality assessment.

AGHI-QA: A Subjective-Aligned Dataset and Metric for AI-Generated Human Images

Apr 30, 2025

Abstract:The rapid development of text-to-image (T2I) generation approaches has attracted extensive interest in evaluating the quality of generated images, leading to the development of various quality assessment methods for general-purpose T2I outputs. However, existing image quality assessment (IQA) methods are limited to providing global quality scores, failing to deliver fine-grained perceptual evaluations for structurally complex subjects like humans, which is a critical challenge considering the frequent anatomical and textural distortions in AI-generated human images (AGHIs). To address this gap, we introduce AGHI-QA, the first large-scale benchmark specifically designed for quality assessment of AGHIs. The dataset comprises 4,000 images generated from 400 carefully crafted text prompts using 10 state-of-the-art T2I models. We conduct a systematic subjective study to collect multidimensional annotations, including perceptual quality scores, text-image correspondence scores, and visible and distorted body part labels. Based on AGHI-QA, we evaluate the strengths and weaknesses of current T2I methods in generating human images from multiple dimensions. Furthermore, we propose AGHI-Assessor, a novel quality metric that integrates the large multimodal model (LMM) with domain-specific human features for precise quality prediction and identification of visible and distorted body parts in AGHIs. Extensive experimental results demonstrate that AGHI-Assessor showcases state-of-the-art performance, significantly outperforming existing IQA methods in multidimensional quality assessment and surpassing leading LMMs in detecting structural distortions in AGHIs.
