Xudong Li

PLANING: A Loosely Coupled Triangle-Gaussian Framework for Streaming 3D Reconstruction

Jan 29, 2026Abstract:Streaming reconstruction from monocular image sequences remains challenging, as existing methods typically favor either high-quality rendering or accurate geometry, but rarely both. We present PLANING, an efficient on-the-fly reconstruction framework built on a hybrid representation that loosely couples explicit geometric primitives with neural Gaussians, enabling geometry and appearance to be modeled in a decoupled manner. This decoupling supports an online initialization and optimization strategy that separates geometry and appearance updates, yielding stable streaming reconstruction with substantially reduced structural redundancy. PLANING improves dense mesh Chamfer-L2 by 18.52% over PGSR, surpasses ARTDECO by 1.31 dB PSNR, and reconstructs ScanNetV2 scenes in under 100 seconds, over 5x faster than 2D Gaussian Splatting, while matching the quality of offline per-scene optimization. Beyond reconstruction quality, the structural clarity and computational efficiency of \modelname~make it well suited for a broad range of downstream applications, such as enabling large-scale scene modeling and simulation-ready environments for embodied AI. Project page: https://city-super.github.io/PLANING/ .

ActiShade: Activating Overshadowed Knowledge to Guide Multi-Hop Reasoning in Large Language Models

Jan 12, 2026Abstract:In multi-hop reasoning, multi-round retrieval-augmented generation (RAG) methods typically rely on LLM-generated content as the retrieval query. However, these approaches are inherently vulnerable to knowledge overshadowing - a phenomenon where critical information is overshadowed during generation. As a result, the LLM-generated content may be incomplete or inaccurate, leading to irrelevant retrieval and causing error accumulation during the iteration process. To address this challenge, we propose ActiShade, which detects and activates overshadowed knowledge to guide large language models (LLMs) in multi-hop reasoning. Specifically, ActiShade iteratively detects the overshadowed keyphrase in the given query, retrieves documents relevant to both the query and the overshadowed keyphrase, and generates a new query based on the retrieved documents to guide the next-round iteration. By supplementing the overshadowed knowledge during the formulation of next-round queries while minimizing the introduction of irrelevant noise, ActiShade reduces the error accumulation caused by knowledge overshadowing. Extensive experiments show that ActiShade outperforms existing methods across multiple datasets and LLMs.

Zooming from Context to Cue: Hierarchical Preference Optimization for Multi-Image MLLMs

May 28, 2025Abstract:Multi-modal Large Language Models (MLLMs) excel at single-image tasks but struggle with multi-image understanding due to cross-modal misalignment, leading to hallucinations (context omission, conflation, and misinterpretation). Existing methods using Direct Preference Optimization (DPO) constrain optimization to a solitary image reference within the input sequence, neglecting holistic context modeling. We propose Context-to-Cue Direct Preference Optimization (CcDPO), a multi-level preference optimization framework that enhances per-image perception in multi-image settings by zooming into visual clues -- from sequential context to local details. It features: (i) Context-Level Optimization : Re-evaluates cognitive biases underlying MLLMs' multi-image context comprehension and integrates a spectrum of low-cost global sequence preferences for bias mitigation. (ii) Needle-Level Optimization : Directs attention to fine-grained visual details through region-targeted visual prompts and multimodal preference supervision. To support scalable optimization, we also construct MultiScope-42k, an automatically generated dataset with high-quality multi-level preference pairs. Experiments show that CcDPO significantly reduces hallucinations and yields consistent performance gains across general single- and multi-image tasks.

Channel Fingerprint Construction for Massive MIMO: A Deep Conditional Generative Approach

May 12, 2025Abstract:Accurate channel state information (CSI) acquisition for massive multiple-input multiple-output (MIMO) systems is essential for future mobile communication networks. Channel fingerprint (CF), also referred to as channel knowledge map, is a key enabler for intelligent environment-aware communication and can facilitate CSI acquisition. However, due to the cost limitations of practical sensing nodes and test vehicles, the resulting CF is typically coarse-grained, making it insufficient for wireless transceiver design. In this work, we introduce the concept of CF twins and design a conditional generative diffusion model (CGDM) with strong implicit prior learning capabilities as the computational core of the CF twin to establish the connection between coarse- and fine-grained CFs. Specifically, we employ a variational inference technique to derive the evidence lower bound (ELBO) for the log-marginal distribution of the observed fine-grained CF conditioned on the coarse-grained CF, enabling the CGDM to learn the complicated distribution of the target data. During the denoising neural network optimization, the coarse-grained CF is introduced as side information to accurately guide the conditioned generation of the CGDM. To make the proposed CGDM lightweight, we further leverage the additivity of network layers and introduce a one-shot pruning approach along with a multi-objective knowledge distillation technique. Experimental results show that the proposed approach exhibits significant improvement in reconstruction performance compared to the baselines. Additionally, zero-shot testing on reconstruction tasks with different magnification factors further demonstrates the scalability and generalization ability of the proposed approach.

RIS-assisted Physical Layer Security

Jan 30, 2025

Abstract:We propose a reconfigurable intelligent surface (RIS)-assisted wiretap channel, where the RIS is strategically deployed to provide a spatial separation to the transmitter, and orthogonal combiners are employed at the legitimate receiver to extract the data streams from the direct and RIS-assisted links. Then we derive the achievable secrecy rate under semantic security for the RIS-assisted channel and design an algorithm for the secrecy rate optimization problem. The simulation results show the effects of total transmit power, the location and number of eavesdroppers on the security performance.

Alternating minimization for square root principal component pursuit

Dec 31, 2024Abstract:Recently, the square root principal component pursuit (SRPCP) model has garnered significant research interest. It is shown in the literature that the SRPCP model guarantees robust matrix recovery with a universal, constant penalty parameter. While its statistical advantages are well-documented, the computational aspects from an optimization perspective remain largely unexplored. In this paper, we focus on developing efficient optimization algorithms for solving the SRPCP problem. Specifically, we propose a tuning-free alternating minimization (AltMin) algorithm, where each iteration involves subproblems enjoying closed-form optimal solutions. Additionally, we introduce techniques based on the variational formulation of the nuclear norm and Burer-Monteiro decomposition to further accelerate the AltMin method. Extensive numerical experiments confirm the efficiency and robustness of our algorithms.

Continuous Gaussian Process Pre-Optimization for Asynchronous Event-Inertial Odometry

Dec 12, 2024Abstract:Event cameras, as bio-inspired sensors, are asynchronously triggered with high-temporal resolution compared to intensity cameras. Recent work has focused on fusing the event measurements with inertial measurements to enable ego-motion estimation in high-speed and HDR environments. However, existing methods predominantly rely on IMU preintegration designed mainly for synchronous sensors and discrete-time frameworks. In this paper, we propose a continuous-time preintegration method based on the Temporal Gaussian Process (TGP) called GPO. Concretely, we model the preintegration as a time-indexed motion trajectory and leverage an efficient two-step optimization to initialize the precision preintegration pseudo-measurements. Our method realizes a linear and constant time cost for initialization and query, respectively. To further validate the proposal, we leverage the GPO to design an asynchronous event-inertial odometry and compare with other asynchronous fusion schemes within the same odometry system. Experiments conducted on both public and own-collected datasets demonstrate that the proposed GPO offers significant advantages in terms of precision and efficiency, outperforming existing approaches in handling asynchronous sensor fusion.

Asynchronous Event-Inertial Odometry using a Unified Gaussian Process Regression Framework

Dec 04, 2024

Abstract:Recent works have combined monocular event camera and inertial measurement unit to estimate the $SE(3)$ trajectory. However, the asynchronicity of event cameras brings a great challenge to conventional fusion algorithms. In this paper, we present an asynchronous event-inertial odometry under a unified Gaussian Process (GP) regression framework to naturally fuse asynchronous data associations and inertial measurements. A GP latent variable model is leveraged to build data-driven motion prior and acquire the analytical integration capacity. Then, asynchronous event-based feature associations and integral pseudo measurements are tightly coupled using the same GP framework. Subsequently, this fusion estimation problem is solved by underlying factor graph in a sliding-window manner. With consideration of sparsity, those historical states are marginalized orderly. A twin system is also designed for comparison, where the traditional inertial preintegration scheme is embedded in the GP-based framework to replace the GP latent variable model. Evaluations on public event-inertial datasets demonstrate the validity of both systems. Comparison experiments show competitive precision compared to the state-of-the-art synchronous scheme.

AsynEIO: Asynchronous Monocular Event-Inertial Odometry Using Gaussian Process Regression

Nov 19, 2024

Abstract:Event cameras, when combined with inertial sensors, show significant potential for motion estimation in challenging scenarios, such as high-speed maneuvers and low-light environments. There are many methods for producing such estimations, but most boil down to a synchronous discrete-time fusion problem. However, the asynchronous nature of event cameras and their unique fusion mechanism with inertial sensors remain underexplored. In this paper, we introduce a monocular event-inertial odometry method called AsynEIO, designed to fuse asynchronous event and inertial data within a unified Gaussian Process (GP) regression framework. Our approach incorporates an event-driven frontend that tracks feature trajectories directly from raw event streams at a high temporal resolution. These tracked feature trajectories, along with various inertial factors, are integrated into the same GP regression framework to enable asynchronous fusion. With deriving analytical residual Jacobians and noise models, our method constructs a factor graph that is iteratively optimized and pruned using a sliding-window optimizer. Comparative assessments highlight the performance of different inertial fusion strategies, suggesting optimal choices for varying conditions. Experimental results on both public datasets and our own event-inertial sequences indicate that AsynEIO outperforms existing methods, especially in high-speed and low-illumination scenarios.

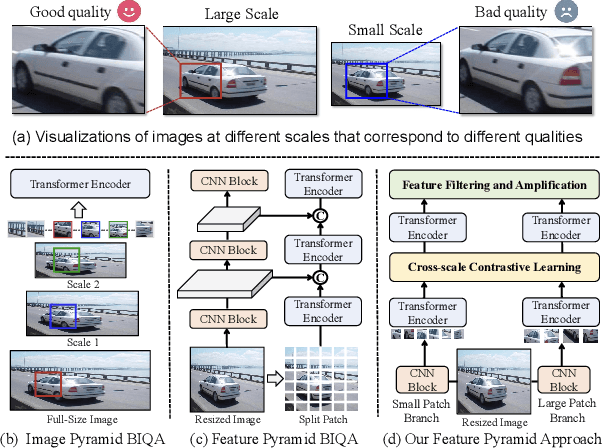

Scale Contrastive Learning with Selective Attentions for Blind Image Quality Assessment

Nov 13, 2024

Abstract:Blind image quality assessment (BIQA) serves as a fundamental task in computer vision, yet it often fails to consistently align with human subjective perception. Recent advances show that multi-scale evaluation strategies are promising due to their ability to replicate the hierarchical structure of human vision. However, the effectiveness of these strategies is limited by a lack of understanding of how different image scales influence perceived quality. This paper addresses two primary challenges: the significant redundancy of information across different scales, and the confusion caused by combining features from these scales, which may vary widely in quality. To this end, a new multi-scale BIQA framework is proposed, namely Contrast-Constrained Scale-Focused IQA Framework (CSFIQA). CSFIQA features a selective focus attention mechanism to minimize information redundancy and highlight critical quality-related information. Additionally, CSFIQA includes a scale-level contrastive learning module equipped with a noise sample matching mechanism to identify quality discrepancies across the same image content at different scales. By exploring the intrinsic relationship between image scales and the perceived quality, the proposed CSFIQA achieves leading performance on eight benchmark datasets, e.g., achieving SRCC values of 0.967 (versus 0.947 in CSIQ) and 0.905 (versus 0.876 in LIVEC).

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge