Xiaokui Xiao

Accurate Table Question Answering with Accessible LLMs

Jan 06, 2026Abstract:Given a table T in a database and a question Q in natural language, the table question answering (TQA) task aims to return an accurate answer to Q based on the content of T. Recent state-of-the-art solutions leverage large language models (LLMs) to obtain high-quality answers. However, most rely on proprietary, large-scale LLMs with costly API access, posing a significant financial barrier. This paper instead focuses on TQA with smaller, open-weight LLMs that can run on a desktop or laptop. This setting is challenging, as such LLMs typically have weaker capabilities than large proprietary models, leading to substantial performance degradation with existing methods. We observe that a key reason for this degradation is that prior approaches often require the LLM to solve a highly sophisticated task using long, complex prompts, which exceed the capabilities of small open-weight LLMs. Motivated by this observation, we present Orchestra, a multi-agent approach that unlocks the potential of accessible LLMs for high-quality, cost-effective TQA. Orchestra coordinates a group of LLM agents, each responsible for a relatively simple task, through a structured, layered workflow to solve complex TQA problems -- akin to an orchestra. By reducing the prompt complexity faced by each agent, Orchestra significantly improves output reliability. We implement Orchestra on top of AgentScope, an open-source multi-agent framework, and evaluate it on multiple TQA benchmarks using a wide range of open-weight LLMs. Experimental results show that Orchestra achieves strong performance even with small- to medium-sized models. For example, with Qwen2.5-14B, Orchestra reaches 72.1% accuracy on WikiTQ, approaching the best prior result of 75.3% achieved with GPT-4; with larger Qwen, Llama, or DeepSeek models, Orchestra outperforms all prior methods and establishes new state-of-the-art results across all benchmarks.

GEM-Bench: A Benchmark for Ad-Injected Response Generation within Generative Engine Marketing

Sep 17, 2025

Abstract:Generative Engine Marketing (GEM) is an emerging ecosystem for monetizing generative engines, such as LLM-based chatbots, by seamlessly integrating relevant advertisements into their responses. At the core of GEM lies the generation and evaluation of ad-injected responses. However, existing benchmarks are not specifically designed for this purpose, which limits future research. To address this gap, we propose GEM-Bench, the first comprehensive benchmark for ad-injected response generation in GEM. GEM-Bench includes three curated datasets covering both chatbot and search scenarios, a metric ontology that captures multiple dimensions of user satisfaction and engagement, and several baseline solutions implemented within an extensible multi-agent framework. Our preliminary results indicate that, while simple prompt-based methods achieve reasonable engagement such as click-through rate, they often reduce user satisfaction. In contrast, approaches that insert ads based on pre-generated ad-free responses help mitigate this issue but introduce additional overhead. These findings highlight the need for future research on designing more effective and efficient solutions for generating ad-injected responses in GEM.

GegenNet: Spectral Convolutional Neural Networks for Link Sign Prediction in Signed Bipartite Graphs

Aug 27, 2025Abstract:Given a signed bipartite graph (SBG) G with two disjoint node sets U and V, the goal of link sign prediction is to predict the signs of potential links connecting U and V based on known positive and negative edges in G. The majority of existing solutions towards link sign prediction mainly focus on unipartite signed graphs, which are sub-optimal due to the neglect of node heterogeneity and unique bipartite characteristics of SBGs. To this end, recent studies adapt graph neural networks to SBGs by introducing message-passing schemes for both inter-partition (UxV) and intra-partition (UxU or VxV) node pairs. However, the fundamental spectral convolutional operators were originally designed for positive links in unsigned graphs, and thus, are not optimal for inferring missing positive or negative links from known ones in SBGs. Motivated by this, this paper proposes GegenNet, a novel and effective spectral convolutional neural network model for link sign prediction in SBGs. In particular, GegenNet achieves enhanced model capacity and high predictive accuracy through three main technical contributions: (i) fast and theoretically grounded spectral decomposition techniques for node feature initialization; (ii) a new spectral graph filter based on the Gegenbauer polynomial basis; and (iii) multi-layer sign-aware spectral convolutional networks alternating Gegenbauer polynomial filters with positive and negative edges. Our extensive empirical studies reveal that GegenNet can achieve significantly superior performance (up to a gain of 4.28% in AUC and 11.69% in F1) in link sign prediction compared to 11 strong competitors over 6 benchmark SBG datasets.

You Are What You Bought: Generating Customer Personas for E-commerce Applications

Apr 24, 2025Abstract:In e-commerce, user representations are essential for various applications. Existing methods often use deep learning techniques to convert customer behaviors into implicit embeddings. However, these embeddings are difficult to understand and integrate with external knowledge, limiting the effectiveness of applications such as customer segmentation, search navigation, and product recommendations. To address this, our paper introduces the concept of the customer persona. Condensed from a customer's numerous purchasing histories, a customer persona provides a multi-faceted and human-readable characterization of specific purchase behaviors and preferences, such as Busy Parents or Bargain Hunters. This work then focuses on representing each customer by multiple personas from a predefined set, achieving readable and informative explicit user representations. To this end, we propose an effective and efficient solution GPLR. To ensure effectiveness, GPLR leverages pre-trained LLMs to infer personas for customers. To reduce overhead, GPLR applies LLM-based labeling to only a fraction of users and utilizes a random walk technique to predict personas for the remaining customers. We further propose RevAff, which provides an absolute error $\epsilon$ guarantee while improving the time complexity of the exact solution by a factor of at least $O(\frac{\epsilon\cdot|E|N}{|E|+N\log N})$, where $N$ represents the number of customers and products, and $E$ represents the interactions between them. We evaluate the performance of our persona-based representation in terms of accuracy and robustness for recommendation and customer segmentation tasks using three real-world e-commerce datasets. Most notably, we find that integrating customer persona representations improves the state-of-the-art graph convolution-based recommendation model by up to 12% in terms of NDCG@K and F1-Score@K.

KET-RAG: A Cost-Efficient Multi-Granular Indexing Framework for Graph-RAG

Feb 13, 2025

Abstract:Graph-RAG constructs a knowledge graph from text chunks to improve retrieval in Large Language Model (LLM)-based question answering. It is particularly useful in domains such as biomedicine, law, and political science, where retrieval often requires multi-hop reasoning over proprietary documents. Some existing Graph-RAG systems construct KNN graphs based on text chunk relevance, but this coarse-grained approach fails to capture entity relationships within texts, leading to sub-par retrieval and generation quality. To address this, recent solutions leverage LLMs to extract entities and relationships from text chunks, constructing triplet-based knowledge graphs. However, this approach incurs significant indexing costs, especially for large document collections. To ensure a good result accuracy while reducing the indexing cost, we propose KET-RAG, a multi-granular indexing framework. KET-RAG first identifies a small set of key text chunks and leverages an LLM to construct a knowledge graph skeleton. It then builds a text-keyword bipartite graph from all text chunks, serving as a lightweight alternative to a full knowledge graph. During retrieval, KET-RAG searches both structures: it follows the local search strategy of existing Graph-RAG systems on the skeleton while mimicking this search on the bipartite graph to improve retrieval quality. We evaluate eight solutions on two real-world datasets, demonstrating that KET-RAG outperforms all competitors in indexing cost, retrieval effectiveness, and generation quality. Notably, it achieves comparable or superior retrieval quality to Microsoft's Graph-RAG while reducing indexing costs by over an order of magnitude. Additionally, it improves the generation quality by up to 32.4% while lowering indexing costs by around 20%.

Rethinking Link Prediction for Directed Graphs

Feb 08, 2025

Abstract:Link prediction for directed graphs is a crucial task with diverse real-world applications. Recent advances in embedding methods and Graph Neural Networks (GNNs) have shown promising improvements. However, these methods often lack a thorough analysis of embedding expressiveness and suffer from ineffective benchmarks for a fair evaluation. In this paper, we propose a unified framework to assess the expressiveness of existing methods, highlighting the impact of dual embeddings and decoder design on performance. To address limitations in current experimental setups, we introduce DirLinkBench, a robust new benchmark with comprehensive coverage and standardized evaluation. The results show that current methods struggle to achieve strong performance on the new benchmark, while DiGAE outperforms others overall. We further revisit DiGAE theoretically, showing its graph convolution aligns with GCN on an undirected bipartite graph. Inspired by these insights, we propose a novel spectral directed graph auto-encoder SDGAE that achieves SOTA results on DirLinkBench. Finally, we analyze key factors influencing directed link prediction and highlight open challenges.

Optimal Streaming Algorithms for Multi-Armed Bandits

Oct 23, 2024

Abstract:This paper studies two variants of the best arm identification (BAI) problem under the streaming model, where we have a stream of $n$ arms with reward distributions supported on $[0,1]$ with unknown means. The arms in the stream are arriving one by one, and the algorithm cannot access an arm unless it is stored in a limited size memory. We first study the streaming \eps-$top$-$k$ arms identification problem, which asks for $k$ arms whose reward means are lower than that of the $k$-th best arm by at most $\eps$ with probability at least $1-\delta$. For general $\eps \in (0,1)$, the existing solution for this problem assumes $k = 1$ and achieves the optimal sample complexity $O(\frac{n}{\eps^2} \log \frac{1}{\delta})$ using $O(\log^*(n))$ ($\log^*(n)$ equals the number of times that we need to apply the logarithm function on $n$ before the results is no more than 1.) memory and a single pass of the stream. We propose an algorithm that works for any $k$ and achieves the optimal sample complexity $O(\frac{n}{\eps^2} \log\frac{k}{\delta})$ using a single-arm memory and a single pass of the stream. Second, we study the streaming BAI problem, where the objective is to identify the arm with the maximum reward mean with at least $1-\delta$ probability, using a single-arm memory and as few passes of the input stream as possible. We present a single-arm-memory algorithm that achieves a near instance-dependent optimal sample complexity within $O(\log \Delta_2^{-1})$ passes, where $\Delta_2$ is the gap between the mean of the best arm and that of the second best arm.

* 24pages

Feature Inference Attack on Shapley Values

Jul 16, 2024

Abstract:As a solution concept in cooperative game theory, Shapley value is highly recognized in model interpretability studies and widely adopted by the leading Machine Learning as a Service (MLaaS) providers, such as Google, Microsoft, and IBM. However, as the Shapley value-based model interpretability methods have been thoroughly studied, few researchers consider the privacy risks incurred by Shapley values, despite that interpretability and privacy are two foundations of machine learning (ML) models. In this paper, we investigate the privacy risks of Shapley value-based model interpretability methods using feature inference attacks: reconstructing the private model inputs based on their Shapley value explanations. Specifically, we present two adversaries. The first adversary can reconstruct the private inputs by training an attack model based on an auxiliary dataset and black-box access to the model interpretability services. The second adversary, even without any background knowledge, can successfully reconstruct most of the private features by exploiting the local linear correlations between the model inputs and outputs. We perform the proposed attacks on the leading MLaaS platforms, i.e., Google Cloud, Microsoft Azure, and IBM aix360. The experimental results demonstrate the vulnerability of the state-of-the-art Shapley value-based model interpretability methods used in the leading MLaaS platforms and highlight the significance and necessity of designing privacy-preserving model interpretability methods in future studies. To our best knowledge, this is also the first work that investigates the privacy risks of Shapley values.

A Benchmark Study of Deep-RL Methods for Maximum Coverage Problems over Graphs

Jun 20, 2024

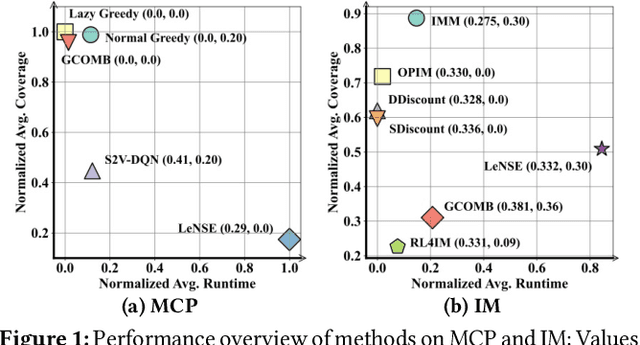

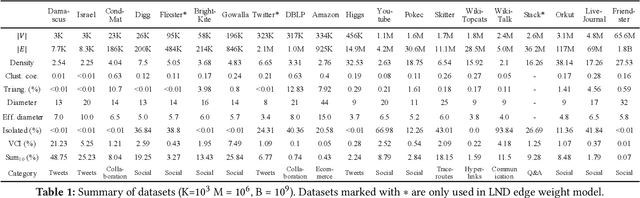

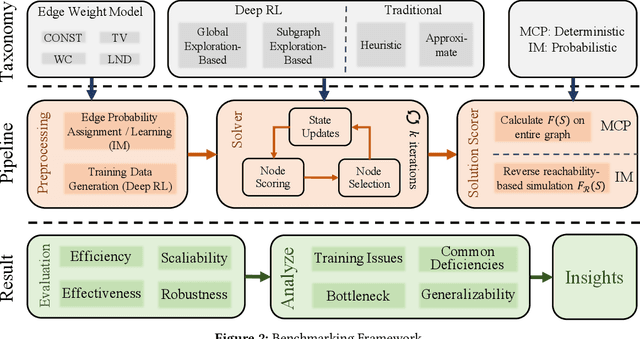

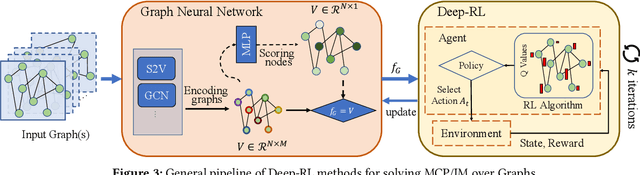

Abstract:Recent years have witnessed a growing trend toward employing deep reinforcement learning (Deep-RL) to derive heuristics for combinatorial optimization (CO) problems on graphs. Maximum Coverage Problem (MCP) and its probabilistic variant on social networks, Influence Maximization (IM), have been particularly prominent in this line of research. In this paper, we present a comprehensive benchmark study that thoroughly investigates the effectiveness and efficiency of five recent Deep-RL methods for MCP and IM. These methods were published in top data science venues, namely S2V-DQN, Geometric-QN, GCOMB, RL4IM, and LeNSE. Our findings reveal that, across various scenarios, the Lazy Greedy algorithm consistently outperforms all Deep-RL methods for MCP. In the case of IM, theoretically sound algorithms like IMM and OPIM demonstrate superior performance compared to Deep-RL methods in most scenarios. Notably, we observe an abnormal phenomenon in IM problem where Deep-RL methods slightly outperform IMM and OPIM when the influence spread nearly does not increase as the budget increases. Furthermore, our experimental results highlight common issues when applying Deep-RL methods to MCP and IM in practical settings. Finally, we discuss potential avenues for improving Deep-RL methods. Our benchmark study sheds light on potential challenges in current deep reinforcement learning research for solving combinatorial optimization problems.

Effective Edge-wise Representation Learning in Edge-Attributed Bipartite Graphs

Jun 19, 2024

Abstract:Graph representation learning (GRL) is to encode graph elements into informative vector representations, which can be used in downstream tasks for analyzing graph-structured data and has seen extensive applications in various domains. However, the majority of extant studies on GRL are geared towards generating node representations, which cannot be readily employed to perform edge-based analytics tasks in edge-attributed bipartite graphs (EABGs) that pervade the real world, e.g., spam review detection in customer-product reviews and identifying fraudulent transactions in user-merchant networks. Compared to node-wise GRL, learning edge representations (ERL) on such graphs is challenging due to the need to incorporate the structure and attribute semantics from the perspective of edges while considering the separate influence of two heterogeneous node sets U and V in bipartite graphs. To our knowledge, despite its importance, limited research has been devoted to this frontier, and existing workarounds all suffer from sub-par results. Motivated by this, this paper designs EAGLE, an effective ERL method for EABGs. Building on an in-depth and rigorous theoretical analysis, we propose the factorized feature propagation (FFP) scheme for edge representations with adequate incorporation of long-range dependencies of edges/features without incurring tremendous computation overheads. We further ameliorate FFP as a dual-view FFP by taking into account the influences from nodes in U and V severally in ERL. Extensive experiments on 5 real datasets showcase the effectiveness of the proposed EAGLE models in semi-supervised edge classification tasks. In particular, EAGLE can attain a considerable gain of at most 38.11% in AP and 1.86% in AUC when compared to the best baselines.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge