Zhewei Wei

AbFlow : End-to-end Paratope-Centric Antibody Design by Interaction Enhanced Flow Matching

Feb 06, 2026Abstract:Antigen-antibody binding is a critical process in the immune response. Although recent progress has advanced antibody design, current methods lack a generative framework for end-to-end modeling of full-atom antibody structures and struggle to fully exploit antigen-specific geometric information for optimizing local binding interfaces and global structures. To overcome these limitations, we introduce AbFlow, a flow-matching framework that leverages optimal transport to design full-atom antibodies end-to-end. AbFlow incorporates an extended velocity field network featuring an equivariant Surface Multi-channel Encoder, which uses surface-level antigen interaction data to refine the antibody structure, particularly the CDR-H3 region. Extensive experiments in paratoep-centric antibody design, multi-CDRs and full-atom antibody design, binding affinity optimization, and complex structure prediction show that AbFlow produces superior antigen-antibody complexes, especially at the contact interface, and markedly improves the binding affinity of generated antibodies.

Fused Gromov-Wasserstein Contrastive Learning for Effective Enzyme-Reaction Screening

Dec 09, 2025Abstract:Enzymes are crucial catalysts that enable a wide range of biochemical reactions. Efficiently identifying specific enzymes from vast protein libraries is essential for advancing biocatalysis. Traditional computational methods for enzyme screening and retrieval are time-consuming and resource-intensive. Recently, deep learning approaches have shown promise. However, these methods focus solely on the interaction between enzymes and reactions, overlooking the inherent hierarchical relationships within each domain. To address these limitations, we introduce FGW-CLIP, a novel contrastive learning framework based on optimizing the fused Gromov-Wasserstein distance. FGW-CLIP incorporates multiple alignments, including inter-domain alignment between reactions and enzymes and intra-domain alignment within enzymes and reactions. By introducing a tailored regularization term, our method minimizes the Gromov-Wasserstein distance between enzyme and reaction spaces, which enhances information integration across these domains. Extensive evaluations demonstrate the superiority of FGW-CLIP in challenging enzyme-reaction tasks. On the widely-used EnzymeMap benchmark, FGW-CLIP achieves state-of-the-art performance in enzyme virtual screening, as measured by BEDROC and EF metrics. Moreover, FGW-CLIP consistently outperforms across all three splits of ReactZyme, the largest enzyme-reaction benchmark, demonstrating robust generalization to novel enzymes and reactions. These results position FGW-CLIP as a promising framework for enzyme discovery in complex biochemical settings, with strong adaptability across diverse screening scenarios.

Future Link Prediction Without Memory or Aggregation

May 26, 2025Abstract:Future link prediction on temporal graphs is a fundamental task with wide applicability in real-world dynamic systems. These scenarios often involve both recurring (seen) and novel (unseen) interactions, requiring models to generalize effectively across both types of edges. However, existing methods typically rely on complex memory and aggregation modules, yet struggle to handle unseen edges. In this paper, we revisit the architecture of existing temporal graph models and identify two essential but overlooked modeling requirements for future link prediction: representing nodes with unique identifiers and performing target-aware matching between source and destination nodes. To this end, we propose Cross-Attention based Future Link Predictor on Temporal Graphs (CRAFT), a simple yet effective architecture that discards memory and aggregation modules and instead builds on two components: learnable node embeddings and cross-attention between the destination and the source's recent interactions. This design provides strong expressive power and enables target-aware modeling of the compatibility between candidate destinations and the source's interaction patterns. Extensive experiments on diverse datasets demonstrate that CRAFT consistently achieves superior performance with high efficiency, making it well-suited for large-scale real-world applications.

Rethinking Link Prediction for Directed Graphs

Feb 08, 2025

Abstract:Link prediction for directed graphs is a crucial task with diverse real-world applications. Recent advances in embedding methods and Graph Neural Networks (GNNs) have shown promising improvements. However, these methods often lack a thorough analysis of embedding expressiveness and suffer from ineffective benchmarks for a fair evaluation. In this paper, we propose a unified framework to assess the expressiveness of existing methods, highlighting the impact of dual embeddings and decoder design on performance. To address limitations in current experimental setups, we introduce DirLinkBench, a robust new benchmark with comprehensive coverage and standardized evaluation. The results show that current methods struggle to achieve strong performance on the new benchmark, while DiGAE outperforms others overall. We further revisit DiGAE theoretically, showing its graph convolution aligns with GCN on an undirected bipartite graph. Inspired by these insights, we propose a novel spectral directed graph auto-encoder SDGAE that achieves SOTA results on DirLinkBench. Finally, we analyze key factors influencing directed link prediction and highlight open challenges.

TGB-Seq Benchmark: Challenging Temporal GNNs with Complex Sequential Dynamics

Feb 05, 2025

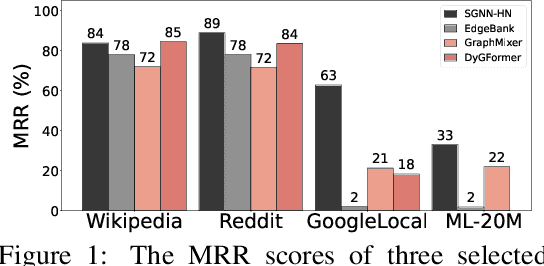

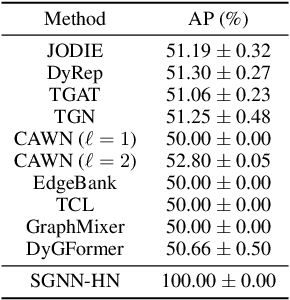

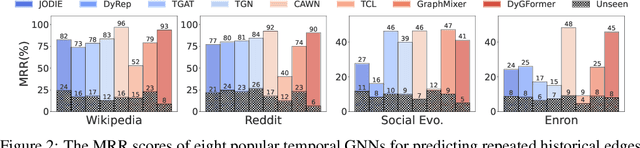

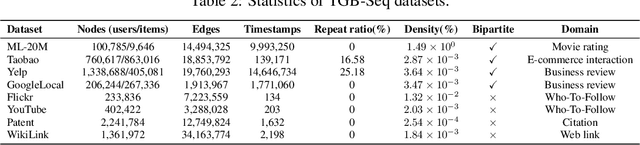

Abstract:Future link prediction is a fundamental challenge in various real-world dynamic systems. To address this, numerous temporal graph neural networks (temporal GNNs) and benchmark datasets have been developed. However, these datasets often feature excessive repeated edges and lack complex sequential dynamics, a key characteristic inherent in many real-world applications such as recommender systems and ``Who-To-Follow'' on social networks. This oversight has led existing methods to inadvertently downplay the importance of learning sequential dynamics, focusing primarily on predicting repeated edges. In this study, we demonstrate that existing methods, such as GraphMixer and DyGFormer, are inherently incapable of learning simple sequential dynamics, such as ``a user who has followed OpenAI and Anthropic is more likely to follow AI at Meta next.'' Motivated by this issue, we introduce the Temporal Graph Benchmark with Sequential Dynamics (TGB-Seq), a new benchmark carefully curated to minimize repeated edges, challenging models to learn sequential dynamics and generalize to unseen edges. TGB-Seq comprises large real-world datasets spanning diverse domains, including e-commerce interactions, movie ratings, business reviews, social networks, citation networks and web link networks. Benchmarking experiments reveal that current methods usually suffer significant performance degradation and incur substantial training costs on TGB-Seq, posing new challenges and opportunities for future research. TGB-Seq datasets, leaderboards, and example codes are available at https://tgb-seq.github.io/.

Large-Scale Spectral Graph Neural Networks via Laplacian Sparsification: Technical Report

Jan 08, 2025

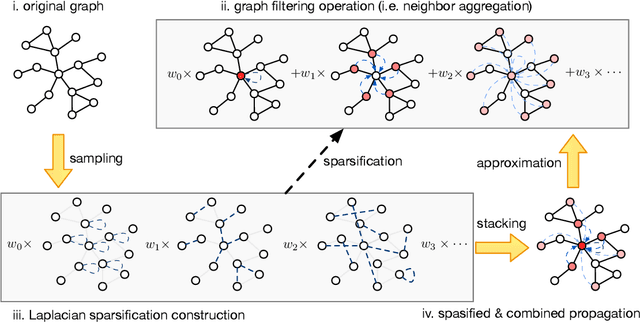

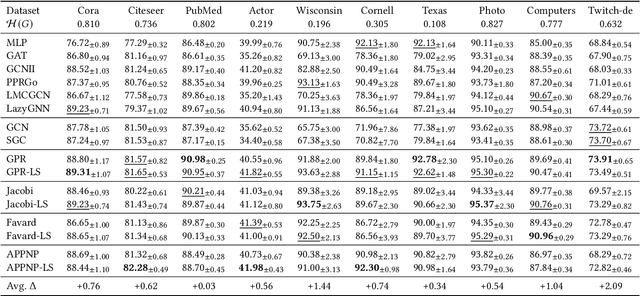

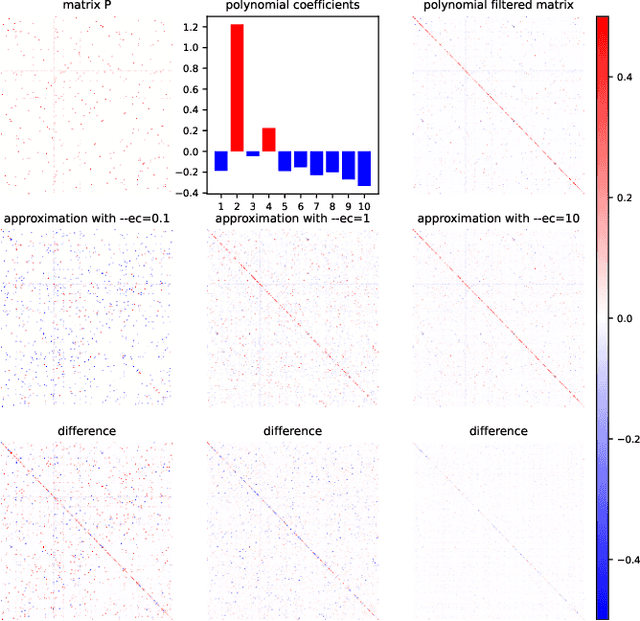

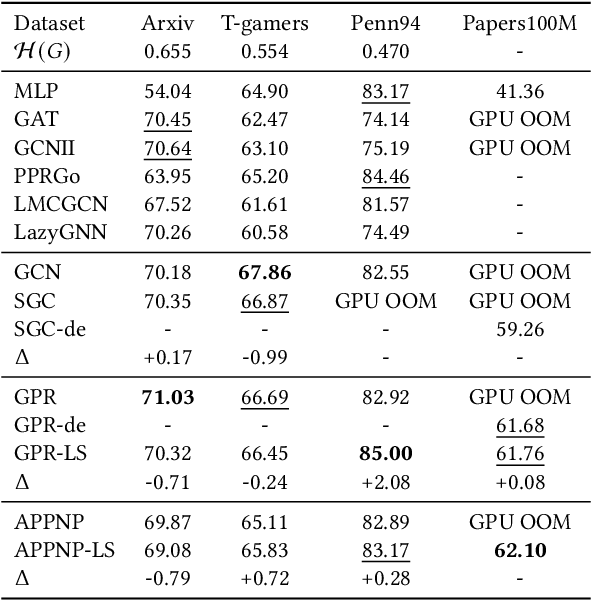

Abstract:Graph Neural Networks (GNNs) play a pivotal role in graph-based tasks for their proficiency in representation learning. Among the various GNN methods, spectral GNNs employing polynomial filters have shown promising performance on tasks involving both homophilous and heterophilous graph structures. However, The scalability of spectral GNNs on large graphs is limited because they learn the polynomial coefficients through multiple forward propagation executions during forward propagation. Existing works have attempted to scale up spectral GNNs by eliminating the linear layers on the input node features, a change that can disrupt end-to-end training, potentially impact performance, and become impractical with high-dimensional input features. To address the above challenges, we propose "Spectral Graph Neural Networks with Laplacian Sparsification (SGNN-LS)", a novel graph spectral sparsification method to approximate the propagation patterns of spectral GNNs. We prove that our proposed method generates Laplacian sparsifiers that can approximate both fixed and learnable polynomial filters with theoretical guarantees. Our method allows the application of linear layers on the input node features, enabling end-to-end training as well as the handling of raw text features. We conduct an extensive experimental analysis on datasets spanning various graph scales and properties to demonstrate the superior efficiency and effectiveness of our method. The results show that our method yields superior results in comparison with the corresponding approximated base models, especially on dataset Ogbn-papers100M(111M nodes, 1.6B edges) and MAG-scholar-C (2.8M features).

SRAP-Agent: Simulating and Optimizing Scarce Resource Allocation Policy with LLM-based Agent

Oct 18, 2024

Abstract:Public scarce resource allocation plays a crucial role in economics as it directly influences the efficiency and equity in society. Traditional studies including theoretical model-based, empirical study-based and simulation-based methods encounter limitations due to the idealized assumption of complete information and individual rationality, as well as constraints posed by limited available data. In this work, we propose an innovative framework, SRAP-Agent (Simulating and Optimizing Scarce Resource Allocation Policy with LLM-based Agent), which integrates Large Language Models (LLMs) into economic simulations, aiming to bridge the gap between theoretical models and real-world dynamics. Using public housing allocation scenarios as a case study, we conduct extensive policy simulation experiments to verify the feasibility and effectiveness of the SRAP-Agent and employ the Policy Optimization Algorithm with certain optimization objectives. The source code can be found in https://github.com/jijiarui-cather/SRAPAgent_Framework

Fast Second-Order Online Kernel Learning through Incremental Matrix Sketching and Decomposition

Oct 15, 2024Abstract:Online Kernel Learning (OKL) has attracted considerable research interest due to its promising predictive performance in streaming environments. Second-order approaches are particularly appealing for OKL as they often offer substantial improvements in regret guarantees. However, existing second-order OKL approaches suffer from at least quadratic time complexity with respect to the pre-set budget, rendering them unsuitable for meeting the real-time demands of large-scale streaming recommender systems. The singular value decomposition required to obtain explicit feature mapping is also computationally expensive due to the complete decomposition process. Moreover, the absence of incremental updates to manage approximate kernel space causes these algorithms to perform poorly in adversarial environments and real-world streaming recommendation datasets. To address these issues, we propose FORKS, a fast incremental matrix sketching and decomposition approach tailored for second-order OKL. FORKS constructs an incremental maintenance paradigm for second-order kernelized gradient descent, which includes incremental matrix sketching for kernel approximation and incremental matrix decomposition for explicit feature mapping construction. Theoretical analysis demonstrates that FORKS achieves a logarithmic regret guarantee on par with other second-order approaches while maintaining a linear time complexity w.r.t. the budget, significantly enhancing efficiency over existing approaches. We validate the performance of FORKS through extensive experiments conducted on real-world streaming recommendation datasets, demonstrating its superior scalability and robustness against adversarial attacks.

Matrix Sketching in Bandits: Current Pitfalls and New Framework

Oct 14, 2024Abstract:The utilization of sketching techniques has progressively emerged as a pivotal method for enhancing the efficiency of online learning. In linear bandit settings, current sketch-based approaches leverage matrix sketching to reduce the per-round time complexity from \(\Omega\left(d^2\right)\) to \(O(d)\), where \(d\) is the input dimension. Despite this improved efficiency, these approaches encounter critical pitfalls: if the spectral tail of the covariance matrix does not decrease rapidly, it can lead to linear regret. In this paper, we revisit the regret analysis and algorithm design concerning approximating the covariance matrix using matrix sketching in linear bandits. We illustrate how inappropriate sketch sizes can result in unbounded spectral loss, thereby causing linear regret. To prevent this issue, we propose Dyadic Block Sketching, an innovative streaming matrix sketching approach that adaptively manages sketch size to constrain global spectral loss. This approach effectively tracks the best rank-\( k \) approximation in an online manner, ensuring efficiency when the geometry of the covariance matrix is favorable. Then, we apply the proposed Dyadic Block Sketching to linear bandits and demonstrate that the resulting bandit algorithm can achieve sublinear regret without prior knowledge of the covariance matrix, even under the worst case. Our method is a general framework for efficient sketch-based linear bandits, applicable to all existing sketch-based approaches, and offers improved regret bounds accordingly. Additionally, we conduct comprehensive empirical studies using both synthetic and real-world data to validate the accuracy of our theoretical findings and to highlight the effectiveness of our algorithm.

Dynamic and Textual Graph Generation Via Large-Scale LLM-based Agent Simulation

Oct 13, 2024

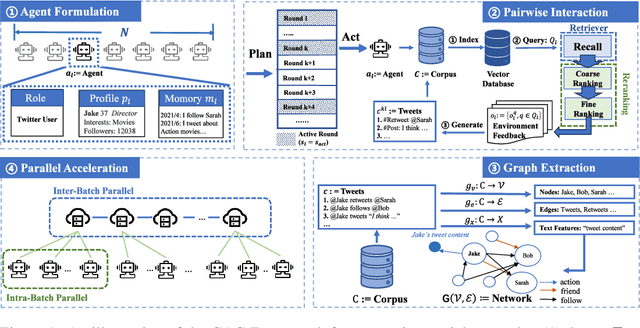

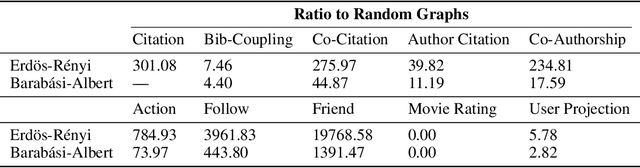

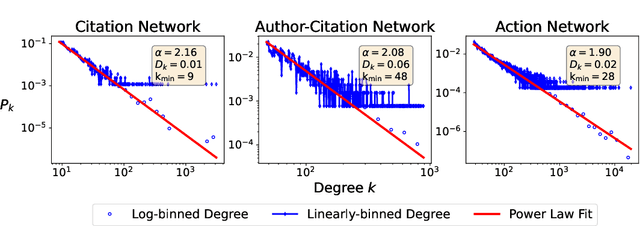

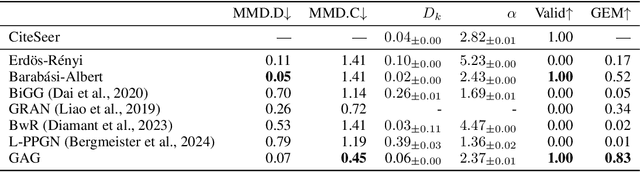

Abstract:Graph generation is a fundamental task that has been extensively studied in social, technological, and scientific analysis. For modeling the dynamic graph evolution process, traditional rule-based methods struggle to capture community structures within graphs, while deep learning methods only focus on fitting training graphs. This limits existing graph generators to producing graphs that adhere to predefined rules or closely resemble training datasets, achieving poor performance in dynamic graph generation. Given that graphs are abstract representations arising from pairwise interactions in human activities, a realistic simulation of human-wise interaction could provide deeper insights into the graph evolution mechanism. With the increasing recognition of large language models (LLMs) in simulating human behavior, we introduce GraphAgent-Generator (GAG), a novel simulation-based framework for dynamic graph generation. Without training or fine-tuning process of LLM, our framework effectively replicates seven macro-level structural characteristics in established network science theories while surpassing existing baselines in graph expansion tasks by 31\% on specific evaluation metrics. Through node classification task, we validate GAG effectively preserves characteristics of real-world network for node-wise textual features in generated text-rich graph. Furthermore, by incorporating parallel acceleration, GAG supports generating graphs with up to nearly 100,000 nodes or 10 million edges through large-scale LLM-based agent simulation, with a minimum speed-up of 90.4\%. The source code is available at https://anonymous.4open.science/r/GraphAgent-2206.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge