Siu Ming Yiu

HUMOF: Human Motion Forecasting in Interactive Social Scenes

Jun 05, 2025Abstract:Complex scenes present significant challenges for predicting human behaviour due to the abundance of interaction information, such as human-human and humanenvironment interactions. These factors complicate the analysis and understanding of human behaviour, thereby increasing the uncertainty in forecasting human motions. Existing motion prediction methods thus struggle in these complex scenarios. In this paper, we propose an effective method for human motion forecasting in interactive scenes. To achieve a comprehensive representation of interactions, we design a hierarchical interaction feature representation so that high-level features capture the overall context of the interactions, while low-level features focus on fine-grained details. Besides, we propose a coarse-to-fine interaction reasoning module that leverages both spatial and frequency perspectives to efficiently utilize hierarchical features, thereby enhancing the accuracy of motion predictions. Our method achieves state-of-the-art performance across four public datasets. Code will be released when this paper is published.

UniDemoiré: Towards Universal Image Demoiréing with Data Generation and Synthesis

Feb 10, 2025Abstract:Image demoir\'eing poses one of the most formidable challenges in image restoration, primarily due to the unpredictable and anisotropic nature of moir\'e patterns. Limited by the quantity and diversity of training data, current methods tend to overfit to a single moir\'e domain, resulting in performance degradation for new domains and restricting their robustness in real-world applications. In this paper, we propose a universal image demoir\'eing solution, UniDemoir\'e, which has superior generalization capability. Notably, we propose innovative and effective data generation and synthesis methods that can automatically provide vast high-quality moir\'e images to train a universal demoir\'eing model. Our extensive experiments demonstrate the cutting-edge performance and broad potential of our approach for generalized image demoir\'eing.

Generalizable Single-view Object Pose Estimation by Two-side Generating and Matching

Nov 24, 2024

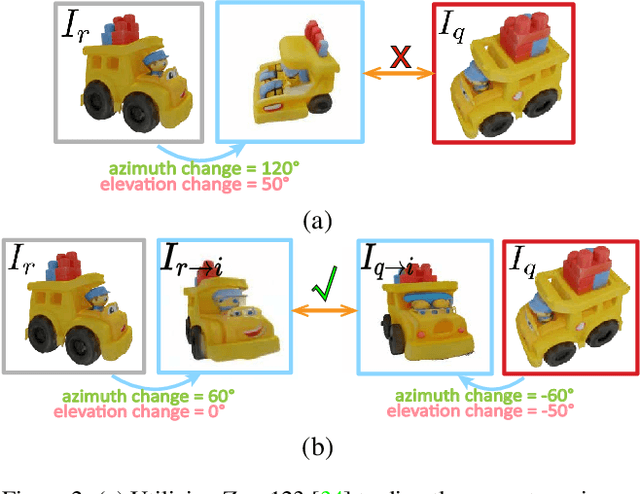

Abstract:In this paper, we present a novel generalizable object pose estimation method to determine the object pose using only one RGB image. Unlike traditional approaches that rely on instance-level object pose estimation and necessitate extensive training data, our method offers generalization to unseen objects without extensive training, operates with a single reference image of the object, and eliminates the need for 3D object models or multiple views of the object. These characteristics are achieved by utilizing a diffusion model to generate novel-view images and conducting a two-sided matching on these generated images. Quantitative experiments demonstrate the superiority of our method over existing pose estimation techniques across both synthetic and real-world datasets. Remarkably, our approach maintains strong performance even in scenarios with significant viewpoint changes, highlighting its robustness and versatility in challenging conditions. The code will be re leased at https://github.com/scy639/Gen2SM.

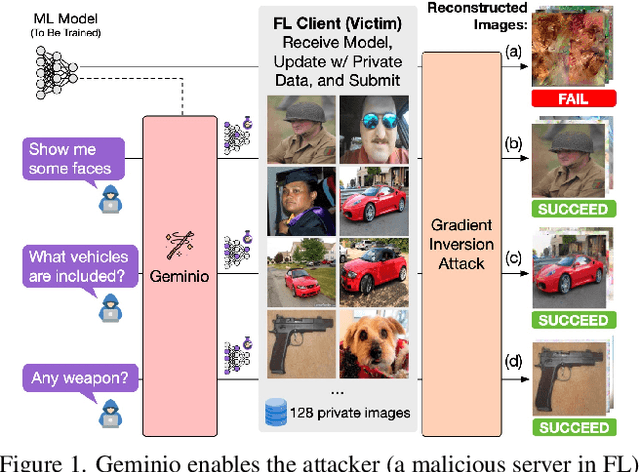

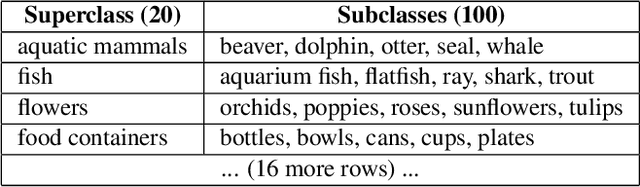

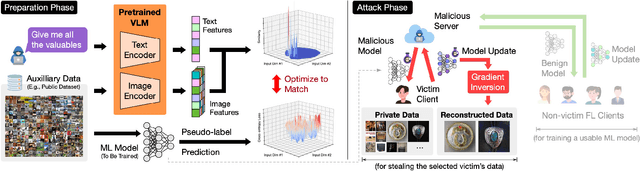

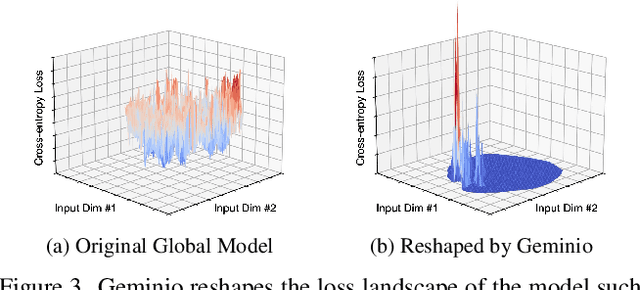

Geminio: Language-Guided Gradient Inversion Attacks in Federated Learning

Nov 22, 2024

Abstract:Foundation models that bridge vision and language have made significant progress, inspiring numerous life-enriching applications. However, their potential for misuse to introduce new threats remains largely unexplored. This paper reveals that vision-language models (VLMs) can be exploited to overcome longstanding limitations in gradient inversion attacks (GIAs) within federated learning (FL), where an FL server reconstructs private data samples from gradients shared by victim clients. Current GIAs face challenges in reconstructing high-resolution images, especially when the victim has a large local data batch. While focusing reconstruction on valuable samples rather than the entire batch is promising, existing methods lack the flexibility to allow attackers to specify their target data. In this paper, we introduce Geminio, the first approach to transform GIAs into semantically meaningful, targeted attacks. Geminio enables a brand new privacy attack experience: attackers can describe, in natural language, the types of data they consider valuable, and Geminio will prioritize reconstruction to focus on those high-value samples. This is achieved by leveraging a pretrained VLM to guide the optimization of a malicious global model that, when shared with and optimized by a victim, retains only gradients of samples that match the attacker-specified query. Extensive experiments demonstrate Geminio's effectiveness in pinpointing and reconstructing targeted samples, with high success rates across complex datasets under FL and large batch sizes and showing resilience against existing defenses.

Neural Networks with (Low-Precision) Polynomial Approximations: New Insights and Techniques for Accuracy Improvement

Feb 17, 2024Abstract:Replacing non-polynomial functions (e.g., non-linear activation functions such as ReLU) in a neural network with their polynomial approximations is a standard practice in privacy-preserving machine learning. The resulting neural network, called polynomial approximation of neural network (PANN) in this paper, is compatible with advanced cryptosystems to enable privacy-preserving model inference. Using ``highly precise'' approximation, state-of-the-art PANN offers similar inference accuracy as the underlying backbone model. However, little is known about the effect of approximation, and existing literature often determined the required approximation precision empirically. In this paper, we initiate the investigation of PANN as a standalone object. Specifically, our contribution is two-fold. Firstly, we provide an explanation on the effect of approximate error in PANN. In particular, we discovered that (1) PANN is susceptible to some type of perturbations; and (2) weight regularisation significantly reduces PANN's accuracy. We support our explanation with experiments. Secondly, based on the insights from our investigations, we propose solutions to increase inference accuracy for PANN. Experiments showed that combination of our solutions is very effective: at the same precision, our PANN is 10% to 50% more accurate than state-of-the-arts; and at the same accuracy, our PANN only requires a precision of $2^{-9}$ while state-of-the-art solution requires a precision of $2^{-12}$ using the ResNet-20 model on CIFAR-10 dataset.

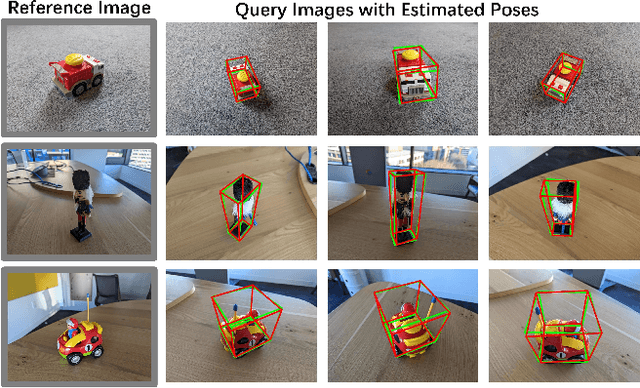

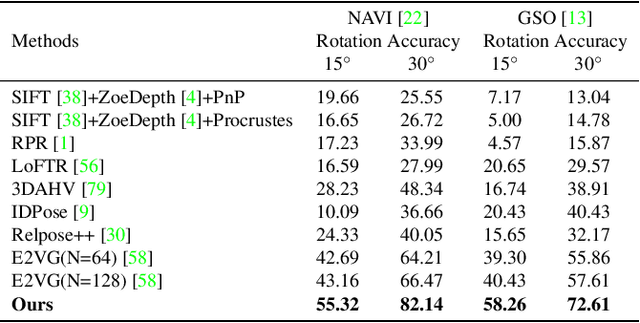

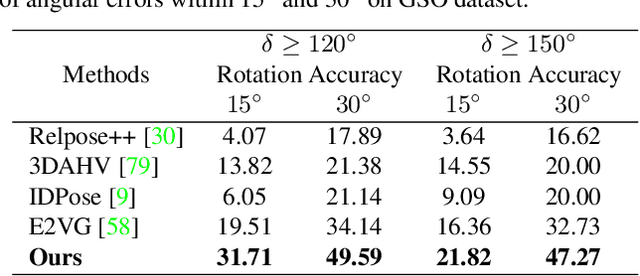

Extreme Two-View Geometry From Object Poses with Diffusion Models

Feb 05, 2024Abstract:Human has an incredible ability to effortlessly perceive the viewpoint difference between two images containing the same object, even when the viewpoint change is astonishingly vast with no co-visible regions in the images. This remarkable skill, however, has proven to be a challenge for existing camera pose estimation methods, which often fail when faced with large viewpoint differences due to the lack of overlapping local features for matching. In this paper, we aim to effectively harness the power of object priors to accurately determine two-view geometry in the face of extreme viewpoint changes. In our method, we first mathematically transform the relative camera pose estimation problem to an object pose estimation problem. Then, to estimate the object pose, we utilize the object priors learned from a diffusion model Zero123 to synthesize novel-view images of the object. The novel-view images are matched to determine the object pose and thus the two-view camera pose. In experiments, our method has demonstrated extraordinary robustness and resilience to large viewpoint changes, consistently estimating two-view poses with exceptional generalization ability across both synthetic and real-world datasets. Code will be available at https://github.com/scy639/Extreme-Two-View-Geometry-From-Object-Poses-with-Diffusion-Models.

Deep Efficient Private Neighbor Generation for Subgraph Federated Learning

Jan 19, 2024Abstract:Behemoth graphs are often fragmented and separately stored by multiple data owners as distributed subgraphs in many realistic applications. Without harming data privacy, it is natural to consider the subgraph federated learning (subgraph FL) scenario, where each local client holds a subgraph of the entire global graph, to obtain globally generalized graph mining models. To overcome the unique challenge of incomplete information propagation on local subgraphs due to missing cross-subgraph neighbors, previous works resort to the augmentation of local neighborhoods through the joint FL of missing neighbor generators and GNNs. Yet their technical designs have profound limitations regarding the utility, efficiency, and privacy goals of FL. In this work, we propose FedDEP to comprehensively tackle these challenges in subgraph FL. FedDEP consists of a series of novel technical designs: (1) Deep neighbor generation through leveraging the GNN embeddings of potential missing neighbors; (2) Efficient pseudo-FL for neighbor generation through embedding prototyping; and (3) Privacy protection through noise-less edge-local-differential-privacy. We analyze the correctness and efficiency of FedDEP, and provide theoretical guarantees on its privacy. Empirical results on four real-world datasets justify the clear benefits of proposed techniques.

Improving Factual Error Correction by Learning to Inject Factual Errors

Dec 12, 2023Abstract:Factual error correction (FEC) aims to revise factual errors in false claims with minimal editing, making them faithful to the provided evidence. This task is crucial for alleviating the hallucination problem encountered by large language models. Given the lack of paired data (i.e., false claims and their corresponding correct claims), existing methods typically adopt the mask-then-correct paradigm. This paradigm relies solely on unpaired false claims and correct claims, thus being referred to as distantly supervised methods. These methods require a masker to explicitly identify factual errors within false claims before revising with a corrector. However, the absence of paired data to train the masker makes accurately pinpointing factual errors within claims challenging. To mitigate this, we propose to improve FEC by Learning to Inject Factual Errors (LIFE), a three-step distantly supervised method: mask-corrupt-correct. Specifically, we first train a corruptor using the mask-then-corrupt procedure, allowing it to deliberately introduce factual errors into correct text. The corruptor is then applied to correct claims, generating a substantial amount of paired data. After that, we filter out low-quality data, and use the remaining data to train a corrector. Notably, our corrector does not require a masker, thus circumventing the bottleneck associated with explicit factual error identification. Our experiments on a public dataset verify the effectiveness of LIFE in two key aspects: Firstly, it outperforms the previous best-performing distantly supervised method by a notable margin of 10.59 points in SARI Final (19.3% improvement). Secondly, even compared to ChatGPT prompted with in-context examples, LIFE achieves a superiority of 7.16 points in SARI Final.

AnnoLLM: Making Large Language Models to Be Better Crowdsourced Annotators

Mar 29, 2023

Abstract:Many natural language processing (NLP) tasks rely on labeled data to train machine learning models to achieve high performance. However, data annotation can be a time-consuming and expensive process, especially when the task involves a large amount of data or requires specialized domains. Recently, GPT-3.5 series models have demonstrated remarkable few-shot and zero-shot ability across various NLP tasks. In this paper, we first claim that large language models (LLMs), such as GPT-3.5, can serve as an excellent crowdsourced annotator by providing them with sufficient guidance and demonstrated examples. To make LLMs to be better annotators, we propose a two-step approach, 'explain-then-annotate'. To be more precise, we begin by creating prompts for every demonstrated example, which we subsequently utilize to prompt a LLM to provide an explanation for why the specific ground truth answer/label was chosen for that particular example. Following this, we construct the few-shot chain-of-thought prompt with the self-generated explanation and employ it to annotate the unlabeled data. We conduct experiments on three tasks, including user input and keyword relevance assessment, BoolQ and WiC. The annotation results from GPT-3.5 surpasses those from crowdsourced annotation for user input and keyword relevance assessment. Additionally, for the other two tasks, GPT-3.5 achieves results that are comparable to those obtained through crowdsourced annotation.

Curriculum Sampling for Dense Retrieval with Document Expansion

Dec 18, 2022Abstract:The dual-encoder has become the de facto architecture for dense retrieval. Typically, it computes the latent representations of the query and document independently, thus failing to fully capture the interactions between the query and document. To alleviate this, recent work expects to get query-informed representations of documents. During training, it expands the document with a real query, while replacing the real query with a generated pseudo query at inference. This discrepancy between training and inference makes the dense retrieval model pay more attention to the query information but ignore the document when computing the document representation. As a result, it even performs worse than the vanilla dense retrieval model, since its performance depends heavily on the relevance between the generated queries and the real query. In this paper, we propose a curriculum sampling strategy, which also resorts to the pseudo query at training and gradually increases the relevance of the generated query to the real query. In this way, the retrieval model can learn to extend its attention from the document only to both the document and query, hence getting high-quality query-informed document representations. Experimental results on several passage retrieval datasets show that our approach outperforms the previous dense retrieval methods1.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge