Shuwen Wei

Solving a Nonlinear Blind Inverse Problem for Tagged MRI with Physics and Deep Generative Priors

Mar 01, 2026Abstract:Tagged MRI enables tracking internal tissue motion non-invasively. It encodes motion by modulating anatomy with periodic tags, which deform along with tissue. However, the entanglement between anatomy, tags and motion poses significant challenges for post-processing. The existence of tags and imaging blur hinders downstream tasks such as segmenting anatomy. Tag fading, due to T1-relaxation, disrupts the brightness constancy assumption for motion tracking. For decades, these challenges have been handled in isolation and sub-optimally. In contrast, we introduce a blind and nonlinear inverse framework for tagged MRI that, for the first time, unifies these tasks: anatomical image recovery, high-resolution cine image synthesis, and motion estimation. At its core, the synergy of MR physics and generative priors enables us to blindly estimate the unknown forward imaging models and high-resolution underlying anatomy, while simultaneously tracking 3D diffeomorphic Lagrangian motion over time. Experiments on tagged brain MRI demonstrate that our approach yields high-resolution anatomy images, cine images, and more accurate motion than specialized methods.

Likelihood-Separable Diffusion Inference for Multi-Image MRI Super-Resolution

Jan 20, 2026Abstract:Diffusion models are the current state-of-the-art for solving inverse problems in imaging. Their impressive generative capability allows them to approximate sampling from a prior distribution, which alongside a known likelihood function permits posterior sampling without retraining the model. While recent methods have made strides in advancing the accuracy of posterior sampling, the majority focuses on single-image inverse problems. However, for modalities such as magnetic resonance imaging (MRI), it is common to acquire multiple complementary measurements, each low-resolution along a different axis. In this work, we generalize common diffusion-based inverse single-image problem solvers for multi-image super-resolution (MISR) MRI. We show that the DPS likelihood correction allows an exactly-separable gradient decomposition across independently acquired measurements, enabling MISR without constructing a joint operator, modifying the diffusion model, or increasing network function evaluations. We derive MISR versions of DPS, DMAP, DPPS, and diffusion-based PnP/ADMM, and demonstrate substantial gains over SISR across $4\times/8\times/16\times$ anisotropic degradations. Our results achieve state-of-the-art super-resolution of anisotropic MRI volumes and, critically, enable reconstruction of near-isotropic anatomy from routine 2D multi-slice acquisitions, which are otherwise highly degraded in orthogonal views.

Pretraining Deformable Image Registration Networks with Random Images

May 30, 2025Abstract:Recent advances in deep learning-based medical image registration have shown that training deep neural networks~(DNNs) does not necessarily require medical images. Previous work showed that DNNs trained on randomly generated images with carefully designed noise and contrast properties can still generalize well to unseen medical data. Building on this insight, we propose using registration between random images as a proxy task for pretraining a foundation model for image registration. Empirical results show that our pretraining strategy improves registration accuracy, reduces the amount of domain-specific data needed to achieve competitive performance, and accelerates convergence during downstream training, thereby enhancing computational efficiency.

Beyond the LUMIR challenge: The pathway to foundational registration models

May 30, 2025

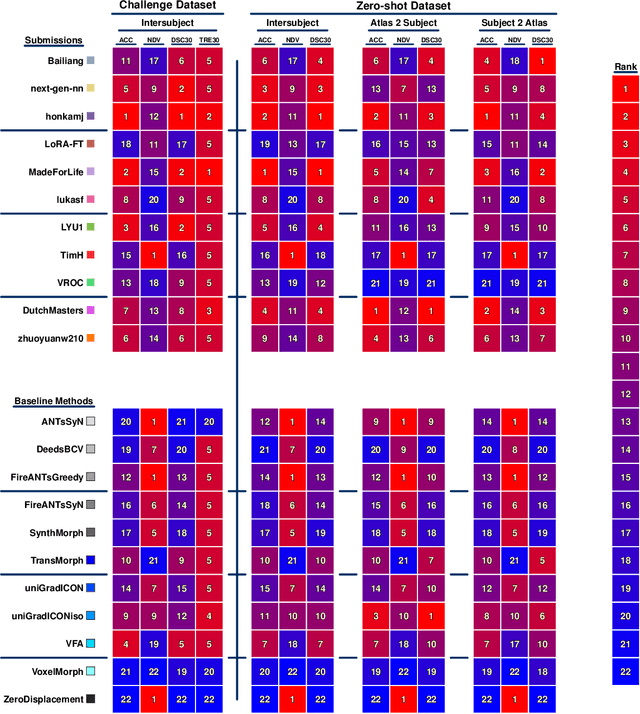

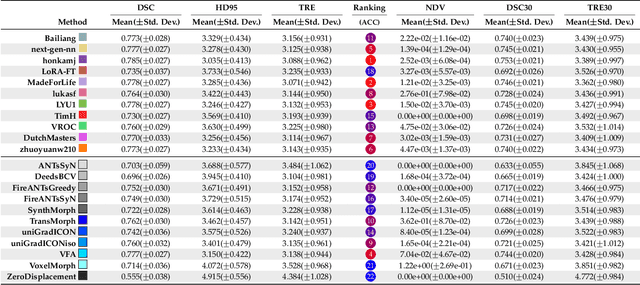

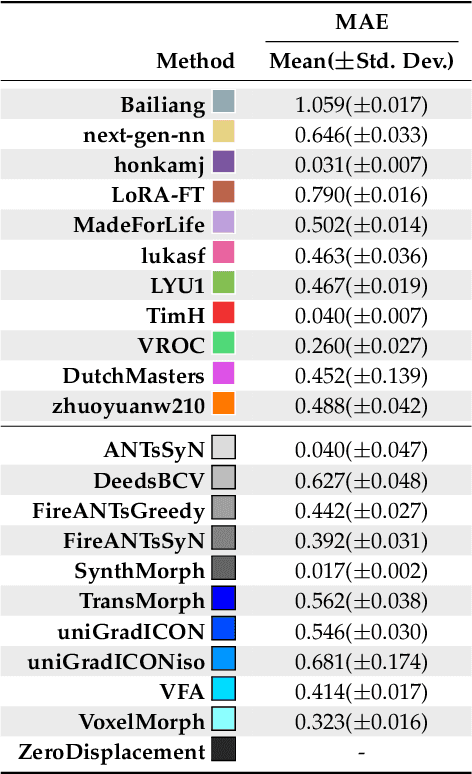

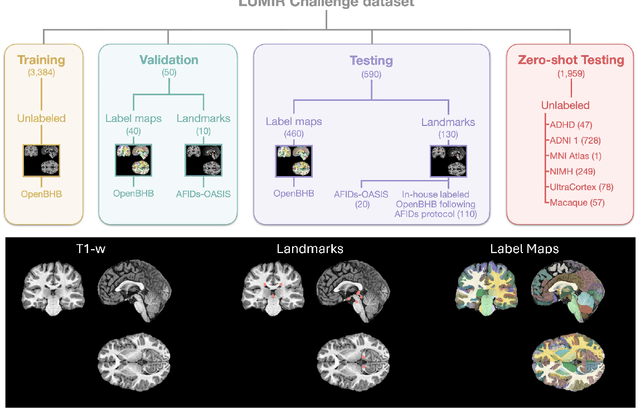

Abstract:Medical image challenges have played a transformative role in advancing the field, catalyzing algorithmic innovation and establishing new performance standards across diverse clinical applications. Image registration, a foundational task in neuroimaging pipelines, has similarly benefited from the Learn2Reg initiative. Building on this foundation, we introduce the Large-scale Unsupervised Brain MRI Image Registration (LUMIR) challenge, a next-generation benchmark designed to assess and advance unsupervised brain MRI registration. Distinct from prior challenges that leveraged anatomical label maps for supervision, LUMIR removes this dependency by providing over 4,000 preprocessed T1-weighted brain MRIs for training without any label maps, encouraging biologically plausible deformation modeling through self-supervision. In addition to evaluating performance on 590 held-out test subjects, LUMIR introduces a rigorous suite of zero-shot generalization tasks, spanning out-of-domain imaging modalities (e.g., FLAIR, T2-weighted, T2*-weighted), disease populations (e.g., Alzheimer's disease), acquisition protocols (e.g., 9.4T MRI), and species (e.g., macaque brains). A total of 1,158 subjects and over 4,000 image pairs were included for evaluation. Performance was assessed using both segmentation-based metrics (Dice coefficient, 95th percentile Hausdorff distance) and landmark-based registration accuracy (target registration error). Across both in-domain and zero-shot tasks, deep learning-based methods consistently achieved state-of-the-art accuracy while producing anatomically plausible deformation fields. The top-performing deep learning-based models demonstrated diffeomorphic properties and inverse consistency, outperforming several leading optimization-based methods, and showing strong robustness to most domain shifts, the exception being a drop in performance on out-of-domain contrasts.

Brightness-Invariant Tracking Estimation in Tagged MRI

May 23, 2025Abstract:Magnetic resonance (MR) tagging is an imaging technique for noninvasively tracking tissue motion in vivo by creating a visible pattern of magnetization saturation (tags) that deforms with the tissue. Due to longitudinal relaxation and progression to steady-state, the tags and tissue brightnesses change over time, which makes tracking with optical flow methods error-prone. Although Fourier methods can alleviate these problems, they are also sensitive to brightness changes as well as spectral spreading due to motion. To address these problems, we introduce the brightness-invariant tracking estimation (BRITE) technique for tagged MRI. BRITE disentangles the anatomy from the tag pattern in the observed tagged image sequence and simultaneously estimates the Lagrangian motion. The inherent ill-posedness of this problem is addressed by leveraging the expressive power of denoising diffusion probabilistic models to represent the probabilistic distribution of the underlying anatomy and the flexibility of physics-informed neural networks to estimate biologically-plausible motion. A set of tagged MR images of a gel phantom was acquired with various tag periods and imaging flip angles to demonstrate the impact of brightness variations and to validate our method. The results show that BRITE achieves more accurate motion and strain estimates as compared to other state of the art methods, while also being resistant to tag fading.

Correlation Ratio for Unsupervised Learning of Multi-modal Deformable Registration

Apr 16, 2025Abstract:In recent years, unsupervised learning for deformable image registration has been a major research focus. This approach involves training a registration network using pairs of moving and fixed images, along with a loss function that combines an image similarity measure and deformation regularization. For multi-modal image registration tasks, the correlation ratio has been a widely-used image similarity measure historically, yet it has been underexplored in current deep learning methods. Here, we propose a differentiable correlation ratio to use as a loss function for learning-based multi-modal deformable image registration. This approach extends the traditionally non-differentiable implementation of the correlation ratio by using the Parzen windowing approximation, enabling backpropagation with deep neural networks. We validated the proposed correlation ratio on a multi-modal neuroimaging dataset. In addition, we established a Bayesian training framework to study how the trade-off between the deformation regularizer and similarity measures, including mutual information and our proposed correlation ratio, affects the registration performance.

ECLARE: Efficient cross-planar learning for anisotropic resolution enhancement

Mar 14, 2025Abstract:In clinical imaging, magnetic resonance (MR) image volumes are often acquired as stacks of 2D slices, permitting decreased scan times, improved signal-to-noise ratio, and image contrasts unique to 2D MR pulse sequences. While this is sufficient for clinical evaluation, automated algorithms designed for 3D analysis perform sub-optimally on 2D-acquired scans, especially those with thick slices and gaps between slices. Super-resolution (SR) methods aim to address this problem, but previous methods do not address all of the following: slice profile shape estimation, slice gap, domain shift, and non-integer / arbitrary upsampling factors. In this paper, we propose ECLARE (Efficient Cross-planar Learning for Anisotropic Resolution Enhancement), a self-SR method that addresses each of these factors. ECLARE estimates the slice profile from the 2D-acquired multi-slice MR volume, trains a network to learn the mapping from low-resolution to high-resolution in-plane patches from the same volume, and performs SR with anti-aliasing. We compared ECLARE to cubic B-spline interpolation, SMORE, and other contemporary SR methods. We used realistic and representative simulations so that quantitative performance against a ground truth could be computed, and ECLARE outperformed all other methods in both signal recovery and downstream tasks. On real data for which there is no ground truth, ECLARE demonstrated qualitative superiority over other methods as well. Importantly, as ECLARE does not use external training data it cannot suffer from domain shift between training and testing. Our code is open-source and available at https://www.github.com/sremedios/eclare.

Unsupervised learning of spatially varying regularization for diffeomorphic image registration

Dec 23, 2024Abstract:Spatially varying regularization accommodates the deformation variations that may be necessary for different anatomical regions during deformable image registration. Historically, optimization-based registration models have harnessed spatially varying regularization to address anatomical subtleties. However, most modern deep learning-based models tend to gravitate towards spatially invariant regularization, wherein a homogenous regularization strength is applied across the entire image, potentially disregarding localized variations. In this paper, we propose a hierarchical probabilistic model that integrates a prior distribution on the deformation regularization strength, enabling the end-to-end learning of a spatially varying deformation regularizer directly from the data. The proposed method is straightforward to implement and easily integrates with various registration network architectures. Additionally, automatic tuning of hyperparameters is achieved through Bayesian optimization, allowing efficient identification of optimal hyperparameters for any given registration task. Comprehensive evaluations on publicly available datasets demonstrate that the proposed method significantly improves registration performance and enhances the interpretability of deep learning-based registration, all while maintaining smooth deformations.

Unique MS Lesion Identification from MRI

Oct 12, 2024

Abstract:Unique identification of multiple sclerosis (MS) white matter lesions (WMLs) is important to help characterize MS progression. WMLs are routinely identified from magnetic resonance images (MRIs) but the resultant total lesion load does not correlate well with EDSS; whereas mean unique lesion volume has been shown to correlate with EDSS. Our approach builds on prior work by incorporating Hessian matrix computation from lesion probability maps before using the random walker algorithm to estimate the volume of each unique lesion. Synthetic images demonstrate our ability to accurately count the number of lesions present. The takeaways, are: 1) that our method correctly identifies all lesions including many that are missed by previous methods; 2) we can better separate confluent lesions; and 3) we can accurately capture the total volume of WMLs in a given probability map. This work will allow new more meaningful statistics to be computed from WMLs in brain MRIs

Unsupervised Learning of Multi-modal Affine Registration for PET/CT

Sep 20, 2024

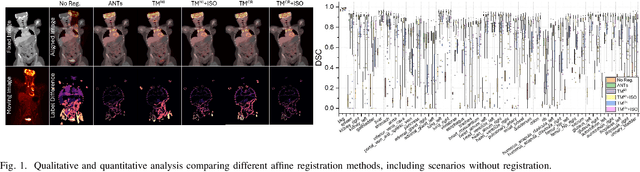

Abstract:Affine registration plays a crucial role in PET/CT imaging, where aligning PET with CT images is challenging due to their respective functional and anatomical representations. Despite the significant promise shown by recent deep learning (DL)-based methods in various medical imaging applications, their application to multi-modal PET/CT affine registration remains relatively unexplored. This study investigates a DL-based approach for PET/CT affine registration. We introduce a novel method using Parzen windowing to approximate the correlation ratio, which acts as the image similarity measure for training DNNs in multi-modal registration. Additionally, we propose a multi-scale, instance-specific optimization scheme that iteratively refines the DNN-generated affine parameters across multiple image resolutions. Our method was evaluated against the widely used mutual information metric and a popular optimization-based technique from the ANTs package, using a large public FDG-PET/CT dataset with synthetic affine transformations. Our approach achieved a mean Dice Similarity Coefficient (DSC) of 0.870, outperforming the compared methods and demonstrating its effectiveness in multi-modal PET/CT image registration.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge