Joel Honkamaa

Strategies for Robust Deep Learning Based Deformable Registration

Oct 27, 2025Abstract:Deep learning based deformable registration methods have become popular in recent years. However, their ability to generalize beyond training data distribution can be poor, significantly hindering their usability. LUMIR brain registration challenge for Learn2Reg 2025 aims to advance the field by evaluating the performance of the registration on contrasts and modalities different from those included in the training set. Here we describe our submission to the challenge, which proposes a very simple idea for significantly improving robustness by transforming the images into MIND feature space before feeding them into the model. In addition, a special ensembling strategy is proposed that shows a small but consistent improvement.

Beyond the LUMIR challenge: The pathway to foundational registration models

May 30, 2025

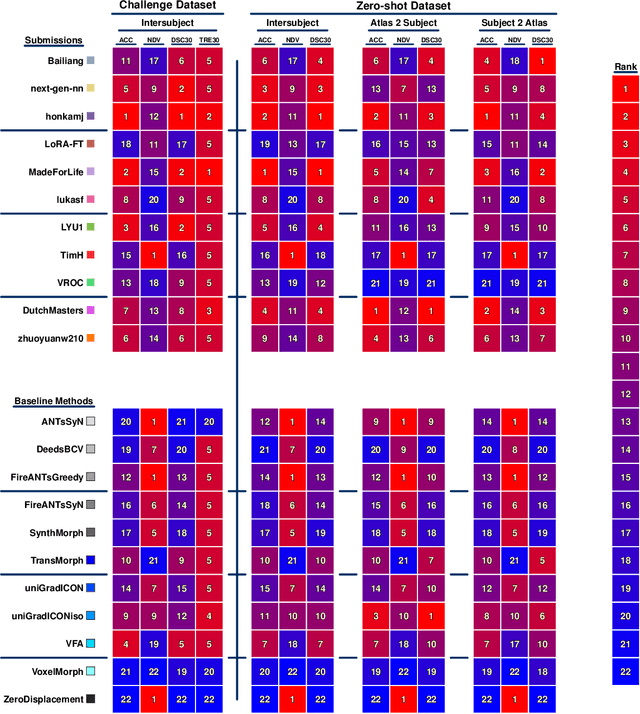

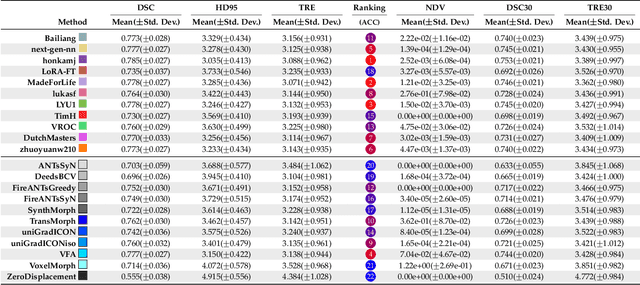

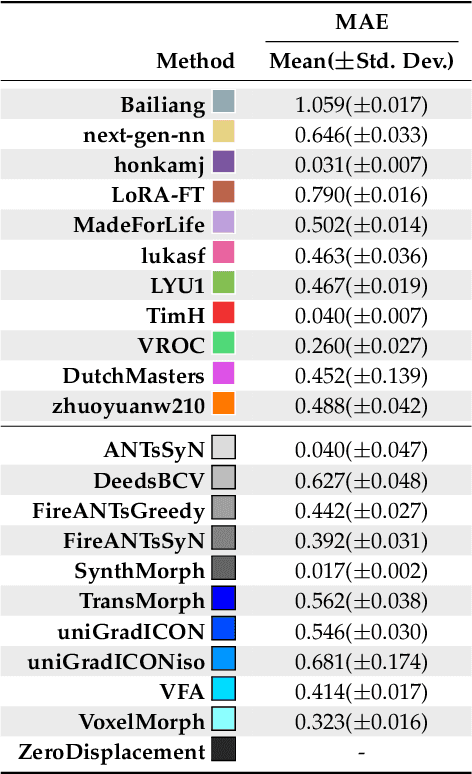

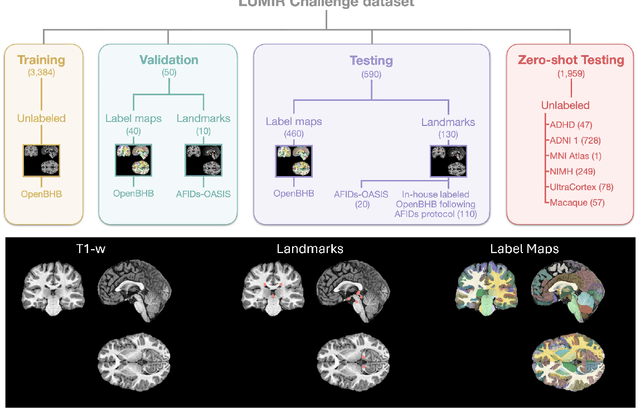

Abstract:Medical image challenges have played a transformative role in advancing the field, catalyzing algorithmic innovation and establishing new performance standards across diverse clinical applications. Image registration, a foundational task in neuroimaging pipelines, has similarly benefited from the Learn2Reg initiative. Building on this foundation, we introduce the Large-scale Unsupervised Brain MRI Image Registration (LUMIR) challenge, a next-generation benchmark designed to assess and advance unsupervised brain MRI registration. Distinct from prior challenges that leveraged anatomical label maps for supervision, LUMIR removes this dependency by providing over 4,000 preprocessed T1-weighted brain MRIs for training without any label maps, encouraging biologically plausible deformation modeling through self-supervision. In addition to evaluating performance on 590 held-out test subjects, LUMIR introduces a rigorous suite of zero-shot generalization tasks, spanning out-of-domain imaging modalities (e.g., FLAIR, T2-weighted, T2*-weighted), disease populations (e.g., Alzheimer's disease), acquisition protocols (e.g., 9.4T MRI), and species (e.g., macaque brains). A total of 1,158 subjects and over 4,000 image pairs were included for evaluation. Performance was assessed using both segmentation-based metrics (Dice coefficient, 95th percentile Hausdorff distance) and landmark-based registration accuracy (target registration error). Across both in-domain and zero-shot tasks, deep learning-based methods consistently achieved state-of-the-art accuracy while producing anatomically plausible deformation fields. The top-performing deep learning-based models demonstrated diffeomorphic properties and inverse consistency, outperforming several leading optimization-based methods, and showing strong robustness to most domain shifts, the exception being a drop in performance on out-of-domain contrasts.

New multimodal similarity measure for image registration via modeling local functional dependence with linear combination of learned basis functions

Mar 07, 2025

Abstract:The deformable registration of images of different modalities, essential in many medical imaging applications, remains challenging. The main challenge is developing a robust measure for image overlap despite the compared images capturing different aspects of the underlying tissue. Here, we explore similarity metrics based on functional dependence between intensity values of registered images. Although functional dependence is too restrictive on the global scale, earlier work has shown competitive performance in deformable registration when such measures are applied over small enough contexts. We confirm this finding and further develop the idea by modeling local functional dependence via the linear basis function model with the basis functions learned jointly with the deformation. The measure can be implemented via convolutions, making it efficient to compute on GPUs. We release the method as an easy-to-use tool and show good performance on three datasets compared to well-established baseline and earlier functional dependence-based methods.

ASymReg: Robust symmetric image registration using anti-symmetric formulation and deformation inversion layers

Mar 17, 2023Abstract:Deep learning based deformable medical image registration methods have emerged as a strong alternative for classical iterative registration methods. However, the currently published deep learning methods do not fulfill as strict symmetry properties with respect to the inputs as some classical registration methods, for which the registration outcome is the same regardless of the order of the inputs. While some deep learning methods label themselves as symmetric, they are either symmetric only a priori, which does not guarantee symmetry for any given input pair, or they do not generate accurate explicit inverses. In this work, we propose a novel registration architecture which by construction makes the registration network anti-symmetric with respect to its inputs. We demonstrate on two datasets that the proposed method achieves state-of-the-art results in terms of registration accuracy and that the generated deformations have accurate explicit inverses.

Deformation equivariant cross-modality image synthesis with paired non-aligned training data

Aug 26, 2022

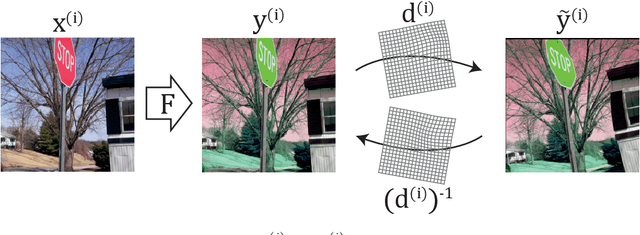

Abstract:Cross-modality image synthesis is an active research topic with multiple medical clinically relevant applications. Recently, methods allowing training with paired but misaligned data have started to emerge. However, no robust and well-performing methods applicable to a wide range of real world data sets exist. In this work, we propose a generic solution to the problem of cross-modality image synthesis with paired but non-aligned data by introducing new deformation equivariance encouraging loss functions. The method consists of joint training of an image synthesis network together with separate registration networks and allows adversarial training conditioned on the input even with misaligned data. The work lowers the bar for new clinical applications by allowing effortless training of cross-modality image synthesis networks for more difficult data sets and opens up opportunities for the development of new generic learning based cross-modality registration algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge