Sethu Vijayakumar

Online Estimation and Manipulation of Articulated Objects

Jan 04, 2026

Abstract: From refrigerators to kitchen drawers, humans interact with articulated objects effortlessly every day while completing household chores. For automating these tasks, service robots must be capable of manipulating arbitrary articulated objects. Recent deep learning methods have been shown to predict valuable priors on the affordance of articulated objects from vision. In contrast, many other works estimate object articulations by observing the articulation motion, but this requires the robot to already be capable of manipulating the object. In this article, we propose a novel approach combining these methods by using a factor graph for online estimation of articulation which fuses learned visual priors and proprioceptive sensing during interaction into an analytical model of articulation based on Screw Theory. With our method, a robotic system makes an initial prediction of articulation from vision before touching the object, and then quickly updates the estimate from kinematic and force sensing during manipulation. We evaluate our method extensively in both simulations and real-world robotic manipulation experiments. We demonstrate several closed-loop estimation and manipulation experiments in which the robot was capable of opening previously unseen drawers. In real hardware experiments, the robot achieved a 75% success rate for autonomous opening of unknown articulated objects.
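
The abstract models articulation with Screw Theory. The sketch below shows how one screw parameterisation predicts where a grasped handle moves as a joint opens. The parameterisation is an axis direction, a point on the axis and a pitch. A revolute hinge is the zero-pitch case, and a prismatic drawer is the pure-translation limit. This is a generic illustration only, not the authors' factor-graph estimator. All function names and the example door geometry are hypothetical.

```python
import numpy as np


def skew(w):
    """3x3 skew-symmetric matrix such that skew(w) @ v == np.cross(w, v)."""
    return np.array([[0.0, -w[2], w[1]],
                     [w[2], 0.0, -w[0]],
                     [-w[1], w[0], 0.0]])


def screw_transform(axis, point, pitch, theta):
    """4x4 homogeneous transform for a displacement theta about a screw axis.

    axis  : direction of the screw axis (normalised internally)
    point : any point on the axis
    pitch : translation along the axis per radian (0 for a pure revolute hinge)
    theta : joint displacement in radians
    """
    w = np.asarray(axis, dtype=float)
    w = w / np.linalg.norm(w)
    q = np.asarray(point, dtype=float)
    W = skew(w)
    # Rodrigues' formula: rotation by theta about the unit direction w
    R = np.eye(3) + np.sin(theta) * W + (1.0 - np.cos(theta)) * (W @ W)
    # rotation about a line through q, plus pitch * theta translation along w
    p = (np.eye(3) - R) @ q + pitch * theta * w
    T = np.eye(4)
    T[:3, :3] = R
    T[:3, 3] = p
    return T


def prismatic_transform(axis, d):
    """Pure translation by distance d along a direction (the infinite-pitch limit)."""
    v = np.asarray(axis, dtype=float)
    T = np.eye(4)
    T[:3, 3] = d * v / np.linalg.norm(v)
    return T


# Hypothetical cabinet door: hinge along +z through (1, 0, 0), handle at the origin.
handle = np.array([0.0, 0.0, 0.0, 1.0])
for theta in np.linspace(0.0, np.pi / 2, 4):
    print(theta, (screw_transform([0, 0, 1], [1, 0, 0], 0.0, theta) @ handle)[:3])
```

Under this model, estimating an articulation amounts to estimating `axis`, `point` and `pitch` (or a prismatic `axis`) from visual priors and observed end-effector motion. The sketch does not attempt that estimation.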

Attentive Feature Aggregation or: How Policies Learn to Stop Worrying about Robustness and Attend to Task-Relevant Visual Cues

Nov 13, 2025

Abstract: The adoption of pre-trained visual representations (PVRs), leveraging features from large-scale vision models, has become a popular paradigm for training visuomotor policies. However, these powerful representations can encode a broad range of task-irrelevant scene information, making the resulting trained policies vulnerable to out-of-domain visual changes and distractors. In this work we address visuomotor policy feature pooling as a solution to the observed lack of robustness in perturbed scenes. We achieve this via Attentive Feature Aggregation (AFA), a lightweight, trainable pooling mechanism that learns to naturally attend to task-relevant visual cues, ignoring even semantically rich scene distractors. Through extensive experiments in both simulation and the real world, we demonstrate that policies trained with AFA significantly outperform standard pooling approaches in the presence of visual perturbations, without requiring expensive dataset augmentation or fine-tuning of the PVR. Our findings show that ignoring extraneous visual information is a crucial step towards deploying robust and generalisable visuomotor policies. Project Page: tsagkas.github.io/afa
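
AFA is described as a lightweight, trainable pooling layer over frozen PVR features. The exact architecture is not given in the abstract. The sketch below therefore assumes a generic single-query attention pooling layer and contrasts it with the mean pooling it would replace. The names `query`, `W_k` and `W_v` are hypothetical trainable parameters. The patch tokens stand in for the output of a frozen encoder.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def mean_pool(tokens):
    """Baseline pooling: every patch token contributes equally, (N, D) -> (D,)."""
    return tokens.mean(axis=0)


def attentive_pool(tokens, query, W_k, W_v):
    """Single-query attention pooling over frozen patch tokens.

    tokens : (N, D) patch features from a frozen pre-trained encoder
    query  : (D_k,) trainable query vector (hypothetical)
    W_k    : (D, D_k) trainable key projection (hypothetical)
    W_v    : (D, D_v) trainable value projection (hypothetical)
    Returns the pooled (D_v,) feature and the (N,) attention weights.
    """
    keys = tokens @ W_k                                  # (N, D_k)
    scores = keys @ query / np.sqrt(query.shape[0])      # (N,) scaled dot products
    weights = softmax(scores)                            # non-negative, sums to 1
    pooled = weights @ (tokens @ W_v)                    # weighted sum of values
    return pooled, weights


rng = np.random.default_rng(0)
N, D = 16, 8
tokens = rng.normal(size=(N, D))
pooled, weights = attentive_pool(tokens, rng.normal(size=D), np.eye(D), np.eye(D))
print(pooled.shape, weights.sum())
```

With mean pooling, a distractor patch shifts the policy input as much as a task-relevant patch does. With learned attention weights, patches that score low against the query contribute almost nothing.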

Enhancing Tactile-based Reinforcement Learning for Robotic Control

Oct 24, 2025

Abstract: Achieving safe, reliable real-world robotic manipulation requires agents to evolve beyond vision and incorporate tactile sensing to overcome sensory deficits and reliance on idealised state information. Despite its potential, the efficacy of tactile sensing in reinforcement learning (RL) remains inconsistent. We address this by developing self-supervised learning (SSL) methodologies to more effectively harness tactile observations, focusing on a scalable setup of proprioception and sparse binary contacts. We empirically demonstrate that sparse binary tactile signals are critical for dexterity, particularly for interactions that proprioceptive control errors do not register, such as decoupled robot-object motions. Our agents achieve superhuman dexterity in complex contact tasks (ball bouncing and Baoding ball rotation). Furthermore, we find that decoupling the SSL memory from the on-policy memory can improve performance. We release the Robot Tactile Olympiad (RoTO) benchmark to standardise and promote future research in tactile-based manipulation. Project page: https://elle-miller.github.io/tactile_rl

Few-shot transfer of tool-use skills using human demonstrations with proximity and tactile sensing

Jul 17, 2025

Abstract: Tools extend the manipulation abilities of robots, much like they do for humans. Despite human expertise in tool manipulation, teaching robots these skills faces challenges. The complexity arises from the interplay of two simultaneous points of contact: one between the robot and the tool, and another between the tool and the environment. Tactile and proximity sensors play a crucial role in identifying these complex contacts. However, learning tool manipulation using these sensors remains challenging due to limited real-world data and the large sim-to-real gap. To address this, we propose a few-shot tool-use skill transfer framework using multimodal sensing. The framework involves pre-training the base policy to capture contact states common in tool-use skills in simulation and fine-tuning it with human demonstrations collected in the real-world target domain to bridge the domain gap. We validate that this framework enables teaching surface-following tasks using tools with diverse physical and geometric properties with a small number of demonstrations on the Franka Emika robot arm. Our analysis suggests that the robot acquires new tool-use skills by transferring the ability to recognise tool-environment contact relationships from pre-trained to fine-tuned policies. Additionally, combining proximity and tactile sensors enhances the identification of contact states and environmental geometry.

Fast Flow-based Visuomotor Policies via Conditional Optimal Transport Couplings

May 02, 2025

Abstract: Diffusion and flow matching policies have recently demonstrated remarkable performance in robotic applications by accurately capturing multimodal robot trajectory distributions. However, their computationally expensive inference, due to the numerical integration of an ODE or SDE, limits their applicability as real-time controllers for robots. We introduce a methodology that utilizes conditional Optimal Transport couplings between noise and samples to enforce straight solutions in the flow ODE for robot action generation tasks. We show that naively coupling noise and samples fails in conditional tasks and propose incorporating condition variables into the coupling process to improve few-step performance. The proposed few-step policy achieves a 4% higher success rate with a 10x speed-up compared to Diffusion Policy on a diverse set of simulation tasks. Moreover, it produces high-quality and diverse action trajectories within 1-2 steps on a set of real-world robot tasks. Our method also retains the same training complexity as Diffusion Policy and vanilla Flow Matching, in contrast to distillation-based approaches.
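
The following is a minimal sketch of minibatch optimal transport (OT) coupling for flow matching, with a condition term added to the coupling cost. It assumes a generic scheme that may differ from the paper's formulation. Each noise sample carries a condition resampled from the batch, and the cost penalises pairing it with a target whose condition differs. A weight of 0 recovers the naive, condition-blind coupling. The names and the weight `cond_weight` are hypothetical. The brute-force assignment only suits tiny batches; practical code would use the Hungarian algorithm (e.g. `scipy.optimize.linear_sum_assignment`).

```python
import itertools

import numpy as np


def exact_assignment(cost):
    """Minimum-cost permutation by brute force (tiny batches only)."""
    n = cost.shape[0]
    best_perm, best_cost = None, np.inf
    for perm in itertools.permutations(range(n)):
        idx = np.array(perm)
        c = cost[np.arange(n), idx].sum()
        if c < best_cost:
            best_perm, best_cost = idx, c
    return best_perm


def conditional_ot_pairs(noise, actions, cond_noise, cond_actions, cond_weight=10.0):
    """Reorder targets so that row i of the outputs is paired with noise[i].

    noise, actions           : (B, A) source noise and target action samples
    cond_noise, cond_actions : (B, C) conditions attached to sources / targets
    cond_weight              : hypothetical weight on the condition mismatch;
                               0 gives naive, condition-blind OT coupling
    """
    cost = ((noise[:, None, :] - actions[None, :, :]) ** 2).sum(-1)
    cost = cost + cond_weight * ((cond_noise[:, None, :] - cond_actions[None, :, :]) ** 2).sum(-1)
    perm = exact_assignment(cost)
    return actions[perm], cond_actions[perm]


def flow_matching_targets(x0, x1, t):
    """Straight-line interpolant x_t and its constant velocity x1 - x0."""
    xt = (1.0 - t)[:, None] * x0 + t[:, None] * x1
    return xt, x1 - x0


rng = np.random.default_rng(0)
B, A, C = 6, 2, 3
actions = rng.normal(size=(B, A))
cond = rng.normal(size=(B, C))
noise = rng.normal(size=(B, A))
cond_src = cond[rng.permutation(B)]  # assumed: source conditions resampled from the batch
x1, c1 = conditional_ot_pairs(noise, actions, cond_src, cond)
xt, v_target = flow_matching_targets(noise, x1, rng.uniform(size=B))
# A velocity network v(xt, t, c1) would then be regressed onto v_target.
```

Straighter pairings make the learned velocity field closer to constant along each path. That property is what allows few-step integration at inference time.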

ContactFusion: Stochastic Poisson Surface Maps from Visual and Contact Sensing

Mar 20, 2025

Abstract: Robust and precise robotic assembly entails insertion of constituent components. Insertion success is hindered when noise in scene understanding exceeds tolerance limits, especially when components are fabricated with tight tolerances. In this work, we propose ContactFusion, which combines global mapping with local contact information by fusing point clouds with force sensing. Our method entails a Rejection Sampling-based contact occupancy sensing procedure which estimates contact locations on the end-effector from Force/Torque sensing at the wrist. We demonstrate how to fuse contact with visual information into a Stochastic Poisson Surface Map (SPSMap), a map representation that can be updated with the Stochastic Poisson Surface Reconstruction (SPSR) algorithm. We first validate the contact occupancy sensor in simulation and show its ability to detect the contact location on the robot from force sensing information. Then, we evaluate our method in a peg-in-hole task, demonstrating an improvement in the hole pose estimate with the fusion of the contact information with the SPSMap.
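
The contact occupancy procedure is only outlined in the abstract. Below is a minimal rejection-sampling sketch that rests on several assumptions:

- a single pushing point contact with no contact moment;
- a gravity-compensated wrench expressed at the F/T sensor origin;
- a spherical fingertip as a stand-in end-effector geometry.

Candidate surface points are accepted when the torque they would produce, p × f, matches the measured torque. The torque only constrains the force's line of action, so a second check keeps only points where the force pushes into the surface. All names are hypothetical, and the SPSMap fusion step is not shown.

```python
import numpy as np


def sample_sphere_surface(n, center, radius, rng):
    """Uniform samples on a sphere (hypothetical end-effector geometry)."""
    v = rng.normal(size=(n, 3))
    v /= np.linalg.norm(v, axis=1, keepdims=True)
    return center + radius * v


def contact_candidates(force, torque, surface_points, normals, tol):
    """Accept surface points consistent with the measured wrist wrench.

    Assumes one point contact with no contact moment, so tau = p x f.
    """
    predicted = np.cross(surface_points, force)               # (N, 3) torques
    torque_ok = np.linalg.norm(predicted - torque, axis=1) < tol
    # a pushing contact force points into the surface, against the outward normal
    pushing = normals @ force < 0.0
    return surface_points[torque_ok & pushing]


rng = np.random.default_rng(0)
center, radius = np.array([0.0, 0.0, 0.1]), 0.02
points = sample_sphere_surface(50000, center, radius, rng)
normals = (points - center) / radius
f = np.array([-1.0, 0.0, 0.0])                      # simulated measured force
tau = np.cross(center + [radius, 0.0, 0.0], f)      # torque from a contact at +x
accepted = contact_candidates(f, tau, points, normals, tol=0.002)
print(len(accepted), accepted.mean(axis=0))
```

The accepted samples form an empirical distribution over likely contact locations. A distribution of this kind is what could then be fused with visual information.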

Learning Visuotactile Estimation and Control for Non-prehensile Manipulation under Occlusions

Dec 17, 2024

Abstract: Manipulation without grasping, known as non-prehensile manipulation, is essential for dexterous robots in contact-rich environments, but presents many challenges relating to underactuation, hybrid dynamics, and frictional uncertainty. Additionally, object occlusion becomes a critical problem under contact uncertainty, when the motion of the object evolves independently of the robot, a setting that previous literature fails to address. We present a method for learning visuotactile state estimators and uncertainty-aware control policies for non-prehensile manipulation under occlusions, by leveraging diverse interaction data from privileged policies trained in simulation. We formulate the estimator within a Bayesian deep learning framework to model its uncertainty, and then train uncertainty-aware control policies by incorporating the pre-learned estimator into the reinforcement learning (RL) loop, both of which lead to significantly improved estimator and policy performance. Therefore, unlike prior non-prehensile research that relies on complex external perception set-ups, our method successfully handles occlusions after sim-to-real transfer to robotic hardware with a simple onboard camera. See our video: https://youtu.be/hW-C8i_HWgs.

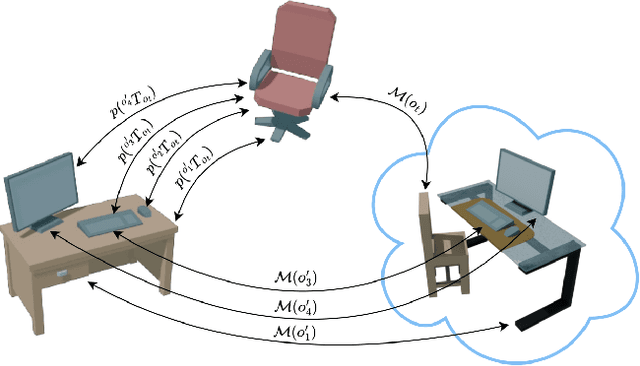

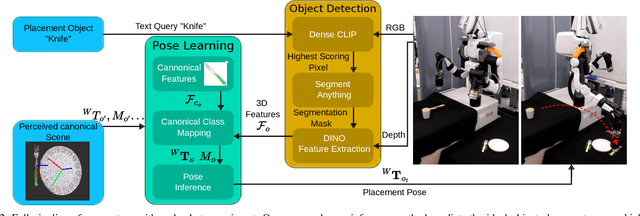

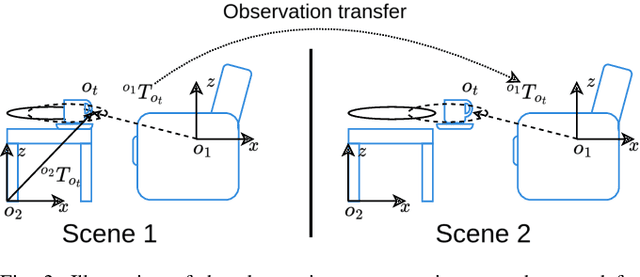

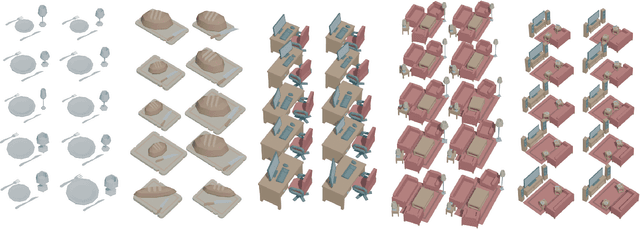

Learning Few-Shot Object Placement with Intra-Category Transfer

Nov 05, 2024

Abstract: Efficient learning from demonstration for long-horizon tasks remains an open challenge in robotics. While significant effort has been directed toward learning trajectories, a recent resurgence of object-centric approaches has demonstrated improved sample efficiency, enabling transferable robotic skills. Such approaches model tasks as a sequence of object poses over time. In this work, we propose a scheme for transferring observed object arrangements to novel object instances by learning these arrangements on canonical class frames. We then employ this scheme to enable a simple yet effective approach for training models from as few as five demonstrations to predict arrangements of a wide range of objects including tableware, cutlery, furniture, and desk spaces. We propose a method for optimizing the learned models to enable efficient learning of tasks such as setting a table or tidying up an office with intra-category transfer, even in the presence of distractors. We present extensive experimental results in simulation and on a real robotic system for table setting which, based on human evaluations, scored 73.3% compared to a human baseline. We make the code and trained models publicly available at http://oplict.cs.uni-freiburg.de.
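
Intra-category transfer works because an arrangement is stored relative to a class-level canonical frame rather than to one particular instance. The minimal planar (SE(2)) sketch below makes three assumptions:

- each object's canonical frame pose on the table has already been estimated;
- scale normalisation is ignored;
- neither the learned models nor the frame estimation is shown.

The function names and the plate/fork poses are hypothetical.

```python
import numpy as np


def se2(x, y, theta):
    """Homogeneous 3x3 matrix for a planar pose (x, y, theta)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, x], [s, c, y], [0.0, 0.0, 1.0]])


def relative_pose(T_world_ref, T_world_obj):
    """Pose of an object expressed in the reference object's canonical frame."""
    return np.linalg.inv(T_world_ref) @ T_world_obj


def transfer(T_world_ref_new, T_ref_obj):
    """Re-apply a stored relative placement to a new reference instance."""
    return T_world_ref_new @ T_ref_obj


# Demonstration: fork placed 0.1 m along the plate's -x canonical axis.
T_rel = relative_pose(se2(0.5, 0.2, 0.0), se2(0.4, 0.2, 0.0))
# New plate instance, elsewhere on the table and rotated by 90 degrees.
T_fork_new = transfer(se2(1.0, 1.0, np.pi / 2), T_rel)
print(T_fork_new[:2, 2])
```

Because the relative pose is tied to the class frame, the same stored placement applies to any plate whose canonical frame can be recovered.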

An Efficient Representation of Whole-body Model Predictive Control for Online Compliant Dual-arm Mobile Manipulation

Oct 30, 2024

Abstract: Dual-arm mobile manipulators can transport and manipulate large-size objects with simple end-effectors. To interact with dynamic environments with strict safety and compliance requirements, achieving whole-body motion planning online while meeting various hard constraints for such highly redundant mobile manipulators poses a significant challenge. We tackle this challenge by presenting an efficient representation of whole-body motion trajectories within our bilevel model-based predictive control (MPC) framework. We utilize Bézier-curve parameterization to represent the optimized collision-free trajectories of two collaborating end-effectors in the first MPC, facilitating fast long-horizon object-oriented motion planning in SE(3) while considering approximated feasibility constraints. This approach is further applied to parameterize whole-body trajectories in the second MPC for whole-body motion generation with predictive admittance control in a relatively short horizon while satisfying whole-body hard constraints. This representation enables two MPCs with continuous properties, thereby avoiding inaccurate model-state transition and dense decision-variable settings in existing MPCs using the discretization method. It strengthens the online execution of the bilevel MPC framework in high-dimensional space and facilitates the generation of consistent commands for our hybrid position/velocity-controlled robot. The simulation comparisons and real-world experiments demonstrate the efficiency and robustness of this approach in various scenarios for static and dynamic obstacle avoidance, and compliant interaction control with the manipulated object and external disturbances.
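
A Bézier parameterisation replaces a dense time grid with a few control points and gives a trajectory that can be evaluated continuously at any time. The generic sketch below is not the authors' MPC formulation. It evaluates a curve with de Casteljau's algorithm and computes the control points of its time derivative. It also checks the convex-hull property: if every control point satisfies a box limit, the whole continuous curve does. That property is one common reason such parameterisations help impose hard constraints on few decision variables. Names and the example control points are illustrative.

```python
import numpy as np


def de_casteljau(ctrl, s):
    """Evaluate a Bezier curve with control points ctrl (n+1, d) at s in [0, 1]."""
    pts = np.array(ctrl, dtype=float)
    while len(pts) > 1:
        # repeated linear interpolation between consecutive control points
        pts = (1.0 - s) * pts[:-1] + s * pts[1:]
    return pts[0]


def bezier_derivative_ctrl(ctrl, duration):
    """Control points of dB/dt when s = t / duration (degree drops by one)."""
    ctrl = np.asarray(ctrl, dtype=float)
    n = len(ctrl) - 1
    return n * (ctrl[1:] - ctrl[:-1]) / duration


ctrl = np.array([[0.0, 0.0], [1.0, 2.0], [3.0, 3.0], [4.0, 0.0]])  # cubic, 2-D
duration = 2.0                                                      # seconds
samples = np.array([de_casteljau(ctrl, s) for s in np.linspace(0.0, 1.0, 101)])
# Convex-hull property: the curve stays inside the control points' bounding box.
inside = np.all((samples >= ctrl.min(axis=0)) & (samples <= ctrl.max(axis=0)))
velocity_ctrl = bezier_derivative_ctrl(ctrl, duration)
print(inside, de_casteljau(velocity_ctrl, 0.0))
```

Velocity limits can be handled the same way, because the derivative is itself a Bézier curve whose control points are linear in the original ones.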

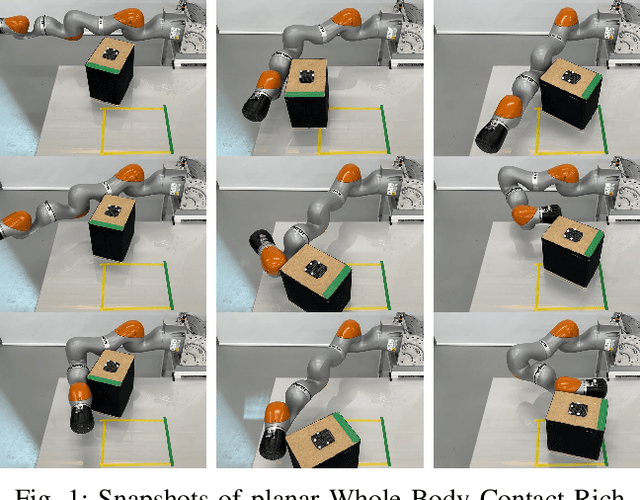

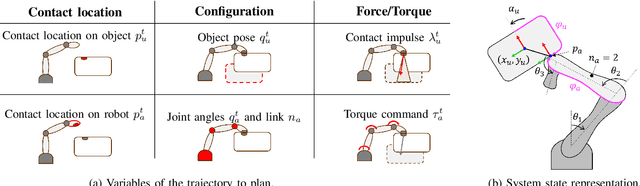

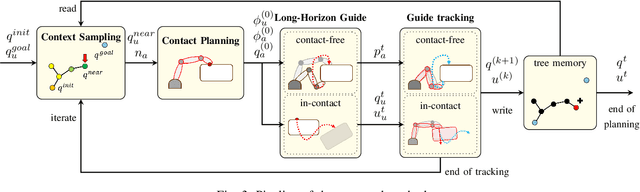

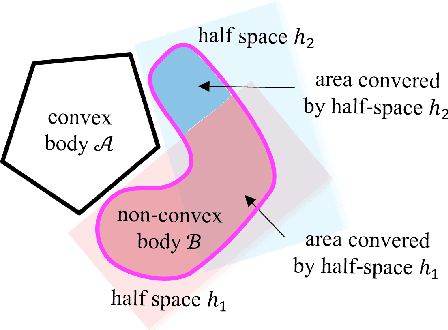

Explicit Contact Optimization in Whole-Body Contact-Rich Manipulation

Aug 28, 2024

Abstract: Humans can exploit contacts anywhere on their body surface to manipulate large and heavy items, objects normally out of reach, or multiple objects at once. However, such manipulation through contacts using the whole surface of the body remains extremely challenging to achieve on robots. We refer to this as the Whole-Body Contact-Rich Manipulation (WBCRM) problem. In addition to the high dimensionality of the Contact-Rich Manipulation problem due to the combinatorics of contact modes, admitting contact creation anywhere on the body surface adds complexity, which hinders planning of manipulation within a reasonable time. We address this computational problem by formulating the contact and motion planning of planar WBCRM as hierarchical continuous optimization problems. To enable this formulation, we propose a novel continuous explicit representation of the robot surface, which we believe to be foundational for future research using continuous optimization for WBCRM. Our results demonstrate a significant improvement in convergence, planning time and feasibility, with, on average, 99% fewer iterations and a 96% reduction in time to find a solution over the considered scenarios, without recourse to failure-prone trajectory refinement steps.
