Ryan Kastner

UC San Diego

Machine Learning on Heterogeneous, Edge, and Quantum Hardware for Particle Physics (ML-HEQUPP)

Feb 24, 2026Abstract:The next generation of particle physics experiments will face a new era of challenges in data acquisition, due to unprecedented data rates and volumes along with extreme environments and operational constraints. Harnessing this data for scientific discovery demands real-time inference and decision-making, intelligent data reduction, and efficient processing architectures beyond current capabilities. Crucial to the success of this experimental paradigm are several emerging technologies, such as artificial intelligence and machine learning (AI/ML) and silicon microelectronics, and the advent of quantum algorithms and processing. Their intersection includes areas of research such as low-power and low-latency devices for edge computing, heterogeneous accelerator systems, reconfigurable hardware, novel codesign and synthesis strategies, readout for cryogenic or high-radiation environments, and analog computing. This white paper presents a community-driven vision to identify and prioritize research and development opportunities in hardware-based ML systems and corresponding physics applications, contributing towards a successful transition to the new data frontier of fundamental science.

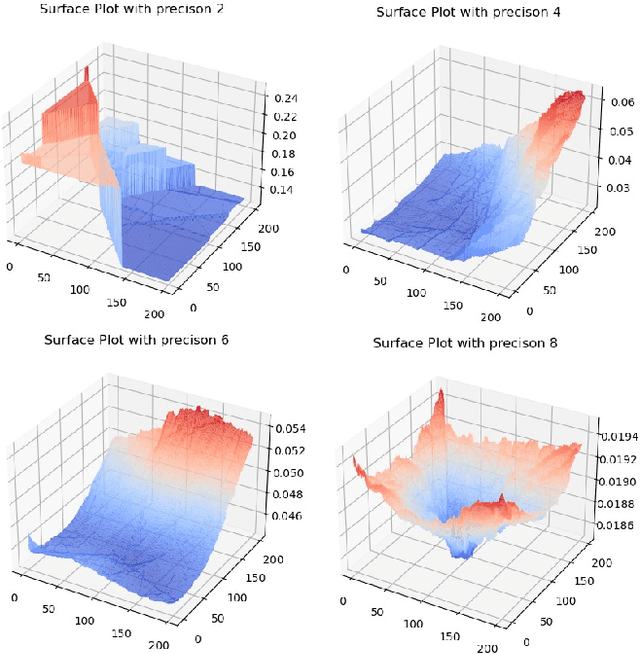

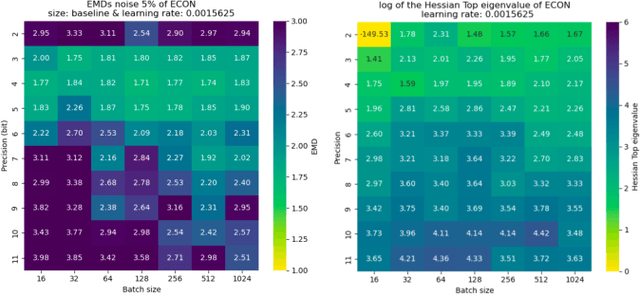

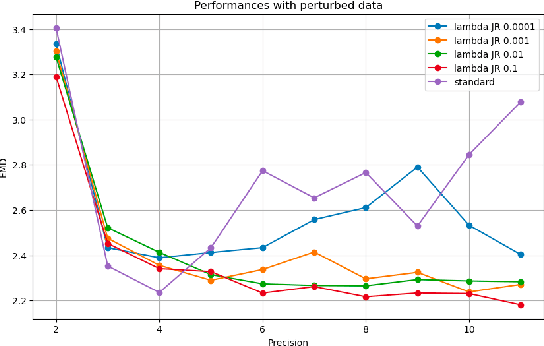

Loss Landscape Analysis for Reliable Quantized ML Models for Scientific Sensing

Feb 12, 2025Abstract:In this paper, we propose a method to perform empirical analysis of the loss landscape of machine learning (ML) models. The method is applied to two ML models for scientific sensing, which necessitates quantization to be deployed and are subject to noise and perturbations due to experimental conditions. Our method allows assessing the robustness of ML models to such effects as a function of quantization precision and under different regularization techniques -- two crucial concerns that remained underexplored so far. By investigating the interplay between performance, efficiency, and robustness by means of loss landscape analysis, we both established a strong correlation between gently-shaped landscapes and robustness to input and weight perturbations and observed other intriguing and non-obvious phenomena. Our method allows a systematic exploration of such trade-offs a priori, i.e., without training and testing multiple models, leading to more efficient development workflows. This work also highlights the importance of incorporating robustness into the Pareto optimization of ML models, enabling more reliable and adaptive scientific sensing systems.

CGRA4ML: A Framework to Implement Modern Neural Networks for Scientific Edge Computing

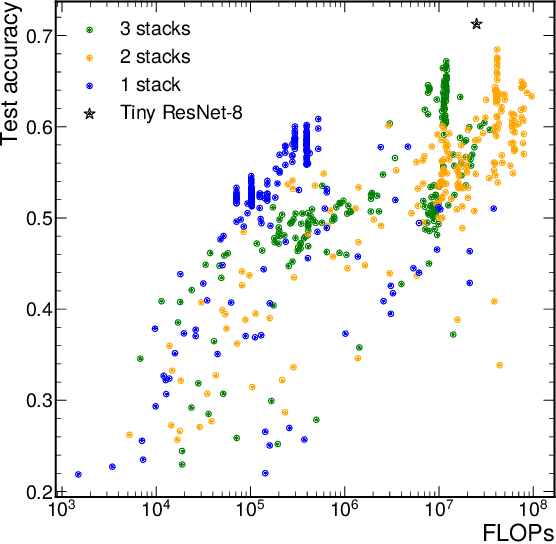

Aug 29, 2024Abstract:Scientific edge computing increasingly relies on hardware-accelerated neural networks to implement complex, near-sensor processing at extremely high throughputs and low latencies. Existing frameworks like HLS4ML are effective for smaller models, but struggle with larger, modern neural networks due to their requirement of spatially implementing the neural network layers and storing all weights in on-chip memory. CGRA4ML is an open-source, modular framework designed to bridge the gap between neural network model complexity and extreme performance requirements. CGRA4ML extends the capabilities of HLS4ML by allowing off-chip data storage and supporting a broader range of neural network architectures, including models like ResNet, PointNet, and transformers. Unlike HLS4ML, CGRA4ML generates SystemVerilog RTL, making it more suitable for targeting ASIC and FPGA design flows. We demonstrate the effectiveness of our framework by implementing and scaling larger models that were previously unattainable with HLS4ML, showcasing its adaptability and efficiency in handling complex computations. CGRA4ML also introduces an extensive verification framework, with a generated runtime firmware that enables its integration into different SoC platforms. CGRA4ML's minimal and modular infrastructure of Python API, SystemVerilog hardware, Tcl toolflows, and C runtime, facilitates easy integration and experimentation, allowing scientists to focus on innovation rather than the intricacies of hardware design and optimization.

Reliable edge machine learning hardware for scientific applications

Jun 27, 2024

Abstract:Extreme data rate scientific experiments create massive amounts of data that require efficient ML edge processing. This leads to unique validation challenges for VLSI implementations of ML algorithms: enabling bit-accurate functional simulations for performance validation in experimental software frameworks, verifying those ML models are robust under extreme quantization and pruning, and enabling ultra-fine-grained model inspection for efficient fault tolerance. We discuss approaches to developing and validating reliable algorithms at the scientific edge under such strict latency, resource, power, and area requirements in extreme experimental environments. We study metrics for developing robust algorithms, present preliminary results and mitigation strategies, and conclude with an outlook of these and future directions of research towards the longer-term goal of developing autonomous scientific experimentation methods for accelerated scientific discovery.

Architectural Implications of Neural Network Inference for High Data-Rate, Low-Latency Scientific Applications

Mar 13, 2024

Abstract:With more scientific fields relying on neural networks (NNs) to process data incoming at extreme throughputs and latencies, it is crucial to develop NNs with all their parameters stored on-chip. In many of these applications, there is not enough time to go off-chip and retrieve weights. Even more so, off-chip memory such as DRAM does not have the bandwidth required to process these NNs as fast as the data is being produced (e.g., every 25 ns). As such, these extreme latency and bandwidth requirements have architectural implications for the hardware intended to run these NNs: 1) all NN parameters must fit on-chip, and 2) codesigning custom/reconfigurable logic is often required to meet these latency and bandwidth constraints. In our work, we show that many scientific NN applications must run fully on chip, in the extreme case requiring a custom chip to meet such stringent constraints.

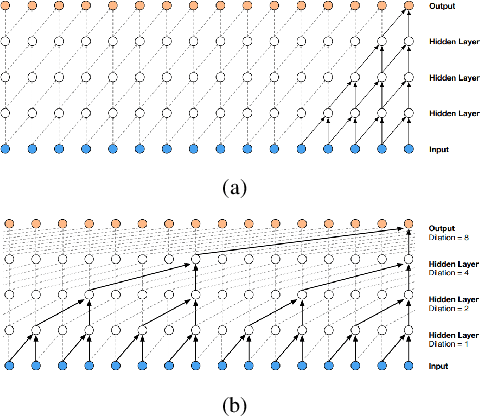

Tailor: Altering Skip Connections for Resource-Efficient Inference

Jan 18, 2023

Abstract:Deep neural networks use skip connections to improve training convergence. However, these skip connections are costly in hardware, requiring extra buffers and increasing on- and off-chip memory utilization and bandwidth requirements. In this paper, we show that skip connections can be optimized for hardware when tackled with a hardware-software codesign approach. We argue that while a network's skip connections are needed for the network to learn, they can later be removed or shortened to provide a more hardware efficient implementation with minimal to no accuracy loss. We introduce Tailor, a codesign tool whose hardware-aware training algorithm gradually removes or shortens a fully trained network's skip connections to lower their hardware cost. The optimized hardware designs improve resource utilization by up to 34% for BRAMs, 13% for FFs, and 16% for LUTs.

Open-source FPGA-ML codesign for the MLPerf Tiny Benchmark

Jun 23, 2022

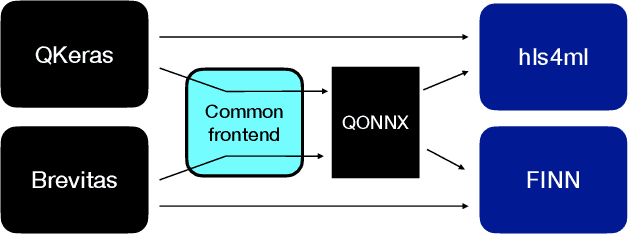

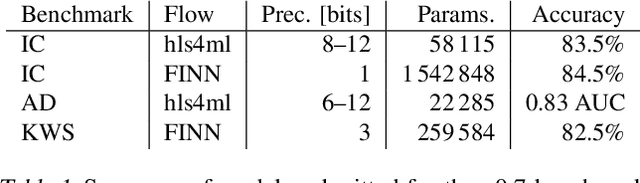

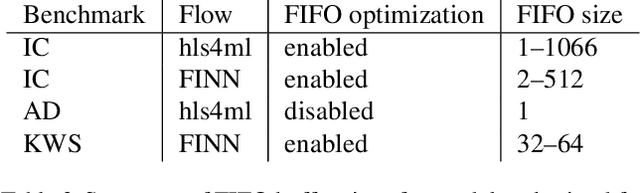

Abstract:We present our development experience and recent results for the MLPerf Tiny Inference Benchmark on field-programmable gate array (FPGA) platforms. We use the open-source hls4ml and FINN workflows, which aim to democratize AI-hardware codesign of optimized neural networks on FPGAs. We present the design and implementation process for the keyword spotting, anomaly detection, and image classification benchmark tasks. The resulting hardware implementations are quantized, configurable, spatial dataflow architectures tailored for speed and efficiency and introduce new generic optimizations and common workflows developed as a part of this work. The full workflow is presented from quantization-aware training to FPGA implementation. The solutions are deployed on system-on-chip (Pynq-Z2) and pure FPGA (Arty A7-100T) platforms. The resulting submissions achieve latencies as low as 20 $\mu$s and energy consumption as low as 30 $\mu$J per inference. We demonstrate how emerging ML benchmarks on heterogeneous hardware platforms can catalyze collaboration and the development of new techniques and more accessible tools.

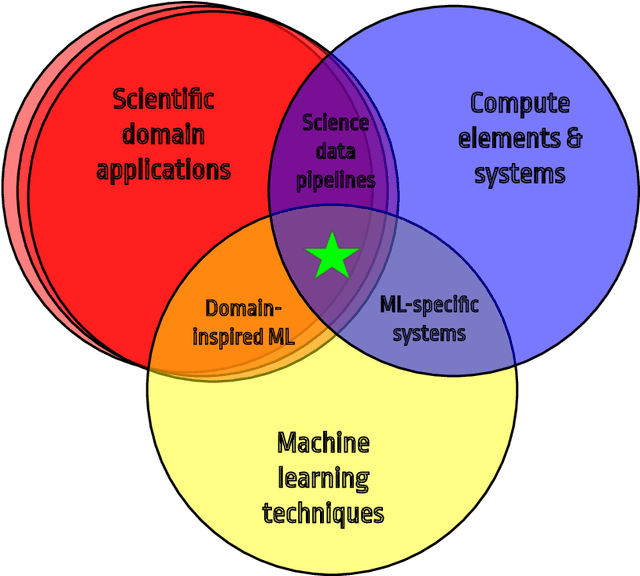

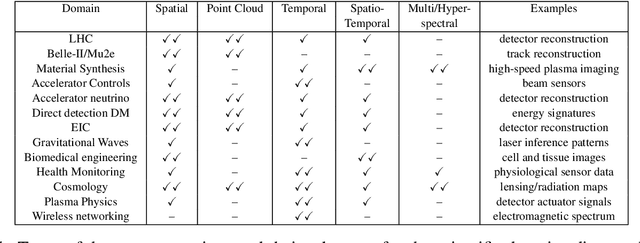

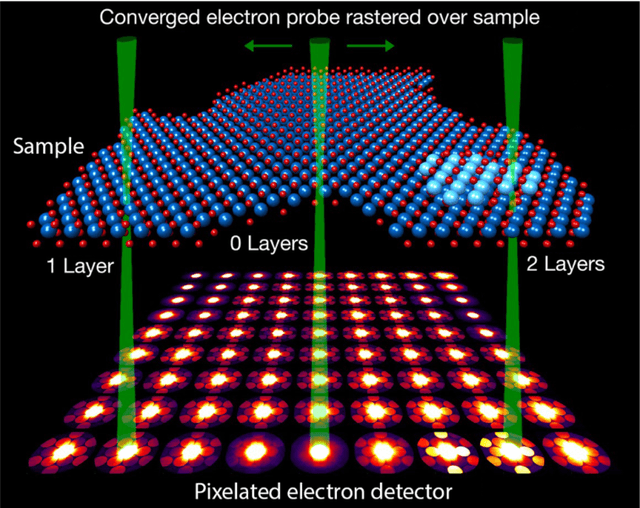

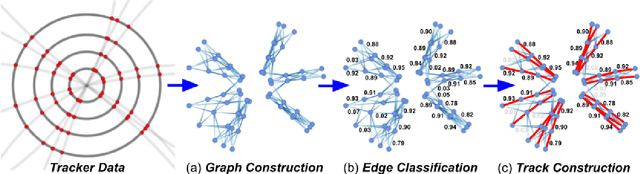

Applications and Techniques for Fast Machine Learning in Science

Oct 25, 2021

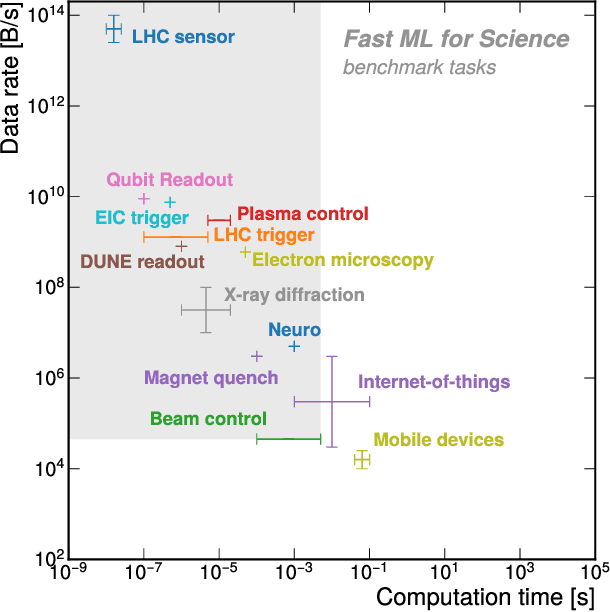

Abstract:In this community review report, we discuss applications and techniques for fast machine learning (ML) in science -- the concept of integrating power ML methods into the real-time experimental data processing loop to accelerate scientific discovery. The material for the report builds on two workshops held by the Fast ML for Science community and covers three main areas: applications for fast ML across a number of scientific domains; techniques for training and implementing performant and resource-efficient ML algorithms; and computing architectures, platforms, and technologies for deploying these algorithms. We also present overlapping challenges across the multiple scientific domains where common solutions can be found. This community report is intended to give plenty of examples and inspiration for scientific discovery through integrated and accelerated ML solutions. This is followed by a high-level overview and organization of technical advances, including an abundance of pointers to source material, which can enable these breakthroughs.

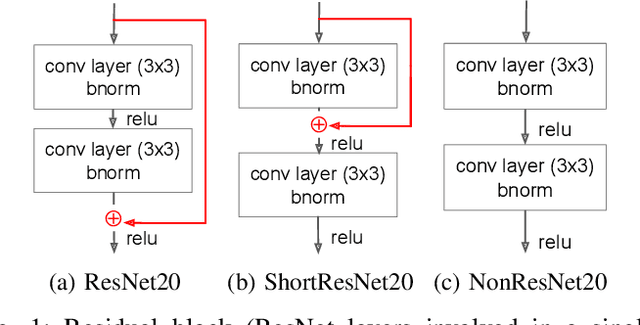

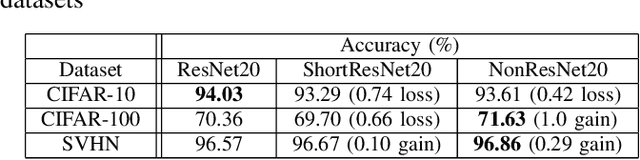

Hardware-efficient Residual Networks for FPGAs

Feb 02, 2021

Abstract:Residual networks (ResNets) employ skip connections in their networks -- reusing activations from previous layers -- to improve training convergence, but these skip connections create challenges for hardware implementations of ResNets. The hardware must either wait for skip connections to be processed before processing more incoming data or buffer them elsewhere. Without skip connections, ResNets would be more hardware-efficient. Thus, we present the teacher-student learning method to gradually prune away all of a ResNet's skip connections, constructing a network we call NonResNet. We show that when implemented for FPGAs, NonResNet decreases ResNet's BRAM utilization by 9% and LUT utilization by 3% and increases throughput by 5%.

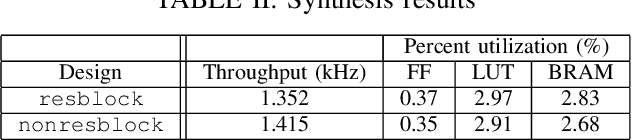

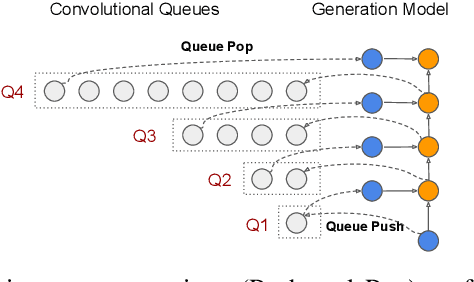

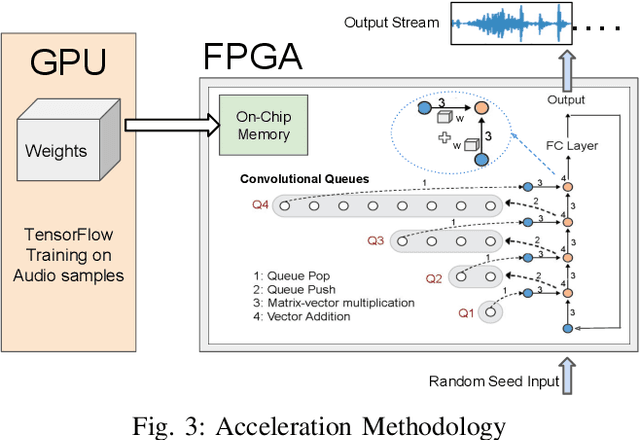

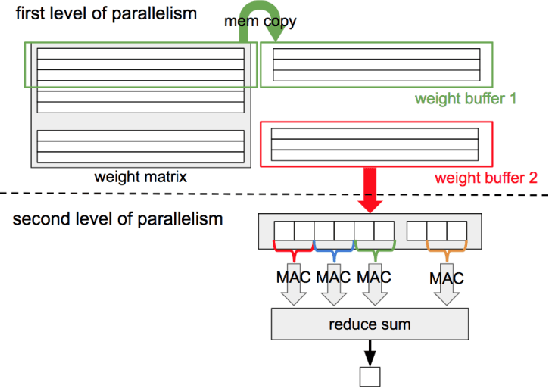

FastWave: Accelerating Autoregressive Convolutional Neural Networks on FPGA

Feb 09, 2020

Abstract:Autoregressive convolutional neural networks (CNNs) have been widely exploited for sequence generation tasks such as audio synthesis, language modeling and neural machine translation. WaveNet is a deep autoregressive CNN composed of several stacked layers of dilated convolution that is used for sequence generation. While WaveNet produces state-of-the art audio generation results, the naive inference implementation is quite slow; it takes a few minutes to generate just one second of audio on a high-end GPU. In this work, we develop the first accelerator platform~\textit{FastWave} for autoregressive convolutional neural networks, and address the associated design challenges. We design the Fast-Wavenet inference model in Vivado HLS and perform a wide range of optimizations including fixed-point implementation, array partitioning and pipelining. Our model uses a fully parameterized parallel architecture for fast matrix-vector multiplication that enables per-layer customized latency fine-tuning for further throughput improvement. Our experiments comparatively assess the trade-off between throughput and resource utilization for various optimizations. Our best WaveNet design on the Xilinx XCVU13P FPGA that uses only on-chip memory, achieves 66 faster generation speed compared to CPU implementation and 11 faster generation speed than GPU implementation.

* Published as a conference paper at ICCAD 2019

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge