Hardware-efficient Residual Networks for FPGAs

Paper and Code

Feb 02, 2021

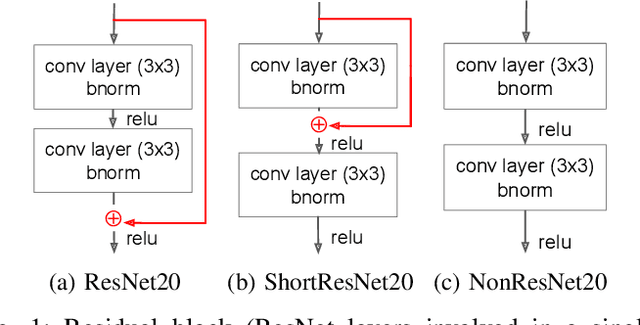

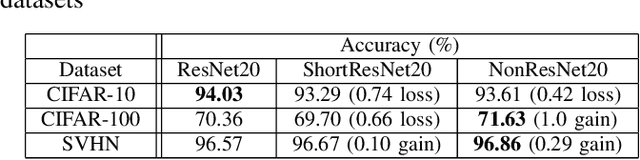

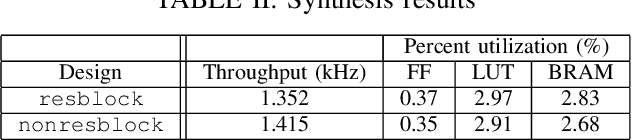

Residual networks (ResNets) employ skip connections in their networks -- reusing activations from previous layers -- to improve training convergence, but these skip connections create challenges for hardware implementations of ResNets. The hardware must either wait for skip connections to be processed before processing more incoming data or buffer them elsewhere. Without skip connections, ResNets would be more hardware-efficient. Thus, we present the teacher-student learning method to gradually prune away all of a ResNet's skip connections, constructing a network we call NonResNet. We show that when implemented for FPGAs, NonResNet decreases ResNet's BRAM utilization by 9% and LUT utilization by 3% and increases throughput by 5%.

* Presented at DATE Friday Workshop on System-level Design Methods for

Deep Learning on Heterogeneous Architectures (SLOHA 2021) (arXiv:2102.00818)

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge