Qingchao Chen

3D MRI Image Pretraining via Controllable 2D Slice Navigation Task

May 07, 2026

Abstract: Self-supervised pretraining has become the mainstream approach for learning MRI representations from unlabeled scans. However, most existing objectives still treat each scan primarily as a static aggregation of slices, patches, or volumes. We ask whether there is an intrinsic self-supervision signal, distinct from reconstructing masked patches, that arises from transforming 3D volumes into controllable 2D rendered sequences: by rendering slices at continuous positions, orientations, and scales, a 3D volume can be converted into dense video-action sequences whose controls are the action trajectories. We study this formulation with an action-conditioned pretraining objective, where a tokenizer encodes slice observations and a latent dynamics model predicts the evolution of latent features. Across representative anatomical and spatial downstream tasks, the proposed pretraining is evaluated against standard static-volume baselines, tokenizer-only pretraining, and dynamics variants without aligned actions. These results suggest that controllable MRI slice navigation provides a useful complementary pretraining interface for learning anatomical and spatial representations from large unlabeled MRI collections.

The Texture-Shape Dilemma: Boundary-Safe Synthetic Generation for 3D Medical Transformers

Mar 01, 2026

Abstract: Vision Transformers (ViTs) have revolutionized medical image analysis, yet their data-hungry nature clashes with the scarcity and privacy constraints of clinical archives. Formula-Driven Supervised Learning (FDSL) has emerged as a promising solution to this bottleneck, synthesizing infinite annotated samples from mathematical formulas without utilizing real patient data. However, existing FDSL paradigms rely on simple geometric shapes with homogeneous intensities, creating a substantial gap by neglecting the tissue textures and noise patterns inherent in modalities like CT and MRI. In this paper, we identify a critical optimization conflict termed boundary aliasing: when high-frequency synthetic textures are naively added, they corrupt the image gradient signals necessary for learning structural boundaries, causing the model to fail in delineating real anatomical margins. To bridge this gap, we propose a novel Physics-inspired Spatially-Decoupled Synthesis framework. Our approach orthogonalizes the synthesis process: it first constructs a gradient-shielded buffer zone based on boundary distance to ensure stable shape learning, and subsequently injects physics-driven spectral textures into the object core. This design effectively reconciles robust shape representation learning with invariance to acquisition noise. Extensive experiments on the BTCV and MSD datasets demonstrate that our method significantly outperforms previous FDSL methods, as well as SSL methods trained on real-world medical datasets, by 1.43% on BTCV and up to 1.51% on MSD tasks, offering a scalable, annotation-free foundation for medical ViTs. The code will be made publicly available upon acceptance.

Fake It Right: Injecting Anatomical Logic into Synthetic Supervised Pre-training for Medical Segmentation

Mar 01, 2026

Abstract: Vision Transformers (ViTs) excel in 3D medical segmentation but require massive annotated datasets. While Self-Supervised Learning (SSL) mitigates this using unlabeled data, it still faces strict privacy and logistical barriers. Formula-Driven Supervised Learning (FDSL) offers a privacy-preserving alternative by pre-training on synthetic mathematical primitives. However, a critical semantic gap limits its efficacy: generic shapes lack the morphological fidelity, fixed spatial layouts, and inter-organ relationships of real anatomy, preventing models from learning essential global structural priors. To bridge this gap, we propose an Anatomy-Informed Synthetic Supervised Pre-training framework unifying FDSL's infinite scalability with anatomical realism. We replace basic primitives with a lightweight shape bank of de-identified, label-only segmentation masks from 5 subjects. Furthermore, we introduce a structure-aware sequential placement strategy to govern the patch synthesis process. Instead of random placement, we enforce physiological plausibility using spatial anchors for correct localization and a topological graph to manage inter-organ interactions (e.g., preventing impossible overlaps). Extensive experiments on the BTCV and MSD datasets demonstrate that our method significantly outperforms state-of-the-art FDSL baselines and SSL methods by 1.74% and up to 1.66%, while exhibiting a robust scaling effect where performance improves with increased synthetic data volume. This provides a data-efficient, privacy-compliant solution for medical segmentation. The code will be made publicly available upon acceptance.

The Geometry of Transfer: Unlocking Medical Vision Manifolds for Training-Free Model Ranking

Feb 27, 2026

Abstract: The advent of large-scale self-supervised learning (SSL) has produced a vast zoo of medical foundation models. However, selecting the optimal medical foundation model for a specific segmentation task remains a computational bottleneck. Existing Transferability Estimation (TE) metrics, primarily designed for classification, rely on global statistical assumptions and fail to capture the topological complexity essential for dense prediction. We propose a novel Topology-Driven Transferability Estimation framework that evaluates manifold tractability rather than statistical overlap. Our approach introduces three components: (1) Global Representation Topology Divergence (GRTD), utilizing Minimum Spanning Trees to quantify feature-label structural isomorphism; (2) Local Boundary-Aware Topological Consistency (LBTC), which assesses manifold separability specifically at critical anatomical boundaries; and (3) Task-Adaptive Fusion, which dynamically integrates global and local metrics based on the semantic cardinality of the target task. Validated on the large-scale OpenMind benchmark across diverse anatomical targets and SSL foundation models, our approach significantly outperforms state-of-the-art baselines, with a relative improvement of around 31% in weighted Kendall's tau, providing a robust, training-free proxy for efficient model selection without the cost of fine-tuning. The code will be made publicly available upon acceptance.
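
As a reading aid for the evaluation metric mentioned in this abstract, the following is a minimal sketch of how a transferability score can be checked against fine-tuning results with a rank correlation. It implements the plain (unweighted) Kendall tau-a, not the weighted variant the abstract reports; a weighted form, which emphasizes agreement among top-ranked models, is available as `scipy.stats.weightedtau`. The function name and the example numbers are hypothetical and do not come from the paper.

```python
from itertools import combinations


def kendall_tau(estimated, actual):
    """Unweighted Kendall tau-a between two equal-length score lists.

    estimated: transferability scores, one per candidate model (hypothetical).
    actual:    fine-tuned performance of the same models (hypothetical).
    """
    if len(estimated) != len(actual) or len(estimated) < 2:
        raise ValueError("need two equal-length lists with at least 2 items")
    concordant = discordant = 0
    for i, j in combinations(range(len(estimated)), 2):
        sign = (estimated[i] - estimated[j]) * (actual[i] - actual[j])
        if sign > 0:
            concordant += 1   # both lists order this pair the same way
        elif sign < 0:
            discordant += 1   # the lists disagree on this pair
    n_pairs = len(estimated) * (len(estimated) - 1) // 2
    return (concordant - discordant) / n_pairs


# Made-up scores for four candidate models.
scores = [0.9, 0.5, 0.7, 0.1]
dice = [0.80, 0.62, 0.60, 0.40]
tau = kendall_tau(scores, dice)
```

A value near 1 means the score ranks models in nearly the same order as fine-tuning would, which is the property a training-free proxy needs.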

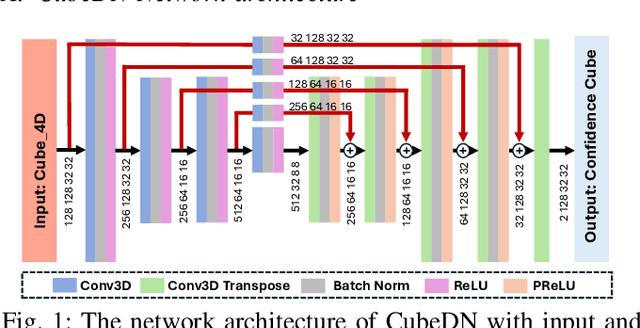

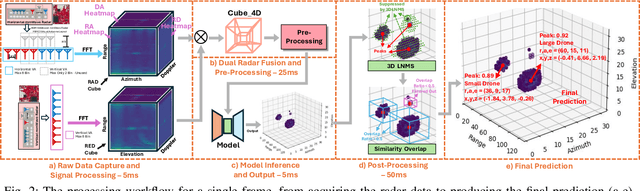

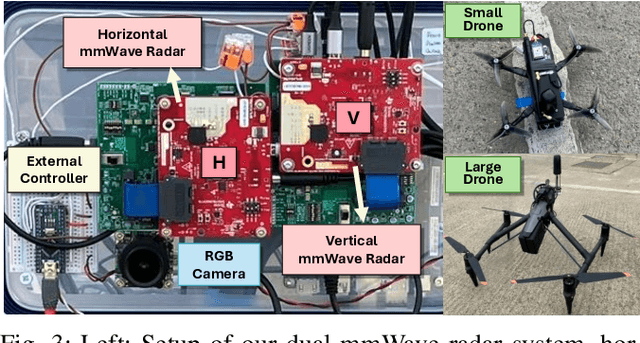

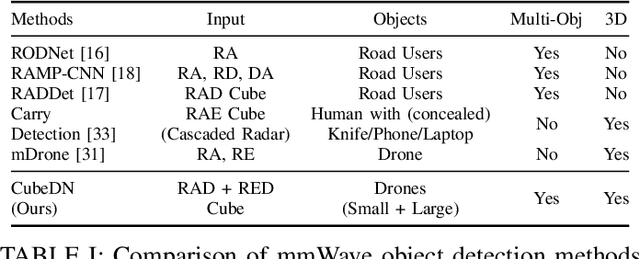

CubeDN: Real-time Drone Detection in 3D Space from Dual mmWave Radar Cubes

Aug 25, 2025

Abstract: As drone use has become more widespread, there is a critical need to ensure safety and security. A key element of this is robust and accurate drone detection and localization. While cameras and other optical sensors like LiDAR are commonly used for object detection, their performance degrades under adverse lighting and environmental conditions. This has generated interest in finding more reliable alternatives, such as millimeter-wave (mmWave) radar. Recent research on mmWave radar object detection has predominantly focused on 2D detection of road users. Although these systems demonstrate excellent performance for 2D problems, they lack the sensing capability to measure elevation, which is essential for 3D drone detection. To address this gap, we propose CubeDN, a single-stage end-to-end radar object detection network specifically designed for flying drones. CubeDN overcomes challenges such as poor elevation resolution by utilizing a dual radar configuration and a novel deep learning pipeline. It simultaneously detects, localizes, and classifies drones of two sizes, achieving decimeter-level tracking accuracy at closer ranges with overall 95% average precision (AP) and 85% average recall (AR). Furthermore, CubeDN completes data processing and inference at 10 Hz, making it highly suitable for practical applications.

Investigating Domain Gaps for Indoor 3D Object Detection

Aug 24, 2025

Abstract: As a fundamental task for indoor scene understanding, 3D object detection has been extensively studied, and the accuracy on indoor point cloud data has been substantially improved. However, existing research has been conducted on limited datasets, where the training and testing sets share the same distribution. In this paper, we consider the task of adapting indoor 3D object detectors from one dataset to another, presenting a comprehensive benchmark with the ScanNet, SUN RGB-D and 3D Front datasets, as well as our newly proposed large-scale datasets ProcTHOR-OD and ProcFront generated by a 3D simulator. Since indoor point cloud datasets are collected and constructed in different ways, object detectors are likely to overfit to specific factors within each dataset, such as point cloud quality, bounding box layout and instance features. We conduct experiments across datasets on different adaptation scenarios, including synthetic-to-real adaptation, point cloud quality adaptation, layout adaptation and instance feature adaptation, analyzing the impact of different domain gaps on 3D object detectors. We also introduce several approaches to improve adaptation performance, providing baselines for domain adaptive indoor 3D object detection, in the hope that future work will propose detectors with stronger generalization ability across domains. Our project homepage can be found at https://jeremyzhao1998.github.io/DAVoteNet-release/.

TRKT: Weakly Supervised Dynamic Scene Graph Generation with Temporal-enhanced Relation-aware Knowledge Transferring

Aug 07, 2025

Abstract: Dynamic Scene Graph Generation (DSGG) aims to create a scene graph for each video frame by detecting objects and predicting their relationships. Weakly Supervised DSGG (WS-DSGG) reduces annotation workload by using an unlocalized scene graph from a single frame per video for training. Existing WS-DSGG methods depend on an off-the-shelf external object detector to generate pseudo labels for subsequent DSGG training. However, detectors trained on static, object-centric images struggle in the dynamic, relation-aware scenarios required for DSGG, leading to inaccurate localization and low-confidence proposals. To address the challenges posed by external object detectors in WS-DSGG, we propose a Temporal-enhanced Relation-aware Knowledge Transferring (TRKT) method, which leverages knowledge to enhance detection in relation-aware dynamic scenarios. TRKT is built on two key components: (1) Relation-aware knowledge mining: we first employ object and relation class decoders that generate category-specific attention maps to highlight both object regions and interactive areas. Then we propose an Inter-frame Attention Augmentation strategy that exploits optical flow for neighboring frames to enhance the attention maps, making them motion-aware and robust to motion blur. This step yields relation- and motion-aware knowledge mining for WS-DSGG. (2) We introduce a Dual-stream Fusion Module that integrates category-specific attention maps into external detections to refine object localization and boost confidence scores for object proposals. Extensive experiments demonstrate that TRKT achieves state-of-the-art performance on the Action Genome dataset. Our code is available at https://github.com/XZPKU/TRKT.git.

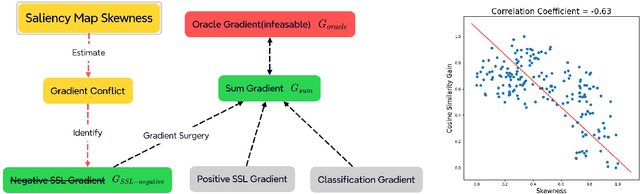

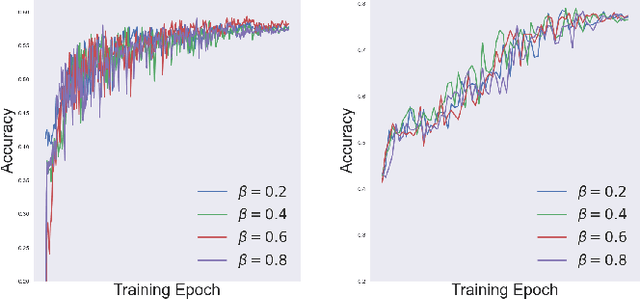

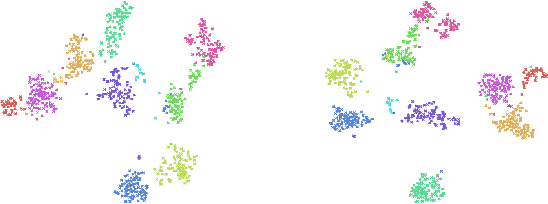

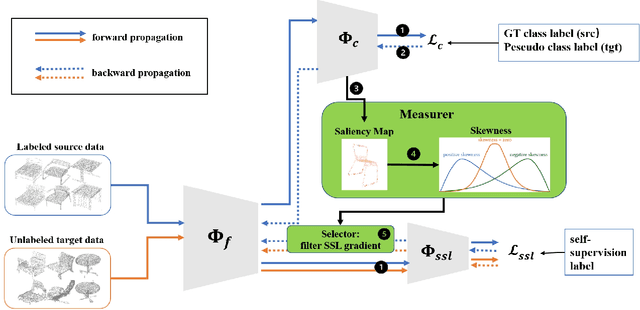

Locating and Mitigating Gradient Conflicts in Point Cloud Domain Adaptation via Saliency Map Skewness

Apr 22, 2025

Abstract: Object classification models utilizing point cloud data are fundamental for 3D media understanding, yet they often struggle with unseen or out-of-distribution (OOD) scenarios. Existing point cloud unsupervised domain adaptation (UDA) methods typically employ a multi-task learning (MTL) framework that combines primary classification tasks with auxiliary self-supervision tasks to bridge the gap between cross-domain feature distributions. However, our experiments demonstrate that not all gradients from self-supervision tasks are beneficial, and some may negatively impact classification performance. In this paper, we propose a novel solution, termed Saliency Map-based Data Sampling Block (SM-DSB), to mitigate these gradient conflicts. Specifically, our method designs a new scoring mechanism based on the skewness of 3D saliency maps to estimate gradient conflicts without requiring target labels. Leveraging this, we develop a sample selection strategy that dynamically filters out samples whose self-supervision gradients are not beneficial for classification. Our approach is scalable, introduces modest computational overhead, and can be integrated into all point cloud UDA MTL frameworks. Extensive evaluations demonstrate that our method outperforms state-of-the-art approaches. In addition, we provide a new perspective on understanding the UDA problem through back-propagation analysis.
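
To make the idea of a skewness-based score concrete, here is a minimal sketch that computes the standard (biased) sample skewness of per-point saliency values and uses it to filter samples. The abstract does not specify how SM-DSB maps skewness to a keep-or-drop decision, so the threshold rule, its direction, and the function names below are assumptions for illustration only.

```python
def skewness(values):
    """Biased sample skewness: third central moment over variance ** 1.5."""
    n = len(values)
    if n == 0:
        raise ValueError("values must be non-empty")
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n  # variance
    m3 = sum((v - mean) ** 3 for v in values) / n  # third central moment
    if m2 == 0:
        return 0.0  # constant input: treat as symmetric
    return m3 / m2 ** 1.5


def select_samples(saliency_maps, threshold):
    """Return indices of samples whose saliency skewness is <= threshold.

    Hypothetical rule: each entry of saliency_maps is a flat list of
    per-point saliency values for one point cloud. The actual scoring
    and filtering rule used by SM-DSB is not given in the abstract.
    """
    return [i for i, s in enumerate(saliency_maps) if skewness(s) <= threshold]


# Made-up saliency values: one symmetric map, one dominated by a single point.
maps = [[1.0, 2.0, 3.0], [0.0, 0.0, 0.0, 10.0]]
kept = select_samples(maps, threshold=0.5)
```

Because the score depends only on the saliency values themselves, it can be computed on unlabeled target-domain samples, which matches the abstract's claim that no target labels are required.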

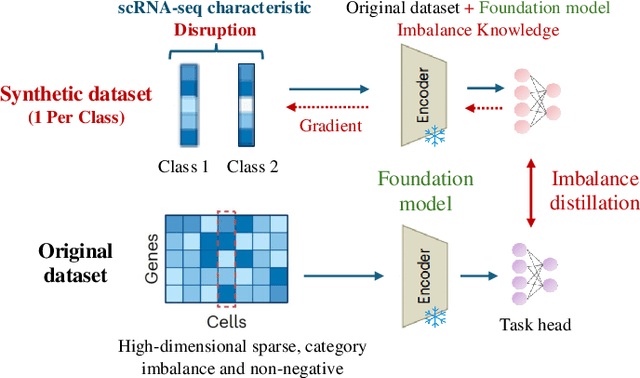

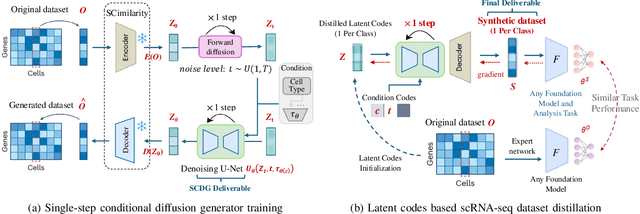

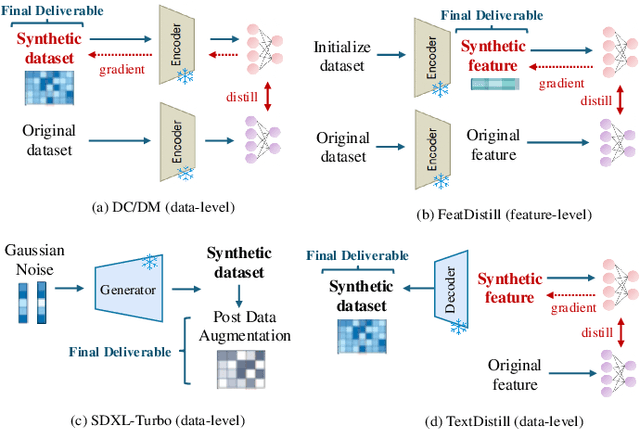

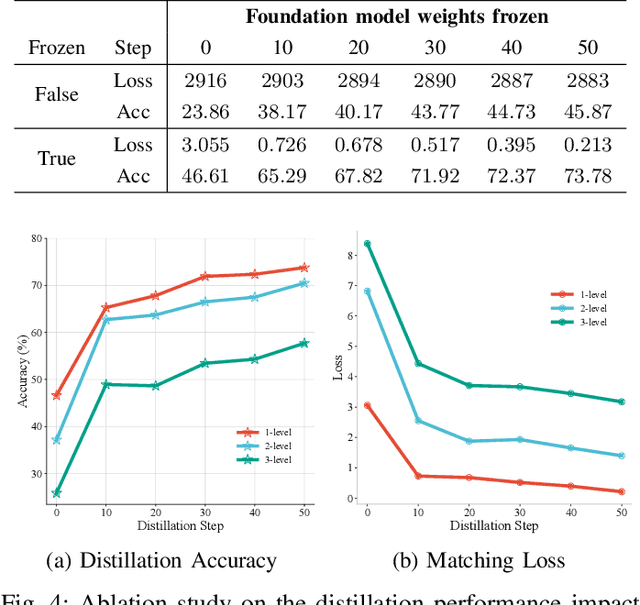

scDD: Latent Codes Based scRNA-seq Dataset Distillation with Foundation Model Knowledge

Mar 06, 2025

Abstract: Single-cell RNA sequencing (scRNA-seq) technology has profiled hundreds of millions of human cells across organs, diseases, development and perturbations to date. However, the high-dimensional sparsity, batch effect noise, category imbalance, and ever-increasing data scale of the original sequencing data pose significant challenges for multi-center knowledge transfer, data fusion, and cross-validation between scRNA-seq datasets. To address these barriers, (1) we first propose a latent codes-based scRNA-seq dataset distillation framework named scDD, which transfers and distills foundation model knowledge and original dataset information into a compact latent space and uses a generator to produce a synthetic scRNA-seq dataset that replaces the original dataset. Then, (2) we propose a single-step conditional diffusion generator named SCDG, which performs single-step gradient back-propagation to help scDD optimize distillation quality and avoid the gradient decay caused by multi-step back-propagation. Meanwhile, SCDG ensures the scRNA-seq data characteristics and inter-class discriminability of the synthetic dataset through flexible conditional control and generation quality assurance. Finally, we propose a comprehensive benchmark to evaluate the performance of scRNA-seq dataset distillation in different data analysis tasks. Our proposed method achieves 7.61% absolute and 15.70% relative improvement over previous state-of-the-art methods, averaged across tasks.

Training-free Video Temporal Grounding using Large-scale Pre-trained Models

Aug 29, 2024

Abstract: Video temporal grounding aims to identify video segments within untrimmed videos that are most relevant to a given natural language query. Existing video temporal localization models rely on specific datasets for training and incur high data collection costs, yet they generalize poorly in cross-dataset and out-of-distribution (OOD) settings. In this paper, we propose a Training-Free Video Temporal Grounding (TFVTG) approach that leverages the abilities of pre-trained large models. A naive baseline is to enumerate proposals in the video and use pre-trained visual language models (VLMs) to select the best proposal according to the vision-language alignment. However, most existing VLMs are trained on image-text pairs or trimmed video clip-text pairs, so they struggle to (1) grasp the relationship and distinguish the temporal boundaries of multiple events within the same video; and (2) comprehend and be sensitive to the dynamic transition of events (the transition from one event to another) in the video. To address these issues, we first propose leveraging large language models (LLMs) to analyze the multiple sub-events contained in the query text, along with the temporal order and relationships between these events. Second, we split a sub-event into dynamic transition and static status parts and propose dynamic and static scoring functions using VLMs to better evaluate the relevance between the event and the description. Finally, for each sub-event description, we use VLMs to locate the top-k proposals and leverage the order and relationships between sub-events provided by LLMs to filter and integrate these proposals. Our method achieves the best performance on zero-shot video temporal grounding on the Charades-STA and ActivityNet Captions datasets without any training and demonstrates better generalization capabilities in cross-dataset and OOD settings.
