Mingxi Li

The Tenth NTIRE 2025 Efficient Super-Resolution Challenge Report

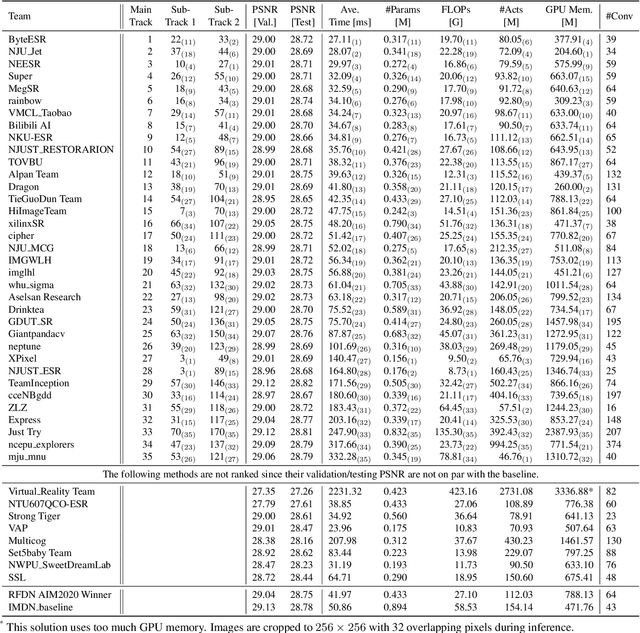

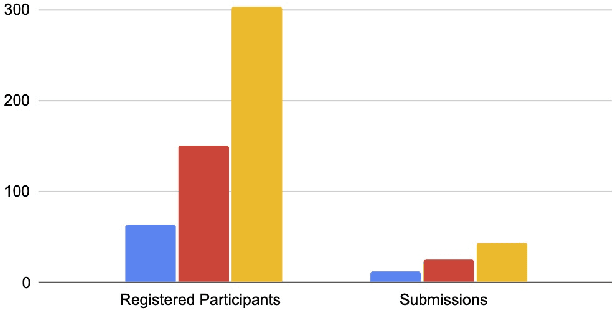

Apr 14, 2025Abstract:This paper presents a comprehensive review of the NTIRE 2025 Challenge on Single-Image Efficient Super-Resolution (ESR). The challenge aimed to advance the development of deep models that optimize key computational metrics, i.e., runtime, parameters, and FLOPs, while achieving a PSNR of at least 26.90 dB on the $\operatorname{DIV2K\_LSDIR\_valid}$ dataset and 26.99 dB on the $\operatorname{DIV2K\_LSDIR\_test}$ dataset. A robust participation saw \textbf{244} registered entrants, with \textbf{43} teams submitting valid entries. This report meticulously analyzes these methods and results, emphasizing groundbreaking advancements in state-of-the-art single-image ESR techniques. The analysis highlights innovative approaches and establishes benchmarks for future research in the field.

Self-Deployable, Adaptive Soft Robots Based on Contracting-Cord Particle Jamming

Oct 03, 2024Abstract:We developed a new class of soft locomotive robots that can self-assemble into a preprogrammed configuration and vary their stiffness afterward in a highly integrated, compact body using contracting-cord particle jamming (CCPJ). We demonstrate this with a tripod-shaped robot, TripodBot, consisting of three CCPJ-based legs attached to a central body. TripodBot is intrinsically soft and can be stored and transported in a compact configuration. On site, it can self-deploy and crawl in a slip-stick manner through the shape morphing of its legs; a simplified analytical model accurately captures the speed. The robot's adaptability is demonstrated by its ability to navigate tunnels as narrow as 61 percent of its deployed body width and ceilings as low as 31 percent of its freestanding height. Additionally, it can climb slopes up to 15 degrees, carry a load of 5 grams (2.4 times its weight), and bear a load 9429 times its weight.

V2X-Real: a Largs-Scale Dataset for Vehicle-to-Everything Cooperative Perception

Mar 24, 2024Abstract:Recent advancements in Vehicle-to-Everything (V2X) technologies have enabled autonomous vehicles to share sensing information to see through occlusions, greatly boosting the perception capability. However, there are no real-world datasets to facilitate the real V2X cooperative perception research -- existing datasets either only support Vehicle-to-Infrastructure cooperation or Vehicle-to-Vehicle cooperation. In this paper, we propose a dataset that has a mixture of multiple vehicles and smart infrastructure simultaneously to facilitate the V2X cooperative perception development with multi-modality sensing data. Our V2X-Real is collected using two connected automated vehicles and two smart infrastructures, which are all equipped with multi-modal sensors including LiDAR sensors and multi-view cameras. The whole dataset contains 33K LiDAR frames and 171K camera data with over 1.2M annotated bounding boxes of 10 categories in very challenging urban scenarios. According to the collaboration mode and ego perspective, we derive four types of datasets for Vehicle-Centric, Infrastructure-Centric, Vehicle-to-Vehicle, and Infrastructure-to-Infrastructure cooperative perception. Comprehensive multi-class multi-agent benchmarks of SOTA cooperative perception methods are provided. The V2X-Real dataset and benchmark codes will be released.

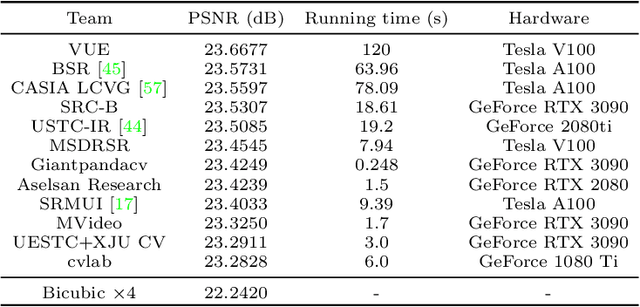

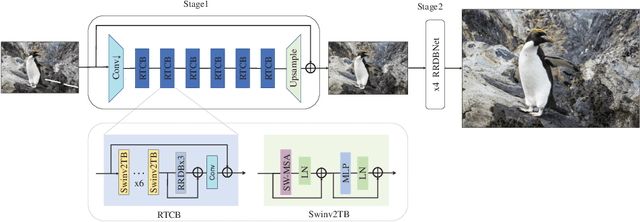

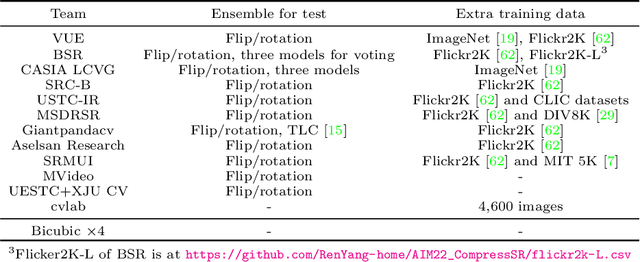

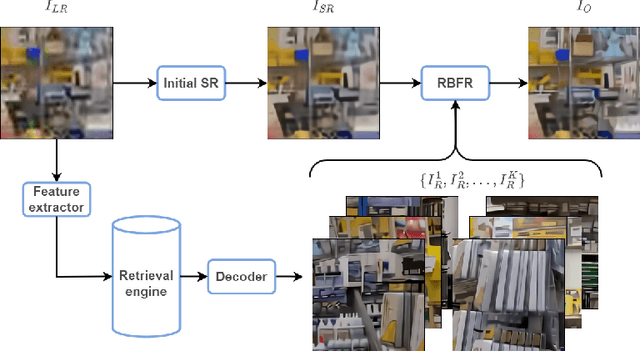

AIM 2022 Challenge on Super-Resolution of Compressed Image and Video: Dataset, Methods and Results

Aug 25, 2022

Abstract:This paper reviews the Challenge on Super-Resolution of Compressed Image and Video at AIM 2022. This challenge includes two tracks. Track 1 aims at the super-resolution of compressed image, and Track~2 targets the super-resolution of compressed video. In Track 1, we use the popular dataset DIV2K as the training, validation and test sets. In Track 2, we propose the LDV 3.0 dataset, which contains 365 videos, including the LDV 2.0 dataset (335 videos) and 30 additional videos. In this challenge, there are 12 teams and 2 teams that submitted the final results to Track 1 and Track 2, respectively. The proposed methods and solutions gauge the state-of-the-art of super-resolution on compressed image and video. The proposed LDV 3.0 dataset is available at https://github.com/RenYang-home/LDV_dataset. The homepage of this challenge is at https://github.com/RenYang-home/AIM22_CompressSR.

Residual Local Feature Network for Efficient Super-Resolution

May 16, 2022

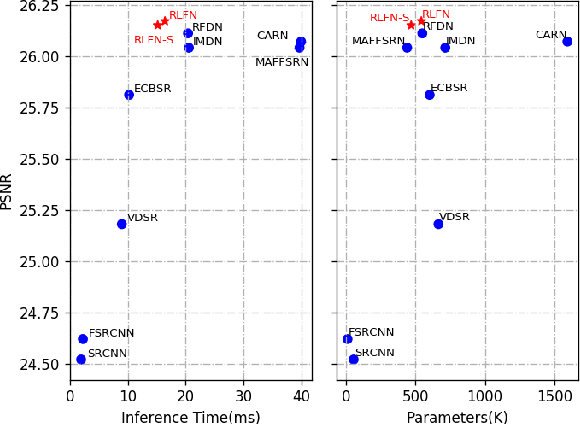

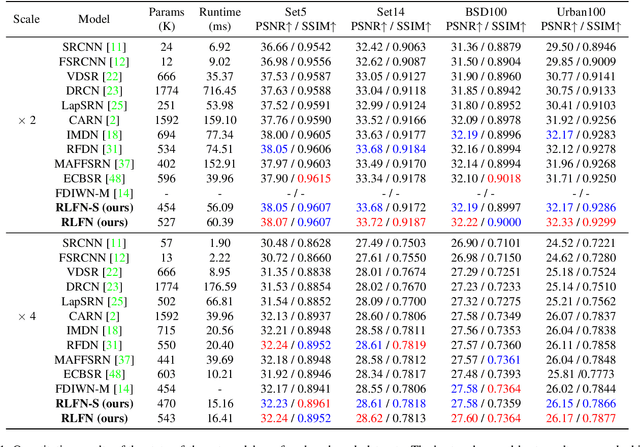

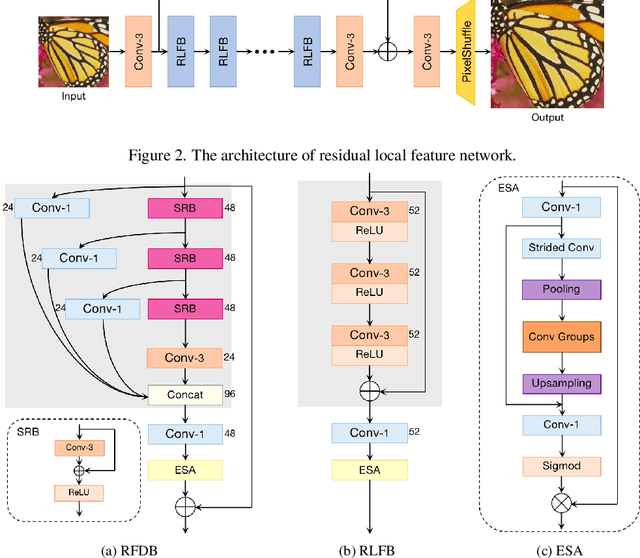

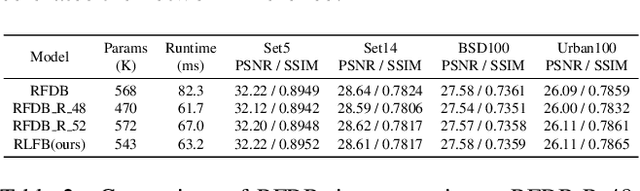

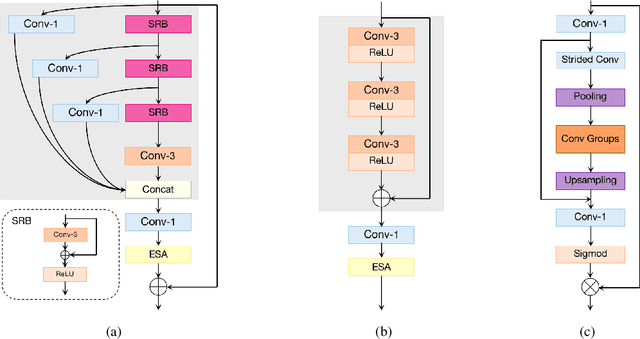

Abstract:Deep learning based approaches has achieved great performance in single image super-resolution (SISR). However, recent advances in efficient super-resolution focus on reducing the number of parameters and FLOPs, and they aggregate more powerful features by improving feature utilization through complex layer connection strategies. These structures may not be necessary to achieve higher running speed, which makes them difficult to be deployed to resource-constrained devices. In this work, we propose a novel Residual Local Feature Network (RLFN). The main idea is using three convolutional layers for residual local feature learning to simplify feature aggregation, which achieves a good trade-off between model performance and inference time. Moreover, we revisit the popular contrastive loss and observe that the selection of intermediate features of its feature extractor has great influence on the performance. Besides, we propose a novel multi-stage warm-start training strategy. In each stage, the pre-trained weights from previous stages are utilized to improve the model performance. Combined with the improved contrastive loss and training strategy, the proposed RLFN outperforms all the state-of-the-art efficient image SR models in terms of runtime while maintaining both PSNR and SSIM for SR. In addition, we won the first place in the runtime track of the NTIRE 2022 efficient super-resolution challenge. Code will be available at https://github.com/fyan111/RLFN.

NTIRE 2022 Challenge on Efficient Super-Resolution: Methods and Results

May 11, 2022

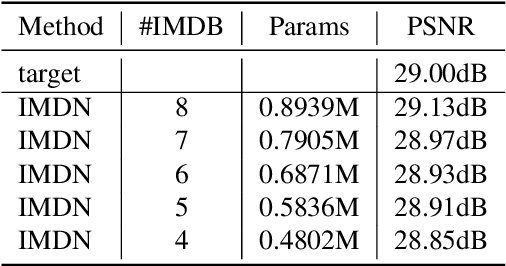

Abstract:This paper reviews the NTIRE 2022 challenge on efficient single image super-resolution with focus on the proposed solutions and results. The task of the challenge was to super-resolve an input image with a magnification factor of $\times$4 based on pairs of low and corresponding high resolution images. The aim was to design a network for single image super-resolution that achieved improvement of efficiency measured according to several metrics including runtime, parameters, FLOPs, activations, and memory consumption while at least maintaining the PSNR of 29.00dB on DIV2K validation set. IMDN is set as the baseline for efficiency measurement. The challenge had 3 tracks including the main track (runtime), sub-track one (model complexity), and sub-track two (overall performance). In the main track, the practical runtime performance of the submissions was evaluated. The rank of the teams were determined directly by the absolute value of the average runtime on the validation set and test set. In sub-track one, the number of parameters and FLOPs were considered. And the individual rankings of the two metrics were summed up to determine a final ranking in this track. In sub-track two, all of the five metrics mentioned in the description of the challenge including runtime, parameter count, FLOPs, activations, and memory consumption were considered. Similar to sub-track one, the rankings of five metrics were summed up to determine a final ranking. The challenge had 303 registered participants, and 43 teams made valid submissions. They gauge the state-of-the-art in efficient single image super-resolution.

Few-Shot Traffic Prediction with Graph Networks using Locale as Relational Inductive Biases

Mar 08, 2022

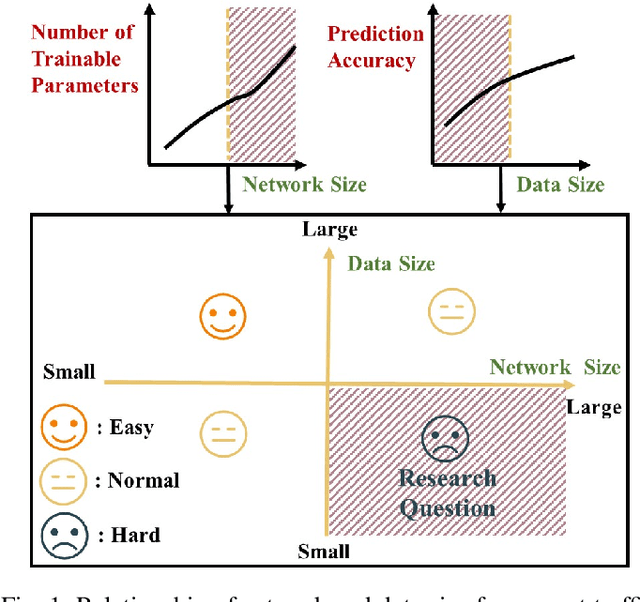

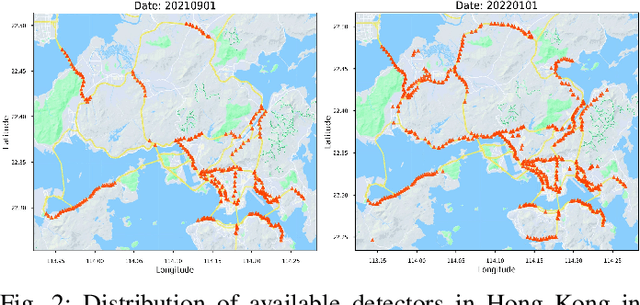

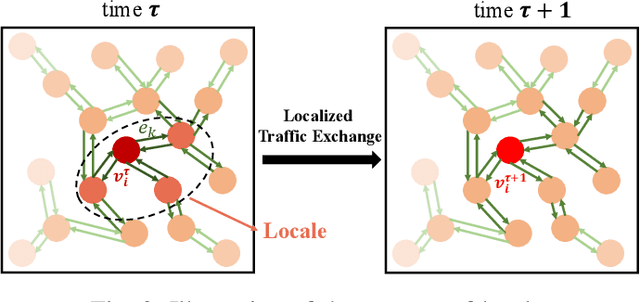

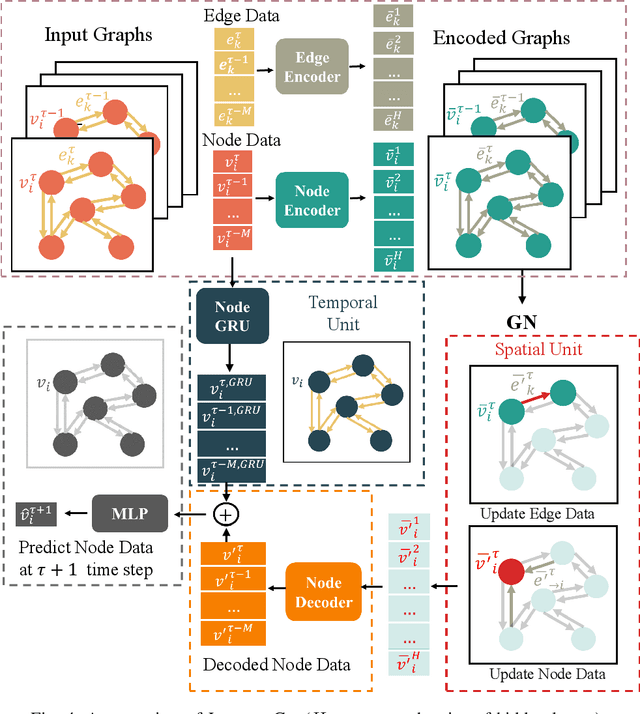

Abstract:Accurate short-term traffic prediction plays a pivotal role in various smart mobility operation and management systems. Currently, most of the state-of-the-art prediction models are based on graph neural networks (GNNs), and the required training samples are proportional to the size of the traffic network. In many cities, the available amount of traffic data is substantially below the minimum requirement due to the data collection expense. It is still an open question to develop traffic prediction models with a small size of training data on large-scale networks. We notice that the traffic states of a node for the near future only depend on the traffic states of its localized neighborhoods, which can be represented using the graph relational inductive biases. In view of this, this paper develops a graph network (GN)-based deep learning model LocaleGn that depicts the traffic dynamics using localized data aggregating and updating functions, as well as the node-wise recurrent neural networks. LocaleGn is a light-weighted model designed for training on few samples without over-fitting, and hence it can solve the problem of few-shot traffic prediction. The proposed model is examined on predicting both traffic speed and flow with six datasets, and the experimental results demonstrate that LocaleGn outperforms existing state-of-the-art baseline models. It is also demonstrated that the learned knowledge from LocaleGn can be transferred across cities. The research outcomes can help to develop light-weighted traffic prediction systems, especially for cities lacking in historically archived traffic data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge