Michal Kosinski

Twenty Years of Personality Computing: Threats, Challenges and Future Directions

Mar 03, 2025

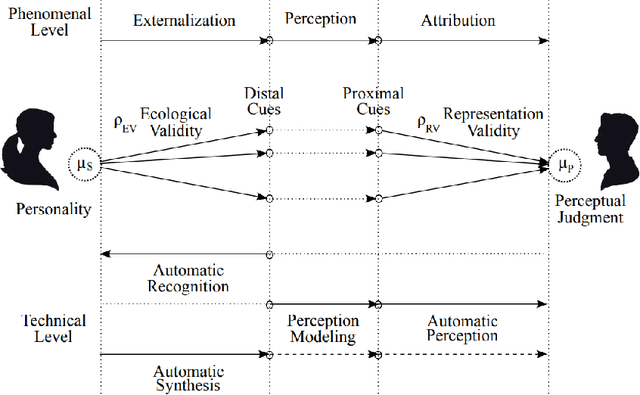

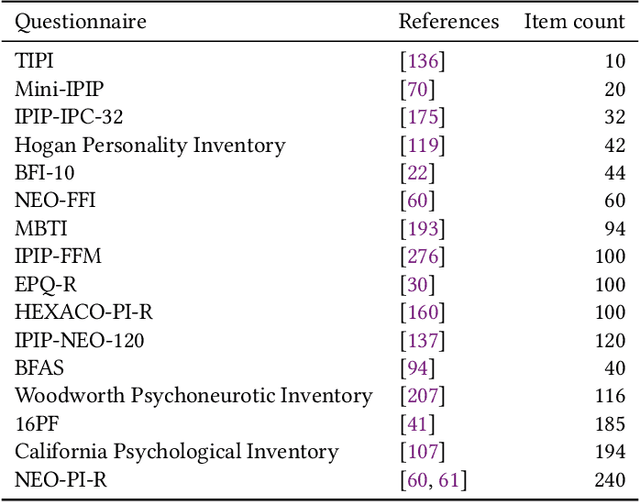

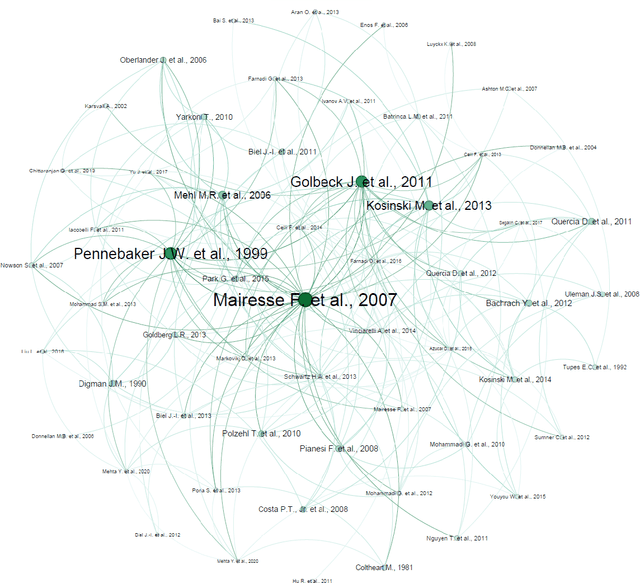

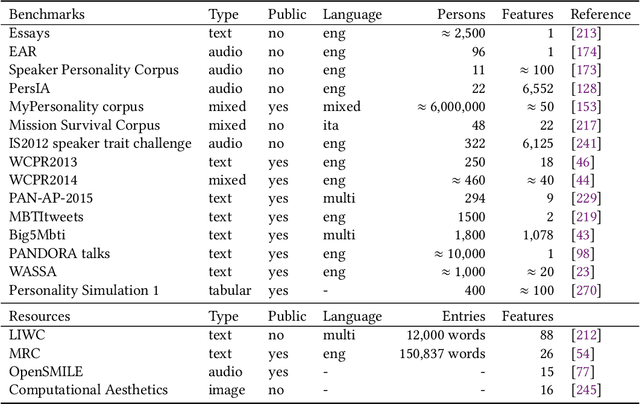

Abstract:Personality Computing is a field at the intersection of Personality Psychology and Computer Science. Started in 2005, research in the field utilizes computational methods to understand and predict human personality traits. The expansion of the field has been very rapid and, by analyzing digital footprints (text, images, social media, etc.), it helped to develop systems that recognize and even replicate human personality. While offering promising applications in talent recruiting, marketing and healthcare, the ethical implications of Personality Computing are significant. Concerns include data privacy, algorithmic bias, and the potential for manipulation by personality-aware Artificial Intelligence. This paper provides an overview of the field, explores key methodologies, discusses the challenges and threats, and outlines potential future directions for responsible development and deployment of Personality Computing technologies.

Facial Width-to-Height Ratio Does Not Predict Self-Reported Behavioral Tendencies

Oct 13, 2024

Abstract:A growing number of studies have linked facial width-to-height ratio (fWHR) with various antisocial or violent behavioral tendencies. However, those studies have predominantly been laboratory based and low powered. This work reexamined the links between fWHR and behavioral tendencies in a large sample of 137,163 participants. Behavioral tendencies were measured using 55 well-established psychometric scales, including self-report scales measuring intelligence, domains and facets of the five-factor model of personality, impulsiveness, sense of fairness, sensational interests, self-monitoring, impression management, and satisfaction with life. The findings revealed that fWHR is not substantially linked with any of these self-reported measures of behavioral tendencies, calling into question whether the links between fWHR and behavior generalize beyond the small samples and specific experimental settings that have been used in past fWHR research.

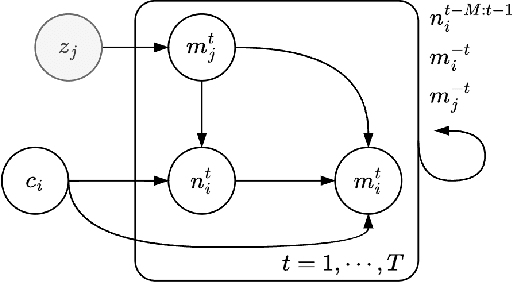

Through the Theory of Mind's Eye: Reading Minds with Multimodal Video Large Language Models

Jun 19, 2024

Abstract:Can large multimodal models have a human-like ability for emotional and social reasoning, and if so, how does it work? Recent research has discovered emergent theory-of-mind (ToM) reasoning capabilities in large language models (LLMs). LLMs can reason about people's mental states by solving various text-based ToM tasks that ask questions about the actors' ToM (e.g., human belief, desire, intention). However, human reasoning in the wild is often grounded in dynamic scenes across time. Thus, we consider videos a new medium for examining spatio-temporal ToM reasoning ability. Specifically, we ask explicit probing questions about videos with abundant social and emotional reasoning content. We develop a pipeline for multimodal LLM for ToM reasoning using video and text. We also enable explicit ToM reasoning by retrieving key frames for answering a ToM question, which reveals how multimodal LLMs reason about ToM.

Evaluating Language Model Agency through Negotiations

Jan 09, 2024

Abstract:Companies, organizations, and governments increasingly exploit Language Models' (LM) remarkable capability to display agent-like behavior. As LMs are adopted to perform tasks with growing autonomy, there exists an urgent need for reliable and scalable evaluation benchmarks. Current, predominantly static LM benchmarks are ill-suited to evaluate such dynamic applications. Thus, we propose jointly evaluating LM performance and alignment through the lenses of negotiation games. We argue that this common task better reflects real-world deployment conditions while offering insights into LMs' decision-making processes. Crucially, negotiation games allow us to study multi-turn, and cross-model interactions, modulate complexity, and side-step accidental data leakage in evaluation. We report results for six publicly accessible LMs from several major providers on a variety of negotiation games, evaluating both self-play and cross-play performance. Noteworthy findings include: (i) open-source models are currently unable to complete these tasks; (ii) cooperative bargaining games prove challenging; and (iii) the most powerful models do not always "win".

Facial recognition technology can expose political orientation from facial images even when controlling for demographics and self-presentation

Mar 28, 2023

Abstract:A facial recognition algorithm was used to extract face descriptors from carefully standardized images of 591 neutral faces taken in the laboratory setting. Face descriptors were entered into a cross-validated linear regression to predict participants' scores on a political orientation scale (Cronbach's alpha=.94) while controlling for age, gender, and ethnicity. The model's performance exceeded r=.20: much better than that of human raters and on par with how well job interviews predict job success, alcohol drives aggressiveness, or psychological therapy improves mental health. Moreover, the model derived from standardized images performed well (r=.12) in a sample of naturalistic images of 3,401 politicians from the U.S., UK, and Canada, suggesting that the associations between facial appearance and political orientation generalize beyond our sample. The analysis of facial features associated with political orientation revealed that conservatives had larger lower faces, although political orientation was only weakly associated with body mass index (BMI). The predictability of political orientation from standardized images has critical implications for privacy, regulation of facial recognition technology, as well as the understanding of the origins and consequences of political orientation.
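The evaluation idea in this abstract, correlating out-of-fold predictions from a cross-validated linear regression with observed scores, can be illustrated with a minimal sketch. It is not the authors' pipeline: it uses a single synthetic predictor instead of multi-dimensional face descriptors, omits the demographic controls, and every name in it (`pearson_r`, `fit_line`, `cross_validated_r`, the simulated `descriptor` and `score` lists) is hypothetical.

```python
import random
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(vx * vy)


def fit_line(xs, ys):
    """Ordinary least squares for y = a + b * x; returns (a, b)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sxy / sxx
    return my - b * mx, b


def cross_validated_r(xs, ys, k=10):
    """Fit on k-1 folds, predict the held-out fold, then correlate
    all out-of-fold predictions with the observed scores."""
    preds = [0.0] * len(xs)
    for fold in range(k):
        test_idx = range(fold, len(xs), k)  # every k-th item forms a fold
        held_out = set(test_idx)
        train_x = [x for i, x in enumerate(xs) if i not in held_out]
        train_y = [y for i, y in enumerate(ys) if i not in held_out]
        a, b = fit_line(train_x, train_y)
        for i in test_idx:
            preds[i] = a + b * xs[i]
    return pearson_r(preds, ys)


# Synthetic data for illustration only (assumption, not from the paper).
rng = random.Random(0)
descriptor = [rng.gauss(0, 1) for _ in range(500)]
score = [0.2 * x + rng.gauss(0, 1) for x in descriptor]
r = cross_validated_r(descriptor, score)
print(f"cross-validated r = {r:.2f}")
```

Because each prediction comes from a model that never saw the corresponding participant, the resulting correlation estimates out-of-sample accuracy rather than in-sample fit.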

Theory of Mind May Have Spontaneously Emerged in Large Language Models

Feb 10, 2023

Abstract:Theory of mind (ToM), or the ability to impute unobservable mental states to others, is central to human social interactions, communication, empathy, self-consciousness, and morality. We administer classic false-belief tasks, widely used to test ToM in humans, to several language models, without any examples or pre-training. Our results show that models published before 2022 show virtually no ability to solve ToM tasks. Yet, the January 2022 version of GPT-3 (davinci-002) solved 70% of ToM tasks, a performance comparable with that of seven-year-old children. Moreover, its November 2022 version (davinci-003), solved 93% of ToM tasks, a performance comparable with that of nine-year-old children. These findings suggest that ToM-like ability (thus far considered to be uniquely human) may have spontaneously emerged as a byproduct of language models' improving language skills.

Machine intuition: Uncovering human-like intuitive decision-making in GPT-3.5

Dec 10, 2022

Abstract:Artificial intelligence (AI) technologies revolutionize vast fields of society. Humans using these systems are likely to expect them to work in a potentially hyperrational manner. However, in this study, we show that some AI systems, namely large language models (LLMs), exhibit behavior that strikingly resembles human-like intuition - and the many cognitive errors that come with them. We use a state-of-the-art LLM, namely the latest iteration of OpenAI's Generative Pre-trained Transformer (GPT-3.5), and probe it with the Cognitive Reflection Test (CRT) as well as semantic illusions that were originally designed to investigate intuitive decision-making in humans. Our results show that GPT-3.5 systematically exhibits "machine intuition," meaning that it produces incorrect responses that are surprisingly equal to how humans respond to the CRT as well as to semantic illusions. We investigate several approaches to test how sturdy GPT-3.5's inclination for intuitive-like decision-making is. Our study demonstrates that investigating LLMs with methods from cognitive science has the potential to reveal emergent traits and adjust expectations regarding their machine behavior.

Darts: User-Friendly Modern Machine Learning for Time Series

Oct 08, 2021

Abstract:We present Darts, a Python machine learning library for time series, with a focus on forecasting. Darts offers a variety of models, from classics such as ARIMA to state-of-the-art deep neural networks. The emphasis of the library is on offering modern machine learning functionalities, such as supporting multidimensional series, meta-learning on multiple series, training on large datasets, incorporating external data, ensembling models, and providing a rich support for probabilistic forecasting. At the same time, great care goes into the API design to make it user-friendly and easy to use. For instance, all models can be used using fit()/predict(), similar to scikit-learn.
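The fit()/predict() convention mentioned in this abstract can be sketched in plain Python. The class below is a hypothetical stand-in written for illustration; it does not use or reproduce the Darts API, and the name `SeasonalNaiveSketch` and its `season_length` parameter are assumptions. It only shows the shape of the interface: `fit()` learns from a historical series, and `predict(n)` returns the next `n` values.

```python
class SeasonalNaiveSketch:
    """Toy forecaster: repeats the last observed season.

    Hypothetical illustration of a fit()/predict() interface,
    not a Darts model.
    """

    def __init__(self, season_length=1):
        if season_length < 1:
            raise ValueError("season_length must be at least 1")
        self.season_length = season_length
        self._last_season = None

    def fit(self, series):
        """Store the final season of the training series."""
        values = list(series)
        if len(values) < self.season_length:
            raise ValueError("series is shorter than one season")
        self._last_season = values[-self.season_length:]
        return self  # returning self allows chaining fit().predict()

    def predict(self, n):
        """Forecast n steps ahead by cycling through the stored season."""
        if self._last_season is None:
            raise RuntimeError("call fit() before predict()")
        return [self._last_season[i % self.season_length] for i in range(n)]


history = [1, 2, 3, 4, 5, 6]
forecast = SeasonalNaiveSketch(season_length=3).fit(history).predict(5)
print(forecast)  # [4, 5, 6, 4, 5]
```

Keeping every model behind the same two methods means a simple baseline like this one could be swapped for a more complex model without changing the calling code.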

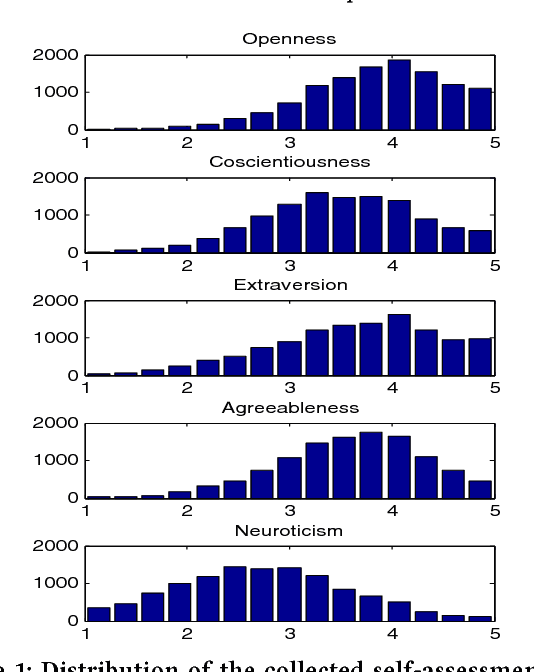

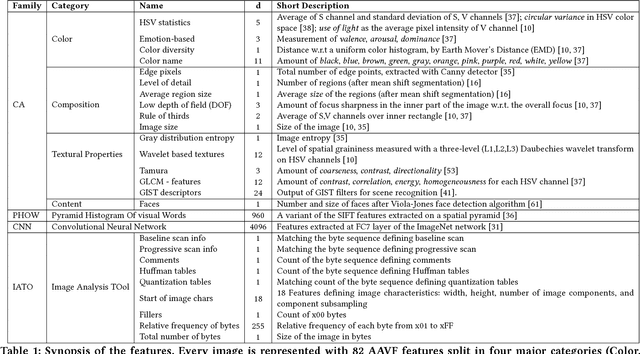

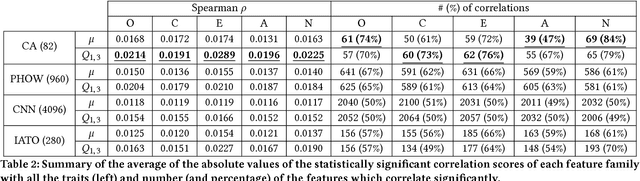

What your Facebook Profile Picture Reveals about your Personality

Aug 13, 2017

Abstract:People spend considerable effort managing the impressions they give others. Social psychologists have shown that people manage these impressions differently depending upon their personality. Facebook and other social media provide a new forum for this fundamental process; hence, understanding people's behaviour on social media could provide interesting insights on their personality. In this paper we investigate automatic personality recognition from Facebook profile pictures. We analyze the effectiveness of four families of visual features and we discuss some human interpretable patterns that explain the personality traits of the individuals. For example, extroverts and agreeable individuals tend to have warm colored pictures and to exhibit many faces in their portraits, mirroring their inclination to socialize; while neurotic ones have a prevalence of pictures of indoor places. Then, we propose a classification approach to automatically recognize personality traits from these visual features. Finally, we compare the performance of our classification approach to the one obtained by human raters and we show that computer-based classifications are significantly more accurate than averaged human-based classifications for Extraversion and Neuroticism.

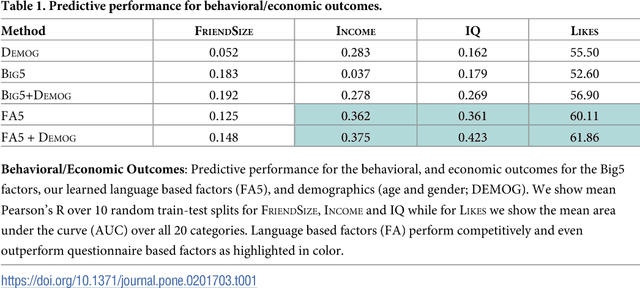

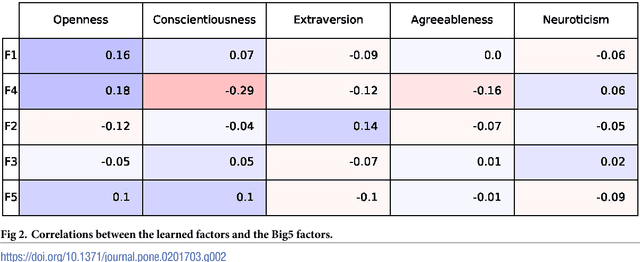

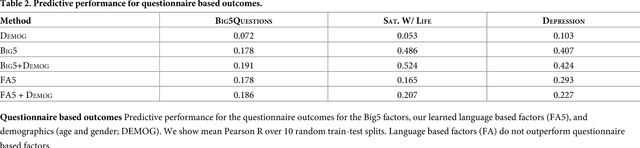

Latent Human Traits in the Language of Social Media: An Open-Vocabulary Approach

May 22, 2017

Abstract:Over the past century, personality theory and research has successfully identified core sets of characteristics that consistently describe and explain fundamental differences in the way people think, feel and behave. Such characteristics were derived through theory, dictionary analyses, and survey research using explicit self-reports. The availability of social media data spanning millions of users now makes it possible to automatically derive characteristics from language use -- at large scale. Taking advantage of linguistic information available through Facebook, we study the process of inferring a new set of potential human traits based on unprompted language use. We subject these new traits to a comprehensive set of evaluations and compare them with a popular five factor model of personality. We find that our language-based trait construct is often more generalizable in that it often predicts non-questionnaire-based outcomes better than questionnaire-based traits (e.g. entities someone likes, income and intelligence quotient), while the factors remain nearly as stable as traditional factors. Our approach suggests a value in new constructs of personality derived from everyday human language use.
