Marija Popovic

e Institute of Geodesy and Geoinformation, University of Bonn, Germany

Keypoint Semantic Integration for Improved Feature Matching in Outdoor Agricultural Environments

Mar 11, 2025Abstract:Robust robot navigation in outdoor environments requires accurate perception systems capable of handling visual challenges such as repetitive structures and changing appearances. Visual feature matching is crucial to vision-based pipelines but remains particularly challenging in natural outdoor settings due to perceptual aliasing. We address this issue in vineyards, where repetitive vine trunks and other natural elements generate ambiguous descriptors that hinder reliable feature matching. We hypothesise that semantic information tied to keypoint positions can alleviate perceptual aliasing by enhancing keypoint descriptor distinctiveness. To this end, we introduce a keypoint semantic integration technique that improves the descriptors in semantically meaningful regions within the image, enabling more accurate differentiation even among visually similar local features. We validate this approach in two vineyard perception tasks: (i) relative pose estimation and (ii) visual localisation. Across all tested keypoint types and descriptors, our method improves matching accuracy by 12.6%, demonstrating its effectiveness over multiple months in challenging vineyard conditions.

Discrete Gaussian Process Representations for Optimising UAV-based Precision Weed Mapping

Mar 10, 2025Abstract:Accurate agricultural weed mapping using UAVs is crucial for precision farming applications. Traditional methods rely on orthomosaic stitching from rigid flight paths, which is computationally intensive and time-consuming. Gaussian Process (GP)-based mapping offers continuous modelling of the underlying variable (i.e. weed distribution) but requires discretisation for practical tasks like path planning or visualisation. Current implementations often default to quadtrees or gridmaps without systematically evaluating alternatives. This study compares five discretisation methods: quadtrees, wedgelets, top-down binary space partition (BSP) trees using least square error (LSE), bottom-up BSP trees using graph merging, and variable-resolution hexagonal grids. Evaluations on real-world weed distributions measure visual similarity, mean squared error (MSE), and computational efficiency. Results show quadtrees perform best overall, but alternatives excel in specific scenarios: hexagons or BSP LSE suit fields with large, dominant weed patches, while quadtrees are optimal for dispersed small-scale distributions. These findings highlight the need to tailor discretisation approaches to weed distribution patterns (patch size, density, coverage) rather than relying on default methods. By choosing representations based on the underlying distribution, we can improve mapping accuracy and efficiency for precision agriculture applications.

Robotic Learning for Adaptive Informative Path Planning

Apr 15, 2024Abstract:Adaptive informative path planning (AIPP) is important to many robotics applications, enabling mobile robots to efficiently collect useful data about initially unknown environments. In addition, learning-based methods are increasingly used in robotics to enhance adaptability, versatility, and robustness across diverse and complex tasks. Our survey explores research on applying robotic learning to AIPP, bridging the gap between these two research fields. We begin by providing a unified mathematical framework for general AIPP problems. Next, we establish two complementary taxonomies of current work from the perspectives of (i) learning algorithms and (ii) robotic applications. We explore synergies, recent trends, and highlight the benefits of learning-based methods in AIPP frameworks. Finally, we discuss key challenges and promising future directions to enable more generally applicable and robust robotic data-gathering systems through learning. We provide a comprehensive catalogue of papers reviewed in our survey, including publicly available repositories, to facilitate future studies in the field.

Perceptual Factors for Environmental Modeling in Robotic Active Perception

Sep 19, 2023

Abstract:Accurately assessing the potential value of new sensor observations is a critical aspect of planning for active perception. This task is particularly challenging when reasoning about high-level scene understanding using measurements from vision-based neural networks. Due to appearance-based reasoning, the measurements are susceptible to several environmental effects such as the presence of occluders, variations in lighting conditions, and redundancy of information due to similarity in appearance between nearby viewpoints. To address this, we propose a new active perception framework incorporating an arbitrary number of perceptual effects in planning and fusion. Our method models the correlation with the environment by a set of general functions termed perceptual factors to construct a perceptual map, which quantifies the aggregated influence of the environment on candidate viewpoints. This information is seamlessly incorporated into the planning and fusion processes by adjusting the uncertainty associated with measurements to weigh their contributions. We evaluate our perceptual maps in a simulated environment that reproduces environmental conditions common in robotics applications. Our results show that, by accounting for environmental effects within our perceptual maps, we improve in the state estimation by correctly selecting the viewpoints and considering the measurement noise correctly when affected by environmental factors. We furthermore deploy our approach on a ground robot to showcase its applicability for real-world active perception missions.

Efficient Volumetric Mapping Using Depth Completion With Uncertainty for Robotic Navigation

Dec 05, 2020

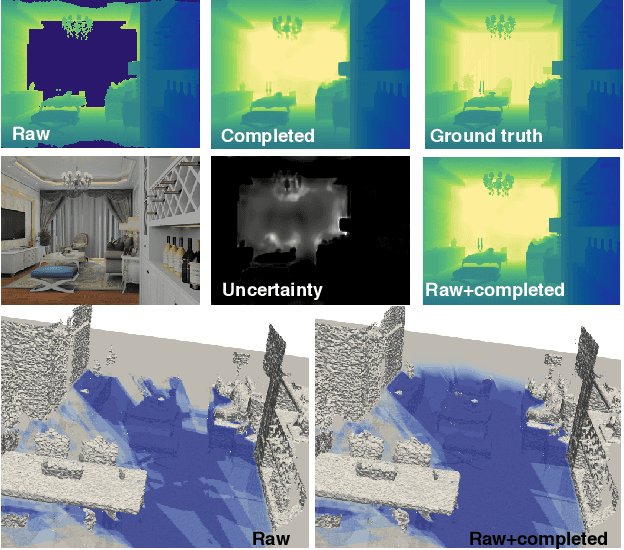

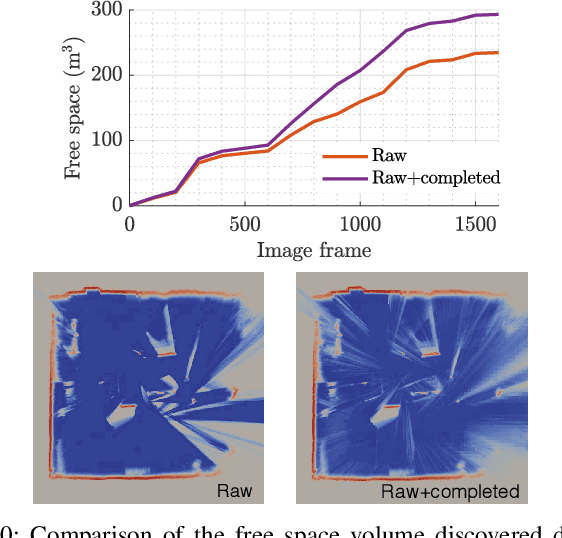

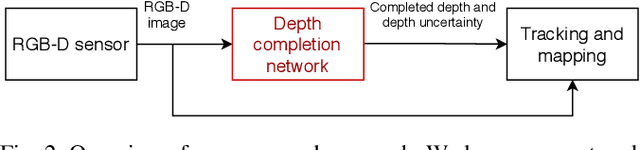

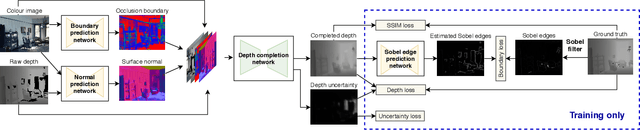

Abstract:In robotic applications, a key requirement for safe and efficient motion planning is the ability to map obstacle-free space in unknown, cluttered 3D environments. However, commodity-grade RGB-D cameras commonly used for sensing fail to register valid depth values on shiny, glossy, bright, or distant surfaces, leading to missing data in the map. To address this issue, we propose a framework leveraging probabilistic depth completion as an additional input for spatial mapping. We introduce a deep learning architecture providing uncertainty estimates for the depth completion of RGB-D images. Our pipeline exploits the inferred missing depth values and depth uncertainty to complement raw depth images and improve the speed and quality of free space mapping. Evaluations on synthetic data show that our approach maps significantly more correct free space with relatively low error when compared against using raw data alone in different indoor environments; thereby producing more complete maps that can be directly used for robotic navigation tasks. The performance of our framework is validated using real-world data.

Multi-Resolution 3D Mapping with Explicit Free Space Representation for Fast and Accurate Mobile Robot Motion Planning

Oct 19, 2020

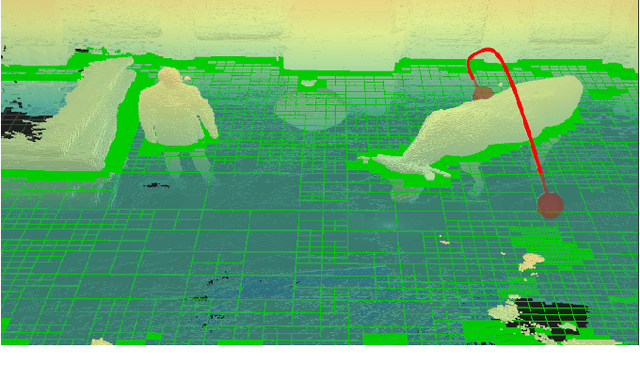

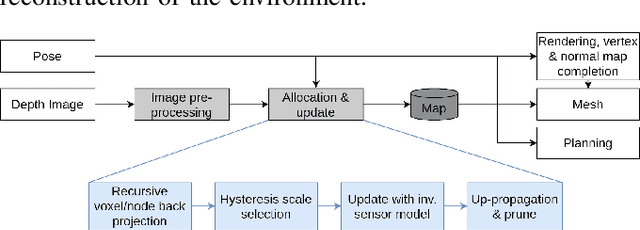

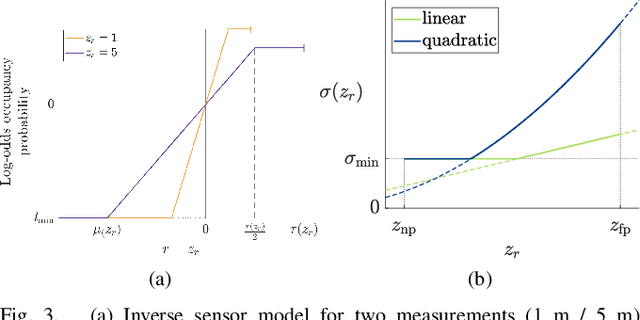

Abstract:With the aim of bridging the gap between high quality reconstruction and mobile robot motion planning, we propose an efficient system that leverages the concept of adaptive-resolution volumetric mapping, which naturally integrates with the hierarchical decomposition of space in an octree data structure. Instead of a Truncated Signed Distance Function (TSDF), we adopt mapping of occupancy probabilities in log-odds representation, which allows to represent both surfaces, as well as the entire free, i.e. observed space, as opposed to unobserved space. We introduce a method for choosing resolution -- on the fly -- in real-time by means of a multi-scale max-min pooling of the input depth image. The notion of explicit free space mapping paired with the spatial hierarchy in the data structure, as well as map resolution, allows for collision queries, as needed for robot motion planning, at unprecedented speed. We quantitatively evaluate mapping accuracy, memory, runtime performance, and planning performance showing improvements over the state of the art, particularly in cases requiring high resolution maps.

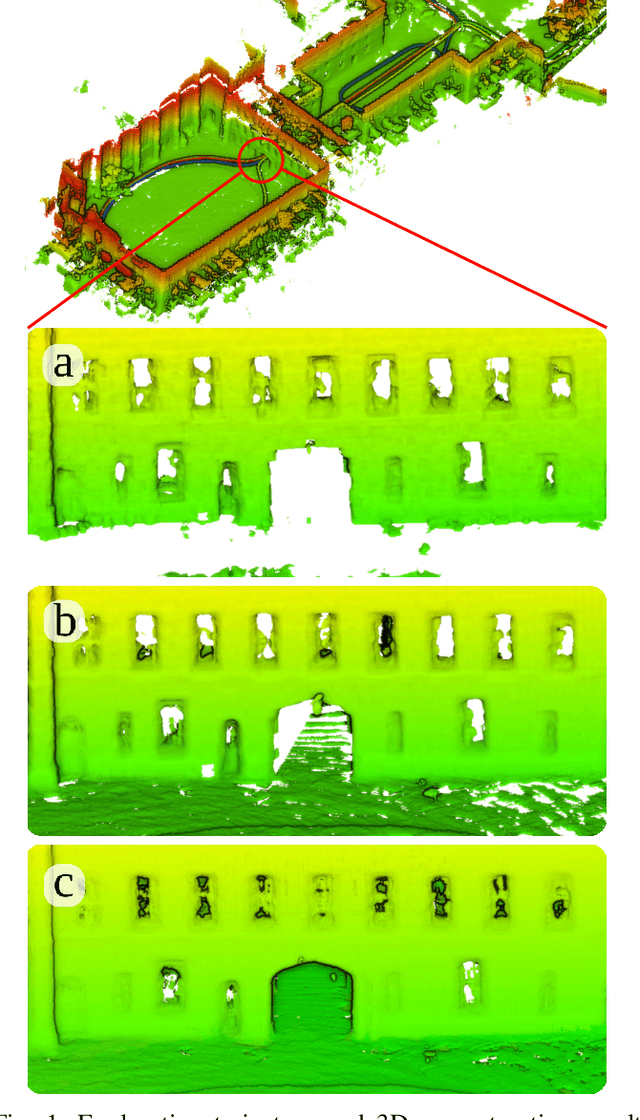

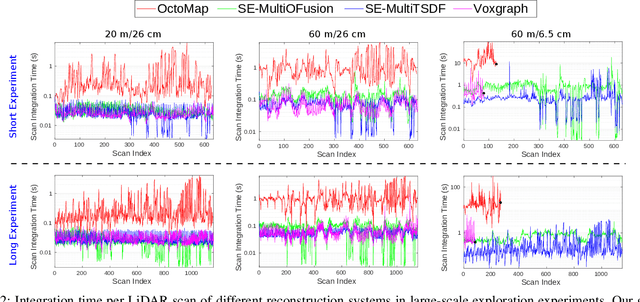

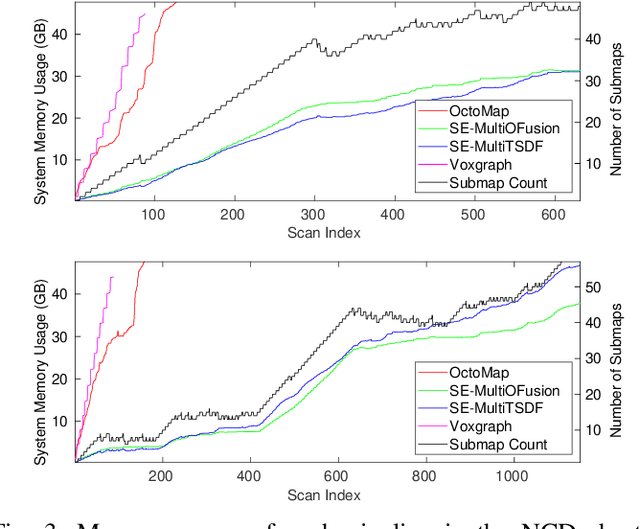

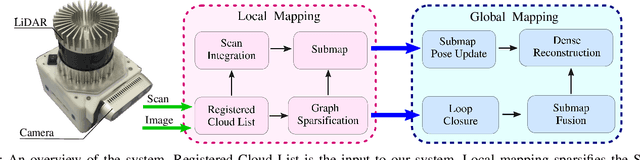

Elastic and Efficient LiDAR Reconstruction for Large-Scale Exploration Tasks

Oct 19, 2020

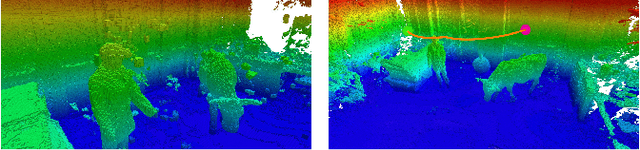

Abstract:We present an efficient, elastic 3D LiDAR reconstruction framework which can reconstruct up to maximum LiDAR ranges (60 m) at multiple frames per second, thus enabling robot exploration in large-scale environments. Our approach only requires a CPU. We focus on three main challenges of large-scale reconstruction: integration of long-range LiDAR scans at high frequency, the capacity to deform the reconstruction after loop closures are detected, and scalability for long-duration exploration. Our system extends upon a state-of-the-art efficient RGB-D volumetric reconstruction technique, called supereight, to support LiDAR scans and a newly developed submapping technique to allow for dynamic correction of the 3D reconstruction. We then introduce a novel pose graph sparsification and submap fusion feature to make our system more scalable for large environments. We evaluate the performance using a published dataset captured by a handheld mapping device scanning a set of buildings, and with a mobile robot exploring an underground room network. Experimental results demonstrate that our system can reconstruct at 3 Hz with 60 m sensor range and ~5 cm resolution, while state-of-the-art approaches can only reconstruct to 25 cm resolution or 20 m range at the same frequency.

Aerial Manipulation Using Hybrid Force and Position NMPC Applied to Aerial Writing

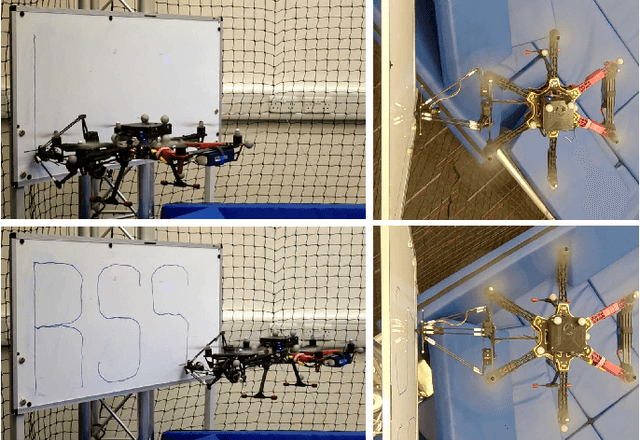

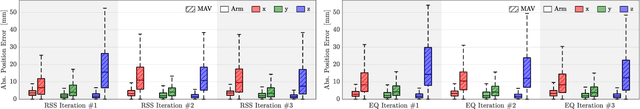

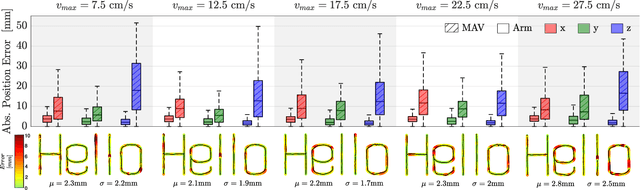

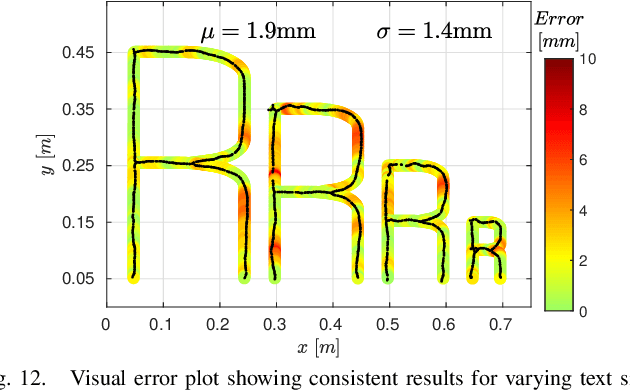

Jun 03, 2020

Abstract:Aerial manipulation aims at combining the manoeuvrability of aerial vehicles with the manipulation capabilities of robotic arms. This, however, comes at the cost of the additional control complexity due to the coupling of the dynamics of the two systems. In this paper we present a NMPC specifically designed for MAVs equipped with a robotic arm. We formulate a hybrid control model for the combined MAV-arm system which incorporates interaction forces acting on the end effector. We explain the practical implementation of our algorithm and show extensive experimental results of our custom built system performing multiple aerial-writing tasks on a whiteboard, revealing accuracy in the order of millimetres.

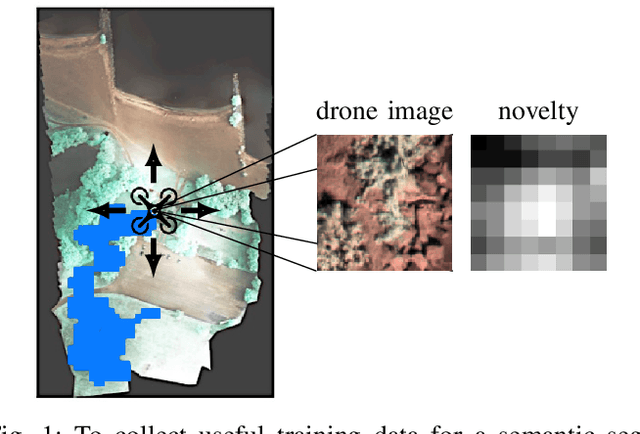

Active Learning for UAV-based Semantic Mapping

Aug 29, 2019

Abstract:Unmanned aerial vehicles combined with computer vision systems, such as convolutional neural networks, offer a flexible and affordable solution for terrain monitoring, mapping, and detection tasks. However, a key challenge remains the collection and annotation of training data for the given sensors, application, and mission. We introduce an informative path planning system that incorporates novelty estimation into its objective function, based on research for uncertainty estimation in deep learning. The system is designed for data collection to reduce both the number of flights and of annotated images. We evaluate the approach on real world terrain mapping data and show significantly smaller collected training dataset compared to standard lawnmower data collection techniques.

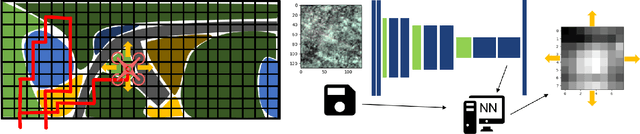

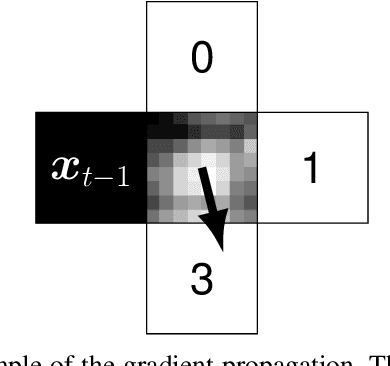

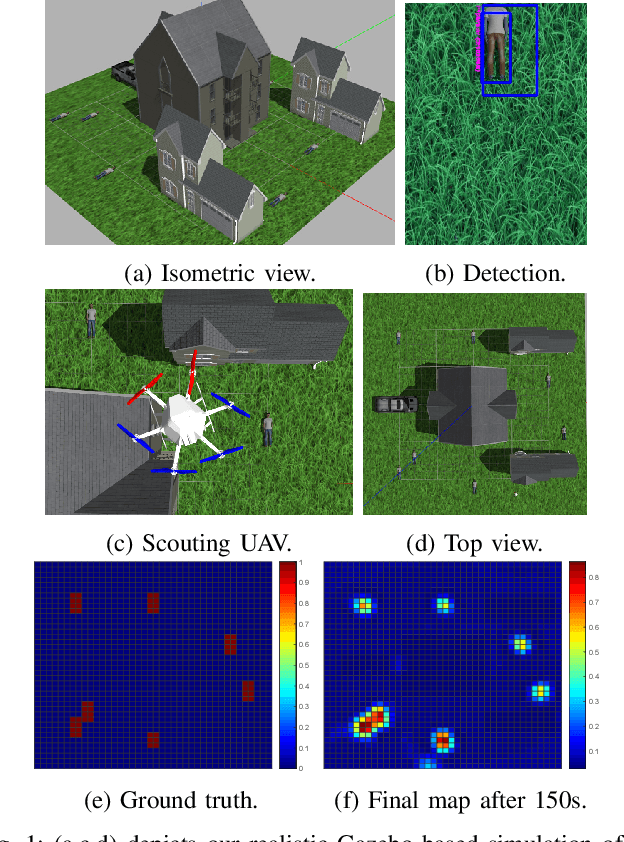

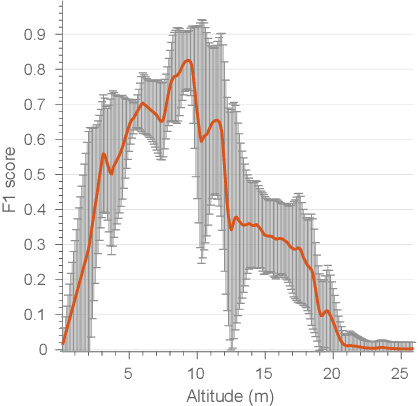

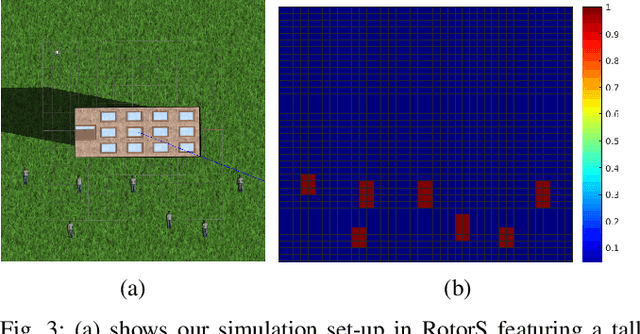

Obstacle-aware Adaptive Informative Path Planning for UAV-based Target Search

Feb 26, 2019

Abstract:Target search with unmanned aerial vehicles (UAVs) is relevant problem to many scenarios, e.g., search and rescue (SaR). However, a key challenge is planning paths for maximal search efficiency given flight time constraints. To address this, we propose the Obstacle-aware Adaptive Informative Path Planning (OA-IPP) algorithm for target search in cluttered environments using UAVs. Our approach leverages a layered planning strategy using a Gaussian Process (GP)-based model of target occupancy to generate informative paths in continuous 3D space. Within this framework, we introduce an adaptive replanning scheme which allows us to trade off between information gain, field coverage, sensor performance, and collision avoidance for efficient target detection. Extensive simulations show that our OA-IPP method performs better than state-of-the-art planners, and we demonstrate its application in a realistic urban SaR scenario.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge