Jiapeng Liu

EXaMCaP: Subset Selection with Entropy Gain Maximization for Probing Capability Gains of Large Chart Understanding Training Sets

Feb 04, 2026Abstract:Recent works focus on synthesizing Chart Understanding (ChartU) training sets to inject advanced chart knowledge into Multimodal Large Language Models (MLLMs), where the sufficiency of the knowledge is typically verified by quantifying capability gains via the fine-tune-then-evaluate paradigm. However, full-set fine-tuning MLLMs to assess such gains incurs significant time costs, hindering the iterative refinement cycles of the ChartU dataset. Reviewing the ChartU dataset synthesis and data selection domains, we find that subsets can potentially probe the MLLMs' capability gains from full-set fine-tuning. Given that data diversity is vital for boosting MLLMs' performance and entropy reflects this feature, we propose EXaMCaP, which uses entropy gain maximization to select a subset. To obtain a high-diversity subset, EXaMCaP chooses the maximum-entropy subset from the large ChartU dataset. As enumerating all possible subsets is impractical, EXaMCaP iteratively selects samples to maximize the gain in set entropy relative to the current set, approximating the maximum-entropy subset of the full dataset. Experiments show that EXaMCaP outperforms baselines in probing the capability gains of the ChartU training set, along with its strong effectiveness across diverse subset sizes and compatibility with various MLLM architectures.

Towards Effective Model Editing for LLM Personalization

Dec 15, 2025Abstract:Personalization is becoming indispensable for LLMs to align with individual user preferences and needs. Yet current approaches are often computationally expensive, data-intensive, susceptible to catastrophic forgetting, and prone to performance degradation in multi-turn interactions or when handling implicit queries. To address these challenges, we conceptualize personalization as a model editing task and introduce Personalization Editing, a framework that applies localized edits guided by clustered preference representations. This design enables precise preference-aligned updates while preserving overall model capabilities. In addition, existing personalization benchmarks frequently rely on persona-based dialogs between LLMs rather than user-LLM interactions, or focus primarily on stylistic imitation while neglecting information-seeking tasks that require accurate recall of user-specific preferences. We introduce User Preference Question Answering (UPQA), a short-answer QA dataset constructed from in-situ user queries with varying levels of difficulty. Unlike prior benchmarks, UPQA directly evaluates a model's ability to recall and apply specific user preferences. Across experimental settings, Personalization Editing achieves higher editing accuracy and greater computational efficiency than fine-tuning, while outperforming prompting-based baselines in multi-turn conversations and implicit preference questions settings.

A novel Neural-ODE model for the state of health estimation of lithium-ion battery using charging curve

May 09, 2025

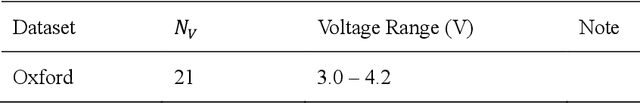

Abstract:The state of health (SOH) of lithium-ion batteries (LIBs) is crucial for ensuring the safe and reliable operation of electric vehicles. Nevertheless, the prevailing SOH estimation methods often have limited generalizability. This paper introduces a data-driven approach for estimating the SOH of LIBs, which is designed to improve generalization. We construct a hybrid model named ACLA, which integrates the attention mechanism, convolutional neural network (CNN), and long short-term memory network (LSTM) into the augmented neural ordinary differential equation (ANODE) framework. This model employs normalized charging time corresponding to specific voltages in the constant current charging phase as input and outputs the SOH as well as remaining useful of life. The model is trained on NASA and Oxford datasets and validated on the TJU and HUST datasets. Compared to the benchmark models NODE and ANODE, ACLA exhibits higher accuracy with root mean square errors (RMSE) for SOH estimation as low as 1.01% and 2.24% on the TJU and HUST datasets, respectively.

DAPLSR: Data Augmentation Partial Least Squares Regression Model via Manifold Optimization

Apr 23, 2025Abstract:Traditional Partial Least Squares Regression (PLSR) models frequently underperform when handling data characterized by uneven categories. To address the issue, this paper proposes a Data Augmentation Partial Least Squares Regression (DAPLSR) model via manifold optimization. The DAPLSR model introduces the Synthetic Minority Over-sampling Technique (SMOTE) to increase the number of samples and utilizes the Value Difference Metric (VDM) to select the nearest neighbor samples that closely resemble the original samples for generating synthetic samples. In solving the model, in order to obtain a more accurate numerical solution for PLSR, this paper proposes a manifold optimization method that uses the geometric properties of the constraint space to improve model degradation and optimization. Comprehensive experiments show that the proposed DAPLSR model achieves superior classification performance and outstanding evaluation metrics on various datasets, significantly outperforming existing methods.

Integrating Response Time and Attention Duration in Bayesian Preference Learning for Multiple Criteria Decision Aiding

Apr 21, 2025

Abstract:We introduce a multiple criteria Bayesian preference learning framework incorporating behavioral cues for decision aiding. The framework integrates pairwise comparisons, response time, and attention duration to deepen insights into decision-making processes. The approach employs an additive value function model and utilizes a Bayesian framework to derive the posterior distribution of potential ranking models by defining the likelihood of observed preference data and specifying a prior on the preference structure. This distribution highlights each model's ability to reconstruct Decision-Makers' holistic pairwise comparisons. By leveraging both response time as a proxy for cognitive effort and alternative discriminability as well as attention duration as an indicator of criterion importance, the proposed model surpasses traditional methods by uncovering richer behavioral patterns. We report the results of a laboratory experiment on mobile phone contract selection involving 30 real subjects using a dedicated application with time-, eye-, and mouse-tracking components. We validate the novel method's ability to reconstruct complete preferences. The detailed ablation studies reveal time- and attention-related behavioral patterns, confirming that integrating comprehensive data leads to developing models that better align with the DM's actual preferences.

AgentA/B: Automated and Scalable Web A/BTesting with Interactive LLM Agents

Apr 13, 2025

Abstract:A/B testing experiment is a widely adopted method for evaluating UI/UX design decisions in modern web applications. Yet, traditional A/B testing remains constrained by its dependence on the large-scale and live traffic of human participants, and the long time of waiting for the testing result. Through formative interviews with six experienced industry practitioners, we identified critical bottlenecks in current A/B testing workflows. In response, we present AgentA/B, a novel system that leverages Large Language Model-based autonomous agents (LLM Agents) to automatically simulate user interaction behaviors with real webpages. AgentA/B enables scalable deployment of LLM agents with diverse personas, each capable of navigating the dynamic webpage and interactively executing multi-step interactions like search, clicking, filtering, and purchasing. In a demonstrative controlled experiment, we employ AgentA/B to simulate a between-subject A/B testing with 1,000 LLM agents Amazon.com, and compare agent behaviors with real human shopping behaviors at a scale. Our findings suggest AgentA/B can emulate human-like behavior patterns.

Deep learning for state estimation of commercial sodium-ion batteries using partial charging profiles: validation with a multi-temperature ageing dataset

Apr 01, 2025

Abstract:Accurately predicting the state of health for sodium-ion batteries is crucial for managing battery modules, playing a vital role in ensuring operational safety. However, highly accurate models available thus far are rare due to a lack of aging data for sodium-ion batteries. In this study, we experimentally collected 53 single cells at four temperatures (0, 25, 35, and 45 {\deg}C), along with two battery modules in the lab. By utilizing the charging profiles, we were able to predict the SOC, capacity, and SOH simultaneously. This was achieved by designing a new framework that integrates the neural ordinary differential equation and 2D convolutional neural networks, using the partial charging profile as input. The charging profile is partitioned into segments, and each segment is fed into the network to output the SOC. For capacity and SOH prediction, we first aggregated the extracted features corresponding to segments from one cycle, after which an embedding block for temperature is concatenated for the final prediction. This novel approach eliminates the issue of multiple outputs for a single target. Our model demonstrated an $R^2$ accuracy of 0.998 for SOC and 0.997 for SOH across single cells at various temperatures. Furthermore, the trained model can be employed to predict single cells at temperatures outside the training set and battery modules with different capacity and current levels. The results presented here highlight the high accuracy of our model and its capability to predict multiple targets simultaneously using a partial charging profile.

Preference Construction: A Bayesian Interactive Preference Elicitation Framework Based on Monte Carlo Tree Search

Mar 19, 2025

Abstract:We present a novel preference learning framework to capture participant preferences efficiently within limited interaction rounds. It involves three main contributions. First, we develop a variational Bayesian approach to infer the participant's preference model by estimating posterior distributions and managing uncertainty from limited information. Second, we propose an adaptive questioning policy that maximizes cumulative uncertainty reduction, formulating questioning as a finite Markov decision process and using Monte Carlo Tree Search to prioritize promising question trajectories. By considering long-term effects and leveraging the efficiency of the Bayesian approach, the policy avoids shortsightedness. Third, we apply the framework to Multiple Criteria Decision Aiding, with pairwise comparison as the preference information and an additive value function as the preference model. We integrate the reparameterization trick to address high-variance issues, enhancing robustness and efficiency. Computational studies on real-world and synthetic datasets demonstrate the framework's practical usability, outperforming baselines in capturing preferences and achieving superior uncertainty reduction within limited interactions.

Fg-T2M++: LLMs-Augmented Fine-Grained Text Driven Human Motion Generation

Feb 08, 2025Abstract:We address the challenging problem of fine-grained text-driven human motion generation. Existing works generate imprecise motions that fail to accurately capture relationships specified in text due to: (1) lack of effective text parsing for detailed semantic cues regarding body parts, (2) not fully modeling linguistic structures between words to comprehend text comprehensively. To tackle these limitations, we propose a novel fine-grained framework Fg-T2M++ that consists of: (1) an LLMs semantic parsing module to extract body part descriptions and semantics from text, (2) a hyperbolic text representation module to encode relational information between text units by embedding the syntactic dependency graph into hyperbolic space, and (3) a multi-modal fusion module to hierarchically fuse text and motion features. Extensive experiments on HumanML3D and KIT-ML datasets demonstrate that Fg-T2M++ outperforms SOTA methods, validating its ability to accurately generate motions adhering to comprehensive text semantics.

Sequential LLM Framework for Fashion Recommendation

Oct 15, 2024

Abstract:The fashion industry is one of the leading domains in the global e-commerce sector, prompting major online retailers to employ recommendation systems for product suggestions and customer convenience. While recommendation systems have been widely studied, most are designed for general e-commerce problems and struggle with the unique challenges of the fashion domain. To address these issues, we propose a sequential fashion recommendation framework that leverages a pre-trained large language model (LLM) enhanced with recommendation-specific prompts. Our framework employs parameter-efficient fine-tuning with extensive fashion data and introduces a novel mix-up-based retrieval technique for translating text into relevant product suggestions. Extensive experiments show our proposed framework significantly enhances fashion recommendation performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge