Chang Li

Fibration Policy Optimization

Mar 09, 2026Abstract:Large language models are increasingly trained as heterogeneous systems spanning multiple domains, expert partitions, and agentic pipelines, yet prevalent proximal objectives operate at a single scale and lack a principled mechanism for coupling token-level, trajectory-level, and higher-level hierarchical stability control. To bridge this gap, we derive the Aggregational Policy Censoring Objective (APC-Obj), the first exact unconstrained reformulation of sample-based TV-TRPO, establishing that clipping-based surrogate design and trust-region optimization are dual formulations of the same problem. Building on this foundation, we develop Fiber Bundle Gating (FBG), an algebraic framework that organizes sampled RL data as a fiber bundle and decomposes ratio gating into a base-level gate on trajectory aggregates and a fiber-level gate on per-token residuals, with provable first-order agreement with the true RL objective near on-policy. From APC-Obj and FBG we derive Fibration Policy Optimization (or simply, FiberPO), a concrete objective whose Jacobian is block-diagonal over trajectories, reduces to identity at on-policy, and provides better update direction thus improving token efficiency. The compositional nature of the framework extends beyond the trajectory-token case: fibrations compose algebraically into a Fibration Gating Hierarchy (FGH) that scales the same gating mechanism to arbitrary hierarchical depth without new primitives, as demonstrated by FiberPO-Domain, a four-level instantiation with independent trust-region budgets at the domain, prompt group, trajectory, and token levels. Together, these results connect the trust-region theory, a compositional algebraic structure, and practical multi-scale stability control into a unified framework for LLM policy optimization.

The Auton Agentic AI Framework

Feb 27, 2026Abstract:The field of Artificial Intelligence is undergoing a transition from Generative AI -- probabilistic generation of text and images -- to Agentic AI, in which autonomous systems execute actions within external environments on behalf of users. This transition exposes a fundamental architectural mismatch: Large Language Models (LLMs) produce stochastic, unstructured outputs, whereas the backend infrastructure they must control -- databases, APIs, cloud services -- requires deterministic, schema-conformant inputs. The present paper describes the Auton Agentic AI Framework, a principled architecture for standardizing the creation, execution, and governance of autonomous agent systems. The framework is organized around a strict separation between the Cognitive Blueprint, a declarative, language-agnostic specification of agent identity and capabilities, and the Runtime Engine, the platform-specific execution substrate that instantiates and runs the agent. This separation enables cross-language portability, formal auditability, and modular tool integration via the Model Context Protocol (MCP). The paper formalizes the agent execution model as an augmented Partially Observable Markov Decision Process (POMDP) with a latent reasoning space, introduces a hierarchical memory consolidation architecture inspired by biological episodic memory systems, defines a constraint manifold formalism for safety enforcement via policy projection rather than post-hoc filtering, presents a three-level self-evolution framework spanning in-context adaptation through reinforcement learning, and describes runtime optimizations -- including parallel graph execution, speculative inference, and dynamic context pruning -- that reduce end-to-end latency for multi-step agent workflows.

BriMA: Bridged Modality Adaptation for Multi-Modal Continual Action Quality Assessment

Feb 22, 2026Abstract:Action Quality Assessment (AQA) aims to score how well an action is performed and is widely used in sports analysis, rehabilitation assessment, and human skill evaluation. Multi-modal AQA has recently achieved strong progress by leveraging complementary visual and kinematic cues, yet real-world deployments often suffer from non-stationary modality imbalance, where certain modalities become missing or intermittently available due to sensor failures or annotation gaps. Existing continual AQA methods overlook this issue and assume that all modalities remain complete and stable throughout training, which restricts their practicality. To address this challenge, we introduce Bridged Modality Adaptation (BriMA), an innovative approach to multi-modal continual AQA under modality-missing conditions. BriMA consists of a memory-guided bridging imputation module that reconstructs missing modalities using both task-agnostic and task-specific representations, and a modality-aware replay mechanism that prioritizes informative samples based on modality distortion and distribution drift. Experiments on three representative multi-modal AQA datasets (RG, Fis-V, and FS1000) show that BriMA consistently improves performance under different modality-missing conditions, achieving 6--8\% higher correlation and 12--15\% lower error on average. These results demonstrate a step toward robust multi-modal AQA systems under real-world deployment constraints.

PACE: Pretrained Audio Continual Learning

Feb 03, 2026Abstract:Audio is a fundamental modality for analyzing speech, music, and environmental sounds. Although pretrained audio models have significantly advanced audio understanding, they remain fragile in real-world settings where data distributions shift over time. In this work, we present the first systematic benchmark for audio continual learning (CL) with pretrained models (PTMs), together with a comprehensive analysis of its unique challenges. Unlike in vision, where parameter-efficient fine-tuning (PEFT) has proven effective for CL, directly transferring such strategies to audio leads to poor performance. This stems from a fundamental property of audio backbones: they focus on low-level spectral details rather than structured semantics, causing severe upstream-downstream misalignment. Through extensive empirical study, we identify analytic classifiers with first-session adaptation (FSA) as a promising direction, but also reveal two major limitations: representation saturation in coarse-grained scenarios and representation drift in fine-grained scenarios. To address these challenges, we propose PACE, a novel method that enhances FSA via a regularized analytic classifier and enables multi-session adaptation through adaptive subspace-orthogonal PEFT for improved semantic alignment. In addition, we introduce spectrogram-based boundary-aware perturbations to mitigate representation overlap and improve stability. Experiments on six diverse audio CL benchmarks demonstrate that PACE substantially outperforms state-of-the-art baselines, marking an important step toward robust and scalable audio continual learning with PTMs.

AI Meets Brain: Memory Systems from Cognitive Neuroscience to Autonomous Agents

Dec 29, 2025Abstract:Memory serves as the pivotal nexus bridging past and future, providing both humans and AI systems with invaluable concepts and experience to navigate complex tasks. Recent research on autonomous agents has increasingly focused on designing efficient memory workflows by drawing on cognitive neuroscience. However, constrained by interdisciplinary barriers, existing works struggle to assimilate the essence of human memory mechanisms. To bridge this gap, we systematically synthesizes interdisciplinary knowledge of memory, connecting insights from cognitive neuroscience with LLM-driven agents. Specifically, we first elucidate the definition and function of memory along a progressive trajectory from cognitive neuroscience through LLMs to agents. We then provide a comparative analysis of memory taxonomy, storage mechanisms, and the complete management lifecycle from both biological and artificial perspectives. Subsequently, we review the mainstream benchmarks for evaluating agent memory. Additionally, we explore memory security from dual perspectives of attack and defense. Finally, we envision future research directions, with a focus on multimodal memory systems and skill acquisition.

Exploiting the Prior of Generative Time Series Imputation

Dec 29, 2025Abstract:Time series imputation, i.e., filling the missing values of a time recording, finds various applications in electricity, finance, and weather modelling. Previous methods have introduced generative models such as diffusion probabilistic models and Schrodinger bridge models to conditionally generate the missing values from Gaussian noise or directly from linear interpolation results. However, as their prior is not informative to the ground-truth target, their generation process inevitably suffer increased burden and limited imputation accuracy. In this work, we present Bridge-TS, building a data-to-data generation process for generative time series imputation and exploiting the design of prior with two novel designs. Firstly, we propose expert prior, leveraging a pretrained transformer-based module as an expert to fill the missing values with a deterministic estimation, and then taking the results as the prior of ground truth target. Secondly, we explore compositional priors, utilizing several pretrained models to provide different estimation results, and then combining them in the data-to-data generation process to achieve a compositional priors-to-target imputation process. Experiments conducted on several benchmark datasets such as ETT, Exchange, and Weather show that Bridge-TS reaches a new record of imputation accuracy in terms of mean square error and mean absolute error, demonstrating the superiority of improving prior for generative time series imputation.

Unsupervised Single-Channel Audio Separation with Diffusion Source Priors

Dec 23, 2025Abstract:Single-channel audio separation aims to separate individual sources from a single-channel mixture. Most existing methods rely on supervised learning with synthetically generated paired data. However, obtaining high-quality paired data in real-world scenarios is often difficult. This data scarcity can degrade model performance under unseen conditions and limit generalization ability. To this end, in this work, we approach this problem from an unsupervised perspective, framing it as a probabilistic inverse problem. Our method requires only diffusion priors trained on individual sources. Separation is then achieved by iteratively guiding an initial state toward the solution through reconstruction guidance. Importantly, we introduce an advanced inverse problem solver specifically designed for separation, which mitigates gradient conflicts caused by interference between the diffusion prior and reconstruction guidance during inverse denoising. This design ensures high-quality and balanced separation performance across individual sources. Additionally, we find that initializing the denoising process with an augmented mixture instead of pure Gaussian noise provides an informative starting point that significantly improves the final performance. To further enhance audio prior modeling, we design a novel time-frequency attention-based network architecture that demonstrates strong audio modeling capability. Collectively, these improvements lead to significant performance gains, as validated across speech-sound event, sound event, and speech separation tasks.

U(PM)$^2$:Unsupervised polygon matching with pre-trained models for challenging stereo images

Nov 08, 2025Abstract:Stereo image matching is a fundamental task in computer vision, photogrammetry and remote sensing, but there is an almost unexplored field, i.e., polygon matching, which faces the following challenges: disparity discontinuity, scale variation, training requirement, and generalization. To address the above-mentioned issues, this paper proposes a novel U(PM)$^2$: low-cost unsupervised polygon matching with pre-trained models by uniting automatically learned and handcrafted features, of which pipeline is as follows: firstly, the detector leverages the pre-trained segment anything model to obtain masks; then, the vectorizer converts the masks to polygons and graphic structure; secondly, the global matcher addresses challenges from global viewpoint changes and scale variation based on bidirectional-pyramid strategy with pre-trained LoFTR; finally, the local matcher further overcomes local disparity discontinuity and topology inconsistency of polygon matching by local-joint geometry and multi-feature matching strategy with Hungarian algorithm. We benchmark our U(PM)$^2$ on the ScanNet and SceneFlow datasets using our proposed new metric, which achieved state-of-the-art accuracy at a competitive speed and satisfactory generalization performance at low cost without any training requirement.

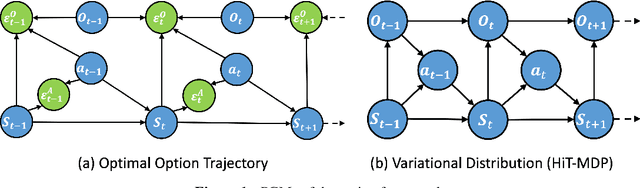

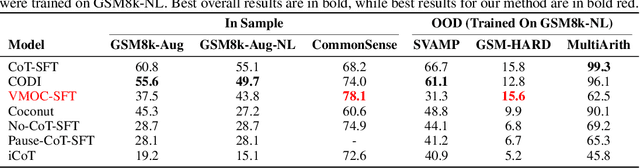

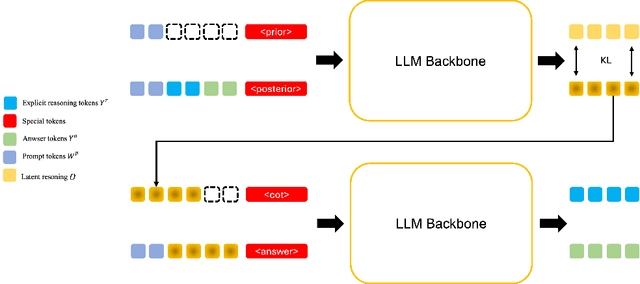

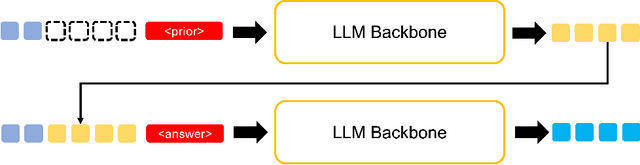

Learning Temporal Abstractions via Variational Homomorphisms in Option-Induced Abstract MDPs

Jul 24, 2025

Abstract:Large Language Models (LLMs) have shown remarkable reasoning ability through explicit Chain-of-Thought (CoT) prompting, but generating these step-by-step textual explanations is computationally expensive and slow. To overcome this, we aim to develop a framework for efficient, implicit reasoning, where the model "thinks" in a latent space without generating explicit text for every step. We propose that these latent thoughts can be modeled as temporally-extended abstract actions, or options, within a hierarchical reinforcement learning framework. To effectively learn a diverse library of options as latent embeddings, we first introduce the Variational Markovian Option Critic (VMOC), an off-policy algorithm that uses variational inference within the HiT-MDP framework. To provide a rigorous foundation for using these options as an abstract reasoning space, we extend the theory of continuous MDP homomorphisms. This proves that learning a policy in the simplified, abstract latent space, for which VMOC is suited, preserves the optimality of the solution to the original, complex problem. Finally, we propose a cold-start procedure that leverages supervised fine-tuning (SFT) data to distill human reasoning demonstrations into this latent option space, providing a rich initialization for the model's reasoning capabilities. Extensive experiments demonstrate that our approach achieves strong performance on complex logical reasoning benchmarks and challenging locomotion tasks, validating our framework as a principled method for learning abstract skills for both language and control.

Latent Swap Joint Diffusion for Long-Form Audio Generation

Feb 07, 2025

Abstract:Previous work on long-form audio generation using global-view diffusion or iterative generation demands significant training or inference costs. While recent advancements in multi-view joint diffusion for panoramic generation provide an efficient option, they struggle with spectrum generation with severe overlap distortions and high cross-view consistency costs. We initially explore this phenomenon through the connectivity inheritance of latent maps and uncover that averaging operations excessively smooth the high-frequency components of the latent map. To address these issues, we propose Swap Forward (SaFa), a frame-level latent swap framework that synchronizes multiple diffusions to produce a globally coherent long audio with more spectrum details in a forward-only manner. At its core, the bidirectional Self-Loop Latent Swap is applied between adjacent views, leveraging stepwise diffusion trajectory to adaptively enhance high-frequency components without disrupting low-frequency components. Furthermore, to ensure cross-view consistency, the unidirectional Reference-Guided Latent Swap is applied between the reference and the non-overlap regions of each subview during the early stages, providing centralized trajectory guidance. Quantitative and qualitative experiments demonstrate that SaFa significantly outperforms existing joint diffusion methods and even training-based long audio generation models. Moreover, we find that it also adapts well to panoramic generation, achieving comparable state-of-the-art performance with greater efficiency and model generalizability. Project page is available at https://swapforward.github.io/.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge