Baohua Lai

HICL: Hashtag-Driven In-Context Learning for Social Media Natural Language Understanding

Aug 19, 2023

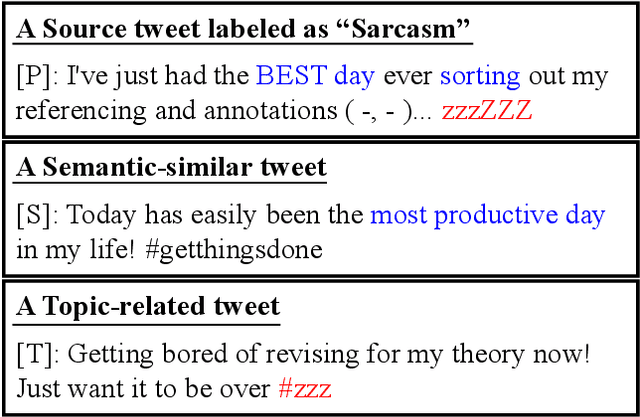

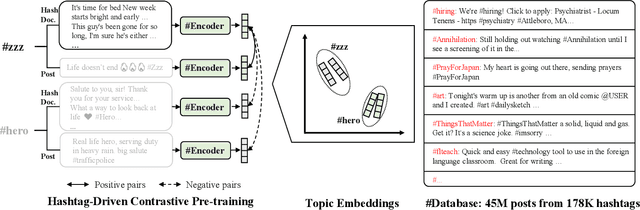

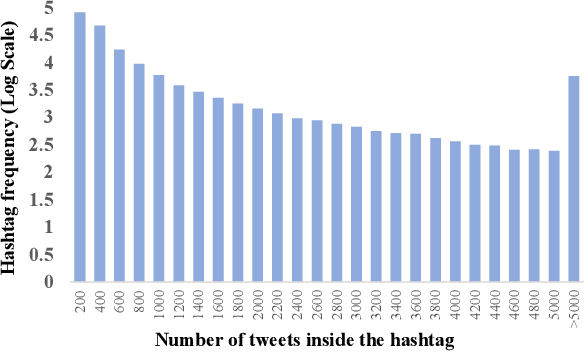

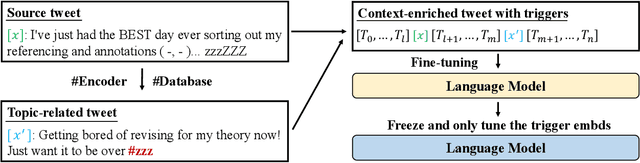

Abstract:Natural language understanding (NLU) is integral to various social media applications. However, existing NLU models rely heavily on context for semantic learning, resulting in compromised performance when faced with short and noisy social media content. To address this issue, we leverage in-context learning (ICL), wherein language models learn to make inferences by conditioning on a handful of demonstrations to enrich the context and propose a novel hashtag-driven in-context learning (HICL) framework. Concretely, we pre-train a model #Encoder, which employs #hashtags (user-annotated topic labels) to drive BERT-based pre-training through contrastive learning. Our objective here is to enable #Encoder to gain the ability to incorporate topic-related semantic information, which allows it to retrieve topic-related posts to enrich contexts and enhance social media NLU with noisy contexts. To further integrate the retrieved context with the source text, we employ a gradient-based method to identify trigger terms useful in fusing information from both sources. For empirical studies, we collected 45M tweets to set up an in-context NLU benchmark, and the experimental results on seven downstream tasks show that HICL substantially advances the previous state-of-the-art results. Furthermore, we conducted extensive analyzes and found that: (1) combining source input with a top-retrieved post from #Encoder is more effective than using semantically similar posts; (2) trigger words can largely benefit in merging context from the source and retrieved posts.

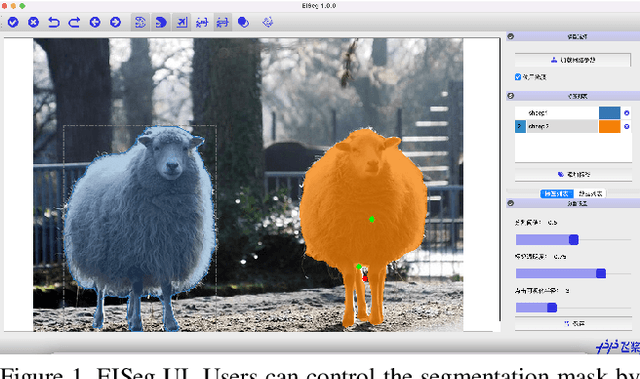

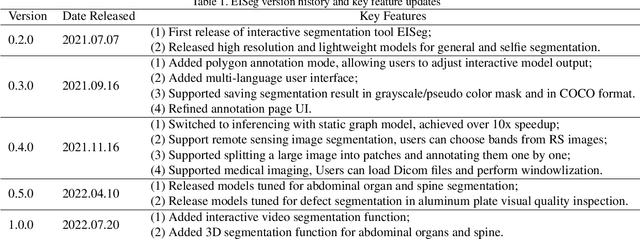

EISeg: An Efficient Interactive Segmentation Tool based on PaddlePaddle

Oct 18, 2022

Abstract:In recent years, the rapid development of deep learning has brought great advancements to image and video segmentation methods based on neural networks. However, to unleash the full potential of such models, large numbers of high-quality annotated images are necessary for model training. Currently, many widely used open-source image segmentation software relies heavily on manual annotation which is tedious and time-consuming. In this work, we introduce EISeg, an Efficient Interactive SEGmentation annotation tool that can drastically improve image segmentation annotation efficiency, generating highly accurate segmentation masks with only a few clicks. We also provide various domain-specific models for remote sensing, medical imaging, industrial quality inspections, human segmentation, and temporal aware models for video segmentation. The source code for our algorithm and user interface are available at: https://github.com/PaddlePaddle/PaddleSeg.

PP-OCRv3: More Attempts for the Improvement of Ultra Lightweight OCR System

Jun 07, 2022

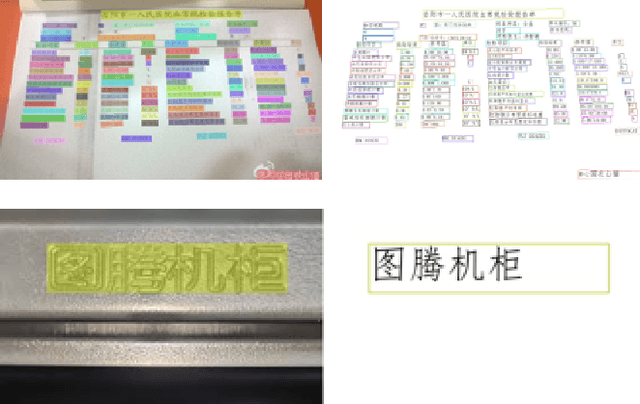

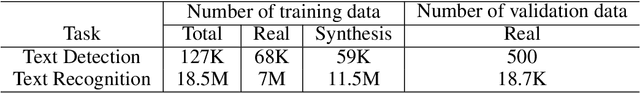

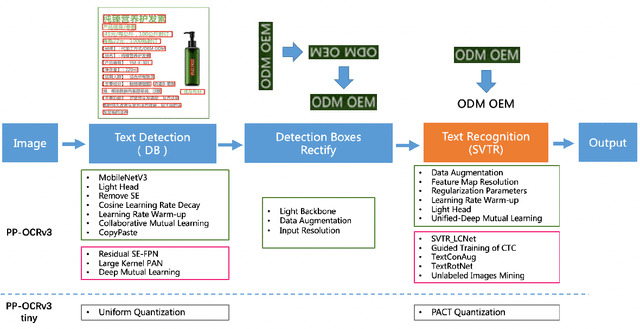

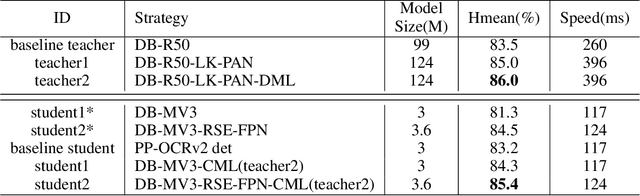

Abstract:Optical character recognition (OCR) technology has been widely used in various scenes, as shown in Figure 1. Designing a practical OCR system is still a meaningful but challenging task. In previous work, considering the efficiency and accuracy, we proposed a practical ultra lightweight OCR system (PP-OCR), and an optimized version PP-OCRv2. In order to further improve the performance of PP-OCRv2, a more robust OCR system PP-OCRv3 is proposed in this paper. PP-OCRv3 upgrades the text detection model and text recognition model in 9 aspects based on PP-OCRv2. For text detector, we introduce a PAN module with large receptive field named LK-PAN, a FPN module with residual attention mechanism named RSE-FPN, and DML distillation strategy. For text recognizer, the base model is replaced from CRNN to SVTR, and we introduce lightweight text recognition network SVTR LCNet, guided training of CTC by attention, data augmentation strategy TextConAug, better pre-trained model by self-supervised TextRotNet, UDML, and UIM to accelerate the model and improve the effect. Experiments on real data show that the hmean of PP-OCRv3 is 5% higher than PP-OCRv2 under comparable inference speed. All the above mentioned models are open-sourced and the code is available in the GitHub repository PaddleOCR which is powered by PaddlePaddle.

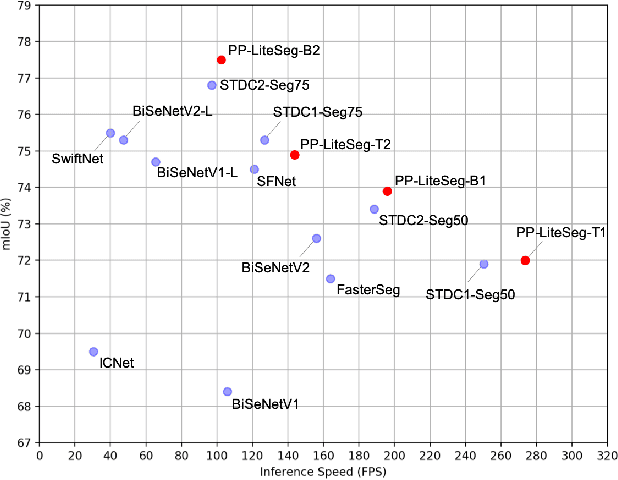

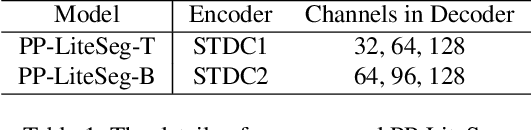

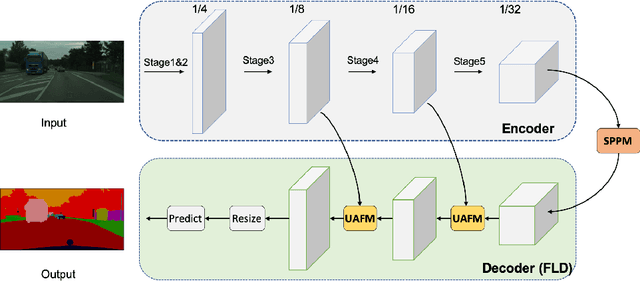

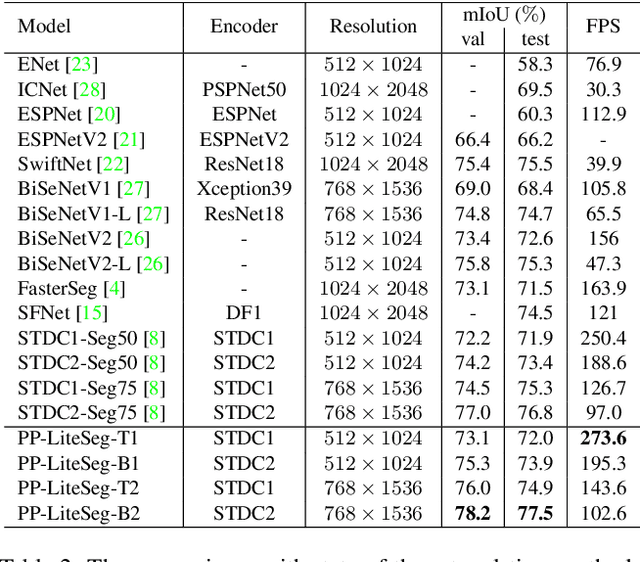

PP-LiteSeg: A Superior Real-Time Semantic Segmentation Model

Apr 06, 2022

Abstract:Real-world applications have high demands for semantic segmentation methods. Although semantic segmentation has made remarkable leap-forwards with deep learning, the performance of real-time methods is not satisfactory. In this work, we propose PP-LiteSeg, a novel lightweight model for the real-time semantic segmentation task. Specifically, we present a Flexible and Lightweight Decoder (FLD) to reduce computation overhead of previous decoder. To strengthen feature representations, we propose a Unified Attention Fusion Module (UAFM), which takes advantage of spatial and channel attention to produce a weight and then fuses the input features with the weight. Moreover, a Simple Pyramid Pooling Module (SPPM) is proposed to aggregate global context with low computation cost. Extensive evaluations demonstrate that PP-LiteSeg achieves a superior trade-off between accuracy and speed compared to other methods. On the Cityscapes test set, PP-LiteSeg achieves 72.0% mIoU/273.6 FPS and 77.5% mIoU/102.6 FPS on NVIDIA GTX 1080Ti. Source code and models are available at PaddleSeg: https://github.com/PaddlePaddle/PaddleSeg.

PP-YOLOE: An evolved version of YOLO

Apr 02, 2022

Abstract:In this report, we present PP-YOLOE, an industrial state-of-the-art object detector with high performance and friendly deployment. We optimize on the basis of the previous PP-YOLOv2, using anchor-free paradigm, more powerful backbone and neck equipped with CSPRepResStage, ET-head and dynamic label assignment algorithm TAL. We provide s/m/l/x models for different practice scenarios. As a result, PP-YOLOE-l achieves 51.4 mAP on COCO test-dev and 78.1 FPS on Tesla V100, yielding a remarkable improvement of (+1.9 AP, +13.35% speed up) and (+1.3 AP, +24.96% speed up), compared to the previous state-of-the-art industrial models PP-YOLOv2 and YOLOX respectively. Further, PP-YOLOE inference speed achieves 149.2 FPS with TensorRT and FP16-precision. We also conduct extensive experiments to verify the effectiveness of our designs. Source code and pre-trained models are available at https://github.com/PaddlePaddle/PaddleDetection.

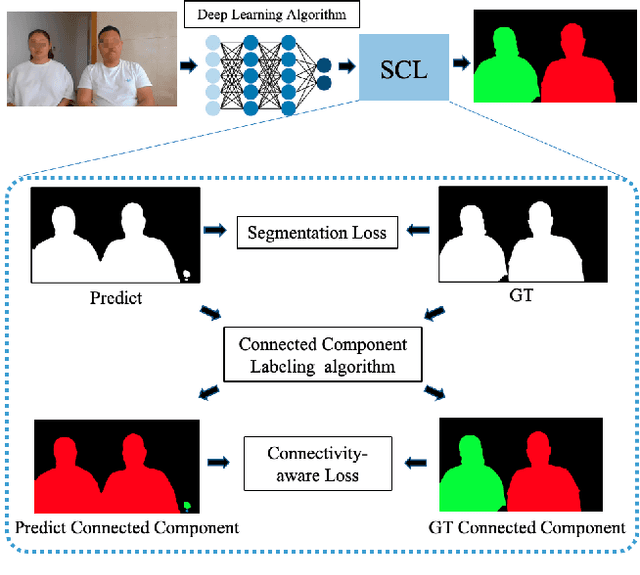

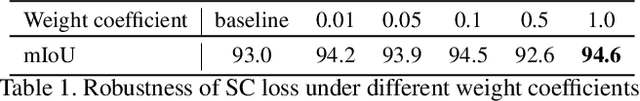

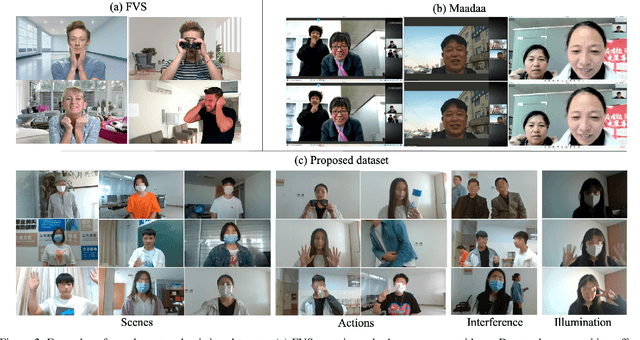

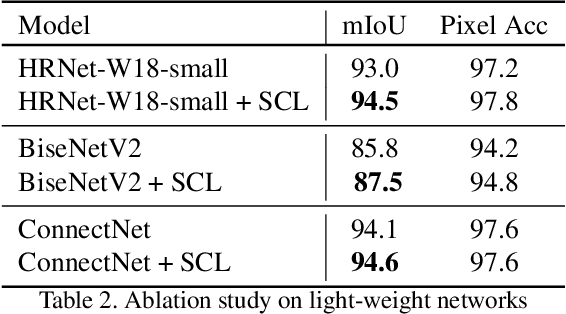

PP-HumanSeg: Connectivity-Aware Portrait Segmentation with a Large-Scale Teleconferencing Video Dataset

Dec 14, 2021

Abstract:As the COVID-19 pandemic rampages across the world, the demands of video conferencing surge. To this end, real-time portrait segmentation becomes a popular feature to replace backgrounds of conferencing participants. While feature-rich datasets, models and algorithms have been offered for segmentation that extract body postures from life scenes, portrait segmentation has yet not been well covered in a video conferencing context. To facilitate the progress in this field, we introduce an open-source solution named PP-HumanSeg. This work is the first to construct a large-scale video portrait dataset that contains 291 videos from 23 conference scenes with 14K fine-labeled frames and extensions to multi-camera teleconferencing. Furthermore, we propose a novel Semantic Connectivity-aware Learning (SCL) for semantic segmentation, which introduces a semantic connectivity-aware loss to improve the quality of segmentation results from the perspective of connectivity. And we propose an ultra-lightweight model with SCL for practical portrait segmentation, which achieves the best trade-off between IoU and the speed of inference. Extensive evaluations on our dataset demonstrate the superiority of SCL and our model. The source code is available at https://github.com/PaddlePaddle/PaddleSeg.

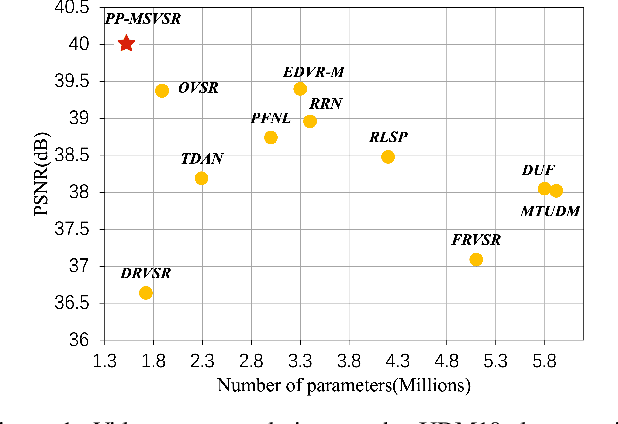

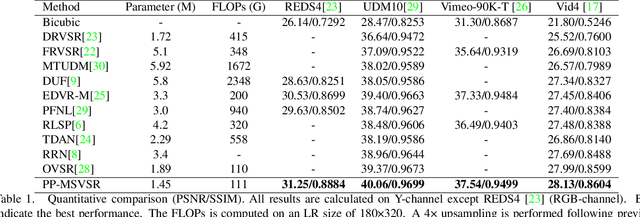

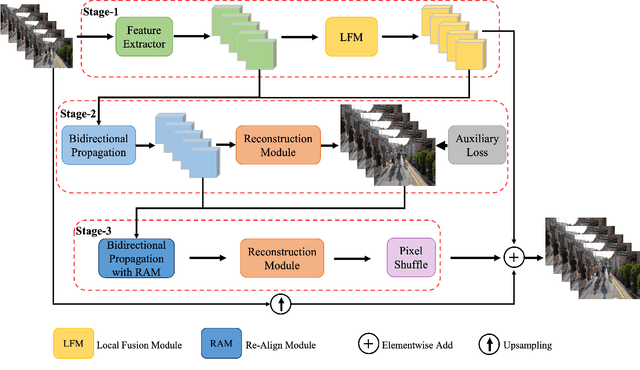

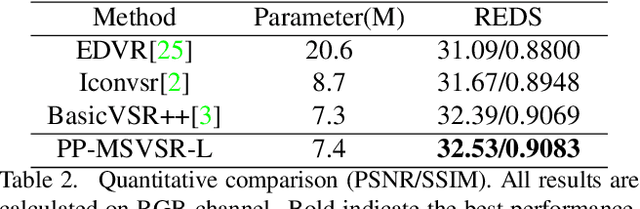

PP-MSVSR: Multi-Stage Video Super-Resolution

Dec 06, 2021

Abstract:Different from the Single Image Super-Resolution(SISR) task, the key for Video Super-Resolution(VSR) task is to make full use of complementary information across frames to reconstruct the high-resolution sequence. Since images from different frames with diverse motion and scene, accurately aligning multiple frames and effectively fusing different frames has always been the key research work of VSR tasks. To utilize rich complementary information of neighboring frames, in this paper, we propose a multi-stage VSR deep architecture, dubbed as PP-MSVSR, with local fusion module, auxiliary loss and re-align module to refine the enhanced result progressively. Specifically, in order to strengthen the fusion of features across frames in feature propagation, a local fusion module is designed in stage-1 to perform local feature fusion before feature propagation. Moreover, we introduce an auxiliary loss in stage-2 to make the features obtained by the propagation module reserve more correlated information connected to the HR space, and introduce a re-align module in stage-3 to make full use of the feature information of the previous stage. Extensive experiments substantiate that PP-MSVSR achieves a promising performance of Vid4 datasets, which achieves a PSNR of 28.13dB with only 1.45M parameters. And the PP-MSVSR-L exceeds all state of the art method on REDS4 datasets with considerable parameters. Code and models will be released in PaddleGAN\footnote{https://github.com/PaddlePaddle/PaddleGAN.}.

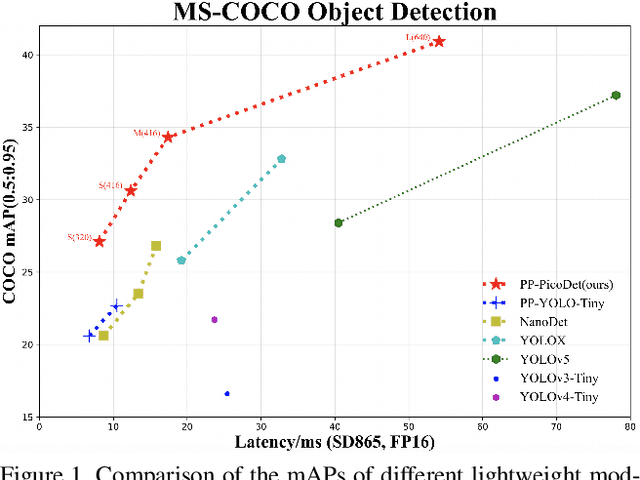

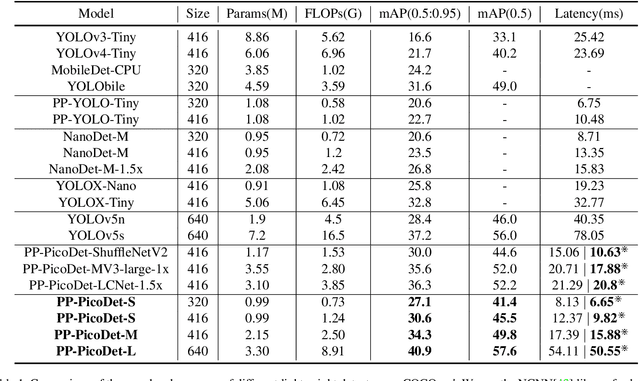

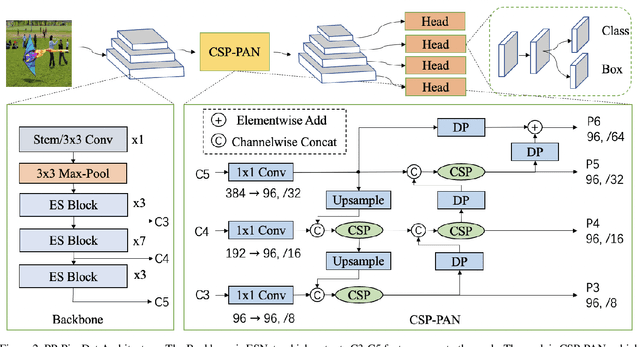

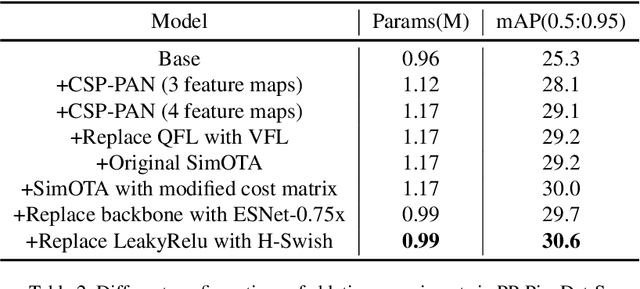

PP-PicoDet: A Better Real-Time Object Detector on Mobile Devices

Nov 01, 2021

Abstract:The better accuracy and efficiency trade-off has been a challenging problem in object detection. In this work, we are dedicated to studying key optimizations and neural network architecture choices for object detection to improve accuracy and efficiency. We investigate the applicability of the anchor-free strategy on lightweight object detection models. We enhance the backbone structure and design the lightweight structure of the neck, which improves the feature extraction ability of the network. We improve label assignment strategy and loss function to make training more stable and efficient. Through these optimizations, we create a new family of real-time object detectors, named PP-PicoDet, which achieves superior performance on object detection for mobile devices. Our models achieve better trade-offs between accuracy and latency compared to other popular models. PicoDet-S with only 0.99M parameters achieves 30.6% mAP, which is an absolute 4.8% improvement in mAP while reducing mobile CPU inference latency by 55% compared to YOLOX-Nano, and is an absolute 7.1% improvement in mAP compared to NanoDet. It reaches 123 FPS (150 FPS using Paddle Lite) on mobile ARM CPU when the input size is 320. PicoDet-L with only 3.3M parameters achieves 40.9% mAP, which is an absolute 3.7% improvement in mAP and 44% faster than YOLOv5s. As shown in Figure 1, our models far outperform the state-of-the-art results for lightweight object detection. Code and pre-trained models are available at https://github.com/PaddlePaddle/PaddleDetection.

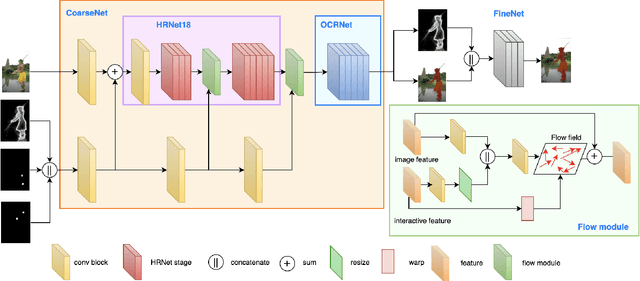

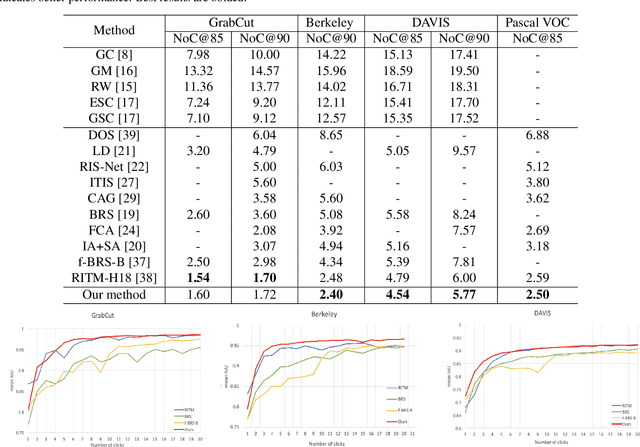

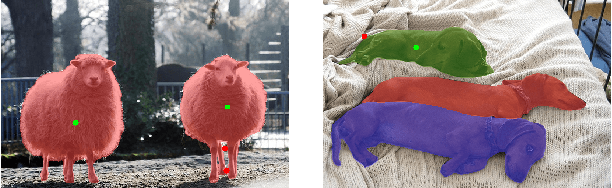

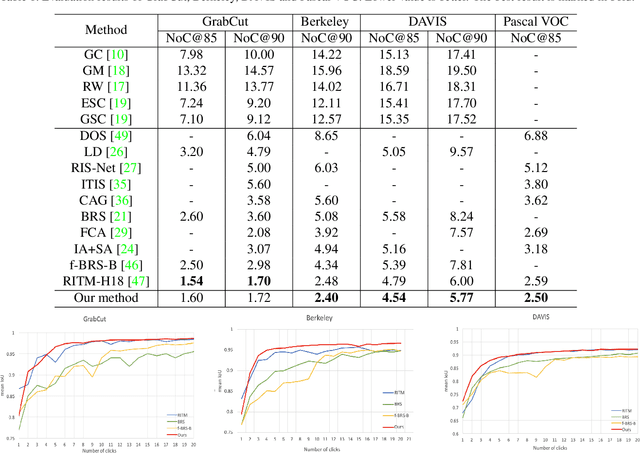

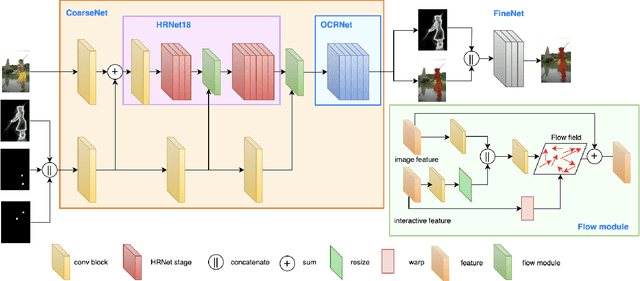

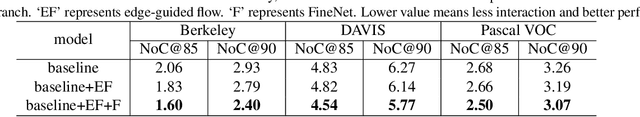

EdgeFlow: Achieving Practical Interactive Segmentation with Edge-Guided Flow

Sep 20, 2021

Abstract:High-quality training data play a key role in image segmentation tasks. Usually, pixel-level annotations are expensive, laborious and time-consuming for the large volume of training data. To reduce labelling cost and improve segmentation quality, interactive segmentation methods have been proposed, which provide the result with just a few clicks. However, their performance does not meet the requirements of practical segmentation tasks in terms of speed and accuracy. In this work, we propose EdgeFlow, a novel architecture that fully utilizes interactive information of user clicks with edge-guided flow. Our method achieves state-of-the-art performance without any post-processing or iterative optimization scheme. Comprehensive experiments on benchmarks also demonstrate the superiority of our method. In addition, with the proposed method, we develop an efficient interactive segmentation tool for practical data annotation tasks. The source code and tool is avaliable at https://github.com/PaddlePaddle/PaddleSeg.

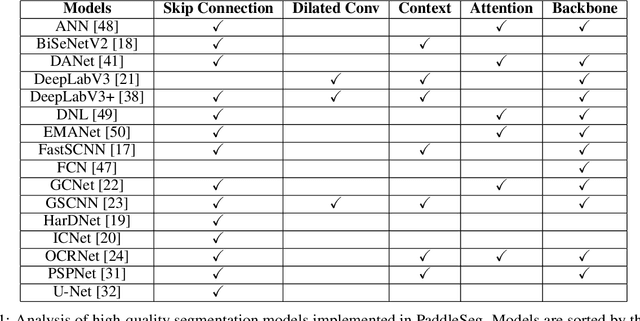

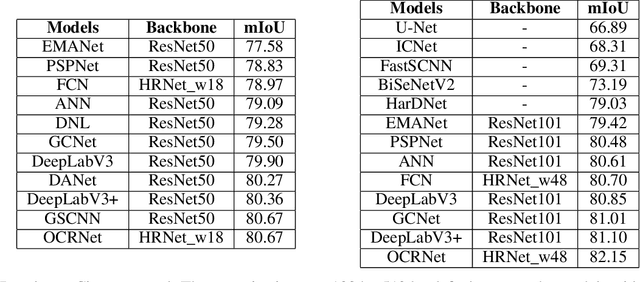

PaddleSeg: A High-Efficient Development Toolkit for Image Segmentation

Jan 15, 2021

Abstract:Image Segmentation plays an essential role in computer vision and image processing with various applications from medical diagnosis to autonomous car driving. A lot of segmentation algorithms have been proposed for addressing specific problems. In recent years, the success of deep learning techniques has tremendously influenced a wide range of computer vision areas, and the modern approaches of image segmentation based on deep learning are becoming prevalent. In this article, we introduce a high-efficient development toolkit for image segmentation, named PaddleSeg. The toolkit aims to help both developers and researchers in the whole process of designing segmentation models, training models, optimizing performance and inference speed, and deploying models. Currently, PaddleSeg supports around 20 popular segmentation models and more than 50 pre-trained models from real-time and high-accuracy levels. With modular components and backbone networks, users can easily build over one hundred models for different requirements. Furthermore, we provide comprehensive benchmarks and evaluations to show that these segmentation algorithms trained on our toolkit have more competitive accuracy. Also, we provide various real industrial applications and practical cases based on PaddleSeg. All codes and examples of PaddleSeg are available at https://github.com/PaddlePaddle/PaddleSeg.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge