Xinwei He

Tom

DINO Eats CLIP: Adapting Beyond Knowns for Open-set 3D Object Retrieval

Apr 21, 2026

Abstract:Vision foundation models have shown great promise for open-set 3D object retrieval (3DOR) through efficient adaptation to multi-view images. Leveraging its semantically aligned latent space, previous work typically adapts the CLIP encoder to build view-based 3D descriptors. Despite CLIP's strong generalization ability, its lack of fine-grainedness prompted us to explore the potential of a more recent self-supervised encoder, DINO. To this end, we propose DINO Eats CLIP (DEC), a novel framework for dynamic multi-view integration that is regularized by synthesizing data for unseen classes. We first find that simply mean-pooling over view features from a frozen DINO backbone gives decent performance. Yet, further adaptation causes severe overfitting on average view patterns of known classes. To combat this, we then design a module named Chunking and Adapting Module (CAM). It segments multi-view images into chunks and dynamically integrates local view relations, yielding more robust features than the standard pooling strategy. Finally, we propose a Virtual Feature Synthesis (VFS) module to explicitly mitigate bias towards known categories. Under the hood, VFS leverages CLIP's broad, pre-aligned vision-language space to synthesize virtual features for unseen classes. By exposing DEC to these virtual features, we greatly enhance its open-set discrimination capacity. Extensive experiments on standard open-set 3DOR benchmarks demonstrate the superior efficacy of DEC.
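The mean-pooling baseline mentioned in this abstract can be illustrated with a minimal sketch. It assumes per-view feature vectors have already been extracted by some frozen backbone; the helper names (`mean_pool`, `retrieve`), the toy 2-D features, and the use of cosine similarity for ranking are illustrative assumptions, not details taken from the paper.

```python
import math

def mean_pool(view_features):
    """Average a list of equal-length per-view feature vectors into one descriptor."""
    n = len(view_features)
    dim = len(view_features[0])
    return [sum(v[d] for v in view_features) / n for d in range(dim)]

def l2_normalize(vec):
    """Scale a vector to unit length (returned unchanged if it is all zeros)."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm > 0 else list(vec)

def cosine(a, b):
    """Cosine similarity between two vectors."""
    a, b = l2_normalize(a), l2_normalize(b)
    return sum(x * y for x, y in zip(a, b))

def retrieve(query_views, gallery):
    """Rank gallery object ids by similarity to the mean-pooled query descriptor."""
    q = mean_pool(query_views)
    scored = [(cosine(q, mean_pool(views)), obj_id) for obj_id, views in gallery.items()]
    return [obj_id for _, obj_id in sorted(scored, reverse=True)]

# Toy data: two views per object, 2-D features (hypothetical values).
query = [[1.0, 0.0], [0.8, 0.2]]
gallery = {
    "chair": [[0.9, 0.1], [1.0, 0.0]],
    "lamp": [[0.0, 1.0], [0.1, 0.9]],
}
ranking = retrieve(query, gallery)
print(ranking)
```

Because every view contributes equally to the average, this descriptor cannot weight informative views differently, which is the limitation the abstract's chunk-based integration is meant to address.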

PyG 2.0: Scalable Learning on Real World Graphs

Jul 22, 2025

Abstract:PyG (PyTorch Geometric) has evolved significantly since its initial release, establishing itself as a leading framework for Graph Neural Networks. In this paper, we present PyG 2.0 (and its subsequent minor versions), a comprehensive update that introduces substantial improvements in scalability and real-world application capabilities. We detail the framework's enhanced architecture, including support for heterogeneous and temporal graphs, scalable feature/graph stores, and various optimizations, enabling researchers and practitioners to tackle large-scale graph learning problems efficiently. Over recent years, PyG has supported graph learning in a large variety of application areas, which we summarize, while providing a deep dive into the important areas of relational deep learning and large language modeling.

WeakMCN: Multi-task Collaborative Network for Weakly Supervised Referring Expression Comprehension and Segmentation

May 24, 2025

Abstract:Weakly supervised referring expression comprehension (WREC) and segmentation (WRES) aim to learn object grounding based on a given expression using weak supervision signals like image-text pairs. While these tasks have traditionally been modeled separately, we argue that they can benefit from joint learning in a multi-task framework. To this end, we propose WeakMCN, a novel multi-task collaborative network that effectively combines WREC and WRES with a dual-branch architecture. Specifically, the WREC branch is formulated as anchor-based contrastive learning, which also acts as a teacher to supervise the WRES branch. In WeakMCN, we propose two innovative designs to facilitate multi-task collaboration, namely Dynamic Visual Feature Enhancement (DVFE) and Collaborative Consistency Module (CCM). DVFE dynamically combines various pre-trained visual knowledge to meet different task requirements, while CCM promotes cross-task consistency from the perspective of optimization. Extensive experimental results on three popular REC and RES benchmarks, i.e., RefCOCO, RefCOCO+, and RefCOCOg, consistently demonstrate performance gains of WeakMCN over state-of-the-art single-task alternatives, e.g., up to 3.91% and 13.11% on RefCOCO for WREC and WRES tasks, respectively. Furthermore, experiments also validate the strong generalization ability of WeakMCN in both semi-supervised REC and RES settings against existing methods, e.g., +8.94% for semi-REC and +7.71% for semi-RES on 1% RefCOCO. The code is publicly available at https://github.com/MRUIL/WeakMCN.

Tetrahedron-Net for Medical Image Registration

May 07, 2025

Abstract:Medical image registration plays a vital role in medical image processing. Extracting expressive representations for medical images is crucial for improving the registration quality. One common practice to this end is constructing a convolutional backbone to enable interactions with skip connections among feature extraction layers. The de facto structure, U-Net-like networks, has attempted to design skip connections such as nested or full-scale ones to connect one single encoder and one single decoder to improve its representation capacity. Despite being effective, it still does not fully explore the interactions possible within a single-encoder, single-decoder architecture. In this paper, we embrace this observation and introduce a simple yet effective alternative strategy to enhance the representations for registration by appending one additional decoder. The new decoder is designed to interact with both the original encoder and decoder. In this way, it not only reuses feature representations from corresponding layers in the encoder but also interacts with the original decoder to cooperatively give more accurate registration results. The new architecture is concise yet generalized, with only one encoder and two decoders forming a "Tetrahedron" structure, thereby dubbed Tetrahedron-Net. Three instantiations of Tetrahedron-Net are further constructed according to the different structures of the appended decoder. Our extensive experiments show that superior performance can be obtained on several representative benchmarks of medical image registration. Finally, such a "Tetrahedron" design can also be easily integrated into popular U-Net-like architectures including VoxelMorph, ViT-V-Net, and TransMorph, leading to consistent performance gains.

TeDA: Boosting Vision-Language Models for Zero-Shot 3D Object Retrieval via Testing-time Distribution Alignment

May 05, 2025

Abstract:Learning discriminative 3D representations that generalize well to unknown testing categories is an emerging requirement for many real-world 3D applications. Existing well-established methods often struggle to attain this goal due to insufficient 3D training data from broader concepts. Meanwhile, pre-trained large vision-language models (e.g., CLIP) have shown remarkable zero-shot generalization capabilities. Yet, they are limited in extracting suitable 3D representations due to substantial gaps between their 2D training and 3D testing distributions. To address these challenges, we propose Testing-time Distribution Alignment (TeDA), a novel framework that adapts a pretrained 2D vision-language model, CLIP, for unknown 3D object retrieval at test time. To our knowledge, it is the first work that studies the test-time adaptation of a vision-language model for 3D feature learning. TeDA projects 3D objects into multi-view images, extracts features using CLIP, and refines 3D query embeddings with an iterative optimization strategy using confident query-target sample pairs in a self-boosting manner. Additionally, TeDA integrates textual descriptions generated by a multimodal language model (InternVL) to enhance 3D object understanding, leveraging CLIP's aligned feature space to fuse visual and textual cues. Extensive experiments on four open-set 3D object retrieval benchmarks demonstrate that TeDA greatly outperforms state-of-the-art methods, even those requiring extensive training. We also experimented with depth maps on Objaverse-LVIS, further validating its effectiveness. Code is available at https://github.com/wangzhichuan123/TeDA.

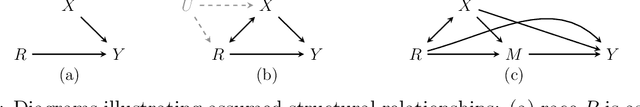

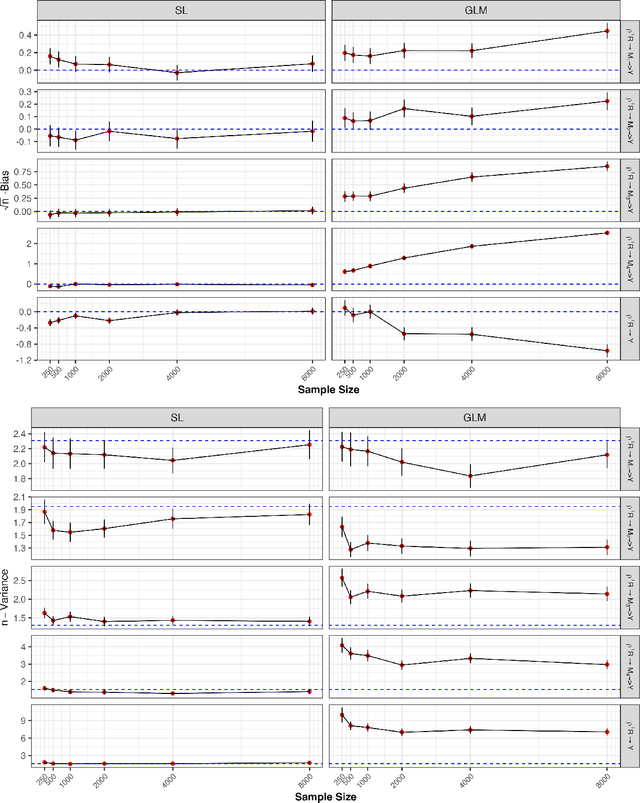

Assessing Racial Disparities in Healthcare Expenditures Using Causal Path-Specific Effects

Apr 30, 2025

Abstract:Racial disparities in healthcare expenditures are well-documented, yet the underlying drivers remain complex and require further investigation. This study employs causal and counterfactual path-specific effects to quantify how various factors, including socioeconomic status, insurance access, health behaviors, and health status, mediate these disparities. Using data from the Medical Expenditures Panel Survey, we estimate how expenditures would differ under counterfactual scenarios in which the values of specific mediators were aligned across racial groups along selected causal pathways. A key challenge in this analysis is ensuring robustness against model misspecification while addressing the zero-inflation and right-skewness of healthcare expenditures. For reliable inference, we derive asymptotically linear estimators by integrating influence function-based techniques with flexible machine learning methods, including super learners and a two-part model tailored to the zero-inflated, right-skewed nature of healthcare expenditures.

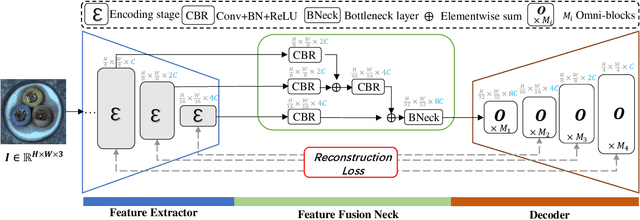

Omni-AD: Learning to Reconstruct Global and Local Features for Multi-class Anomaly Detection

Mar 27, 2025

Abstract:In multi-class unsupervised anomaly detection (MUAD), reconstruction-based methods learn to map input images to normal patterns to identify anomalous pixels. However, this strategy easily falls into the well-known "learning shortcut" issue when decoders fail to capture normal patterns and naively reconstruct both normal and abnormal samples. To address this, we propose to learn the input features in global and local manners, forcing the network to memorize the normal patterns more comprehensively. Specifically, we design a two-branch decoder block, named Omni-block. One branch corresponds to global feature learning, where we serialize two self-attention blocks but replace the query and (key, value) with learnable tokens, respectively, thus capturing global features of normal patterns concisely and thoroughly. The local branch comprises depth-separable convolutions, whose locality enables effective and efficient learning of local features for normal patterns. By stacking Omni-blocks, we build a framework, Omni-AD, to learn normal patterns of different granularity and reconstruct them progressively. Comprehensive experiments on public anomaly detection benchmarks show that our method outperforms state-of-the-art approaches in MUAD. Code is available at https://github.com/easyoo/Omni-AD.git.

ContextGNN: Beyond Two-Tower Recommendation Systems

Nov 29, 2024

Abstract:Recommendation systems predominantly utilize two-tower architectures, which evaluate user-item rankings through the inner product of their respective embeddings. However, one key limitation of two-tower models is that they learn a pair-agnostic representation of users and items. In contrast, pair-wise representations either scale poorly due to their quadratic complexity or are too restrictive on the candidate pairs to rank. To address these issues, we introduce Context-based Graph Neural Networks (ContextGNNs), a novel deep learning architecture for link prediction in recommendation systems. The method employs a pair-wise representation technique for familiar items situated within a user's local subgraph, while leveraging two-tower representations to facilitate the recommendation of exploratory items. A final network then predicts how to fuse both pair-wise and two-tower recommendations into a single ranking of items. We demonstrate that ContextGNN is able to adapt to different data characteristics and outperforms existing methods, both traditional and GNN-based, on a diverse set of practical recommendation tasks, improving performance by 20% on average.
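The two-tower scoring that this abstract contrasts against can be sketched as follows. This is only an illustration of inner-product ranking with made-up embeddings; the names `inner_product` and `rank_items` and all values are hypothetical, and the sketch does not attempt to reproduce ContextGNN's pair-wise branch or its learned fusion network.

```python
def inner_product(u, v):
    """Dot product of two equal-length embedding vectors."""
    return sum(a * b for a, b in zip(u, v))

def rank_items(user_embedding, item_embeddings):
    """Rank item ids by the inner product of user and item embeddings.

    Each item embedding is fixed regardless of which user is being scored,
    which is what makes the representation pair-agnostic.
    """
    scores = {
        item: inner_product(user_embedding, emb)
        for item, emb in item_embeddings.items()
    }
    return sorted(scores, key=scores.get, reverse=True)

# Toy embeddings (hypothetical values).
user = [0.5, 1.0]
items = {"book": [1.0, 0.0], "film": [0.0, 1.0], "game": [1.0, 1.0]}
top = rank_items(user, items)
print(top)
```

Since the item vectors are computed once and reused for every user, scoring scales well, but no user-specific context can influence how an item is represented.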

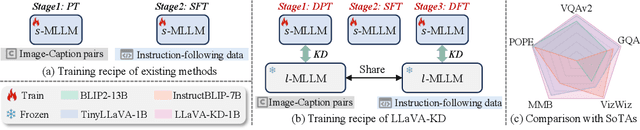

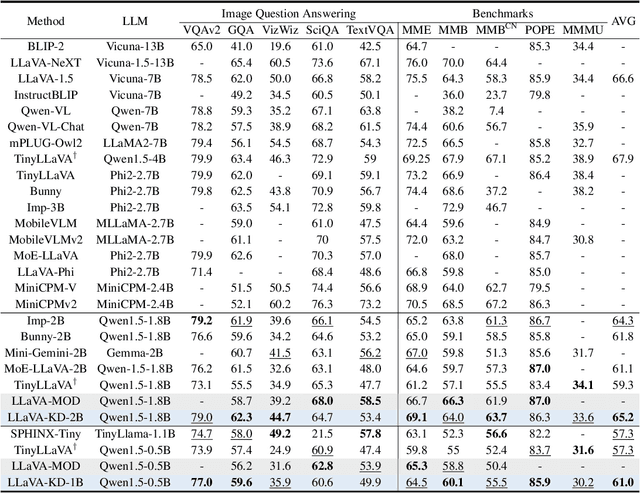

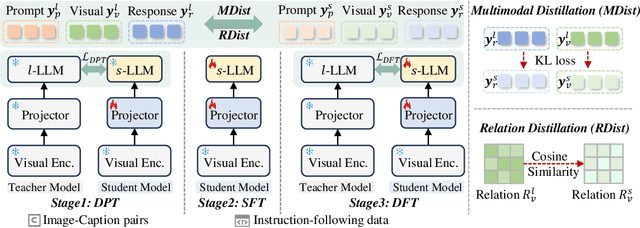

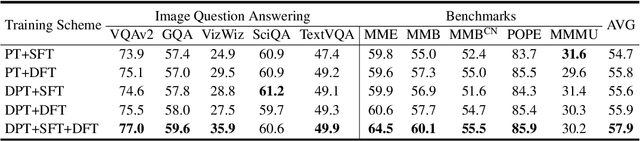

LLaVA-KD: A Framework of Distilling Multimodal Large Language Models

Oct 21, 2024

Abstract:The success of Large Language Models (LLM) has led researchers to explore Multimodal Large Language Models (MLLM) for unified visual and linguistic understanding. However, the increasing model size and computational complexity of MLLM limit their use in resource-constrained environments. Small-scale MLLM (s-MLLM) aims to retain the capabilities of the large-scale model (l-MLLM) while reducing computational demands, but this results in a significant decline in performance. To address these issues, we propose a novel LLaVA-KD framework to transfer knowledge from l-MLLM to s-MLLM. Specifically, we introduce Multimodal Distillation (MDist) to minimize the divergence between the visual-textual output distributions of l-MLLM and s-MLLM, and Relation Distillation (RDist) to transfer l-MLLM's ability to model correlations between visual features. Additionally, we propose a three-stage training scheme to fully exploit the potential of s-MLLM: 1) Distilled Pre-Training to align visual-textual representations, 2) Supervised Fine-Tuning to equip the model with multimodal understanding, and 3) Distilled Fine-Tuning to further transfer l-MLLM capabilities. Our approach significantly improves performance without altering the small model's architecture. Extensive experiments and ablation studies validate the effectiveness of each proposed component. Code will be available at https://github.com/caiyuxuan1120/LLaVA-KD.
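The idea of minimizing a divergence between teacher and student output distributions can be sketched as below. The abstract does not say which divergence MDist uses; this sketch assumes the Kullback-Leibler divergence over softmax outputs, as in standard knowledge distillation, and the function names and logits are hypothetical.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax with an optional temperature."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions of equal length."""
    return sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))

def distillation_loss(teacher_logits, student_logits, temperature=1.0):
    """Assumed distillation objective: KL from teacher to student distribution."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return kl_divergence(p, q)

# Toy logits (hypothetical values).
teacher = [2.0, 0.5, -1.0]
loss_same = distillation_loss(teacher, teacher)          # identical outputs
loss_diff = distillation_loss(teacher, [0.0, 0.0, 0.0])  # uniform student
print(loss_same, loss_diff)
```

The loss is zero when the student matches the teacher's distribution and grows as the two diverge, which is the property a distillation objective of this kind relies on.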

CLIP-SCGI: Synthesized Caption-Guided Inversion for Person Re-Identification

Oct 12, 2024

Abstract:Person re-identification (ReID) has recently benefited from large pretrained vision-language models such as Contrastive Language-Image Pre-Training (CLIP). However, the absence of concrete descriptions necessitates the use of implicit text embeddings, which demand complicated and inefficient training strategies. To address this issue, we first propose one straightforward solution by leveraging existing image captioning models to generate pseudo captions for person images, and thereby boost person re-identification with large vision-language models. Using models like the Large Language and Vision Assistant (LLaVA), we generate high-quality captions based on fixed templates that capture key semantic attributes such as gender, clothing, and age. By augmenting ReID training sets from uni-modality (image) to bi-modality (image and text), we introduce CLIP-SCGI, a simple yet effective framework that leverages synthesized captions to guide the learning of discriminative and robust representations. Built on CLIP, CLIP-SCGI fuses image and text embeddings through two modules to enhance the training process. To address quality issues in generated captions, we introduce a caption-guided inversion module that captures semantic attributes from images by converting relevant visual information into pseudo-word tokens based on the descriptions. This approach helps the model better capture key information and focus on relevant regions. The extracted features are then utilized in a cross-modal fusion module, guiding the model to focus on regions semantically consistent with the caption, thereby facilitating the optimization of the visual encoder to extract discriminative and robust representations. Extensive experiments on four popular ReID benchmarks demonstrate that CLIP-SCGI outperforms the state-of-the-art by a significant margin.
