Xiaojuan Li

Towards Quantum Tensor Decomposition in Biomedical Applications

Feb 19, 2025

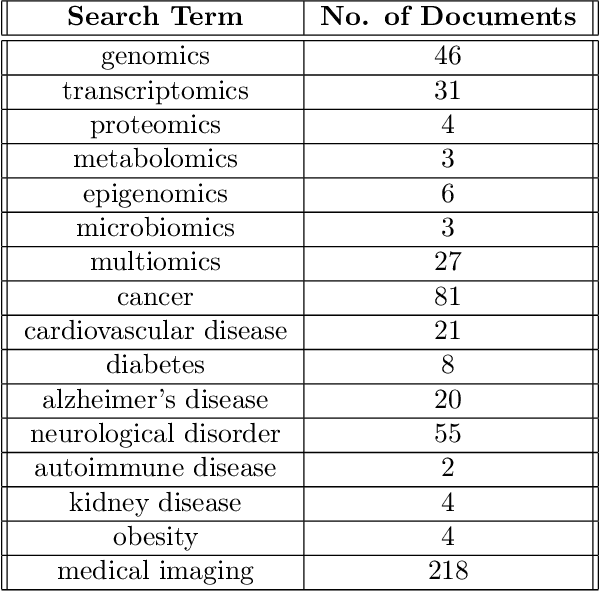

Abstract: Tensor decomposition has emerged as a powerful framework for feature extraction in multi-modal biomedical data. In this review, we present a comprehensive analysis of tensor decomposition methods, including Tucker, CANDECOMP/PARAFAC, and spiked tensor decomposition, and their diverse applications across biomedical domains such as imaging, multi-omics, and spatial transcriptomics. To systematically investigate the literature, we applied a topic modeling-based approach that identifies and groups distinct thematic sub-areas in biomedicine where tensor decomposition has been used, thereby revealing key trends and research directions. We evaluated challenges related to the scalability of latent spaces and to obtaining the optimal rank of the tensor, which often hinder the extraction of meaningful features from increasingly large and complex datasets. Additionally, we discuss recent advances in quantum algorithms for tensor decomposition, exploring how quantum computing can be leveraged to address these challenges. Our study includes a preliminary resource estimation analysis for quantum computing platforms and examines the feasibility of implementing quantum-enhanced tensor decomposition methods on near-term quantum devices. Collectively, this review not only synthesizes current applications and challenges of tensor decomposition in biomedical analyses but also outlines promising quantum computing strategies to enhance its impact on deriving actionable insights from complex biomedical data.
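As a point of reference for the classical methods named in this abstract, the following is a minimal sketch of CANDECOMP/PARAFAC fitted by alternating least squares (ALS) for a 3-way tensor. It is a textbook formulation, not the review's own code and not a quantum algorithm; the function names `khatri_rao`, `unfold`, `cp_als`, and `reconstruct` are hypothetical, and the rank is assumed to be known in advance.

```python
import numpy as np

def khatri_rao(a, b):
    """Column-wise Kronecker product of a (I x R) and b (J x R), giving (I*J x R)."""
    rank = a.shape[1]
    return (a[:, None, :] * b[None, :, :]).reshape(-1, rank)

def unfold(tensor, mode):
    """Mode-n unfolding: bring `mode` to the front, flatten the rest in C order."""
    return np.moveaxis(tensor, mode, 0).reshape(tensor.shape[mode], -1)

def cp_als(tensor, rank, n_iter=100, seed=0):
    """Fit a rank-`rank` CP model to a 3-way tensor by alternating least squares."""
    rng = np.random.default_rng(seed)
    factors = [rng.standard_normal((dim, rank)) for dim in tensor.shape]
    for _ in range(n_iter):
        for mode in range(3):
            first, second = [factors[m] for m in range(3) if m != mode]
            kr = khatri_rao(first, second)
            # Gram matrix of the Khatri-Rao product is the Hadamard product of Grams.
            gram = (first.T @ first) * (second.T @ second)
            factors[mode] = unfold(tensor, mode) @ kr @ np.linalg.pinv(gram)
    return factors

def reconstruct(factors):
    """Rebuild the tensor as a sum of rank-one outer products."""
    a, b, c = factors
    return np.einsum("ir,jr,kr->ijk", a, b, c)
```

In this sketch the rank is a fixed input, which is exactly the quantity the abstract describes as hard to choose; in practice one would typically compare reconstruction error across several candidate ranks.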

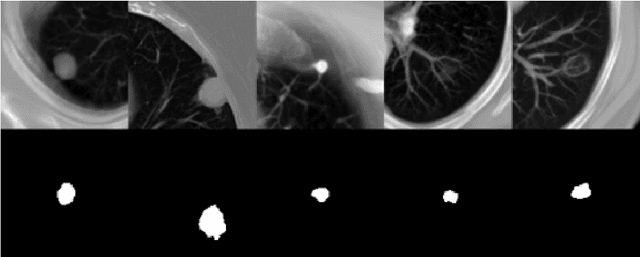

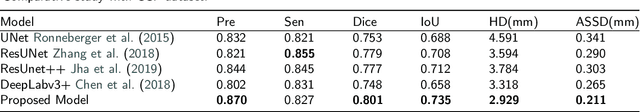

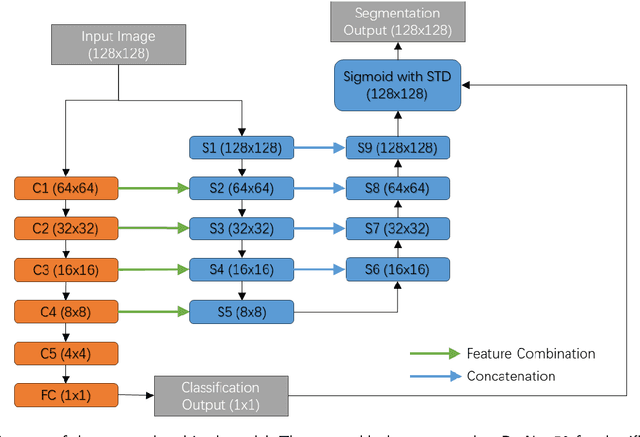

Detection-Guided Deep Learning-Based Model with Spatial Regularization for Lung Nodule Segmentation

Oct 26, 2024

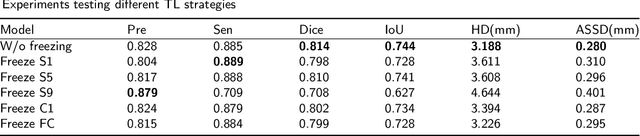

Abstract: Lung cancer is among the most frequently diagnosed cancers and is the foremost cause of cancer-related mortality worldwide. The early detection of lung nodules plays a pivotal role in improving outcomes for patients, as it enables timely and effective treatment interventions. The segmentation of lung nodules plays a critical role in aiding physicians in distinguishing between malignant and benign lesions. However, this task remains challenging due to the substantial variation in the shapes and sizes of lung nodules, and their frequent proximity to lung tissues, which complicates clear delineation. In this study, we introduce a novel model for segmenting lung nodules in computed tomography (CT) images, leveraging a deep learning framework that integrates segmentation and classification processes. This model is distinguished by its use of feature combination blocks, which facilitate the sharing of information between the segmentation and classification components. Additionally, we employ the classification outcomes as priors to refine the size estimation of the predicted nodules, integrating these with a spatial regularization technique to enhance precision. Furthermore, recognizing the challenges posed by limited training datasets, we have developed an optimal transfer learning strategy that freezes certain layers to further improve performance. The results show that our proposed model captures the target nodules more accurately than other commonly used models. By applying transfer learning, the performance can be further improved, achieving a sensitivity score of 0.885 and a Dice score of 0.814.
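For readers unfamiliar with the two metrics reported here, the sketch below computes a Dice score and a voxel-wise sensitivity for binary masks using their standard definitions. The function names are hypothetical, and returning 1.0 when both masks are empty is an assumed convention; the paper may compute sensitivity per nodule rather than per voxel.

```python
import numpy as np

def dice_score(pred, target):
    """Dice = 2|P ∩ T| / (|P| + |T|) for binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    denom = pred.sum() + target.sum()
    if denom == 0:
        return 1.0  # assumed convention: two empty masks agree perfectly
    return 2.0 * np.logical_and(pred, target).sum() / denom

def sensitivity(pred, target):
    """Sensitivity = TP / (TP + FN), the fraction of target voxels recovered."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    positives = target.sum()
    if positives == 0:
        return 1.0  # assumed convention: nothing to miss
    return np.logical_and(pred, target).sum() / positives
```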

Novel adaptation of video segmentation to 3D MRI: efficient zero-shot knee segmentation with SAM2

Aug 08, 2024

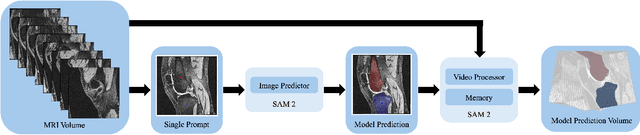

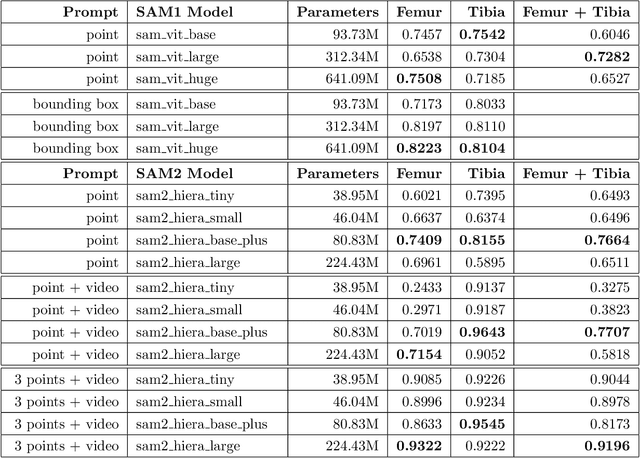

Abstract: Intelligent medical image segmentation methods are rapidly evolving and being increasingly applied, yet they face the challenge of domain transfer, where algorithm performance degrades due to different data distributions between source and target domains. To address this, we introduce a method for zero-shot, single-prompt segmentation of 3D knee MRI by adapting Segment Anything Model 2 (SAM2), a general-purpose segmentation model designed to accept prompts and retain memory across frames of a video. By treating slices from 3D medical volumes as individual video frames, we leverage SAM2's advanced capabilities to generate motion- and spatially-aware predictions. We demonstrate that SAM2 can efficiently perform segmentation tasks in a zero-shot manner with no additional training or fine-tuning, accurately delineating structures in knee MRI scans using only a single prompt. Our experiments on the Osteoarthritis Initiative Zuse Institute Berlin (OAI-ZIB) dataset reveal that SAM2 achieves high accuracy on 3D knee bone segmentation, with a testing Dice similarity coefficient of 0.9643 on tibia. We also present results generated using different SAM2 model sizes, different prompt schemes, as well as comparative results from the SAM1 model deployed on the same dataset. This breakthrough has the potential to revolutionize medical image analysis by providing a scalable, cost-effective solution for automated segmentation, paving the way for broader clinical applications and streamlined workflows.
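The idea of treating slices as video frames can be illustrated with a small preprocessing sketch that turns a 3D volume into a stack of 8-bit RGB frames. This is only an assumed preprocessing step: the function name `volume_to_frames`, the percentile clipping, and the choice of slicing axis are hypothetical and are not taken from the paper, and the SAM2 inference call itself is deliberately left out.

```python
import numpy as np

def volume_to_frames(volume, axis=0, low_pct=1.0, high_pct=99.0):
    """Convert a 3D volume into video-like frames of shape (n_frames, H, W, 3), uint8."""
    vol = np.asarray(volume, dtype=np.float64)
    # Clip to a percentile window so a few extreme voxels do not compress contrast.
    lo, hi = np.percentile(vol, [low_pct, high_pct])
    if hi <= lo:
        hi = lo + 1.0  # guard against constant volumes
    scaled = np.clip((vol - lo) / (hi - lo), 0.0, 1.0)
    gray = np.moveaxis((scaled * 255).astype(np.uint8), axis, 0)
    # Replicate the grayscale slice into three channels, as video models expect RGB.
    return np.repeat(gray[..., None], 3, axis=-1)
```

The resulting frame stack could then be handed to a video segmentation model together with a single prompt on one slice, which is the workflow the abstract describes.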

A Geometric Flow Approach for Segmentation of Images with Inhomongeneous Intensity and Missing Boundaries

Sep 19, 2023

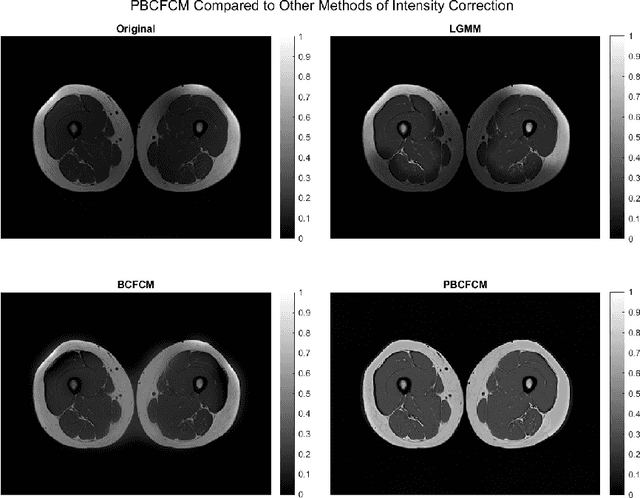

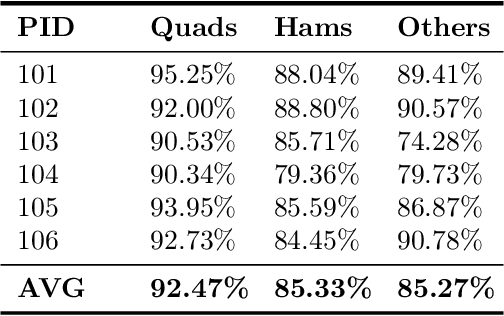

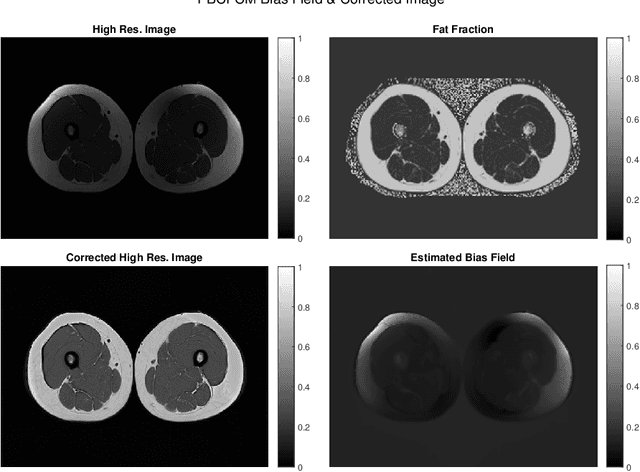

Abstract: Image segmentation is a complex mathematical problem, especially for images that contain intensity inhomogeneity and tightly packed objects with missing boundaries in between. For instance, Magnetic Resonance (MR) muscle images often contain both of these issues, making muscle segmentation especially difficult. In this paper we propose a novel intensity correction and a semi-automatic, active-contour-based segmentation approach. The approach uses a geometric flow that incorporates a reproducing kernel Hilbert space (RKHS) edge detector and a geodesic distance penalty term from a set of markers and anti-markers. We test the proposed scheme on MR muscle segmentation and compare it with several state-of-the-art methods. To help deal with the intensity inhomogeneity in this particular kind of image, a new approach to estimate the bias field using a fat fraction image, called Prior Bias-Corrected Fuzzy C-means (PBCFCM), is introduced. Numerical experiments show that the proposed scheme leads to significantly better results than the compared methods. The average Dice values of the proposed method are 92.5%, 85.3%, and 85.3% for quadriceps, hamstrings, and other muscle groups, respectively, while other approaches are at least 10% worse.

Unsupervised Deep Unrolled Reconstruction Using Regularization by Denoising

May 07, 2022

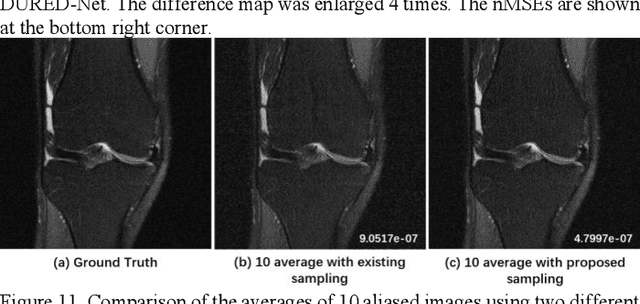

Abstract: Deep learning methods have been successfully used in various computer vision tasks. Inspired by that success, deep learning has been explored in magnetic resonance imaging (MRI) reconstruction. In particular, integrating deep learning and model-based optimization methods has shown considerable advantages. However, a large amount of labeled training data is typically needed for high reconstruction quality, which is challenging for some MRI applications. In this paper, we propose a novel reconstruction method, named DURED-Net, that enables interpretable unsupervised learning for MR image reconstruction by combining an unsupervised denoising network and a plug-and-play method. We aim to boost the reconstruction performance of unsupervised learning by adding an explicit prior that utilizes imaging physics. Specifically, the denoising network is incorporated into MRI reconstruction using Regularization by Denoising (RED). Experimental results demonstrate that the proposed method requires a reduced amount of training data to achieve high reconstruction quality.
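The RED prior mentioned here has a simple gradient form, sketched below on a toy 1D problem: each iteration combines a data-consistency gradient with the term lam * (x - D(x)), where D is a denoiser. Everything specific in this sketch is an assumption for illustration: the 3-tap moving average stands in for a trained denoising network, a real-valued sampling mask stands in for the undersampled MRI forward operator, and the names `smooth` and `red_gradient_descent` are hypothetical. It is not DURED-Net itself, which unrolls such iterations into a network.

```python
import numpy as np

def smooth(x):
    """Toy denoiser D(x): circular 3-tap moving average (stand-in for a trained network)."""
    return 0.25 * np.roll(x, 1) + 0.5 * x + 0.25 * np.roll(x, -1)

def red_gradient_descent(y, mask, lam=0.5, step=0.5, n_iter=100):
    """Minimize 0.5*||mask*x - y||^2 + 0.5*lam*x^T(x - D(x)) by gradient descent."""
    y = np.asarray(y, dtype=float)
    x = np.zeros_like(y)
    for _ in range(n_iter):
        data_grad = mask * (mask * x - y)     # A^T(Ax - y) for a diagonal sampling mask
        prior_grad = lam * (x - smooth(x))    # RED gradient term: lam * (x - D(x))
        x = x - step * (data_grad + prior_grad)
    return x
```

An unrolled network would run a fixed, small number of these updates and learn the denoiser (and possibly `lam` and `step`) from data, rather than iterating to convergence with hand-picked values.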

The International Workshop on Osteoarthritis Imaging Knee MRI Segmentation Challenge: A Multi-Institute Evaluation and Analysis Framework on a Standardized Dataset

May 26, 2020

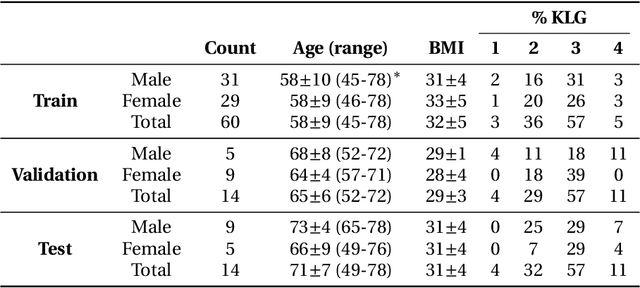

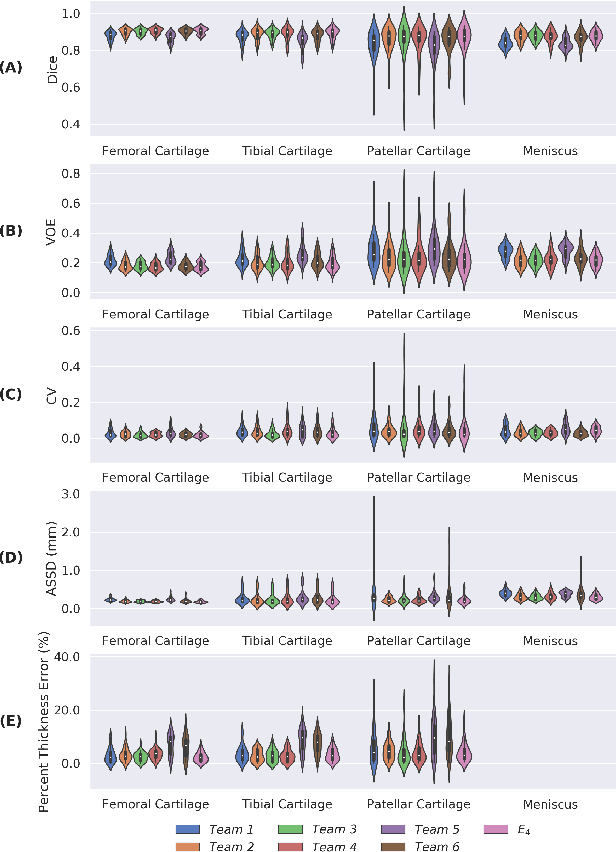

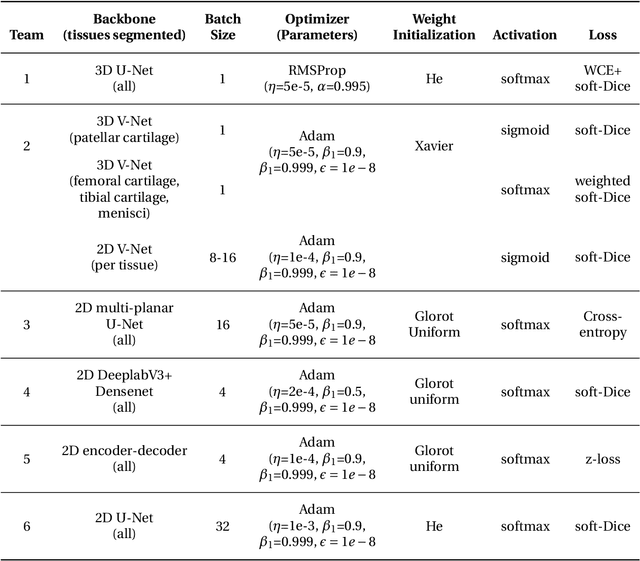

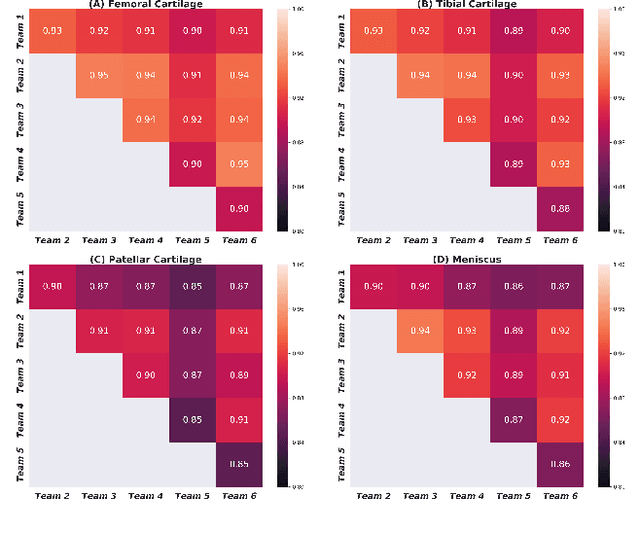

Abstract: Purpose: To organize a knee MRI segmentation challenge for characterizing the semantic and clinical efficacy of automatic segmentation methods relevant for monitoring osteoarthritis progression. Methods: A dataset partition consisting of 3D knee MRI from 88 subjects at two timepoints with ground-truth articular (femoral, tibial, patellar) cartilage and meniscus segmentations was standardized. Challenge submissions and a majority-vote ensemble were evaluated using Dice score, average symmetric surface distance, volumetric overlap error, and coefficient of variation on a hold-out test set. Similarities in network segmentations were evaluated using pairwise Dice correlations. Articular cartilage thickness was computed per-scan and longitudinally. Correlation between thickness error and segmentation metrics was measured using Pearson's coefficient. Two empirical upper bounds for ensemble performance were computed using combinations of model outputs that consolidated true positives and true negatives. Results: Six teams (T1-T6) submitted entries for the challenge. No significant differences were observed across all segmentation metrics for all tissues (p=1.0) among the four top-performing networks (T2, T3, T4, T6). Dice correlations between network pairs were high (>0.85). Per-scan thickness errors were negligible among T1-T4 (p=0.99) and longitudinal changes showed minimal bias (<0.03 mm). Low correlations (<0.41) were observed between segmentation metrics and thickness error. The majority-vote ensemble was comparable to top-performing networks (p=1.0). Empirical upper bound performances were similar for both combinations (p=1.0). Conclusion: Diverse networks learned to segment the knee similarly, and high segmentation accuracy did not correlate with cartilage thickness accuracy. Voting ensembles did not outperform individual networks but may help regularize individual models.
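The majority-vote ensemble evaluated in the challenge can be sketched in a few lines for binary masks. This is a generic illustration rather than the challenge's evaluation code: the function name `majority_vote` is hypothetical, and resolving ties (possible with an even number of models) to background is an assumed convention.

```python
import numpy as np

def majority_vote(masks):
    """Label a voxel as foreground when strictly more than half of the models do."""
    stacked = np.stack([np.asarray(m, dtype=bool) for m in masks])
    votes = stacked.sum(axis=0)
    # Strict majority: ties with an even number of models fall to background.
    return votes * 2 > stacked.shape[0]
```

For multi-tissue segmentations such as cartilage and meniscus, the same vote would be applied to each tissue's binary mask separately.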
