Senior Member

Diabetic Retinopathy Screening Using Custom-Designed Convolutional Neural Network

Oct 08, 2021

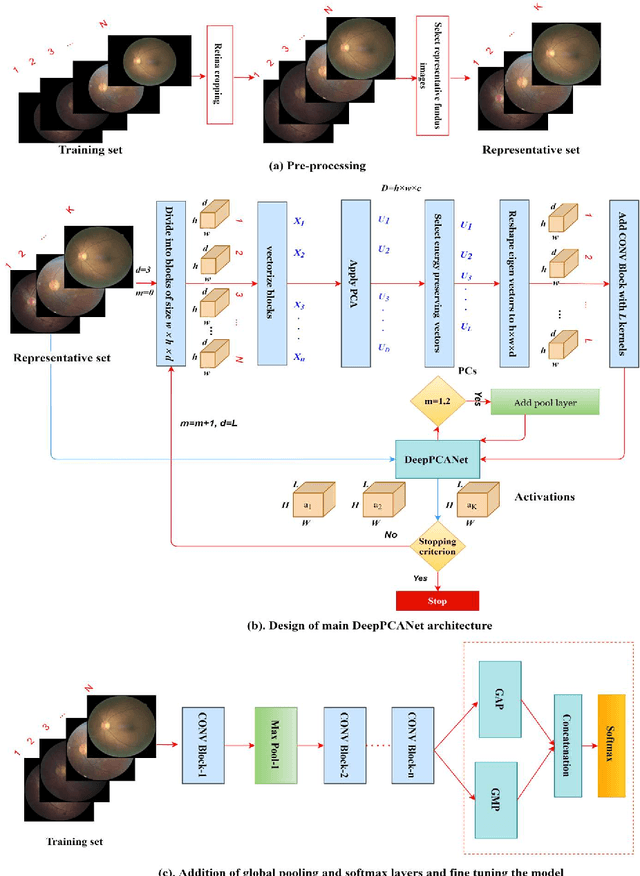

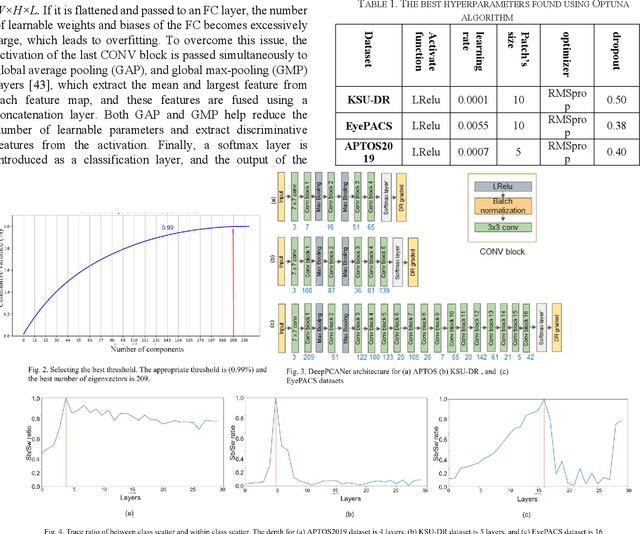

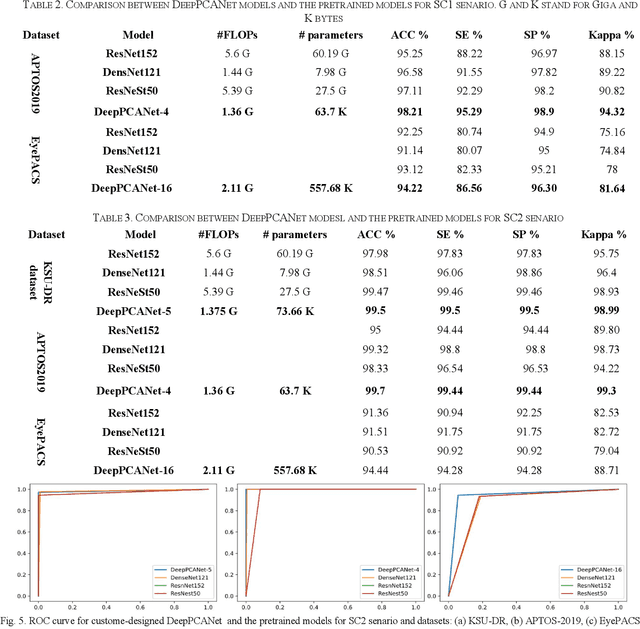

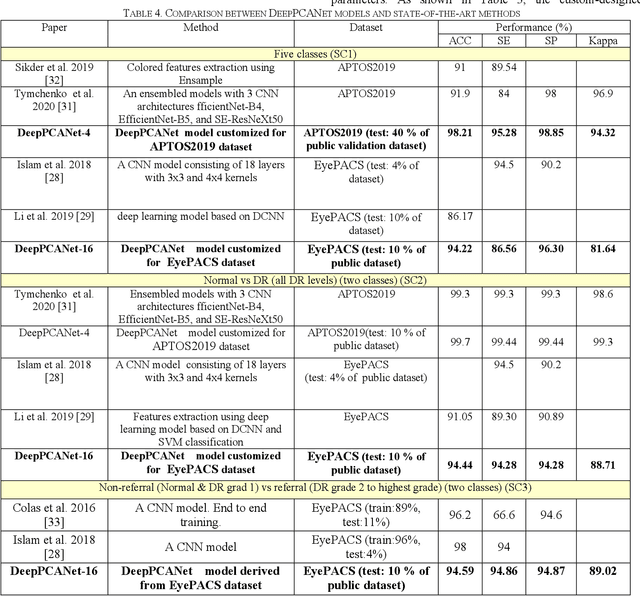

Abstract: The prevalence of diabetic retinopathy (DR) has reached 34.6% worldwide, and DR is a major cause of blindness among middle-aged diabetic patients. Regular DR screening using fundus photography helps detect its complications and prevent its progression to advanced levels. As manual screening is time-consuming and subjective, machine learning (ML) and deep learning (DL) have been employed to aid graders. However, the existing CNN-based methods use either pre-trained CNN models or a brute-force approach to design new CNN models, which are not customized to the complexity of fundus images. To overcome this issue, we introduce an approach for the custom design of CNN models, whose architectures are adapted to the structural patterns of fundus images and better represent the DR-relevant features. It leverages k-medoid clustering, principal component analysis (PCA), and inter-class and intra-class variations to automatically determine the depth and width of a CNN model. The designed models are lightweight, adapted to the internal structures of fundus images, and encode the discriminative patterns of DR lesions. The technique is validated on a local dataset from King Saud University Medical City, Saudi Arabia, and two challenging benchmark datasets from Kaggle: EyePACS and APTOS2019. The custom-designed models outperform well-known pre-trained CNN models such as ResNet152, DenseNet121, and ResNeSt50 with a significant decrease in the number of parameters, and compete well with the state-of-the-art CNN-based DR screening methods. The proposed approach is helpful for DR screening under diverse clinical settings and for referring patients who may need further assessment and treatment to expert ophthalmologists.

A Novel Deep Learning Method for Thermal to Annotated Thermal-Optical Fused Images

Jul 13, 2021

Abstract: Thermal images profile the passive radiation of objects and capture it as grayscale images. Such images have a very different data distribution from optical color images. We present here a work that produces a grayscale thermal-optical fused mask given a thermal input. To the best of our knowledge, this is the first deep learning work on thermal-optical grayscale fusion. Our method is also unique in that the proposed deep learning method works in the Discrete Wavelet Transform (DWT) domain instead of the gray-level domain. As a part of this work, we also present a new database for obtaining the region of interest in thermal images, built on an existing paired thermal-visual database and containing the region of interest for 5 different classes of data. Finally, we propose a simple, low-overhead statistical measure for identifying the region of interest in the fused images, which we call the Region of Fusion (RoF). Experiments on the database show encouraging results in identifying the region of interest in the fused images. We also show that the images can be processed better in the fused form than as thermal images alone.
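As a rough illustration of what "working in the DWT domain" means, the sketch below computes one level of a 2-D discrete wavelet transform with NumPy. The choice of the Haar wavelet, the function name `haar_dwt2`, and the sub-band names are assumptions made for this example; the abstract does not say which wavelet or decomposition depth the authors use, and sub-band naming conventions vary between libraries.

```python
import numpy as np

def haar_dwt2(img):
    """One level of an orthonormal 2-D Haar transform (illustrative only).

    Assumes the image height and width are both even.
    Returns four sub-bands, each half the size of the input.
    """
    img = np.asarray(img, dtype=float)
    # Split the image into the four pixels of every 2x2 block.
    a = img[0::2, 0::2]  # top-left
    b = img[0::2, 1::2]  # top-right
    c = img[1::2, 0::2]  # bottom-left
    d = img[1::2, 1::2]  # bottom-right
    approx = (a + b + c + d) / 2.0    # low-pass in both directions
    detail_v = (a + b - c - d) / 2.0  # difference between the two rows
    detail_h = (a - b + c - d) / 2.0  # difference between the two columns
    detail_d = (a - b - c + d) / 2.0  # diagonal difference
    return approx, detail_v, detail_h, detail_d
```

A network operating in this domain would take the four sub-bands (for instance stacked as channels) as input instead of raw gray levels. Because the transform is orthonormal, the total energy of the sub-bands equals that of the input image.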

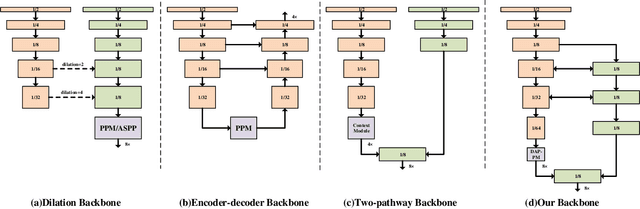

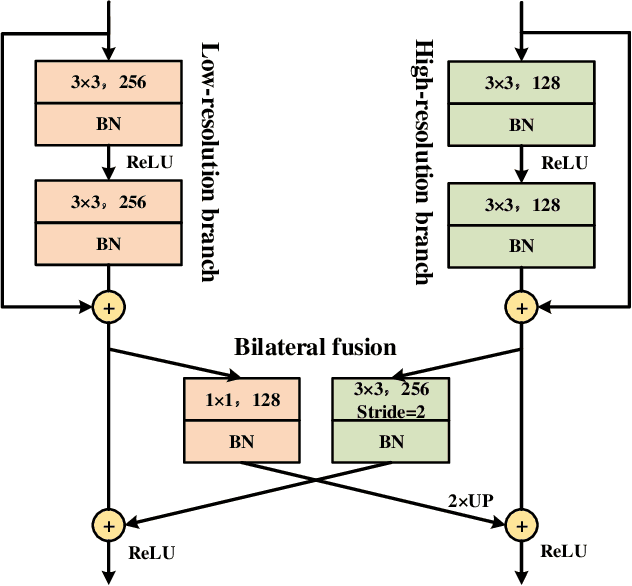

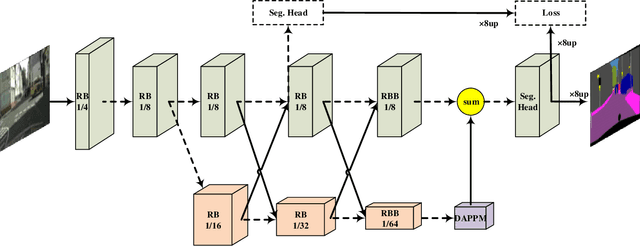

Deep Dual-resolution Networks for Real-time and Accurate Semantic Segmentation of Road Scenes

Jan 15, 2021

Abstract: Semantic segmentation is a critical technology for autonomous vehicles to understand surrounding scenes. For practical autonomous vehicles, it is undesirable to spend a considerable amount of inference time to achieve high-accuracy segmentation results. Using lightweight architectures (encoder-decoder or two-pathway) or reasoning on low-resolution images, recent methods realize very fast scene parsing that even runs at more than 100 FPS on a single 1080Ti GPU. However, there are still evident gaps in performance between these real-time methods and models based on dilation backbones. To tackle this problem, we propose novel deep dual-resolution networks (DDRNets) for real-time semantic segmentation of road scenes. In addition, we design a new contextual information extractor named Deep Aggregation Pyramid Pooling Module (DAPPM) to enlarge effective receptive fields and fuse multi-scale context. Our method achieves a new state-of-the-art trade-off between accuracy and speed on both the Cityscapes and CamVid datasets. Specifically, on a single 2080Ti GPU, DDRNet-23-slim yields 77.4% mIoU at 109 FPS on the Cityscapes test set and 74.4% mIoU at 230 FPS on the CamVid test set. Without utilizing attention mechanisms, pre-training on larger semantic segmentation datasets, or inference acceleration, DDRNet-39 attains 80.4% test mIoU at 23 FPS on Cityscapes. With widely used test augmentation, our method is still superior to most state-of-the-art models while requiring much less computation. Code and trained models will be made publicly available.
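The accuracy figures above are reported as mean intersection-over-union (mIoU). A minimal sketch of that metric follows, assuming integer class labels in `[0, num_classes)` and no "ignore" label; the function name `mean_iou` is hypothetical, and official benchmark tooling typically accumulates the confusion matrix over the whole test set and handles ignored pixels, which this sketch does not.

```python
import numpy as np

def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes that appear in the prediction or the target."""
    pred = np.asarray(pred).ravel()
    target = np.asarray(target).ravel()
    # Confusion matrix: rows are ground-truth classes, columns are predictions.
    cm = np.bincount(
        target * num_classes + pred, minlength=num_classes ** 2
    ).reshape(num_classes, num_classes)
    intersection = np.diag(cm)
    union = cm.sum(axis=0) + cm.sum(axis=1) - intersection
    valid = union > 0  # skip classes absent from both prediction and target
    return float((intersection[valid] / union[valid]).mean())
```

For example, with predictions `[0, 0, 1, 1]` and ground truth `[0, 1, 1, 1]`, class 0 has IoU 1/2 and class 1 has IoU 2/3, so the mean is 7/12.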

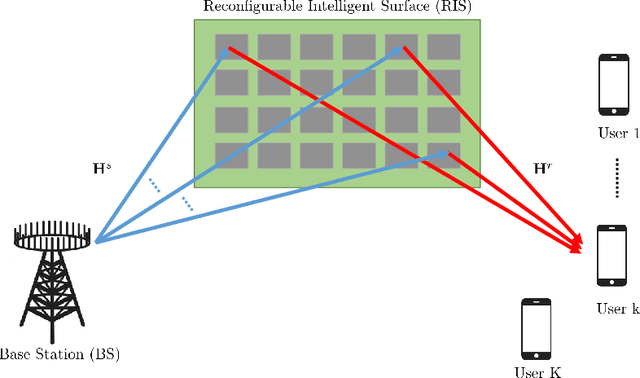

Channel Estimation for RIS-Empowered Multi-User MISO Wireless Communications

Aug 04, 2020

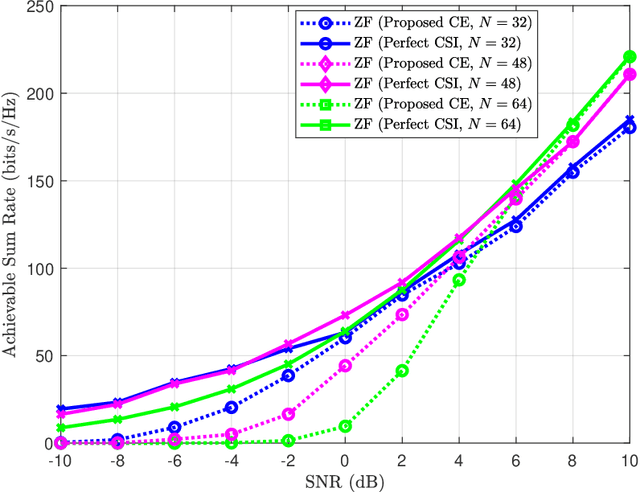

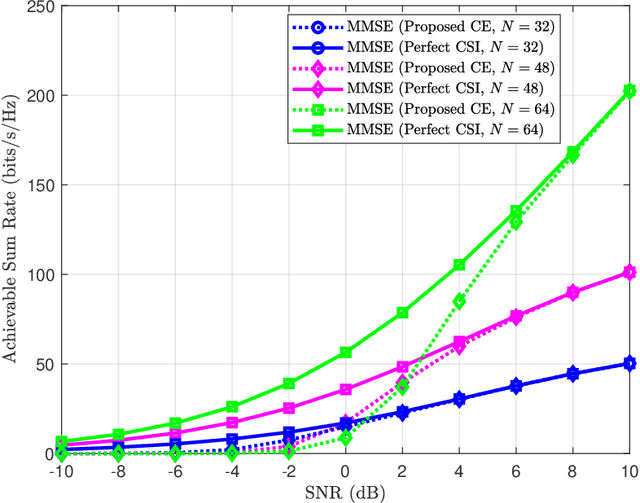

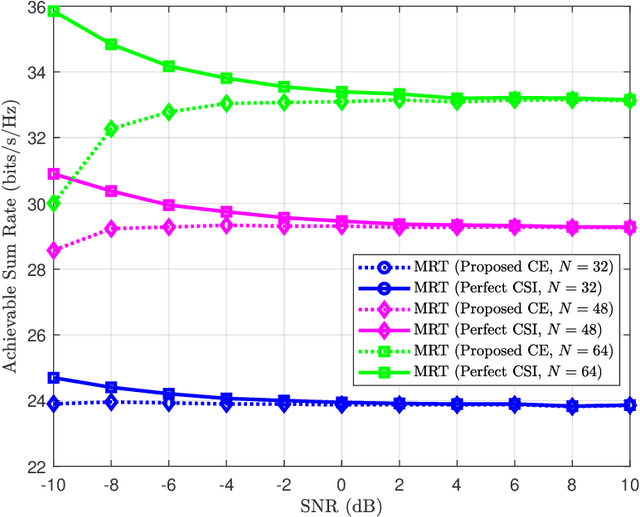

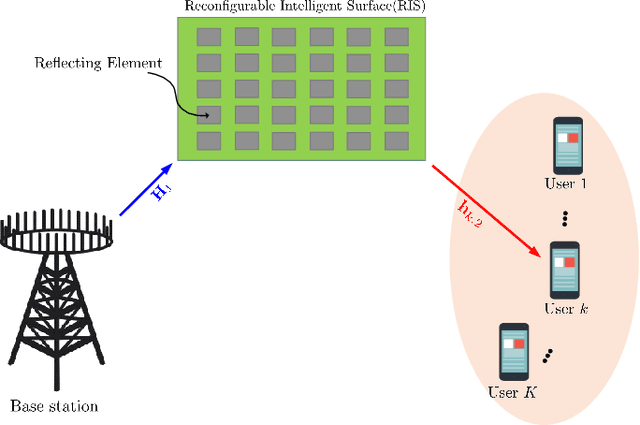

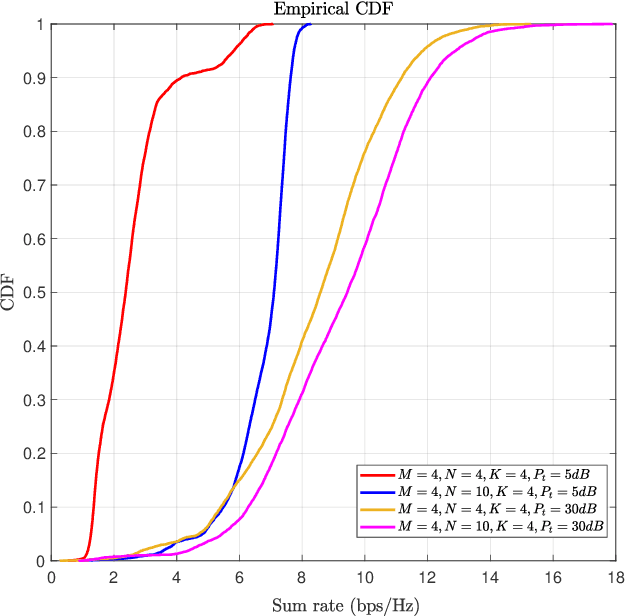

Abstract: Reconfigurable Intelligent Surfaces (RISs) have recently been considered an energy-efficient solution for future wireless networks due to their fast and low-power configuration, which has increased potential in enabling massive connectivity and low-latency communications. Accurate and low-overhead channel estimation in RIS-based systems is one of the most critical challenges due to the usually large number of RIS unit elements and their distinctive hardware constraints. In this paper, we focus on the downlink of a RIS-empowered multi-user Multiple Input Single Output (MISO) communication system and propose a channel estimation framework based on the PARAllel FACtor (PARAFAC) decomposition to unfold the resulting cascaded channel model. We present two iterative estimation algorithms for the channels between the base station and RIS, as well as the channels between RIS and users. One is based on alternating least squares (ALS), while the other uses vector approximate message passing to iteratively reconstruct two unknown channels from the estimated vectors. To theoretically assess the performance of the ALS-based algorithm, we derive its estimation Cramér-Rao Bound (CRB). We also discuss the achievable sum-rate computation with estimated channels and different precoding schemes for the base station. Our extensive simulation results show that our algorithms outperform benchmark schemes and that the ALS technique achieves the CRB. It is also demonstrated that the sum rate using the estimated channels reaches that of perfect channel estimation under various settings, thus verifying the effectiveness and robustness of the proposed estimation algorithms.
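The ALS idea can be sketched on a generic three-way PARAFAC model in which one factor is known, loosely mirroring the setting where the RIS phase configurations are known and two channel matrices are estimated. This is a simplified, real-valued illustration and not the paper's algorithm: the function name `als_two_factors`, the tensor layout, and the variable names are assumptions, and actual wireless channels are complex-valued.

```python
import numpy as np

def als_two_factors(X, C, rank, n_iter=50, seed=0):
    """Fit X[i, j, k] ~ sum_r A[i, r] * B[j, r] * C[k, r] with C known.

    Alternates between two linear least-squares problems: one for A with
    B fixed, and one for B with A fixed.
    """
    rng = np.random.default_rng(seed)
    n_i, n_j, _ = X.shape
    A = rng.standard_normal((n_i, rank))
    B = rng.standard_normal((n_j, rank))
    CtC = C.T @ C
    for _ in range(n_iter):
        # Fix B: the normal equations give A = M @ pinv((B^T B) * (C^T C)).
        M = np.einsum('ijk,jr,kr->ir', X, B, C)
        A = M @ np.linalg.pinv((B.T @ B) * CtC)
        # Fix A: the same update with the roles of A and B swapped.
        M = np.einsum('ijk,ir,kr->jr', X, A, C)
        B = M @ np.linalg.pinv((A.T @ A) * CtC)
    return A, B
```

The recovered factors are only defined up to a per-column scaling (a column of `A` can be scaled by a constant if the matching column of `B` is divided by it), so the fit is best judged by the reconstruction error of the tensor rather than by comparing factors entry by entry.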

Reconfigurable Intelligent Surface Assisted Multiuser MISO Systems Exploiting Deep Reinforcement Learning

Feb 24, 2020

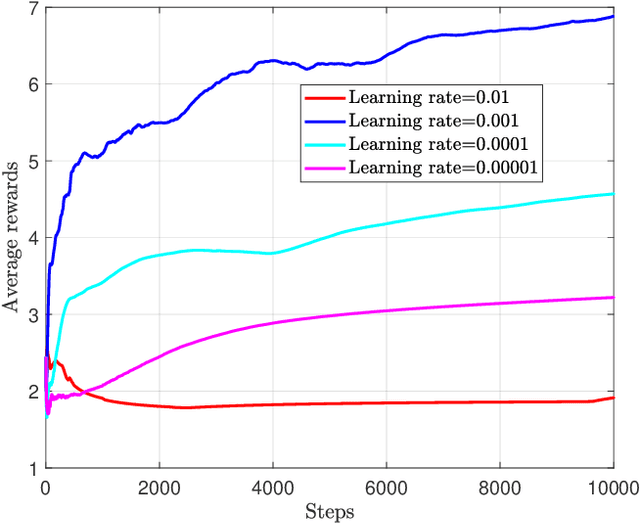

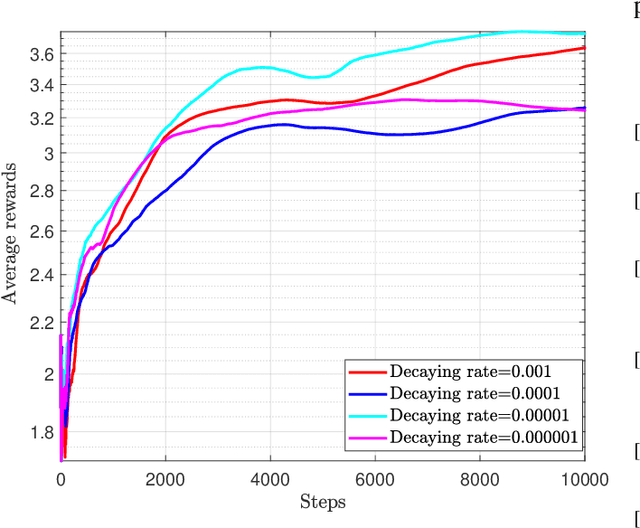

Abstract: Recently, the reconfigurable intelligent surface (RIS), benefiting from the breakthrough in the fabrication of programmable meta-materials, has been proposed as one of the key enabling technologies for future sixth generation (6G) wireless communication systems, scaling up beyond massive multiple input multiple output (Massive-MIMO) technology to achieve smart radio environments. Employed as a reflecting array, the RIS is able to assist MIMO transmissions without the need for radio frequency chains, resulting in a considerable reduction in power consumption. In this paper, we investigate the joint design of the transmit beamforming matrix at the base station and the phase shift matrix at the RIS by leveraging recent advances in deep reinforcement learning (DRL). We first develop a DRL-based algorithm, in which the joint design is obtained through trial-and-error interactions with the environment by observing predefined rewards, in the context of continuous state and action spaces. Unlike most reported works, which use alternating optimization techniques to obtain the transmit beamforming and phase shifts in turn, the proposed DRL-based algorithm obtains the joint design simultaneously as the output of the DRL neural network. Simulation results show that the proposed algorithm is not only able to learn from the environment and gradually improve its behavior, but also achieves performance comparable to two state-of-the-art benchmarks. It is also observed that appropriate neural network parameter settings significantly improve the performance and convergence rate of the proposed algorithm.

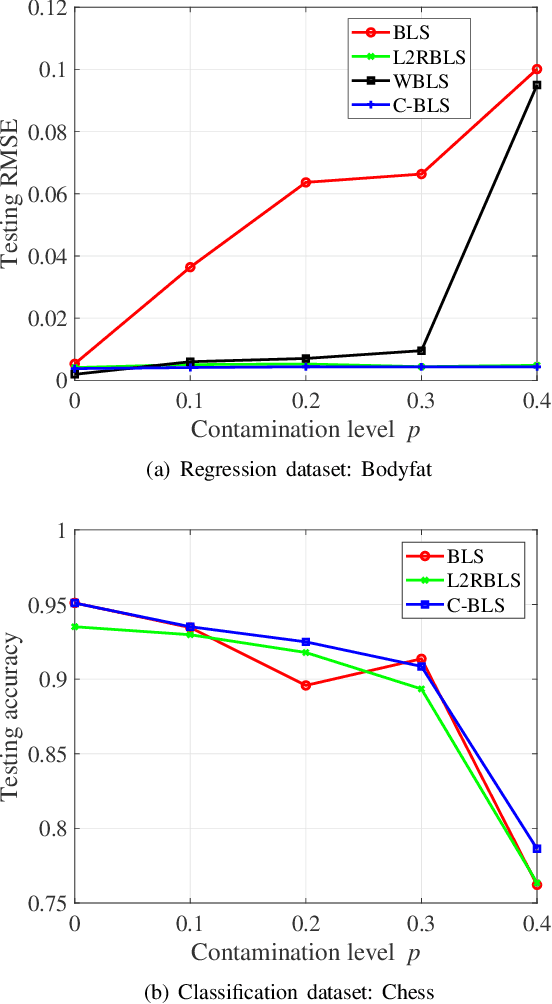

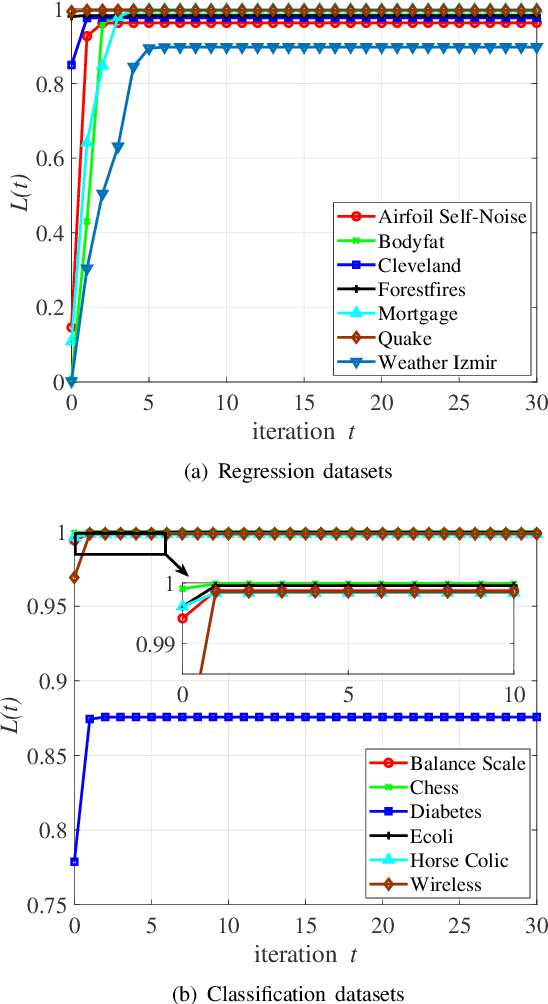

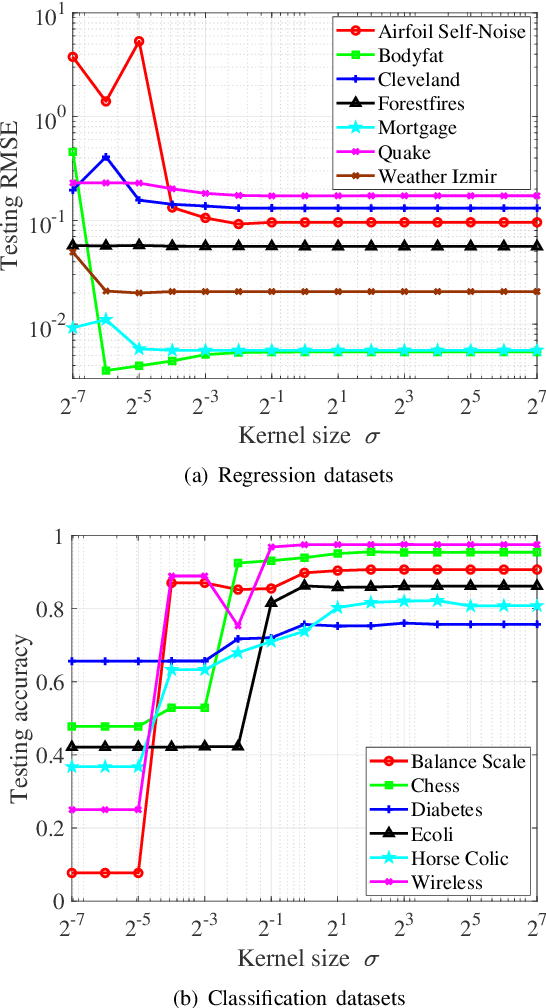

Broad Learning System Based on Maximum Correntropy Criterion

Dec 24, 2019

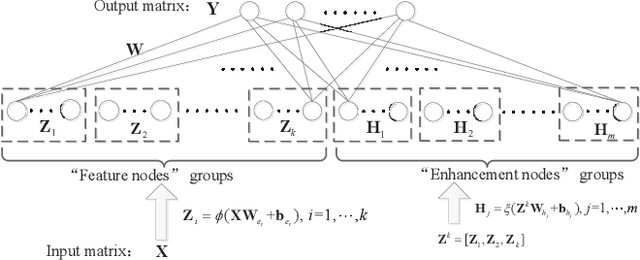

Abstract: As an effective and efficient discriminative learning method, the Broad Learning System (BLS) has received increasing attention due to its outstanding performance in various regression and classification problems. However, the standard BLS is derived under the minimum mean square error (MMSE) criterion, which is not always a good choice due to its sensitivity to outliers. To enhance the robustness of BLS, we propose in this work to adopt the maximum correntropy criterion (MCC) to train the output weights, obtaining a correntropy-based broad learning system (C-BLS). Thanks to the inherent advantages of MCC, the proposed C-BLS is expected to achieve excellent robustness to outliers while maintaining the original performance of the standard BLS in Gaussian or noise-free environments. In addition, three alternative incremental learning algorithms for C-BLS are developed, derived from a weighted regularized least-squares solution rather than the pseudoinverse formula. With the incremental learning algorithms, the system can be updated quickly, without retraining from scratch, when new samples arrive or the network needs to be expanded. Experiments on various regression and classification datasets are reported to demonstrate the desirable performance of the new methods.
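To show why a correntropy criterion leads to a weighted regularized least-squares problem, here is a minimal sketch of training linear output weights under MCC with a Gaussian kernel, using a standard fixed-point (iteratively reweighted) scheme. It is an illustration under assumptions, not the paper's exact algorithm: `H` stands for a hypothetical matrix of node outputs (the BLS feature and enhancement node construction is omitted), and `sigma`, `lam`, and the function name are made up for the example.

```python
import numpy as np

def mcc_output_weights(H, y, sigma=1.0, lam=1e-3, n_iter=30):
    """Fixed-point iteration for correntropy-based output weights.

    Each step solves a weighted ridge regression in which sample i is
    weighted by exp(-e_i^2 / (2 * sigma^2)), where e_i is its current error.
    """
    reg = lam * np.eye(H.shape[1])
    # Start from the ordinary ridge (mean-square-error) solution.
    w = np.linalg.solve(H.T @ H + reg, H.T @ y)
    for _ in range(n_iter):
        err = y - H @ w
        # Gaussian kernel weights: large errors (likely outliers) get ~0 weight.
        k = np.exp(-err ** 2 / (2.0 * sigma ** 2))
        HtK = H.T * k  # equivalent to H.T @ np.diag(k)
        w = np.linalg.solve(HtK @ H + reg, HtK @ y)
    return w
```

On data following a line with a handful of gross outliers, the ridge starting point is pulled toward the outliers, while the reweighted solution effectively ignores them because their kernel weights collapse toward zero. The kernel width `sigma` controls how aggressively large errors are down-weighted.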

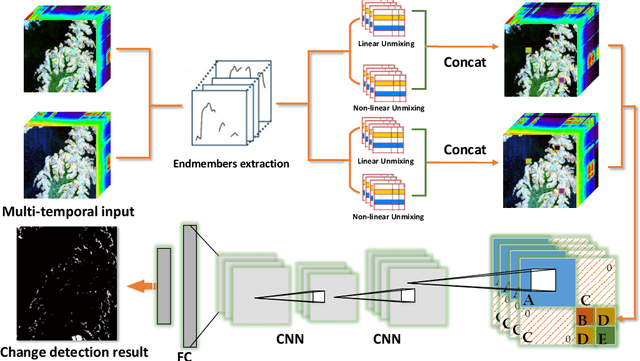

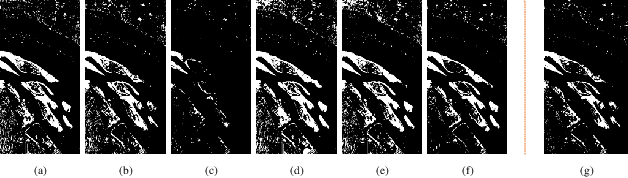

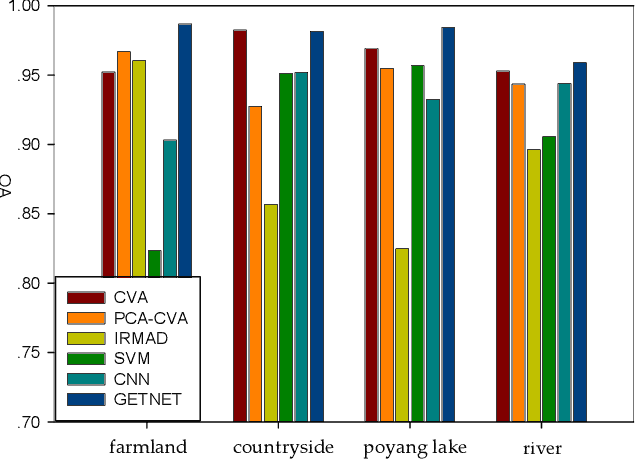

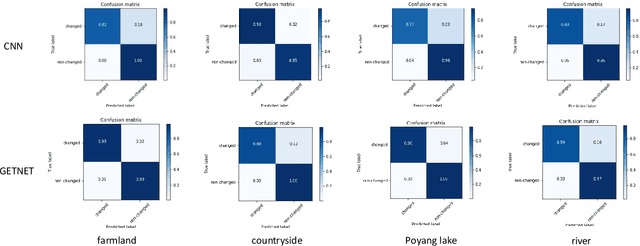

GETNET: A General End-to-end Two-dimensional CNN Framework for Hyperspectral Image Change Detection

May 05, 2019

Abstract: Change detection (CD) is an important application of remote sensing, which provides timely change information about the large-scale Earth surface. With the emergence of hyperspectral imagery, CD technology has been greatly promoted, as hyperspectral data with high spectral resolution are capable of detecting finer changes than traditional multispectral imagery. Nevertheless, the high dimension of hyperspectral data makes it difficult to implement traditional CD algorithms. In addition, endmember abundance information at the subpixel level is often not fully utilized. In order to better handle the high-dimension problem and explore abundance information, this paper presents a General End-to-end Two-dimensional CNN (GETNET) framework for hyperspectral image change detection (HSI-CD). The main contributions of this work are threefold: 1) A mixed-affinity matrix that integrates subpixel representation is introduced to mine more cross-channel gradient features and fuse multi-source information; 2) A 2-D CNN is designed to learn the discriminative features effectively from multi-source data at a higher level and enhance the generalization ability of the proposed CD algorithm; 3) A new HSI-CD data set is designed for the objective comparison of different methods. Experimental results on real hyperspectral data sets demonstrate that the proposed method outperforms most state-of-the-art methods.

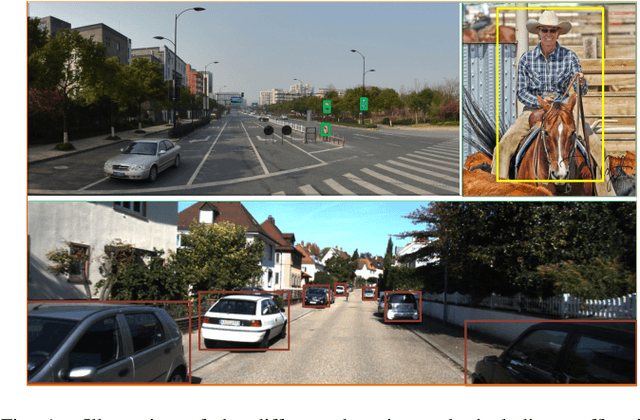

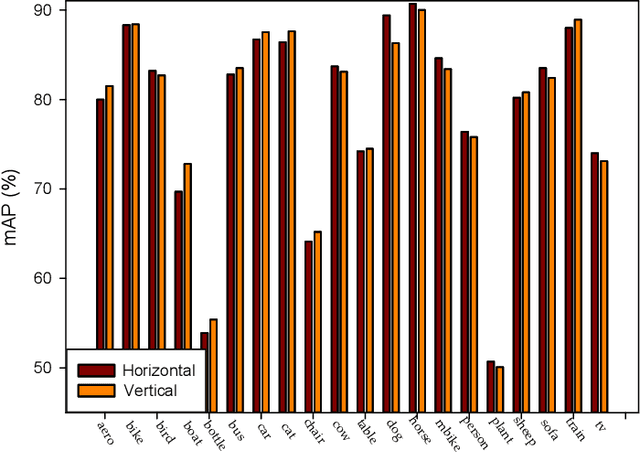

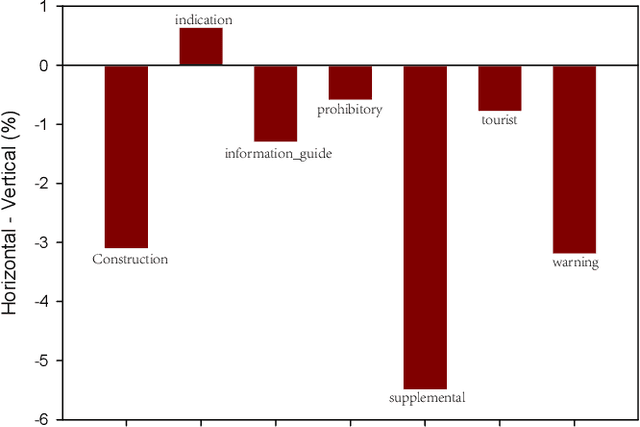

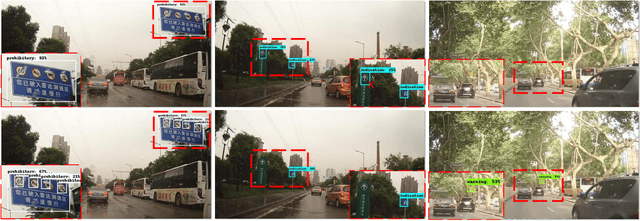

VSSA-NET: Vertical Spatial Sequence Attention Network for Traffic Sign Detection

May 05, 2019

Abstract: Although traffic sign detection has been studied for years and great progress has been made with the rise of deep learning techniques, many problems remain to be addressed. For complicated real-world traffic scenes, there are two main challenges. First, traffic signs are usually small objects, which are more difficult to detect than large ones. Second, without context information, it is hard to distinguish false targets that resemble real traffic signs in complex street scenes. To handle these problems, we propose a novel end-to-end deep learning method for traffic sign detection in complex environments. Our contributions are as follows: 1) We propose a multi-resolution feature fusion network architecture which exploits densely connected deconvolution layers with skip connections and can learn more effective features for small objects; 2) We frame traffic sign detection as a spatial sequence classification and regression task, and propose a vertical spatial sequence attention (VSSA) module to gain more context information for better detection performance. To comprehensively evaluate the proposed method, we conduct experiments on several traffic sign datasets as well as a general object detection dataset, and the results show the effectiveness of the proposed method.

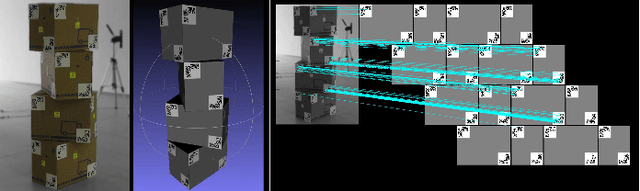

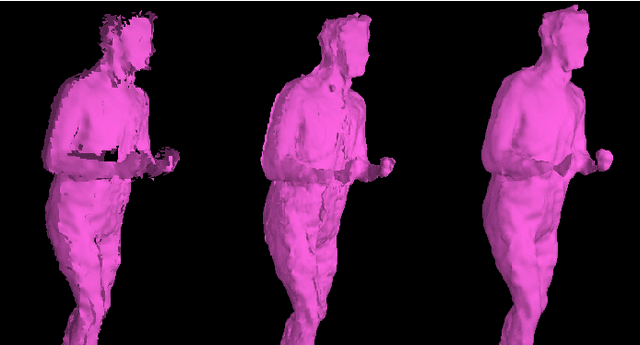

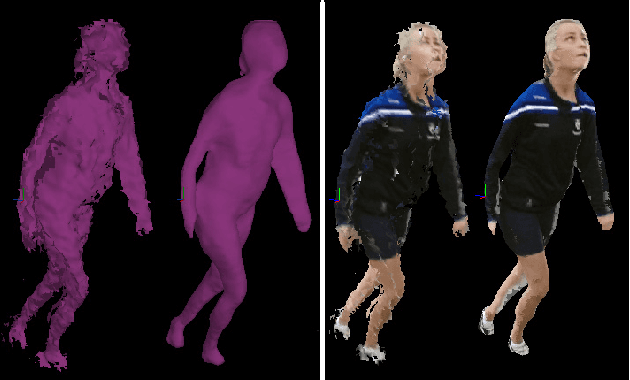

An Integrated Platform for Live 3D Human Reconstruction and Motion Capturing

Dec 08, 2017

Abstract: The latest developments in 3D capturing, processing, and rendering provide means to unlock novel 3D application pathways. The main elements of an integrated platform, which targets tele-immersion and future 3D applications, are described in this paper, addressing the tasks of real-time capturing, robust 3D human shape/appearance reconstruction, and skeleton-based motion tracking. More specifically, the details of a multiple RGB-depth (RGB-D) capturing system are given first, along with a novel sensor calibration method. A robust, fast reconstruction method from multiple RGB-D streams is then proposed, based on an enhanced variation of the volumetric Fourier transform-based method, parallelized on the Graphics Processing Unit, and accompanied by an appropriate texture-mapping algorithm. On top of that, given the lack of relevant objective evaluation methods, a novel framework is proposed for the quantitative evaluation of real-time 3D reconstruction systems. Finally, a generic, multiple depth stream-based method for accurate real-time human skeleton tracking is proposed. Detailed experimental results with multi-Kinect2 data sets verify the validity of our arguments and the effectiveness of the proposed system and methodologies.

* 16 pages, 12 figures, 3 tables
