Fadwa Al Adel

Diabetic Retinopathy Screening Using Custom-Designed Convolutional Neural Network

Oct 08, 2021

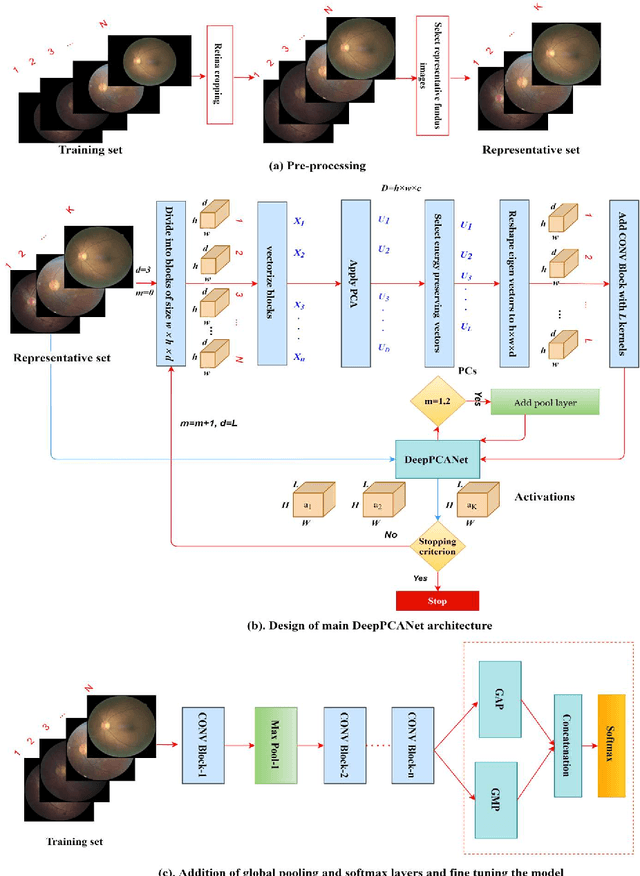

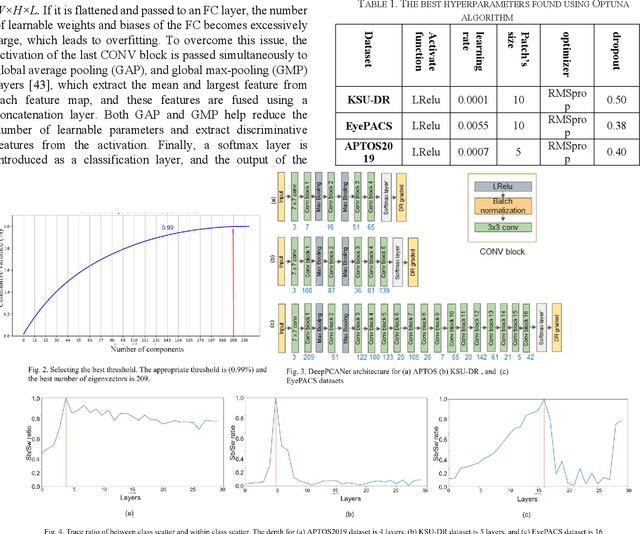

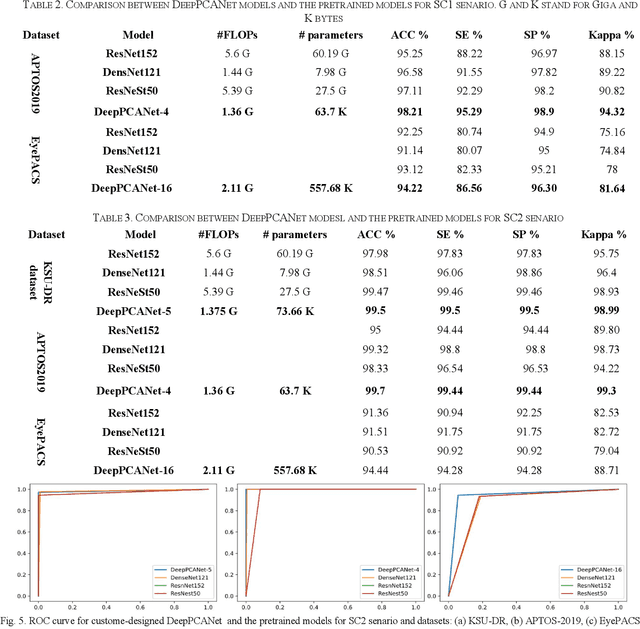

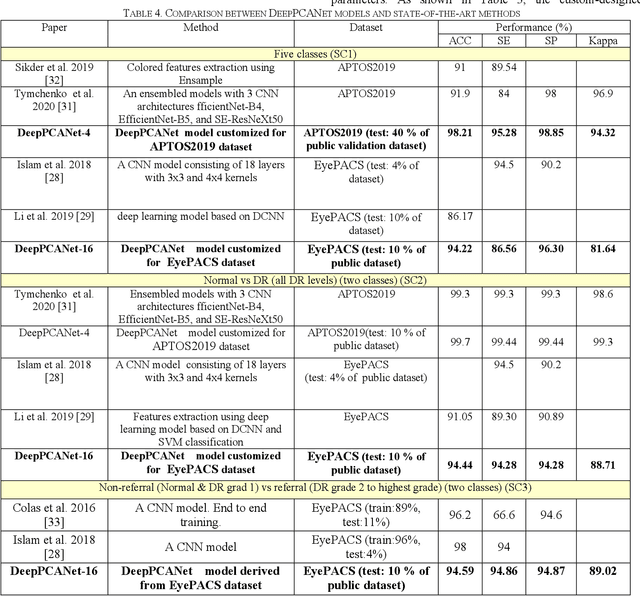

Abstract: The prevalence of diabetic retinopathy (DR) has reached 34.6% worldwide, and DR is a major cause of blindness among middle-aged diabetic patients. Regular DR screening using fundus photography helps detect its complications and prevent its progression to advanced stages. As manual screening is time-consuming and subjective, machine learning (ML) and deep learning (DL) have been employed to aid graders. However, existing CNN-based methods either use pre-trained CNN models or design new CNN models by brute force, and neither kind of model is customized to the complexity of fundus images. To overcome this issue, we introduce an approach for the custom design of CNN models whose architectures are adapted to the structural patterns of fundus images and better represent DR-relevant features. It leverages k-medoid clustering, principal component analysis (PCA), and inter-class and intra-class variations to automatically determine the depth and width of a CNN model. The designed models are lightweight, adapted to the internal structures of fundus images, and encode the discriminative patterns of DR lesions. The technique is validated on a local dataset from King Saud University Medical City, Saudi Arabia, and two challenging benchmark datasets from Kaggle: EyePACS and APTOS2019. The custom-designed models outperform well-known pre-trained CNN models such as ResNet152, DenseNet121, and ResNeSt50 with significantly fewer parameters, and they compete well with state-of-the-art CNN-based DR screening methods. The proposed approach is helpful for DR screening under diverse clinical settings and for referring patients who may need further assessment and treatment to expert ophthalmologists.
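The abstract does not state how PCA is turned into a layer width, so the following is only an illustrative sketch under an assumed criterion: keep the smallest number of principal components whose cumulative explained variance reaches a chosen threshold, and treat that count as a candidate number of filters. The function name `components_for_variance` and the `threshold` parameter are hypothetical and are not taken from the paper.

```python
def components_for_variance(eigenvalues, threshold=0.95):
    """Return the smallest number of principal components whose
    cumulative explained-variance ratio reaches `threshold`.

    Assumption (not from the paper): the returned count is used as a
    candidate layer width.
    """
    if not eigenvalues:
        raise ValueError("eigenvalues must be non-empty")
    # PCA eigenvalues are variances; sort from largest to smallest.
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    if total <= 0:
        raise ValueError("total variance must be positive")
    cumulative = 0.0
    for k, value in enumerate(ordered, start=1):
        cumulative += value
        if cumulative / total >= threshold:
            return k
    return len(ordered)


if __name__ == "__main__":
    # Hypothetical eigenvalues of the covariance matrix of image patches.
    print(components_for_variance([5.0, 3.0, 1.0, 1.0], threshold=0.75))
```

A higher threshold keeps more components and would therefore suggest a wider layer; the actual selection rule used by the authors may differ.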

A Deep Learning-Based Unified Framework for Red Lesions Detection on Retinal Fundus Images

Sep 17, 2021

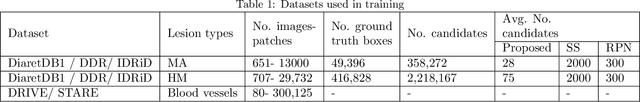

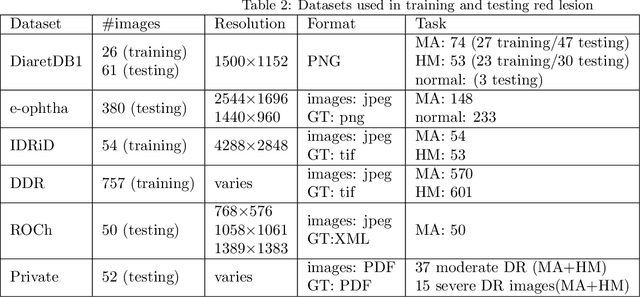

Abstract: Red lesions, i.e., microaneurysms (MAs) and hemorrhages (HMs), are the early signs of diabetic retinopathy (DR). The automatic detection of MAs and HMs on retinal fundus images is a challenging task. Most existing methods detect either only MAs or only HMs because of the differences in their texture, size, and morphology. Though some methods detect both MAs and HMs, they suffer from the curse of dimensionality of shape and color features and fail to detect all shape variations of HMs, such as flame-shaped HMs. Leveraging the progress in deep learning, we propose a two-stream red lesion detection system that deals simultaneously with small and large red lesions. For this system, we introduce a new ROI candidate generation method for large red lesions in fundus images; it is based on blood vessel segmentation and morphological operations, and it reduces the computational complexity and enhances the detection accuracy by generating a small number of potential candidates. For detection, we adapted the Faster RCNN framework with two streams. We used pre-trained VGGNet as the backbone model and carried out extensive experiments to tune it for vessel segmentation and candidate generation, and finally for learning the appropriate mapping, which yields better detection of red lesions compared with state-of-the-art methods. The experimental results validate the effectiveness of the system in detecting both MAs and HMs: for per-lesion detection, the method achieves higher sensitivity than state-of-the-art methods under 4 false positives per image (FPIs) on the DiaretDB1-MA and DiaretDB1-HM datasets and under 1 FPI on the e-ophtha and ROCh datasets. For DR screening, the system outperforms other methods on the DiaretDB1-MA, DiaretDB1-HM, and e-ophtha datasets.
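To make the reported metric concrete, here is a minimal sketch of how per-lesion sensitivity under a false-positives-per-image budget can be computed by sweeping the detection score threshold. It is a generic illustration, not the authors' evaluation code; the function name `sensitivity_at_fpi` and the input layout (a list of `(score, lesion_id_or_None)` pairs, where `None` marks a false positive) are assumptions made for this example.

```python
def sensitivity_at_fpi(detections, num_lesions, num_images, max_fpi):
    """Best per-lesion sensitivity reachable while the average number of
    false positives per image stays at or below `max_fpi`.

    detections: list of (score, lesion_id) pairs; lesion_id is None for a
    detection that matches no ground-truth lesion (a false positive).
    """
    if num_lesions <= 0 or num_images <= 0:
        raise ValueError("num_lesions and num_images must be positive")
    # Lowering the threshold admits detections in order of decreasing score.
    ordered = sorted(detections, key=lambda d: d[0], reverse=True)
    found = set()          # distinct ground-truth lesions hit so far
    false_positives = 0
    best = 0.0
    i = 0
    while i < len(ordered):
        score = ordered[i][0]
        # Admit every detection that shares this score (same threshold).
        while i < len(ordered) and ordered[i][0] == score:
            lesion = ordered[i][1]
            if lesion is None:
                false_positives += 1
            else:
                found.add(lesion)
            i += 1
        if false_positives / num_images > max_fpi:
            break  # budget exceeded; lower thresholds only add more FPs
        best = max(best, len(found) / num_lesions)
    return best


if __name__ == "__main__":
    # Hypothetical detections pooled over 2 images with 4 true lesions.
    dets = [(0.9, "a"), (0.8, None), (0.7, "b"),
            (0.6, None), (0.5, None), (0.4, "c")]
    print(sensitivity_at_fpi(dets, num_lesions=4, num_images=2, max_fpi=1.0))
```

Counting distinct lesion identifiers rather than matched detections prevents several boxes on the same lesion from inflating the sensitivity.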
