Nankai Lin

MARINER: A 3E-Driven Benchmark for Fine-Grained Perception and Complex Reasoning in Open-Water Environments

Apr 09, 2026

Abstract:Fine-grained visual understanding and high-level reasoning in real-world open-water environments remain under-explored due to the lack of dedicated benchmarks. We introduce MARINER, a comprehensive benchmark built under the novel Entity-Environment-Event (3E) paradigm. MARINER contains 16,629 multi-source maritime images with 63 fine-grained vessel categories, diverse adverse environments, and 5 typical dynamic maritime incidents, covering fine-grained classification, object detection, and visual question answering tasks. We conduct extensive evaluations of mainstream multimodal large language models (MLLMs) and establish baselines, revealing that even advanced models struggle with fine-grained discrimination and causal reasoning in complex marine scenes. As a dedicated maritime benchmark, MARINER fills the gap in realistic, cognitive-level evaluation for maritime multimodal understanding and promotes future research on robust vision-language models for open-water applications. The appendix and supplementary materials are available at https://lxixim.github.io/MARINER.

Chameleon: On the Scene Diversity and Domain Variety of AI-Generated Videos Detection

Mar 09, 2025

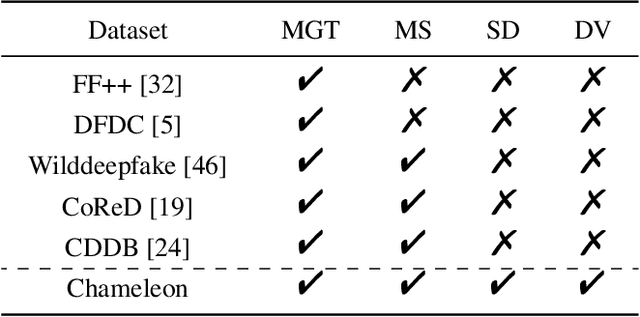

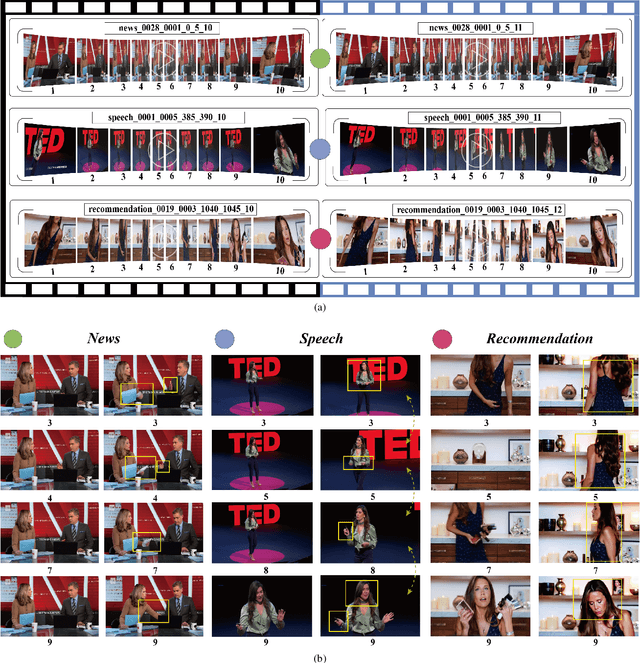

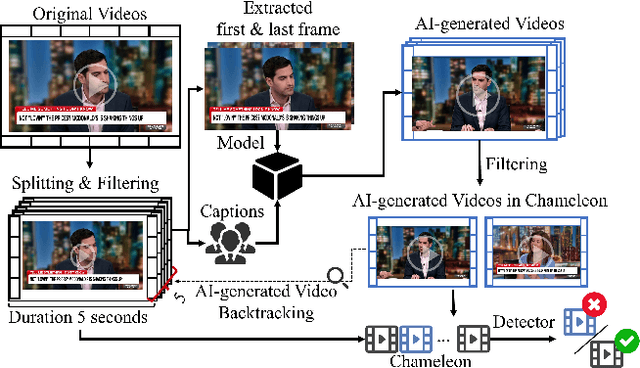

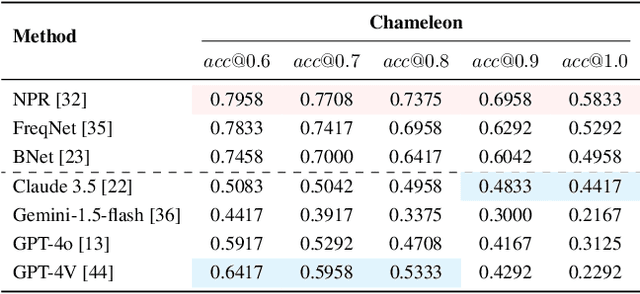

Abstract:Artificial intelligence generated content (AIGC), often referred to as DeepFakes, has emerged as a growing concern because it is being used as a tool for spreading disinformation. While much research exists on identifying AI-generated text and images, research on detecting AI-generated videos is limited. Existing datasets for AI-generated video detection exhibit limitations in diversity, complexity, and realism. To address these issues, this paper focuses on AI-generated video detection and constructs a diverse dataset named Chameleon. We generate videos using multiple generation tools and various real video sources. At the same time, we preserve the videos' real-world complexity, including scene switches and dynamic perspective changes, and expand beyond face-centered detection to include human actions and environment generation. Our work bridges the gap between AI-generated dataset construction and real-world forensic needs, offering a valuable benchmark for countering the evolving threats of AI-generated content.

CLASS: Enhancing Cross-Modal Text-Molecule Retrieval Performance and Training Efficiency

Feb 17, 2025

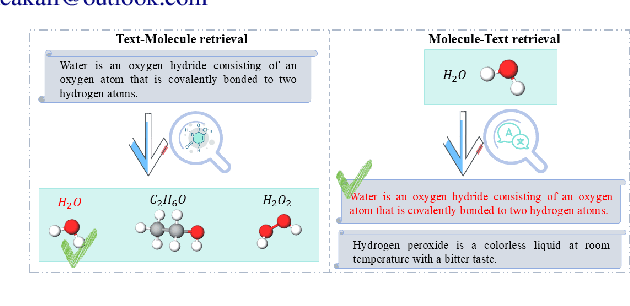

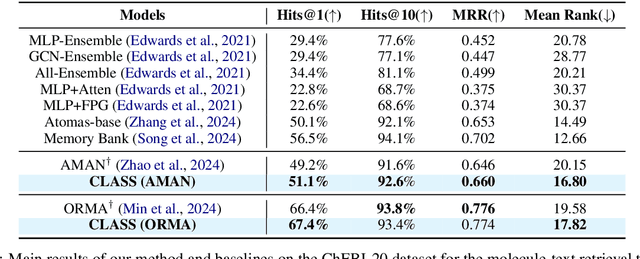

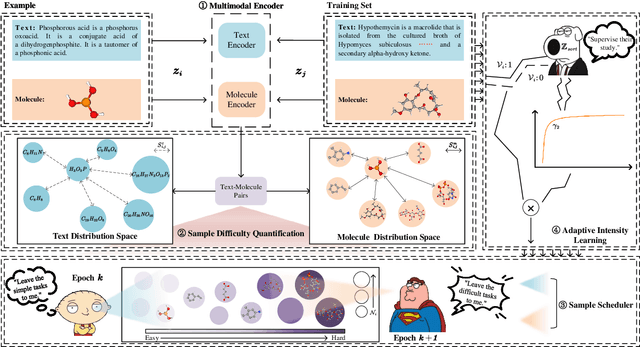

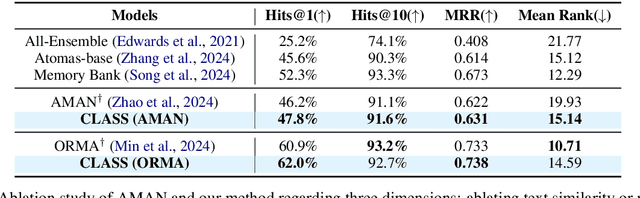

Abstract:The cross-modal text-molecule retrieval task bridges molecule structures and natural language descriptions. Existing methods predominantly focus on aligning the text and molecule modalities, yet they overlook adaptively adjusting the learning state at different training stages and improving training efficiency. To tackle these challenges, this paper proposes a Curriculum Learning-bAsed croSS-modal text-molecule training framework (CLASS), which can be integrated with any backbone to yield performance improvements. Specifically, we quantify sample difficulty by considering both the text and molecule modalities, and design a sample scheduler that introduces training samples in an easy-to-difficult order as training advances, substantially reducing the number of training samples in the early stage and improving training efficiency. Moreover, we introduce adaptive intensity learning, which increases the training intensity as training progresses and thereby adaptively controls the learning intensity across all curriculum stages. Experimental results on the ChEBI-20 dataset demonstrate that our method achieves superior performance while also delivering notable time savings.
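The easy-to-difficult sample scheduler can be illustrated with a generic curriculum sketch. It assumes a per-sample difficulty score has already been computed (the abstract says CLASS combines text-side and molecule-side difficulty, but not how), and it uses a hypothetical linear pacing rule with a hypothetical `start_fraction` parameter. This is a standard competence-style scheduler, not the CLASS implementation.

```python
import math
from typing import List, Sequence


def curriculum_subset(difficulties: Sequence[float], epoch: int, total_epochs: int,
                      start_fraction: float = 0.5) -> List[int]:
    """Return the indices of samples available at `epoch` (0-based).

    Hypothetical pacing rule: samples are ordered from easiest to hardest, and the
    available fraction grows linearly from `start_fraction` at epoch 0 to 1.0 at the
    final epoch, so early epochs train on fewer (easier) samples.
    """
    if total_epochs < 1:
        raise ValueError("total_epochs must be >= 1")
    # Sort sample indices by difficulty score (lower = easier).
    order = sorted(range(len(difficulties)), key=lambda i: difficulties[i])
    progress = epoch / max(total_epochs - 1, 1)
    fraction = min(1.0, start_fraction + (1.0 - start_fraction) * progress)
    k = max(1, math.ceil(fraction * len(order)))
    return order[:k]


# Usage: five samples over three epochs.
scores = [0.9, 0.1, 0.5, 0.3, 0.7]
for ep in range(3):
    print(ep, curriculum_subset(scores, ep, total_epochs=3))
```

The linear pacing is only one choice; exponential or step-wise schedules are common alternatives, and the abstract does not specify which one CLASS uses.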

Jailbreaking? One Step Is Enough!

Dec 17, 2024

Abstract:Large language models (LLMs) excel at various tasks but remain vulnerable to jailbreak attacks, in which adversaries manipulate prompts to elicit harmful outputs. Examining jailbreak prompts helps uncover the shortcomings of LLMs. However, current jailbreak methods and the target model's defenses are engaged in an independent, adversarial process, which requires frequent attack iterations and the redesign of attacks for different models. To address these gaps, we propose a Reverse Embedded Defense Attack (REDA) mechanism that disguises the attack intention as a "defense" intention against harmful content. Specifically, REDA starts from the target response, guiding the model to embed harmful content within its defensive measures, thereby relegating the harmful content to a secondary role and making the model believe it is performing a defensive task. The attacking model believes it is guiding the target model to deal with harmful content, while the target model believes it is performing a defensive task, creating an illusion of cooperation between the two. Additionally, to strengthen the model's confidence in and guidance toward "defensive" intentions, we adopt in-context learning (ICL) with a small number of attack examples and construct a corresponding dataset of such examples. Extensive evaluations demonstrate that REDA enables cross-model attacks without redesigning attack strategies for different models, achieves a successful jailbreak in a single iteration, and outperforms existing methods on both open-source and closed-source models.

A Simple Yet Effective Corpus Construction Framework for Indonesian Grammatical Error Correction

Oct 28, 2024

Abstract:Currently, most research on grammatical error correction (GEC) concentrates on widely used languages such as English and Chinese, while many low-resource languages lack accessible evaluation corpora. Efficiently constructing high-quality GEC evaluation corpora for low-resource languages has therefore become a significant challenge. To fill this gap, we present a framework for constructing GEC corpora. Specifically, we focus on Indonesian and use the proposed framework to construct an evaluation corpus for Indonesian GEC, addressing the limitations of existing Indonesian evaluation corpora. Furthermore, we investigate the feasibility of using existing large language models (LLMs), such as GPT-3.5-Turbo and GPT-4, to streamline corpus annotation in GEC tasks. The results demonstrate significant potential for enhancing the performance of LLMs in low-resource language settings. Our code and corpus are available at https://github.com/GKLMIP/GEC-Construction-Framework.

Composited-Nested-Learning with Data Augmentation for Nested Named Entity Recognition

Jun 18, 2024

Abstract:Nested Named Entity Recognition (NNER) addresses the recognition of overlapping entities. Compared with Flat Named Entity Recognition (FNER), annotated corpora for NNER are scarce. Data augmentation is an effective way to compensate for insufficient annotated data, yet data augmentation methods for NNER remain largely unexplored. Because of the nested entities in NNER, existing data augmentation methods cannot be applied directly to NNER tasks. Therefore, in this work, we focus on data augmentation for NNER and adopt a more expressive structure, Composited-Nested-Label Classification (CNLC), in which constituents are combined from nested words and nested labels, to model nested entities. The dataset is augmented using Composited-Nested-Learning (CNL). In addition, we propose a Confidence Filtering Mechanism (CFM) for more efficient selection of the generated data. Experimental results demonstrate that this approach yields improvements on ACE2004 and ACE2005 and alleviates the impact of sample imbalance.
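The abstract does not describe how the Confidence Filtering Mechanism scores generated data. As a rough illustration of confidence-based filtering in general, the sketch below assumes each augmented sentence comes with per-entity label confidences from some trained tagger and keeps it only if its least confident prediction clears a threshold. The function name `confidence_filter` and the `threshold` value are hypothetical.

```python
from typing import List, Sequence, Tuple


def confidence_filter(candidates: Sequence[Tuple[str, Sequence[float]]],
                      threshold: float = 0.9) -> List[str]:
    """Keep generated sentences whose least confident label prediction is >= threshold.

    Each candidate is (sentence, per-entity label confidences). Sentences with no
    predictions are discarded, since there is nothing to vouch for their labels.
    """
    kept = []
    for sentence, label_confidences in candidates:
        if label_confidences and min(label_confidences) >= threshold:
            kept.append(sentence)
    return kept


# Usage with made-up confidences for three augmented sentences.
augmented = [("sent-A", [0.95, 0.99]), ("sent-B", [0.95, 0.50]), ("sent-C", [])]
print(confidence_filter(augmented))  # keeps only "sent-A"
```

Using the minimum rather than the mean is a conservative choice: one doubtful nested span is enough to reject a sentence.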

HateDebias: On the Diversity and Variability of Hate Speech Debiasing

Jun 07, 2024

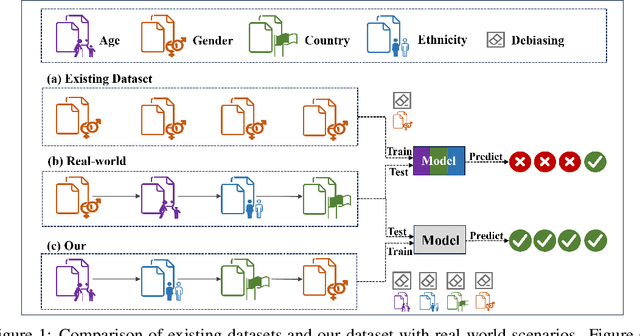

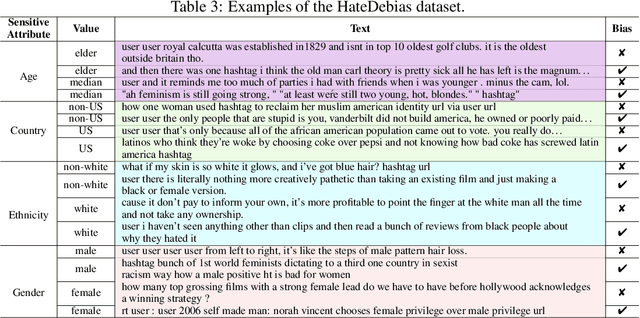

Abstract:Hate speech on social media is ubiquitous and urgently needs to be controlled. Without detecting and mitigating the biases brought by hate speech, various ethical problems can arise. While a number of datasets have been proposed to address hate speech detection, they seldom consider the diversity and variability of bias, leaving them far from real-world scenarios. To fill this gap, we propose a benchmark named HateDebias to analyze the hate speech detection ability of models under continuously changing environments. Specifically, to capture the diversity of biases, we collect existing hate speech detection datasets with different types of bias. To further capture variability (i.e., changes in the bias attributes of the datasets), we reorganize the datasets to follow a continual learning setting. We compare the detection accuracy of models trained on datasets with a single type of bias against their performance on HateDebias and observe a significant performance drop. To provide a potential direction for debiasing, we further propose a debiasing framework based on continual learning and bias information regularization, together with memory replay strategies that preserve the model's debiasing ability. Experimental results on the proposed benchmark show that this method improves several baselines by a clear margin, highlighting its effectiveness for real-world applications.
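Memory replay in continual learning keeps a small store of past examples and mixes them into later training batches so that earlier behavior is not forgotten. The abstract does not name the replay strategy used, so the sketch below shows one common option, a reservoir-sampling buffer that holds a uniform sample of everything seen so far. The class name `ReservoirReplayBuffer` and its parameters are hypothetical.

```python
import random
from typing import Generic, List, Optional, TypeVar

T = TypeVar("T")


class ReservoirReplayBuffer(Generic[T]):
    """Fixed-size memory holding a uniform random sample of all items seen so far."""

    def __init__(self, capacity: int, seed: Optional[int] = None) -> None:
        if capacity < 1:
            raise ValueError("capacity must be >= 1")
        self.capacity = capacity
        self.items: List[T] = []
        self.seen = 0
        self.rng = random.Random(seed)

    def add(self, item: T) -> None:
        self.seen += 1
        if len(self.items) < self.capacity:
            self.items.append(item)
        else:
            # Reservoir sampling: the new item replaces a stored one
            # with probability capacity / seen.
            j = self.rng.randrange(self.seen)
            if j < self.capacity:
                self.items[j] = item

    def sample(self, k: int) -> List[T]:
        """Draw up to k stored examples to mix into the current training batch."""
        return self.rng.sample(self.items, min(k, len(self.items)))


# Usage: stream examples from successive bias-specific tasks into the buffer.
buffer: ReservoirReplayBuffer[str] = ReservoirReplayBuffer(capacity=4, seed=0)
for task in ["task1", "task2", "task3"]:
    for n in range(10):
        buffer.add(f"{task}-example{n}")
print(buffer.sample(2))
```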

An interpretability framework for Similar case matching

Apr 04, 2023

Abstract:Similar Case Matching (SCM) aims to determine whether two cases are similar. The task plays an essential role in the legal system, helping legal professionals find relevant cases quickly and thus handle them more efficiently. Existing research has focused on improving model performance but not on interpretability. This paper therefore proposes a pipeline framework for interpretable SCM, consisting of four modules: a judicial feature sentence identification module, a case matching module, a feature sentence alignment module, and a conflict disambiguation module. Unlike existing SCM methods, our framework identifies the feature sentences in a case that contain essential information, performs similar case matching based on the extracted feature sentences, and aligns the feature sentences of the two cases to provide evidence for their similarity. Because SCM results may conflict with feature sentence alignment results, our framework further resolves such inconsistencies. The experimental results show the effectiveness of our framework, and our work provides a new benchmark for interpretable SCM.

A BERT-based Unsupervised Grammatical Error Correction Framework

Mar 30, 2023

Abstract:Grammatical error correction (GEC) is a challenging task in natural language processing. While many attempts have been made for widely used languages such as English and Chinese, relatively little work has been done for low-resource languages due to the lack of large annotated corpora. For low-resource languages, current unsupervised GEC based on language model scoring performs well; however, pre-trained language models remain underexplored in this context. This study proposes a BERT-based unsupervised GEC framework in which GEC is viewed as a multi-class classification task. The framework contains three modules: a data flow construction module, a sentence perplexity scoring module, and an error detection and correction module. We propose a novel pseudo-perplexity scoring method to evaluate a sentence's probable correctness, and we construct a Tagalog corpus for Tagalog GEC research. The framework obtains competitive performance on our Tagalog corpus and on an open-source Indonesian corpus, demonstrating that it is complementary to the baseline method for low-resource GEC.
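Pseudo-perplexity scores a sentence with a masked language model by masking each token in turn, taking the log-probability the model assigns to the original token, and exponentiating the mean negative log-likelihood: $\mathrm{PPPL}(W) = \exp\bigl(-\tfrac{1}{N}\sum_{i=1}^{N}\log P(w_i \mid W_{\setminus i})\bigr)$. The paper proposes its own novel scoring variant, which the abstract does not detail; the sketch below shows only the standard formulation. To stay self-contained it takes the masked scorer as a callable (a hypothetical `masked_log_prob`) instead of loading BERT.

```python
import math
from typing import Callable, Sequence

# masked_log_prob(tokens, i) must return log P(tokens[i] | tokens with position i masked),
# e.g. computed by a BERT-style masked language model (not included here).
MaskedScorer = Callable[[Sequence[str], int], float]


def pseudo_perplexity(tokens: Sequence[str], masked_log_prob: MaskedScorer) -> float:
    """Standard pseudo-perplexity: exp of the mean negative masked log-likelihood."""
    if not tokens:
        raise ValueError("tokens must be non-empty")
    total = sum(masked_log_prob(tokens, i) for i in range(len(tokens)))
    return math.exp(-total / len(tokens))


# Usage with a toy scorer that finds the misspelling "teh" less likely than other tokens.
def toy_scorer(tokens: Sequence[str], i: int) -> float:
    return math.log(0.25) if tokens[i] == "teh" else math.log(0.5)


print(pseudo_perplexity(["the", "cat"], toy_scorer))  # lower score: more plausible
print(pseudo_perplexity(["teh", "cat"], toy_scorer))  # higher score: likely contains an error
```

In an unsupervised correction setting, candidate rewrites of a sentence can be ranked by this score, with the lowest-scoring candidate taken as the most probable correction.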

Model and Evaluation: Towards Fairness in Multilingual Text Classification

Mar 28, 2023

Abstract:Recently, a growing body of research has focused on addressing bias in text classification models. However, existing work mainly targets the fairness of monolingual text classification models, and research on fairness in multilingual text classification remains very limited. In this paper, we focus on multilingual text classification and propose a debiasing framework for it based on contrastive learning. Our method does not rely on any external language resources and can be extended to other languages. The model contains four modules: a multilingual text representation module, a language fusion module, a text debiasing module, and a text classification module. The multilingual text representation module uses a multilingual pre-trained language model to represent the text; the language fusion module uses contrastive learning to make the semantic spaces of different languages consistent; and the text debiasing module uses contrastive learning to prevent the model from identifying sensitive-attribute information. The text classification module performs the underlying multilingual classification task. In addition, existing research on fairness in multilingual text classification uses a relatively simple evaluation scheme: fairness is measured with the same equality difference method as in the monolingual setting, that is, on a single language at a time. We propose a multi-dimensional fairness evaluation framework for multilingual text classification that evaluates the model's monolingual equality difference, multilingual equality difference, multilingual equality performance difference, and the destructiveness of the fairness strategy. We hope our work provides a more general debiasing method and a more comprehensive evaluation framework for multilingual text fairness tasks.
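Equality difference is commonly defined for binary classifiers as the summed gap between the overall error rate and each group's error rate: $\mathrm{FPED} = \sum_{g}\lvert \mathrm{FPR} - \mathrm{FPR}_g\rvert$ and $\mathrm{FNED} = \sum_{g}\lvert \mathrm{FNR} - \mathrm{FNR}_g\rvert$. The sketch below computes this common definition, assuming binary labels and one sensitive-attribute value per example. The abstract does not define its multilingual variants, so the function name and grouping choice here are assumptions; grouping by a sensitive attribute within one language corresponds to the monolingual case.

```python
from typing import Hashable, Sequence, Tuple


def _error_rates(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Return (false positive rate, false negative rate) for binary labels."""
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fpr = fp / (fp + tn) if fp + tn else 0.0
    fnr = fn / (fn + tp) if fn + tp else 0.0
    return fpr, fnr


def equality_differences(y_true: Sequence[int], y_pred: Sequence[int],
                         groups: Sequence[Hashable]) -> Tuple[float, float]:
    """Return (FPED, FNED): summed gaps between overall and per-group error rates."""
    overall_fpr, overall_fnr = _error_rates(y_true, y_pred)
    fped = fned = 0.0
    for g in set(groups):
        idx = [i for i, x in enumerate(groups) if x == g]
        fpr_g, fnr_g = _error_rates([y_true[i] for i in idx], [y_pred[i] for i in idx])
        fped += abs(overall_fpr - fpr_g)
        fned += abs(overall_fnr - fnr_g)
    return fped, fned


# Usage: the classifier is perfect on group "a" but makes errors on group "b".
labels = [0, 0, 1, 1, 0, 0, 1, 1]
preds = [0, 0, 1, 1, 1, 0, 0, 1]
attr = ["a"] * 4 + ["b"] * 4
print(equality_differences(labels, preds, attr))  # (0.5, 0.5)
```

A lower value means error rates are more evenly distributed across groups; zero means every group has the same error rates as the whole dataset.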
