Eunsuk Kang

Demonstrating ViSafe: Vision-enabled Safety for High-speed Detect and Avoid

May 08, 2025

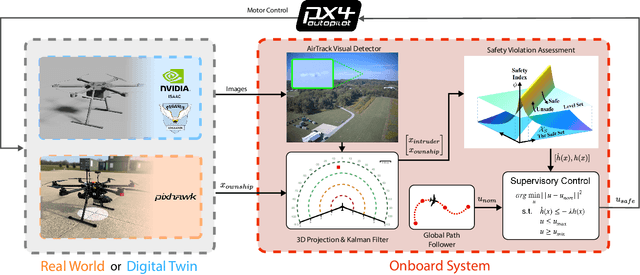

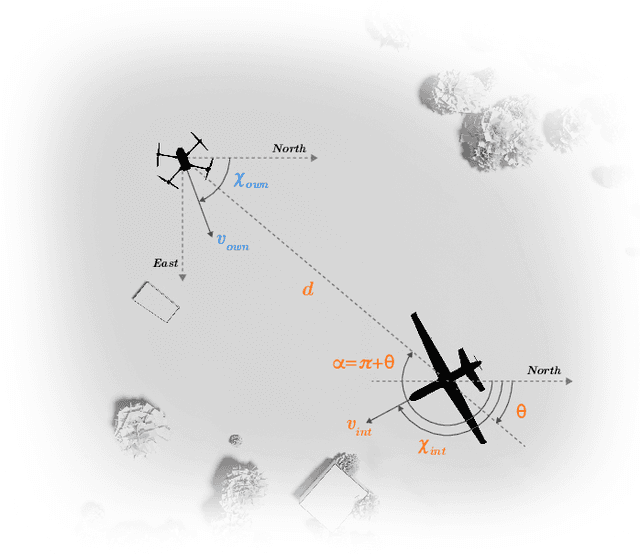

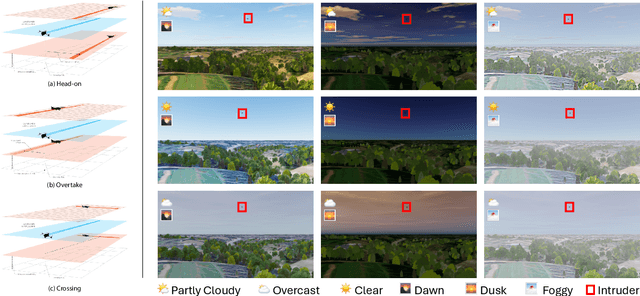

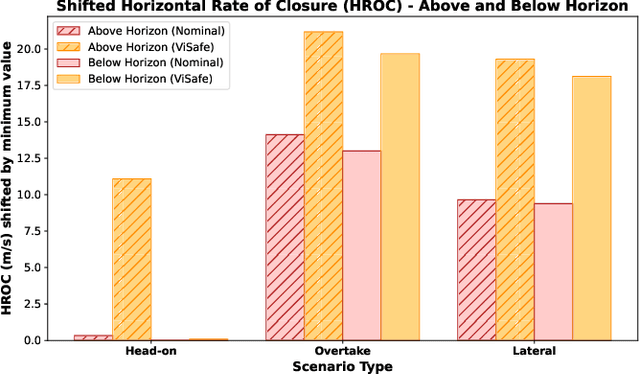

Abstract:Assured safe-separation is essential for achieving seamless high-density operation of airborne vehicles in a shared airspace. To equip resource-constrained aerial systems with this safety-critical capability, we present ViSafe, a high-speed vision-only airborne collision avoidance system. ViSafe offers a full-stack solution to the Detect and Avoid (DAA) problem by tightly integrating a learning-based edge-AI framework with a custom multi-camera hardware prototype designed under SWaP-C constraints. By leveraging perceptual input-focused control barrier functions (CBF) to design, encode, and enforce safety thresholds, ViSafe can provide provably safe runtime guarantees for self-separation in high-speed aerial operations. We evaluate ViSafe's performance through an extensive test campaign involving both simulated digital twins and real-world flight scenarios. By independently varying agent types, closure rates, interaction geometries, and environmental conditions (e.g., weather and lighting), we demonstrate that ViSafe consistently ensures self-separation across diverse scenarios. In first-of-its-kind real-world high-speed collision avoidance tests with closure rates reaching 144 km/h, ViSafe sets a new benchmark for vision-only autonomous collision avoidance, establishing a new standard for safety in high-speed aerial navigation.

Pretrained Embeddings as a Behavior Specification Mechanism

Mar 03, 2025Abstract:We propose an approach to formally specifying the behavioral properties of systems that rely on a perception model for interactions with the physical world. The key idea is to introduce embeddings -- mathematical representations of a real-world concept -- as a first-class construct in a specification language, where properties are expressed in terms of distances between a pair of ideal and observed embeddings. To realize this approach, we propose a new type of temporal logic called Embedding Temporal Logic (ETL), and describe how it can be used to express a wider range of properties about AI-enabled systems than previously possible. We demonstrate the applicability of ETL through a preliminary evaluation involving planning tasks in robots that are driven by foundation models; the results are promising, showing that embedding-based specifications can be used to steer a system towards desirable behaviors.

STLCG++: A Masking Approach for Differentiable Signal Temporal Logic Specification

Jan 08, 2025Abstract:Signal Temporal Logic (STL) offers a concise yet expressive framework for specifying and reasoning about spatio-temporal behaviors of robotic systems. Attractively, STL admits the notion of robustness, the degree to which an input signal satisfies or violates an STL specification, thus providing a nuanced evaluation of system performance. Notably, the differentiability of STL robustness enables direct integration to robotics workflows that rely on gradient-based optimization, such as trajectory optimization and deep learning. However, existing approaches to evaluating and differentiating STL robustness rely on recurrent computations, which become inefficient with longer sequences, limiting their use in time-sensitive applications. In this paper, we present STLCG++, a masking-based approach that parallelizes STL robustness evaluation and backpropagation across timesteps, achieving more than 1000x faster computation time than the recurrent approach. We also introduce a smoothing technique for differentiability through time interval bounds, expanding STL's applicability in gradient-based optimization tasks over spatial and temporal variables. Finally, we demonstrate STLCG++'s benefits through three robotics use cases and provide open-source Python libraries in JAX and PyTorch for seamless integration into modern robotics workflows.

FairSense: Long-Term Fairness Analysis of ML-Enabled Systems

Jan 03, 2025

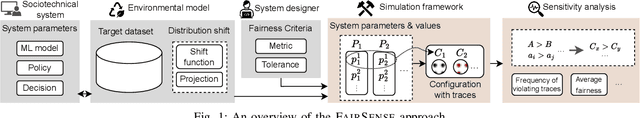

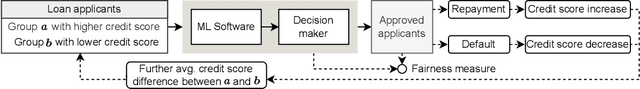

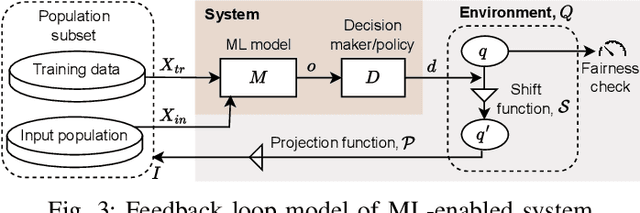

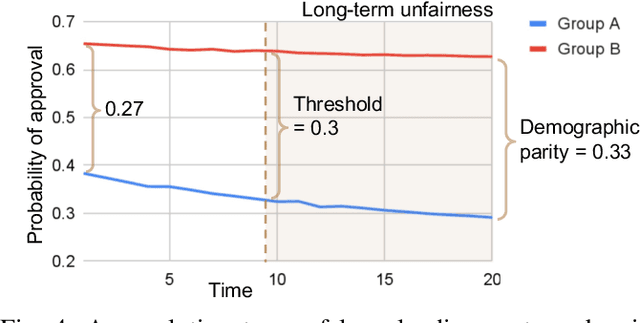

Abstract:Algorithmic fairness of machine learning (ML) models has raised significant concern in the recent years. Many testing, verification, and bias mitigation techniques have been proposed to identify and reduce fairness issues in ML models. The existing methods are model-centric and designed to detect fairness issues under static settings. However, many ML-enabled systems operate in a dynamic environment where the predictive decisions made by the system impact the environment, which in turn affects future decision-making. Such a self-reinforcing feedback loop can cause fairness violations in the long term, even if the immediate outcomes are fair. In this paper, we propose a simulation-based framework called FairSense to detect and analyze long-term unfairness in ML-enabled systems. Given a fairness requirement, FairSense performs Monte-Carlo simulation to enumerate evolution traces for each system configuration. Then, FairSense performs sensitivity analysis on the space of possible configurations to understand the impact of design options and environmental factors on the long-term fairness of the system. We demonstrate FairSense's potential utility through three real-world case studies: Loan lending, opioids risk scoring, and predictive policing.

Tolerance of Reinforcement Learning Controllers against Deviations in Cyber Physical Systems

Jun 24, 2024

Abstract:Cyber-physical systems (CPS) with reinforcement learning (RL)-based controllers are increasingly being deployed in complex physical environments such as autonomous vehicles, the Internet-of-Things(IoT), and smart cities. An important property of a CPS is tolerance; i.e., its ability to function safely under possible disturbances and uncertainties in the actual operation. In this paper, we introduce a new, expressive notion of tolerance that describes how well a controller is capable of satisfying a desired system requirement, specified using Signal Temporal Logic (STL), under possible deviations in the system. Based on this definition, we propose a novel analysis problem, called the tolerance falsification problem, which involves finding small deviations that result in a violation of the given requirement. We present a novel, two-layer simulation-based analysis framework and a novel search heuristic for finding small tolerance violations. To evaluate our approach, we construct a set of benchmark problems where system parameters can be configured to represent different types of uncertainties and disturbancesin the system. Our evaluation shows that our falsification approach and heuristic can effectively find small tolerance violations.

Safe Planning through Incremental Decomposition of Signal Temporal Logic Specifications

Mar 19, 2024Abstract:Trajectory planning is a critical process that enables autonomous systems to safely navigate complex environments. Signal temporal logic (STL) specifications are an effective way to encode complex temporally extended objectives for trajectory planning in cyber-physical systems (CPS). However, planning from these specifications using existing techniques scale exponentially with the number of nested operators and the horizon of specification. Additionally, performance is exacerbated at runtime due to limited computational budgets and compounding modeling errors. Decomposing a complex specification into smaller subtasks and incrementally planning for them can remedy these issues. In this work, we present a way to decompose STL requirements temporally to improve planning efficiency and performance. The key insight in our work is to encode all specifications as a set of reachability and invariance constraints and scheduling these constraints sequentially at runtime. Our proposed technique outperforms the state-of-the-art trajectory synthesis techniques for both linear and non linear dynamical systems.

Feature Interactions on Steroids: On the Composition of ML Models

May 13, 2021

Abstract:The lack of specifications is a key difference between traditional software engineering and machine learning. We discuss how it drastically impacts how we think about divide-and-conquer approaches to system design, and how it impacts reuse, testing and debugging activities. Traditionally, specifications provide a cornerstone for compositional reasoning and for the divide-and-conquer strategy of how we build large and complex systems from components, but those are hard to come by for machine-learned components. While the lack of specification seems like a fundamental new problem at first sight, in fact software engineers routinely deal with iffy specifications in practice: we face weak specifications, wrong specifications, and unanticipated interactions among components and their specifications. Machine learning may push us further, but the problems are not fundamentally new. Rethinking machine-learning model composition from the perspective of the feature interaction problem, we may even teach us a thing or two on how to move forward, including the importance of integration testing, of requirements engineering, and of design.

Runtime-Safety-Guided Policy Repair

Aug 17, 2020

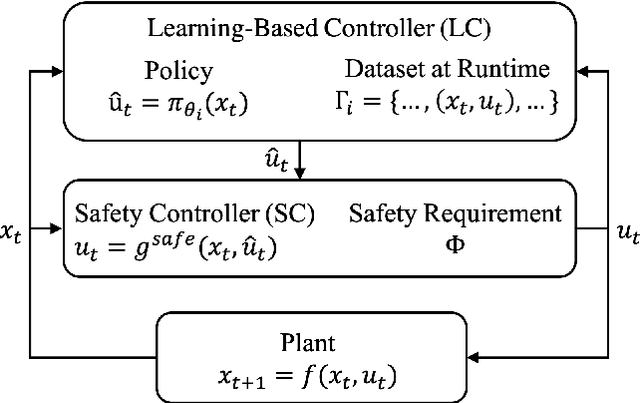

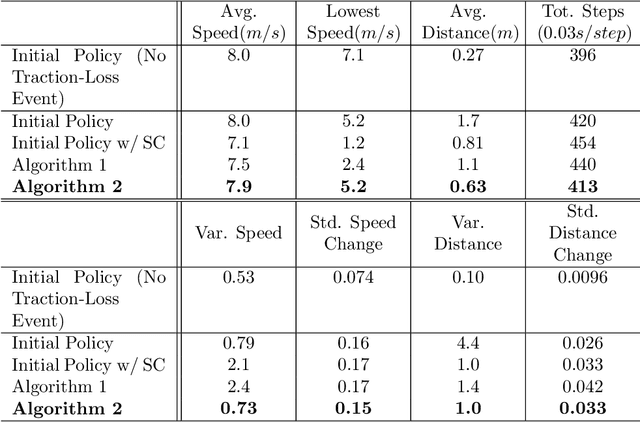

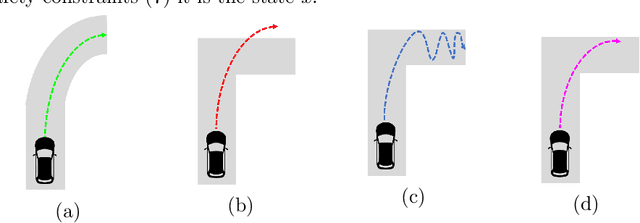

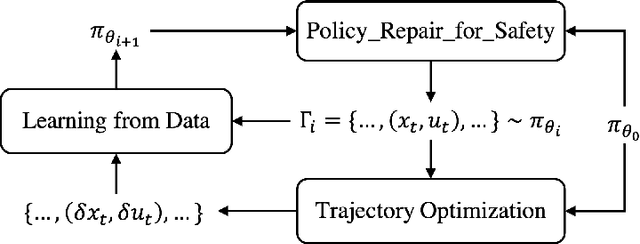

Abstract:We study the problem of policy repair for learning-based control policies in safety-critical settings. We consider an architecture where a high-performance learning-based control policy (e.g. one trained as a neural network) is paired with a model-based safety controller. The safety controller is endowed with the abilities to predict whether the trained policy will lead the system to an unsafe state, and take over control when necessary. While this architecture can provide added safety assurances, intermittent and frequent switching between the trained policy and the safety controller can result in undesirable behaviors and reduced performance. We propose to reduce or even eliminate control switching by `repairing' the trained policy based on runtime data produced by the safety controller in a way that deviates minimally from the original policy. The key idea behind our approach is the formulation of a trajectory optimization problem that allows the joint reasoning of policy update and safety constraints. Experimental results demonstrate that our approach is effective even when the system model in the safety controller is unknown and only approximated.

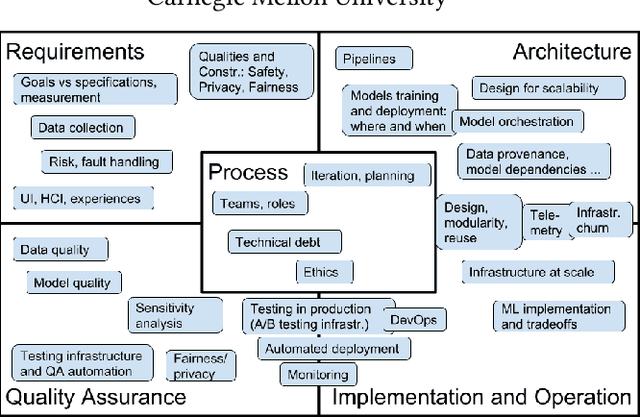

Teaching Software Engineering for AI-Enabled Systems

Jan 18, 2020

Abstract:Software engineers have significant expertise to offer when building intelligent systems, drawing on decades of experience and methods for building systems that are scalable, responsive and robust, even when built on unreliable components. Systems with artificial-intelligence or machine-learning (ML) components raise new challenges and require careful engineering. We designed a new course to teach software-engineering skills to students with a background in ML. We specifically go beyond traditional ML courses that teach modeling techniques under artificial conditions and focus, in lecture and assignments, on realism with large and changing datasets, robust and evolvable infrastructure, and purposeful requirements engineering that considers ethics and fairness as well. We describe the course and our infrastructure and share experience and all material from teaching the course for the first time.

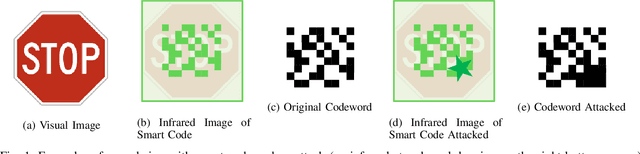

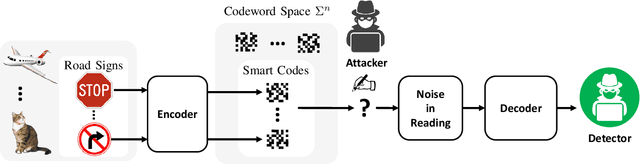

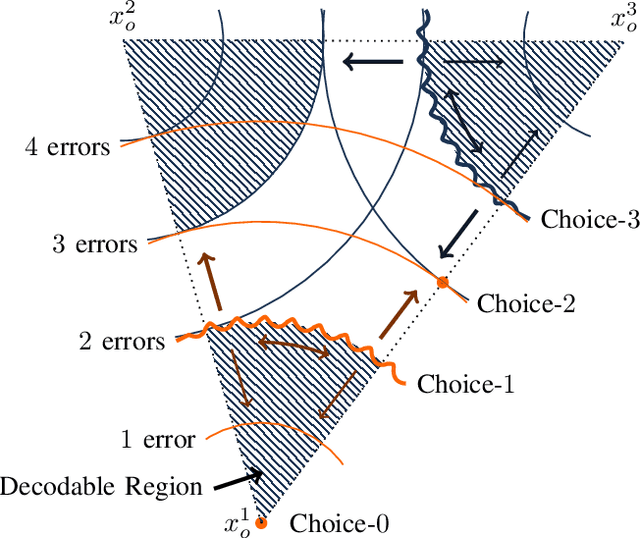

A Game Theoretical Error-Correction Framework for Secure Traffic-Sign Classification

Jan 30, 2019

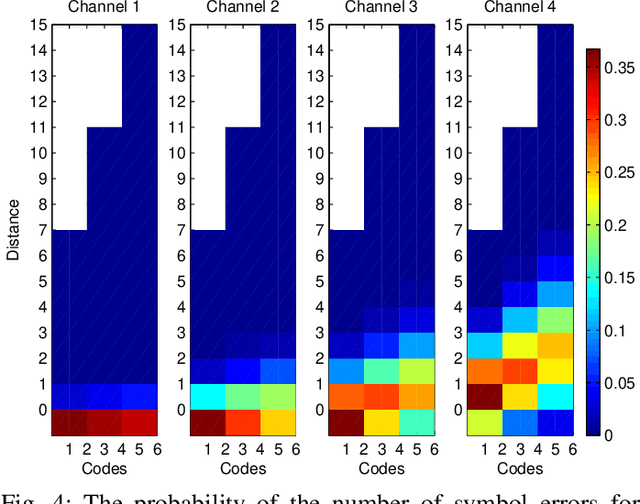

Abstract:We introduce a game theoretical error-correction framework to design classification algorithms that are reliable even in adversarial environments, with a specific focus on traffic-sign classification. Machine learning algorithms possess inherent vulnerabilities against maliciously crafted inputs especially at high dimensional input spaces. We seek to achieve reliable and timely performance in classification by redesigning the input space physically to significantly lower dimensions. Traffic-sign classification is an important use-case enabling the redesign of the inputs since traffic-signs have already been designed for their easy recognition by human drivers. We encode the original input samples to, e.g., strings of bits, through error-correction methods that can provide certain distance guarantees in-between any two different encoded inputs. And we model the interaction between the defense and the adversary as a game. Then, we analyze the underlying game using the concept of hierarchical equilibrium, where the defense strategies are designed by taking into account the best possible attack against them. At large scale, for computational simplicity, we provide an approximate solution, where we transform the problem into an efficient linear program with substantially small size compared to the original size of the entire input space. Finally, we examine the performance of the proposed scheme over different traffic-sign classification scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge