Ivan Cisneros

Demonstrating ViSafe: Vision-enabled Safety for High-speed Detect and Avoid

May 08, 2025

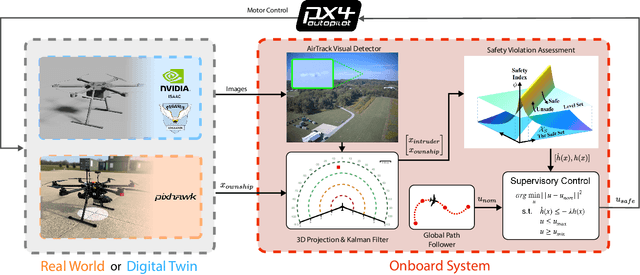

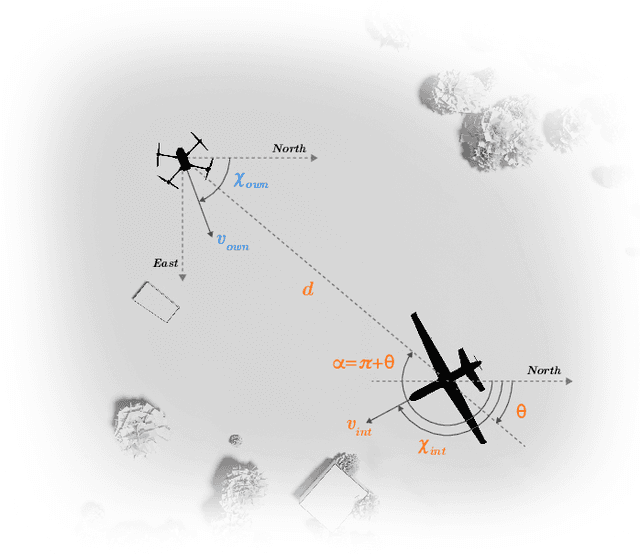

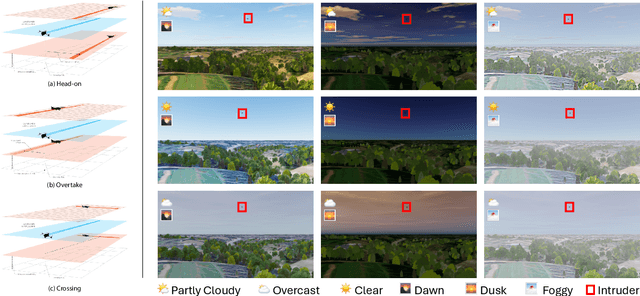

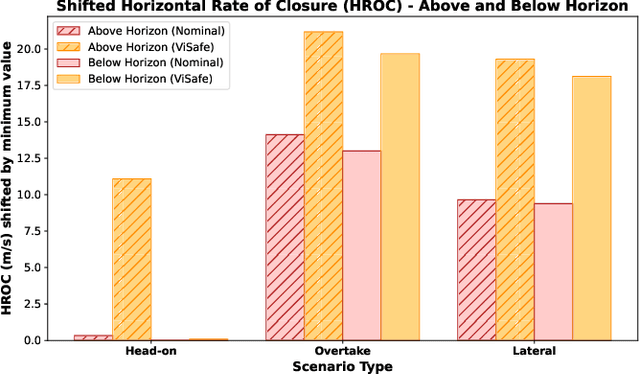

Abstract:Assured safe-separation is essential for achieving seamless high-density operation of airborne vehicles in a shared airspace. To equip resource-constrained aerial systems with this safety-critical capability, we present ViSafe, a high-speed vision-only airborne collision avoidance system. ViSafe offers a full-stack solution to the Detect and Avoid (DAA) problem by tightly integrating a learning-based edge-AI framework with a custom multi-camera hardware prototype designed under SWaP-C constraints. By leveraging perceptual input-focused control barrier functions (CBF) to design, encode, and enforce safety thresholds, ViSafe can provide provably safe runtime guarantees for self-separation in high-speed aerial operations. We evaluate ViSafe's performance through an extensive test campaign involving both simulated digital twins and real-world flight scenarios. By independently varying agent types, closure rates, interaction geometries, and environmental conditions (e.g., weather and lighting), we demonstrate that ViSafe consistently ensures self-separation across diverse scenarios. In first-of-its-kind real-world high-speed collision avoidance tests with closure rates reaching 144 km/h, ViSafe sets a new benchmark for vision-only autonomous collision avoidance, establishing a new standard for safety in high-speed aerial navigation.

FoundLoc: Vision-based Onboard Aerial Localization in the Wild

Oct 25, 2023

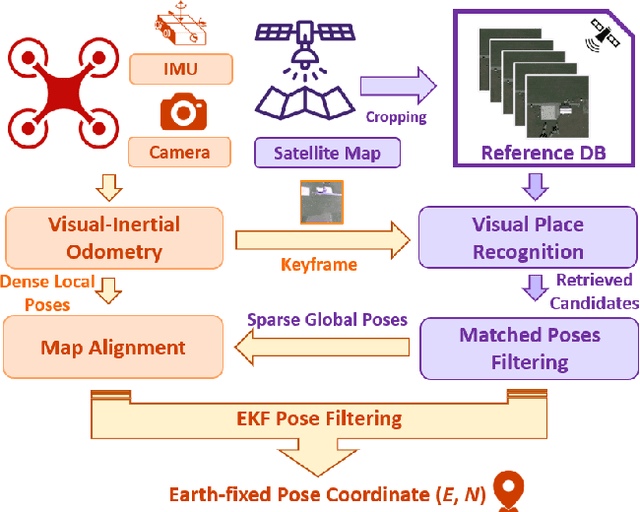

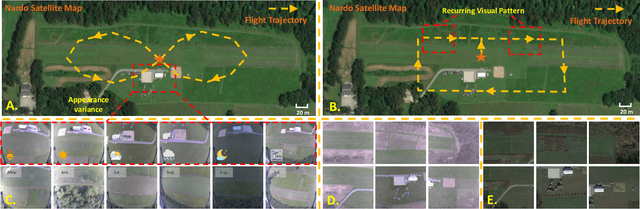

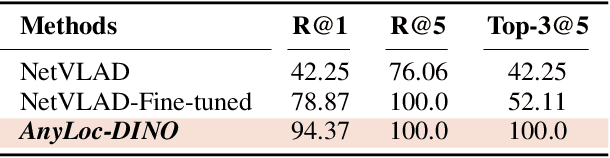

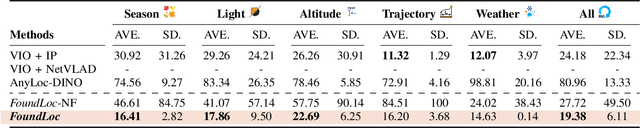

Abstract:Robust and accurate localization for Unmanned Aerial Vehicles (UAVs) is an essential capability to achieve autonomous, long-range flights. Current methods either rely heavily on GNSS, face limitations in visual-based localization due to appearance variances and stylistic dissimilarities between camera and reference imagery, or operate under the assumption of a known initial pose. In this paper, we developed a GNSS-denied localization approach for UAVs that harnesses both Visual-Inertial Odometry (VIO) and Visual Place Recognition (VPR) using a foundation model. This paper presents a novel vision-based pipeline that works exclusively with a nadir-facing camera, an Inertial Measurement Unit (IMU), and pre-existing satellite imagery for robust, accurate localization in varied environments and conditions. Our system demonstrated average localization accuracy within a $20$-meter range, with a minimum error below $1$ meter, under real-world conditions marked by drastic changes in environmental appearance and with no assumption of the vehicle's initial pose. The method is proven to be effective and robust, addressing the crucial need for reliable UAV localization in GNSS-denied environments, while also being computationally efficient enough to be deployed on resource-constrained platforms.

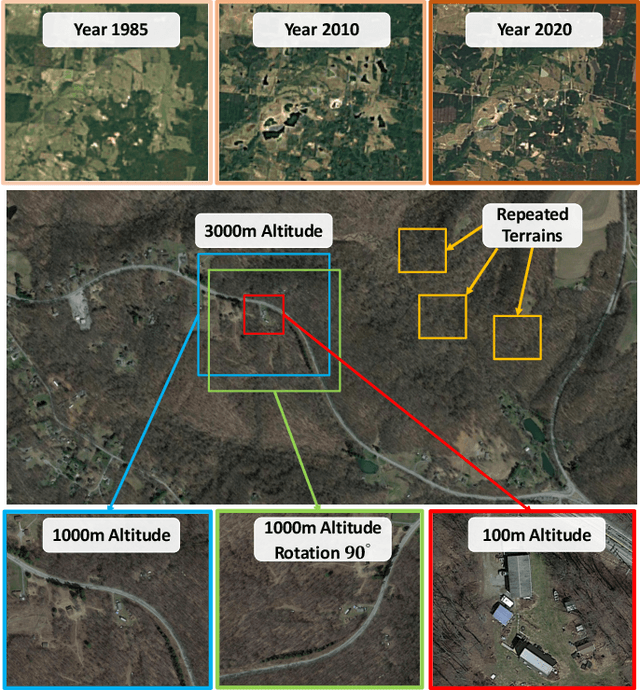

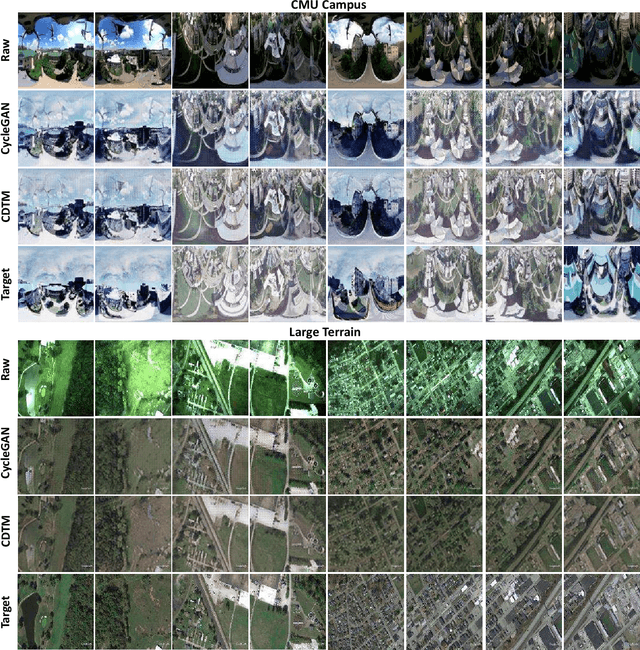

iSimLoc: Visual Global Localization for Previously Unseen Environments with Simulated Images

Sep 14, 2022

Abstract:The visual camera is an attractive device in beyond visual line of sight (B-VLOS) drone operation, since they are low in size, weight, power, and cost, and can provide redundant modality to GPS failures. However, state-of-the-art visual localization algorithms are unable to match visual data that have a significantly different appearance due to illuminations or viewpoints. This paper presents iSimLoc, a condition/viewpoint consistent hierarchical global re-localization approach. The place features of iSimLoc can be utilized to search target images under changing appearances and viewpoints. Additionally, our hierarchical global re-localization module refines in a coarse-to-fine manner, allowing iSimLoc to perform a fast and accurate estimation. We evaluate our method on one dataset with appearance variations and one dataset that focuses on demonstrating large-scale matching over a long flight in complicated environments. On our two datasets, iSimLoc achieves 88.7\% and 83.8\% successful retrieval rates with 1.5s inferencing time, compared to 45.8% and 39.7% using the next best method. These results demonstrate robust localization in a range of environments.

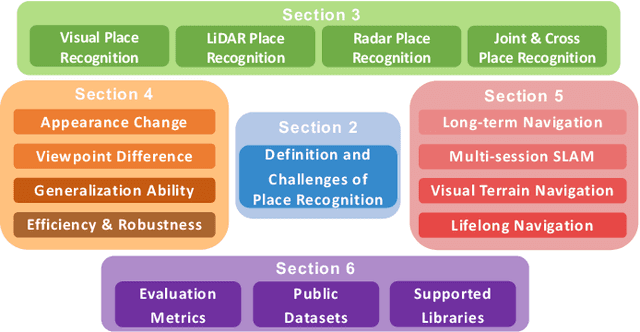

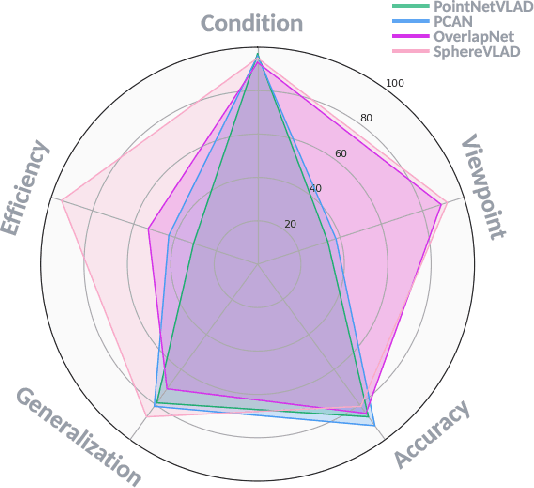

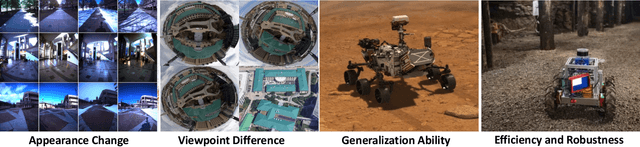

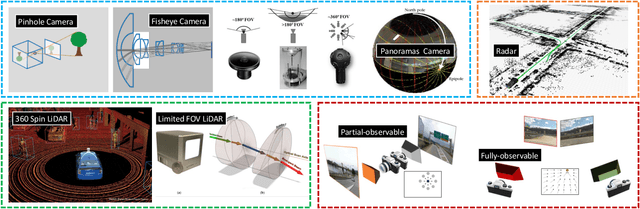

General Place Recognition Survey: Towards the Real-world Autonomy Age

Sep 09, 2022

Abstract:Place recognition is the fundamental module that can assist Simultaneous Localization and Mapping (SLAM) in loop-closure detection and re-localization for long-term navigation. The place recognition community has made astonishing progress over the last $20$ years, and this has attracted widespread research interest and application in multiple fields such as computer vision and robotics. However, few methods have shown promising place recognition performance in complex real-world scenarios, where long-term and large-scale appearance changes usually result in failures. Additionally, there is a lack of an integrated framework amongst the state-of-the-art methods that can handle all of the challenges in place recognition, which include appearance changes, viewpoint differences, robustness to unknown areas, and efficiency in real-world applications. In this work, we survey the state-of-the-art methods that target long-term localization and discuss future directions and opportunities. We start by investigating the formulation of place recognition in long-term autonomy and the major challenges in real-world environments. We then review the recent works in place recognition for different sensor modalities and current strategies for dealing with various place recognition challenges. Finally, we review the existing datasets for long-term localization and introduce our datasets and evaluation API for different approaches. This paper can be a tutorial for researchers new to the place recognition community and those who care about long-term robotics autonomy. We also provide our opinion on the frequently asked question in robotics: Do robots need accurate localization for long-term autonomy? A summary of this work and our datasets and evaluation API is publicly available to the robotics community at: https://github.com/MetaSLAM/GPRS.

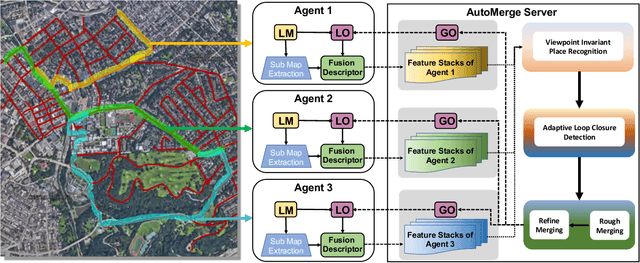

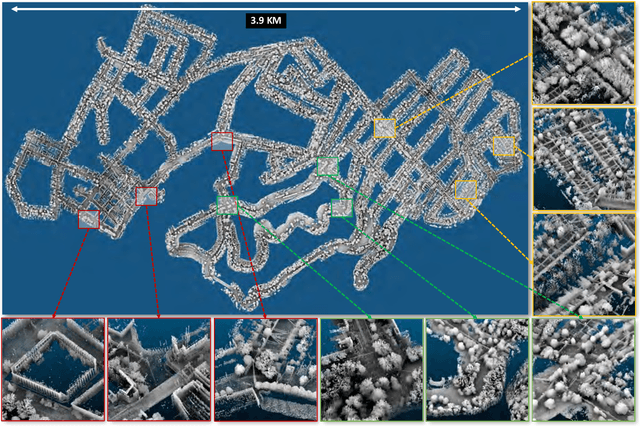

AutoMerge: A Framework for Map Assembling and Smoothing in City-scale Environments

Jul 14, 2022

Abstract:We present AutoMerge, a LiDAR data processing framework for assembling a large number of map segments into a complete map. Traditional large-scale map merging methods are fragile to incorrect data associations, and are primarily limited to working only offline. AutoMerge utilizes multi-perspective fusion and adaptive loop closure detection for accurate data associations, and it uses incremental merging to assemble large maps from individual trajectory segments given in random order and with no initial estimations. Furthermore, after assembling the segments, AutoMerge performs fine matching and pose-graph optimization to globally smooth the merged map. We demonstrate AutoMerge on both city-scale merging (120km) and campus-scale repeated merging (4.5km x 8). The experiments show that AutoMerge (i) surpasses the second- and third- best methods by 14% and 24% recall in segment retrieval, (ii) achieves comparable 3D mapping accuracy for 120 km large-scale map assembly, (iii) and it is robust to temporally-spaced revisits. To the best of our knowledge, AutoMerge is the first mapping approach that can merge hundreds of kilometers of individual segments without the aid of GPS.

ALITA: A Large-scale Incremental Dataset for Long-term Autonomy

May 22, 2022

Abstract:For long-term autonomy, most place recognition methods are mainly evaluated on simplified scenarios or simulated datasets, which cannot provide solid evidence to evaluate the readiness for current Simultaneous Localization and Mapping (SLAM). In this paper, we present a long-term place recognition dataset for use in mobile localization under large-scale dynamic environments. This dataset includes a campus-scale track and a city-scale track: 1) the campus-track focuses the long-term property, we record LiDAR device and an omnidirectional camera on 10 trajectories, and each trajectory are repeatly recorded 8 times under variant illumination conditions. 2) the city-track focuses the large-scale property, we mount the LiDAR device on the vehicle and traversing through a 120km trajectories, which contains open streets, residential areas, natural terrains, etc. They includes 200 hours of raw data of all kinds scenarios within urban environments. The ground truth position for both tracks are provided on each trajectory, which is obtained from the Global Position System with an additional General ICP based point cloud refinement. To simplify the evaluation procedure, we also provide the Python-API with a set of place recognition metrics is proposed to quickly load our dataset and evaluate the recognition performance against different methods. This dataset targets at finding methods with high place recognition accuracy and robustness, and providing real robotic system with long-term autonomy. The dataset and the provided tools can be accessed from https://github.com/MetaSLAM/ALITA.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge