Billy Pik Lik Lau

MEF-Explore: Communication-Constrained Multi-Robot Entropy-Field-Based Exploration

May 29, 2025Abstract:Collaborative multiple robots for unknown environment exploration have become mainstream due to their remarkable performance and efficiency. However, most existing methods assume perfect robots' communication during exploration, which is unattainable in real-world settings. Though there have been recent works aiming to tackle communication-constrained situations, substantial room for advancement remains for both information-sharing and exploration strategy aspects. In this paper, we propose a Communication-Constrained Multi-Robot Entropy-Field-Based Exploration (MEF-Explore). The first module of the proposed method is the two-layer inter-robot communication-aware information-sharing strategy. A dynamic graph is used to represent a multi-robot network and to determine communication based on whether it is low-speed or high-speed. Specifically, low-speed communication, which is always accessible between every robot, can only be used to share their current positions. If robots are within a certain range, high-speed communication will be available for inter-robot map merging. The second module is the entropy-field-based exploration strategy. Particularly, robots explore the unknown area distributedly according to the novel forms constructed to evaluate the entropies of frontiers and robots. These entropies can also trigger implicit robot rendezvous to enhance inter-robot map merging if feasible. In addition, we include the duration-adaptive goal-assigning module to manage robots' goal assignment. The simulation results demonstrate that our MEF-Explore surpasses the existing ones regarding exploration time and success rate in all scenarios. For real-world experiments, our method leads to a 21.32% faster exploration time and a 16.67% higher success rate compared to the baseline.

A Scalable Decentralized Reinforcement Learning Framework for UAV Target Localization Using Recurrent PPO

Dec 09, 2024

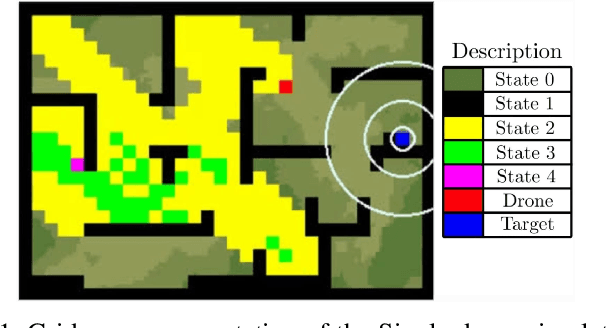

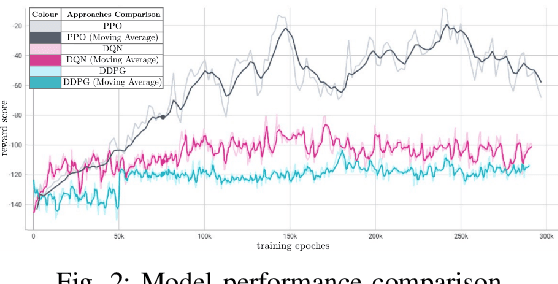

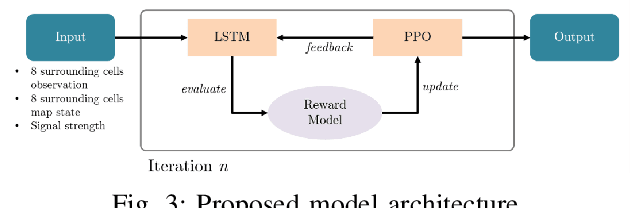

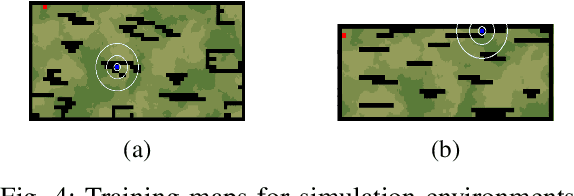

Abstract:The rapid advancements in unmanned aerial vehicles (UAVs) have unlocked numerous applications, including environmental monitoring, disaster response, and agricultural surveying. Enhancing the collective behavior of multiple decentralized UAVs can significantly improve these applications through more efficient and coordinated operations. In this study, we explore a Recurrent PPO model for target localization in perceptually degraded environments like places without GNSS/GPS signals. We first developed a single-drone approach for target identification, followed by a decentralized two-drone model. Our approach can utilize two types of sensors on the UAVs, a detection sensor and a target signal sensor. The single-drone model achieved an accuracy of 93%, while the two-drone model achieved an accuracy of 86%, with the latter requiring fewer average steps to locate the target. This demonstrates the potential of our method in UAV swarms, offering efficient and effective localization of radiant targets in complex environmental conditions.

Distributed multi-robot potential-field-based exploration with submap-based mapping and noise-augmented strategy

Jul 10, 2024

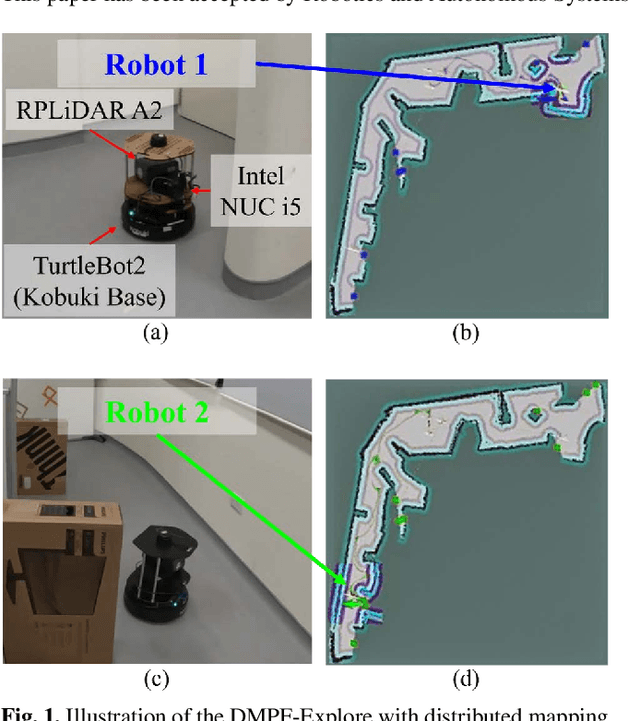

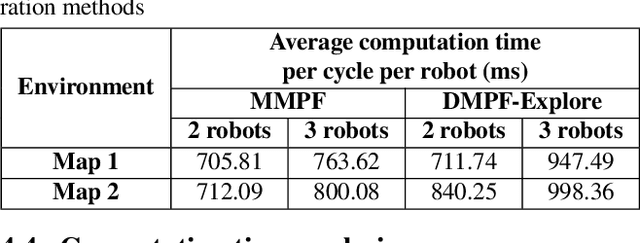

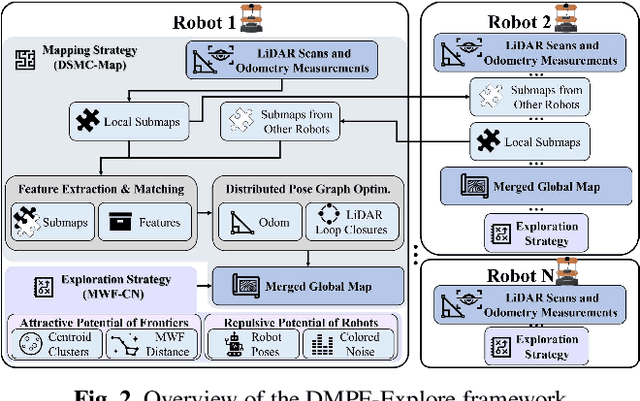

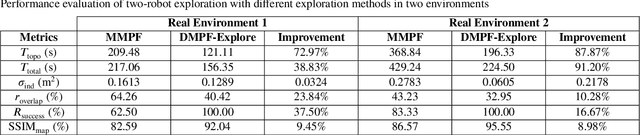

Abstract:Multi-robot collaboration has become a needed component in unknown environment exploration due to its ability to accomplish various challenging situations. Potential-field-based methods are widely used for autonomous exploration because of their high efficiency and low travel cost. However, exploration speed and collaboration ability are still challenging topics. Therefore, we propose a Distributed Multi-Robot Potential-Field-Based Exploration (DMPF-Explore). In particular, we first present a Distributed Submap-Based Multi-Robot Collaborative Mapping Method (DSMC-Map), which can efficiently estimate the robot trajectories and construct the global map by merging the local maps from each robot. Second, we introduce a Potential-Field-Based Exploration Strategy Augmented with Modified Wave-Front Distance and Colored Noises (MWF-CN), in which the accessible frontier neighborhood is extended, and the colored noise provokes the enhancement of exploration performance. The proposed exploration method is deployed for simulation and real-world scenarios. The results show that our approach outperforms the existing ones regarding exploration speed and collaboration ability.

GMC-Pos: Graph-Based Multi-Robot Coverage Positioning Method

Oct 18, 2023

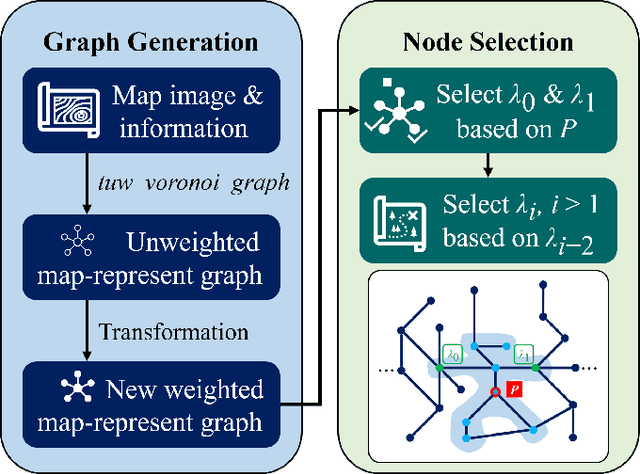

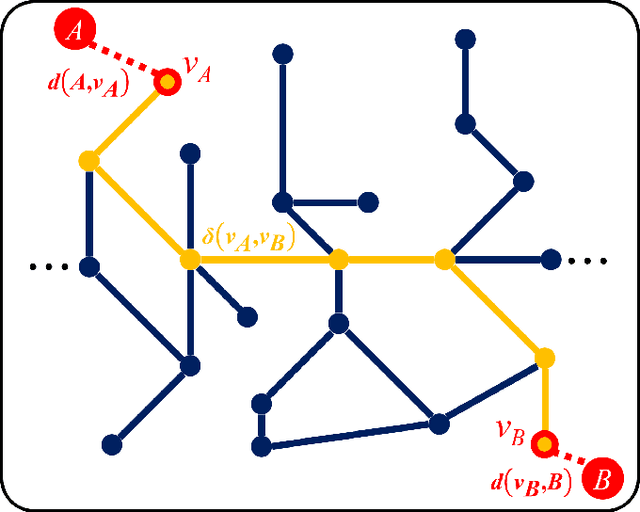

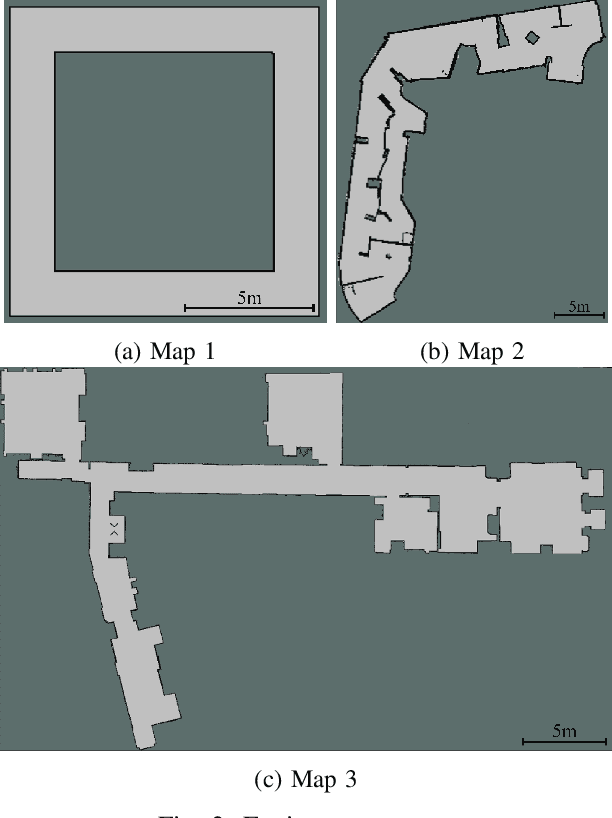

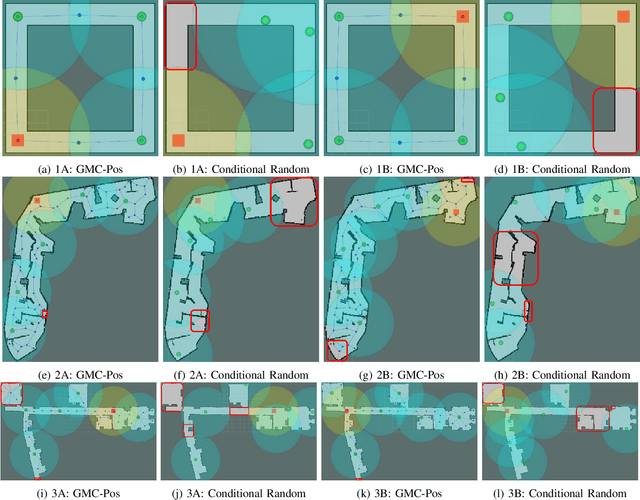

Abstract:Nowadays, several real-world tasks require adequate environment coverage for maintaining communication between multiple robots, for example, target search tasks, environmental monitoring, and post-disaster rescues. In this study, we look into a situation where there are a human operator and multiple robots, and we assume that each human or robot covers a certain range of areas. We want them to maximize their area of coverage collectively. Therefore, in this paper, we propose the Graph-Based Multi-Robot Coverage Positioning Method (GMC-Pos) to find strategic positions for robots that maximize the area coverage. Our novel approach consists of two main modules: graph generation and node selection. Firstly, graph generation represents the environment using a weighted connected graph. Then, we present a novel generalized graph-based distance and utilize it together with the graph degrees to be the conditions for node selection in a recursive manner. Our method is deployed in three environments with different settings. The results show that it outperforms the benchmark method by 15.13% to 24.88% regarding the area coverage percentage.

Moving Object Localization based on the Fusion of Ultra-WideBand and LiDAR with a Mobile Robot

Oct 16, 2023

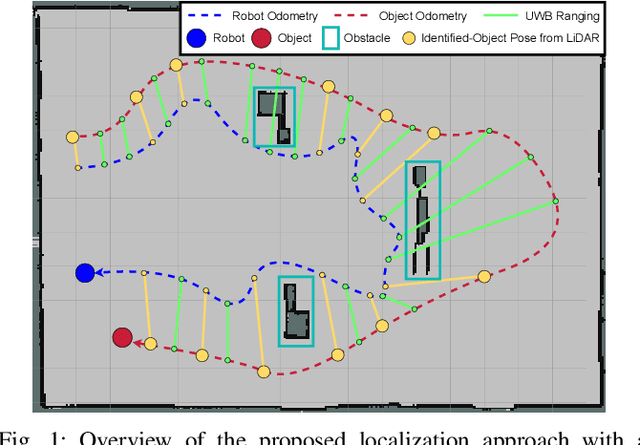

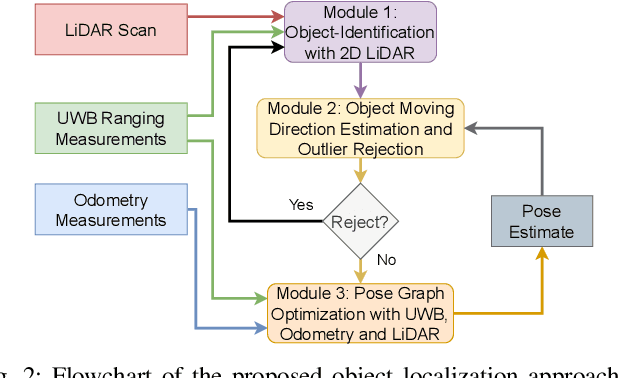

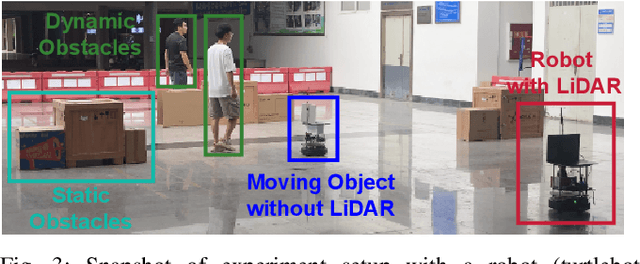

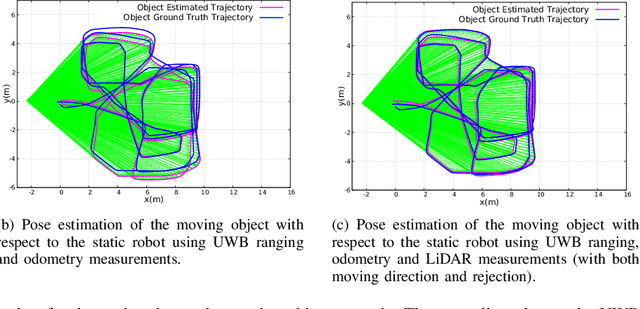

Abstract:Localization of objects is vital for robot-object interaction. Light Detection and Ranging (LiDAR) application in robotics is an emerging and widely used object localization technique due to its accurate distance measurement, long-range, wide field of view, and robustness in different conditions. However, LiDAR is unable to identify the objects when they are obstructed by obstacles, resulting in inaccuracy and noise in localization. To address this issue, we present an approach incorporating LiDAR and Ultra-Wideband (UWB) ranging for object localization. The UWB is popular in sensor fusion localization algorithms due to its low weight and low power consumption. In addition, the UWB is able to return ranging measurements even when the object is not within line-of-sight. Our approach provides an efficient solution to combine an anonymous optical sensor (LiDAR) with an identity-based radio sensor (UWB) to improve the localization accuracy of the object. Our approach consists of three modules. The first module is an object-identification algorithm that compares successive scans from the LiDAR to detect a moving object in the environment and returns the position with the closest range to UWB ranging. The second module estimates the moving object's moving direction using the previous and current estimated position from our object-identification module. It removes the suspicious estimations through an outlier rejection criterion. Lastly, we fuse the LiDAR, UWB ranging, and odometry measurements in pose graph optimization (PGO) to recover the entire trajectory of the robot and object. Extensive experiments were performed to evaluate the performance of the proposed approach.

Towards Ubiquitous Semantic Metaverse: Challenges, Approaches, and Opportunities

Aug 05, 2023Abstract:In recent years, ubiquitous semantic Metaverse has been studied to revolutionize immersive cyber-virtual experiences for augmented reality (AR) and virtual reality (VR) users, which leverages advanced semantic understanding and representation to enable seamless, context-aware interactions within mixed-reality environments. This survey focuses on the intelligence and spatio-temporal characteristics of four fundamental system components in ubiquitous semantic Metaverse, i.e., artificial intelligence (AI), spatio-temporal data representation (STDR), semantic Internet of Things (SIoT), and semantic-enhanced digital twin (SDT). We thoroughly survey the representative techniques of the four fundamental system components that enable intelligent, personalized, and context-aware interactions with typical use cases of the ubiquitous semantic Metaverse, such as remote education, work and collaboration, entertainment and socialization, healthcare, and e-commerce marketing. Furthermore, we outline the opportunities for constructing the future ubiquitous semantic Metaverse, including scalability and interoperability, privacy and security, performance measurement and standardization, as well as ethical considerations and responsible AI. Addressing those challenges is important for creating a robust, secure, and ethically sound system environment that offers engaging immersive experiences for the users and AR/VR applications.

Exploiting Radio Fingerprints for Simultaneous Localization and Mapping

May 23, 2023Abstract:Simultaneous localization and mapping (SLAM) is paramount for unmanned systems to achieve self-localization and navigation. It is challenging to perform SLAM in large environments, due to sensor limitations, complexity of the environment, and computational resources. We propose a novel approach for localization and mapping of autonomous vehicles using radio fingerprints, for example WiFi (Wireless Fidelity) or LTE (Long Term Evolution) radio features, which are widely available in the existing infrastructure. In particular, we present two solutions to exploit the radio fingerprints for SLAM. In the first solution-namely Radio SLAM, the output is a radio fingerprint map generated using SLAM technique. In the second solution-namely Radio+LiDAR SLAM, we use radio fingerprint to assist conventional LiDAR-based SLAM to improve accuracy and speed, while generating the occupancy map. We demonstrate the effectiveness of our system in three different environments, namely outdoor, indoor building, and semi-indoor environment.

Clustering and Analysis of GPS Trajectory Data using Distance-based Features

Dec 01, 2022

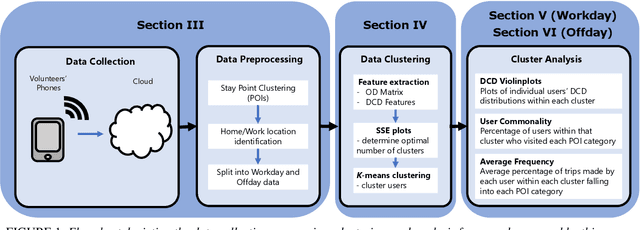

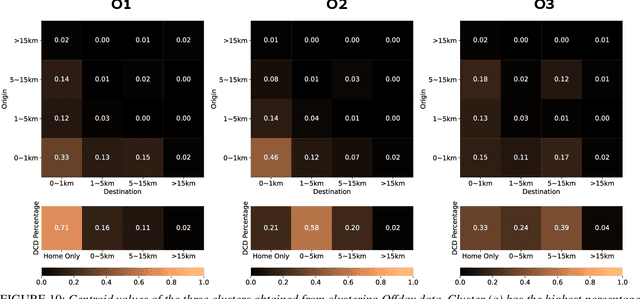

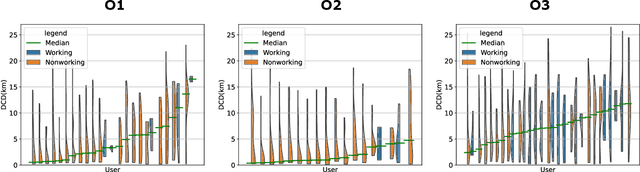

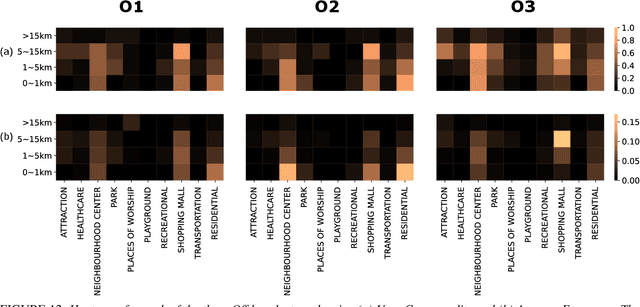

Abstract:The proliferation of smartphones has accelerated mobility studies by largely increasing the type and volume of mobility data available. One such source of mobility data is from GPS technology, which is becoming increasingly common and helps the research community understand mobility patterns of people. However, there lacks a standardized framework for studying the different mobility patterns created by the non-Work, non-Home locations of Working and Nonworking users on Workdays and Offdays using machine learning methods. We propose a new mobility metric, Daily Characteristic Distance, and use it to generate features for each user together with Origin-Destination matrix features. We then use those features with an unsupervised machine learning method, $k$-means clustering, and obtain three clusters of users for each type of day (Workday and Offday). Finally, we propose two new metrics for the analysis of the clustering results, namely User Commonality and Average Frequency. By using the proposed metrics, interesting user behaviors can be discerned and it helps us to better understand the mobility patterns of the users.

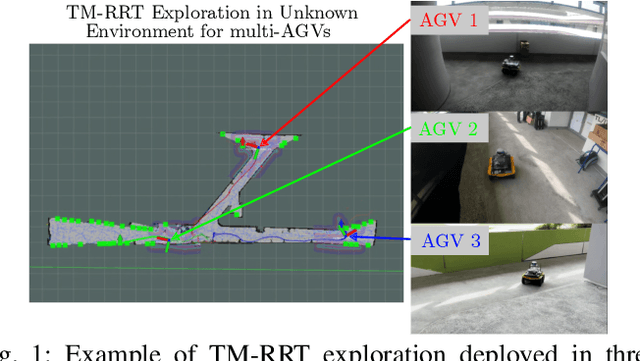

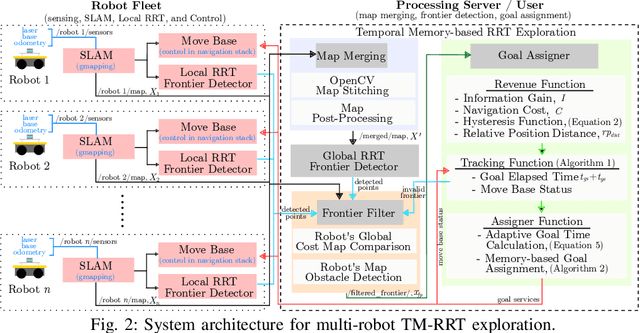

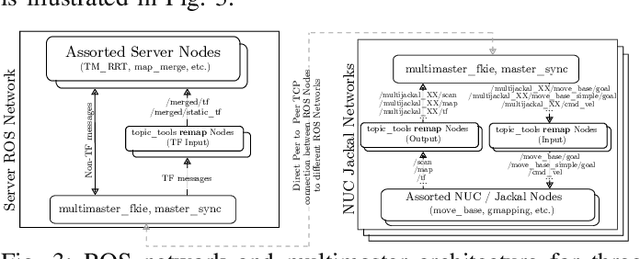

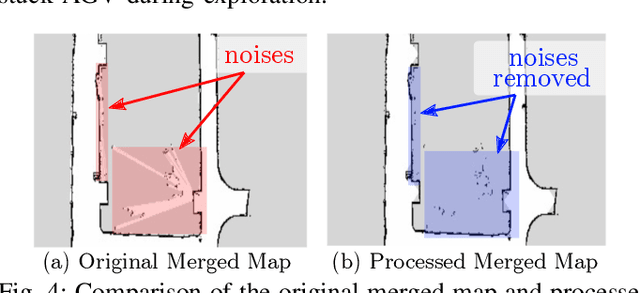

Multi-AGV's Temporal Memory-based RRT Exploration in Unknown Environment

Jul 15, 2022

Abstract:With the increasing need for multi-robot for exploring the unknown region in a challenging environment, efficient collaborative exploration strategies are needed for achieving such feat. A frontier-based Rapidly-Exploring Random Tree (RRT) exploration can be deployed to explore an unknown environment. However, its' greedy behavior causes multiple robots to explore the region with the highest revenue, which leads to massive overlapping in exploration process. To address this issue, we present a temporal memory-based RRT (TM-RRT) exploration strategy for multi-robot to perform robust exploration in an unknown environment. It computes adaptive duration for each frontier assigned and calculates the frontier's revenue based on the relative position of each robot. In addition, each robot is equipped with a memory consisting of frontier assigned and share among fleets to prevent repeating assignment of same frontier. Through both simulation and actual deployment, we have shown the robustness of TM-RRT exploration strategy by completing the exploration in a 25.0m x 54.0m (1350.0m2) area, while the conventional RRT exploration strategy falls short.

* 8 pages, 10 Figures

Distributed Ranging SLAM for Multiple Robots with Ultra-WideBand and Odometry Measurements

Jul 08, 2022

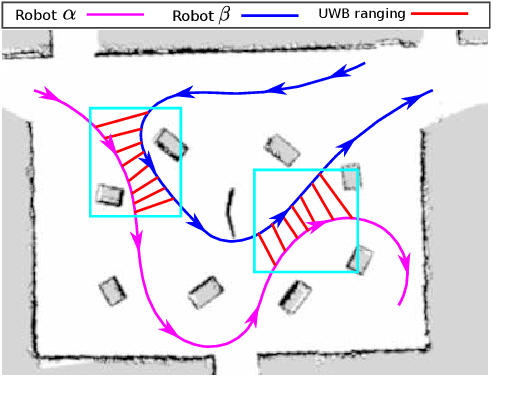

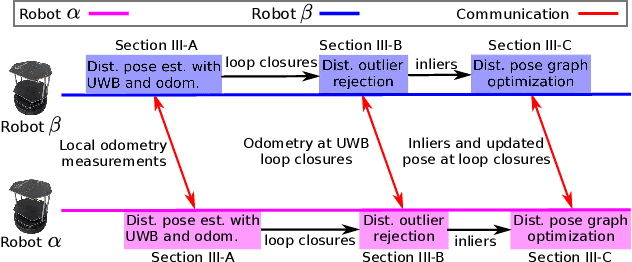

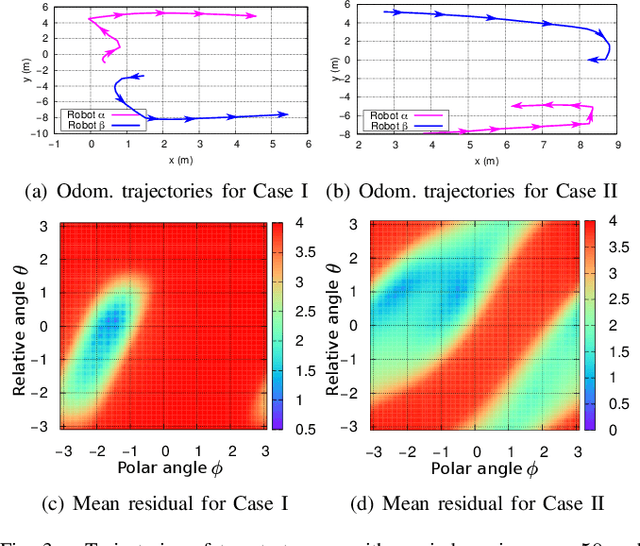

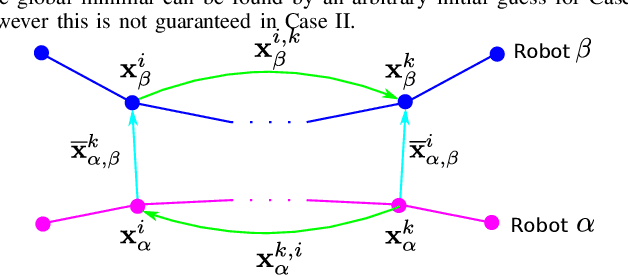

Abstract:To accomplish task efficiently in a multiple robots system, a problem that has to be addressed is Simultaneous Localization and Mapping (SLAM). LiDAR (Light Detection and Ranging) has been used for many SLAM solutions due to its superb accuracy, but its performance degrades in featureless environments, like tunnels or long corridors. Centralized SLAM solves the problem with a cloud server, which requires a huge amount of computational resources and lacks robustness against central node failure. To address these issues, we present a distributed SLAM solution to estimate the trajectory of a group of robots using Ultra-WideBand (UWB) ranging and odometry measurements. The proposed approach distributes the processing among the robot team and significantly mitigates the computation concern emerged from the centralized SLAM. Our solution determines the relative pose (also known as loop closure) between two robots by minimizing the UWB ranging measurements taken at different positions when the robots are in close proximity. UWB provides a good distance measure in line-of-sight conditions, but retrieving a precise pose estimation remains a challenge, due to ranging noise and unpredictable path traveled by the robot. To deal with the suspicious loop closures, we use Pairwise Consistency Maximization (PCM) to examine the quality of loop closures and perform outlier rejections. The filtered loop closures are then fused with odometry in a distributed pose graph optimization (DPGO) module to recover the full trajectory of the robot team. Extensive experiments are conducted to validate the effectiveness of the proposed approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge