Alberto Rodriguez

Reactive In-Air Clothing Manipulation with Confidence-Aware Dense Correspondence and Visuotactile Affordance

Sep 04, 2025

Abstract: Manipulating clothing is challenging due to complex configurations, variable material dynamics, and frequent self-occlusion. Prior systems often flatten garments or assume visibility of key features. We present a dual-arm visuotactile framework that combines confidence-aware dense visual correspondence and tactile-supervised grasp affordance to operate directly on crumpled and suspended garments. The correspondence model is trained on a custom, high-fidelity simulated dataset using a distributional loss that captures cloth symmetries and generates correspondence confidence estimates. These estimates guide a reactive state machine that adapts folding strategies based on perceptual uncertainty. In parallel, a visuotactile grasp affordance network, self-supervised using high-resolution tactile feedback, determines which regions are physically graspable. The same tactile classifier is used during execution for real-time grasp validation. By deferring action in low-confidence states, the system handles highly occluded table-top and in-air configurations. We demonstrate our task-agnostic grasp selection module in folding and hanging tasks. Moreover, our dense descriptors provide a reusable intermediate representation for other planning modalities, such as extracting grasp targets from human video demonstrations, paving the way for more generalizable and scalable garment manipulation.
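To illustrate what "deferring action in low-confidence states" can look like, here is a minimal sketch, not the authors' model. It assumes the correspondence network outputs a 2D heatmap of logits over candidate target pixels. A softmax turns the heatmap into a distribution, and the peak probability serves as a confidence score. A symmetric garment that produces two equally likely matches falls below the threshold. The names `correspondence_confidence` and `next_action` and the 0.5 threshold are hypothetical.

```python
import numpy as np

def correspondence_confidence(logits: np.ndarray) -> tuple[tuple[int, int], float]:
    """Softmax over a 2D logit heatmap; return (best pixel, peak probability)."""
    z = logits - logits.max()           # subtract the max for numerical stability
    p = np.exp(z)
    p /= p.sum()
    r, c = np.unravel_index(int(np.argmax(p)), p.shape)
    return (int(r), int(c)), float(p[r, c])

def next_action(logits: np.ndarray, threshold: float = 0.5):
    """Hypothetical state-machine step: act on confident matches, otherwise defer."""
    pixel, conf = correspondence_confidence(logits)
    if conf < threshold:
        return "defer", None            # e.g., reposition the garment and re-observe
    return "grasp", pixel
```

A single sharp peak yields a grasp target. A bimodal heatmap, such as the left and right sleeves of a shirt that look alike, spreads its probability mass and triggers a deferral.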

TEXterity -- Tactile Extrinsic deXterity: Simultaneous Tactile Estimation and Control for Extrinsic Dexterity

Mar 04, 2024

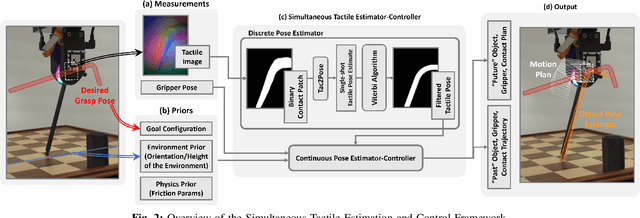

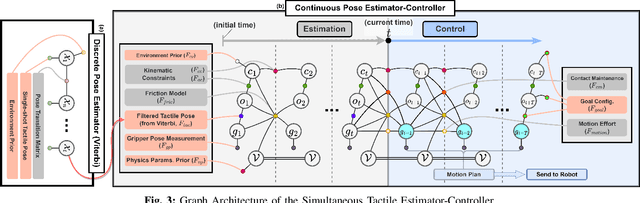

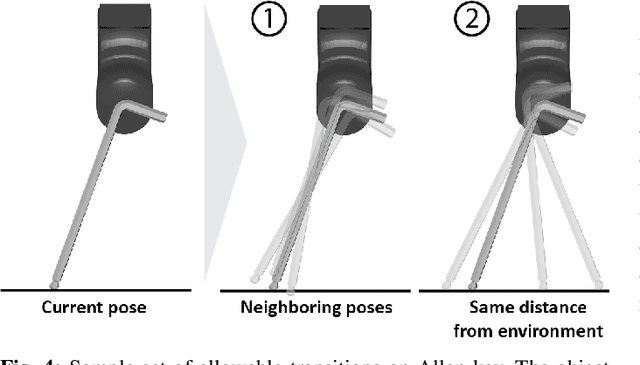

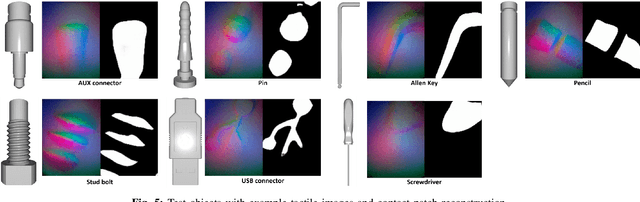

Abstract: We introduce a novel approach that combines tactile estimation and control for in-hand object manipulation. By integrating measurements from robot kinematics and an image-based tactile sensor, our framework estimates and tracks object pose while simultaneously generating motion plans in a receding horizon fashion to control the pose of a grasped object. This approach consists of a discrete pose estimator that tracks the most likely sequence of object poses in a coarsely discretized grid, and a continuous pose estimator-controller to refine the pose estimate and accurately manipulate the pose of the grasped object. Our method is tested on diverse objects and configurations, achieving desired manipulation objectives and outperforming single-shot methods in estimation accuracy. The proposed approach holds potential for tasks requiring precise manipulation and limited intrinsic in-hand dexterity under visual occlusion, laying the foundation for closed-loop behavior in applications such as regrasping, insertion, and tool use. Please see https://sites.google.com/view/texterity for videos of real-world demonstrations.

TEXterity: Tactile Extrinsic deXterity

Jan 22, 2024

Abstract: We introduce a novel approach that combines tactile estimation and control for in-hand object manipulation. By integrating measurements from robot kinematics and an image-based tactile sensor, our framework estimates and tracks object pose while simultaneously generating motion plans to control the pose of a grasped object. This approach consists of a discrete pose estimator that uses the Viterbi decoding algorithm to find the most likely sequence of object poses in a coarsely discretized grid, and a continuous pose estimator-controller to refine the pose estimate and accurately manipulate the pose of the grasped object. Our method is tested on diverse objects and configurations, achieving desired manipulation objectives and outperforming single-shot methods in estimation accuracy. The proposed approach holds potential for tasks requiring precise manipulation in scenarios where visual perception is limited, laying the foundation for closed-loop behavior in applications such as assembly and tool use. Please see the supplementary videos of real-world demonstrations at https://sites.google.com/view/texterity.
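The abstract names Viterbi decoding as the core of the discrete estimator. Below is a generic log-space Viterbi sketch over a coarse grid of N candidate poses. The prior, transition, and emission matrices are placeholders (assumptions), standing in for whatever kinematic motion model and tactile measurement likelihood the paper actually uses.

```python
import numpy as np

def viterbi(log_prior: np.ndarray, log_trans: np.ndarray, log_emit: np.ndarray) -> list[int]:
    """Most likely state sequence.

    log_prior: (N,)   log P(pose at t=0)
    log_trans: (N, N) log P(pose j at t | pose i at t-1), indexed [i, j]
    log_emit:  (T, N) log P(measurement at t | pose)
    """
    T, N = log_emit.shape
    score = log_prior + log_emit[0]             # best log-prob of paths ending in each pose
    back = np.zeros((T, N), dtype=int)          # backpointers to the best predecessor
    for t in range(1, T):
        cand = score[:, None] + log_trans       # cand[i, j]: extend best path at i with i -> j
        back[t] = np.argmax(cand, axis=0)
        score = cand.max(axis=0) + log_emit[t]
    path = [int(np.argmax(score))]
    for t in range(T - 1, 0, -1):               # trace backpointers from the last step
        path.append(int(back[t, path[-1]]))
    return path[::-1]
```

Working in log space avoids numerical underflow over long measurement sequences. The cost per step is O(N^2), which is one reason a coarse grid is practical for the discrete stage before continuous refinement.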

Parallel-Jaw Gripper and Grasp Co-Optimization for Sets of Planar Objects

Oct 27, 2023

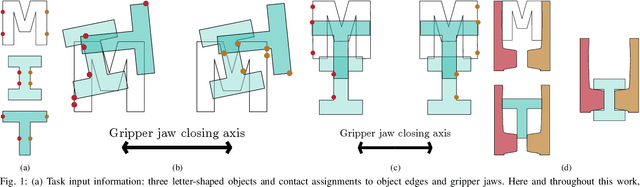

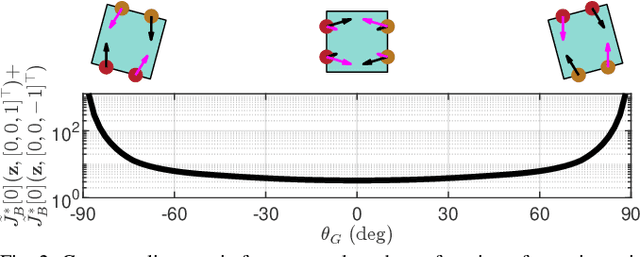

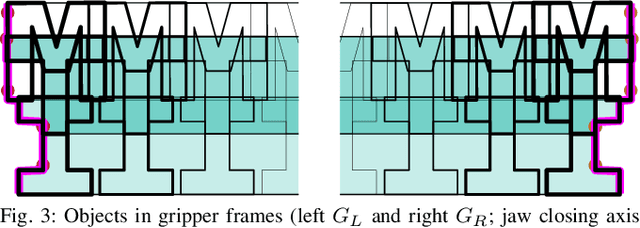

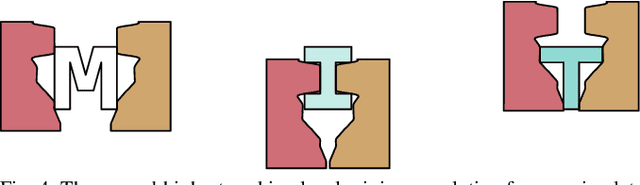

Abstract: We propose a framework for optimizing a planar parallel-jaw gripper for use with multiple objects. While optimizing general-purpose grippers and optimizing contact locations for grasps are both well studied, co-optimizing grasps and the gripper geometry that executes them has received less attention. To address this, our framework synthesizes grippers optimized to stably grasp sets of polygonal objects. Given a fixed number of contacts and their assignments to object faces and gripper jaws, our framework optimizes contact locations along these faces, the gripper pose for each grasp, and the gripper shape. Our key insights are to pose shape and contact constraints in frames fixed to the gripper jaws, and to leverage the linearity of constraints in our grasp stability and gripper shape models via an augmented Lagrangian formulation. Together, these enable a tractable nonlinear program implementation. We apply our method to several examples. The first illustrative problem shows the discovery of a geometrically simple solution where possible. In another, space is constrained, forcing multiple objects to be contacted by the same gripper features. Finally, a toolset-grasping example shows that our framework applies to complex, real-world objects. We provide a physical experiment of the toolset grasps.
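For readers unfamiliar with augmented Lagrangian methods, here is a generic textbook toy, not the paper's formulation. It minimizes ||x - c||^2 subject to a linear equality constraint A x = b using the method of multipliers. Because the constraint is linear and the objective quadratic, each inner minimization of ||x - c||^2 + lam^T (A x - b) + (rho/2) ||A x - b||^2 reduces to one linear solve, after which the multipliers are updated. The penalty weight `rho` and the iteration count are arbitrary choices.

```python
import numpy as np

def augmented_lagrangian_projection(c: np.ndarray, A: np.ndarray, b: np.ndarray,
                                    rho: float = 10.0, iters: int = 50):
    """Minimize ||x - c||^2 s.t. A x = b via the method of multipliers."""
    n = c.size
    lam = np.zeros(A.shape[0])                  # Lagrange multiplier estimate
    H = 2.0 * np.eye(n) + rho * A.T @ A         # Hessian of the augmented Lagrangian in x
    x = c.copy()
    for _ in range(iters):
        # Stationarity: 2(x - c) + A^T lam + rho A^T (A x - b) = 0
        rhs = 2.0 * c - A.T @ lam + rho * A.T @ b
        x = np.linalg.solve(H, rhs)
        lam = lam + rho * (A @ x - b)           # dual ascent step on the constraint residual
    return x, lam
```

For example, projecting c = (0, 0) onto the line x1 + x2 = 1 converges to (0.5, 0.5) with multiplier -1.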

Object manipulation through contact configuration regulation: multiple and intermittent contacts

Oct 01, 2023

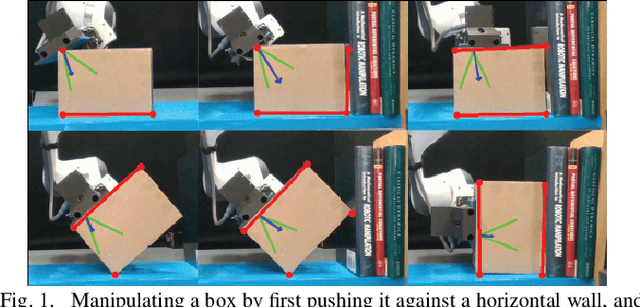

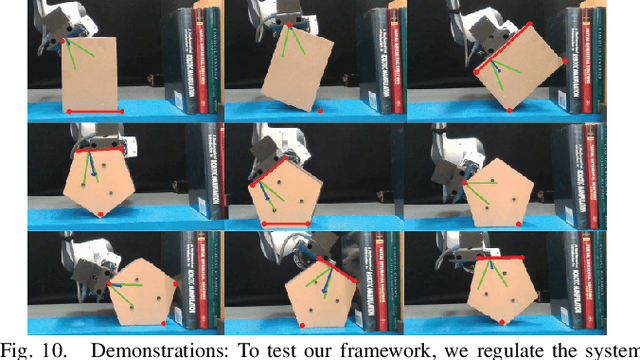

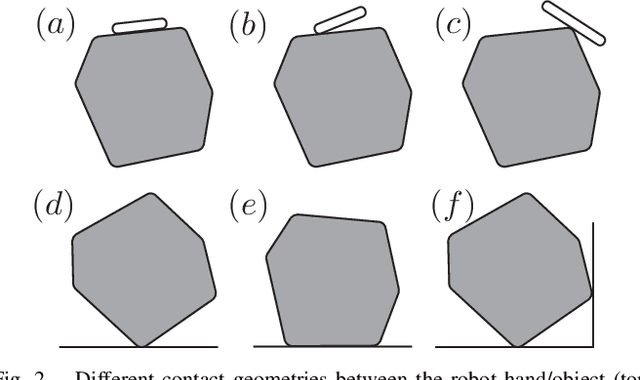

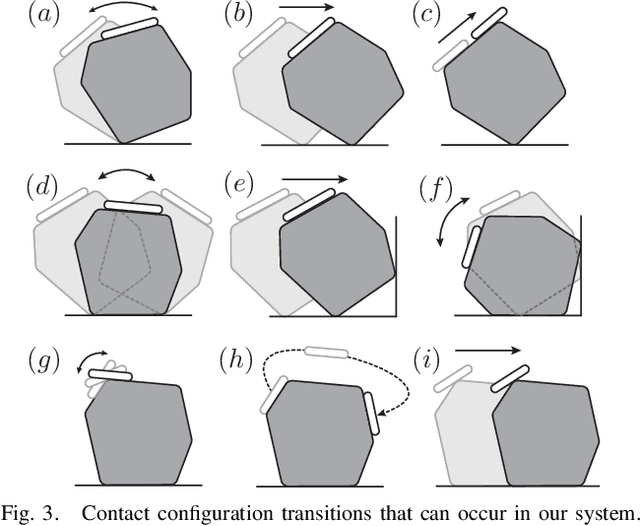

Abstract: In this work, we build on our method for manipulating unknown objects via contact configuration regulation: the estimation and control of the location, geometry, and mode of all contacts between the robot, object, and environment. We further develop our estimator and controller to enable manipulation through more complex contact interactions, including intermittent contact between the robot and object, and multiple contacts between the object and environment. In addition, we support a larger set of contact geometries at each interface. This is accomplished through a factor-graph-based estimation framework that reasons about the complementary kinematic and wrench constraints of contact to predict the current contact configuration. We are also aided by a limited amount of visual feedback, which, when combined with the available F/T sensing and robot proprioception, allows us to differentiate contact modes that were previously indistinguishable. We implement this revamped framework on our manipulation platform and demonstrate that it allows the robot to perform a wider set of manipulation tasks. These include using a wall as a support to reorient an object and regulating the contact geometry between the object and the ground. Finally, we conduct ablation studies to understand the contributions of visual and tactile feedback in our manipulation framework. Our code can be found at: https://github.com/mcubelab/pbal.

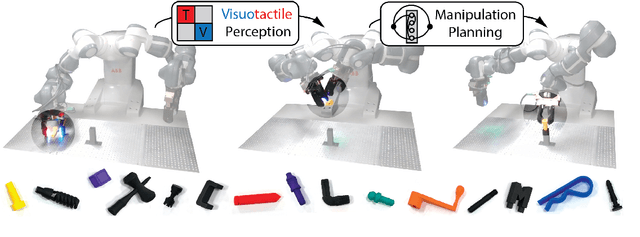

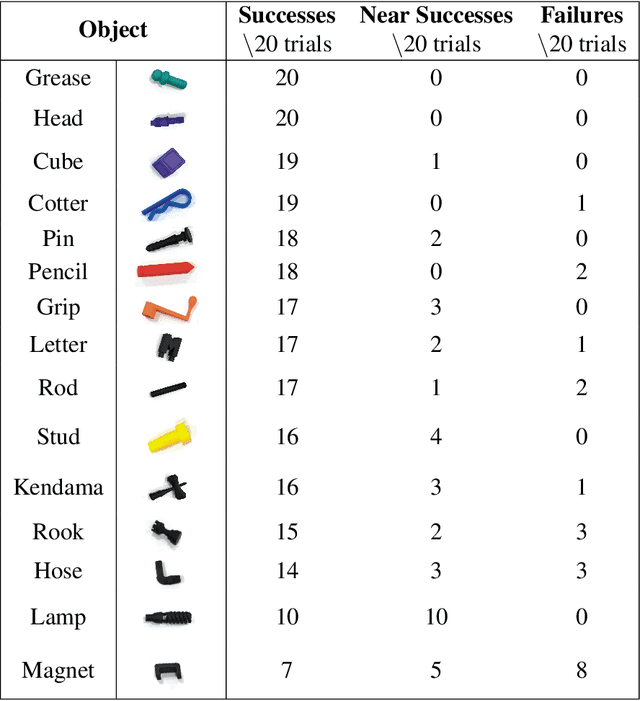

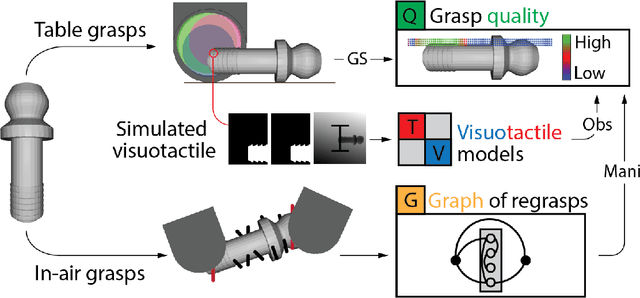

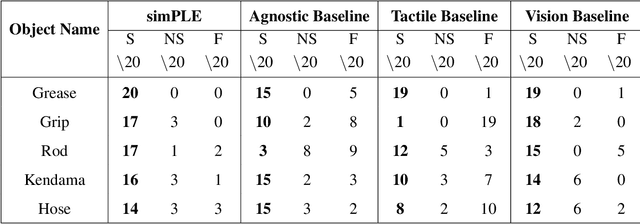

simPLE: a visuotactile method learned in simulation to precisely pick, localize, regrasp, and place objects

Jul 24, 2023

Abstract: Existing robotic systems have a clear tension between generality and precision. Deployed solutions for robotic manipulation tend to fall into the paradigm of one robot solving a single task, lacking precise generalization, i.e., the ability to solve many tasks without compromising on precision. This paper explores solutions for precise and general pick-and-place. In precise pick-and-place, i.e., kitting, the robot transforms an unstructured arrangement of objects into an organized arrangement, which can facilitate further manipulation. We propose simPLE (simulation to Pick Localize and PLacE) as a solution to precise pick-and-place. simPLE learns to pick, regrasp, and place objects precisely, given only the object CAD model and no prior experience. We develop three main components: task-aware grasping, visuotactile perception, and regrasp planning. Task-aware grasping computes affordances of grasps that are stable, observable, and favorable to placing. The visuotactile perception model relies on matching real observations against a set of simulated ones through supervised learning. Finally, we compute the desired robot motion by solving a shortest path problem on a graph of hand-to-hand regrasps. On a dual-arm robot equipped with visuotactile sensing, we demonstrate pick-and-place of 15 diverse objects with simPLE. The objects span a wide range of shapes, and simPLE achieves successful placements into structured arrangements with 1 mm clearance over 90% of the time for 6 objects and over 80% of the time for 11 objects. Videos are available at http://mcube.mit.edu/research/simPLE.html.
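The regrasp planner is described as a shortest-path problem on a graph of hand-to-hand regrasps. A minimal Dijkstra sketch follows. The node labels (`hand:grasp`), the `placed` goal node, and the edge costs are made up for illustration. In practice the costs might encode something like predicted placement error or failure risk, but that is an assumption, not something stated in the abstract.

```python
import heapq

def shortest_regrasp_path(graph: dict[str, list[tuple[str, float]]], start: str, goal: str):
    """Dijkstra over a regrasp graph; returns (node path, total cost) or (None, inf)."""
    dist = {start: 0.0}
    prev: dict[str, str] = {}
    heap = [(0.0, start)]
    visited: set[str] = set()
    while heap:
        d, u = heapq.heappop(heap)
        if u in visited:
            continue                            # stale heap entry
        visited.add(u)
        if u == goal:
            break
        for v, w in graph.get(u, []):           # w: non-negative edge cost
            nd = d + w
            if nd < dist.get(v, float("inf")):
                dist[v] = nd
                prev[v] = u
                heapq.heappush(heap, (nd, v))
    if goal not in dist:
        return None, float("inf")
    path = [goal]
    while path[-1] != start:                    # walk predecessors back to the start
        path.append(prev[path[-1]])
    return path[::-1], dist[goal]

# Hypothetical graph: placing directly from the initial grasp is costly,
# so a handover to a better grasp in the other hand wins.
regrasps = {
    "left:g1": [("placed", 5.0), ("right:g3", 1.0), ("right:g2", 2.0)],
    "right:g3": [("placed", 1.0)],
    "right:g2": [("placed", 0.5)],
}
```

Calling `shortest_regrasp_path(regrasps, "left:g1", "placed")` selects the single handover to `right:g3` with total cost 2.0.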

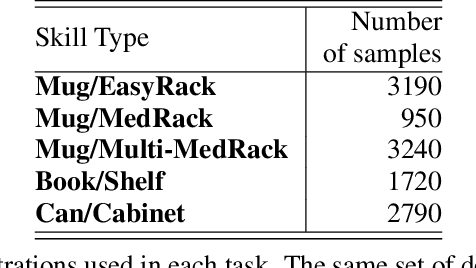

Shelving, Stacking, Hanging: Relational Pose Diffusion for Multi-modal Rearrangement

Jul 10, 2023

Abstract: We propose a system for rearranging objects in a scene to achieve a desired object-scene placing relationship, such as a book inserted in an open slot of a bookshelf. The pipeline generalizes to novel geometries, poses, and layouts of both scenes and objects, and is trained from demonstrations to operate directly on 3D point clouds. Our system overcomes challenges associated with the existence of many geometrically-similar rearrangement solutions for a given scene. By leveraging an iterative pose de-noising training procedure, we can fit multi-modal demonstration data and produce multi-modal outputs while remaining precise and accurate. We also show the advantages of conditioning on relevant local geometric features while ignoring irrelevant global structure that harms both generalization and precision. We demonstrate our approach on three distinct rearrangement tasks that require handling multi-modality and generalization over object shape and pose in both simulation and the real world. Project website, code, and videos: https://anthonysimeonov.github.io/rpdiff-multi-modal/

Simultaneous Tactile Estimation and Control of Extrinsic Contact

Mar 06, 2023

Abstract: We propose a method that simultaneously estimates and controls extrinsic contact with tactile feedback. The method enables challenging manipulation tasks that require controlling light forces and accurate motions in contact, such as balancing an unknown object on a thin rod standing upright. A factor-graph-based framework fuses a sequence of tactile and kinematic measurements to estimate and control the interaction among the gripper, object, and environment, including the location and wrench at the extrinsic contact between the grasped object and the environment and the grasp wrench transferred from the gripper to the object. The same framework simultaneously plans the gripper motions that make it possible to estimate the state while satisfying regularizing control objectives to prevent slip, such as minimizing the grasp wrench and minimizing frictional force at the extrinsic contact. We show results with sub-millimeter contact localization error and good slip prevention, even in slippery environments, for multiple contact formations (point, line, and patch contact) and transitions between them. See the supplementary video and results at https://sites.google.com/view/sim-tact.

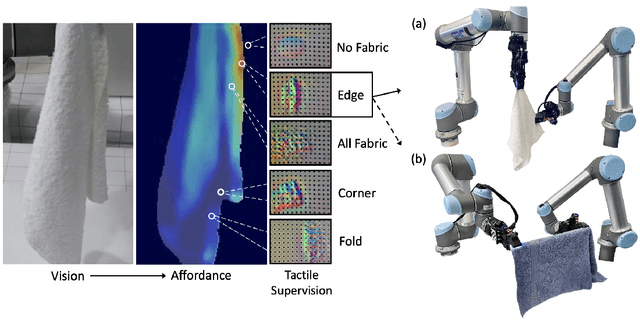

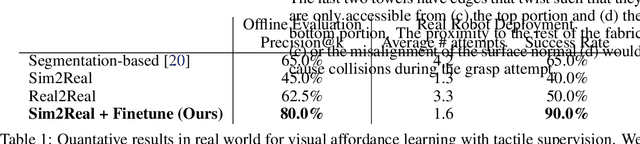

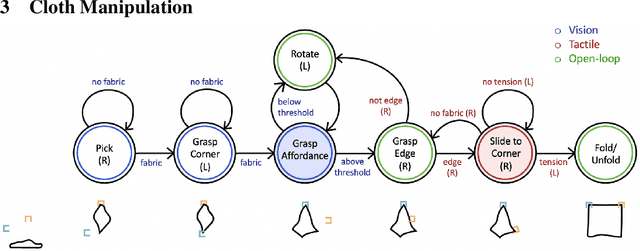

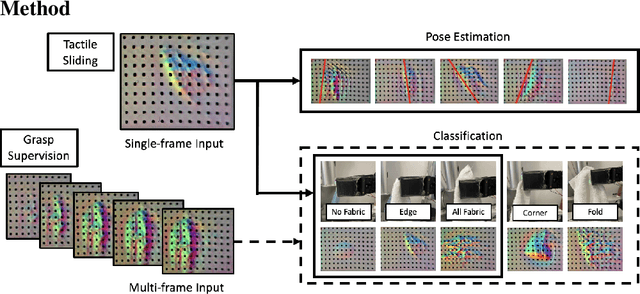

Visuotactile Affordances for Cloth Manipulation with Local Control

Dec 09, 2022

Abstract: Cloth in the real world is often crumpled, self-occluded, or folded in on itself such that key regions, such as corners, are not directly graspable, making manipulation difficult. We propose a system that leverages visual and tactile perception to unfold the cloth via grasping and sliding on edges. By doing so, the robot is able to grasp two adjacent corners, enabling subsequent manipulation tasks like folding or hanging. As components of this system, we develop tactile perception networks that classify whether an edge is grasped and estimate the pose of the edge. We use the edge classification network to supervise a visuotactile edge grasp affordance network that can grasp edges with a 90% success rate. Once an edge is grasped, we demonstrate that the robot can slide along the cloth to the adjacent corner using tactile pose estimation and control in real time. See http://nehasunil.com/visuotactile/visuotactile.html for videos.

SE(3)-Equivariant Relational Rearrangement with Neural Descriptor Fields

Nov 17, 2022

Abstract: We present a method for performing tasks that involve spatial relations between novel object instances, initialized in arbitrary poses, directly from point cloud observations. Our framework provides a scalable way to specify new tasks using only 5-10 demonstrations. Object rearrangement is formalized as the problem of finding actions that configure task-relevant parts of the object in a desired alignment. This formalism is implemented in three steps: assigning a consistent local coordinate frame to the task-relevant object parts, determining the location and orientation of this coordinate frame on unseen object instances, and executing an action that brings these frames into the desired alignment. We overcome the key technical challenge of determining task-relevant local coordinate frames from a few demonstrations by developing an optimization method based on Neural Descriptor Fields (NDFs) and a single annotated 3D keypoint. An energy-based learning scheme that models the joint configuration of the objects satisfying a desired relational task further improves performance. The method is tested on three multi-object rearrangement tasks in simulation and on a real robot. Project website, videos, and code: https://anthonysimeonov.github.io/r-ndf/
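The last step, executing an action that brings the frames into the desired alignment, reduces to composing rigid transforms. Assuming both frames are 4x4 homogeneous matrices expressed in a common world frame, the world-frame motion to apply is T_delta = T_desired · T_current^-1. The helper `pose_z` is hypothetical and only builds example poses. How the paper actually converts the alignment into robot actions is not specified here.

```python
import numpy as np

def pose_z(theta: float, t) -> np.ndarray:
    """Homogeneous transform: rotation by theta (rad) about z, plus translation t."""
    c, s = np.cos(theta), np.sin(theta)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = t
    return T

def alignment_motion(T_current: np.ndarray, T_desired: np.ndarray) -> np.ndarray:
    """World-frame rigid motion T_delta such that T_delta @ T_current == T_desired."""
    return T_desired @ np.linalg.inv(T_current)

# Example: move a task-relevant part frame (e.g., a mug handle) onto a target frame.
T_cur = pose_z(0.3, [0.1, 0.0, 0.2])
T_des = pose_z(1.2, [0.4, -0.1, 0.25])
T_delta = alignment_motion(T_cur, T_des)
```

Applying `T_delta` to the grasped object, which moves rigidly with the gripper, places the part frame at the target.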
