Deblurring

Deblurring is a computer-vision task that involves removing the blurring artifacts from images or videos to restore the original, sharp content. Blurring can be caused by various factors such as camera shake, fast motion, and out-of-focus objects, and can result in a loss of detail and quality in the captured images. The goal of deblurring is to produce a clear, high-quality image that accurately represents the original scene.

Papers and Code

Exact Evaluation of the Accuracy of Diffusion Models for Inverse Problems with Gaussian Data Distributions

Jul 09, 2025

Used as priors for Bayesian inverse problems, diffusion models have recently attracted considerable attention in the literature. Their flexibility and high variance enable them to generate multiple solutions for a given task, such as inpainting, super-resolution, and deblurring. However, several unresolved questions remain about how well they perform. In this article, we investigate the accuracy of these models when applied to a Gaussian data distribution for deblurring. Within this constrained context, we are able to precisely analyze the discrepancy between the theoretical resolution of inverse problems and their resolution obtained using diffusion models by computing the exact Wasserstein distance between the distribution of the diffusion model sampler and the ideal distribution of solutions to the inverse problem. Our findings allow for the comparison of different algorithms from the literature.
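For illustration, the Wasserstein distance mentioned in the abstract above has a well-known closed form when both distributions are Gaussian: W2^2 = ||m_a - m_b||^2 + Tr(C_a + C_b - 2 (C_a^{1/2} C_b C_a^{1/2})^{1/2}). The sketch below evaluates that formula with NumPy. The function names are hypothetical, and it does not reproduce how the paper derives the sampler's distribution; it only shows the distance computation once two means and covariances are known.

```python
import numpy as np


def psd_sqrt(mat):
    """Square root of a symmetric positive semi-definite matrix."""
    vals, vecs = np.linalg.eigh(mat)
    vals = np.clip(vals, 0.0, None)  # guard against tiny negative eigenvalues
    return (vecs * np.sqrt(vals)) @ vecs.T


def gaussian_w2(mean_a, cov_a, mean_b, cov_b):
    """2-Wasserstein distance between N(mean_a, cov_a) and N(mean_b, cov_b)."""
    root_a = psd_sqrt(cov_a)
    cross = psd_sqrt(root_a @ cov_b @ root_a)
    squared = np.sum((mean_a - mean_b) ** 2) + np.trace(cov_a + cov_b - 2.0 * cross)
    return float(np.sqrt(max(squared, 0.0)))  # clamp round-off below zero
```

With equal covariances the distance reduces to the Euclidean distance between the means, which is a convenient sanity check.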

Deblurring in the Wild: A Real-World Dataset from Smartphone High-Speed Videos

Jun 24, 2025

We introduce the largest real-world image deblurring dataset constructed from smartphone slow-motion videos. Using 240 frames captured over one second, we simulate realistic long-exposure blur by averaging frames to produce blurry images, while using the temporally centered frame as the sharp reference. Our dataset contains over 42,000 high-resolution blur-sharp image pairs, making it approximately 10 times larger than widely used datasets, with 8 times the amount of different scenes, including indoor and outdoor environments, with varying object and camera motions. We benchmark multiple state-of-the-art (SOTA) deblurring models on our dataset and observe significant performance degradation, highlighting the complexity and diversity of our benchmark. Our dataset serves as a challenging new benchmark to facilitate robust and generalizable deblurring models.
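The frame-averaging construction described in the abstract above can be sketched in a few lines of NumPy. This is a minimal illustration and not the authors' pipeline: the function name is hypothetical, the frames are averaged directly in stored pixel values (the abstract does not say whether averaging happens in linear intensity), and for an even frame count the index `T // 2` is assumed to be the "temporally centered" frame.

```python
import numpy as np


def make_blur_sharp_pair(frames):
    """Build one (blurry, sharp) pair from a stack of uint8 video frames.

    frames: array of shape (T, H, W) or (T, H, W, C).
    """
    frames = np.asarray(frames)
    # Averaging over time approximates a long exposure.
    blurry = frames.astype(np.float64).mean(axis=0)
    blurry = np.clip(np.rint(blurry), 0, 255).astype(np.uint8)
    # Assumed convention: the middle frame is the sharp reference.
    sharp = frames[frames.shape[0] // 2]
    return blurry, sharp
```

A bright pixel that moves one position per frame ends up smeared evenly across its path in the averaged image, while the reference frame keeps it at a single position.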

Unsupervised Imaging Inverse Problems with Diffusion Distribution Matching

Jun 17, 2025

This work addresses image restoration tasks through the lens of inverse problems using unpaired datasets. In contrast to traditional approaches -- which typically assume full knowledge of the forward model or access to paired degraded and ground-truth images -- the proposed method operates under minimal assumptions and relies only on small, unpaired datasets. This makes it particularly well-suited for real-world scenarios, where the forward model is often unknown or misspecified, and collecting paired data is costly or infeasible. The method leverages conditional flow matching to model the distribution of degraded observations, while simultaneously learning the forward model via a distribution-matching loss that arises naturally from the framework. Empirically, it outperforms both single-image blind and unsupervised approaches on deblurring and non-uniform point spread function (PSF) calibration tasks. It also matches state-of-the-art performance on blind super-resolution. We also showcase the effectiveness of our method with a proof of concept for lens calibration: a real-world application traditionally requiring time-consuming experiments and specialized equipment. In contrast, our approach achieves this with minimal data acquisition effort.

Restoring Gaussian Blurred Face Images for Deanonymization Attacks

Jun 14, 2025

Gaussian blur is widely used to blur human faces in sensitive photos before the photos are posted on the Internet. However, it is unclear to what extent the blurred faces can be restored and used to re-identify the person, especially under a high-blurring setting. In this paper, we explore this question by developing a deblurring method called Revelio. The key intuition is to leverage a generative model's memorization effect and approximate the inverse function of Gaussian blur for face restoration. Compared with existing methods, we design the deblurring process to be identity-preserving. It uses a conditional diffusion model for preliminary face restoration and then uses an identity retrieval model to retrieve related images to further enhance fidelity. We evaluate Revelio with large public face image datasets and show that it can effectively restore blurred faces, especially under a high-blurring setting. It has a re-identification accuracy of 95.9%, outperforming existing solutions. The result suggests that Gaussian blur should not be used for face anonymization purposes. We also demonstrate the robustness of this method against mismatched Gaussian kernel sizes and functions, and test preliminary countermeasures and adaptive attacks to inspire future work.

Plug-and-Play Linear Attention for Pre-trained Image and Video Restoration Models

Jun 10, 2025

Multi-head self-attention (MHSA) has become a core component in modern computer vision models. However, its quadratic complexity with respect to input length poses a significant computational bottleneck in real-time and resource-constrained environments. We propose PnP-Nystra, a Nyström-based linear approximation of self-attention, developed as a plug-and-play (PnP) module that can be integrated into the pre-trained image and video restoration models without retraining. As a drop-in replacement for MHSA, PnP-Nystra enables efficient acceleration in various window-based transformer architectures, including SwinIR, Uformer, and RVRT. Our experiments across diverse image and video restoration tasks, including denoising, deblurring, and super-resolution, demonstrate that PnP-Nystra achieves a 2-4x speed-up on an NVIDIA RTX 4090 GPU and a 2-5x speed-up on CPU inference. Despite these significant gains, the method incurs a maximum PSNR drop of only 1.5 dB across all evaluated tasks. To the best of our knowledge, we are the first to demonstrate a linear attention functioning as a training-free substitute for MHSA in restoration models.
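To make the idea of a Nyström approximation of self-attention concrete, here is a generic single-head sketch in NumPy. It is not the PnP-Nystra module: the abstract does not say how landmarks are chosen or how the pseudoinverse is computed, so segment-mean landmarks and `np.linalg.pinv` are assumptions, and all function names are hypothetical. The point is only that the n x n attention matrix is replaced by three smaller softmax matrices of sizes n x m, m x m, and m x n.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def exact_attention(q, k, v):
    """Standard softmax attention; cost grows quadratically with n."""
    scale = 1.0 / np.sqrt(q.shape[-1])
    return softmax(q @ k.T * scale) @ v


def nystrom_attention(q, k, v, num_landmarks):
    """Nystrom-style approximation with m landmarks; q, k, v have shape (n, d)."""
    n, d = q.shape
    assert n % num_landmarks == 0, "sketch assumes n divisible by m"
    scale = 1.0 / np.sqrt(d)
    # Assumed landmark choice: mean of each contiguous segment of tokens.
    q_land = q.reshape(num_landmarks, n // num_landmarks, d).mean(axis=1)
    k_land = k.reshape(num_landmarks, n // num_landmarks, d).mean(axis=1)
    left = softmax(q @ k_land.T * scale)       # (n, m)
    core = softmax(q_land @ k_land.T * scale)  # (m, m)
    right = softmax(q_land @ k.T * scale)      # (m, n)
    return left @ np.linalg.pinv(core) @ (right @ v)
```

When the number of landmarks equals the number of tokens, every segment holds one token and the approximation collapses to exact attention, which gives a simple correctness check; smaller landmark counts trade accuracy for speed.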

Multi-Step Guided Diffusion for Image Restoration on Edge Devices: Toward Lightweight Perception in Embodied AI

Jun 08, 2025

Diffusion models have shown remarkable flexibility for solving inverse problems without task-specific retraining. However, existing approaches such as Manifold Preserving Guided Diffusion (MPGD) apply only a single gradient update per denoising step, limiting restoration fidelity and robustness, especially in embedded or out-of-distribution settings. In this work, we introduce a multistep optimization strategy within each denoising timestep, significantly enhancing image quality, perceptual accuracy, and generalization. Our experiments on super-resolution and Gaussian deblurring demonstrate that increasing the number of gradient updates per step improves LPIPS and PSNR with minimal latency overhead. Notably, we validate this approach on a Jetson Orin Nano using degraded ImageNet and a UAV dataset, showing that MPGD, originally trained on face datasets, generalizes effectively to natural and aerial scenes. Our findings highlight MPGD's potential as a lightweight, plug-and-play restoration module for real-time visual perception in embodied AI agents such as drones and mobile robots.
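The difference between one and several gradient updates per denoising step can be illustrated on a toy linear problem. The sketch below is not the MPGD algorithm: it leaves out the diffusion model and any manifold projection, and assumes a known linear forward operator with a squared-error data term. The names `guided_refine` and `data_loss` are hypothetical. It only shows the inner loop that refines a clean-image estimate against the observation.

```python
import numpy as np


def data_loss(x, forward_op, observation):
    """Squared-error data fidelity: 0.5 * ||A x - y||^2."""
    return 0.5 * float(np.sum((forward_op @ x - observation) ** 2))


def guided_refine(x0_est, forward_op, observation, num_updates, step_size):
    """Apply several gradient updates to the current clean-signal estimate.

    num_updates=1 corresponds to a single guidance update per denoising
    step; larger values run the multi-step variant of this toy loop.
    """
    x = np.array(x0_est, dtype=np.float64)
    for _ in range(num_updates):
        residual = forward_op @ x - observation
        x = x - step_size * (forward_op.T @ residual)  # gradient of data_loss
    return x
```

With a step size below 2 divided by the squared spectral norm of the operator, each additional update lowers the data term, at the cost of one extra operator application per update.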

EV-LayerSegNet: Self-supervised Motion Segmentation using Event Cameras

Jun 07, 2025

Event cameras are novel bio-inspired sensors that capture motion dynamics with much higher temporal resolution than traditional cameras, since pixels react asynchronously to brightness changes. They are therefore better suited for tasks involving motion such as motion segmentation. However, training event-based networks still represents a difficult challenge, as obtaining ground truth is very expensive, error-prone and limited in frequency. In this article, we introduce EV-LayerSegNet, a self-supervised CNN for event-based motion segmentation. Inspired by a layered representation of the scene dynamics, we show that it is possible to learn affine optical flow and segmentation masks separately, and use them to deblur the input events. The deblurring quality is then measured and used as self-supervised learning loss. We train and test the network on a simulated dataset with only affine motion, achieving IoU and detection rate up to 71% and 87% respectively.

On Inverse Problems, Parameter Estimation, and Domain Generalization

Jun 06, 2025

Signal restoration and inverse problems are key elements in most real-world data science applications. In the past decades, with the emergence of machine learning methods, inversion of measurements has become a popular step in almost all physical applications, which is normally executed prior to downstream tasks that often involve parameter estimation. In this work, we analyze the general problem of parameter estimation in an inverse problem setting. First, we address the domain-shift problem by re-formulating it in direct relation with the discrete parameter estimation analysis. We analyze a significant vulnerability in current attempts to enforce domain generalization, which we dubbed the Double Meaning Theorem. Our theoretical findings are experimentally illustrated for domain shift examples in image deblurring and speckle suppression in medical imaging. We then proceed to a theoretical analysis of parameter estimation given observed measurements before and after data processing involving an inversion of the observations. We compare this setting for invertible and non-invertible (degradation) processes. We distinguish between continuous and discrete parameter estimation, corresponding with regression and classification problems, respectively. Our theoretical findings align with the well-known information-theoretic data processing inequality, and to a certain degree question the common misconception that data-processing for inversion, based on modern generative models that may often produce outstanding perceptual quality, will necessarily improve the following parameter estimation objective. It is our hope that this paper will provide practitioners with deeper insights that may be leveraged in the future for the development of more efficient and informed strategic system planning, critical in safety-sensitive applications.

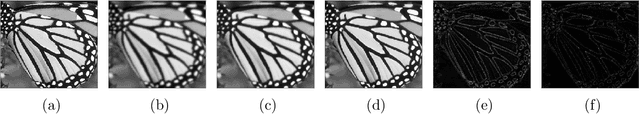

Video Deblurring with Deconvolution and Aggregation Networks

Jun 04, 2025

In contrast to single-image deblurring, video deblurring has the advantage that neighbor frames can be utilized to deblur a target frame. However, existing video deblurring algorithms often fail to properly employ the neighbor frames, resulting in sub-optimal performance. In this paper, we propose a deconvolution and aggregation network (DAN) for video deblurring that utilizes the information of neighbor frames well. In DAN, both deconvolution and aggregation strategies are achieved through three sub-networks: the preprocessing network (PPN) and the alignment-based deconvolution network (ABDN) for the deconvolution scheme; the frame aggregation network (FAN) for the aggregation scheme. In the deconvolution part, blurry inputs are first preprocessed by the PPN with non-local operations. Then, the output frames from the PPN are deblurred by the ABDN based on the frame alignment. In the FAN, these deblurred frames from the deconvolution part are combined into a latent frame according to reliability maps which infer pixel-wise sharpness. The proper combination of three sub-networks can achieve favorable performance on video deblurring by using the neighbor frames suitably. In experiments, the proposed DAN was demonstrated to be superior to existing state-of-the-art methods through both quantitative and qualitative evaluations on the public datasets.

Joint Video Enhancement with Deblurring, Super-Resolution, and Frame Interpolation Network

Jun 04, 2025

Video quality is often severely degraded by multiple factors rather than a single factor. These low-quality videos can be restored to high-quality videos by sequentially performing appropriate video enhancement techniques. However, the sequential approach was inefficient and sub-optimal because most video enhancement approaches were designed without taking into account that multiple factors together degrade video quality. In this paper, we propose a new joint video enhancement method that mitigates multiple degradation factors simultaneously by resolving an integrated enhancement problem. Our proposed network, named DSFN, directly produces a high-resolution, high-frame-rate, and clear video from a low-resolution, low-frame-rate, and blurry video. In the DSFN, low-resolution and blurry input frames are enhanced by a joint deblurring and super-resolution (JDSR) module. Meanwhile, intermediate frames between input adjacent frames are interpolated by a triple-frame-based frame interpolation (TFBFI) module. The proper combination of the proposed modules of DSFN can achieve superior performance on the joint video enhancement task. Experimental results show that the proposed method outperforms other sequential state-of-the-art techniques on public datasets with a smaller network size and faster processing time.