Zihua Si

LargePiG: Your Large Language Model is Secretly a Pointer Generator

Oct 15, 2024

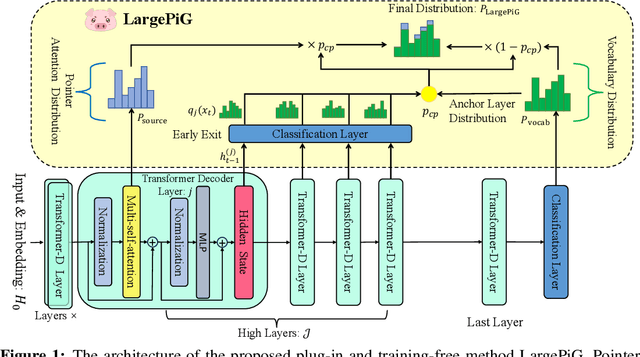

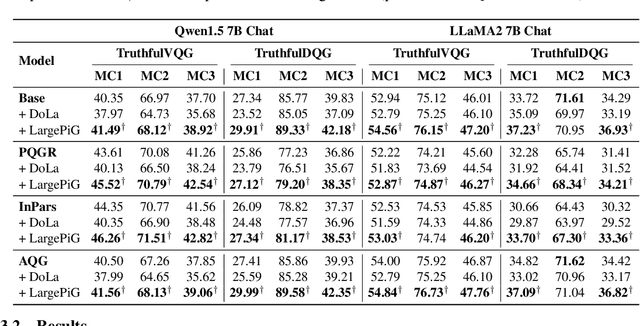

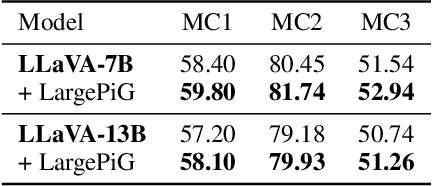

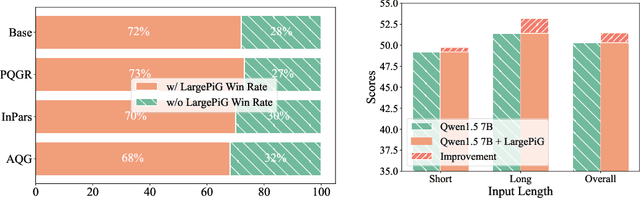

Abstract: Recent research on query generation has focused on using Large Language Models (LLMs), which, despite bringing state-of-the-art performance, also introduce issues with hallucinations in the generated queries. In this work, we introduce relevance hallucination and factuality hallucination as a new typology for hallucination problems brought by query generation based on LLMs. We propose an effective way to separate content from form in LLM-generated queries, which preserves the factual knowledge extracted and integrated from the inputs and compiles the syntactic structure, including function words, using the powerful linguistic capabilities of the LLM. Specifically, we introduce a model-agnostic and training-free method that turns the Large Language Model into a Pointer-Generator (LargePiG), where the pointer attention distribution leverages the LLM's inherent attention weights, and the copy probability is derived from the difference between the vocabulary distribution of the model's high layers and the last layer. To validate the effectiveness of LargePiG, we constructed two datasets for assessing the hallucination problems in query generation, covering both document and video scenarios. Empirical studies on various LLMs demonstrated the superiority of LargePiG on both datasets. Additional experiments also verified that LargePiG could reduce hallucination in large vision language models and improve the accuracy of document-based question-answering and factuality evaluation tasks.
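
The pointer-generator idea mentioned in the abstract can be illustrated with a minimal sketch. It shows only the generic mixture step, in which a vocabulary distribution is blended with a copy distribution over source tokens. The function name `pointer_generator_mix` and its arguments are hypothetical, and `p_copy` is simply passed in; how LargePiG derives it from the model's layers is not reproduced here.

```python
def pointer_generator_mix(vocab_probs, attention, source_tokens, p_copy):
    """Blend a vocabulary distribution with a copy distribution.

    vocab_probs: dict mapping token -> probability (sums to 1).
    attention: weights over source positions (sums to 1).
    source_tokens: the source token at each position.
    p_copy: probability of copying from the source (assumed given).
    """
    if not 0.0 <= p_copy <= 1.0:
        raise ValueError("p_copy must lie in [0, 1]")
    if len(attention) != len(source_tokens):
        raise ValueError("attention and source_tokens must have equal length")

    # Generation part: scale the vocabulary distribution by (1 - p_copy).
    mixed = {tok: (1.0 - p_copy) * p for tok, p in vocab_probs.items()}

    # Copy part: add p_copy times the attention mass of each source token.
    # Tokens outside the vocabulary can still receive probability this way.
    for tok, weight in zip(source_tokens, attention):
        mixed[tok] = mixed.get(tok, 0.0) + p_copy * weight
    return mixed


example = pointer_generator_mix(
    vocab_probs={"cat": 0.5, "dog": 0.5},
    attention=[0.75, 0.25],
    source_tokens=["cat", "bird"],
    p_copy=0.4,
)
```

Because both inputs are probability distributions, the mixture is again a distribution, and a source token such as `"bird"` that the vocabulary distribution never proposed still gets non-zero probability.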

TWIN V2: Scaling Ultra-Long User Behavior Sequence Modeling for Enhanced CTR Prediction at Kuaishou

Jul 23, 2024

Abstract: The significance of modeling long-term user interests for CTR prediction tasks in large-scale recommendation systems is progressively gaining attention among researchers and practitioners. Existing work, such as SIM and TWIN, typically employs a two-stage approach to model long-term user behavior sequences for efficiency. The first stage rapidly retrieves a subset of sequences related to the target item from a long sequence using a search-based mechanism, namely the General Search Unit (GSU), while the second stage calculates the interest scores using the Exact Search Unit (ESU) on the retrieved results. Given the extensive length of user behavior sequences spanning the entire life cycle, potentially reaching up to 10^6 in scale, there is currently no effective solution for fully modeling such expansive user interests. To overcome this issue, we introduce TWIN-V2, an enhancement of TWIN, where a divide-and-conquer approach is applied to compress life-cycle behaviors and uncover more accurate and diverse user interests. Specifically, a hierarchical clustering method groups items with similar characteristics in life-cycle behaviors into a single cluster during the offline phase. By limiting the size of clusters, we can compress behavior sequences well beyond the magnitude of 10^5 to a length manageable for online inference in GSU retrieval. Cluster-aware target attention extracts comprehensive and multi-faceted long-term interests of users, thereby making the final recommendation results more accurate and diverse. Extensive offline experiments on a multi-billion-scale industrial dataset and online A/B tests have demonstrated the effectiveness of TWIN-V2. Under an efficient deployment framework, TWIN-V2 has been successfully deployed to the primary traffic that serves hundreds of millions of daily active users at Kuaishou.
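
The two-stage retrieve-then-attend pattern described above can be sketched roughly as follows. This is an illustration under simplifying assumptions, not the TWIN or TWIN-V2 implementation: the helper names `gsu_retrieve` and `esu_attend` are hypothetical, and plain dot products stand in for whatever relevance and attention scoring the real systems use.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def gsu_retrieve(target, behaviors, k):
    """Stage 1 (assumed form): keep the k behaviors most relevant to the target."""
    ranked = sorted(
        range(len(behaviors)),
        key=lambda i: dot(target, behaviors[i]),
        reverse=True,
    )
    return ranked[:k]


def esu_attend(target, behaviors, indices):
    """Stage 2 (assumed form): softmax attention over the retrieved behaviors."""
    scores = [dot(target, behaviors[i]) for i in indices]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Weighted sum of the retrieved behavior vectors gives the interest vector.
    return [
        sum(w * behaviors[i][d] for w, i in zip(weights, indices))
        for d in range(len(target))
    ]


target_item = [1.0, 0.0]
history = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1], [-1.0, 0.0]]
retrieved = gsu_retrieve(target_item, history, k=2)
interest = esu_attend(target_item, history, retrieved)
```

The point of the split is cost: the cheap first stage shrinks a very long sequence to a short one, so the more expensive attention in the second stage only runs over the retrieved subset.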

UniSAR: Modeling User Transition Behaviors between Search and Recommendation

Apr 15, 2024

Abstract: Nowadays, many platforms provide users with both search and recommendation services as important tools for accessing information. The phenomenon has led to a correlation between user search and recommendation behaviors, providing an opportunity to model user interests in a fine-grained way. Existing approaches either model user search and recommendation behaviors separately or overlook the different transitions between user search and recommendation behaviors. In this paper, we propose a framework named UniSAR that effectively models the different types of fine-grained behavior transitions for providing users a Unified Search And Recommendation service. Specifically, UniSAR models the user transition behaviors between search and recommendation through three steps: extraction, alignment, and fusion, which are respectively implemented by transformers equipped with pre-defined masks, contrastive learning that aligns the extracted fine-grained user transitions, and cross-attentions that fuse different transitions. To provide users with a unified service, the learned representations are fed into the downstream search and recommendation models. Joint learning on both search and recommendation data is employed to utilize the knowledge and enhance each other. Experimental results on two public datasets demonstrated the effectiveness of UniSAR in terms of enhancing both search and recommendation simultaneously. The experimental analysis further validates that UniSAR enhances the results by successfully modeling the user transition behaviors between search and recommendation.

To Search or to Recommend: Predicting Open-App Motivation with Neural Hawkes Process

Apr 04, 2024

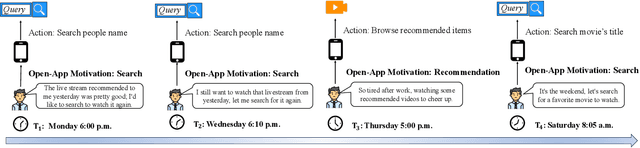

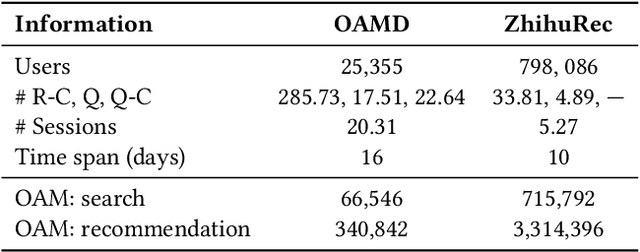

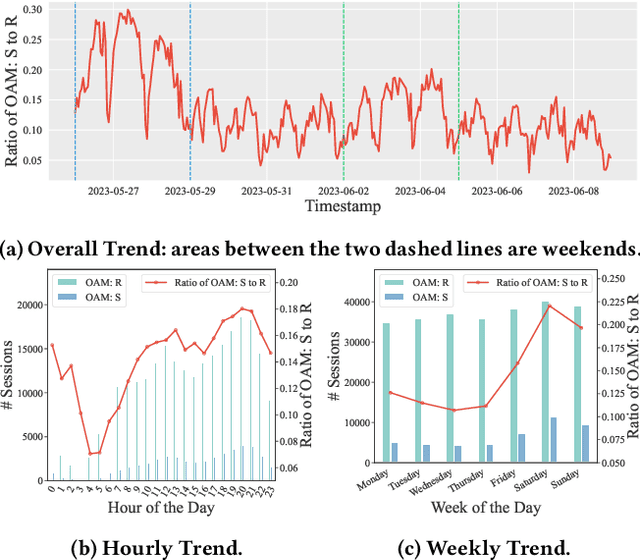

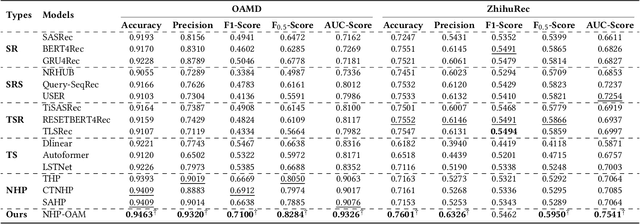

Abstract: Incorporating Search and Recommendation (S&R) services within a singular application is prevalent in online platforms, leading to a new task termed open-app motivation prediction, which aims to predict whether users initiate the application with the specific intent of information searching, or to explore recommended content for entertainment. Studies have shown that predicting users' motivation to open an app can help to improve user engagement and enhance performance in various downstream tasks. However, accurately predicting open-app motivation is not trivial, as it is influenced by user-specific factors, search queries, clicked items, as well as their temporal occurrences. Furthermore, these activities occur sequentially and exhibit intricate temporal dependencies. Inspired by the success of the Neural Hawkes Process (NHP) in modeling temporal dependencies in sequences, this paper proposes a novel neural Hawkes process model to capture the temporal dependencies between historical user browsing and querying actions. The model, referred to as Neural Hawkes Process-based Open-App Motivation prediction model (NHP-OAM), employs a hierarchical transformer and a novel intensity function to encode multiple factors, and an open-app motivation prediction layer to integrate time and user-specific information for predicting users' open-app motivations. To demonstrate the superiority of our NHP-OAM model and construct a benchmark for the Open-App Motivation Prediction task, we not only extend the public S&R dataset ZhihuRec but also construct a new real-world Open-App Motivation Dataset (OAMD). Experiments on these two datasets validate NHP-OAM's superiority over baseline models. Further downstream application experiments demonstrate NHP-OAM's effectiveness in predicting users' Open-App Motivation, highlighting the immense application value of NHP-OAM.
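
For intuition about the Hawkes process that the model builds on, the sketch below evaluates the classical (non-neural) intensity with an exponential kernel, lambda(t) = mu + sum over past events of alpha * exp(-beta * (t - t_i)). This is the textbook form only, not the intensity function proposed in NHP-OAM, and the parameter names `mu`, `alpha`, and `beta` are the usual conventions rather than anything taken from the paper.

```python
import math


def hawkes_intensity(t, event_times, mu, alpha, beta):
    """Classical Hawkes intensity with an exponential decay kernel.

    mu: baseline rate; alpha: jump added by each past event;
    beta: decay rate of each event's influence.
    Only events strictly before time t contribute.
    """
    excitation = sum(
        alpha * math.exp(-beta * (t - t_i))
        for t_i in event_times
        if t_i < t
    )
    return mu + excitation


# One past event at time 0: its influence fades as t grows.
soon_after = hawkes_intensity(1.0, [0.0], mu=0.2, alpha=0.8, beta=1.0)
much_later = hawkes_intensity(5.0, [0.0], mu=0.2, alpha=0.8, beta=1.0)
```

Each past action temporarily raises the rate of future actions and the effect decays with time, which is the kind of temporal dependency between browsing and querying actions that the abstract refers to.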

Large Language Models Enhanced Collaborative Filtering

Mar 26, 2024

Abstract: Recent advancements in Large Language Models (LLMs) have attracted considerable interest among researchers to leverage these models to enhance Recommender Systems (RSs). Existing work predominantly utilizes LLMs to generate knowledge-rich texts or utilizes LLM-derived embeddings as features to improve RSs. Although the extensive world knowledge embedded in LLMs generally benefits RSs, the application can only take a limited number of users and items as inputs, without adequately exploiting collaborative filtering information. Considering its crucial role in RSs, one key challenge in enhancing RSs with LLMs lies in providing better collaborative filtering information through LLMs. In this paper, drawing inspiration from the in-context learning and chain of thought reasoning in LLMs, we propose the Large Language Models enhanced Collaborative Filtering (LLM-CF) framework, which distils the world knowledge and reasoning capabilities of LLMs into collaborative filtering. We also explored a concise and efficient instruction-tuning method, which improves the recommendation capabilities of LLMs while preserving their general functionalities (e.g., not decreasing on the LLM benchmark). Comprehensive experiments on three real-world datasets demonstrate that LLM-CF significantly enhances several backbone recommendation models and consistently outperforms competitive baselines, showcasing its effectiveness in distilling the world knowledge and reasoning capabilities of LLM into collaborative filtering.

Incorporating Judgment Prediction into Legal Case Retrieval via Law-aware Generative Retrieval

Dec 15, 2023

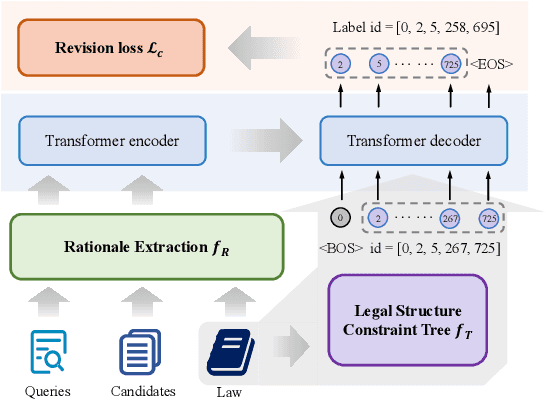

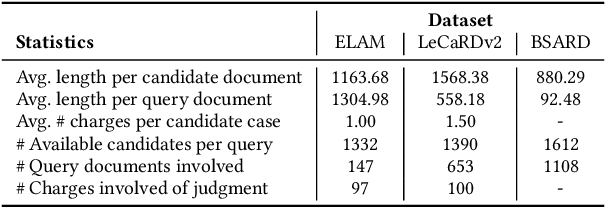

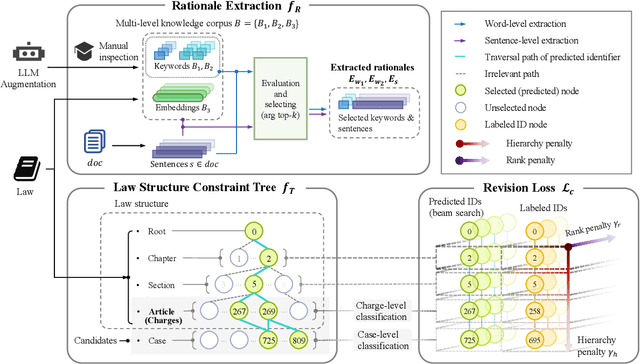

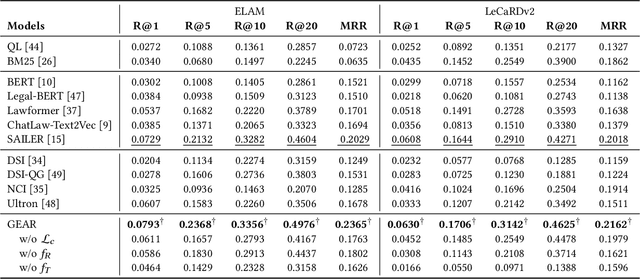

Abstract: Legal case retrieval and judgment prediction are crucial components in intelligent legal systems. In practice, determining whether two cases share the same charges through legal judgment prediction is essential for establishing their relevance in case retrieval. However, current studies on legal case retrieval merely focus on the semantic similarity between paired cases, ignoring their charge-level consistency. This separation leads to a lack of context and potential inaccuracies in the case retrieval that can undermine trust in the system's decision-making process. Given the guiding role of laws in both tasks and inspired by the success of generative retrieval, in this work, we propose to incorporate judgment prediction into legal case retrieval, achieving a novel law-aware Generative legal case retrieval method called Gear. Specifically, Gear first extracts rationales (key circumstances and key elements) for legal cases according to the definition of charges in laws, ensuring a shared and informative representation for both tasks. Then, in accordance with the inherent hierarchy of laws, we construct a law structure constraint tree and assign law-aware semantic identifier(s) to each case based on this tree. These designs enable a unified traversal from the root, through intermediate charge nodes, to case-specific leaf nodes, which respectively correspond to two tasks. Additionally, during training, we also introduce a revision loss that jointly minimizes the discrepancy between the identifiers of predicted and labeled charges as well as retrieved cases, improving the accuracy and consistency for both tasks. Extensive experiments on two datasets demonstrate that Gear consistently outperforms state-of-the-art methods in legal case retrieval while maintaining competitive judgment prediction performance.

Generative Retrieval with Semantic Tree-Structured Item Identifiers via Contrastive Learning

Sep 23, 2023

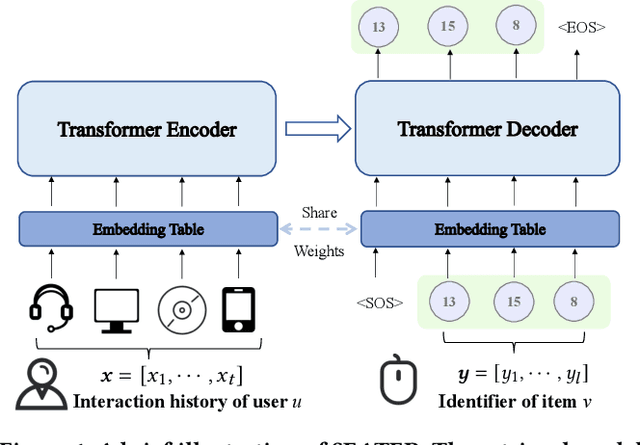

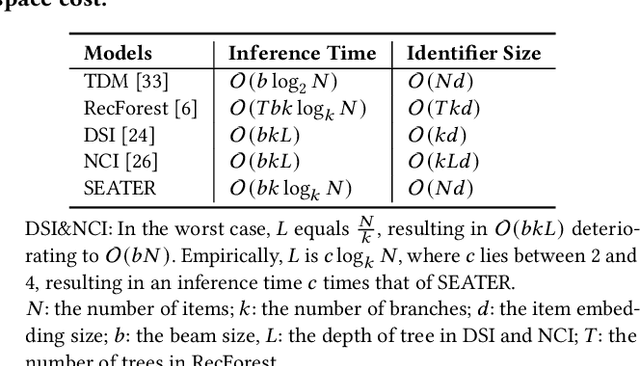

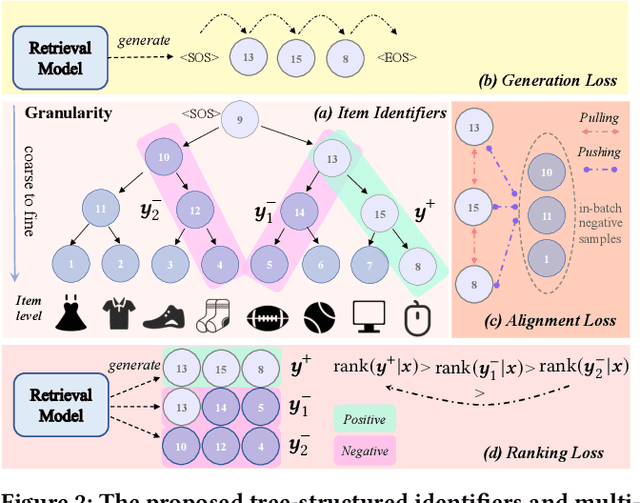

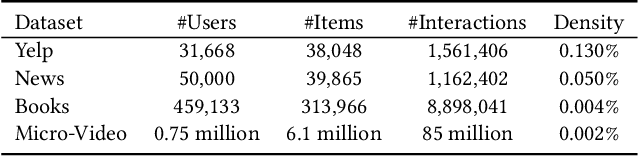

Abstract: The retrieval phase is a vital component in recommendation systems, requiring the model to be effective and efficient. Recently, generative retrieval has become an emerging paradigm for document retrieval, showing notable performance. These methods enjoy merits like being end-to-end differentiable, suggesting their viability in recommendation. However, these methods fall short in efficiency and effectiveness for large-scale recommendations. To obtain efficiency and effectiveness, this paper introduces a generative retrieval framework, namely SEATER, which learns SEmAntic Tree-structured item identifiERs via contrastive learning. Specifically, we employ an encoder-decoder model to extract user interests from historical behaviors and retrieve candidates via tree-structured item identifiers. SEATER devises a balanced k-ary tree structure of item identifiers, allocating semantic space to each token individually. This strategy maintains semantic consistency within the same level, while distinct levels correlate to varying semantic granularities. This structure also maintains consistent and fast inference speed for all items. Considering the tree structure, SEATER learns identifier tokens' semantics, hierarchical relationships, and inter-token dependencies. To achieve this, we incorporate two contrastive learning tasks with the generation task to optimize both the model and identifiers. The infoNCE loss aligns the token embeddings based on their hierarchical positions. The triplet loss ranks similar identifiers in desired orders. In this way, SEATER achieves both efficiency and effectiveness. Extensive experiments on three public datasets and an industrial dataset have demonstrated that SEATER outperforms state-of-the-art models significantly.
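
The InfoNCE loss named in the abstract has a standard general form, sketched below for a single anchor. How SEATER selects positives and negatives from the identifier tree is not shown; the function name `info_nce`, the dot-product similarity, and the `temperature` value are assumptions made for illustration.

```python
import math


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def info_nce(anchor, positive, negatives, temperature=0.1):
    """Generic InfoNCE loss for one anchor.

    Computes -log( exp(s_pos / T) / sum_j exp(s_j / T) ), where the sum
    runs over the positive and all negatives.
    """
    logits = [dot(anchor, positive) / temperature]
    logits += [dot(anchor, neg) / temperature for neg in negatives]
    top = max(logits)  # log-sum-exp trick for numerical stability
    log_partition = top + math.log(sum(math.exp(l - top) for l in logits))
    return log_partition - logits[0]


# Anchor aligned with its positive: small loss.
aligned = info_nce([1.0, 0.0], [1.0, 0.0], [[0.0, 1.0]])
# Anchor aligned with a negative instead: large loss.
misaligned = info_nce([1.0, 0.0], [0.0, 1.0], [[1.0, 0.0]])
```

The loss is small when the anchor is more similar to its positive than to any negative, so minimizing it pulls related embeddings together and pushes unrelated ones apart.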

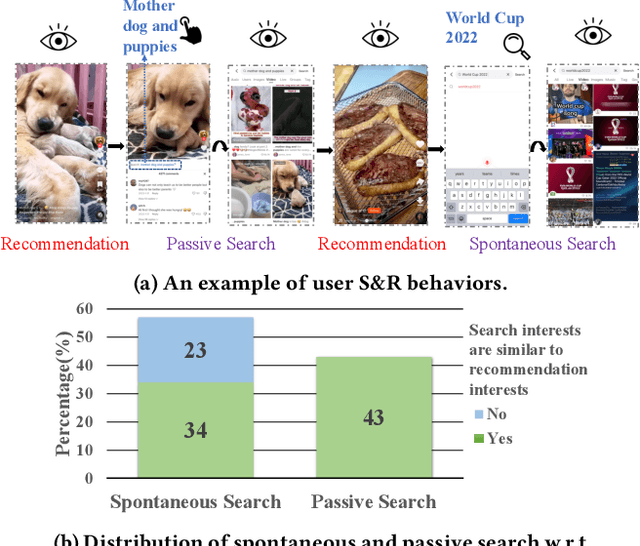

KuaiSAR: A Unified Search And Recommendation Dataset

Jun 18, 2023

Abstract: The confluence of Search and Recommendation (S&R) services is vital to online services, including e-commerce and video platforms. The integration of S&R modeling is a highly intuitive approach adopted by industry practitioners. However, there is a noticeable lack of research conducted in this area within academia, primarily due to the absence of publicly available datasets. Consequently, a substantial gap has emerged between academia and industry regarding research endeavors in joint optimization using user behavior data from both S&R services. To bridge this gap, we introduce the first large-scale, real-world dataset KuaiSAR of integrated Search And Recommendation behaviors collected from Kuaishou, a leading short-video app in China with over 350 million daily active users. Previous research in this field has predominantly employed publicly available semi-synthetic and simulated datasets with artificially fabricated search behaviors. Distinct from previous datasets, KuaiSAR contains genuine user behaviors, including the occurrence of each interaction within either search or recommendation service, and the users' transitions between the two services. This work aids in joint modeling of S&R, and utilizing search data for recommender systems (and recommendation data for search engines). Furthermore, due to the various feedback labels associated with user-video interactions, KuaiSAR also supports a broad range of tasks, including intent recommendation, multi-task learning, and modeling of long sequential multi-behavioral patterns. We believe this dataset will serve as a catalyst for innovative research and bridge the gap between academia and industry in understanding the S&R services in practical, real-world applications.

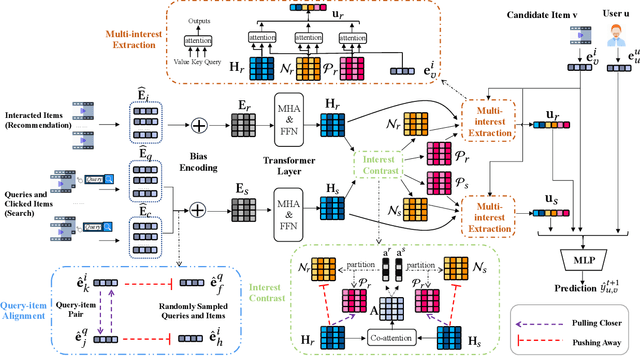

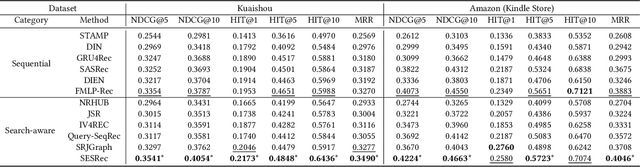

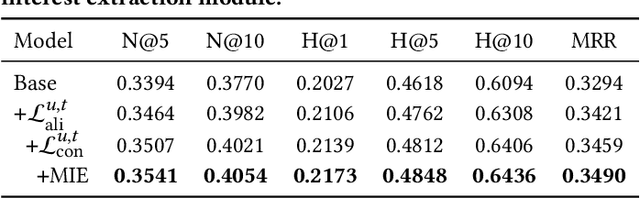

When Search Meets Recommendation: Learning Disentangled Search Representation for Recommendation

May 18, 2023

Abstract: Modern online service providers such as online shopping platforms often provide both search and recommendation (S&R) services to meet different user needs. Rarely has there been any effective means of incorporating user behavior data from both S&R services. Most existing approaches either simply treat S&R behaviors separately, or jointly optimize them by aggregating data from both services, ignoring the fact that user intents in S&R can be distinctively different. In our paper, we propose a Search-Enhanced framework for the Sequential Recommendation (SESRec) that leverages users' search interests for recommendation, by disentangling similar and dissimilar representations within S&R behaviors. Specifically, SESRec first aligns query and item embeddings based on users' query-item interactions for the computations of their similarities. Two transformer encoders are used to learn the contextual representations of S&R behaviors independently. Then a contrastive learning task is designed to supervise the disentanglement of similar and dissimilar representations from behavior sequences of S&R. Finally, we extract user interests by the attention mechanism from three perspectives, i.e., the contextual representations, the two separated behaviors containing similar and dissimilar interests. Extensive experiments on both industrial and public datasets demonstrate that SESRec consistently outperforms state-of-the-art models. Empirical studies further validate that SESRec successfully disentangles similar and dissimilar user interests from their S&R behaviors.

Uncovering ChatGPT's Capabilities in Recommender Systems

May 11, 2023

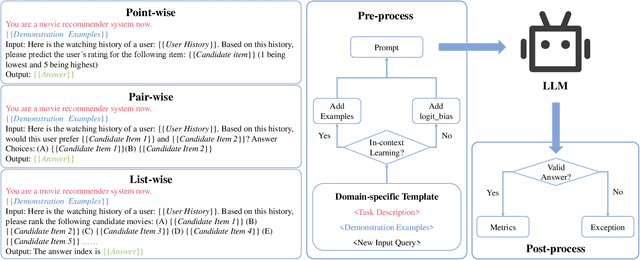

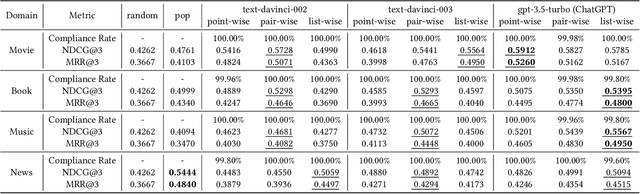

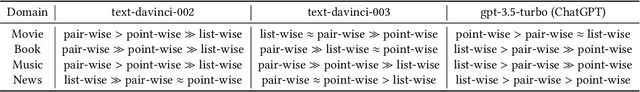

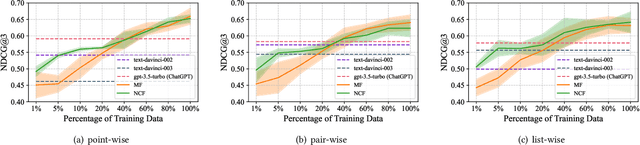

Abstract: The debut of ChatGPT has recently attracted the attention of the natural language processing (NLP) community and beyond. Existing studies have demonstrated that ChatGPT shows significant improvement in a range of downstream NLP tasks, but the capabilities and limitations of ChatGPT in terms of recommendations remain unclear. In this study, we aim to conduct an empirical analysis of ChatGPT's recommendation ability from an Information Retrieval (IR) perspective, including point-wise, pair-wise, and list-wise ranking. To achieve this goal, we re-formulate the above three recommendation policies into a domain-specific prompt format. Through extensive experiments on four datasets from different domains, we demonstrate that ChatGPT outperforms other large language models across all three ranking policies. Based on the analysis of unit cost improvements, we identify that ChatGPT with list-wise ranking achieves the best trade-off between cost and performance compared to point-wise and pair-wise ranking. Moreover, ChatGPT shows the potential for mitigating the cold start problem and explainable recommendation. To facilitate further explorations in this area, the full code and detailed original results are open-sourced at https://github.com/rainym00d/LLM4RS.
