Yukie Nagai

Symmetric Behavior Regularization via Taylor Expansion of Symmetry

Aug 06, 2025

Abstract:This paper introduces symmetric divergences to behavior regularization policy optimization (BRPO) to establish a novel offline RL framework. Existing methods focus on asymmetric divergences such as KL to obtain analytic regularized policies and a practical minimization objective. We show that symmetric divergences do not permit an analytic policy as regularization and can incur numerical issues as loss. We tackle these challenges by the Taylor series of $f$-divergence. Specifically, we prove that an analytic policy can be obtained with a finite series. For loss, we observe that symmetric divergences can be decomposed into an asymmetry and a conditional symmetry term, Taylor-expanding the latter alleviates numerical issues. Summing together, we propose Symmetric $f$ Actor-Critic (S$f$-AC), the first practical BRPO algorithm with symmetric divergences. Experimental results on distribution approximation and MuJoCo verify that S$f$-AC performs competitively.

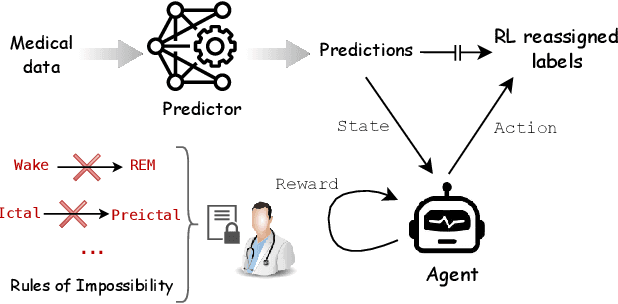

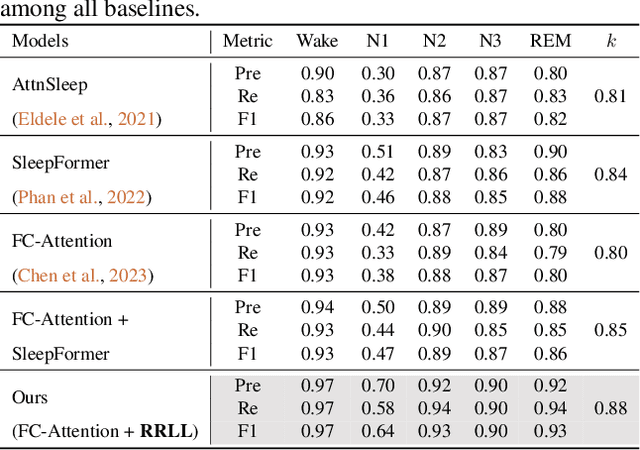

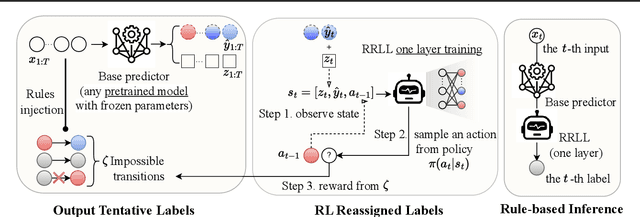

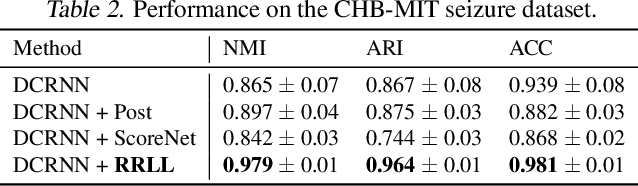

Towards Physiologically Sensible Predictions via the Rule-based Reinforcement Learning Layer

Jan 31, 2025

Abstract:This paper adds to the growing literature of reinforcement learning (RL) for healthcare by proposing a novel paradigm: augmenting any predictor with Rule-based RL Layer (RRLL) that corrects the model's physiologically impossible predictions. Specifically, RRLL takes as input states predicted labels and outputs corrected labels as actions. The reward of the state-action pair is evaluated by a set of general rules. RRLL is efficient, general and lightweight: it does not require heavy expert knowledge like prior work but only a set of impossible transitions. This set is much smaller than all possible transitions; yet it can effectively reduce physiologically impossible mistakes made by the state-of-the-art predictor models. We verify the utility of RRLL on a variety of important healthcare classification problems and observe significant improvements using the same setup, with only the domain-specific set of impossibility changed. In-depth analysis shows that RRLL indeed improves accuracy by effectively reducing the presence of physiologically impossible predictions.

Fat-to-Thin Policy Optimization: Offline RL with Sparse Policies

Jan 24, 2025

Abstract:Sparse continuous policies are distributions that can choose some actions at random yet keep strictly zero probability for the other actions, which are radically different from the Gaussian. They have important real-world implications, e.g. in modeling safety-critical tasks like medicine. The combination of offline reinforcement learning and sparse policies provides a novel paradigm that enables learning completely from logged datasets a safety-aware sparse policy. However, sparse policies can cause difficulty with the existing offline algorithms which require evaluating actions that fall outside of the current support. In this paper, we propose the first offline policy optimization algorithm that tackles this challenge: Fat-to-Thin Policy Optimization (FtTPO). Specifically, we maintain a fat (heavy-tailed) proposal policy that effectively learns from the dataset and injects knowledge to a thin (sparse) policy, which is responsible for interacting with the environment. We instantiate FtTPO with the general $q$-Gaussian family that encompasses both heavy-tailed and sparse policies and verify that it performs favorably in a safety-critical treatment simulation and the standard MuJoCo suite. Our code is available at \url{https://github.com/lingweizhu/fat2thin}.

Affordance Blending Networks

Apr 24, 2024

Abstract:Affordances, a concept rooted in ecological psychology and pioneered by James J. Gibson, have emerged as a fundamental framework for understanding the dynamic relationship between individuals and their environments. Expanding beyond traditional perceptual and cognitive paradigms, affordances represent the inherent effect and action possibilities that objects offer to the agents within a given context. As a theoretical lens, affordances bridge the gap between effect and action, providing a nuanced understanding of the connections between agents' actions on entities and the effect of these actions. In this study, we propose a model that unifies object, action and effect into a single latent representation in a common latent space that is shared between all affordances that we call the affordance space. Using this affordance space, our system is able to generate effect trajectories when action and object are given and is able to generate action trajectories when effect trajectories and objects are given. In the experiments, we showed that our model does not learn the behavior of each object but it learns the affordance relations shared by the objects that we call equivalences. In addition to simulated experiments, we showed that our model can be used for direct imitation in real world cases. We also propose affordances as a base for Cross Embodiment transfer to link the actions of different robots. Finally, we introduce selective loss as a solution that allows valid outputs to be generated for indeterministic model inputs.

Correspondence learning between morphologically different robots through task demonstrations

Oct 20, 2023

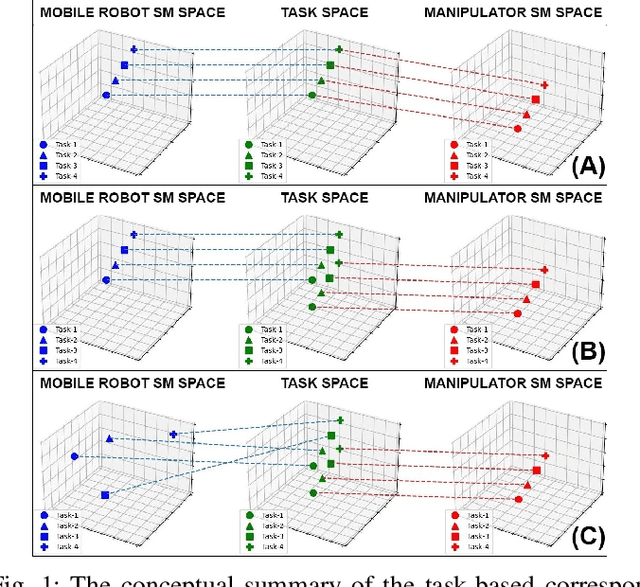

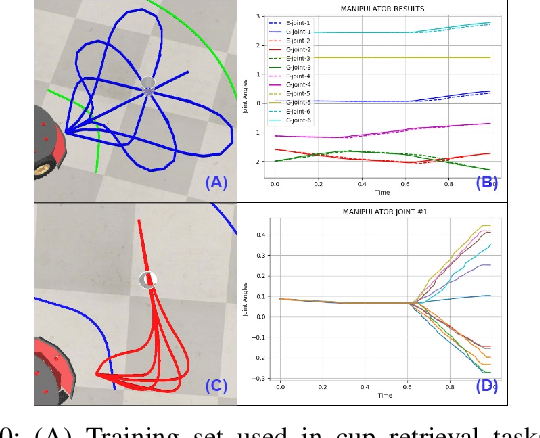

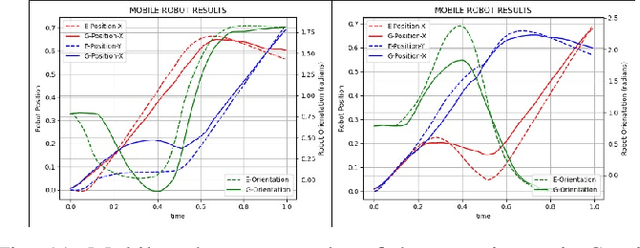

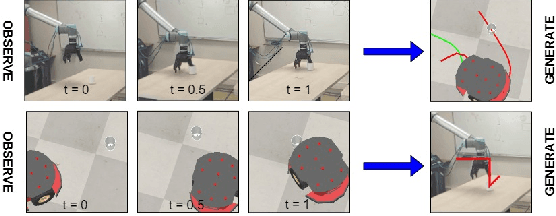

Abstract:We observe a large variety of robots in terms of their bodies, sensors, and actuators. Given the commonalities in the skill sets, teaching each skill to each different robot independently is inefficient and not scalable when the large variety in the robotic landscape is considered. If we can learn the correspondences between the sensorimotor spaces of different robots, we can expect a skill that is learned in one robot can be more directly and easily transferred to the other robots. In this paper, we propose a method to learn correspondences between robots that have significant differences in their morphologies: a fixed-based manipulator robot with joint control and a differential drive mobile robot. For this, both robots are first given demonstrations that achieve the same tasks. A common latent representation is formed while learning the corresponding policies. After this initial learning stage, the observation of a new task execution by one robot becomes sufficient to generate a latent space representation pertaining to the other robot to achieve the same task. We verified our system in a set of experiments where the correspondence between two simulated robots is learned (1) when the robots need to follow the same paths to achieve the same task, (2) when the robots need to follow different trajectories to achieve the same task, and (3) when complexities of the required sensorimotor trajectories are different for the robots considered. We also provide a proof-of-the-concept realization of correspondence learning between a real manipulator robot and a simulated mobile robot.

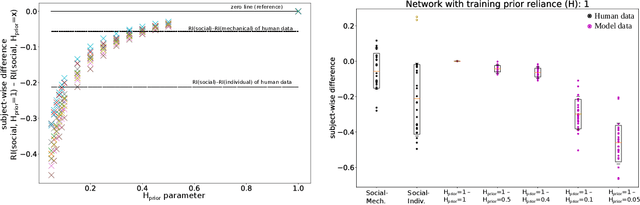

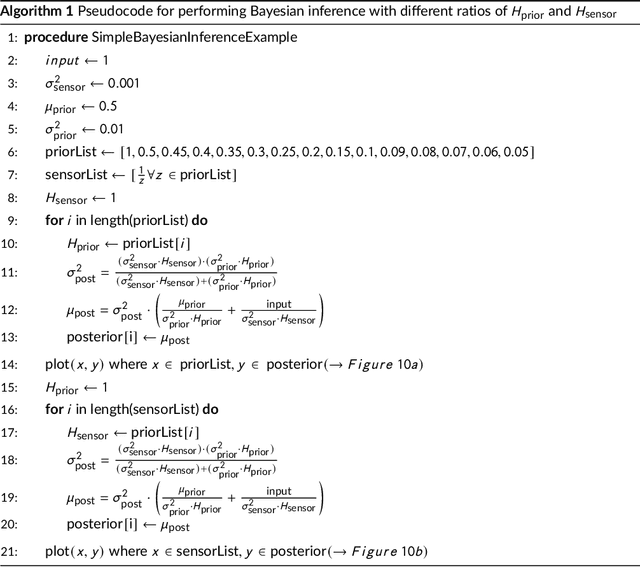

The world seems different in a social context: a neural network analysis of human experimental data

Mar 03, 2022

Abstract:Human perception and behavior are affected by the situational context, in particular during social interactions. A recent study demonstrated that humans perceive visual stimuli differently depending on whether they do the task by themselves or together with a robot. Specifically, it was found that the central tendency effect is stronger in social than in non-social task settings. The particular nature of such behavioral changes induced by social interaction, and their underlying cognitive processes in the human brain are, however, still not well understood. In this paper, we address this question by training an artificial neural network inspired by the predictive coding theory on the above behavioral data set. Using this computational model, we investigate whether the change in behavior that was caused by the situational context in the human experiment could be explained by continuous modifications of a parameter expressing how strongly sensory and prior information affect perception. We demonstrate that it is possible to replicate human behavioral data in both individual and social task settings by modifying the precision of prior and sensory signals, indicating that social and non-social task settings might in fact exist on a continuum. At the same time an analysis of the neural activation traces of the trained networks provides evidence that information is coded in fundamentally different ways in the network in the individual and in the social conditions. Our results emphasize the importance of computational replications of behavioral data for generating hypotheses on the underlying cognitive mechanisms of shared perception and may provide inspiration for follow-up studies in the field of neuroscience.

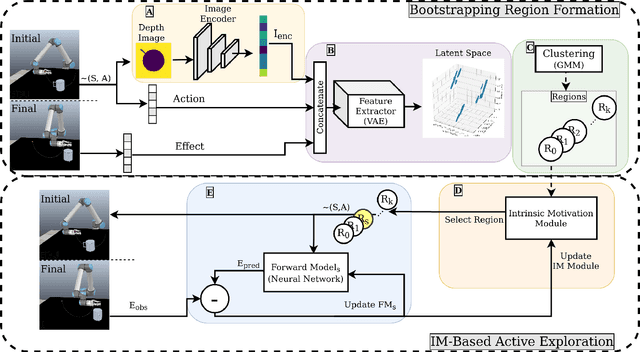

Intrinsic Motivation in Object-Action-Outcome Blending Latent Space

Aug 26, 2020

Abstract:One effective approach for equipping artificial agents with sensorimotor skills is to use self-exploration. To do this efficiently is critical as time and data collection are costly. In this study, we propose an exploration mechanism that blends action, object, and action outcome representations into a latent space, where local regions are formed to host forward model learning. The agent uses intrinsic motivation to select the forward model with the highest learning progress to adapt at a given exploration step. This parallels how infants learn, as high learning progress indicates that the learning problem is neither too easy nor too difficult in the selected region. The proposed approach is validated with a simulated robot in a table-top environment. The robot interacts with different kinds of objects using a set of parameterized actions and learns the outcomes of these interactions. With the proposed approach, the robot organizes its own curriculum of learning as in existing intrinsic motivation approaches and outperforms them in terms of learning speed. Moreover, the learning regime demonstrates features that partially match infant development, in particular, the proposed system learns to predict grasp action outcomes earlier than that of push action.

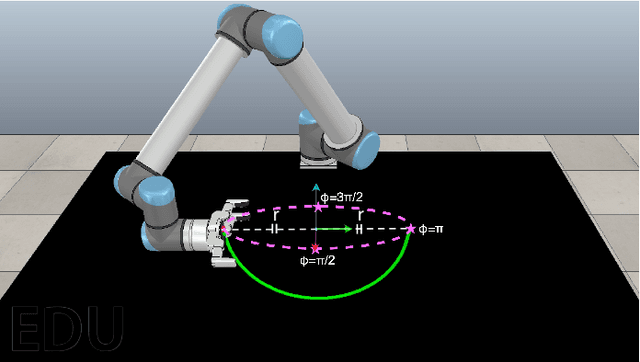

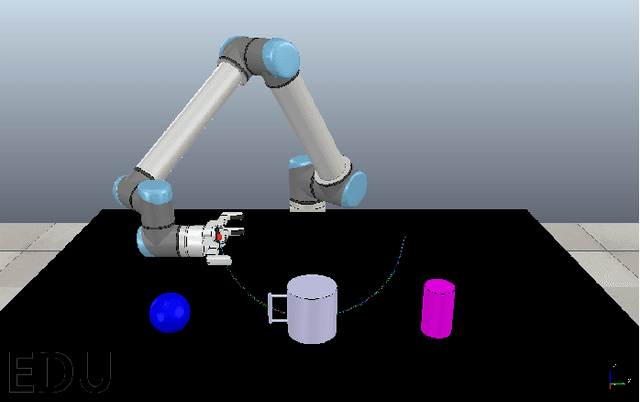

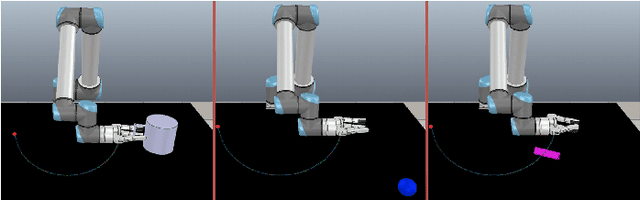

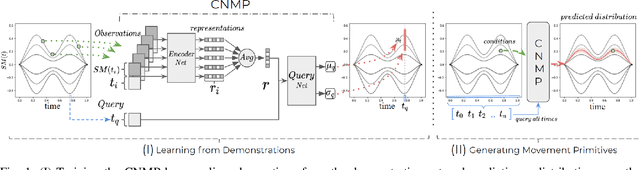

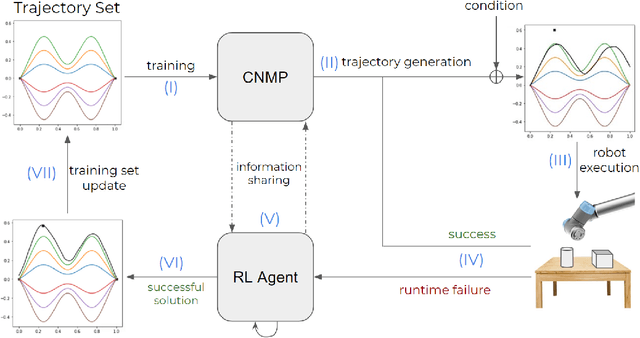

Adaptive Conditional Neural Movement Primitives via Representation Sharing Between Supervised and Reinforcement Learning

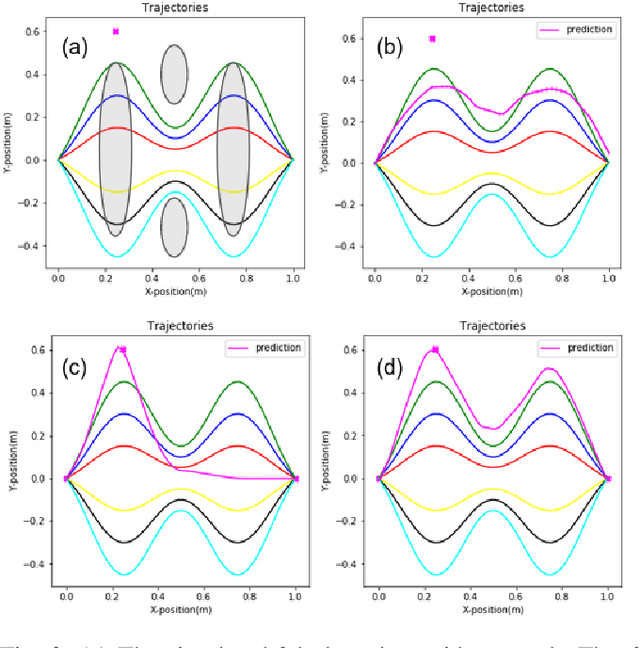

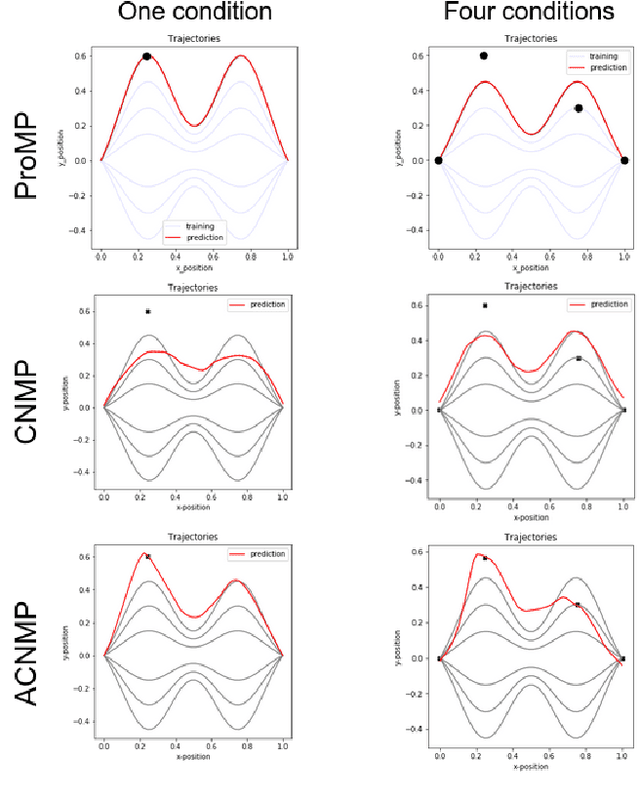

Mar 25, 2020

Abstract:Learning by Demonstration provides a sample efficient way to equip robots with complex sensorimotor skills in supervised manner. Several movement primitive representations can be used for flexible motor representation and learning. A recent state-of-the art approach is Conditional Neural Movement Primitives (CNMP) that can learn non-linear relations between environment parameters and complex multi-modal trajectories from a few expert demonstrations by forming powerful latent space representations. In this study, to improve the applicability of CNMP to changing tasks and/or environments, we couple it with a reinforcement learning agent that exploits the formed representations by the original CNMP network, and learns to generate synthetic demonstrations for further learning. This enables the CNMP network to generalize to new environments by adapting its internal representations. In the current implementation, the reinforcement learning agent is triggered when a failure in task execution is detected, and the CNMP is trained with the newly discovered demonstration (trajectory), which shares essential characteristics with the original demonstrations due to the representation sharing. As a result, the overall system increases its capacity and handle situations in scenarios where the initial CNMP network can not produce a useful trajectory. To show the validity of our proposed model, we compare our approach with original CNMP work and other movement primitives approaches. Furthermore, we presents the experimental results from the implementation of the proposed model on real robotics setups, which indicate the applicability of our approach as an effective adaptive learning by demonstration system.

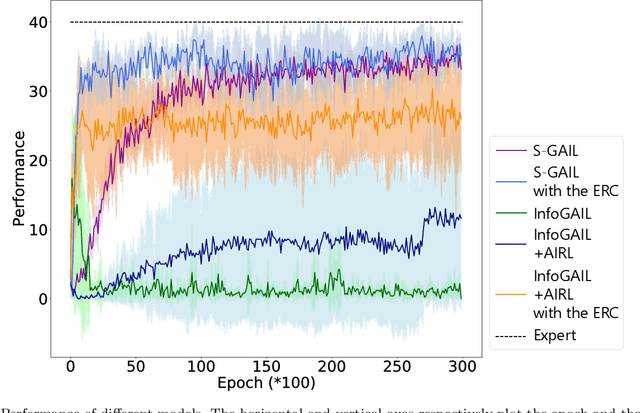

Situated GAIL: Multitask imitation using task-conditioned adversarial inverse reinforcement learning

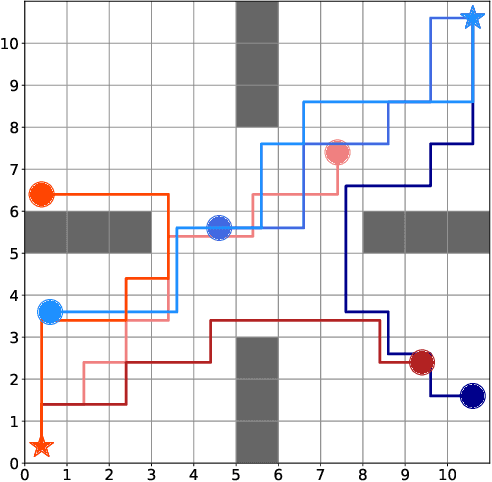

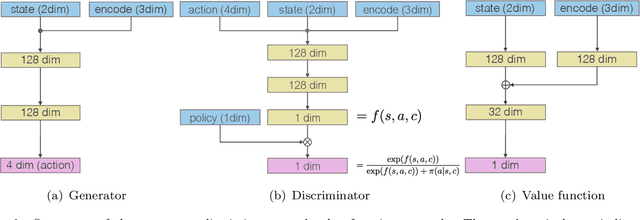

Nov 01, 2019

Abstract:Generative adversarial imitation learning (GAIL) has attracted increasing attention in the field of robot learning. It enables robots to learn a policy to achieve a task demonstrated by an expert while simultaneously estimating the reward function behind the expert's behaviors. However, this framework is limited to learning a single task with a single reward function. This study proposes an extended framework called situated GAIL (S-GAIL), in which a task variable is introduced to both the discriminator and generator of the GAIL framework. The task variable has the roles of discriminating different contexts and making the framework learn different reward functions and policies for multiple tasks. To achieve the early convergence of learning and robustness during reward estimation, we introduce a term to adjust the entropy regularization coefficient in the generator's objective function. Our experiments using two setups (navigation in a discrete grid world and arm reaching in a continuous space) demonstrate that the proposed framework can acquire multiple reward functions and policies more effectively than existing frameworks. The task variable enables our framework to differentiate contexts while sharing common knowledge among multiple tasks.

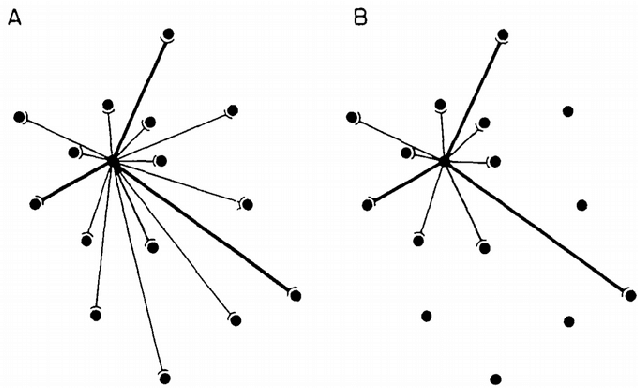

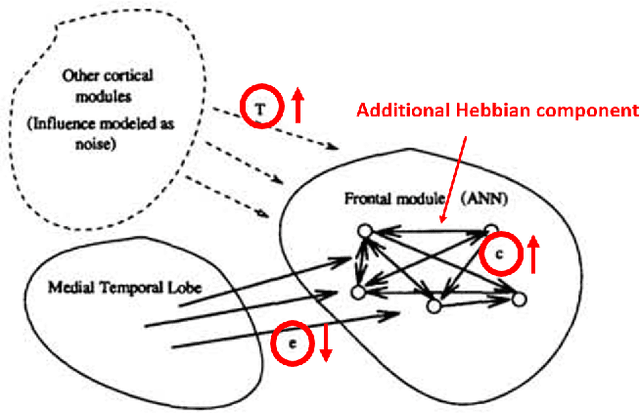

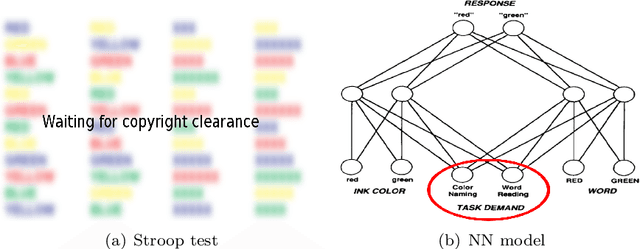

A Review on Neural Network Models of Schizophrenia and Autism Spectrum Disorder

Jun 24, 2019

Abstract:This survey presents the most relevant neural network models of autism spectrum disorder and schizophrenia, from the first connectionist models to recent deep network architectures. We analyzed and compared the most representative symptoms with its neural model counterpart, detailing the alteration introduced in the network that generates each of the symptoms, and identifying their strengths and weaknesses. For completeness we additionally cross-compared Bayesian and free-energy approaches. Models of schizophrenia mainly focused on hallucinations and delusional thoughts using neural disconnections or inhibitory imbalance as the predominating alteration. Models of autism rather focused on perceptual difficulties, mainly excessive attention to environment details, implemented as excessive inhibitory connections or increased sensory precision. We found an excessive tight view of the psychopathologies around one specific and simplified effect, usually constrained to the technical idiosyncrasy of the network used. Recent theories and evidence on sensorimotor integration and body perception combined with modern neural network architectures offer a broader and novel spectrum to approach these psychopathologies, outlining the future research on neural networks computational psychiatry, a powerful asset for understanding the inner processes of the human brain.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge