Improving interactive reinforcement learning: What makes a good teacher?


Apr 15, 2019

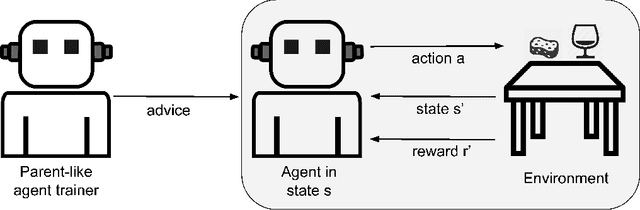

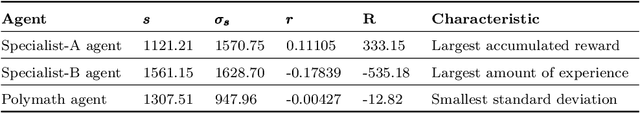

Interactive reinforcement learning has become an important apprenticeship approach to speed up convergence in classic reinforcement learning problems. One variant of interactive reinforcement learning is policy shaping, which uses a parent-like trainer to propose the next action to be performed and thereby reduces the search space through advice. On some occasions, the trainer may be another artificial agent that was itself trained using reinforcement learning methods and afterward becomes an advisor for other learner-agents. In this work, we analyze internal representations and characteristics of artificial agents to determine which agent may outperform others as a trainer-agent. Using a polymath agent as an advisor, as compared to a specialist agent, leads to a larger reward and faster convergence of the reward signal, and also to more stable behavior in terms of the state visit frequency of the learner-agents. Moreover, we analyze system interaction parameters to determine how influential they are in the apprenticeship process; the consistency of feedback turns out to be much more relevant when dealing with different learner obedience parameters.
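To make the interaction parameters concrete, the following is a minimal sketch of one advice step in a policy-shaping setup. It is not the authors' implementation: the function names `give_advice` and `choose_action`, the tabular Q-value lists, and the epsilon-greedy fallback are all assumptions made for illustration. Only the notions of feedback consistency and learner obedience come from the abstract.

```python
import random


def give_advice(trainer_q, consistency, rng):
    """Return the trainer-agent's advised action for one state (hypothetical helper).

    With probability `consistency` the trainer advises its own greedy action;
    otherwise it advises a different action chosen uniformly at random.
    """
    n_actions = len(trainer_q)
    best = max(range(n_actions), key=lambda a: trainer_q[a])
    if rng.random() < consistency:
        return best
    others = [a for a in range(n_actions) if a != best]
    return rng.choice(others) if others else best


def choose_action(learner_q, advice, obedience, epsilon, rng):
    """Pick the learner-agent's action (hypothetical helper).

    The learner follows the advice with probability `obedience`; otherwise it
    falls back to an assumed epsilon-greedy choice over its own Q-values.
    """
    if advice is not None and rng.random() < obedience:
        return advice
    if rng.random() < epsilon:
        return rng.randrange(len(learner_q))
    return max(range(len(learner_q)), key=lambda a: learner_q[a])


if __name__ == "__main__":
    rng = random.Random(0)
    trainer_q = [0.1, 0.9, 0.3]   # illustrative values only
    learner_q = [0.5, 0.0, 0.2]   # illustrative values only
    advice = give_advice(trainer_q, consistency=0.8, rng=rng)
    action = choose_action(learner_q, advice, obedience=0.7, epsilon=0.1, rng=rng)
    print("advised:", advice, "chosen:", action)
```

In this sketch, lowering `consistency` makes the advice itself less reliable, while lowering `obedience` makes the learner ignore advice more often, which is one simple way to vary the two parameters independently.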
