Serge Thill

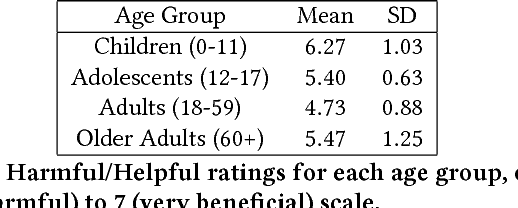

From Modelling to Understanding Children's Behaviour in the Context of Robotics and Social Artificial Intelligence

Oct 20, 2022

Abstract: Understanding and modelling children's cognitive processes and their behaviour in the context of their interaction with robots and social artificial intelligence systems is a fundamental prerequisite for meaningful and effective robot interventions. However, children's development involves complex faculties such as exploration, creativity and curiosity, which are challenging to model. Also, children often express themselves in a playful way that differs from typical adult behaviour. Different children also have different needs, and it remains a challenge in the current state of the art that those of neurodiverse children are under-addressed. With this workshop, we aim to promote a common ground among different disciplines such as developmental sciences, artificial intelligence and social robotics and discuss cutting-edge research in the area of user modelling and adaptive systems for children.

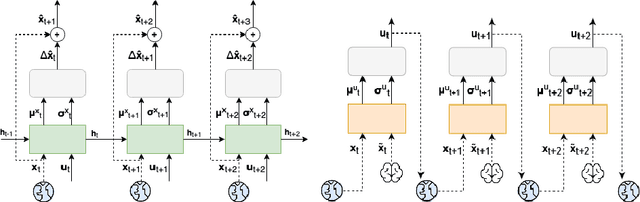

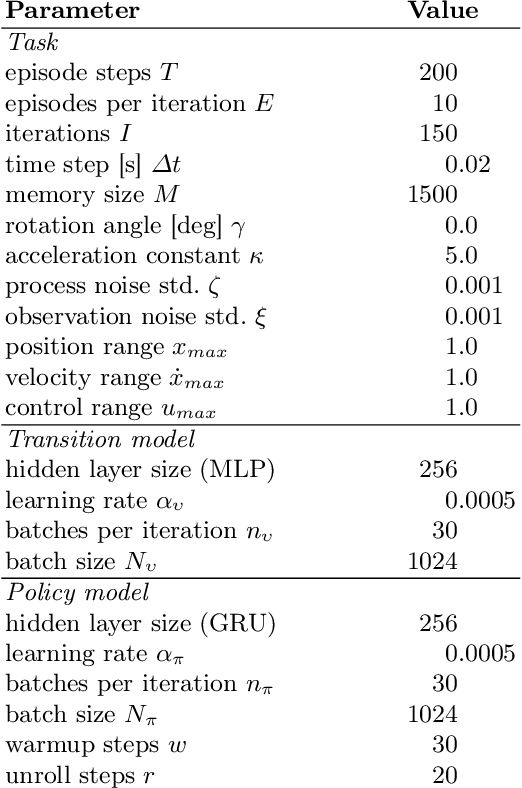

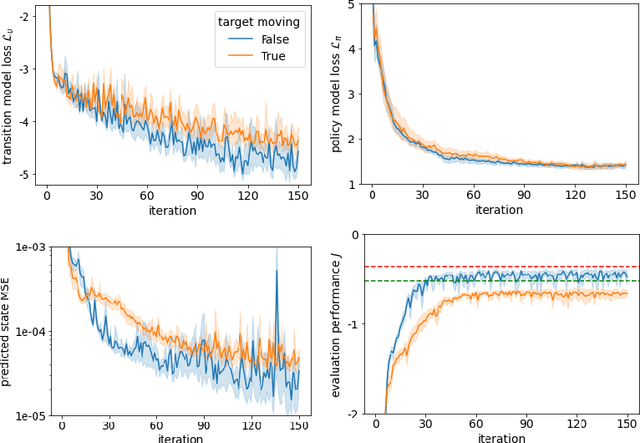

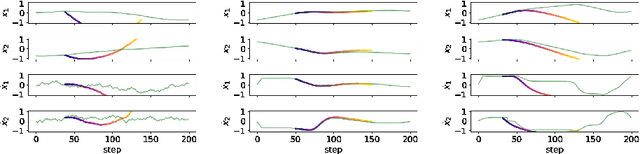

Learning Policies for Continuous Control via Transition Models

Sep 16, 2022

Abstract: It is doubtful that animals have perfect inverse models of their limbs (e.g., what muscle contraction must be applied to every joint to reach a particular location in space). However, in robot control, moving an arm's end-effector to a target position or along a target trajectory requires accurate forward and inverse models. Here we show that by learning the transition (forward) model from interaction, we can use it to drive the learning of an amortized policy. Hence, we revisit policy optimization in relation to the deep active inference framework and describe a modular neural network architecture that simultaneously learns the system dynamics from prediction errors and the stochastic policy that generates suitable continuous control commands to reach a desired reference position. We evaluated the model by comparing it against the baseline of a linear quadratic regulator, and conclude with additional steps to take toward human-like motor control.
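For readers unfamiliar with the baseline named in this abstract, the following is a minimal sketch of a discrete-time linear quadratic regulator driving a state to a reference position. It is not taken from the paper: the double-integrator system, the cost matrices `Q` and `R`, the time step `dt`, and the helper names `lqr_gain` and `simulate` are all assumptions chosen for illustration.

```python
import numpy as np

def lqr_gain(A, B, Q, R, iterations=500):
    """Iterate the discrete-time Riccati recursion and return the feedback gain K."""
    P = Q.copy()
    for _ in range(iterations):
        # K = (R + B^T P B)^{-1} B^T P A
        K = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
        # P = Q + A^T P (A - B K)
        P = Q + A.T @ P @ (A - B @ K)
    return K

# Assumed toy system: a 1-D point mass with state [position, velocity].
dt = 0.1
A = np.array([[1.0, dt], [0.0, 1.0]])
B = np.array([[0.0], [dt]])
Q = np.eye(2)            # assumed state cost
R = np.array([[0.1]])    # assumed control cost

K = lqr_gain(A, B, Q, R)

def simulate(K, x0, target, steps=200):
    """Apply u = -K (x - target) for a fixed number of steps and return the final state."""
    x = x0.copy()
    for _ in range(steps):
        u = -K @ (x - target)
        x = A @ x + B @ u
    return x

# Start at rest at position 0 and regulate toward position 1 with zero velocity.
final_state = simulate(K, np.array([0.0, 0.0]), np.array([1.0, 0.0]))
```

Unlike the learned approach described in the abstract, this baseline assumes the matrices `A` and `B` are known in advance rather than learned from interaction.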

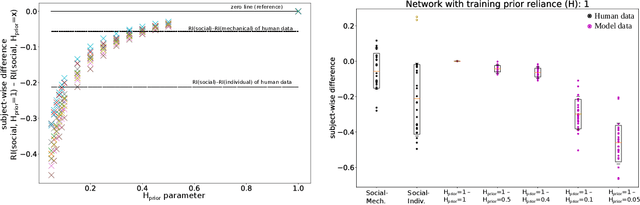

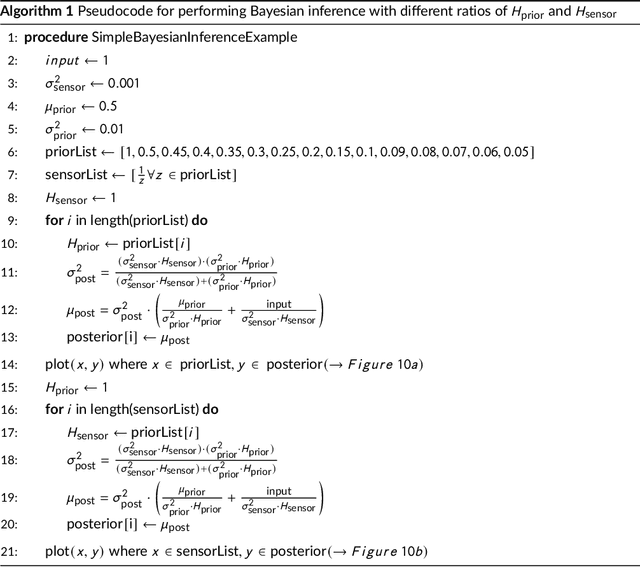

The world seems different in a social context: a neural network analysis of human experimental data

Mar 03, 2022

Abstract: Human perception and behavior are affected by the situational context, in particular during social interactions. A recent study demonstrated that humans perceive visual stimuli differently depending on whether they do the task by themselves or together with a robot. Specifically, it was found that the central tendency effect is stronger in social than in non-social task settings. The particular nature of such behavioral changes induced by social interaction, and their underlying cognitive processes in the human brain, are, however, still not well understood. In this paper, we address this question by training an artificial neural network inspired by the predictive coding theory on the above behavioral data set. Using this computational model, we investigate whether the change in behavior that was caused by the situational context in the human experiment could be explained by continuous modifications of a parameter expressing how strongly sensory and prior information affect perception. We demonstrate that it is possible to replicate human behavioral data in both individual and social task settings by modifying the precision of prior and sensory signals, indicating that social and non-social task settings might in fact exist on a continuum. At the same time, an analysis of the neural activation traces of the trained networks provides evidence that information is coded in fundamentally different ways in the network in the individual and in the social conditions. Our results emphasize the importance of computational replications of behavioral data for generating hypotheses on the underlying cognitive mechanisms of shared perception and may provide inspiration for follow-up studies in the field of neuroscience.
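The link between signal precision and the central tendency effect can be illustrated with the standard Gaussian precision-weighted average. This is only a sketch of that textbook relationship, not the neural network used in the paper; the function names `perceived` and `regression_slope` and all numeric values are assumptions made for illustration.

```python
def perceived(stimulus, prior_mean, sensory_precision, prior_precision):
    """Precision-weighted average of a sensory sample and the prior mean."""
    total = sensory_precision + prior_precision
    return (sensory_precision * stimulus + prior_precision * prior_mean) / total

def regression_slope(sensory_precision, prior_precision):
    """Slope of percept against stimulus; values below 1 indicate pull toward the prior mean."""
    return sensory_precision / (sensory_precision + prior_precision)

# Assumed stimulus magnitudes centred on an assumed prior mean of 8.0.
stimuli = [4.0, 8.0, 12.0]
prior_mean = 8.0

# Same sensory precision in both cases; only the prior precision changes.
weak_prior = [perceived(s, prior_mean, 4.0, 1.0) for s in stimuli]
strong_prior = [perceived(s, prior_mean, 4.0, 4.0) for s in stimuli]
```

In this toy setting, raising the prior precision relative to the sensory precision pulls every percept closer to the prior mean, which is the qualitative signature of a stronger central tendency effect. Varying a single precision ratio continuously moves the output between the two regimes.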

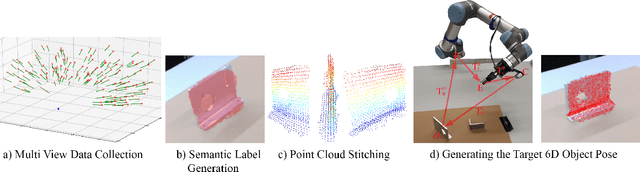

Generating Annotated Training Data for 6D Object Pose Estimation in Operational Environments with Minimal User Interaction

Mar 17, 2021

Abstract: Recently developed deep neural networks have achieved state-of-the-art results in 6D object pose estimation for robot manipulation. However, those supervised deep learning methods require expensive annotated training data. Current methods for reducing those costs frequently use synthetic data from simulations, but rely on expert knowledge and suffer from the "domain gap" when shifting to the real world. Here, we present a proof of concept for a novel approach to autonomously generating annotated training data for 6D object pose estimation. This approach is designed for learning new objects in operational environments while requiring little interaction and no expertise on the part of the user. We evaluate our autonomous data generation approach in two grasping experiments, where we achieve a grasping success rate similar to that of related work on a non-autonomously generated data set.

Recognizing Human Internal States: A Conceptor-Based Approach

Sep 09, 2019

Abstract: The past few decades have seen increased interest in the application of social robots to interventions for Autism Spectrum Disorder as behavioural coaches [4]. We consider that robots embedded in therapies could also provide quantitative diagnostic information by observing patient behaviours. The social nature of ASD symptoms means that, to achieve this, robots need to be able to recognize the internal states their human interaction partners are experiencing, e.g. states of confusion, engagement, etc. This problem can be broken down into two questions: (1) what information, accessible to robots, can be used to recognize internal states, and (2) how can a system classify internal states such that it allows for sufficiently detailed diagnostic information? In this paper we discuss these two questions in depth and propose a novel, conceptor-based classifier. We report the initial results of this system in a proof-of-concept study and outline plans for future work.

Proceedings of the Workshop on Social Robots in Therapy: Focusing on Autonomy and Ethical Challenges

Dec 18, 2018

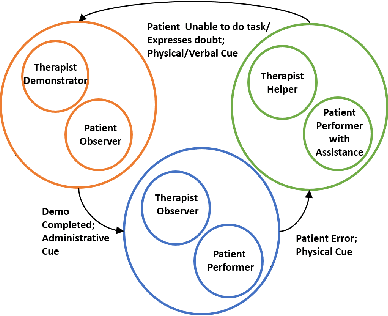

Abstract: Robot-Assisted Therapy (RAT) has successfully been used in HRI research by including social robots in health-care interventions by virtue of their ability to engage human users in both social and emotional dimensions. Research projects on this topic exist all over the globe, in the USA, Europe, and Asia. All of these projects have the overall ambitious goal to increase the well-being of a vulnerable population. Typical work in RAT is performed using remote-controlled robots, a technique called Wizard-of-Oz (WoZ). The robot is usually controlled, unbeknownst to the patient, by a human operator. However, WoZ has been demonstrated not to be a sustainable technique in the long term. Providing the robots with autonomy (while remaining under the supervision of the therapist) has the potential to lighten the therapist's burden, not only in the therapeutic session itself but also in longer-term diagnostic tasks. Therefore, there is a need for exploring several degrees of autonomy in social robots used in therapy. Increasing the autonomy of robots might also bring about a new set of challenges. In particular, there will be a need to answer new ethical questions regarding the use of robots with a vulnerable population, as well as a need to ensure ethically compliant robot behaviours. Therefore, in this workshop we want to gather findings and explore which degree of autonomy might help to improve health-care interventions and how we can overcome the ethical challenges inherent to it.
