Vicky Charisi

Liability regimes in the age of AI: a use-case driven analysis of the burden of proof

Nov 03, 2022

Abstract: Emerging technologies powered by Artificial Intelligence (AI) have the potential to disruptively transform our societies for the better. In particular, data-driven learning approaches (i.e., Machine Learning (ML)) have been a true revolution in the advancement of multiple technologies in various application domains. But at the same time there are growing concerns about certain intrinsic characteristics of these methodologies that carry potential risks to both safety and fundamental rights. Although there are mechanisms in the adoption process to minimize these risks (e.g., safety regulations), these do not exclude the possibility of harm occurring, and if this happens, victims should be able to seek compensation. Liability regimes will therefore play a key role in ensuring basic protection for victims using or interacting with these systems. However, the same characteristics that make AI systems inherently risky, such as lack of causality, opacity, unpredictability or their self- and continuous-learning capabilities, lead to considerable difficulties when it comes to proving causation. This paper presents three case studies, as well as the methodology to reach them, that illustrate these difficulties. Specifically, we address the cases of cleaning robots, delivery drones and robots in education. The outcome of the proposed analysis suggests the need to revise liability regimes to alleviate the burden of proof on victims in cases involving AI technologies.

From Modelling to Understanding Children's Behaviour in the Context of Robotics and Social Artificial Intelligence

Oct 20, 2022

Abstract: Understanding and modelling children's cognitive processes and their behaviour in the context of their interaction with robots and social artificial intelligence systems is a fundamental prerequisite for meaningful and effective robot interventions. However, children's development involves complex faculties such as exploration, creativity and curiosity, which are challenging to model. Also, children often express themselves in a playful way that differs from typical adult behaviour. Different children also have different needs, and it remains a challenge in the current state of the art that those of neurodiverse children are under-addressed. With this workshop, we aim to promote a common ground among different disciplines such as developmental sciences, artificial intelligence and social robotics, and to discuss cutting-edge research in the area of user modelling and adaptive systems for children.

UNICEF Guidance on AI for Children: Application to the Design of a Social Robot For and With Autistic Children

Aug 27, 2021

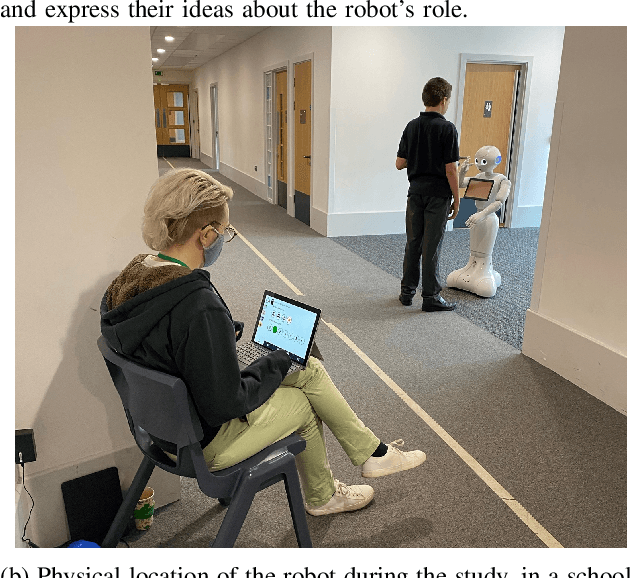

Abstract: For a period of three weeks in June 2021, we embedded a social robot (SoftBank Pepper) in a Special Educational Needs (SEN) school, with a focus on supporting the well-being of autistic children. Our methodology to design and embed the robot among this vulnerable population follows a comprehensive participatory approach. We used the research project as a test-bed to demonstrate in a complex real-world environment the importance and suitability of the nine UNICEF guidelines on AI for Children. The UNICEF guidelines on AI for Children closely align with several of the UN goals for sustainable development, and, as such, we report here our contribution to these goals.

Should artificial agents ask for help in human-robot collaborative problem-solving?

May 25, 2020

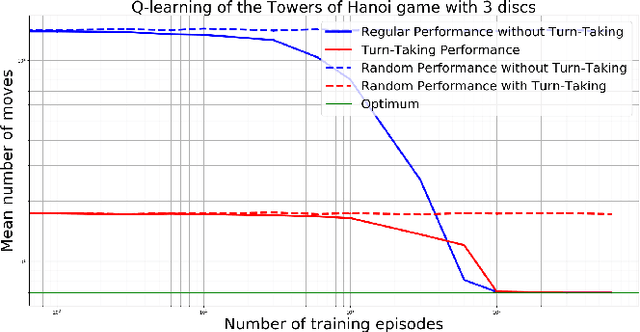

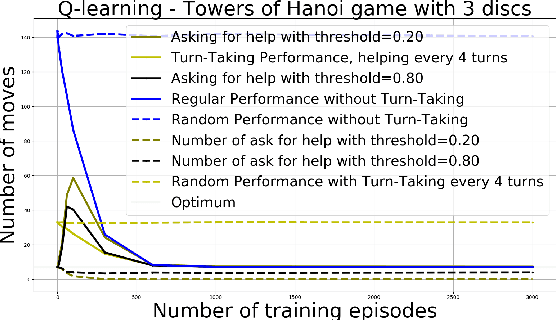

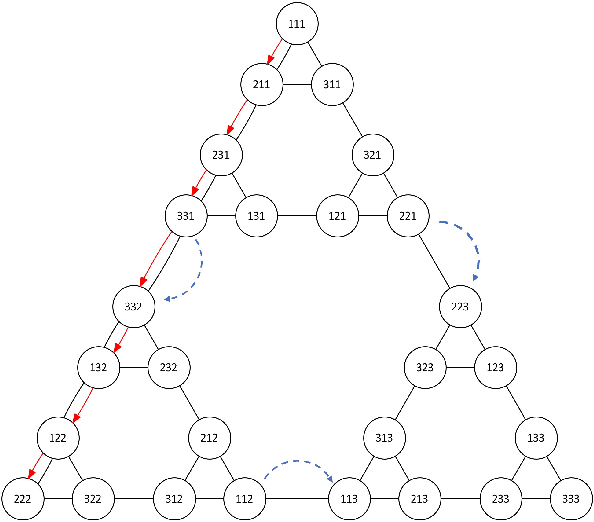

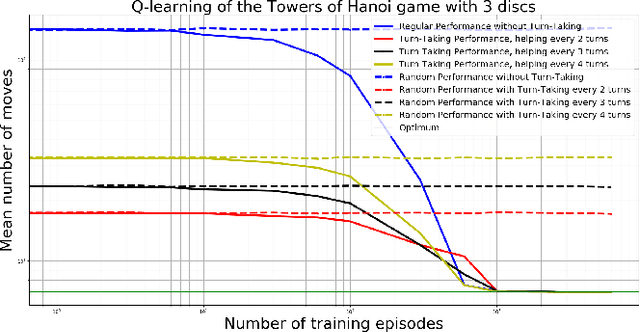

Abstract: Transferring as fast as possible the functioning of our brain to artificial intelligence is an ambitious goal that would help advance the state of the art in AI and robotics. It is in this perspective that we propose to start from hypotheses derived from an empirical study in human-robot interaction and to verify whether they are validated in the same way for children as for a basic reinforcement learning algorithm. Thus, we check whether receiving help from an expert when solving a simple closed-ended task (the Towers of Hanoi) accelerates the learning of this task, depending on whether the intervention is canonical or requested by the player. Our experiments have allowed us to conclude that, whether requested or not, a Q-learning algorithm benefits from expert help in the same way as children do.

Assessing the impact of machine intelligence on human behaviour: an interdisciplinary endeavour

Jun 07, 2018

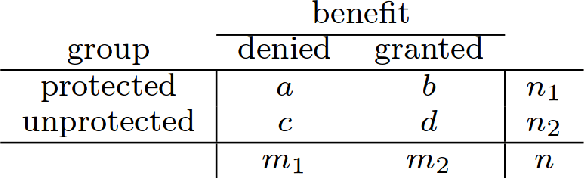

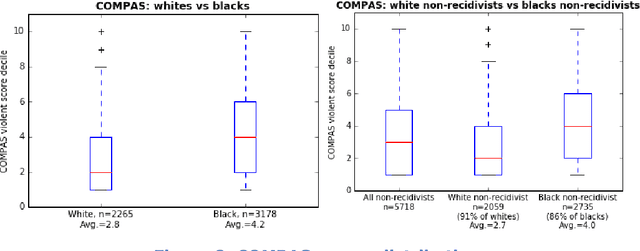

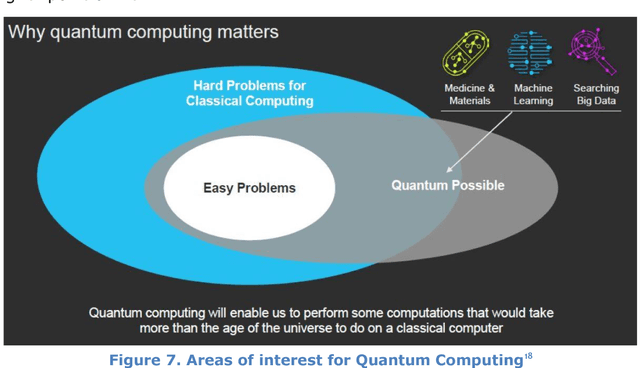

Abstract: This document contains the outcome of the first Human behaviour and machine intelligence (HUMAINT) workshop that took place 5-6 March 2018 in Barcelona, Spain. The workshop was organized in the context of a new research programme at the Centre for Advanced Studies, Joint Research Centre of the European Commission, which focuses on studying the potential impact of artificial intelligence on human behaviour. The workshop gathered an interdisciplinary group of experts to establish the state-of-the-art research in the field and a list of future research challenges to be addressed on the topic of human and machine intelligence, algorithms' potential impact on human cognitive capabilities and decision making, and evaluation and regulation needs. The document is made up of short position statements and identification of challenges provided by each expert, and incorporates the results of the discussions carried out during the workshop. In the conclusion section, we provide a list of emerging research topics and strategies to be addressed in the near future.

Towards Moral Autonomous Systems

Oct 31, 2017

Abstract: Both the ethics of autonomous systems and the problems of their technical implementation have by now been studied in some detail. Less attention has been given to the areas in which these two separate concerns meet. This paper, written by both philosophers and engineers of autonomous systems, addresses a number of issues in machine ethics that are located at precisely the intersection between ethics and engineering. We first discuss the main challenges which, in our view, machine ethics poses to moral philosophy. We then consider different approaches towards the conceptual design of autonomous systems and their implications for the implementation of ethics in such systems. Then we examine problematic areas regarding the specification and verification of ethical behavior in autonomous systems, particularly with a view towards the requirements of future legislation. We discuss transparency and accountability issues that will be crucial for any future wide deployment of autonomous systems in society. Finally, we consider the often overlooked possibility of intentional misuse of AI systems and the possible dangers arising out of deliberately unethical design, implementation, and use of autonomous robots.

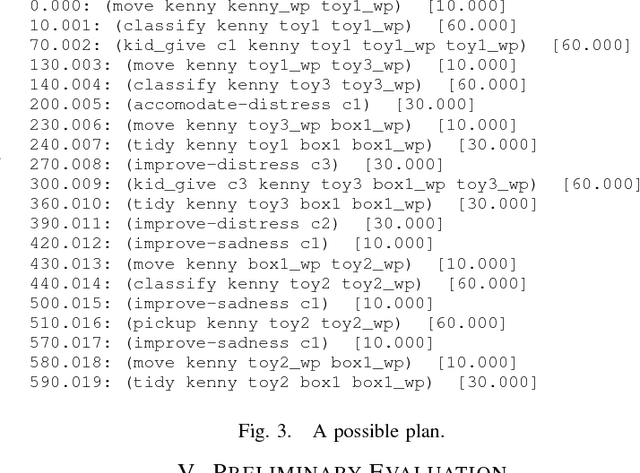

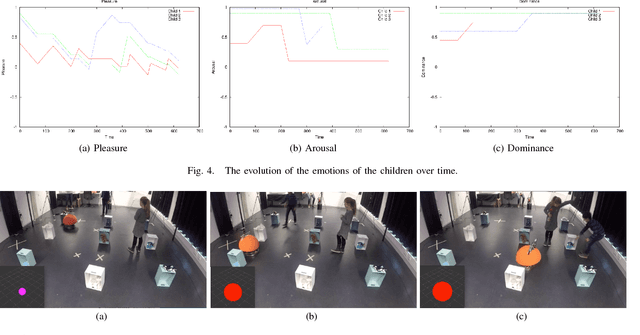

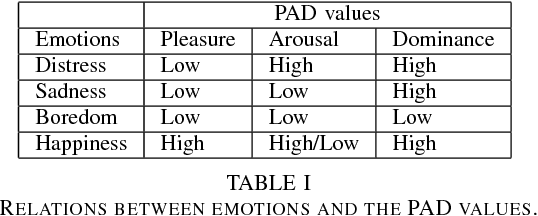

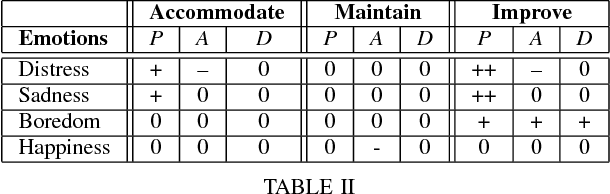

Planning Based System for Child-Robot Interaction in Dynamic Play Environments

Aug 21, 2017

Abstract: This paper describes the initial steps towards the design of a robotic system that intends to perform actions autonomously in a naturalistic play environment. At the same time it aims for social human-robot interaction (HRI), focusing on children. We draw on existing theories of child development and on dimensional models of emotions to explore the design of a dynamic interaction framework for natural child-robot interaction. In this dynamic setting, the social HRI is defined by the ability of the system to take into consideration the socio-emotional state of the user and to plan accordingly by selecting appropriate strategies for execution. The robot needs a temporal planning system, which combines features of task-oriented actions and principles of social human-robot interaction. We present initial results of an empirical study for the evaluation of the proposed framework in the context of a collaborative sorting game.