Yinlong Qian

M4V: Multi-Modal Mamba for Text-to-Video Generation

Jun 12, 2025Abstract:Text-to-video generation has significantly enriched content creation and holds the potential to evolve into powerful world simulators. However, modeling the vast spatiotemporal space remains computationally demanding, particularly when employing Transformers, which incur quadratic complexity in sequence processing and thus limit practical applications. Recent advancements in linear-time sequence modeling, particularly the Mamba architecture, offer a more efficient alternative. Nevertheless, its plain design limits its direct applicability to multi-modal and spatiotemporal video generation tasks. To address these challenges, we introduce M4V, a Multi-Modal Mamba framework for text-to-video generation. Specifically, we propose a multi-modal diffusion Mamba (MM-DiM) block that enables seamless integration of multi-modal information and spatiotemporal modeling through a multi-modal token re-composition design. As a result, the Mamba blocks in M4V reduce FLOPs by 45% compared to the attention-based alternative when generating videos at 768$\times$1280 resolution. Additionally, to mitigate the visual quality degradation in long-context autoregressive generation processes, we introduce a reward learning strategy that further enhances per-frame visual realism. Extensive experiments on text-to-video benchmarks demonstrate M4V's ability to produce high-quality videos while significantly lowering computational costs. Code and models will be publicly available at https://huangjch526.github.io/M4V_project.

FlexVAR: Flexible Visual Autoregressive Modeling without Residual Prediction

Feb 27, 2025Abstract:This work challenges the residual prediction paradigm in visual autoregressive modeling and presents FlexVAR, a new Flexible Visual AutoRegressive image generation paradigm. FlexVAR facilitates autoregressive learning with ground-truth prediction, enabling each step to independently produce plausible images. This simple, intuitive approach swiftly learns visual distributions and makes the generation process more flexible and adaptable. Trained solely on low-resolution images ($\leq$ 256px), FlexVAR can: (1) Generate images of various resolutions and aspect ratios, even exceeding the resolution of the training images. (2) Support various image-to-image tasks, including image refinement, in/out-painting, and image expansion. (3) Adapt to various autoregressive steps, allowing for faster inference with fewer steps or enhancing image quality with more steps. Our 1.0B model outperforms its VAR counterpart on the ImageNet 256$\times$256 benchmark. Moreover, when zero-shot transfer the image generation process with 13 steps, the performance further improves to 2.08 FID, outperforming state-of-the-art autoregressive models AiM/VAR by 0.25/0.28 FID and popular diffusion models LDM/DiT by 1.52/0.19 FID, respectively. When transferring our 1.0B model to the ImageNet 512$\times$512 benchmark in a zero-shot manner, FlexVAR achieves competitive results compared to the VAR 2.3B model, which is a fully supervised model trained at 512$\times$512 resolution.

CLIP-GS: Unifying Vision-Language Representation with 3D Gaussian Splatting

Dec 26, 2024

Abstract:Recent works in 3D multimodal learning have made remarkable progress. However, typically 3D multimodal models are only capable of handling point clouds. Compared to the emerging 3D representation technique, 3D Gaussian Splatting (3DGS), the spatially sparse point cloud cannot depict the texture information of 3D objects, resulting in inferior reconstruction capabilities. This limitation constrains the potential of point cloud-based 3D multimodal representation learning. In this paper, we present CLIP-GS, a novel multimodal representation learning framework grounded in 3DGS. We introduce the GS Tokenizer to generate serialized gaussian tokens, which are then processed through transformer layers pre-initialized with weights from point cloud models, resulting in the 3DGS embeddings. CLIP-GS leverages contrastive loss between 3DGS and the visual-text embeddings of CLIP, and we introduce an image voting loss to guide the directionality and convergence of gradient optimization. Furthermore, we develop an efficient way to generate triplets of 3DGS, images, and text, facilitating CLIP-GS in learning unified multimodal representations. Leveraging the well-aligned multimodal representations, CLIP-GS demonstrates versatility and outperforms point cloud-based models on various 3D tasks, including multimodal retrieval, zero-shot, and few-shot classification.

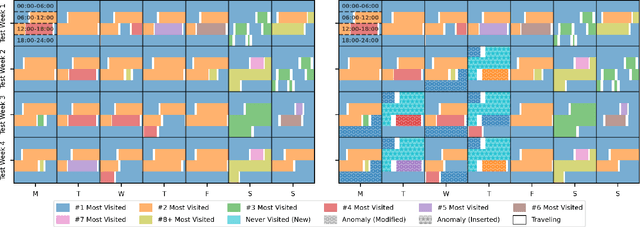

Back to Bayesics: Uncovering Human Mobility Distributions and Anomalies with an Integrated Statistical and Neural Framework

Oct 01, 2024Abstract:Existing methods for anomaly detection often fall short due to their inability to handle the complexity, heterogeneity, and high dimensionality inherent in real-world mobility data. In this paper, we propose DeepBayesic, a novel framework that integrates Bayesian principles with deep neural networks to model the underlying multivariate distributions from sparse and complex datasets. Unlike traditional models, DeepBayesic is designed to manage heterogeneous inputs, accommodating both continuous and categorical data to provide a more comprehensive understanding of mobility patterns. The framework features customized neural density estimators and hybrid architectures, allowing for flexibility in modeling diverse feature distributions and enabling the use of specialized neural networks tailored to different data types. Our approach also leverages agent embeddings for personalized anomaly detection, enhancing its ability to distinguish between normal and anomalous behaviors for individual agents. We evaluate our approach on several mobility datasets, demonstrating significant improvements over state-of-the-art anomaly detection methods. Our results indicate that incorporating personalization and advanced sequence modeling techniques can substantially enhance the ability to detect subtle and complex anomalies in spatiotemporal event sequences.

NUMOSIM: A Synthetic Mobility Dataset with Anomaly Detection Benchmarks

Sep 04, 2024

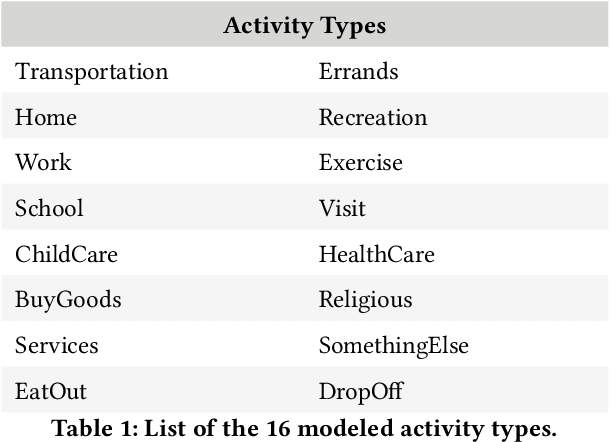

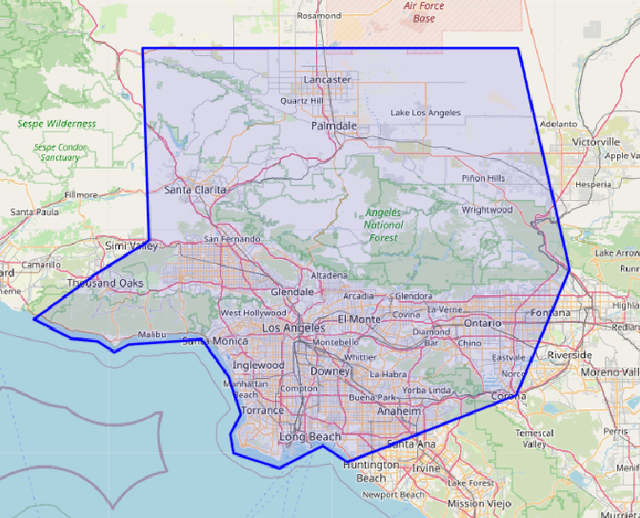

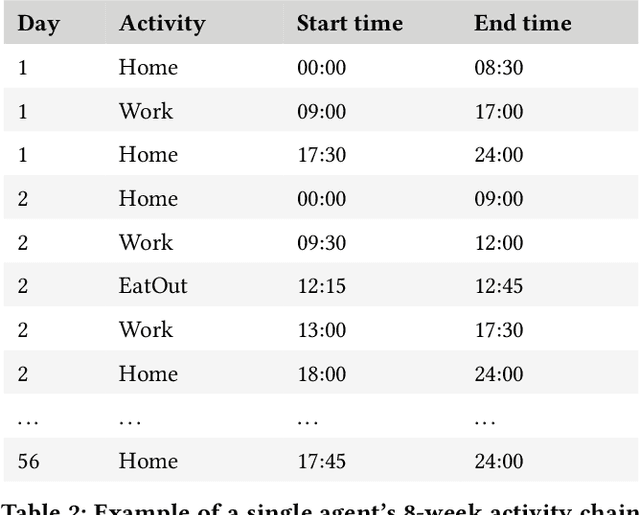

Abstract:Collecting real-world mobility data is challenging. It is often fraught with privacy concerns, logistical difficulties, and inherent biases. Moreover, accurately annotating anomalies in large-scale data is nearly impossible, as it demands meticulous effort to distinguish subtle and complex patterns. These challenges significantly impede progress in geospatial anomaly detection research by restricting access to reliable data and complicating the rigorous evaluation, comparison, and benchmarking of methodologies. To address these limitations, we introduce a synthetic mobility dataset, NUMOSIM, that provides a controlled, ethical, and diverse environment for benchmarking anomaly detection techniques. NUMOSIM simulates a wide array of realistic mobility scenarios, encompassing both typical and anomalous behaviours, generated through advanced deep learning models trained on real mobility data. This approach allows NUMOSIM to accurately replicate the complexities of real-world movement patterns while strategically injecting anomalies to challenge and evaluate detection algorithms based on how effectively they capture the interplay between demographic, geospatial, and temporal factors. Our goal is to advance geospatial mobility analysis by offering a realistic benchmark for improving anomaly detection and mobility modeling techniques. To support this, we provide open access to the NUMOSIM dataset, along with comprehensive documentation, evaluation metrics, and benchmark results.

OV-DINO: Unified Open-Vocabulary Detection with Language-Aware Selective Fusion

Jul 10, 2024

Abstract:Open-vocabulary detection is a challenging task due to the requirement of detecting objects based on class names, including those not encountered during training. Existing methods have shown strong zero-shot detection capabilities through pre-training on diverse large-scale datasets. However, these approaches still face two primary challenges: (i) how to universally integrate diverse data sources for end-to-end training, and (ii) how to effectively leverage the language-aware capability for region-level cross-modality understanding. To address these challenges, we propose a novel unified open-vocabulary detection method called OV-DINO, which pre-trains on diverse large-scale datasets with language-aware selective fusion in a unified framework. Specifically, we introduce a Unified Data Integration (UniDI) pipeline to enable end-to-end training and eliminate noise from pseudo-label generation by unifying different data sources into detection-centric data. In addition, we propose a Language-Aware Selective Fusion (LASF) module to enable the language-aware ability of the model through a language-aware query selection and fusion process. We evaluate the performance of the proposed OV-DINO on popular open-vocabulary detection benchmark datasets, achieving state-of-the-art results with an AP of 50.6\% on the COCO dataset and 40.0\% on the LVIS dataset in a zero-shot manner, demonstrating its strong generalization ability. Furthermore, the fine-tuned OV-DINO on COCO achieves 58.4\% AP, outperforming many existing methods with the same backbone. The code for OV-DINO will be available at \href{https://github.com/wanghao9610/OV-DINO}{https://github.com/wanghao9610/OV-DINO}.

Deep Steganalysis: End-to-End Learning with Supervisory Information beyond Class Labels

Jun 27, 2018

Abstract:Recently, deep learning has shown its power in steganalysis. However, the proposed deep models have been often learned from pre-calculated noise residuals with fixed high-pass filters rather than from raw images. In this paper, we propose a new end-to-end learning framework that can learn steganalytic features directly from pixels. In the meantime, the high-pass filters are also automatically learned. Besides class labels, we make use of additional pixel level supervision of cover-stego image pair to jointly and iteratively train the proposed network which consists of a residual calculation network and a steganalysis network. The experimental results prove the effectiveness of the proposed architecture.

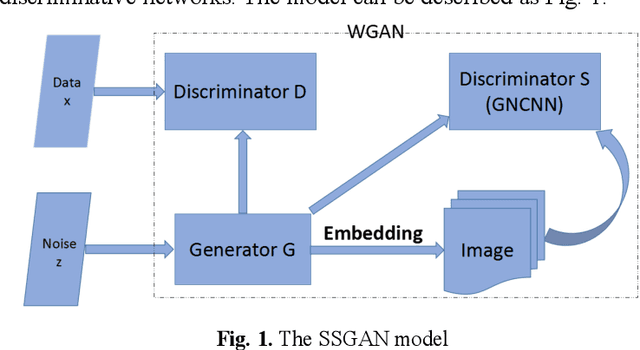

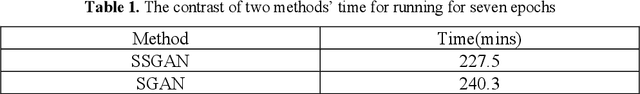

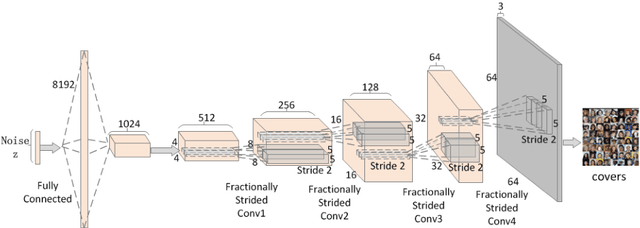

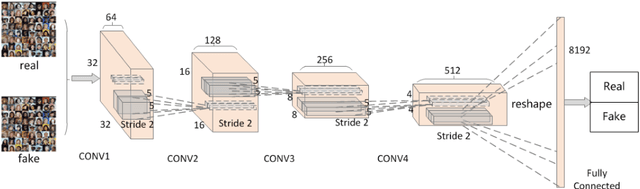

SSGAN: Secure Steganography Based on Generative Adversarial Networks

Jul 29, 2017

Abstract:In this paper, a novel strategy of Secure Steganograpy based on Generative Adversarial Networks is proposed to generate suitable and secure covers for steganography. The proposed architecture has one generative network, and two discriminative networks. The generative network mainly evaluates the visual quality of the generated images for steganography, and the discriminative networks are utilized to assess their suitableness for information hiding. Different from the existing work which adopts Deep Convolutional Generative Adversarial Networks, we utilize another form of generative adversarial networks. By using this new form of generative adversarial networks, significant improvements are made on the convergence speed, the training stability and the image quality. Furthermore, a sophisticated steganalysis network is reconstructed for the discriminative network, and the network can better evaluate the performance of the generated images. Numerous experiments are conducted on the publicly available datasets to demonstrate the effectiveness and robustness of the proposed method.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge