Tielin Zhang

MedKGent: A Large Language Model Agent Framework for Constructing Temporally Evolving Medical Knowledge Graph

Aug 17, 2025

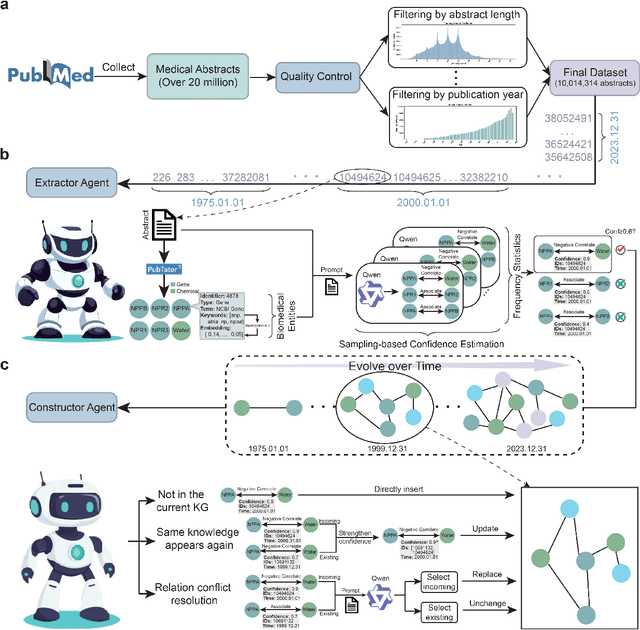

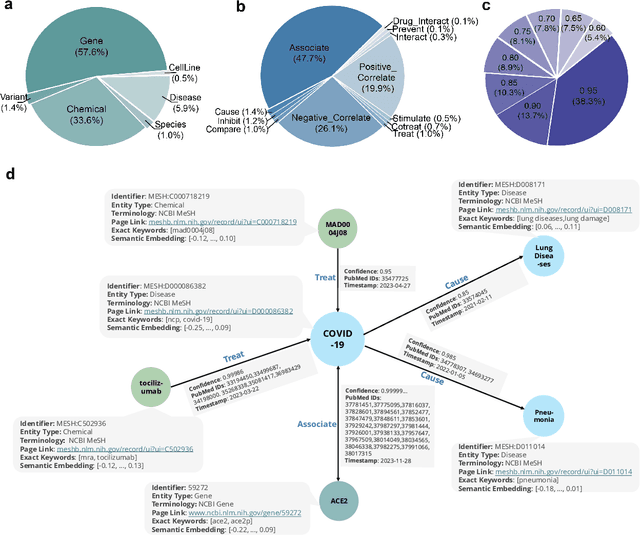

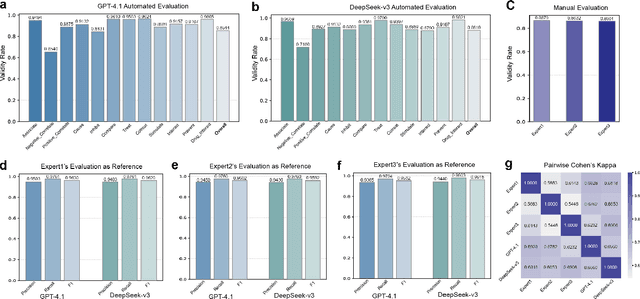

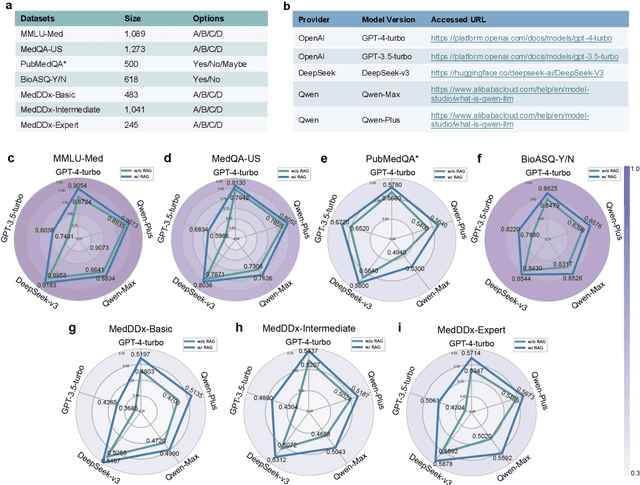

Abstract: The rapid expansion of medical literature presents growing challenges for structuring and integrating domain knowledge at scale. Knowledge Graphs (KGs) offer a promising solution by enabling efficient retrieval, automated reasoning, and knowledge discovery. However, current KG construction methods often rely on supervised pipelines with limited generalizability or naively aggregate outputs from Large Language Models (LLMs), treating biomedical corpora as static and ignoring the temporal dynamics and contextual uncertainty of evolving knowledge. To address these limitations, we introduce MedKGent, an LLM agent framework for constructing temporally evolving medical KGs. Leveraging over 10 million PubMed abstracts published between 1975 and 2023, we simulate the emergence of biomedical knowledge via a fine-grained daily time series. MedKGent incrementally builds the KG in a day-by-day manner using two specialized agents powered by the Qwen2.5-32B-Instruct model. The Extractor Agent identifies knowledge triples and assigns confidence scores via sampling-based estimation, which are used to filter low-confidence extractions and inform downstream processing. The Constructor Agent incrementally integrates the retained triples into a temporally evolving graph, guided by confidence scores and timestamps to reinforce recurring knowledge and resolve conflicts. The resulting KG contains 156,275 entities and 2,971,384 relational triples. Quality assessments by two SOTA LLMs and three domain experts demonstrate an accuracy approaching 90%, with strong inter-rater agreement. To evaluate downstream utility, we conduct retrieval-augmented generation (RAG) across seven medical question answering benchmarks using five leading LLMs, consistently observing significant improvements over non-augmented baselines. Case studies further demonstrate the KG's value in literature-based drug repurposing via confidence-aware causal inference.
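The confidence-scoring and incremental-integration steps described in this abstract can be illustrated with a minimal sketch. It assumes that a triple's confidence is the fraction of sampled extraction runs that produce it, and that a recurring triple is reinforced with a noisy-OR update. The function names, the 0.6 threshold, the update rule, and the example triples are all hypothetical and are not taken from the paper; conflict resolution is not modelled.

```python
from collections import Counter
from datetime import date

def estimate_confidence(sampled_runs):
    """Score each triple by the fraction of sampled runs that produced it."""
    counts = Counter(t for run in sampled_runs for t in set(run))
    n = len(sampled_runs)
    return {triple: c / n for triple, c in counts.items()}

def integrate_day(graph, scored, day, threshold=0.6):
    """Merge retained triples into the graph (assumed noisy-OR reinforcement)."""
    for triple, conf in scored.items():
        if conf < threshold:
            continue  # drop low-confidence extractions
        if triple in graph:
            old = graph[triple]["confidence"]
            # Repeated evidence pushes confidence toward 1.
            graph[triple]["confidence"] = 1 - (1 - old) * (1 - conf)
            graph[triple]["last_seen"] = day
        else:
            graph[triple] = {"confidence": conf, "first_seen": day, "last_seen": day}
    return graph

TREATS = ("metformin", "treats", "type 2 diabetes")
NAUSEA = ("metformin", "causes", "nausea")

# Four sampled extraction runs per day (made-up data).
runs_day1 = [[TREATS], [TREATS, NAUSEA], [TREATS], []]
runs_day2 = [[TREATS], [TREATS], [], [TREATS]]

graph = {}
integrate_day(graph, estimate_confidence(runs_day1), date(1975, 1, 1))
integrate_day(graph, estimate_confidence(runs_day2), date(1975, 1, 2))
print(graph)
```

In this toy run the recurring triple is kept and strengthened on the second day, while the triple seen in only one of four samples never enters the graph.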

Information-Theoretic Complementary Prompts for Improved Continual Text Classification

May 27, 2025

Abstract: Continual Text Classification (CTC) aims to continuously classify new text data over time while minimizing catastrophic forgetting of previously acquired knowledge. However, existing methods often focus on task-specific knowledge, overlooking the importance of shared, task-agnostic knowledge. Inspired by the complementary learning systems theory, which posits that humans learn continually through the interaction of two systems -- the hippocampus, responsible for forming distinct representations of specific experiences, and the neocortex, which extracts more general and transferable representations from past experiences -- we introduce Information-Theoretic Complementary Prompts (InfoComp), a novel approach for CTC. InfoComp explicitly learns two distinct prompt spaces: P(rivate)-Prompt and S(hared)-Prompt. These respectively encode task-specific and task-invariant knowledge, enabling models to sequentially learn classification tasks without relying on data replay. To promote more informative prompt learning, InfoComp uses an information-theoretic framework that maximizes mutual information between different parameters (or encoded representations). Within this framework, we design two novel loss functions: (1) to strengthen the accumulation of task-specific knowledge in P-Prompt, effectively mitigating catastrophic forgetting, and (2) to enhance the retention of task-invariant knowledge in S-Prompt, improving forward knowledge transfer. Extensive experiments on diverse CTC benchmarks show that our approach outperforms previous state-of-the-art methods.

Research Advances and New Paradigms for Biology-inspired Spiking Neural Networks

Aug 26, 2024

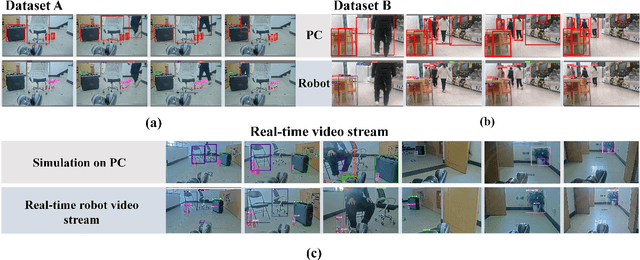

Abstract: Spiking neural networks (SNNs) are gaining popularity in the computational simulation and artificial intelligence fields owing to their biological plausibility and computational efficiency. This paper explores the historical development of SNNs and concludes that these two fields are intersecting and merging rapidly. Following the successful application of Dynamic Vision Sensors (DVS) and Dynamic Audio Sensors (DAS), SNNs have found some proper paradigms, such as continuous visual signal tracking, automatic speech recognition, and reinforcement learning for continuous control, that have extensively supported their key features, including spike encoding, neuronal heterogeneity, specific functional circuits, and multiscale plasticity. Compared to these real-world paradigms, the brain contains a spiking version of the biology-world paradigm, which exhibits a similar level of complexity and is usually considered a mirror of the real world. Considering the projected rapid development of invasive and parallel Brain-Computer Interface (BCI), as well as the new BCI-based paradigms that include online pattern recognition and stimulus control of biological spike trains, SNNs naturally leverage their advantages in energy efficiency, robustness, and flexibility. The biological brain has inspired the present study of SNNs and effective SNN machine-learning algorithms, which can help enhance neuroscience discoveries in the brain by applying them to the new BCI paradigm. Such two-way interactions with positive feedback can accelerate brain science research and brain-inspired intelligence technology.

Enhanced Spatiotemporal Prediction Using Physical-guided And Frequency-enhanced Recurrent Neural Networks

May 23, 2024

Abstract: Spatiotemporal prediction plays an important role in solving natural problems and processing video frames, especially in weather forecasting and human action recognition. Recent advances attempt to incorporate prior physical knowledge into the deep learning framework to estimate the unknown governing partial differential equations (PDEs), which have shown promising results in spatiotemporal prediction tasks. However, previous approaches only restrict neural network architectures or loss functions to acquire physical or PDE features, which decreases the representative capacity of a neural network. Meanwhile, the updating process of the physical state cannot be effectively estimated. To solve the above-mentioned problems, this paper proposes a physical-guided neural network, which utilizes the frequency-enhanced Fourier module and moment loss to strengthen the model's ability to estimate the spatiotemporal dynamics. Furthermore, we propose an adaptive second-order Runge-Kutta method with physical constraints to model the physical states more precisely. We evaluate our model on both spatiotemporal and video prediction tasks. The experimental results show that our model outperforms state-of-the-art methods and performs best on several datasets, with a much smaller parameter count.
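For reference, a fixed-step second-order Runge-Kutta (explicit midpoint) update is sketched below. This is the textbook scheme, not the adaptive, physically constrained variant this abstract proposes; `rk2_step`, `solve`, and the test equation dy/dt = -y are illustrative choices.

```python
import math

def rk2_step(f, t, y, h):
    """One explicit midpoint (second-order Runge-Kutta) step for dy/dt = f(t, y)."""
    k1 = f(t, y)                        # slope at the start of the step
    k2 = f(t + h / 2, y + h / 2 * k1)   # slope at the estimated midpoint
    return y + h * k2

def solve(f, y0, t0, t1, steps):
    """Advance y from t0 to t1 using `steps` equal midpoint steps."""
    h = (t1 - t0) / steps
    t, y = t0, y0
    for _ in range(steps):
        y = rk2_step(f, t, y, h)
        t += h
    return y

def decay(t, y):
    return -y  # exact solution: y(t) = exp(-t)

exact = math.exp(-1.0)
err_coarse = abs(solve(decay, 1.0, 0.0, 1.0, 10) - exact)
err_fine = abs(solve(decay, 1.0, 0.0, 1.0, 20) - exact)
print(err_coarse, err_fine)
```

Halving the step size cuts the error by roughly a factor of four, which is the behaviour expected of a second-order method.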

Biologically-Plausible Topology Improved Spiking Actor Network for Efficient Deep Reinforcement Learning

Mar 29, 2024

Abstract: The success of Deep Reinforcement Learning (DRL) is largely attributed to utilizing Artificial Neural Networks (ANNs) as function approximators. Recent advances in neuroscience have unveiled that the human brain achieves efficient reward-based learning, at least in part by integrating spiking neurons with spatial-temporal dynamics and network topologies with biologically-plausible connectivity patterns. This integration process allows spiking neurons to efficiently combine information across and within layers via nonlinear dendritic trees and lateral interactions. The fusion of these two topologies enhances the network's information-processing ability, crucial for grasping intricate perceptions and guiding decision-making procedures. However, ANNs and brain networks differ significantly. ANNs lack intricate dynamical neurons and only feature inter-layer connections, typically achieved by direct linear summation, without intra-layer connections. This limitation leads to constrained network expressivity. To address this, we propose a novel alternative function approximator, the Biologically-Plausible Topology improved Spiking Actor Network (BPT-SAN), tailored for efficient decision-making in DRL. The BPT-SAN incorporates spiking neurons with intricate spatial-temporal dynamics and introduces intra-layer connections, enhancing spatial-temporal state representation and facilitating more precise biological simulations. Diverging from the conventional direct linear weighted sum, the BPT-SAN models the local nonlinearities of dendritic trees within the inter-layer connections. For the intra-layer connections, the BPT-SAN introduces lateral interactions between adjacent neurons, integrating them into the membrane potential formula to ensure accurate spike firing.
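The idea of adding lateral interactions between adjacent neurons to the membrane potential can be illustrated with a generic leaky integrate-and-fire (LIF) layer. The sketch below is an assumption-laden toy, not the BPT-SAN formulation: the update rule, the nearest-neighbour coupling, the hard reset, and all parameter values are hypothetical, and the dendritic nonlinearity is omitted.

```python
def lif_layer_step(v, inputs, prev_spikes, decay=0.5, lateral=0.3, threshold=1.0):
    """One time step of a LIF layer with nearest-neighbour lateral coupling.

    v           -- membrane potentials from the previous step
    inputs      -- feed-forward (inter-layer) input currents
    prev_spikes -- 0/1 spikes emitted by this layer at the previous step
    """
    n = len(v)
    new_v, spikes = [], []
    for i in range(n):
        left = prev_spikes[i - 1] if i > 0 else 0
        right = prev_spikes[i + 1] if i < n - 1 else 0
        # Leak + feed-forward drive + intra-layer (lateral) drive.
        u = decay * v[i] + inputs[i] + lateral * (left + right)
        fired = 1 if u >= threshold else 0
        spikes.append(fired)
        new_v.append(0.0 if fired else u)  # hard reset after a spike
    return new_v, spikes

v0 = [0.0, 0.0, 0.0]
drive = [0.8, 0.8, 0.8]       # sub-threshold on its own
last_spikes = [0, 1, 0]       # the middle neuron fired one step earlier

with_lateral = lif_layer_step(v0, drive, last_spikes)
without_lateral = lif_layer_step(v0, drive, last_spikes, lateral=0.0)
print(with_lateral, without_lateral)
```

Here the feed-forward drive alone is below threshold, so only the neurons that receive lateral input from a recently active neighbour fire.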

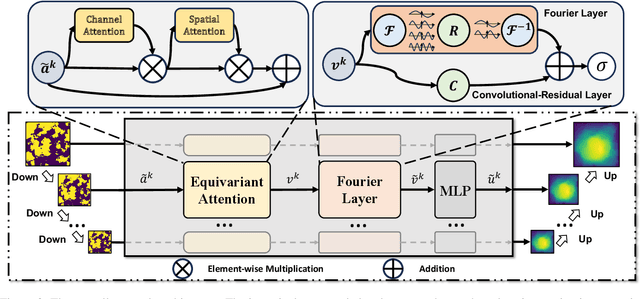

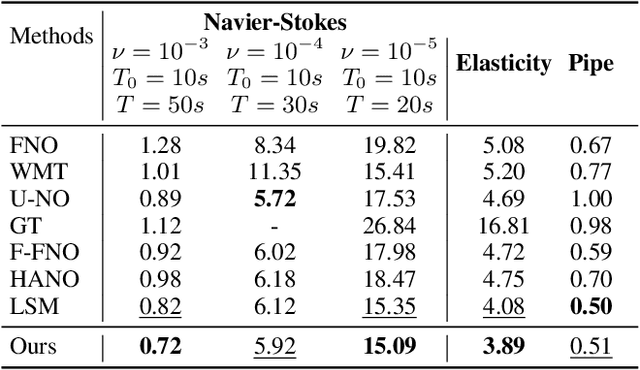

Local Convolution Enhanced Global Fourier Neural Operator For Multiscale Dynamic Spaces Prediction

Nov 21, 2023

Abstract: Neural operators extend the capabilities of traditional neural networks by allowing them to handle mappings between function spaces for the purpose of solving partial differential equations (PDEs). One of the most notable methods is the Fourier Neural Operator (FNO), which is inspired by the Green's function method and approximates the operator kernel directly in the frequency domain. In this work, we focus on predicting multiscale dynamic spaces, which is equivalent to solving multiscale PDEs. Multiscale PDEs are characterized by rapid coefficient changes and solution space oscillations, which are crucial for modeling atmospheric convection and ocean circulation. To solve this problem, models should have the ability to capture rapid changes and process them at various scales. However, the FNO only approximates kernels in the low-frequency domain, which is insufficient when solving multiscale PDEs. To address this challenge, we propose a novel hierarchical neural operator that integrates improved Fourier layers with attention mechanisms, aiming to capture all details and handle them at various scales. These mechanisms complement each other in the frequency domain and encourage the model to solve multiscale problems. We perform experiments on dynamic spaces governed by forward and reverse problems of multiscale elliptic equations, Navier-Stokes equations and some other physical scenarios, and reach superior performance in existing PDE benchmarks, especially equations characterized by rapid coefficient variations.
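The limitation noted in this abstract, that a spectral layer restricted to low-frequency modes cannot represent fine-scale oscillations, can be seen in a small numerical sketch. The code below applies a plain low-pass truncation to a two-scale signal using a naive discrete Fourier transform; it is not an FNO layer (no learned weights), and the signal, the grid size, and the cutoff of 8 modes are arbitrary illustrative choices.

```python
import cmath
import math

def dft(x):
    """Naive O(n^2) discrete Fourier transform."""
    n = len(x)
    return [sum(x[j] * cmath.exp(-2j * math.pi * k * j / n) for j in range(n))
            for k in range(n)]

def idft(X):
    """Naive inverse discrete Fourier transform."""
    n = len(X)
    return [sum(X[k] * cmath.exp(2j * math.pi * k * j / n) for k in range(n)) / n
            for j in range(n)]

def low_pass(x, modes):
    """Keep only frequencies with |k| <= modes and zero out the rest."""
    n = len(x)
    X = dft(x)
    kept = [X[k] if min(k, n - k) <= modes else 0 for k in range(n)]
    return [value.real for value in idft(kept)]

n = 64
grid = [2 * math.pi * j / n for j in range(n)]
slow = [math.sin(t) for t in grid]              # large-scale component
fast = [0.5 * math.sin(20 * t) for t in grid]   # fine-scale oscillation
signal = [s + f for s, f in zip(slow, fast)]

recon = low_pass(signal, modes=8)
err_vs_slow = max(abs(r - s) for r, s in zip(recon, slow))
err_vs_full = max(abs(r - s) for r, s in zip(recon, signal))
print(err_vs_slow, err_vs_full)
```

The truncated reconstruction matches the slow component almost exactly but misses the oscillation at frequency 20 entirely, which is the kind of detail a multiscale solver has to recover by other means.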

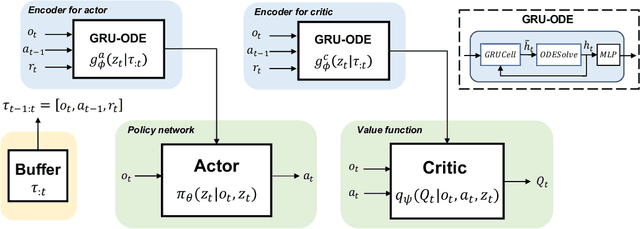

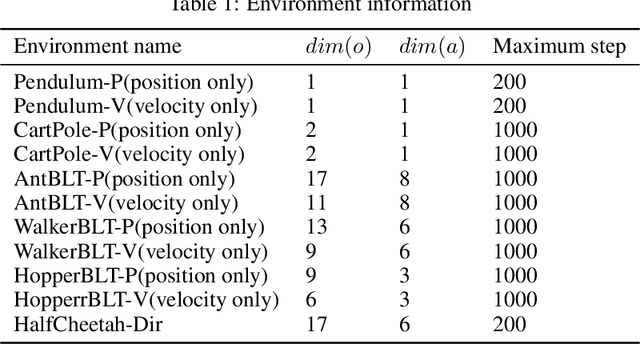

ODE-based Recurrent Model-free Reinforcement Learning for POMDPs

Sep 25, 2023

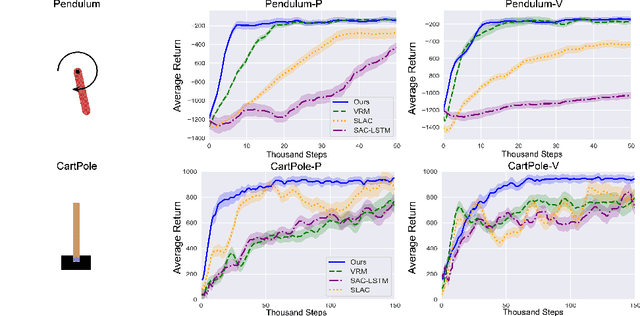

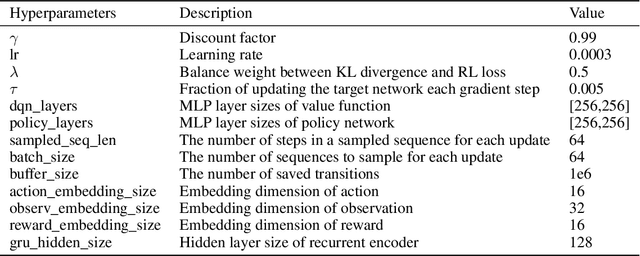

Abstract: Neural ordinary differential equations (ODEs) are widely recognized as the standard for modeling physical mechanisms, which help to perform approximate inference in unknown physical or biological environments. In partially observable (PO) environments, inferring unseen information from raw observations remains difficult for agents. By using a recurrent policy with a compact context, context-based reinforcement learning provides a flexible way to extract unobservable information from historical transitions. To help the agent extract more dynamics-related information, we present a novel ODE-based recurrent model combined with a model-free reinforcement learning (RL) framework to solve partially observable Markov decision processes (POMDPs). We experimentally demonstrate the efficacy of our methods across various PO continuous control and meta-RL tasks. Furthermore, our experiments illustrate that our method is robust against irregular observations, owing to the ability of ODEs to model irregularly-sampled time series.

Attention-free Spikformer: Mixing Spike Sequences with Simple Linear Transforms

Aug 17, 2023

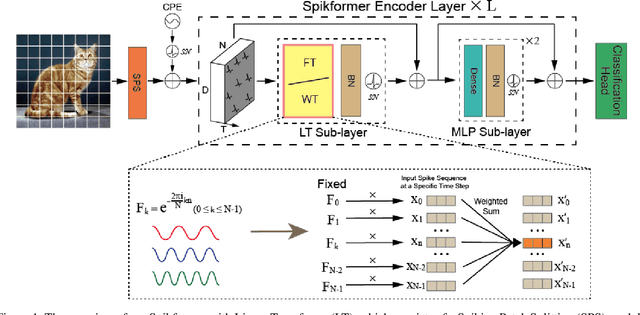

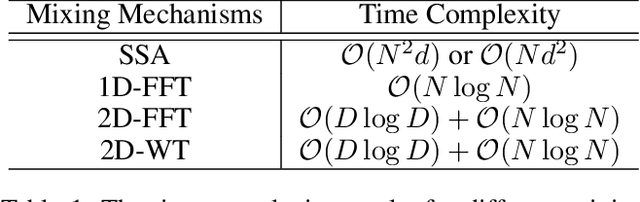

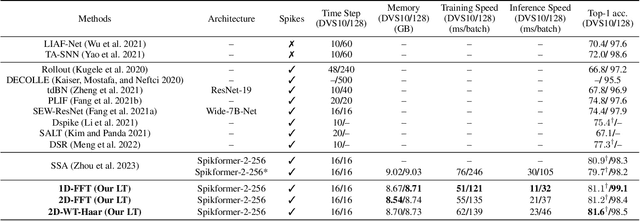

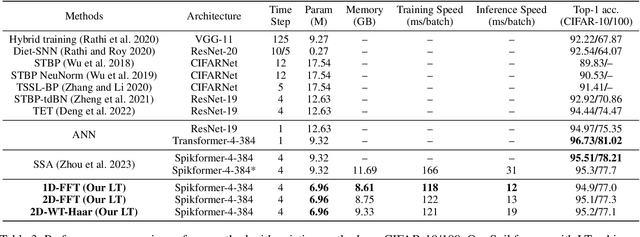

Abstract: By integrating the self-attention capability and the biological properties of Spiking Neural Networks (SNNs), Spikformer applies the flourishing Transformer architecture to SNN design. It introduces a Spiking Self-Attention (SSA) module to mix sparse visual features using spike-form Query, Key, and Value, resulting in State-Of-The-Art (SOTA) performance on numerous datasets compared to previous SNN-like frameworks. In this paper, we demonstrate that the Spikformer architecture can be accelerated by replacing the SSA with an unparameterized Linear Transform (LT) such as Fourier and Wavelet transforms. These transforms are utilized to mix spike sequences, reducing the quadratic time complexity to log-linear time complexity. They alternate between the frequency and time domains to extract sparse visual features, showcasing powerful performance and efficiency. We conduct extensive experiments on image classification using both neuromorphic and static datasets. The results indicate that compared to the SOTA Spikformer with SSA, Spikformer with LT achieves higher Top-1 accuracy on neuromorphic datasets (i.e., CIFAR10-DVS and DVS128 Gesture) and comparable Top-1 accuracy on static datasets (i.e., CIFAR-10 and CIFAR-100). Furthermore, Spikformer with LT achieves approximately 29-51% improvement in training speed, 61-70% improvement in inference speed, and reduces memory usage by 4-26% due to not requiring learnable parameters.
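A parameter-free Fourier transform can mix a sequence in log-linear time, which is the property this abstract relies on. The sketch below uses a recursive radix-2 fast Fourier transform and keeps the real part of the result; keeping the real part, the function names, and the example spike train are assumptions made for illustration and do not reproduce the paper's actual LT module.

```python
import cmath

def fft(x):
    """Recursive radix-2 Cooley-Tukey FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 1:
        return [complex(x[0])]
    even = fft(x[0::2])
    odd = fft(x[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddled = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddled
        out[k + n // 2] = even[k] - twiddled
    return out

def naive_dft(x):
    """O(n^2) reference transform used only to check the FFT."""
    n = len(x)
    return [sum(x[j] * cmath.exp(-2j * cmath.pi * k * j / n) for j in range(n))
            for k in range(n)]

def fourier_mix(spikes):
    """Mix a binary spike sequence along time with no learnable parameters."""
    return [value.real for value in fft(spikes)]

spikes = [1, 0, 0, 1, 0, 1, 0, 0]   # made-up spike train over 8 time steps
mixed = fourier_mix(spikes)
reference = [value.real for value in naive_dft(spikes)]
print(mixed)
```

Every output position depends on all input positions, so the transform plays the mixing role of attention while costing O(n log n) operations and storing no weights.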

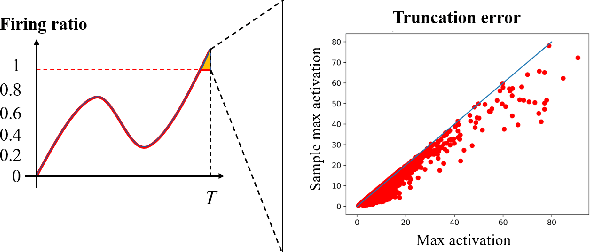

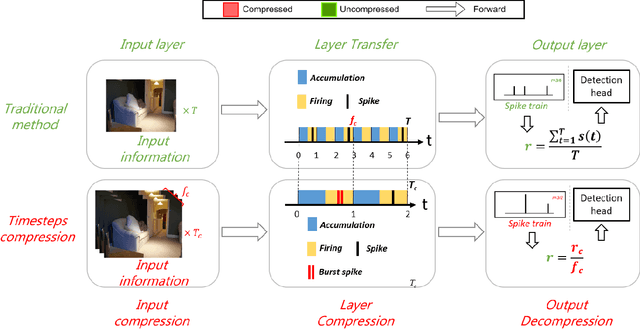

Spiking Neural Network for Ultra-low-latency and High-accurate Object Detection

Jun 27, 2023

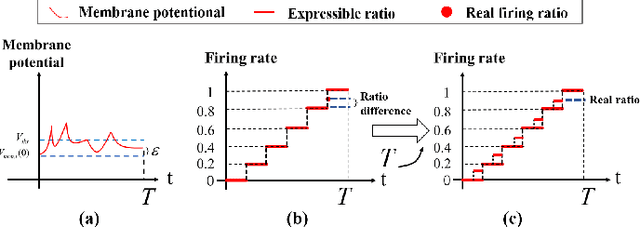

Abstract: Spiking Neural Networks (SNNs) have garnered widespread interest for their energy efficiency and brain-inspired event-driven properties. While recent methods like Spiking-YOLO have expanded SNNs to more challenging object detection tasks, they often suffer from high latency and low detection accuracy, making them difficult to deploy on latency-sensitive mobile platforms. Furthermore, conversion from Artificial Neural Networks (ANNs) to SNNs struggles to preserve the complete structure of the ANNs, resulting in poor feature representation and high conversion errors. To address these challenges, we propose two methods: timesteps compression and spike-time-dependent integrated (STDI) coding. The former reduces the timesteps required in ANN-SNN conversion by compressing information, while the latter sets a time-varying threshold to expand the information holding capacity. We also present an SNN-based ultra-low-latency and highly accurate object detection model (SUHD) that achieves state-of-the-art performance on nontrivial datasets like PASCAL VOC and MS COCO, with about 750x fewer timesteps and a 30% mean average precision (mAP) improvement compared to Spiking-YOLO on the MS COCO dataset. To the best of our knowledge, SUHD is the deepest spike-based object detection model to date that achieves ultra-low timesteps to complete the lossless conversion.

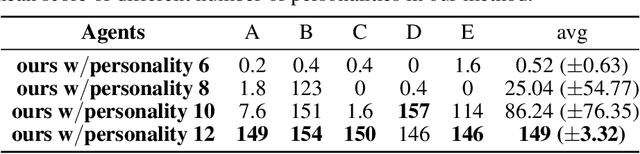

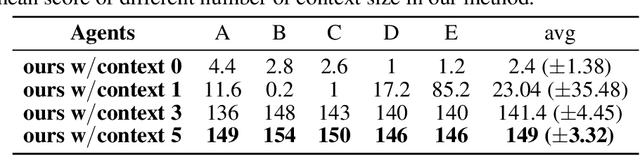

Mixture of personality improved Spiking actor network for efficient multi-agent cooperation

May 10, 2023

Abstract: Adaptive human-agent and agent-agent cooperation are becoming more and more critical in the research area of multi-agent reinforcement learning (MARL), where remarkable progress has been made with the help of deep neural networks. However, many established algorithms perform well only during the learning paradigm and exhibit poor generalization when cooperating with unseen partners. The personality theory in cognitive psychology describes that humans handle this cooperation challenge well by first predicting others' personalities and then their complex actions. Inspired by this two-step psychology theory, we propose a biologically plausible mixture of personality (MoP) improved spiking actor network (SAN), whereby a determinantal point process is used to simulate the complex formation and integration of different types of personality in MoP, and dynamic and spiking neurons are incorporated into the SAN for efficient reinforcement learning. The benchmark Overcooked task, containing a strong requirement for cooperative cooking, is selected to test the proposed MoP-SAN. The experimental results show that the MoP-SAN achieves high performance not only during the learning paradigm but also during the generalization test paradigm (i.e., cooperation with other unseen agents), where most counterpart deep actor networks fail. Necessary ablation experiments and visualization analyses were conducted to explain why MoP and SAN are effective in multi-agent reinforcement learning scenarios while DNN performs poorly in the generalization test.
