Robert Haschke

HydroelasticTouch: Simulation of Tactile Sensors with Hydroelastic Contact Surfaces

Jan 14, 2025

Abstract:Thanks to recent advancements in the development of inexpensive, high-resolution tactile sensors, touch sensing has become popular in contact-rich robotic manipulation tasks. With the surge of data-driven methods and their requirement for substantial datasets, several methods of simulating tactile sensors have emerged in the tactile research community to overcome real-world data collection limitations. These simulation approaches can be split into two main categories: fast but inaccurate (soft) point-contact models and slow but accurate finite element modeling. In this work, we present a novel approach to simulating pressure-based tactile sensors using the hydroelastic contact model, which provides a high degree of physical realism at a reasonable computational cost. This model produces smooth contact forces for soft-to-soft and soft-to-rigid contacts along even non-convex contact surfaces. Pressure values are approximated at each point of the contact surface and can be integrated to calculate sensor outputs. We validate our models' capacity to synthesize real-world tactile data by conducting zero-shot sim-to-real transfer of a model for object state estimation. Our simulation is available as a plug-in to our open-source, MuJoCo-based simulator.
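The abstract above notes that pressure values on the contact surface can be integrated to obtain sensor outputs. A minimal sketch of that integration step follows, assuming the contact surface is available as a triangle mesh with one pressure value per vertex. The function names `triangle_area_normal` and `integrate_pressure` and the data layout are hypothetical and are not taken from the authors' plug-in.

```python
from math import sqrt


def triangle_area_normal(a, b, c):
    """Return (area, unit normal) of the triangle with 3D vertices a, b, c."""
    u = [b[i] - a[i] for i in range(3)]
    v = [c[i] - a[i] for i in range(3)]
    cross = (
        u[1] * v[2] - u[2] * v[1],
        u[2] * v[0] - u[0] * v[2],
        u[0] * v[1] - u[1] * v[0],
    )
    norm = sqrt(sum(x * x for x in cross))
    if norm == 0.0:
        # Degenerate triangle: contributes no area and no force.
        return 0.0, (0.0, 0.0, 0.0)
    return 0.5 * norm, tuple(x / norm for x in cross)


def integrate_pressure(vertices, pressures, triangles):
    """Integrate per-vertex pressure over a triangle mesh.

    Assumes pressure varies linearly inside each triangle, so the integral
    over one triangle is (mean vertex pressure) * (triangle area).
    Returns the net force vector along the triangle normals.
    """
    force = [0.0, 0.0, 0.0]
    for i, j, k in triangles:
        area, normal = triangle_area_normal(vertices[i], vertices[j], vertices[k])
        mean_pressure = (pressures[i] + pressures[j] + pressures[k]) / 3.0
        for axis in range(3):
            force[axis] += mean_pressure * area * normal[axis]
    return tuple(force)


# Usage: a unit square in the xy-plane under a uniform pressure of 2.0.
square = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
net_force = integrate_pressure(square, [2.0] * 4, [(0, 1, 2), (0, 2, 3)])
```

A sensor with several taxels could apply the same sum per taxel, restricted to the triangles that fall inside that taxel's footprint.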

Precision-Focused Reinforcement Learning Model for Robotic Object Pushing

Nov 13, 2024

Abstract:Non-prehensile manipulation, such as pushing objects to a desired target position, is an important skill for robots to assist humans in everyday situations. However, the task is challenging due to the large variety of objects with different and sometimes unknown physical properties, such as shape, size, mass, and friction. This can lead to the object overshooting its target position, requiring fast corrective movements of the robot around the object, especially in cases where objects need to be precisely pushed. In this paper, we improve the state-of-the-art by introducing a new memory-based vision-proprioception RL model to push objects more precisely to target positions using fewer corrective movements.

Adaptive Kinematic Modeling for Improved Hand Posture Estimates Using a Haptic Glove

Nov 10, 2024

Abstract:Most commercially available haptic gloves compromise the accuracy of hand-posture measurements in favor of a simpler design with fewer sensors. While inaccurate posture data is often sufficient for the task at hand, in biomedical settings such as VR-therapy-aided rehabilitation, measurements should be as precise as possible so that hand postures can be digitally recreated accurately. With these applications in mind, we have added extra sensors to the commercially available Dexmo haptic glove by Dexta Robotics and applied kinematic models of the haptic glove and the user's hand to improve the accuracy of hand-posture measurements. In this work, we describe the augmentations and the kinematic modeling approach. Additionally, we present and discuss an evaluation of hand posture measurements as a proof of concept.

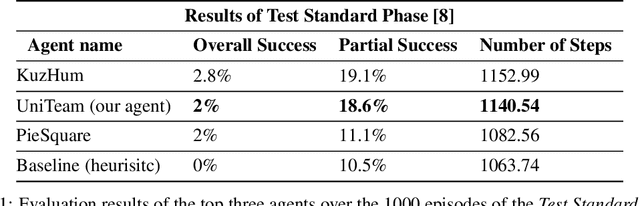

Towards Open-World Mobile Manipulation in Homes: Lessons from the Neurips 2023 HomeRobot Open Vocabulary Mobile Manipulation Challenge

Jul 09, 2024

Abstract:In order to develop robots that can effectively serve as versatile and capable home assistants, it is crucial for them to reliably perceive and interact with a wide variety of objects across diverse environments. To this end, we proposed Open Vocabulary Mobile Manipulation as a key benchmark task for robotics: finding any object in a novel environment and placing it on any receptacle surface within that environment. We organized a NeurIPS 2023 competition featuring both simulation and real-world components to evaluate solutions to this task. Our baselines on the most challenging version of this task, using real perception in simulation, achieved only a 0.8% success rate; by the end of the competition, the best participants achieved a 10.8% success rate, a 13x improvement. We observed that the most successful teams employed a variety of methods, yet two common threads emerged among the best solutions: enhancing error detection and recovery, and improving the integration of perception with decision-making processes. In this paper, we detail the results and methodologies used, both in simulation and real-world settings. We discuss the lessons learned and their implications for future research. Additionally, we compare performance in real and simulated environments, emphasizing the necessity for robust generalization to novel settings.

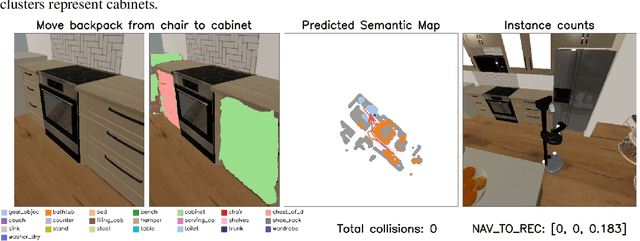

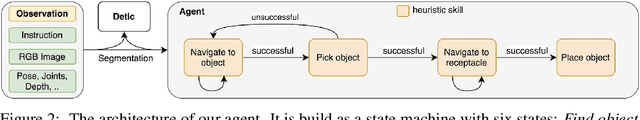

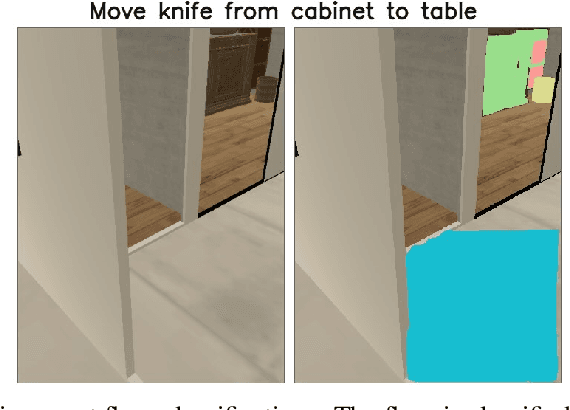

UniTeam: Open Vocabulary Mobile Manipulation Challenge

Dec 14, 2023

Abstract:This report introduces our UniTeam agent - an improved baseline for the "HomeRobot: Open Vocabulary Mobile Manipulation" challenge. The challenge poses problems of navigation in unfamiliar environments, manipulation of novel objects, and recognition of open-vocabulary object classes. This challenge aims to facilitate cross-cutting research in embodied AI using recent advances in machine learning, computer vision, natural language, and robotics. In this work, we conducted an exhaustive evaluation of the provided baseline agent; identified deficiencies in perception, navigation, and manipulation skills; and improved the baseline agent's performance. Notably, enhancements were made in perception - minimizing misclassifications; navigation - preventing infinite loop commitments; picking - addressing failures due to changing object visibility; and placing - ensuring accurate positioning for successful object placement.

Language-Conditioned Semantic Search-Based Policy for Robotic Manipulation Tasks

Dec 10, 2023

Abstract:Reinforcement Learning and Imitation Learning approaches rely on policy learning strategies that generalize poorly from just a few examples of a task. In this work, we propose a language-conditioned semantic search-based method to produce an online search-based policy from the available demonstration dataset of state-action trajectories. Here we directly acquire actions from the most similar manipulation trajectories found in the dataset. Our approach surpasses the performance of the baselines on the CALVIN benchmark and exhibits strong zero-shot adaptation capabilities. This holds great potential for expanding the use of our online search-based policy approach to tasks typically addressed by Imitation Learning or Reinforcement Learning-based policies.
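The idea of acquiring actions directly from the most similar demonstrations can be illustrated with a nearest-neighbor lookup. The sketch below is an assumption-laden simplification: the abstract does not state the similarity measure or how states and language instructions are embedded, so cosine similarity, the `(embedding, action)` dataset layout, and the names `cosine_similarity` and `search_policy` are all hypothetical.

```python
from math import sqrt


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def search_policy(query_embedding, dataset):
    """Return the action stored with the most similar demonstration entry.

    dataset is a list of (embedding, action) pairs; the embedding is assumed
    to already combine the observed state and the language instruction.
    """
    _, best_action = max(
        dataset, key=lambda entry: cosine_similarity(query_embedding, entry[0])
    )
    return best_action


# Usage with two toy demonstration entries.
demos = [((1.0, 0.0), "move_left"), ((0.0, 1.0), "move_right")]
action = search_policy((0.9, 0.1), demos)
```

Because the lookup runs at every control step, no policy network needs to be trained; the quality of the result depends entirely on the embedding and on the coverage of the demonstration dataset.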

Bio-Inspired Grasping Controller for Sensorized 2-DoF Grippers

Nov 13, 2023

Abstract:We present a holistic grasping controller, combining free-space position control and in-contact force-control for reliable grasping given uncertain object pose estimates. Employing tactile fingertip sensors, undesired object displacement during grasping is minimized by pausing the finger closing motion for individual joints on first contact until force-closure is established. While holding an object, the controller is compliant with external forces to avoid high internal object forces and prevent object damage. Gravity as an external force is explicitly considered and compensated for, thus preventing gravity-induced object drift. We evaluate the controller in two experiments on the TIAGo robot and its parallel-jaw gripper proving the effectiveness of the approach for robust grasping and minimizing object displacement. In a series of ablation studies, we demonstrate the utility of the individual controller components.
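The closing strategy described above, pausing each joint on its first contact until the grasp is established, can be sketched as a small decision function. This is a heavily simplified illustration and not the authors' controller: force-closure is approximated here by "every finger reports contact", and the function name `closing_commands`, the mode labels, and the default speed are hypothetical.

```python
def closing_commands(in_contact, closing_speed=0.01):
    """Decide the control mode and per-joint closing velocities.

    in_contact holds one boolean per finger joint, taken from its tactile
    sensor. A joint that already touches the object is paused so it does not
    push the object away while the other joint is still closing.
    """
    if all(in_contact):
        # Simplifying assumption: contact on all fingers stands in for
        # force-closure, so the controller hands over to force control.
        return "force_control", [0.0] * len(in_contact)
    velocities = [0.0 if touching else closing_speed for touching in in_contact]
    return "position_control", velocities


# Usage: the left finger touches first and waits for the right finger.
mode, velocities = closing_commands([True, False])
```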

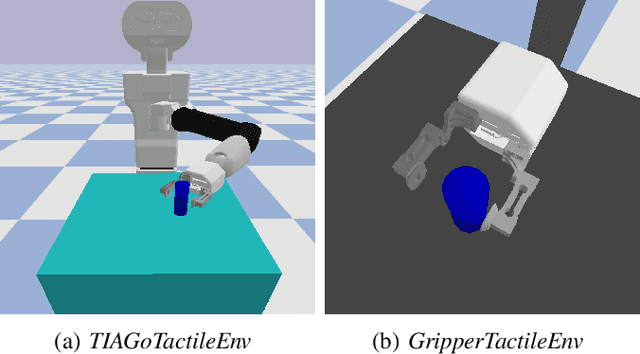

TIAGo RL: Simulated Reinforcement Learning Environments with Tactile Data for Mobile Robots

Nov 13, 2023

Abstract:Tactile information is important for robust performance in robotic tasks that involve physical interaction, such as object manipulation. However, with more data included in the reasoning and control process, modeling behavior becomes increasingly difficult. Deep Reinforcement Learning (DRL) has produced promising results for learning complex behavior in various domains, including tactile-based manipulation in robotics. In this work, we present our open-source reinforcement learning environments for the TIAGo service robot. They produce tactile sensor measurements that resemble those of a real sensorised gripper for TIAGo, encouraging research in transfer learning of DRL policies. Lastly, we show preliminary training results of a learned force control policy and compare it to a classical PI controller.
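For readers unfamiliar with the baseline mentioned above, a classical PI controller combines a term proportional to the current force error with a term proportional to the accumulated error. The sketch below is a generic textbook form; the class name `PIController`, the gains, and the time step are illustrative assumptions and are not the values used in the paper.

```python
class PIController:
    """Discrete-time proportional-integral controller."""

    def __init__(self, kp, ki, dt):
        self.kp = kp          # proportional gain
        self.ki = ki          # integral gain
        self.dt = dt          # control period in seconds
        self.integral = 0.0   # accumulated error over time

    def step(self, target, measured):
        """Return the control output for one control period."""
        error = target - measured
        self.integral += error * self.dt
        return self.kp * error + self.ki * self.integral


# Usage: track a target grasp force of 1.0 from a measured force of 0.0.
controller = PIController(kp=2.0, ki=1.0, dt=0.5)
command = controller.step(target=1.0, measured=0.0)
```

The integral term keeps a non-zero output once the error reaches zero, which is what allows such a controller to hold a steady grasp force.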

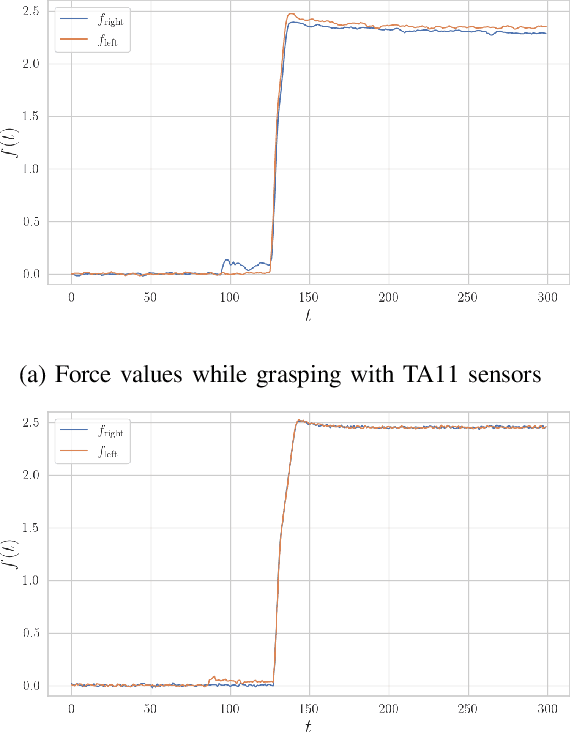

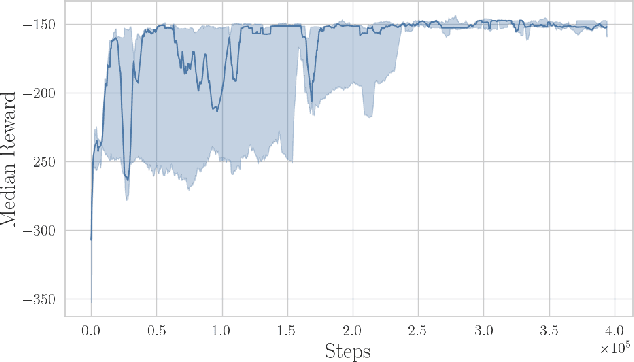

Towards Transferring Tactile-based Continuous Force Control Policies from Simulation to Robot

Nov 13, 2023

Abstract:The advent of tactile sensors in robotics has sparked many ideas on how robots can leverage direct contact measurements of their environment interactions to improve manipulation tasks. An important line of research in this regard is that of grasp force control, which aims to manipulate objects safely by limiting the amount of force exerted on the object. While prior works have either hand-modeled their force controllers, employed model-based approaches, or have not shown sim-to-real transfer, we propose a model-free deep reinforcement learning approach trained in simulation and then transferred to the robot without further fine-tuning. We therefore present a simulation environment that produces realistic normal forces, which we use to train continuous force control policies. An evaluation in which we compare against a baseline and perform an ablation study shows that our approach outperforms the hand-modeled baseline and that our proposed inductive bias and domain randomization facilitate sim-to-real transfer. Code, models, and supplementary videos are available on https://sites.google.com/view/rl-force-ctrl

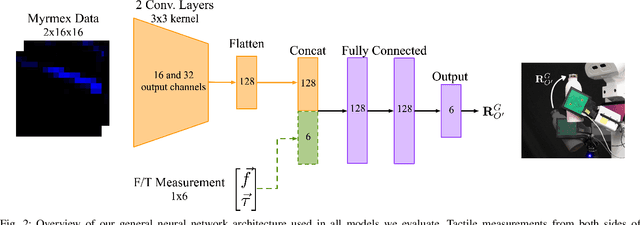

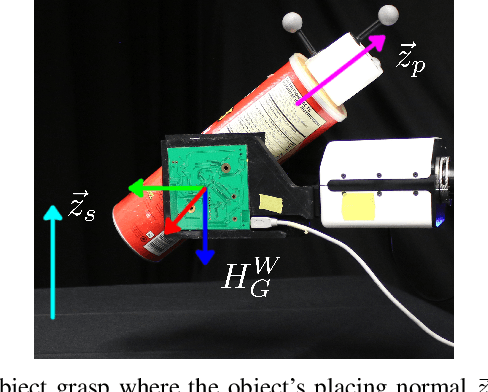

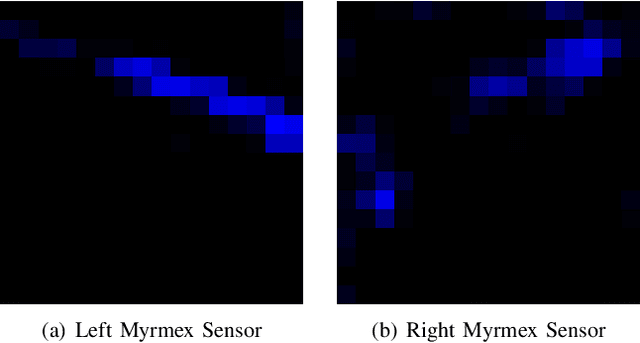

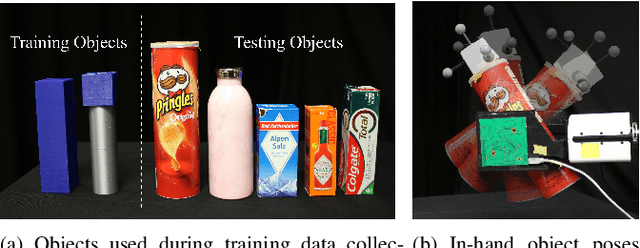

Placing by Touching: An empirical study on the importance of tactile sensing for precise object placing

Oct 05, 2022

Abstract:Tactile sensors are promising tools for endowing robots with embodied intelligence and increased dexterity. These sensors can provide robotic systems with direct information about physical interactions with the world, which is difficult to obtain from extrinsic perception systems. This work deals with a practical everyday living problem: stable object placement on flat surfaces starting from unknown initial poses. Common approaches for object placing either require complete scene specifications or indirect sensor measurements, such as cameras which are prone to suffer from occlusions. Instead, this work proposes a novel approach for stable object placing that combines tactile feedback and proprioceptive sensing. We devise a neural architecture that estimates a rotation matrix which results in a corrective gripper movement that aligns the object with the table and paves the way for the subsequent stable object placement. We compare models with different sensing modalities, such as force-torque and an external motion capture system, in real-world object placement tasks with different objects. Our experimental evaluation of the placing policies with a set of unknown everyday objects reveals an impressive generalization of the tactile-based pipeline and suggests that tactile sensing plays a vital role in the intrinsic understanding of dexterous object manipulation. Videos of our approach are available at https://sites.google.com/view/placing-by-touching.
