Luca Muratore

A High-Force Gripper with Embedded Multimodal Sensing for Powerful and Perception Driven Grasping

Apr 07, 2025

Abstract: Modern humanoid robots have shown promising potential for executing various tasks involving the grasping and manipulation of objects using their end-effectors. Nevertheless, in most cases, the grasping and manipulation actions involve low to moderate payload and interaction forces. This is due to limitations of the end-effectors, which cannot match the payload reachable by the arm and hence limit the payload that can be grasped and manipulated. In addition, grippers usually do not embed adequate perception in their hardware, and grasping actions are mainly driven by perception sensors installed on the rest of the robot body, which are frequently affected by occlusions due to arm motions during the execution of grasping and manipulation tasks. To address the above, we developed a modular high-grasping-force gripper equipped with embedded multi-modal perception functionalities. The proposed gripper can generate a grasping force of 110 N in a compact implementation. The high grasping force capability is combined with embedded multi-modal sensing, which includes an eye-in-hand camera, a Time-of-Flight (ToF) distance sensor, an Inertial Measurement Unit (IMU) and an omnidirectional microphone, permitting the implementation of perception-driven grasping functionalities. We extensively evaluated the grasping force capacity of the gripper by introducing novel payload evaluation metrics that are a function of the robot arm's dynamic motion and the gripper's thermal states. We also evaluated the embedded multi-modal sensing by performing perception-guided enhanced grasping operations.

* 8 pages, 15 figures

CONCERT: a Modular Reconfigurable Robot for Construction

Apr 07, 2025

Abstract: This paper presents CONCERT, a fully reconfigurable modular collaborative robot (cobot) for multiple on-site operations on a construction site. CONCERT has been designed to support human activities on construction sites by leveraging two main characteristics: high-power-density motors and modularity. The robot is thus able to perform a wide range of highly demanding tasks, either by acting as a co-worker of the human operator or by executing them autonomously following user instructions. Most of its versatility comes from the possibility of rapidly changing its kinematic structure by adding or removing passive or active modules. The robot can therefore be set up in a vast set of morphologies, changing its workspace and capabilities depending on the task to be executed. Similarly, distal end-effectors can be replaced for the execution of different operations. This paper also includes a full description of the software pipeline employed to automatically discover and deploy the robot morphology. Specifically, depending on the modules installed, the robot updates its kinematic, dynamic, and geometric parameters, taking into account the information embedded in each module. We demonstrate how the robot can be fully reassembled and made operational in less than ten minutes. We validated the CONCERT robot across different use cases, including drilling, sanding, plastering, and collaborative transportation with obstacle avoidance, all performed in a real construction site scenario. We demonstrated the robot's adaptivity and performance in multiple scenarios characterized by different requirements in terms of power and workspace. CONCERT has been designed and built by the Humanoid and Human-Centered Mechatronics Laboratory (HHCM) at the Istituto Italiano di Tecnologia in the context of the European Project Horizon 2020 CONCERT.

A Laser-guided Interaction Interface for Providing Effective Robot Assistance to People with Upper Limbs Impairments

Mar 20, 2025

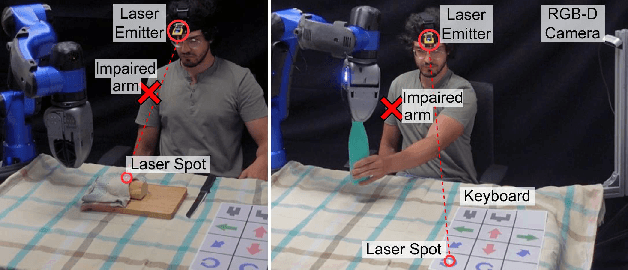

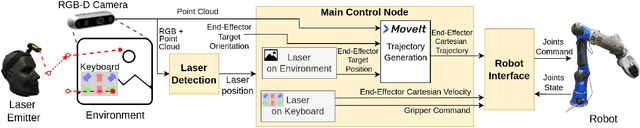

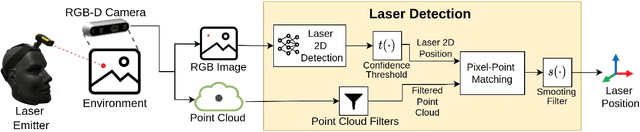

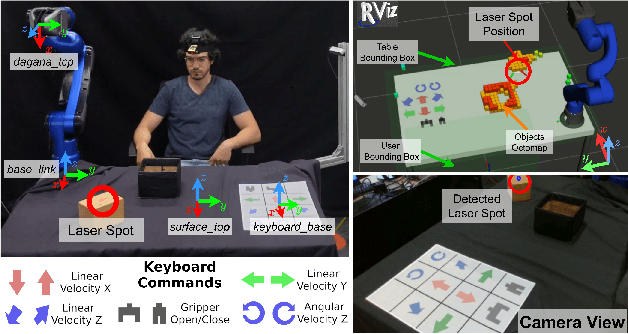

Abstract: Robotics has shown significant potential in assisting people with disabilities to enhance their independence and involvement in daily activities. Indeed, a long-term societal impact is expected in home-care assistance with the deployment of intelligent robotic interfaces. This work presents a human-robot interface developed to help people with upper-limb impairments, such as those affected by stroke injuries, in activities of everyday life. The proposed interface leverages a visual servoing guidance component, which utilizes an inexpensive but effective laser emitter device. By projecting the laser on a surface within the workspace of the robot, the user is able to guide the robotic manipulator to desired locations, to reach, grasp and manipulate objects. Considering the targeted users, the laser emitter is worn on the head, enabling intuitive control of the robot motions with head movements that point the laser in the environment; the laser projection is detected with a neural-network-based perception module. The interface implements two control modalities: the first allows the user to select specific locations directly, commanding the robot to reach those points; the second employs a paper keyboard with buttons that can be virtually pressed by pointing the laser at them. These buttons enable more direct control of the Cartesian velocity of the end-effector and provide additional functionalities such as commanding the action of the gripper. The proposed interface is evaluated in a series of manipulation tasks involving a 6DOF assistive robot manipulator equipped with a 1DOF beak-like gripper. The two interface modalities are combined to successfully accomplish tasks requiring bimanual capacity, which is usually affected in people with upper-limb impairments.

* 8 pages, 12 figures

Wearable Haptics for a Marionette-inspired Teleoperation of Highly Redundant Robotic Systems

Mar 20, 2025

Abstract: The teleoperation of complex, kinematically redundant robots with loco-manipulation capabilities represents a challenge for human operators, who have to learn how to operate the many degrees of freedom of the robot to accomplish a desired task. In this context, developing an easy-to-learn and easy-to-use human-robot interface is paramount. Recent works introduced a novel teleoperation concept, which relies on a virtual physical interaction interface between the human operator and the remote robot equivalent to a "Marionette" control, but whose feedback on the human side was limited to visual feedback. In this paper, we propose extending the "Marionette" interface by adding a wearable haptic interface to address the limitations of the previous works. Leveraging the additional haptic feedback modality, the human operator gains full sensorimotor control over the robot, and the awareness of the robot's response and interactions with the environment is greatly improved. We evaluated the proposed interface and the related teleoperation framework with naive users, assessing the teleoperation performance and the user experience with and without haptic feedback. The conducted experiments consisted of a loco-manipulation mission with the CENTAURO robot, a hybrid leg-wheel quadruped with a humanoid dual-arm upper body.

* 7 pages, 8 figures

Design and Validation of a Multi-Arm Relocatable Manipulator for Space Applications

Jan 24, 2023

Abstract: This work presents the computational design and validation of the Multi-Arm Relocatable Manipulator (MARM), a three-limb robot for space applications, with particular reference to the MIRROR (i.e., the Multi-arm Installation Robot for Readying ORUs and Reflectors) use-case scenario as proposed by the European Space Agency. A holistic computational design and validation pipeline is proposed, with the aim of comparing different limb designs, as well as ensuring that valid limb candidates enable MARM to perform the complex loco-manipulation tasks required. Motivated by the task complexity in terms of kinematic reachability, (self-)collision avoidance, contact wrench limits, and motor torque limits affecting Earth experiments, this work leverages multiple state-of-the-art planning and control approaches to aid the robot design and validation. These include sampling-based planning on manifolds, non-linear trajectory optimization, and quadratic programs for inverse dynamics computations with constraints. Finally, we present the attained MARM design and conduct preliminary tests for hardware validation through a set of lab experiments.

Flexible Disaster Response of Tomorrow -- Final Presentation and Evaluation of the CENTAURO System

Sep 19, 2019

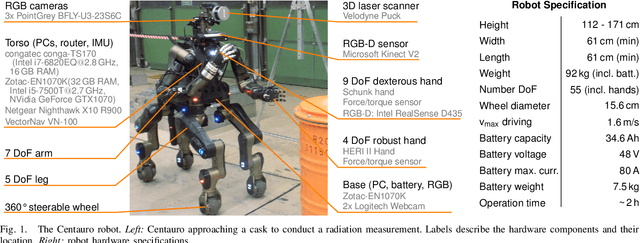

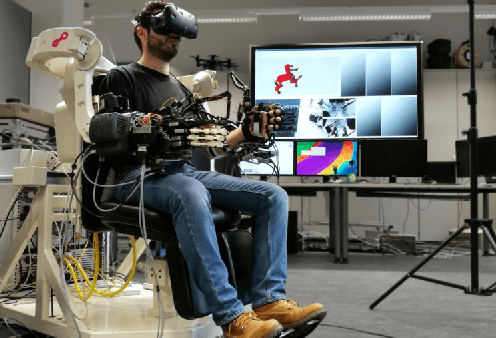

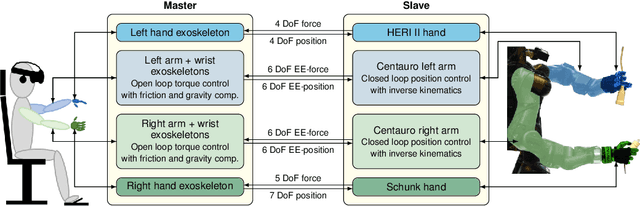

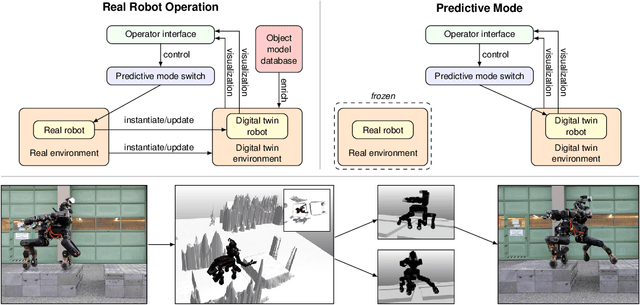

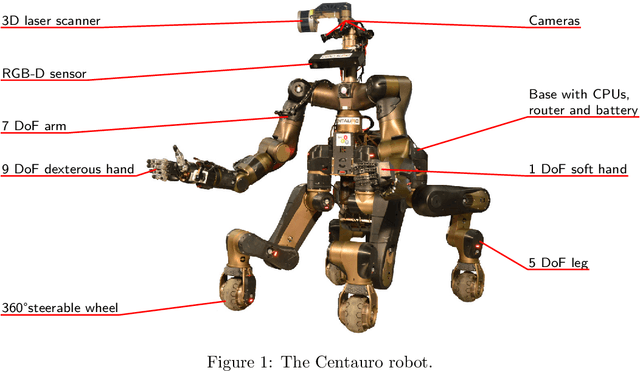

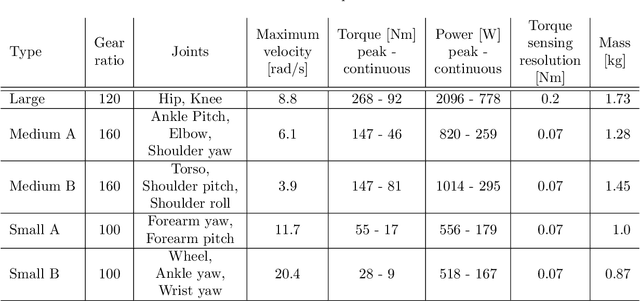

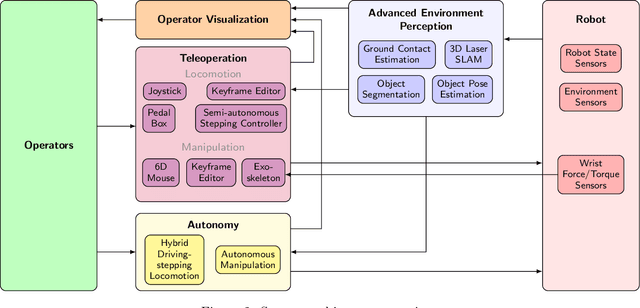

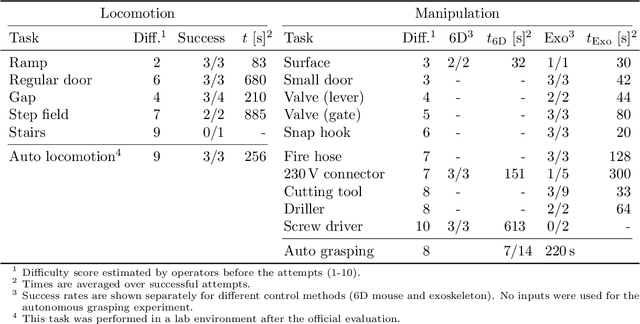

Abstract: Mobile manipulation robots have high potential to support rescue forces in disaster-response missions. Despite the difficulties imposed by real-world scenarios, robots show promise for performing mission tasks from a safe distance. In the CENTAURO project, we developed a disaster-response system which consists of the highly flexible Centauro robot and suitable control interfaces, including an immersive tele-presence suit and support-operator controls on different levels of autonomy. In this article, we give an overview of the final CENTAURO system. In particular, we explain several high-level design decisions and how those were derived from the requirements and extensive experience of Kerntechnische Hilfsdienst GmbH, Karlsruhe, Germany (KHG). We focus on components which were recently integrated and report on a systematic evaluation which demonstrated system capabilities and revealed valuable insights.

Remote Mobile Manipulation with the Centauro Robot: Full-body Telepresence and Autonomous Operator Assistance

Aug 05, 2019

Abstract: Solving mobile manipulation tasks in inaccessible and dangerous environments is an important application of robots to support humans. Example domains are construction and maintenance of manned and unmanned stations on the moon and other planets. Suitable platforms require flexible and robust hardware, a locomotion approach that allows for navigating a wide variety of terrains, dexterous manipulation capabilities, and respective user interfaces. We present the CENTAURO system, which has been designed for these requirements and consists of the Centauro robot and a set of advanced operator interfaces with complementary strengths, enabling the system to solve a wide range of realistic mobile manipulation tasks. The robot possesses a centaur-like body plan and is driven by torque-controlled compliant actuators. Four articulated legs ending in steerable wheels allow for omnidirectional driving as well as for making steps. An anthropomorphic upper body with two arms ending in five-finger hands enables human-like manipulation. The robot perceives its environment through a suite of multimodal sensors. The resulting platform complexity goes beyond that of most known systems, which puts the focus on a suitable operator interface. An operator can control the robot through a telepresence suit, which allows for flexibly solving a large variety of mobile manipulation tasks. Locomotion and manipulation functionalities on different levels of autonomy support the operation. The proposed user interfaces enable solving a wide variety of tasks without previous task-specific training. The integrated system is evaluated in numerous teleoperated experiments that are described along with lessons learned.

Translating Videos to Commands for Robotic Manipulation with Deep Recurrent Neural Networks

Oct 01, 2017

Abstract: We present a new method to translate videos to commands for robotic manipulation using Deep Recurrent Neural Networks (RNN). Our framework first extracts deep features from the input video frames with a deep Convolutional Neural Network (CNN). Two RNN layers with an encoder-decoder architecture are then used to encode the visual features and sequentially generate the output words as the command. We demonstrate that the translation accuracy can be improved by allowing a smooth transition between the two RNN layers and using a state-of-the-art feature extractor. The experimental results on our new challenging dataset show that our approach outperforms recent methods by a fair margin. Furthermore, we combine the proposed translation module with the vision and planning system to let a robot perform various manipulation tasks. Finally, we demonstrate the effectiveness of our framework on a full-size humanoid robot, WALK-MAN.
