Davide Torielli

Intuitive Human-Robot Interfaces Leveraging on Autonomy Features for the Control of Highly-redundant Robots

May 12, 2025Abstract:[...] With the TelePhysicalOperation interface, the user can teleoperate the different capabilities of a robot (e.g., single/double arm manipulation, wheel/leg locomotion) by applying virtual forces on selected robot body parts. This approach emulates the intuitiveness of physical human-robot interaction, but at the same time it permits to teleoperate the robot from a safe distance, in a way that resembles a "Marionette" interface. The system is further enhanced with wearable haptic feedback functions to align better with the "Marionette" metaphor, and a user study has been conducted to validate its efficacy with and without the haptic channel enabled. Considering the importance of robot independence, the TelePhysicalOperation interface incorporates autonomy modules to face, for example, the teleoperation of dual-arm mobile base robots for bimanual object grasping and transportation tasks. With the laser-guided interface, the user can indicate points of interest to the robot through the utilization of a simple but effective laser emitter device. With a neural network-based vision system, the robot tracks the laser projection in real time, allowing the user to indicate not only fixed goals, like objects, but also paths to follow. With the implemented autonomous behavior, a mobile manipulator employs its locomanipulation abilities to follow the indicated goals. The behavior is modeled using Behavior Trees, exploiting their reactivity to promptly respond to changes in goal positions, and their modularity to adapt the motion planning to the task needs. The proposed laser interface has also been employed in an assistive scenario. In this case, users with upper limbs impairments can control an assistive manipulator by directing a head-worn laser emitter to the point of interests, to collaboratively address activities of everyday life. [...]

Cooperative Assembly with Autonomous Mobile Manipulators in an Underwater Scenario

May 12, 2025Abstract:[...] Specifically, the problem addressed is an assembly one known as the peg-in-hole task. In this case, two autonomous manipulators must carry cooperatively (at kinematic level) a peg and must insert it into an hole fixed in the environment. Even if the peg-in-hole is a well-known problem, there are no specific studies related to the use of two different autonomous manipulators, especially in underwater scenarios. Among all the possible investigations towards the problem, this work focuses mainly on the kinematic control of the robots. The methods used are part of the Task Priority Inverse Kinematics (TPIK) approach, with a cooperation scheme that permits to exchange as less information as possible between the agents (that is really important being water a big impediment for communication). A force-torque sensor is exploited at kinematic level to help the insertion phase. The results show how the TPIK and the chosen cooperation scheme can be used for the stated problem. The simulated experiments done consider little errors in the hole's pose, that still permit to insert the peg but with a lot of frictions and possible stucks. It is shown how can be possible to improve (thanks to the data provided by the force-torque sensor) the insertion phase performed by the two manipulators in presence of these errors. [...]

A High-Force Gripper with Embedded Multimodal Sensing for Powerful and Perception Driven Grasping

Apr 07, 2025Abstract:Modern humanoid robots have shown their promising potential for executing various tasks involving the grasping and manipulation of objects using their end-effectors. Nevertheless, in the most of the cases, the grasping and manipulation actions involve low to moderate payload and interaction forces. This is due to limitations often presented by the end-effectors, which can not match their arm-reachable payload, and hence limit the payload that can be grasped and manipulated. In addition, grippers usually do not embed adequate perception in their hardware, and grasping actions are mainly driven by perception sensors installed in the rest of the robot body, frequently affected by occlusions due to the arm motions during the execution of the grasping and manipulation tasks. To address the above, we developed a modular high grasping force gripper equipped with embedded multi-modal perception functionalities. The proposed gripper can generate a grasping force of 110 N in a compact implementation. The high grasping force capability is combined with embedded multi-modal sensing, which includes an eye-in-hand camera, a Time-of-Flight (ToF) distance sensor, an Inertial Measurement Unit (IMU) and an omnidirectional microphone, permitting the implementation of perception-driven grasping functionalities. We extensively evaluated the grasping force capacity of the gripper by introducing novel payload evaluation metrics that are a function of the robot arm's dynamic motion and gripper thermal states. We also evaluated the embedded multi-modal sensing by performing perception-guided enhanced grasping operations.

* 8 pages, 15 figures

Wearable Haptics for a Marionette-inspired Teleoperation of Highly Redundant Robotic Systems

Mar 20, 2025Abstract:The teleoperation of complex, kinematically redundant robots with loco-manipulation capabilities represents a challenge for human operators, who have to learn how to operate the many degrees of freedom of the robot to accomplish a desired task. In this context, developing an easy-to-learn and easy-to-use human-robot interface is paramount. Recent works introduced a novel teleoperation concept, which relies on a virtual physical interaction interface between the human operator and the remote robot equivalent to a "Marionette" control, but whose feedback was limited to only visual feedback on the human side. In this paper, we propose extending the "Marionette" interface by adding a wearable haptic interface to cope with the limitations given by the previous works. Leveraging the additional haptic feedback modality, the human operator gains full sensorimotor control over the robot, and the awareness about the robot's response and interactions with the environment is greatly improved. We evaluated the proposed interface and the related teleoperation framework with naive users, assessing the teleoperation performance and the user experience with and without haptic feedback. The conducted experiments consisted in a loco-manipulation mission with the CENTAURO robot, a hybrid leg-wheel quadruped with a humanoid dual-arm upper body.

* 7 pages, 8 figures

A Laser-guided Interaction Interface for Providing Effective Robot Assistance to People with Upper Limbs Impairments

Mar 20, 2025

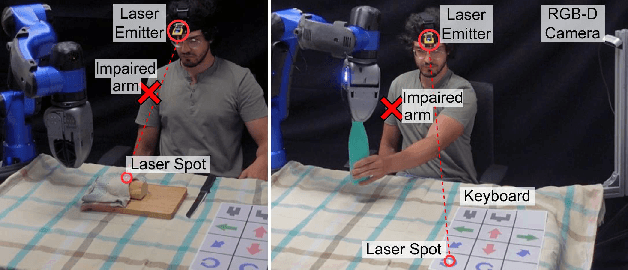

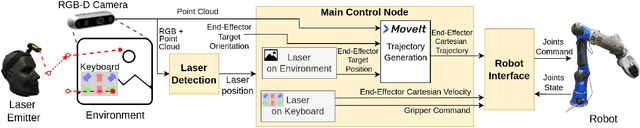

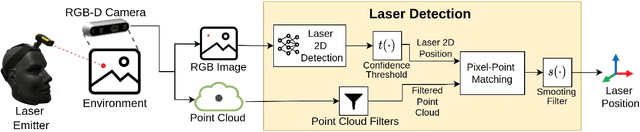

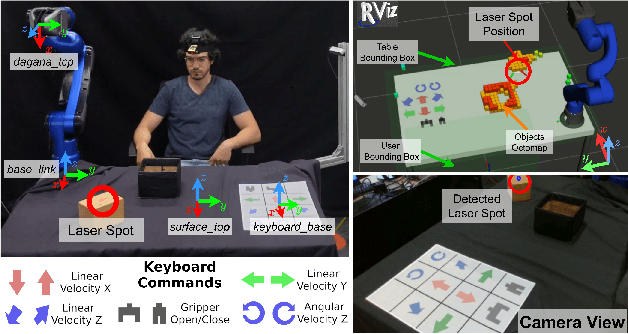

Abstract:Robotics has shown significant potential in assisting people with disabilities to enhance their independence and involvement in daily activities. Indeed, a societal long-term impact is expected in home-care assistance with the deployment of intelligent robotic interfaces. This work presents a human-robot interface developed to help people with upper limbs impairments, such as those affected by stroke injuries, in activities of everyday life. The proposed interface leverages on a visual servoing guidance component, which utilizes an inexpensive but effective laser emitter device. By projecting the laser on a surface within the workspace of the robot, the user is able to guide the robotic manipulator to desired locations, to reach, grasp and manipulate objects. Considering the targeted users, the laser emitter is worn on the head, enabling to intuitively control the robot motions with head movements that point the laser in the environment, which projection is detected with a neural network based perception module. The interface implements two control modalities: the first allows the user to select specific locations directly, commanding the robot to reach those points; the second employs a paper keyboard with buttons that can be virtually pressed by pointing the laser at them. These buttons enable a more direct control of the Cartesian velocity of the end-effector and provides additional functionalities such as commanding the action of the gripper. The proposed interface is evaluated in a series of manipulation tasks involving a 6DOF assistive robot manipulator equipped with 1DOF beak-like gripper. The two interface modalities are combined to successfully accomplish tasks requiring bimanual capacity that is usually affected in people with upper limbs impairments.

* 8 pages, 12 figures

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge