Johan Mathe

ICML Topological Deep Learning Challenge 2024: Beyond the Graph Domain

Sep 08, 2024

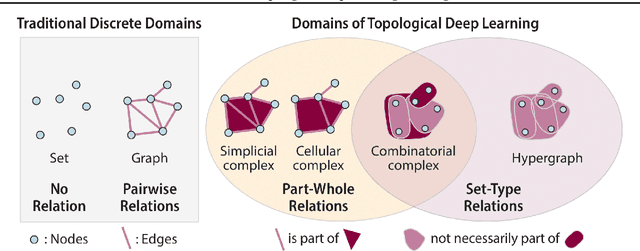

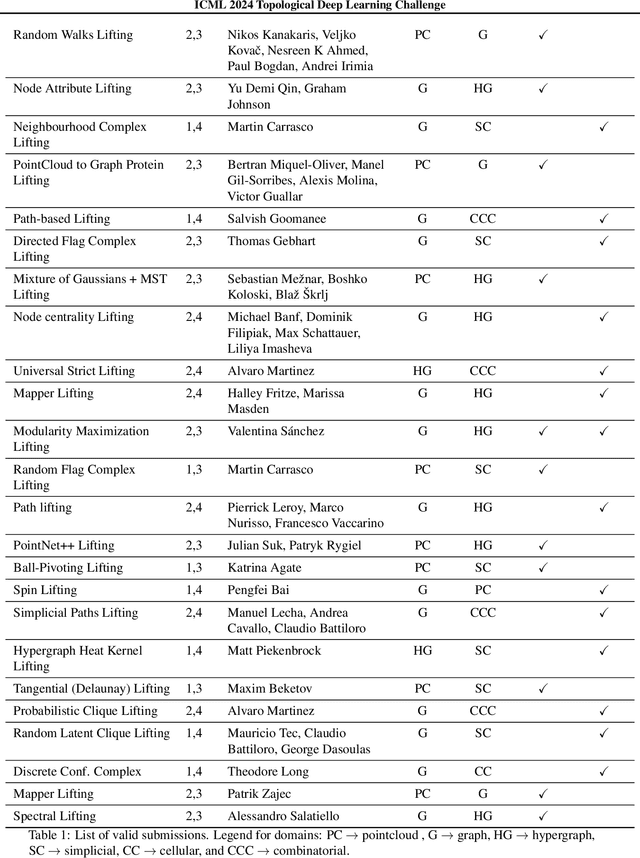

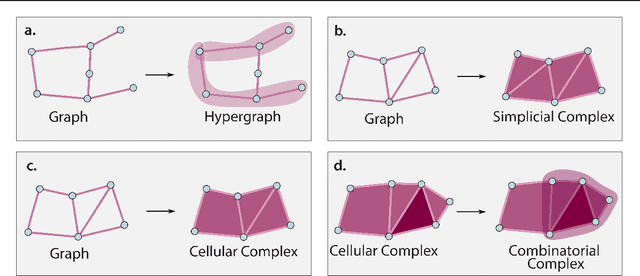

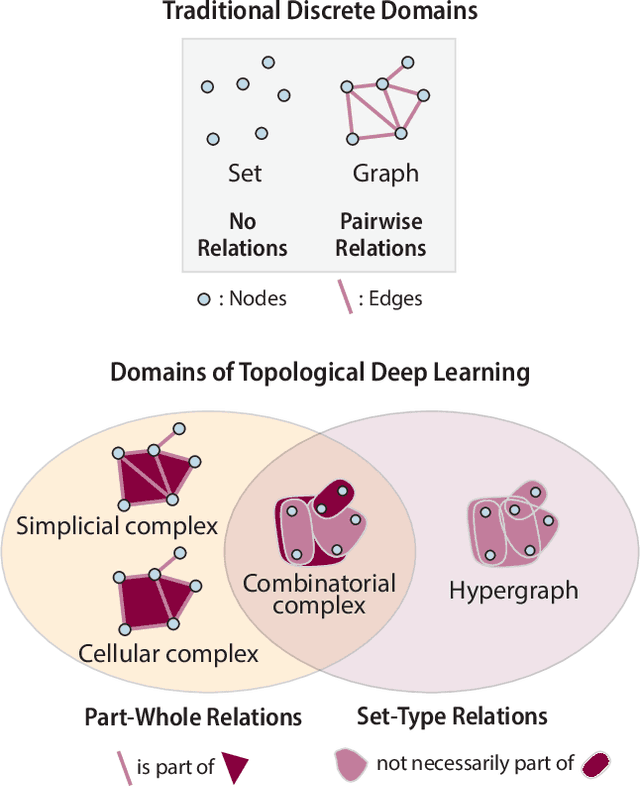

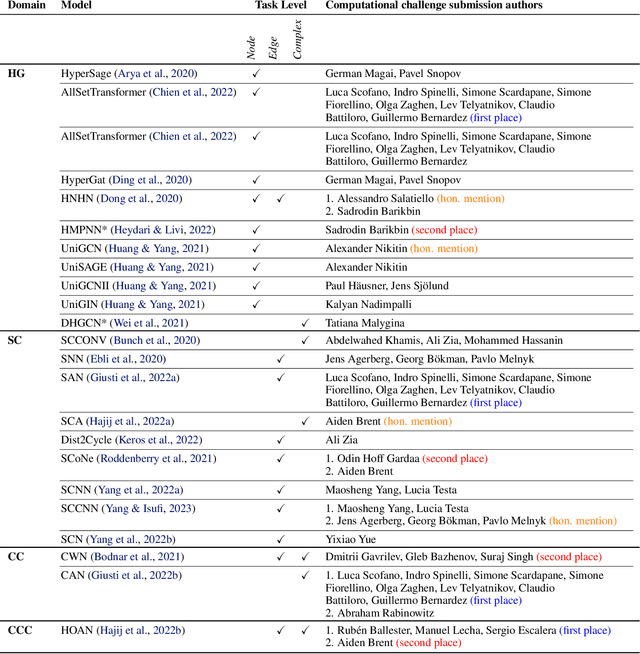

Abstract: This paper describes the 2nd edition of the ICML Topological Deep Learning Challenge, which was hosted within the ICML 2024 ELLIS Workshop on Geometry-grounded Representation Learning and Generative Modeling (GRaM). The challenge focused on the problem of representing data in different discrete topological domains in order to bridge the gap between Topological Deep Learning (TDL) and other types of structured datasets (e.g. point clouds, graphs). Specifically, participants were asked to design and implement topological liftings, i.e. mappings between different data structures and topological domains such as hypergraphs or simplicial/cell/combinatorial complexes. The challenge received 52 submissions satisfying all the requirements. This paper introduces the main scope of the challenge and summarizes the main results and findings.

Beyond Euclid: An Illustrated Guide to Modern Machine Learning with Geometric, Topological, and Algebraic Structures

Jul 12, 2024

Abstract: The enduring legacy of Euclidean geometry underpins classical machine learning, which, for decades, has been primarily developed for data lying in Euclidean space. Yet, modern machine learning increasingly encounters richly structured data that is inherently non-Euclidean. This data can exhibit intricate geometric, topological and algebraic structure: from the geometry of the curvature of space-time, to topologically complex interactions between neurons in the brain, to the algebraic transformations describing symmetries of physical systems. Extracting knowledge from such non-Euclidean data necessitates a broader mathematical perspective. Echoing the 19th-century revolutions that gave rise to non-Euclidean geometry, an emerging line of research is redefining modern machine learning with non-Euclidean structures. Its goal: generalizing classical methods to unconventional data types with geometry, topology, and algebra. In this review, we provide an accessible gateway to this fast-growing field and propose a graphical taxonomy that integrates recent advances into an intuitive unified framework. We subsequently extract insights into current challenges and highlight exciting opportunities for future development in this field.

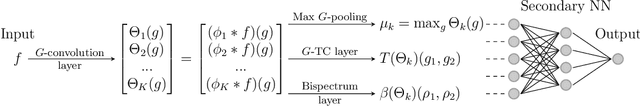

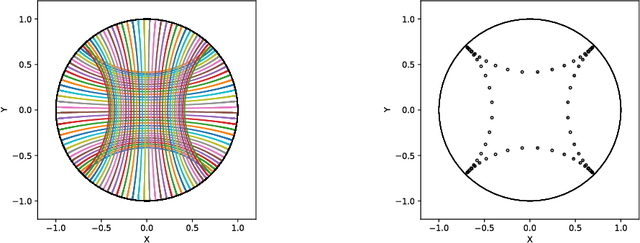

The Selective G-Bispectrum and its Inversion: Applications to G-Invariant Networks

Jul 10, 2024

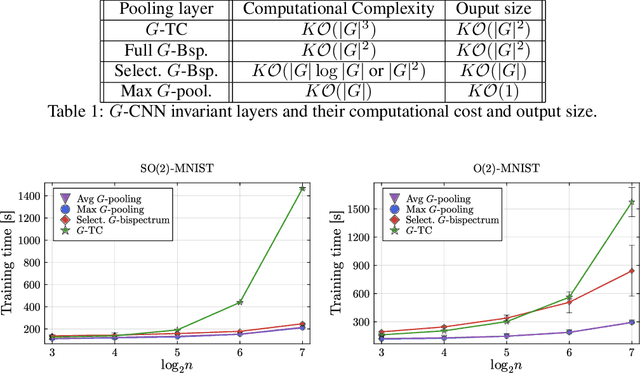

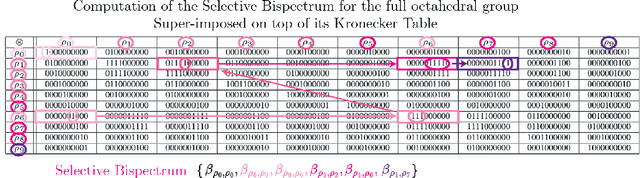

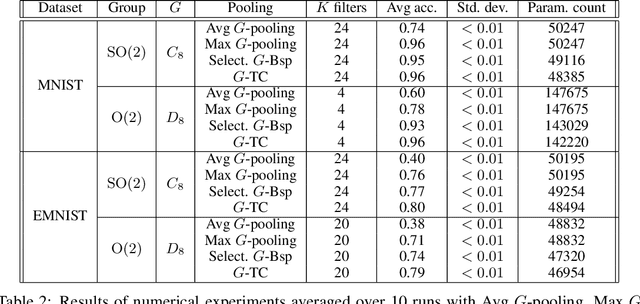

Abstract: An important problem in signal processing and deep learning is to achieve *invariance* to nuisance factors not relevant for the task. Since many of these factors are describable as the action of a group $G$ (e.g. rotations, translations, scalings), we want methods to be $G$-invariant. The $G$-Bispectrum extracts every characteristic of a given signal up to group action: for example, the shape of an object in an image, but not its orientation. Consequently, the $G$-Bispectrum has been incorporated into deep neural network architectures as a computational primitive for $G$-invariance, akin to a pooling mechanism but with greater selectivity and robustness. However, the computational cost of the $G$-Bispectrum ($\mathcal{O}(|G|^2)$, with $|G|$ the size of the group) has limited its widespread adoption. Here, we show that the $G$-Bispectrum computation contains redundancies that can be reduced into a *selective $G$-Bispectrum* with $\mathcal{O}(|G|)$ complexity. We prove desirable mathematical properties of the selective $G$-Bispectrum and demonstrate how its integration in neural networks enhances accuracy and robustness compared to traditional approaches, while enjoying considerable speedups compared to the full $G$-Bispectrum.
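To make the invariance and the cost argument concrete, here is a minimal sketch for the simplest case only: the cyclic group of translations acting on a 1-D signal, where the bispectrum is built from the discrete Fourier transform. The function names are hypothetical, and the reduced subset chosen here (one row of the full table plus one extra coefficient) is an illustrative assumption, not necessarily the selection made in the paper.

```python
import cmath


def dft(signal):
    """Discrete Fourier transform of a signal on the cyclic group Z_n."""
    n = len(signal)
    return [
        sum(x * cmath.exp(-2j * cmath.pi * k * t / n) for t, x in enumerate(signal))
        for k in range(n)
    ]


def full_bispectrum(signal):
    """All n^2 coefficients B[k1][k2] = F[k1] * F[k2] * conj(F[(k1 + k2) % n])."""
    f = dft(signal)
    n = len(f)
    return [
        [f[k1] * f[k2] * f[(k1 + k2) % n].conjugate() for k2 in range(n)]
        for k1 in range(n)
    ]


def reduced_bispectrum(signal):
    """Illustrative O(n) subset (an assumption): B[0][0] plus the row k1 = 1."""
    f = dft(signal)
    n = len(f)
    row_one = [f[1] * f[k] * f[(1 + k) % n].conjugate() for k in range(n)]
    return [f[0] * f[0] * f[0].conjugate()] + row_one


def cyclic_shift(signal, s):
    """Act on the signal with group element s, i.e. a cyclic translation."""
    s %= len(signal)
    return signal[-s:] + signal[:-s] if s else list(signal)


def max_abs_diff(a, b):
    """Largest coefficient-wise difference between two coefficient lists."""
    return max(abs(x - y) for x, y in zip(a, b))


signal = [1.0, 3.0, -2.0, 0.5, 4.0]
shifted = cyclic_shift(signal, 2)
gap = max_abs_diff(reduced_bispectrum(signal), reduced_bispectrum(shifted))
print(f"largest change under a shift: {gap:.2e}")
```

A shift by $s$ multiplies each Fourier coefficient $F_k$ by a phase $e^{-2\pi i k s / n}$, and the three phases in $F_{k_1} F_{k_2} \overline{F_{k_1 + k_2}}$ cancel, which is why both the full table and the reduced list are unchanged up to floating-point error.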

ICML 2023 Topological Deep Learning Challenge: Design and Results

Oct 02, 2023

Abstract: This paper presents the computational challenge on topological deep learning that was hosted within the ICML 2023 Workshop on Topology and Geometry in Machine Learning. The competition asked participants to provide open-source implementations of topological neural networks from the literature by contributing to the Python packages TopoNetX (data processing) and TopoModelX (deep learning). The challenge attracted twenty-eight qualifying submissions in its two-month duration. This paper describes the design of the challenge and summarizes its main findings.

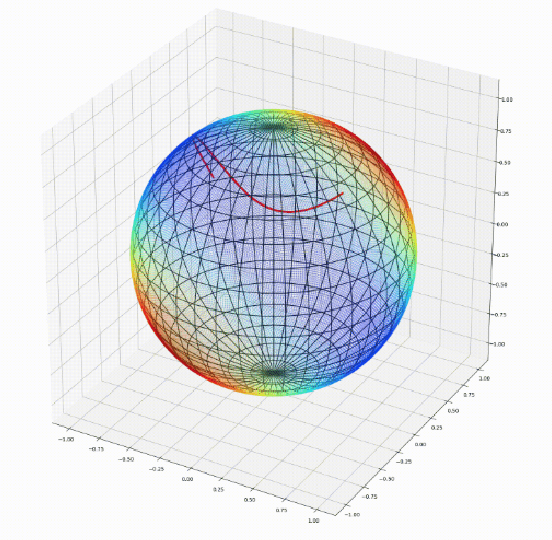

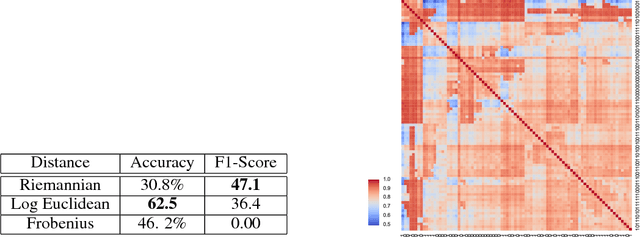

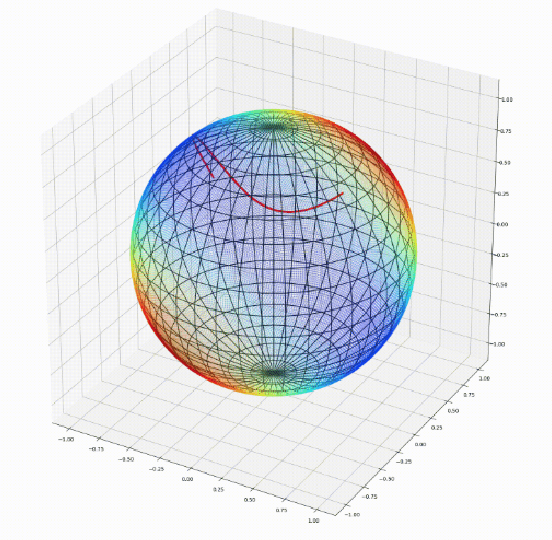

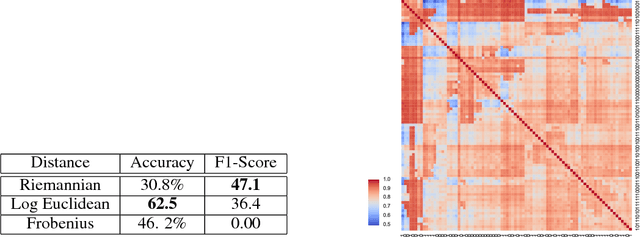

Geomstats: A Python Package for Riemannian Geometry in Machine Learning

Apr 07, 2020

Abstract: We introduce Geomstats, an open-source Python toolbox for computations and statistics on nonlinear manifolds, such as hyperbolic spaces, spaces of symmetric positive definite matrices, Lie groups of transformations, and many more. We provide object-oriented and extensively unit-tested implementations. Among others, manifolds come equipped with families of Riemannian metrics, with associated exponential and logarithmic maps, geodesics and parallel transport. Statistics and learning algorithms provide methods for estimation, clustering and dimension reduction on manifolds. All associated operations are vectorized for batch computation and provide support for different execution backends, namely NumPy, PyTorch and TensorFlow, enabling GPU acceleration. This paper presents the package, compares it with related libraries and provides relevant code examples. We show that Geomstats provides reliable building blocks to foster research in differential geometry and statistics, and to democratize the use of Riemannian geometry in machine learning applications. The source code is freely available under the MIT license at geomstats.ai.
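As a rough illustration of what exponential maps, logarithmic maps and geodesic distances compute, the sketch below implements them for the unit sphere using only the standard library. It deliberately does not use the Geomstats API; the function names are hypothetical and chosen for this example only.

```python
import math


def sphere_exp(base_point, tangent_vec):
    """Exponential map: follow the geodesic from base_point along tangent_vec."""
    norm = math.sqrt(sum(v * v for v in tangent_vec))
    if norm < 1e-12:
        return list(base_point)
    return [
        math.cos(norm) * p + math.sin(norm) * v / norm
        for p, v in zip(base_point, tangent_vec)
    ]


def sphere_dist(point_a, point_b):
    """Geodesic distance: the angle between two unit vectors."""
    cos_angle = sum(a * b for a, b in zip(point_a, point_b))
    return math.acos(max(-1.0, min(1.0, cos_angle)))


def sphere_log(base_point, point):
    """Logarithmic map: the tangent vector at base_point that reaches point.

    Not handled in this sketch: antipodal points, where the log is not unique.
    """
    cos_angle = max(-1.0, min(1.0, sum(p * q for p, q in zip(base_point, point))))
    direction = [q - cos_angle * p for p, q in zip(base_point, point)]
    norm = math.sqrt(sum(d * d for d in direction))
    if norm < 1e-12:
        return [0.0] * len(base_point)
    angle = math.acos(cos_angle)
    return [angle * d / norm for d in direction]


north_pole = [0.0, 0.0, 1.0]
tangent = [math.pi / 2, 0.0, 0.0]  # tangent at the pole, length = quarter circle
end_point = sphere_exp(north_pole, tangent)
recovered = sphere_log(north_pole, end_point)
print("end point:", [round(c, 6) for c in end_point])
print("distance:", sphere_dist(north_pole, end_point))
```

The exponential and logarithmic maps are inverse to each other away from the antipode, so `recovered` matches `tangent` up to rounding, and the geodesic distance equals the length of the tangent vector.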

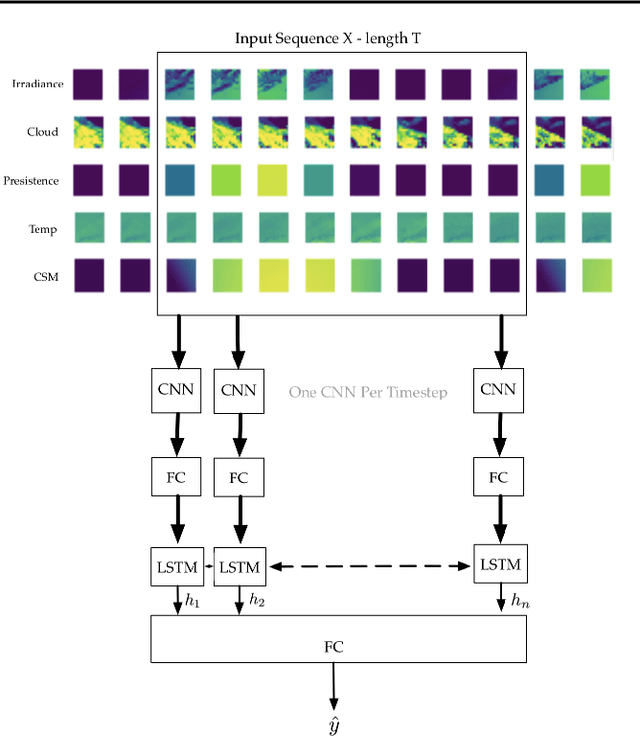

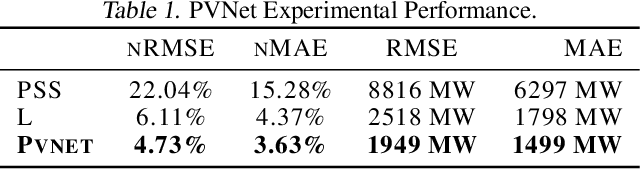

PVNet: A LRCN Architecture for Spatio-Temporal Photovoltaic Power Forecasting from Numerical Weather Prediction

Feb 04, 2019

Abstract: Photovoltaic (PV) power generation has emerged as one of the leading renewable energy sources. Yet, its production is characterized by high uncertainty, being dependent on weather conditions like solar irradiance and temperature. Predicting PV production, even at the 24-hour forecast horizon, remains a challenge and leads energy providers to keep idle (often carbon-emitting) plants. In this paper we introduce a Long-Term Recurrent Convolutional Network using Numerical Weather Predictions (NWP) to predict, in turn, PV production at the 24-hour and 48-hour forecast horizons. This network architecture fully leverages both temporal and spatial weather data, sampled over the whole geographical area of interest. We train our model on an NWP dataset from the National Oceanic and Atmospheric Administration (NOAA) to predict spatially aggregated PV production in Germany. We compare its performance to the persistence model and to state-of-the-art methods.
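For readers unfamiliar with the persistence baseline mentioned above, the sketch below shows the usual idea: the forecast for a given time is simply the value observed one horizon earlier. The data are synthetic toy values generated for this example, not results from the paper, and the function names are hypothetical.

```python
import math


def persistence_forecast(series, horizon):
    """Predict series[t] with series[t - horizon]; aligns with series[horizon:]."""
    if horizon <= 0 or horizon >= len(series):
        raise ValueError("horizon must be between 1 and len(series) - 1")
    return series[:-horizon]


def mean_absolute_error(actual, predicted):
    """Average absolute gap between observed and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def toy_day(peak):
    """Synthetic hourly PV curve: zero at night, a sine bump from 06:00 to 18:00."""
    return [peak * max(0.0, math.sin(math.pi * (hour - 6) / 12)) for hour in range(24)]


# Two toy days of hourly production: a clear day followed by a cloudier one.
production = toy_day(1.0) + toy_day(0.8)

horizon = 24  # hours, i.e. "tomorrow looks like today"
predicted = persistence_forecast(production, horizon)
actual = production[horizon:]
baseline_mae = mean_absolute_error(actual, predicted)
print(f"persistence MAE at {horizon} h: {baseline_mae:.4f}")
```

Any learned model has to beat this error to justify its complexity, which is why persistence is a common reference point in forecasting work.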

geomstats: a Python Package for Riemannian Geometry in Machine Learning

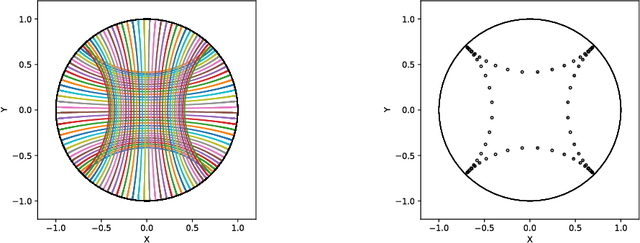

Nov 06, 2018

Abstract: We introduce geomstats, a Python package that performs computations on manifolds such as hyperspheres, hyperbolic spaces, spaces of symmetric positive definite matrices and Lie groups of transformations. We provide efficient and extensively unit-tested implementations of these manifolds, together with useful Riemannian metrics and associated Exponential and Logarithm maps. The corresponding geodesic distances provide a range of intuitive choices of Machine Learning loss functions. We also give the corresponding Riemannian gradients. The operations implemented in geomstats are available with different computing backends such as NumPy, TensorFlow and Keras. We have enabled GPU implementation and integrated geomstats manifold computations into the Keras deep learning framework. This paper also presents a review of manifolds in machine learning and an overview of the geomstats package with examples demonstrating its use for efficient and user-friendly Riemannian geometry.
