Jürgen Kurths

Predicting Instability in Complex Oscillator Networks: Limitations and Potentials of Network Measures and Machine Learning

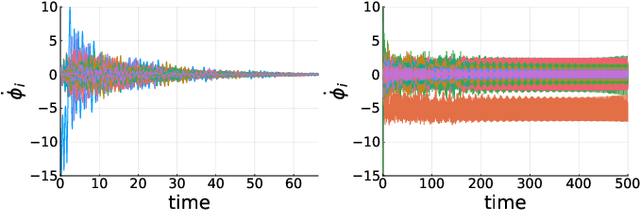

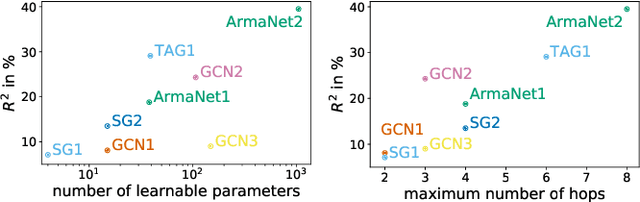

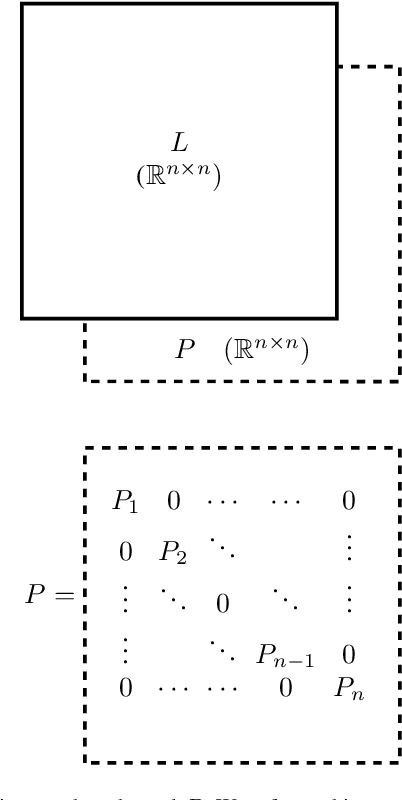

Feb 27, 2024

Abstract: A central question of network science is how functional properties of systems arise from their structure. For networked dynamical systems, structure is typically quantified with network measures. A functional property that is of theoretical and practical interest for oscillatory systems is the stability of synchrony to localized perturbations. Recently, Graph Neural Networks (GNNs) have been shown to predict this stability successfully; at the same time, network measures have struggled to paint a clear picture. Here we collect 46 relevant network measures and find that no small subset can reliably predict stability. The performance of GNNs can only be matched by combining all network measures and nodewise machine learning. However, unlike GNNs, this approach fails to extrapolate from network ensembles to several real power grid topologies. This suggests that correlations of network measures and function may be misleading, and that GNNs capture the causal relationship between structure and stability substantially better.

Embedding Theory of Reservoir Computing and Reducing Reservoir Network Using Time Delays

Mar 16, 2023

Abstract: Reservoir computing (RC), a particular form of recurrent neural network, is under explosive development due to its exceptional efficacy and high performance in the reconstruction and/or prediction of complex physical systems. However, the mechanism behind such effective applications of RC is still unclear, awaiting deep and systematic exploration. Here, combining the delayed embedding theory with the generalized embedding theory, we rigorously prove that RC is essentially a high-dimensional embedding of the original input nonlinear dynamical system. Thus, using this embedding property, we unify into a universal framework the standard RC and the time-delayed RC, in which we newly introduce time delays only into the network's output layer, and we further find a trade-off relation between the time delays and the number of neurons in RC. Based on this finding, we significantly reduce the network size of RC for reconstructing and predicting some representative physical systems, and, more surprisingly, a single-neuron reservoir with time delays is sometimes sufficient for achieving those tasks.
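The delayed embedding that this abstract builds on can be illustrated with a generic delay-coordinate construction. The sketch below is not the paper's reservoir architecture; it only shows how a scalar series is turned into delay vectors, and the function name `delay_embed` and its parameters `dim` and `tau` are assumptions made for illustration.

```python
def delay_embed(series, dim, tau):
    """Build delay vectors [x(t), x(t - tau), ..., x(t - (dim - 1) * tau)].

    `dim` is the embedding dimension and `tau` the delay in samples;
    both names are illustrative and not taken from the paper.
    """
    if dim < 1 or tau < 1:
        raise ValueError("dim and tau must be positive integers")
    start = (dim - 1) * tau  # first index with a full delay history
    return [
        [series[t - k * tau] for k in range(dim)]
        for t in range(start, len(series))
    ]


signal = [0, 1, 2, 3, 4, 5]
vectors = delay_embed(signal, dim=3, tau=2)
print(vectors)  # [[4, 2, 0], [5, 3, 1]]
```

Each delay vector reuses past samples of one signal in place of additional state variables, which is loosely the kind of substitution behind the trade-off between time delays and number of neurons mentioned in the abstract.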

Symbiosis of an artificial neural network and models of biological neurons: training and testing

Feb 03, 2023

Abstract: In this paper we show the possibility of creating, and identifying the features of, an artificial neural network (ANN) which consists of mathematical models of biological neurons. The FitzHugh-Nagumo (FHN) system is used as an example of a model that demonstrates simplified neuron activity. First, in order to reveal how biological neurons can be embedded within an ANN, we train the ANN with nonlinear neurons to solve a basic image recognition problem on the MNIST database; next, we describe how FHN systems can be introduced into this trained ANN. Finally, we show that an ANN with FHN systems inside can be successfully trained and that its accuracy increases. This work opens up great opportunities in the direction of analog neural networks, in which artificial neurons can be replaced by biological ones.
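For readers unfamiliar with the FitzHugh-Nagumo system named in this abstract, a minimal simulation sketch follows. It uses one common textbook form of the equations, dv/dt = v - v^3/3 - w + I and dw/dt = eps (v + a - b w), integrated with a plain forward Euler step. The parameter values, the function names and the integration scheme are assumptions chosen for illustration and are not taken from the paper.

```python
def simulate_fhn(current, a=0.7, b=0.8, eps=0.08,
                 dt=0.01, steps=40000, v0=-1.0, w0=-0.5):
    """Integrate the FitzHugh-Nagumo equations with forward Euler.

    Returns the membrane-like variable v at every step.
    All default values are conventional illustrative choices.
    """
    v, w = v0, w0
    trace = []
    for _ in range(steps):
        dv = v - v ** 3 / 3.0 - w + current  # fast (voltage-like) variable
        dw = eps * (v + a - b * w)           # slow recovery variable
        v += dt * dv
        w += dt * dw
        trace.append(v)
    return trace


def late_amplitude(trace):
    """Peak-to-peak range of v over the second half of the run."""
    tail = trace[len(trace) // 2:]
    return max(tail) - min(tail)


quiet = late_amplitude(simulate_fhn(current=0.0))    # settles to rest
spiking = late_amplitude(simulate_fhn(current=0.5))  # sustained oscillation
print(quiet, spiking)
```

With these assumed parameters the unit rests when the input current is zero and oscillates persistently for a moderate constant current, which is the simplified neuron activity the abstract refers to.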

PrEF: Percolation-based Evolutionary Framework for the diffusion-source-localization problem in large networks

May 19, 2022

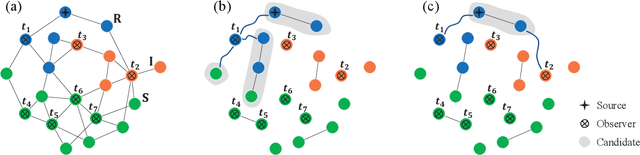

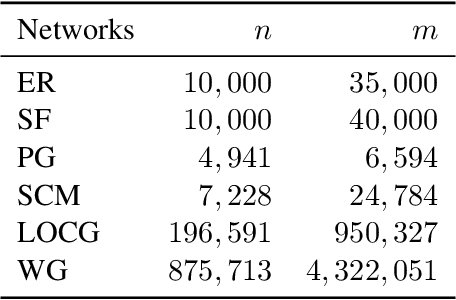

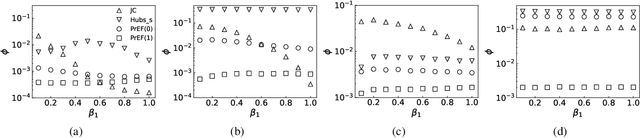

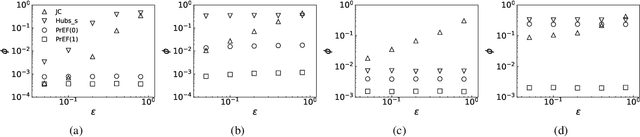

Abstract: We assume that the state of a number of nodes in a network could be investigated if necessary, and study what configuration of those nodes could facilitate a better solution for the diffusion-source-localization (DSL) problem. In particular, we formulate a candidate set that is guaranteed to contain the diffusion source, and propose a method, the Percolation-based Evolutionary Framework (PrEF), to minimize this set. Hence one could further conduct a more intensive investigation on only a few nodes to target the source. To achieve that, we first demonstrate that there are some similarities between the DSL problem and the network immunization problem. We find that the minimization of the candidate set is equivalent to the minimization of the order parameter if we view the observer set as the removal node set. Hence, PrEF is developed based on network percolation and an evolutionary algorithm. The effectiveness of the proposed method is validated on both model and empirical networks under varied circumstances. Our results show that the developed approach could achieve a much smaller candidate set compared to the state of the art in almost all cases. Meanwhile, our approach is also more stable, i.e., it has similar performance irrespective of varied infection probabilities, diffusion models, and outbreak ranges. More importantly, our approach might provide a new framework to tackle the DSL problem in extremely large networks.

Predicting Dynamic Stability of Power Grids using Graph Neural Networks

Aug 18, 2021

Abstract: The prediction of dynamical stability of power grids becomes more important and challenging with increasing shares of renewable energy sources due to their decentralized structure, reduced inertia and volatility. We investigate the feasibility of applying graph neural networks (GNN) to predict dynamic stability of synchronisation in complex power grids using the single-node basin stability (SNBS) as a measure. To do so, we generate two synthetic datasets for grids with 20 and 100 nodes, respectively, and estimate SNBS using Monte-Carlo sampling. Those datasets are used to train and evaluate the performance of eight different GNN-models. All models use the full graph without simplifications as input and predict SNBS in a nodal-regression-setup. We show that SNBS can be predicted in general and that the performance varies significantly across different GNN-models. Furthermore, we observe interesting transfer capabilities of our approach: GNN-models trained on smaller grids can directly be applied on larger grids without the need of retraining.
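The Monte-Carlo estimation of basin stability mentioned in this abstract can be sketched on a much simpler system than the 20- and 100-node grids used in the paper. The example below assumes a single machine coupled to an infinite bus, with the swing equation d(omega)/dt = P - alpha * omega - K * sin(theta). The parameter values, the sampling box for the perturbations, the integration scheme and all function names are illustrative assumptions, not the paper's setup.

```python
import math
import random


def returns_to_sync(theta, omega, power, coupling, damping,
                    dt=0.01, t_end=100.0, tol=0.05):
    """Integrate the swing equation and report whether the frequency
    deviation omega has decayed to (almost) zero at the end of the run."""
    for _ in range(int(t_end / dt)):
        # Semi-implicit Euler: update omega first, then theta.
        omega += dt * (power - damping * omega - coupling * math.sin(theta))
        theta += dt * omega
    return abs(omega) < tol


def basin_stability(power, coupling, damping, samples=60, seed=0):
    """Fraction of random perturbations from which the machine
    returns to the synchronous state (a Monte-Carlo estimate)."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(samples):
        theta0 = rng.uniform(-math.pi, math.pi)  # assumed phase range
        omega0 = rng.uniform(-15.0, 15.0)        # assumed frequency range
        hits += returns_to_sync(theta0, omega0, power, coupling, damping)
    return hits / samples


strong = basin_stability(power=0.5, coupling=8.0, damping=2.0)
weak = basin_stability(power=2.0, coupling=8.0, damping=0.3)
print(strong, weak)
```

In a networked setting the same counting procedure would be repeated with perturbations applied to one node at a time, giving one value per node. Those per-node values are the regression targets the abstract describes.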

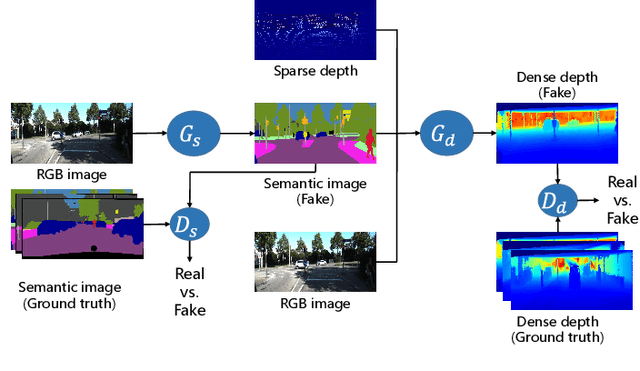

Multi-task GANs for Semantic Segmentation and Depth Completion with Cycle Consistency

Nov 29, 2020

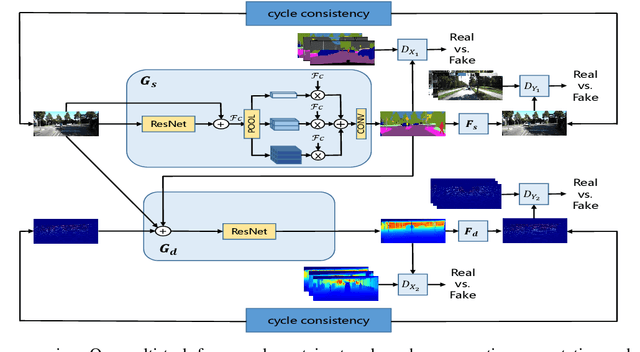

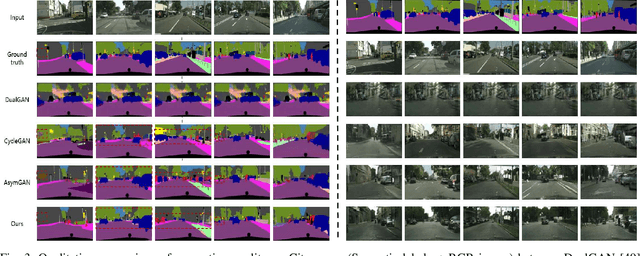

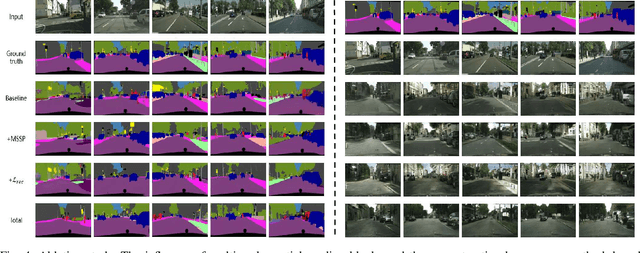

Abstract: Semantic segmentation and depth completion are two challenging tasks in scene understanding, and they are widely used in robotics and autonomous driving. Although several works have been proposed to jointly train these two tasks using small modifications, like changing the last layer, the result of one task is not utilized to improve the performance of the other, despite the similarities between the two tasks. In this paper, we propose multi-task generative adversarial networks (Multi-task GANs), which are not only competent in semantic segmentation and depth completion, but also improve the accuracy of depth completion through generated semantic images. In addition, we improve the details of generated semantic images based on CycleGAN by introducing multi-scale spatial pooling blocks and the structural similarity reconstruction loss. Furthermore, considering the inner consistency between semantic and geometric structures, we develop a semantic-guided smoothness loss to improve depth completion results. Extensive experiments on the Cityscapes dataset and the KITTI depth completion benchmark show that the Multi-task GANs are capable of achieving competitive performance for both semantic segmentation and depth completion tasks.

When Autonomous Systems Meet Accuracy and Transferability through AI: A Survey

Apr 30, 2020

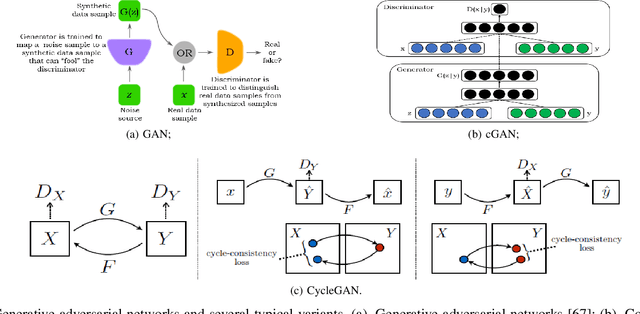

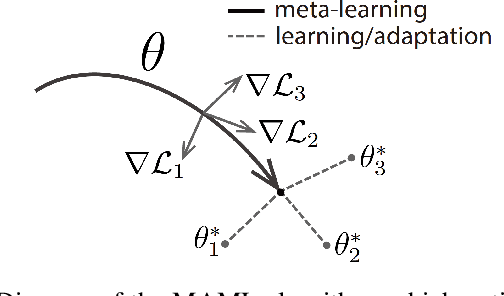

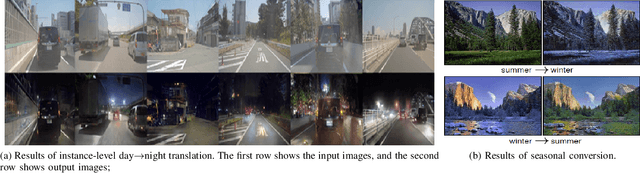

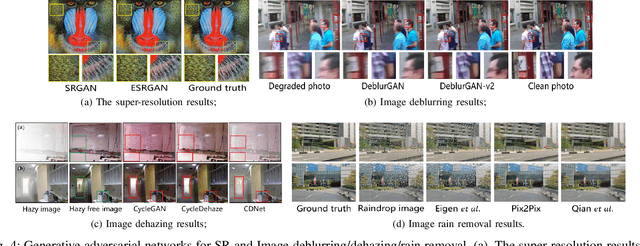

Abstract: With widespread applications of artificial intelligence (AI), the capabilities of perception, understanding, decision-making and control for autonomous systems have improved significantly in the past years. When autonomous systems are evaluated for accuracy and transferability, several AI methods, like adversarial learning, reinforcement learning (RL) and meta-learning, show powerful performance. Here, we review the learning-based approaches in autonomous systems from the perspectives of accuracy and transferability. Accuracy means that a well-trained model shows good results during the testing phase, in which the testing set shares the same task or data distribution with the training set. Transferability means that when a well-trained model is transferred to other testing domains, the accuracy is still good. Firstly, we introduce some basic concepts of transfer learning and then present some preliminaries of adversarial learning, RL and meta-learning. Secondly, we focus on reviewing accuracy, transferability, or both, to show the advantages of adversarial learning, like generative adversarial networks (GANs), in typical computer vision tasks in autonomous systems, including image style transfer, image superresolution, image deblurring/dehazing/rain removal, semantic segmentation, depth estimation, pedestrian detection and person re-identification (re-ID). Then, we further review the performance of RL and meta-learning in terms of accuracy, transferability, or both in autonomous systems, involving pedestrian tracking, robot navigation and robotic manipulation. Finally, we discuss several challenges and future topics for using adversarial learning, RL and meta-learning in autonomous systems.

Machine Discovery of Partial Differential Equations from Spatiotemporal Data

Sep 15, 2019

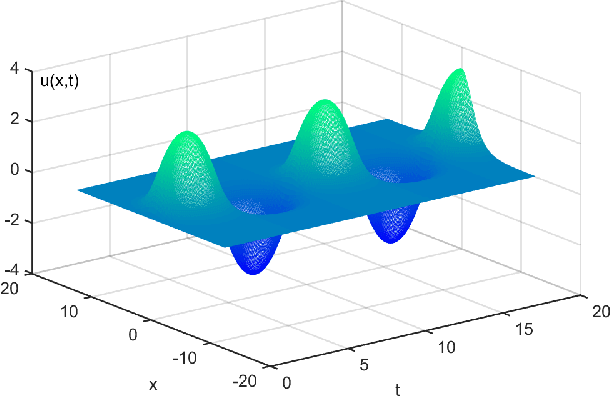

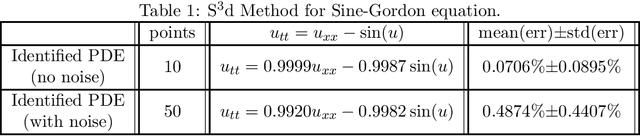

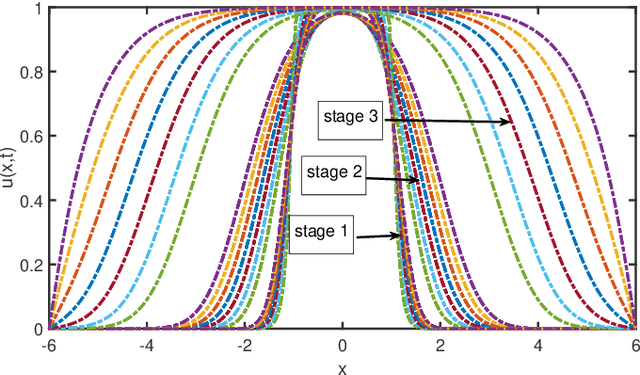

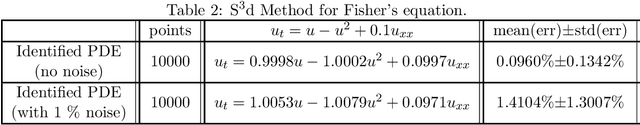

Abstract: The study presents a general framework for discovering underlying Partial Differential Equations (PDEs) using measured spatiotemporal data. The method, called Sparse Spatiotemporal System Discovery ($\text{S}^3\text{d}$), decides which physical terms are necessary and which can be removed (because they are physically negligible in the sense that they do not affect the dynamics too much) from a pool of candidate functions. The method is built on the recent development of Sparse Bayesian Learning, which enforces sparsity in the to-be-identified PDEs and can therefore balance model complexity and fitting error with theoretical guarantees. Without leveraging prior knowledge or assumptions in the discovery process, we use an automated approach to discover ten types of PDEs, including the famous Navier-Stokes and sine-Gordon equations, from simulation data alone. Moreover, we demonstrate our data-driven discovery process with the Complex Ginzburg-Landau Equation (CGLE) using data measured from a traveling-wave convection experiment. Our machine discovery approach presents solutions that have the potential to inspire, support and assist physicists in establishing physical laws from measured spatiotemporal data, especially in notoriously difficult fields that are often too complex to allow a straightforward establishment of physical laws, such as biophysics, fluid dynamics, neuroscience or nonlinear optics.

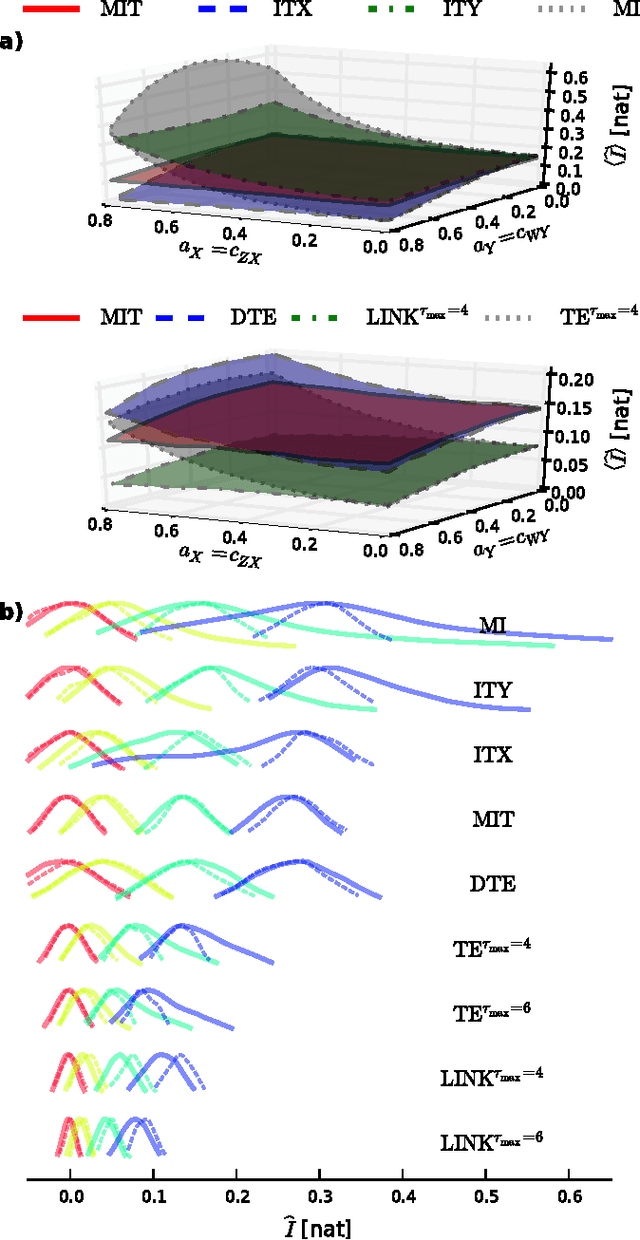

Optimal model-free prediction from multivariate time series

Jun 18, 2015

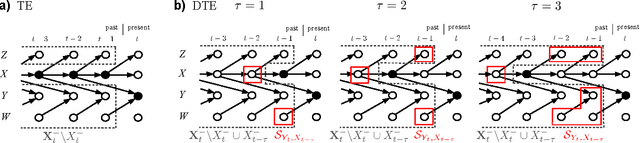

Abstract: Forecasting a time series from multivariate predictors constitutes a challenging problem, especially using model-free approaches. Most techniques, such as nearest-neighbor prediction, quickly suffer from the curse of dimensionality and overfitting for more than a few predictors, which has limited their application mostly to the univariate case. Therefore, selection strategies are needed that harness the available information as efficiently as possible. Since often the right combination of predictors matters, ideally all subsets of possible predictors should be tested for their predictive power, but the exponentially growing number of combinations makes such an approach computationally prohibitive. Here a prediction scheme that overcomes this strong limitation is introduced utilizing a causal pre-selection step which drastically reduces the number of possible predictors to the most predictive set of causal drivers, making a globally optimal search scheme tractable. The information-theoretic optimality is derived and practical selection criteria are discussed. As demonstrated for multivariate nonlinear stochastic delay processes, the optimal scheme can even be less computationally expensive than commonly used sub-optimal schemes like forward selection. The method suggests a general framework to apply the optimal model-free approach to select variables and subsequently fit a model to further improve a prediction or learn statistical dependencies. The performance of this framework is illustrated on a climatological index of El Niño Southern Oscillation.

* 14 pages, 9 figures

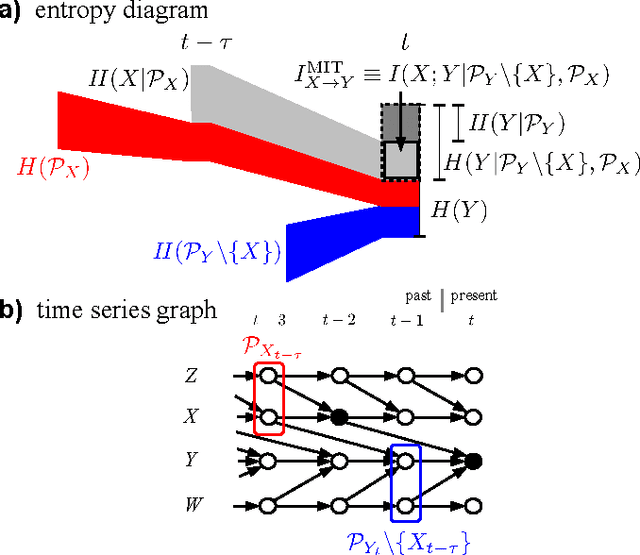

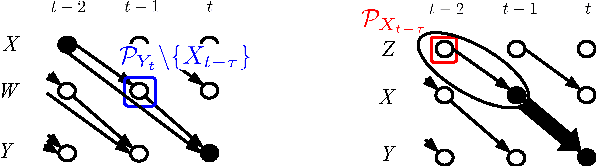

Quantifying Causal Coupling Strength: A Lag-specific Measure For Multivariate Time Series Related To Transfer Entropy

Nov 21, 2012

Abstract: While it is an important problem to identify the existence of causal associations between two components of a multivariate time series, a topic addressed in Runge et al. (2012), it is even more important to assess the strength of their association in a meaningful way. In the present article we focus on the problem of defining a meaningful coupling strength using information-theoretic measures and demonstrate the shortcomings of the well-known mutual information and transfer entropy. Instead, we propose a certain time-delayed conditional mutual information, the momentary information transfer (MIT), as a measure of association that is general, causal and lag-specific, reflects a well interpretable notion of coupling strength and is practically computable. MIT is based on the fundamental concept of source entropy, which we utilize to yield a notion of coupling strength that is, compared to mutual information and transfer entropy, well interpretable, in that for many cases it solely depends on the interaction of the two components at a certain lag. In particular, MIT is thus in many cases able to exclude the misleading influence of autodependency within a process in an information-theoretic way. We formalize and prove this idea analytically and numerically for a general class of nonlinear stochastic processes and illustrate the potential of MIT on climatological data.

* 15 pages, 6 figures; accepted for publication in Physical Review E
