Dayong Ding

Cross-domain Collaborative Learning for Recognizing Multiple Retinal Diseases from Wide-Field Fundus Images

May 14, 2023Abstract:This paper addresses the emerging task of recognizing multiple retinal diseases from wide-field (WF) and ultra-wide-field (UWF) fundus images. For an effective reuse of existing labeled color fundus photo (CFP) data, we propose Cross-domain Collaborative Learning (CdCL). Inspired by the success of fixed-ratio based mixup in unsupervised domain adaptation, we re-purpose this strategy for the current task. Due to the intrinsic disparity between the field-of-view of CFP and WF/UWF images, a scale bias naturally exists in a mixup sample that the anatomic structure from a CFP image will be considerably larger than its WF/UWF counterpart. The CdCL method resolves the issue by Scale-bias Correction, which employs Transformers for producing scale-invariant features. As demonstrated by extensive experiments on multiple datasets covering both WF and UWF images, the proposed method compares favorably against a number of competitive baselines.

Segmentation-based Information Extraction and Amalgamation in Fundus Images for Glaucoma Detection

Sep 23, 2022

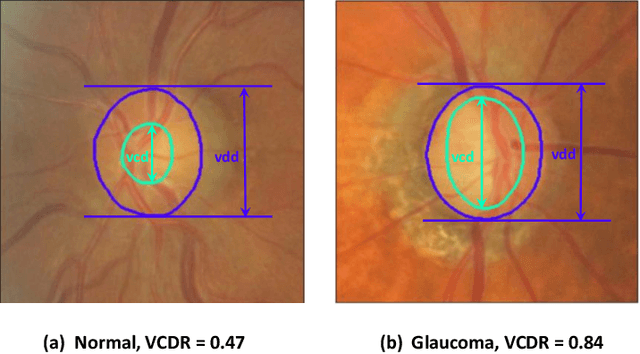

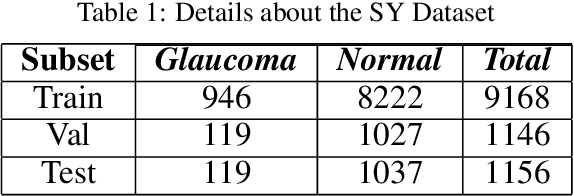

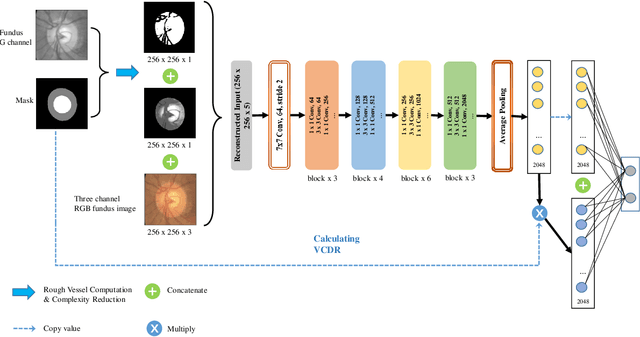

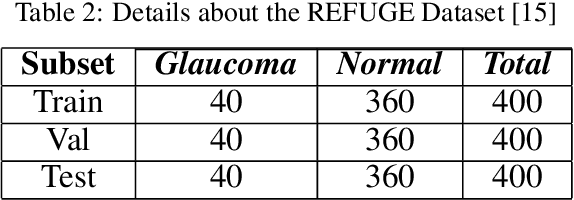

Abstract:Glaucoma is a severe blinding disease, for which automatic detection methods are urgently needed to alleviate the scarcity of ophthalmologists. Many works have proposed to employ deep learning methods that involve the segmentation of optic disc and cup for glaucoma detection, in which the segmentation process is often considered merely as an upstream sub-task. The relationship between fundus images and segmentation masks in terms of joint decision-making in glaucoma assessment is rarely explored. We propose a novel segmentation-based information extraction and amalgamation method for the task of glaucoma detection, which leverages the robustness of segmentation masks without disregarding the rich information in the original fundus images. Experimental results on both private and public datasets demonstrate that our proposed method outperforms all models that utilize solely either fundus images or masks.

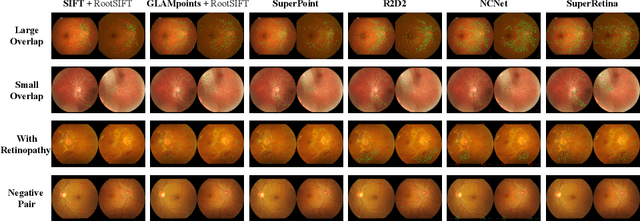

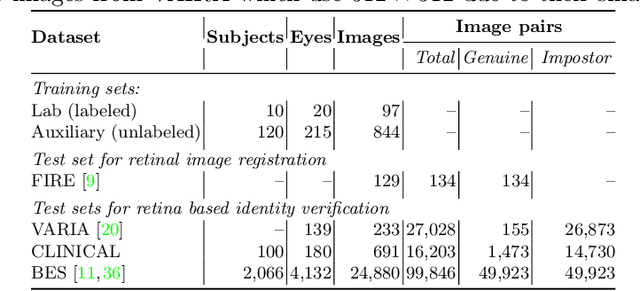

Semi-Supervised Keypoint Detector and Descriptor for Retinal Image Matching

Jul 16, 2022

Abstract:For retinal image matching (RIM), we propose SuperRetina, the first end-to-end method with jointly trainable keypoint detector and descriptor. SuperRetina is trained in a novel semi-supervised manner. A small set of (nearly 100) images are incompletely labeled and used to supervise the network to detect keypoints on the vascular tree. To attack the incompleteness of manual labeling, we propose Progressive Keypoint Expansion to enrich the keypoint labels at each training epoch. By utilizing a keypoint-based improved triplet loss as its description loss, SuperRetina produces highly discriminative descriptors at full input image size. Extensive experiments on multiple real-world datasets justify the viability of SuperRetina. Even with manual labeling replaced by auto labeling and thus making the training process fully manual-annotation free, SuperRetina compares favorably against a number of strong baselines for two RIM tasks, i.e. image registration and identity verification. SuperRetina will be open source.

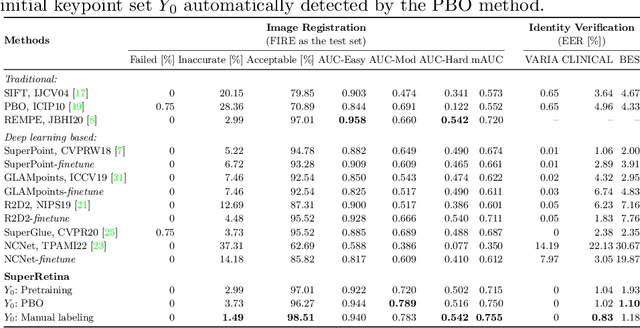

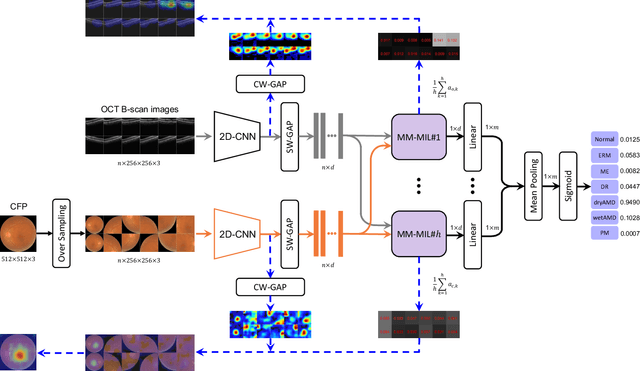

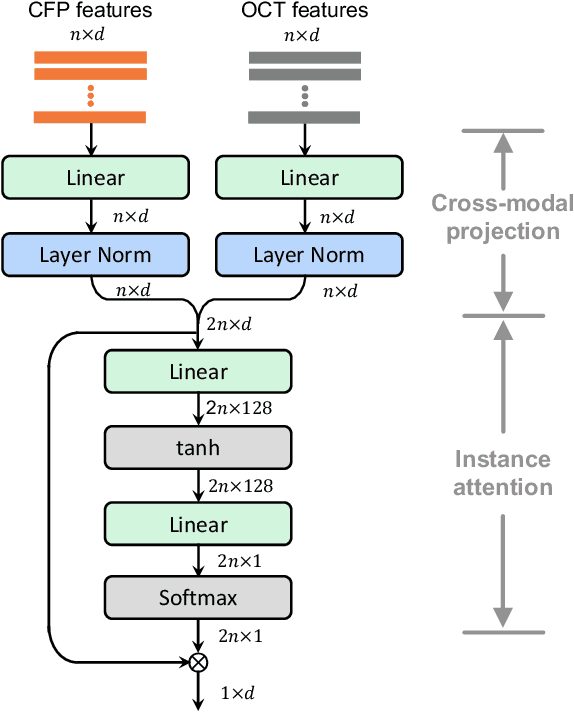

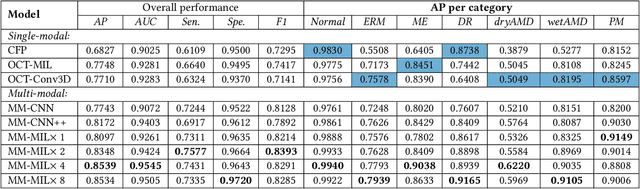

Multi-Modal Multi-Instance Learning for Retinal Disease Recognition

Sep 25, 2021

Abstract:This paper attacks an emerging challenge of multi-modal retinal disease recognition. Given a multi-modal case consisting of a color fundus photo (CFP) and an array of OCT B-scan images acquired during an eye examination, we aim to build a deep neural network that recognizes multiple vision-threatening diseases for the given case. As the diagnostic efficacy of CFP and OCT is disease-dependent, the network's ability of being both selective and interpretable is important. Moreover, as both data acquisition and manual labeling are extremely expensive in the medical domain, the network has to be relatively lightweight for learning from a limited set of labeled multi-modal samples. Prior art on retinal disease recognition focuses either on a single disease or on a single modality, leaving multi-modal fusion largely underexplored. We propose in this paper Multi-Modal Multi-Instance Learning (MM-MIL) for selectively fusing CFP and OCT modalities. Its lightweight architecture (as compared to current multi-head attention modules) makes it suited for learning from relatively small-sized datasets. For an effective use of MM-MIL, we propose to generate a pseudo sequence of CFPs by over sampling a given CFP. The benefits of this tactic include well balancing instances across modalities, increasing the resolution of the CFP input, and finding out regions of the CFP most relevant with respect to the final diagnosis. Extensive experiments on a real-world dataset consisting of 1,206 multi-modal cases from 1,193 eyes of 836 subjects demonstrate the viability of the proposed model.

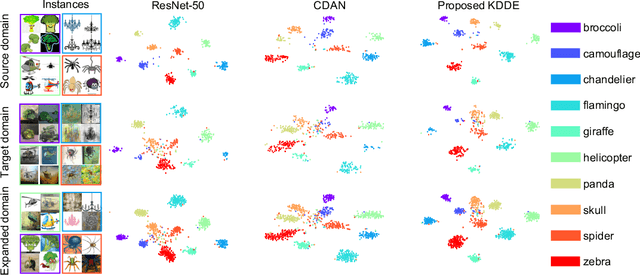

Unsupervised Domain Expansion for Visual Categorization

Apr 01, 2021

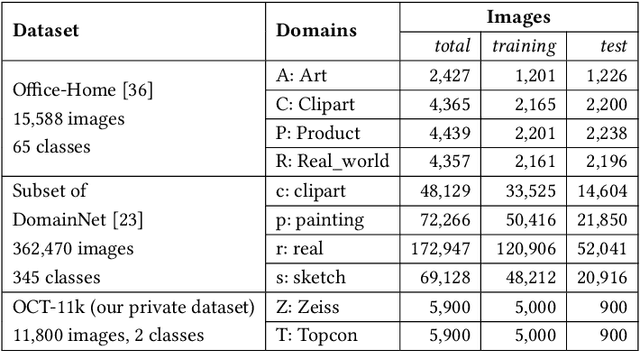

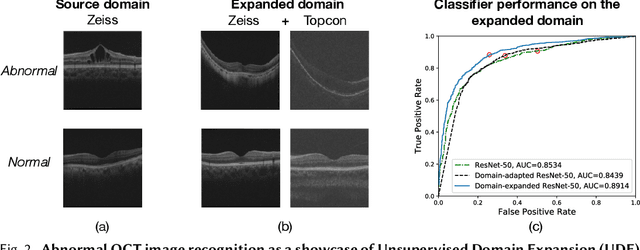

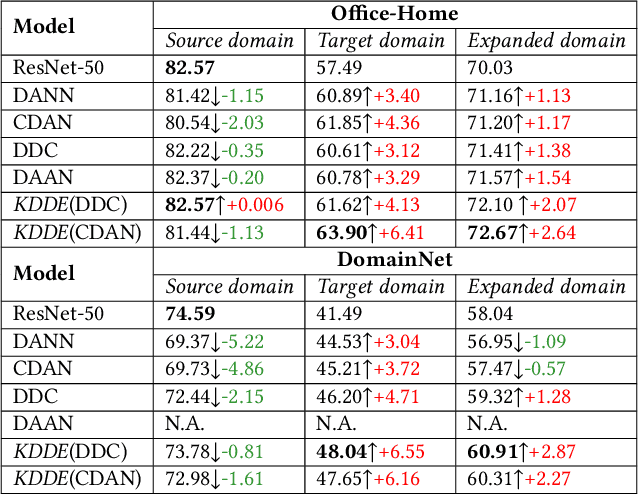

Abstract:Expanding visual categorization into a novel domain without the need of extra annotation has been a long-term interest for multimedia intelligence. Previously, this challenge has been approached by unsupervised domain adaptation (UDA). Given labeled data from a source domain and unlabeled data from a target domain, UDA seeks for a deep representation that is both discriminative and domain-invariant. While UDA focuses on the target domain, we argue that the performance on both source and target domains matters, as in practice which domain a test example comes from is unknown. In this paper we extend UDA by proposing a new task called unsupervised domain expansion (UDE), which aims to adapt a deep model for the target domain with its unlabeled data, meanwhile maintaining the model's performance on the source domain. We propose Knowledge Distillation Domain Expansion (KDDE) as a general method for the UDE task. Its domain-adaptation module can be instantiated with any existing model. We develop a knowledge distillation based learning mechanism, enabling KDDE to optimize a single objective wherein the source and target domains are equally treated. Extensive experiments on two major benchmarks, i.e., Office-Home and DomainNet, show that KDDE compares favorably against four competitive baselines, i.e., DDC, DANN, DAAN, and CDAN, for both UDA and UDE tasks. Our study also reveals that the current UDA models improve their performance on the target domain at the cost of noticeable performance loss on the source domain.

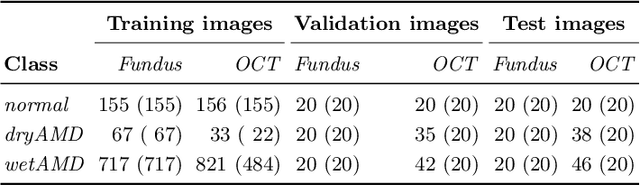

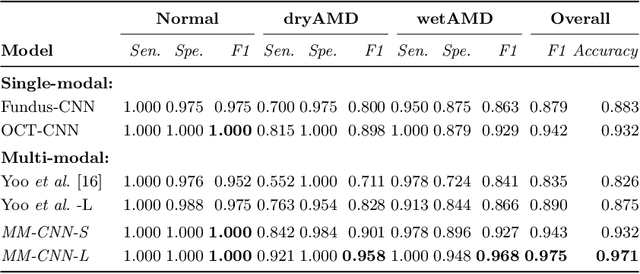

Learning Two-Stream CNN for Multi-Modal Age-related Macular Degeneration Categorization

Dec 03, 2020

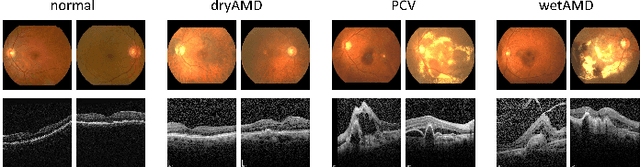

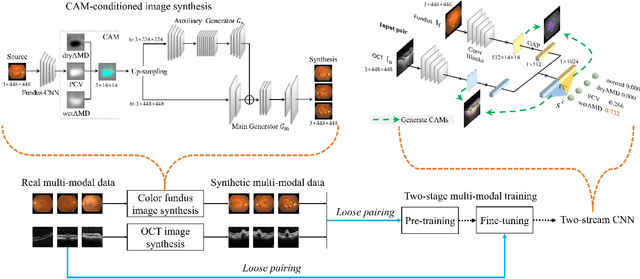

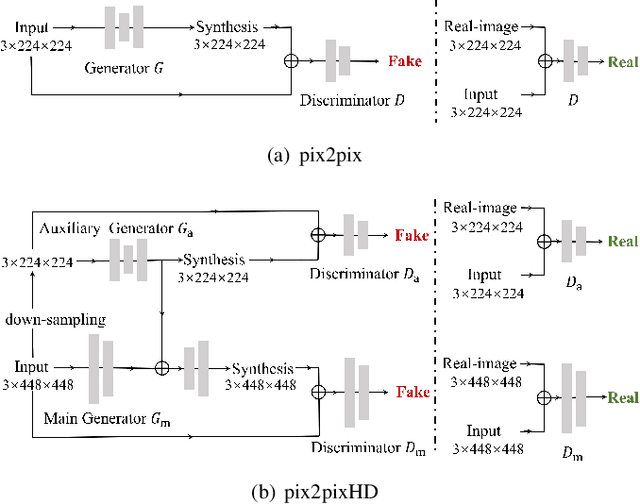

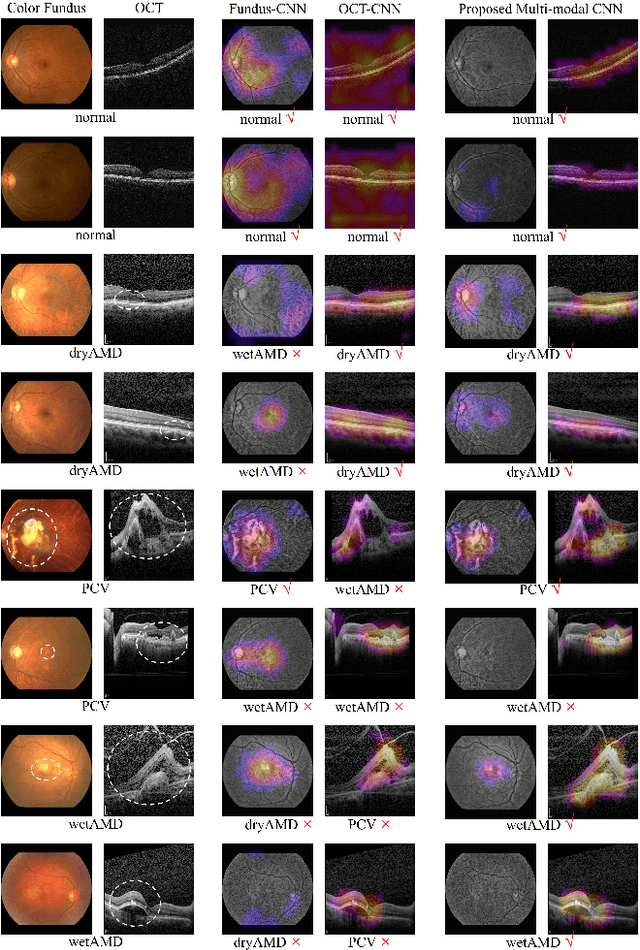

Abstract:This paper tackles automated categorization of Age-related Macular Degeneration (AMD), a common macular disease among people over 50. Previous research efforts mainly focus on AMD categorization with a single-modal input, let it be a color fundus image or an OCT image. By contrast, we consider AMD categorization given a multi-modal input, a direction that is clinically meaningful yet mostly unexplored. Contrary to the prior art that takes a traditional approach of feature extraction plus classifier training that cannot be jointly optimized, we opt for end-to-end multi-modal Convolutional Neural Networks (MM-CNN). Our MM-CNN is instantiated by a two-stream CNN, with spatially-invariant fusion to combine information from the fundus and OCT streams. In order to visually interpret the contribution of the individual modalities to the final prediction, we extend the class activation mapping (CAM) technique to the multi-modal scenario. For effective training of MM-CNN, we develop two data augmentation methods. One is GAN-based fundus / OCT image synthesis, with our novel use of CAMs as conditional input of a high-resolution image-to-image translation GAN. The other method is Loose Pairing, which pairs a fundus image and an OCT image on the basis of their classes instead of eye identities. Experiments on a clinical dataset consisting of 1,099 color fundus images and 1,290 OCT images acquired from 1,099 distinct eyes verify the effectiveness of the proposed solution for multi-modal AMD categorization.

Learn to Segment Retinal Lesions and Beyond

Dec 25, 2019

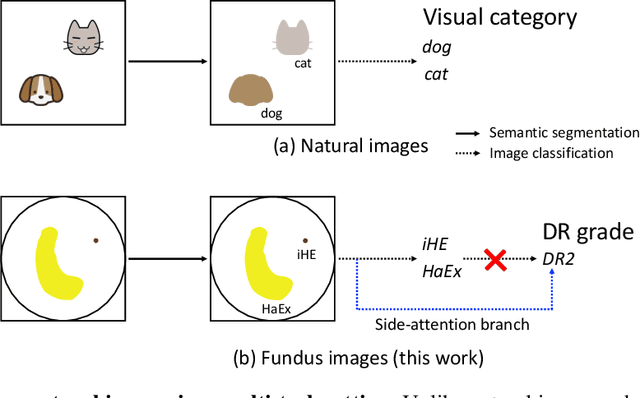

Abstract:Towards automated retinal screening, this paper makes an endeavor to simultaneously achieve pixel-level retinal lesion segmentation and image-level disease classification. Such a multi-task approach is crucial for accurate and clinically interpretable disease diagnosis. Prior art is insufficient due to three challenges, that is, lesions lacking objective boundaries, clinical importance of lesions irrelevant to their size, and the lack of one-to-one correspondence between lesion and disease classes. This paper attacks the three challenges in the context of diabetic retinopathy (DR) grading. We propose L-Net, a new variant of fully convolutional networks, with its expansive path re-designed to tackle the first challenge. A dual loss that leverages both semantic segmentation and image classification losses is devised to resolve the second challenge. We propose Side-Attention Net (SiAN) as our multi-task framework. Harnessing L-Net as a side-attention branch, SiAN simultaneously improves DR grading and interprets the decision with lesion maps. A set of 12K fundus images is manually segmented by 45 ophthalmologists for 8 DR-related lesions, resulting in 290K manual segments in total. Extensive experiments on this large-scale dataset show that our proposed approach surpasses the prior art for multiple tasks including lesion segmentation, lesion classification and DR grading.

Hierarchical Attention Networks for Medical Image Segmentation

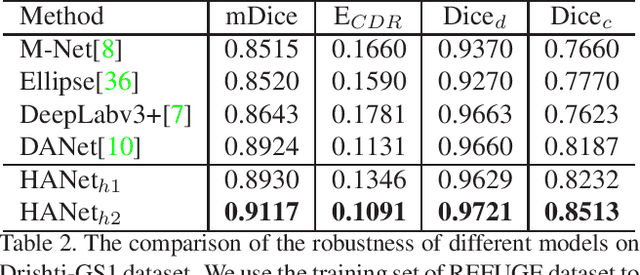

Nov 25, 2019

Abstract:The medical image is characterized by the inter-class indistinction, high variability, and noise, where the recognition of pixels is challenging. Unlike previous self-attention based methods that capture context information from one level, we reformulate the self-attention mechanism from the view of the high-order graph and propose a novel method, namely Hierarchical Attention Network (HANet), to address the problem of medical image segmentation. Concretely, an HA module embedded in the HANet captures context information from neighbors of multiple levels, where these neighbors are extracted from the high-order graph. In the high-order graph, there will be an edge between two nodes only if the correlation between them is high enough, which naturally reduces the noisy attention information caused by the inter-class indistinction. The proposed HA module is robust to the variance of input and can be flexibly inserted into the existing convolution neural networks. We conduct experiments on three medical image segmentation tasks including optic disc/cup segmentation, blood vessel segmentation, and lung segmentation. Extensive results show our method is more effective and robust than the existing state-of-the-art methods.

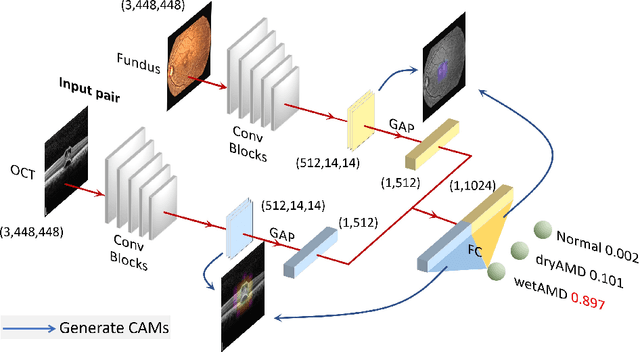

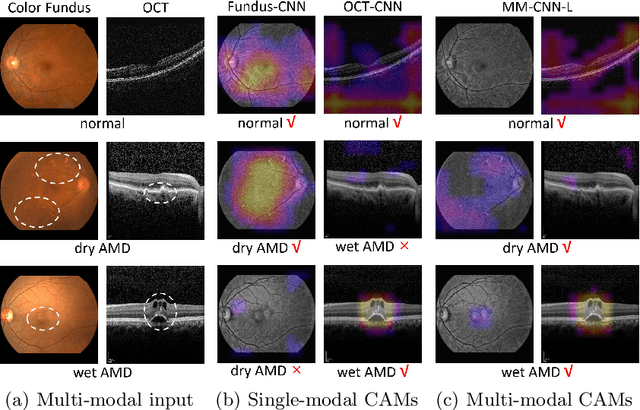

Two-Stream CNN with Loose Pair Training for Multi-modal AMD Categorization

Jul 28, 2019

Abstract:This paper studies automated categorization of age-related macular degeneration (AMD) given a multi-modal input, which consists of a color fundus image and an optical coherence tomography (OCT) image from a specific eye. Previous work uses a traditional method, comprised of feature extraction and classifier training that cannot be optimized jointly. By contrast, we propose a two-stream convolutional neural network (CNN) that is end-to-end. The CNN's fusion layer is tailored to the need of fusing information from the fundus and OCT streams. For generating more multi-modal training instances, we introduce Loose Pair training, where a fundus image and an OCT image are paired based on class labels rather than eyes. Moreover, for a visual interpretation of how the individual modalities make contributions, we extend the class activation mapping technique to the multi-modal scenario. Experiments on a real-world dataset collected from an outpatient clinic justify the viability of our proposal for multi-modal AMD categorization.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge