Zhihong Wang

Seed-Prover 1.5: Mastering Undergraduate-Level Theorem Proving via Learning from Experience

Dec 19, 2025

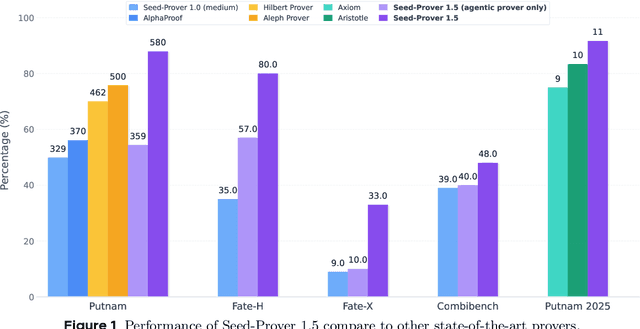

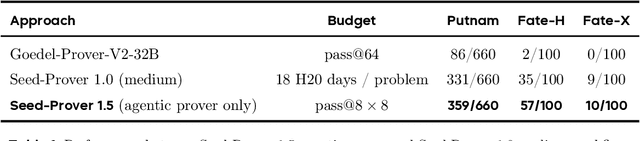

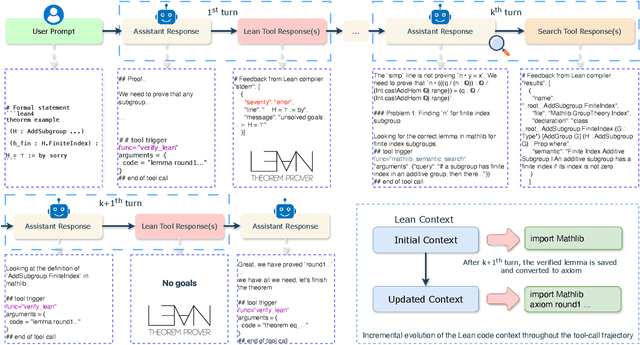

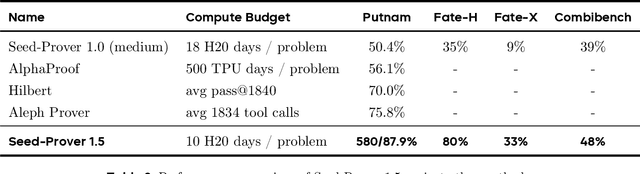

Abstract: Large language models have recently made significant progress in generating rigorous mathematical proofs. In contrast, utilizing LLMs for theorem proving in formal languages (such as Lean) remains challenging and computationally expensive, particularly when addressing problems at the undergraduate level and beyond. In this work, we present **Seed-Prover 1.5**, a formal theorem-proving model trained via large-scale agentic reinforcement learning, alongside an efficient test-time scaling (TTS) workflow. Through extensive interactions with Lean and other tools, the model continuously accumulates experience during the RL process, substantially enhancing the capability and efficiency of formal theorem proving. Furthermore, leveraging recent advancements in natural language proving, our TTS workflow efficiently bridges the gap between natural and formal languages. Compared to state-of-the-art methods, Seed-Prover 1.5 achieves superior performance with a smaller compute budget. It solves **88% of PutnamBench** (undergraduate-level), **80% of Fate-H** (graduate-level), and **33% of Fate-X** (PhD-level) problems. Notably, using our system, we solved **11 out of 12 problems** from Putnam 2025 within 9 hours. Our findings suggest that scaling learning from experience, driven by high-quality formal feedback, holds immense potential for the future of formal mathematical reasoning.

Seed-Prover: Deep and Broad Reasoning for Automated Theorem Proving

Aug 01, 2025

Abstract: LLMs have demonstrated strong mathematical reasoning abilities by leveraging reinforcement learning with long chain-of-thought, yet they continue to struggle with theorem proving due to the lack of clear supervision signals when solely using natural language. Dedicated domain-specific languages like Lean provide clear supervision via formal verification of proofs, enabling effective training through reinforcement learning. In this work, we propose **Seed-Prover**, a lemma-style whole-proof reasoning model. Seed-Prover can iteratively refine its proof based on Lean feedback, proved lemmas, and self-summarization. To solve IMO-level contest problems, we design three test-time inference strategies that enable both deep and broad reasoning. Seed-Prover proves 78.1% of formalized past IMO problems, saturates MiniF2F, and achieves over 50% on PutnamBench, outperforming the previous state-of-the-art by a large margin. To address the lack of geometry support in Lean, we introduce a geometry reasoning engine **Seed-Geometry**, which outperforms previous formal geometry engines. We use these two systems to participate in IMO 2025 and fully prove 5 out of 6 problems. This work represents a significant advancement in automated mathematical reasoning, demonstrating the effectiveness of formal verification with long chain-of-thought reasoning.
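As a rough illustration of what "lemma-style" proving can look like in Lean 4, the toy sketch below states a small helper lemma first and then reuses it in the main theorem. The statements and the names `swap_inner` and `swap_all` are hypothetical and chosen only for illustration; they are not taken from the paper or from any Seed-Prover output.

```lean
-- Hypothetical helper lemma: proved once, then reusable by later proofs.
theorem swap_inner (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b

-- Hypothetical main theorem: rewrites with the lemma, then closes the goal.
theorem swap_all (a b c : Nat) : (a + b) + c = c + (b + a) := by
  rw [swap_inner a b]            -- goal becomes (b + a) + c = c + (b + a)
  exact Nat.add_comm (b + a) c
```

The point of the structure is that each lemma is checked by Lean independently, so a verified lemma can be kept even when the proof of the main theorem still fails.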

DarkFarseer: Inductive Spatio-temporal Kriging via Hidden Style Enhancement and Sparsity-Noise Mitigation

Jan 06, 2025

Abstract: With the rapid growth of the Internet of Things and Cyber-Physical Systems, widespread sensor deployment has become essential. However, the high costs of building sensor networks limit their scale and coverage, making fine-grained deployment challenging. Inductive Spatio-Temporal Kriging (ISK) addresses this issue by introducing virtual sensors. Based on graph neural networks (GNNs) extracting the relationships between physical and virtual sensors, ISK can infer the measurements of virtual sensors from physical sensors. However, current ISK methods rely on conventional message-passing mechanisms and network architectures, without effectively extracting spatio-temporal features of physical sensors or focusing on representing virtual sensors. Additionally, existing graph construction methods face issues of sparse and noisy connections, degrading ISK performance. To address these issues, we propose DarkFarseer, a novel ISK framework with three key components. First, we propose the Neighbor Hidden Style Enhancement module with a style transfer strategy to enhance the representation of virtual nodes in a temporal-then-spatial manner to better extract the spatial relationships between physical and virtual nodes. Second, we propose Virtual-Component Contrastive Learning, which aims to enrich the node representation by establishing the association between the patterns of virtual nodes and the regional patterns within graph components. Lastly, we design a Similarity-Based Graph Denoising Strategy, which reduces the connectivity strength of noisy connections around virtual nodes and their neighbors based on their temporal information and regional spatial patterns. Extensive experiments demonstrate that DarkFarseer significantly outperforms existing ISK methods.

Temporal Knowledge Graph Completion with Time-sensitive Relations in Hypercomplex Space

Mar 02, 2024

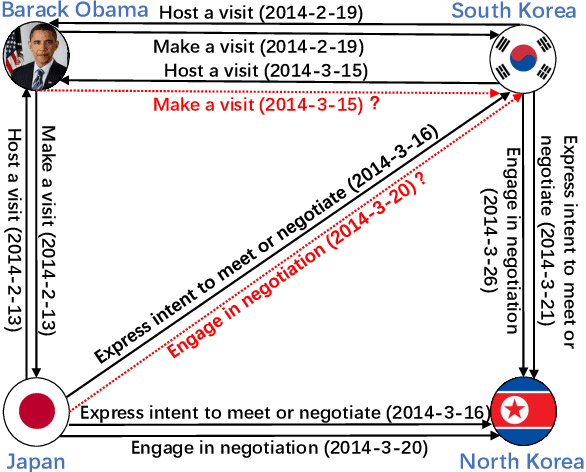

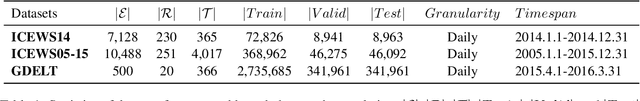

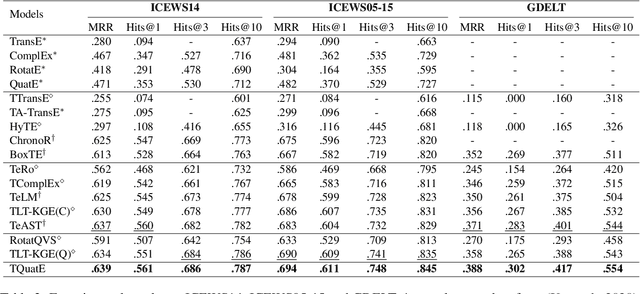

Abstract: Temporal knowledge graph completion (TKGC) aims to fill in missing facts within a given temporal knowledge graph at a specific time. Existing methods, operating in real or complex spaces, have demonstrated promising performance in this task. This paper advances beyond conventional approaches by introducing more expressive quaternion representations for TKGC within hypercomplex space. Unlike existing quaternion-based methods, our study focuses on capturing time-sensitive relations rather than time-aware entities. Specifically, we model time-sensitive relations through time-aware rotation and periodic time translation, effectively capturing complex temporal variability. Furthermore, we theoretically demonstrate our method's capability to model symmetric, asymmetric, inverse, compositional, and evolutionary relation patterns. Comprehensive experiments on public datasets validate that our proposed approach achieves state-of-the-art performance in the field of TKGC.
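To make the quaternion terminology concrete, here is a minimal Python sketch of the Hamilton product and of one possible way to combine a rotation with a periodic translation. The abstract does not give the model's equations, so `time_aware_relation` and its `amplitude` and `frequency` parameters are assumptions for illustration only, not the paper's scoring function.

```python
import math

def hamilton(p, q):
    """Hamilton product of two quaternions given as (w, x, y, z) tuples."""
    a1, b1, c1, d1 = p
    a2, b2, c2, d2 = q
    return (
        a1 * a2 - b1 * b2 - c1 * c2 - d1 * d2,
        a1 * b2 + b1 * a2 + c1 * d2 - d1 * c2,
        a1 * c2 - b1 * d2 + c1 * a2 + d1 * b2,
        a1 * d2 + b1 * c2 - c1 * b2 + d1 * a2,
    )

def norm(q):
    """Euclidean norm of a quaternion."""
    return math.sqrt(sum(v * v for v in q))

def normalize(q):
    """Scale a non-zero quaternion to unit norm so it acts as a pure rotation."""
    n = norm(q)
    return tuple(v / n for v in q)

def time_aware_relation(relation, time_q, t, amplitude, frequency):
    """Hypothetical time-sensitive relation (an assumption, not the paper's formula):
    rotate the relation by a unit time quaternion, then add a sinusoidal shift in t."""
    rotated = hamilton(relation, normalize(time_q))
    shift = amplitude * math.sin(frequency * t)
    return tuple(v + shift for v in rotated)
```

Multiplying by a unit quaternion preserves the norm of the other operand, and the product is not commutative, which is one reason quaternion embeddings can distinguish a relation from its inverse.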

Relevance Feedback with Brain Signals

Dec 09, 2023

Abstract: The Relevance Feedback (RF) process relies on accurate and real-time relevance estimation of feedback documents to improve retrieval performance. Since collecting explicit relevance annotations imposes an extra burden on the user, extensive studies have explored using pseudo-relevance signals and implicit feedback signals as substitutes. However, such signals are indirect indicators of relevance and suffer from complex search scenarios where user interactions are absent or biased. Recently, advances in portable and high-precision brain-computer interface (BCI) devices have made it possible to monitor users' brain activity during the search process. Brain signals can directly reflect users' psychological responses to search results and can thus act as additional, unbiased RF signals. To explore the effectiveness of brain signals in the context of RF, we propose a novel RF framework that combines BCI-based relevance feedback with pseudo-relevance signals and implicit signals to improve the performance of document re-ranking. The experimental results on the user study dataset show that incorporating brain signals leads to significant performance improvement in our RF framework. Besides, we observe that brain signals perform particularly well in several hard search scenarios, especially when implicit signals as feedback are missing or noisy. This reveals when and how to exploit brain signals in the context of RF.

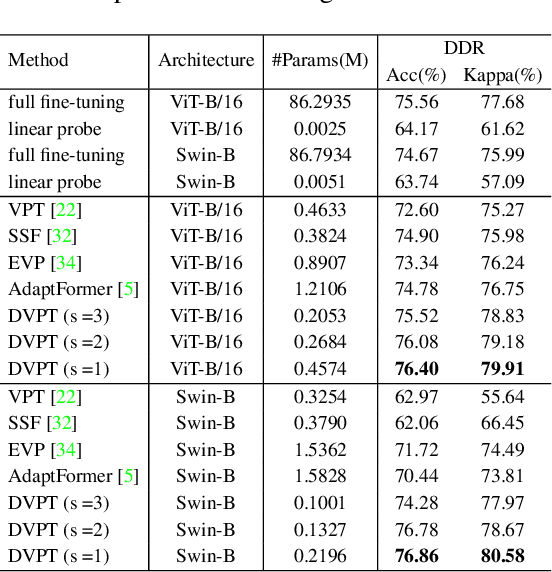

DVPT: Dynamic Visual Prompt Tuning of Large Pre-trained Models for Medical Image Analysis

Jul 19, 2023

Abstract: Limited labeled data makes it hard to train models from scratch in the medical domain, and an important paradigm is pre-training and then fine-tuning. Large pre-trained models contain rich representations, which can be adapted to downstream medical tasks. However, existing methods either tune all the parameters or the task-specific layers of the pre-trained models, ignoring the input variations of medical images, and thus they are not efficient or effective. In this work, we aim to study parameter-efficient fine-tuning (PEFT) for medical image analysis, and propose a dynamic visual prompt tuning method, named DVPT. It can extract knowledge beneficial to downstream tasks from large models with a few trainable parameters. Firstly, the frozen features are transformed by a lightweight bottleneck layer to learn the domain-specific distribution of downstream medical tasks. Then, a few learnable visual prompts are used as dynamic queries that conduct cross-attention with the transformed features, attempting to acquire sample-specific knowledge that is suitable for each sample. Finally, the features are projected to the original feature dimension and aggregated with the frozen features. This DVPT module can be shared between different Transformer layers, further reducing the trainable parameters. To validate DVPT, we conduct extensive experiments with different pre-trained models on medical classification and segmentation tasks. We find that such a PEFT method can not only efficiently adapt the pre-trained models to the medical domain, but also bring data efficiency with partially labeled data. For example, with 0.5% extra trainable parameters, our method not only outperforms state-of-the-art PEFT methods, but also surpasses full fine-tuning by more than 2.20% Kappa score on the medical classification task. It can save up to 60% of labeled data and 99% of the storage cost of ViT-B/16.

A Digital Twin Empowered Lightweight Model Sharing Scheme for Multi-Robot Systems

May 03, 2023

Abstract: A multi-robot system for manufacturing is an Industry Internet of Things (IIoT) paradigm with significant operational cost savings and productivity improvement, where Unmanned Aerial Vehicles (UAVs) are employed to control and implement collaborative productions without human intervention. This mission-critical system relies on 3-dimensional (3-D) scene recognition to improve operation accuracy in the production line and autonomous piloting. However, implementing 3-D point cloud learning, such as PointNet, is challenging due to the limited sensing and computing resources available on UAVs. Therefore, we propose a Digital Twin (DT) empowered Knowledge Distillation (KD) method to generate several lightweight learning models and select the optimal model to deploy on UAVs. With a digital replica of the UAVs preserved at the edge server, the DT system controls the model sharing network topology and learning model structure to improve recognition accuracy further. Moreover, we employ network calculus to formulate and solve the model sharing configuration problem toward minimal resource consumption, as well as convergence. Simulation experiments are conducted over a popular point cloud dataset to evaluate the proposed scheme. Experiment results show that the proposed model sharing scheme outperforms the individual model in terms of computing resource consumption and recognition accuracy.
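For readers unfamiliar with knowledge distillation, the sketch below shows the standard temperature-softened distillation loss in plain Python, plus a toy selection step over several candidate students. The abstract does not state which loss or selection criterion the scheme uses, so `kd_loss`, `select_student`, and the lowest-loss rule are assumptions for illustration only.

```python
import math

def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kd_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2
    (the common distillation formulation; assumed here, not taken from the paper)."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl

def select_student(candidates, teacher_logits, temperature=2.0):
    """Hypothetical selection rule: return the name of the candidate whose
    logits have the lowest distillation loss against the teacher."""
    return min(
        candidates,
        key=lambda name: kd_loss(candidates[name], teacher_logits, temperature),
    )
```

In a realistic setting the loss would be averaged over a validation set, and the selection would also weigh model size and latency against the resource budget of the target device.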

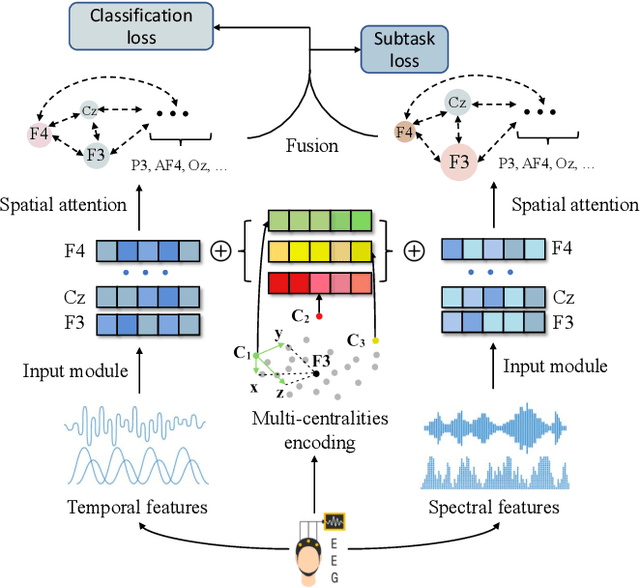

Brain Topography Adaptive Network for Satisfaction Modeling in Interactive Information Access System

Aug 17, 2022

Abstract: With the growth of information on the Web, most users heavily rely on information access systems (e.g., search engines, recommender systems, etc.) in their daily lives. During this procedure, modeling users' satisfaction status plays an essential part in improving their experiences with the systems. In this paper, we aim to explore the benefits of using Electroencephalography (EEG) signals for satisfaction modeling in interactive information access system design. Different from existing EEG classification tasks, the emergence of satisfaction involves multiple brain functions, such as arousal, prototypicality, and appraisals, which are related to different brain topographical areas. Thus, modeling user satisfaction poses great challenges to existing solutions. To address this challenge, we propose BTA, a Brain Topography Adaptive network with a multi-centrality encoding module and a spatial attention mechanism module to capture cognitive connectivities at different spatial distances. We explore the effectiveness of BTA for satisfaction modeling in two popular information access scenarios, i.e., search and recommendation. Extensive experiments on two real-world datasets verify the effectiveness of introducing a brain topography adaptive strategy in satisfaction modeling. Furthermore, we also conduct a search result re-ranking task and a video rating prediction task based on the satisfaction inferred from brain signals in the search and recommendation scenarios, respectively. Experimental results show that brain signals extracted with BTA help improve the performance of interactive information access systems significantly.

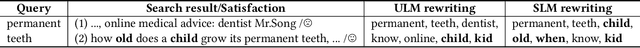

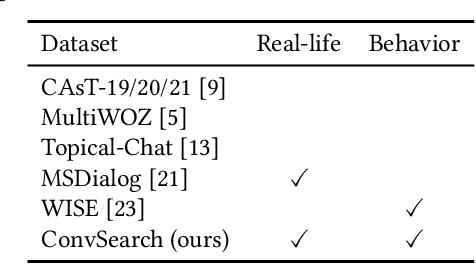

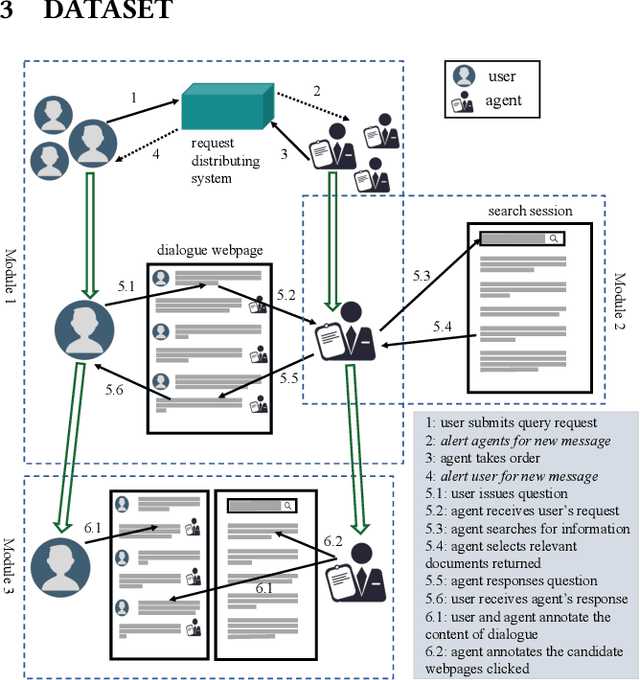

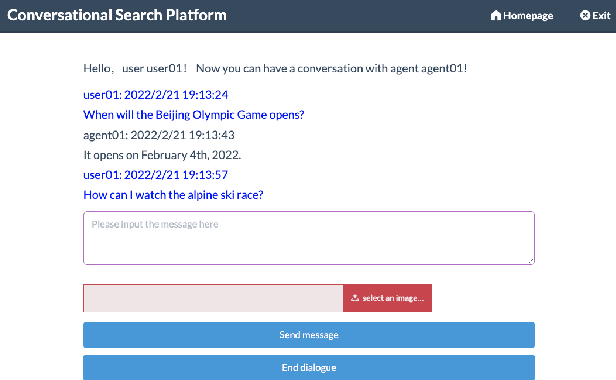

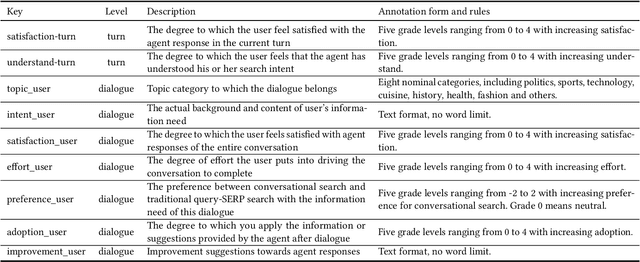

ConvSearch: A Open-Domain Conversational Search Behavior Dataset

Apr 06, 2022

Abstract: Conversational search has received much attention recently with the increasing popularity of intelligent user interfaces. However, compared with the effort devoted to designing effective conversational search algorithms, far fewer researchers have focused on the construction of benchmark datasets. For most existing datasets, the information needs are defined by researchers and search requests are not proposed by actual users. Meanwhile, these datasets usually focus on the conversations between users and agents (systems), while largely ignoring the search behaviors of agents before they return responses to users. To overcome these problems, we construct a Chinese Open-Domain Conversational Search Behavior Dataset (ConvSearch) based on the Wizard-of-Oz paradigm in a field study scenario. We develop a novel conversational search platform to collect dialogue contents, annotate dialogue quality and candidate search results, and record agent search behaviors. 25 search agents and 51 users are recruited for the field study, which lasts about 45 days. The ConvSearch dataset contains 1,131 dialogues together with annotated search results and corresponding search behaviors. We also provide the intent labels of each search behavior iteration to support research on intent understanding. The dataset is already open to the public for academic use.

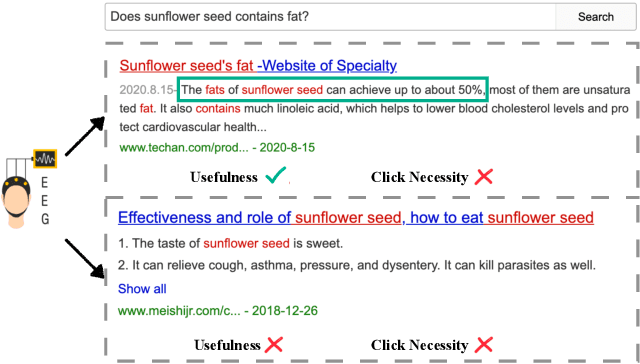

Why Don't You Click: Neural Correlates of Non-Click Behaviors in Web Search

Sep 22, 2021

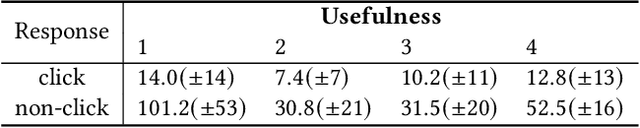

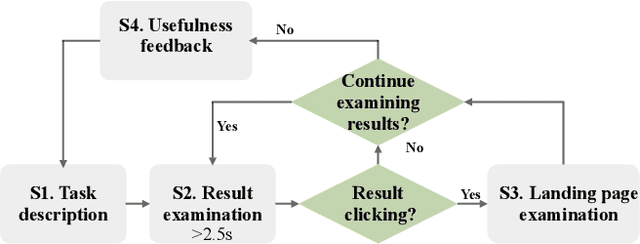

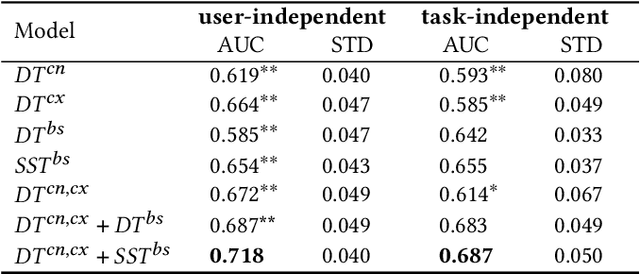

Abstract: Web search heavily relies on click-through behavior as an essential feedback signal for performance improvement and evaluation. Traditionally, a click is treated as a positive implicit feedback signal of relevance or usefulness, while a non-click (especially a non-click after examination) is regarded as a signal of irrelevance or uselessness. However, there are many cases where users do not click on any search results but still satisfy their information need with the contents of the results shown on the Search Engine Result Page (SERP). This raises the problem of measuring result usefulness and modeling user satisfaction in "Zero-click" search scenarios. Previous works have addressed this issue by (1) detecting user satisfaction for abandoned SERPs with context information and (2) considering result-level click necessity with external assessors' annotations. However, few works have investigated the reason behind non-click behavior and estimated the usefulness of non-click results. A challenge for this research question is how to collect valuable feedback for non-click results. With neuroimaging technologies, we design a lab-based user study and reveal differences in brain signals while examining non-click search results with different usefulness levels. The findings in significant brain regions and the electroencephalogram (EEG) spectrum also suggest that the process of usefulness judgment might involve cognitive functions similar to those of relevance perception and satisfaction decoding. Inspired by these findings, we conduct supervised learning tasks to estimate the usefulness of non-click results with brain signals and conventional information (i.e., content and context factors). Results show that it is feasible to utilize brain signals to improve usefulness estimation performance and enhance human-computer interactions in "Zero-click" search scenarios.
