Yinghao Cai

State Key Laboratory of Management and Control for Complex Systems, Institute of Automation, Chinese Academy of Sciences, China

FG-CLTP: Fine-Grained Contrastive Language Tactile Pretraining for Robotic Manipulation

Mar 11, 2026

Abstract:Recent advancements in integrating tactile sensing into vision-language-action (VLA) models have demonstrated transformative potential for robotic perception. However, existing tactile representations predominantly rely on qualitative descriptors (e.g., texture), neglecting quantitative contact states such as force magnitude, contact geometry, and principal axis orientation, which are indispensable for fine-grained manipulation. To bridge this gap, we propose FG-CLTP, a fine-grained contrastive language tactile pretraining framework. We first introduce a novel dataset comprising over 100k tactile 3D point cloud-language pairs that explicitly capture multidimensional contact states from the sensor's perspective. We then implement a discretized numerical tokenization mechanism to achieve quantitative-semantic alignment, effectively injecting explicit physical metrics into the multimodal feature space. The proposed FG-CLTP model yields a 95.9% classification accuracy and reduces the regression error (MAE) by 52.6% compared to state-of-the-art methods. Furthermore, the integration of 3D point cloud representations establishes a sensor-agnostic foundation with a minimal sim-to-real gap of 3.5%. Building upon this fine-grained representation, we develop a 3D tactile-language-action (3D-TLA) architecture driven by a flow matching policy to enable multimodal reasoning and control. Extensive experiments demonstrate that our framework significantly outperforms strong baselines in contact-rich manipulation tasks, providing a robust and generalizable foundation for tactile-language-action models.
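The FG-CLTP abstract above mentions a discretized numerical tokenization mechanism but gives no details. The following is only a minimal sketch of the general idea of turning continuous contact quantities into discrete tokens that can sit inside a text description. The bin edges, the 15-degree angle step, the token names, and the functions `force_to_token` and `describe_contact` are all hypothetical and are not taken from the paper.

```python
from bisect import bisect_right

# Hypothetical bin edges in newtons; the abstract does not state the real bins.
FORCE_BIN_EDGES = [0.5, 1.0, 2.0, 4.0, 8.0]


def force_to_token(force_newtons, edges=FORCE_BIN_EDGES):
    """Map a continuous force magnitude to a discrete token such as '<force_2>'."""
    if force_newtons < 0:
        raise ValueError("force magnitude must be non-negative")
    # bisect_right returns how many edges are <= the value, i.e. the bin index.
    bin_index = bisect_right(edges, force_newtons)
    return f"<force_{bin_index}>"


def describe_contact(force_newtons, angle_degrees, angle_step=15.0):
    """Build a text description with discretized force and axis-orientation tokens."""
    # An axis orientation is only defined modulo 180 degrees.
    angle_bin = int((angle_degrees % 180.0) // angle_step)
    return f"contact force {force_to_token(force_newtons)} axis <angle_{angle_bin}>"


if __name__ == "__main__":
    print(describe_contact(3.0, 200.0))
```

With such a scheme, nearby numeric values share a token, so a text encoder sees a small closed vocabulary of magnitudes instead of arbitrary digit strings.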

PEAfowl: Perception-Enhanced Multi-View Vision-Language-Action for Bimanual Manipulation

Jan 25, 2026

Abstract:Bimanual manipulation in cluttered scenes requires policies that remain stable under occlusions and under viewpoint and scene variations. Existing vision-language-action models often fail to generalize because (i) multi-view features are fused via view-agnostic token concatenation, yielding weak 3D-consistent spatial understanding, and (ii) language is injected as global conditioning, resulting in coarse instruction grounding. In this paper, we introduce PEAfowl, a perception-enhanced multi-view VLA policy for bimanual manipulation. For spatial reasoning, PEAfowl predicts per-token depth distributions, performs differentiable 3D lifting, and aggregates local cross-view neighbors to form geometrically grounded, cross-view consistent representations. For instruction grounding, we propose to replace global conditioning with a Perceiver-style text-aware readout over frozen CLIP visual features, enabling iterative evidence accumulation. To overcome noisy and incomplete commodity depth without adding inference overhead, we apply training-only depth distillation from a pretrained depth teacher to supervise the depth-distribution head, providing the perception front-end with geometry-aware priors. On RoboTwin 2.0 under the domain-randomized setting, PEAfowl improves on the strongest baseline by 23.0 pp in success rate, and real-robot experiments further demonstrate reliable sim-to-real transfer and consistent improvements from depth distillation. Project website: https://peafowlvla.github.io/.

MISCGrasp: Leveraging Multiple Integrated Scales and Contrastive Learning for Enhanced Volumetric Grasping

Jul 03, 2025

Abstract:Robotic grasping faces challenges in adapting to objects with varying shapes and sizes. In this paper, we introduce MISCGrasp, a volumetric grasping method that integrates multi-scale feature extraction with contrastive feature enhancement for self-adaptive grasping. We propose a query-based interaction between high-level and low-level features through the Insight Transformer, while the Empower Transformer selectively attends to the highest-level features, which synergistically strikes a balance between focusing on fine geometric details and overall geometric structures. Furthermore, MISCGrasp utilizes multi-scale contrastive learning to exploit similarities among positive grasp samples, ensuring consistency across multi-scale features. Extensive experiments in both simulated and real-world environments demonstrate that MISCGrasp outperforms baseline and variant methods in tabletop decluttering tasks. More details are available at https://miscgrasp.github.io/.

SENIOR: Efficient Query Selection and Preference-Guided Exploration in Preference-based Reinforcement Learning

Jun 17, 2025

Abstract:Preference-based Reinforcement Learning (PbRL) methods provide a solution to avoid reward engineering by learning reward models based on human preferences. However, poor feedback efficiency and sample efficiency remain problems that hinder the application of PbRL. In this paper, we present a novel efficient query selection and preference-guided exploration method, called SENIOR, which selects meaningful and easy-to-compare behavior segment pairs to improve human feedback efficiency and accelerates policy learning with the designed preference-guided intrinsic rewards. Our key idea is twofold: (1) We design a Motion-Distinction-based Selection scheme (MDS). It selects segment pairs with apparent motion and different directions through kernel density estimation of states, which is more task-related and easier for human preference labeling; (2) We propose a novel preference-guided exploration method (PGE). It encourages exploration towards states with high preference and low visits and continuously guides the agent toward valuable samples. The synergy between the two mechanisms could significantly accelerate the progress of reward and policy learning. Our experiments show that SENIOR outperforms five other existing methods in both human feedback efficiency and policy convergence speed on six complex robot manipulation tasks in simulation and four in the real world.

CLTP: Contrastive Language-Tactile Pre-training for 3D Contact Geometry Understanding

May 13, 2025

Abstract:Recent advancements in integrating tactile sensing with vision-language models (VLMs) have demonstrated remarkable potential for robotic multimodal perception. However, existing tactile descriptions remain limited to superficial attributes like texture, neglecting critical contact states essential for robotic manipulation. To bridge this gap, we propose CLTP, an intuitive and effective language tactile pretraining framework that aligns tactile 3D point clouds with natural language in various contact scenarios, thus enabling contact-state-aware tactile language understanding for contact-rich manipulation tasks. We first collect a novel dataset of 50k+ tactile 3D point cloud-language pairs, where descriptions explicitly capture multidimensional contact states (e.g., contact location, shape, and force) from the tactile sensor's perspective. CLTP leverages a pre-aligned and frozen vision-language feature space to bridge holistic textual and tactile modalities. Experiments validate its superiority in three downstream tasks: zero-shot 3D classification, contact state classification, and tactile 3D large language model (LLM) interaction. To the best of our knowledge, this is the first study to align tactile and language representations from the contact state perspective for manipulation tasks, providing great potential for tactile-language-action model learning. Code and datasets are open-sourced at https://sites.google.com/view/cltp/.

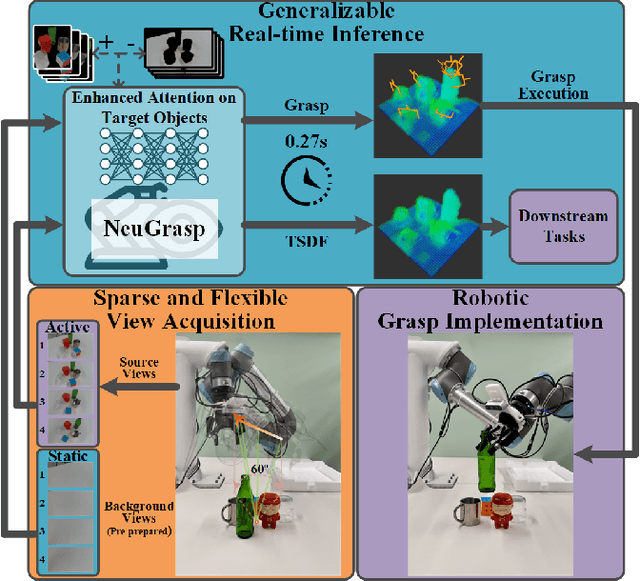

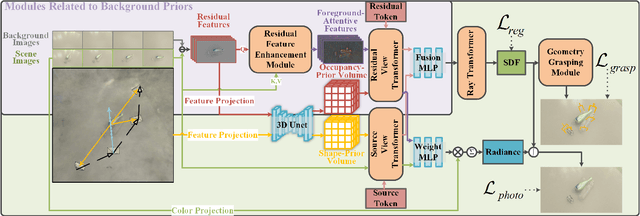

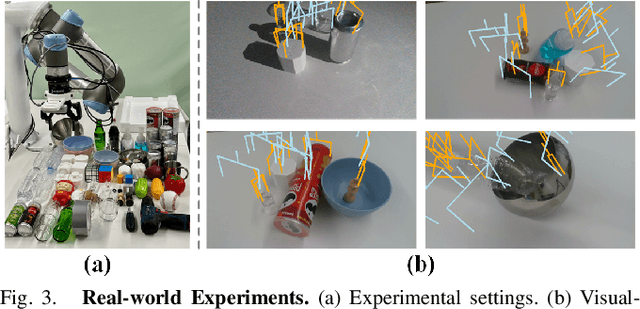

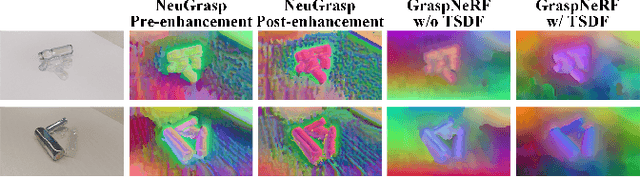

NeuGrasp: Generalizable Neural Surface Reconstruction with Background Priors for Material-Agnostic Object Grasp Detection

Mar 05, 2025

Abstract:Robotic grasping in scenes with transparent and specular objects presents great challenges for methods relying on accurate depth information. In this paper, we introduce NeuGrasp, a neural surface reconstruction method that leverages background priors for material-agnostic grasp detection. NeuGrasp integrates transformers and global prior volumes to aggregate multi-view features with spatial encoding, enabling robust surface reconstruction in narrow and sparse viewing conditions. By focusing on foreground objects through residual feature enhancement and refining spatial perception with an occupancy-prior volume, NeuGrasp excels in handling objects with transparent and specular surfaces. Extensive experiments in both simulated and real-world scenarios show that NeuGrasp outperforms state-of-the-art methods in grasping while maintaining comparable reconstruction quality. More details are available at https://neugrasp.github.io/.

Exploring Consistency in Graph Representations: from Graph Kernels to Graph Neural Networks

Oct 31, 2024

Abstract:Graph Neural Networks (GNNs) have emerged as a dominant approach in graph representation learning, yet they often struggle to capture consistent similarity relationships among graphs. While graph kernel methods such as the Weisfeiler-Lehman subtree (WL-subtree) and Weisfeiler-Lehman optimal assignment (WLOA) kernels are effective in capturing similarity relationships, they rely heavily on predefined kernels and lack sufficient non-linearity for more complex data patterns. Our work aims to bridge the gap between neural network methods and kernel approaches by enabling GNNs to consistently capture relational structures in their learned representations. Given the analogy between the message-passing process of GNNs and WL algorithms, we thoroughly compare and analyze the properties of WL-subtree and WLOA kernels. We find that the similarities captured by WLOA at different iterations are asymptotically consistent, ensuring that similar graphs remain similar in subsequent iterations, thereby leading to superior performance over the WL-subtree kernel. Inspired by these findings, we conjecture that the consistency in the similarities of graph representations across GNN layers is crucial in capturing relational structures and enhancing graph classification performance. Thus, we propose a loss to enforce the similarity of graph representations to be consistent across different layers. Our empirical analysis verifies our conjecture and shows that our proposed consistency loss can significantly enhance graph classification performance across several GNN backbones on various datasets.
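The abstract above proposes a loss that keeps graph-to-graph similarities consistent across GNN layers, without giving its exact form. As a rough illustration only, the sketch below penalizes the mean squared change in pairwise cosine similarity between consecutive layers. This stand-in formulation, and the names `cosine_similarity_matrix` and `consistency_penalty`, are assumptions and should not be read as the paper's actual loss.

```python
import math


def cosine_similarity_matrix(embeddings):
    """Pairwise cosine similarity between graph-level embeddings (lists of floats)."""
    norms = [math.sqrt(sum(x * x for x in vec)) for vec in embeddings]
    n = len(embeddings)
    sim = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            dot = sum(a * b for a, b in zip(embeddings[i], embeddings[j]))
            denom = norms[i] * norms[j]
            # Zero vectors get similarity 0 to avoid division by zero.
            sim[i][j] = dot / denom if denom > 0 else 0.0
    return sim


def consistency_penalty(layer_embeddings):
    """Mean squared difference of similarity matrices between consecutive layers.

    layer_embeddings[k][g] is the embedding of graph g after layer k.
    """
    sims = [cosine_similarity_matrix(layer) for layer in layer_embeddings]
    total, count = 0.0, 0
    for prev, curr in zip(sims, sims[1:]):
        for row_prev, row_curr in zip(prev, curr):
            for p, c in zip(row_prev, row_curr):
                total += (p - c) ** 2
                count += 1
    return total / count if count else 0.0


if __name__ == "__main__":
    layers = [
        [[1.0, 0.0], [0.0, 1.0]],  # layer 1: the two graphs look dissimilar
        [[1.0, 0.0], [1.0, 0.0]],  # layer 2: the two graphs look identical
    ]
    print(consistency_penalty(layers))
```

In a training setup, a term like this would be added to the classification loss with a weighting coefficient, and computed on differentiable tensors rather than Python lists.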

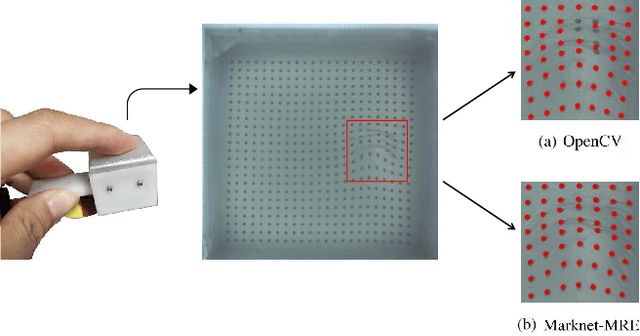

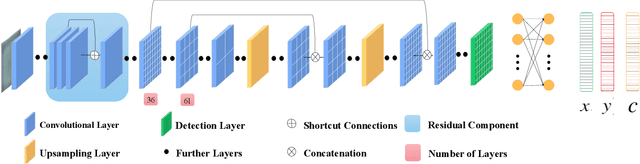

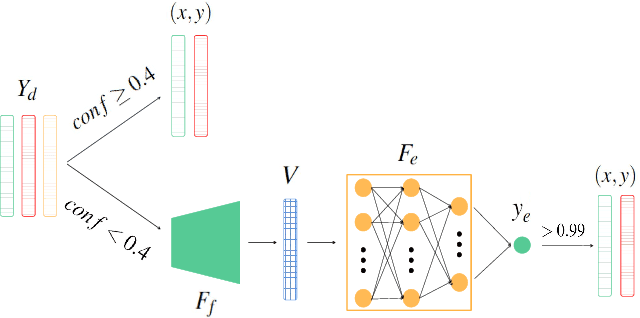

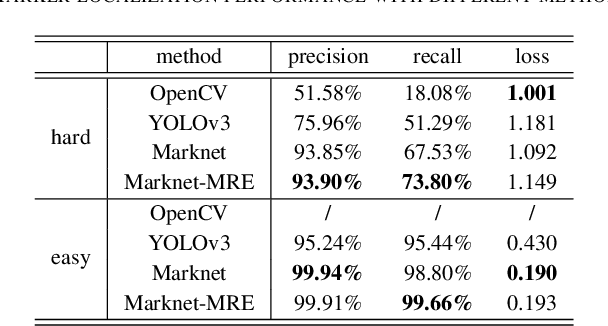

Real-Time Marker Localization Learning for GelStereo Tactile Sensing

Nov 24, 2022

Abstract:Visuotactile sensing technology is becoming more popular in tactile sensing, but the effectiveness of the existing marker detection localization methods remains to be further explored. Instead of contour-based blob detection, this paper presents a learning-based marker localization network for GelStereo visuotactile sensing called Marknet. Specifically, the Marknet presents a grid regression architecture to incorporate the distribution of the GelStereo markers. Furthermore, a marker rationality evaluator (MRE) is modelled to screen suitable prediction results. The experimental results show that the Marknet combined with MRE achieves 93.90% precision for irregular markers in contact areas, which outperforms the traditional contour-based blob detection method by a large margin of 42.32%. Meanwhile, the proposed learning-based marker localization method can achieve better real-time performance beyond the blob detection interface provided by the OpenCV library through GPU acceleration, which we believe will lead to considerable perceptual sensitivity gains in various robotic manipulation tasks.

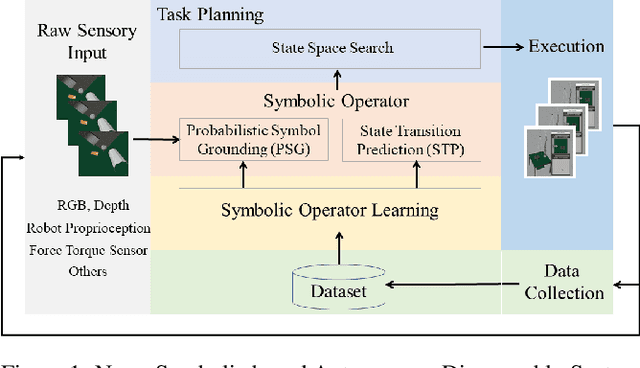

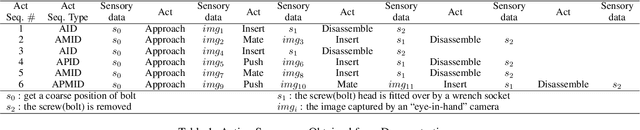

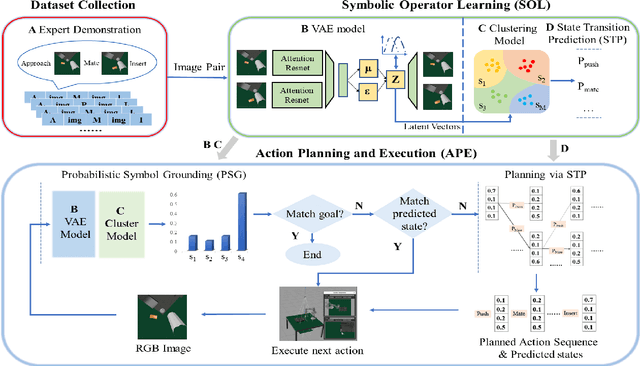

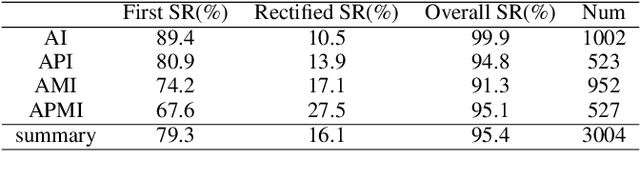

Learning Symbolic Operators: A Neurosymbolic Solution for Autonomous Disassembly of Electric Vehicle Battery

Jun 07, 2022

Abstract:The boom in electric vehicles demands efficient battery disassembly so that recycling is environmentally friendly. Currently, battery disassembly is still primarily done by humans, possibly assisted by robots, due to the unstructured environment and high uncertainties. It is highly desirable to design autonomous solutions to improve work efficiency and lower human risks in high-voltage and toxic environments. This paper proposes a novel neurosymbolic method, which augments the traditional Variational Autoencoder (VAE) model to learn symbolic operators based on raw sensory inputs and their relationships. The symbolic operators include a probabilistic state symbol grounding model and a state transition matrix for predicting states after each execution to enable autonomous task and motion planning. Finally, the method's feasibility is verified through test results.

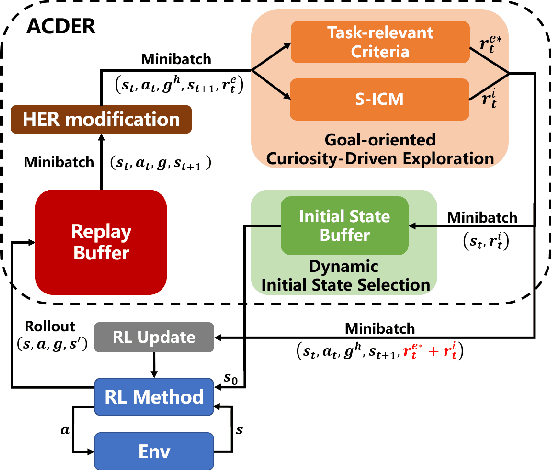

ACDER: Augmented Curiosity-Driven Experience Replay

Nov 16, 2020

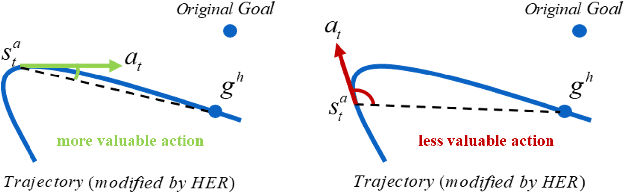

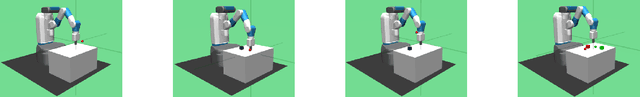

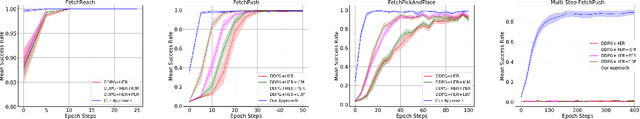

Abstract:Exploration in environments with sparse feedback remains a challenging research problem in reinforcement learning (RL). When the RL agent explores the environment randomly, it results in low exploration efficiency, especially in robotic manipulation tasks with high-dimensional continuous state and action spaces. In this paper, we propose a novel method, called Augmented Curiosity-Driven Experience Replay (ACDER), which leverages (i) a new goal-oriented curiosity-driven exploration to encourage the agent to pursue novel and task-relevant states more purposefully and (ii) dynamic initial state selection as an automatic exploratory curriculum to further improve the sample efficiency. Our approach complements Hindsight Experience Replay (HER) by introducing a new way to pursue valuable states. Experiments are conducted on four challenging robotic manipulation tasks with binary rewards, including Reach, Push, Pick&Place and Multi-step Push. The empirical results show that our proposed method significantly outperforms existing methods in the first three basic tasks and also achieves satisfactory performance in multi-step robotic task learning.
