Yahao Shi

Drive Any Mesh: 4D Latent Diffusion for Mesh Deformation from Video

Jun 09, 2025

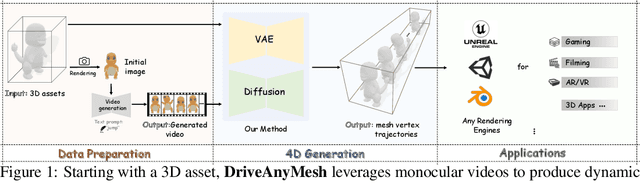

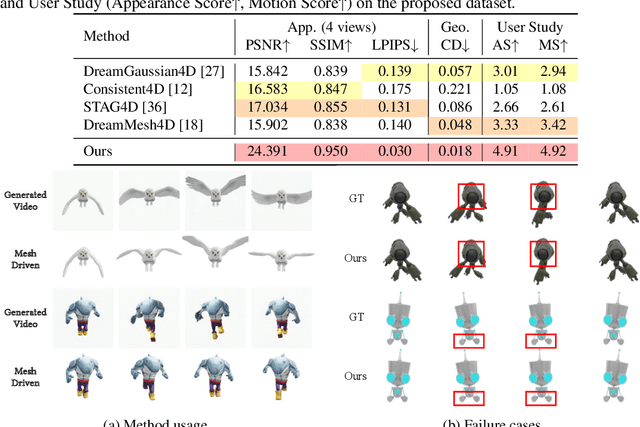

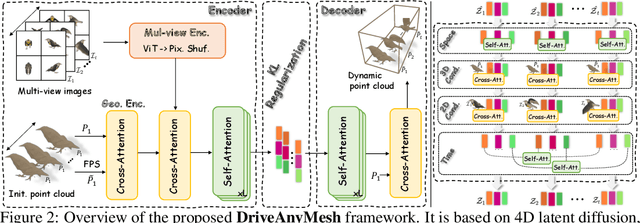

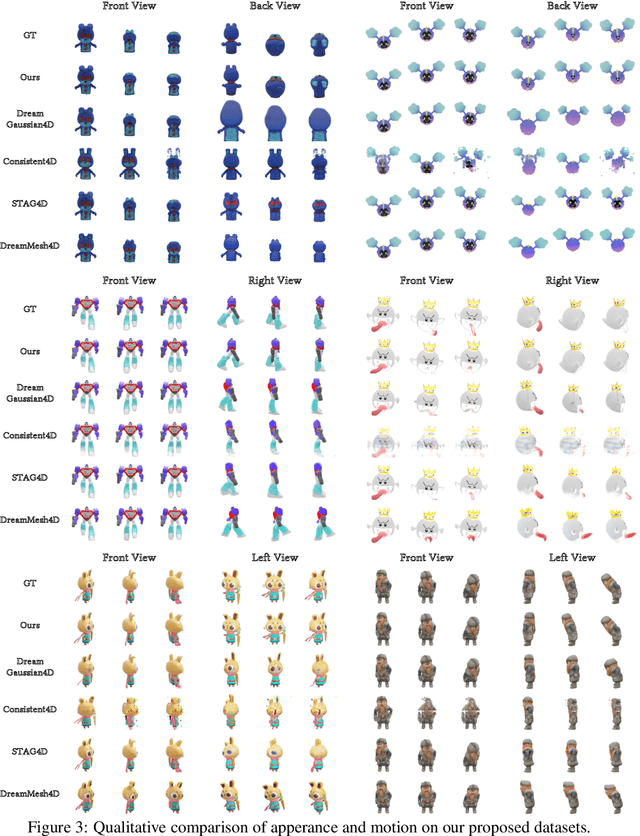

Abstract:We propose DriveAnyMesh, a method for driving mesh guided by monocular video. Current 4D generation techniques encounter challenges with modern rendering engines. Implicit methods have low rendering efficiency and are unfriendly to rasterization-based engines, while skeletal methods demand significant manual effort and lack cross-category generalization. Animating existing 3D assets, instead of creating 4D assets from scratch, demands a deep understanding of the input's 3D structure. To tackle these challenges, we present a 4D diffusion model that denoises sequences of latent sets, which are then decoded to produce mesh animations from point cloud trajectory sequences. These latent sets leverage a transformer-based variational autoencoder, simultaneously capturing 3D shape and motion information. By employing a spatiotemporal, transformer-based diffusion model, information is exchanged across multiple latent frames, enhancing the efficiency and generalization of the generated results. Our experimental results demonstrate that DriveAnyMesh can rapidly produce high-quality animations for complex motions and is compatible with modern rendering engines. This method holds potential for applications in both the gaming and filming industries.

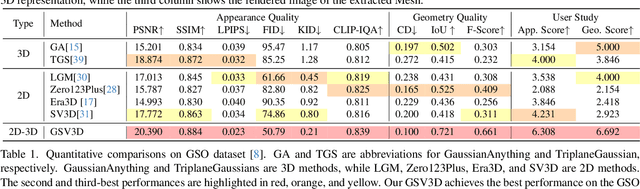

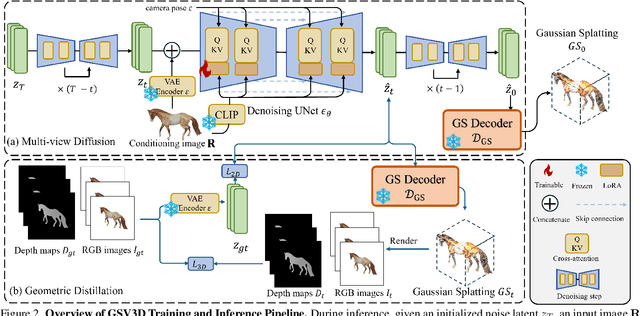

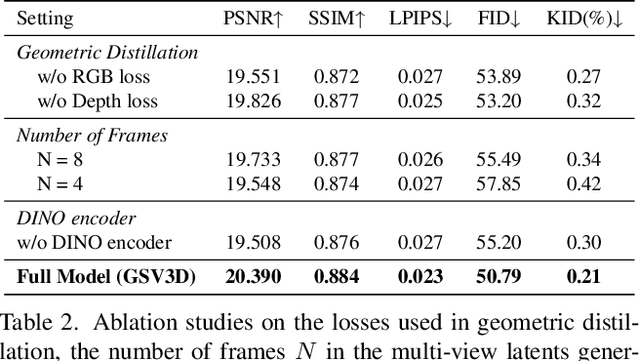

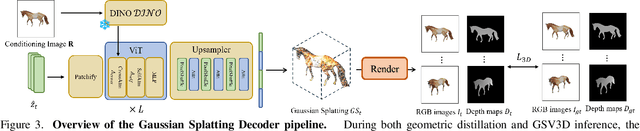

GSV3D: Gaussian Splatting-based Geometric Distillation with Stable Video Diffusion for Single-Image 3D Object Generation

Mar 08, 2025

Abstract:Image-based 3D generation has vast applications in robotics and gaming, where high-quality, diverse outputs and consistent 3D representations are crucial. However, existing methods have limitations: 3D diffusion models are limited by dataset scarcity and the absence of strong pre-trained priors, while 2D diffusion-based approaches struggle with geometric consistency. We propose a method that leverages 2D diffusion models' implicit 3D reasoning ability while ensuring 3D consistency via Gaussian-splatting-based geometric distillation. Specifically, the proposed Gaussian Splatting Decoder enforces 3D consistency by transforming SV3D latent outputs into an explicit 3D representation. Unlike SV3D, which only relies on implicit 2D representations for video generation, Gaussian Splatting explicitly encodes spatial and appearance attributes, enabling multi-view consistency through geometric constraints. These constraints correct view inconsistencies, ensuring robust geometric consistency. As a result, our approach simultaneously generates high-quality, multi-view-consistent images and accurate 3D models, providing a scalable solution for single-image-based 3D generation and bridging the gap between 2D Diffusion diversity and 3D structural coherence. Experimental results demonstrate state-of-the-art multi-view consistency and strong generalization across diverse datasets. The code will be made publicly available upon acceptance.

OpenGaussian: Towards Point-Level 3D Gaussian-based Open Vocabulary Understanding

Jun 04, 2024

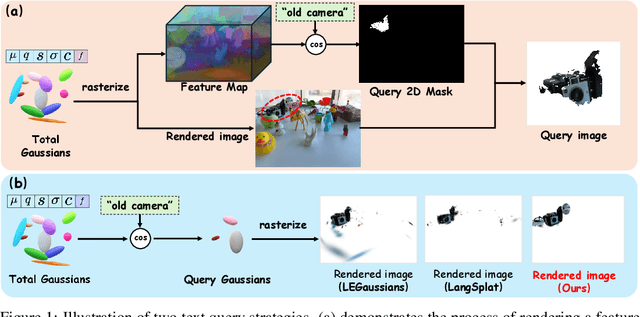

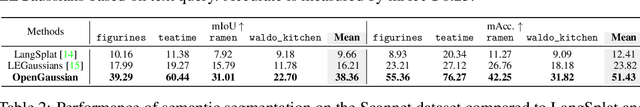

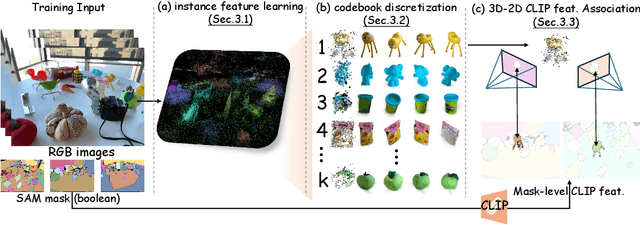

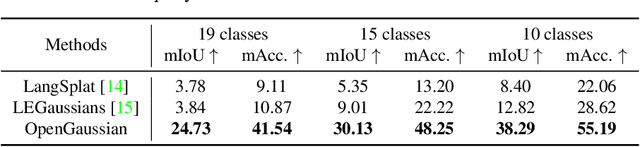

Abstract:This paper introduces OpenGaussian, a method based on 3D Gaussian Splatting (3DGS) capable of 3D point-level open vocabulary understanding. Our primary motivation stems from observing that existing 3DGS-based open vocabulary methods mainly focus on 2D pixel-level parsing. These methods struggle with 3D point-level tasks due to weak feature expressiveness and inaccurate 2D-3D feature associations. To ensure robust feature presentation and 3D point-level understanding, we first employ SAM masks without cross-frame associations to train instance features with 3D consistency. These features exhibit both intra-object consistency and inter-object distinction. Then, we propose a two-stage codebook to discretize these features from coarse to fine levels. At the coarse level, we consider the positional information of 3D points to achieve location-based clustering, which is then refined at the fine level. Finally, we introduce an instance-level 3D-2D feature association method that links 3D points to 2D masks, which are further associated with 2D CLIP features. Extensive experiments, including open vocabulary-based 3D object selection, 3D point cloud understanding, click-based 3D object selection, and ablation studies, demonstrate the effectiveness of our proposed method. Project page: https://3d-aigc.github.io/OpenGaussian

GIR: 3D Gaussian Inverse Rendering for Relightable Scene Factorization

Dec 08, 2023

Abstract:This paper presents GIR, a 3D Gaussian Inverse Rendering method for relightable scene factorization. Compared to existing methods leveraging discrete meshes or neural implicit fields for inverse rendering, our method utilizes 3D Gaussians to estimate the material properties, illumination, and geometry of an object from multi-view images. Our study is motivated by the evidence showing that 3D Gaussian is a more promising backbone than neural fields in terms of performance, versatility, and efficiency. In this paper, we aim to answer the question: ``How can 3D Gaussian be applied to improve the performance of inverse rendering?'' To address the complexity of estimating normals based on discrete and often in-homogeneous distributed 3D Gaussian representations, we proposed an efficient self-regularization method that facilitates the modeling of surface normals without the need for additional supervision. To reconstruct indirect illumination, we propose an approach that simulates ray tracing. Extensive experiments demonstrate our proposed GIR's superior performance over existing methods across multiple tasks on a variety of widely used datasets in inverse rendering. This substantiates its efficacy and broad applicability, highlighting its potential as an influential tool in relighting and reconstruction. Project page: https://3dgir.github.io

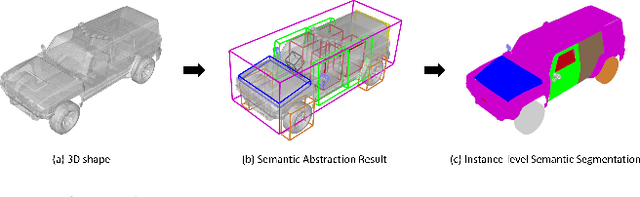

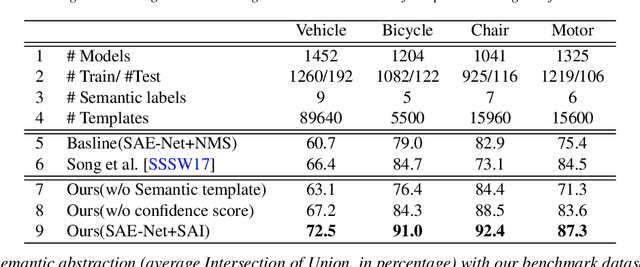

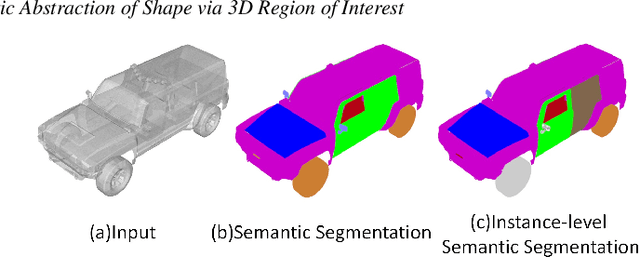

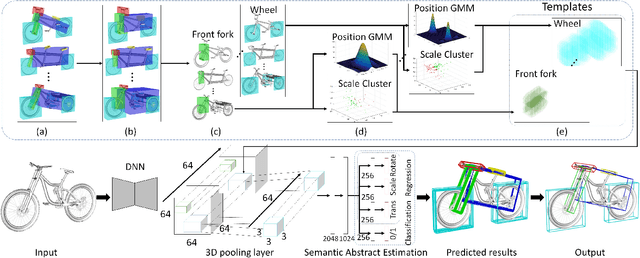

Learning Semantic Abstraction of Shape via 3D Region of Interest

Jan 13, 2022

Abstract:In this paper, we focus on the two tasks of 3D shape abstraction and semantic analysis. This is in contrast to current methods, which focus solely on either 3D shape abstraction or semantic analysis. In addition, previous methods have had difficulty producing instance-level semantic results, which has limited their application. We present a novel method for the joint estimation of a 3D shape abstraction and semantic analysis. Our approach first generates a number of 3D semantic candidate regions for a 3D shape; we then employ these candidates to directly predict the semantic categories and refine the parameters of the candidate regions simultaneously using a deep convolutional neural network. Finally, we design an algorithm to fuse the predicted results and obtain the final semantic abstraction, which is shown to be an improvement over a standard non maximum suppression. Experimental results demonstrate that our approach can produce state-of-the-art results. Moreover, we also find that our results can be easily applied to instance-level semantic part segmentation and shape matching.

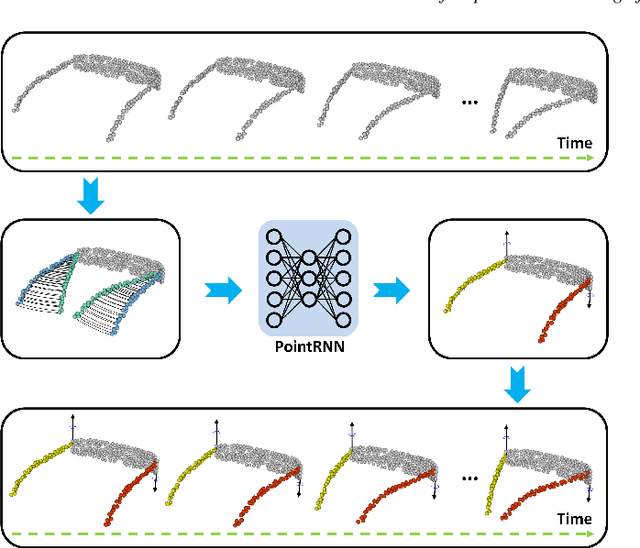

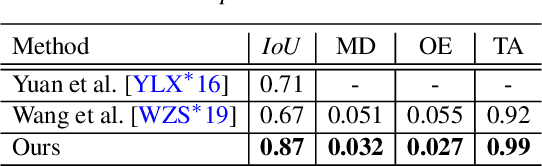

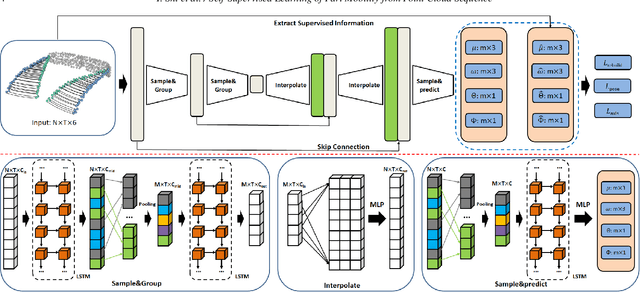

Self-Supervised Learning of Part Mobility from Point Cloud Sequence

Oct 20, 2020

Abstract:Part mobility analysis is a significant aspect required to achieve a functional understanding of 3D objects. It would be natural to obtain part mobility from the continuous part motion of 3D objects. In this study, we introduce a self-supervised method for segmenting motion parts and predicting their motion attributes from a point cloud sequence representing a dynamic object. To sufficiently utilize spatiotemporal information from the point cloud sequence, we generate trajectories by using correlations among successive frames of the sequence instead of directly processing the point clouds. We propose a novel neural network architecture called PointRNN to learn feature representations of trajectories along with their part rigid motions. We evaluate our method on various tasks including motion part segmentation, motion axis prediction and motion range estimation. The results demonstrate that our method outperforms previous techniques on both synthetic and real datasets. Moreover, our method has the ability to generalize to new and unseen objects. It is important to emphasize that it is not required to know any prior shape structure, prior shape category information, or shape orientation. To the best of our knowledge, this is the first study on deep learning to extract part mobility from point cloud sequence of a dynamic object.

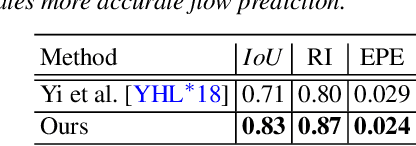

Shape2Motion: Joint Analysis of Motion Parts and Attributes from 3D Shapes

Mar 12, 2019

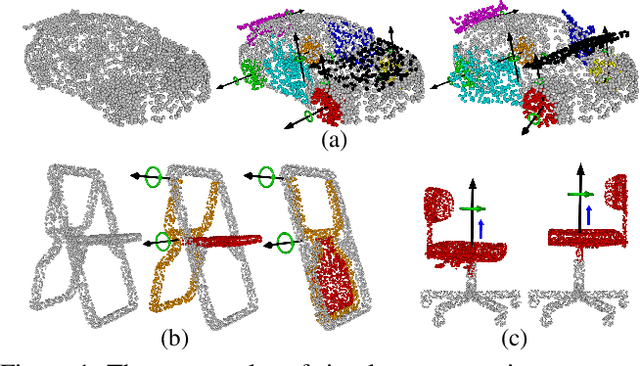

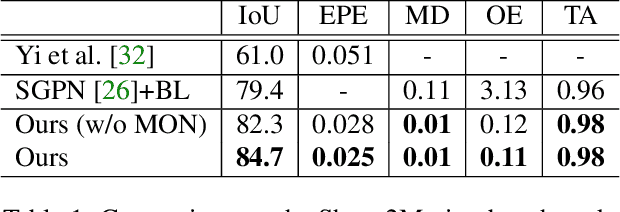

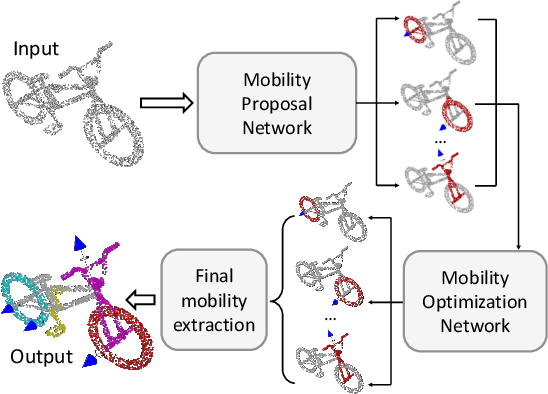

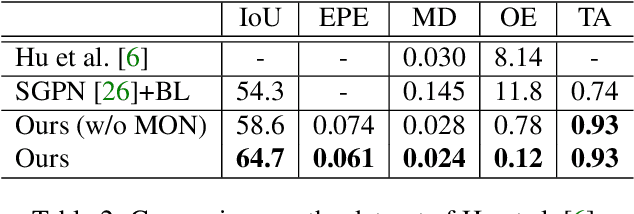

Abstract:For the task of mobility analysis of 3D shapes, we propose joint analysis for simultaneous motion part segmentation and motion attribute estimation, taking a single 3D model as input. The problem is significantly different from those tackled in the existing works which assume the availability of either a pre-existing shape segmentation or multiple 3D models in different motion states. To that end, we develop Shape2Motion which takes a single 3D point cloud as input, and jointly computes a mobility-oriented segmentation and the associated motion attributes. Shape2Motion is comprised of two deep neural networks designed for mobility proposal generation and mobility optimization, respectively. The key contribution of these networks is the novel motion-driven features and losses used in both motion part segmentation and motion attribute estimation. This is based on the observation that the movement of a functional part preserves the shape structure. We evaluate Shape2Motion with a newly proposed benchmark for mobility analysis of 3D shapes. Results demonstrate that our method achieves the state-of-the-art performance both in terms of motion part segmentation and motion attribute estimation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge