Xinhai Liu

refer to the report for detailed contributions

Hunyuan3D Studio: End-to-End AI Pipeline for Game-Ready 3D Asset Generation

Sep 16, 2025

Abstract: The creation of high-quality 3D assets, a cornerstone of modern game development, has long been characterized by labor-intensive and specialized workflows. This paper presents Hunyuan3D Studio, an end-to-end AI-powered content creation platform designed to revolutionize the game production pipeline by automating and streamlining the generation of game-ready 3D assets. At its core, Hunyuan3D Studio integrates a suite of advanced neural modules (such as Part-level 3D Generation, Polygon Generation, and Semantic UV) into a cohesive and user-friendly system. This unified framework allows for the rapid transformation of a single concept image or textual description into a fully realized, production-quality 3D model complete with optimized geometry and high-fidelity PBR textures. We demonstrate that assets generated by Hunyuan3D Studio are not only visually compelling but also adhere to the stringent technical requirements of contemporary game engines, significantly reducing iteration time and lowering the barrier to entry for 3D content creation. By providing a seamless bridge from creative intent to technical asset, Hunyuan3D Studio represents a significant leap forward for AI-assisted workflows in game development and interactive media.

Hunyuan3D 2.0: Scaling Diffusion Models for High Resolution Textured 3D Assets Generation

Jan 21, 2025

Abstract: We present Hunyuan3D 2.0, an advanced large-scale 3D synthesis system for generating high-resolution textured 3D assets. This system includes two foundation components: a large-scale shape generation model, Hunyuan3D-DiT, and a large-scale texture synthesis model, Hunyuan3D-Paint. The shape generative model, built on a scalable flow-based diffusion transformer, aims to create geometry that properly aligns with a given condition image, laying a solid foundation for downstream applications. The texture synthesis model, benefiting from strong geometric and diffusion priors, produces high-resolution and vibrant texture maps for either generated or hand-crafted meshes. Furthermore, we build Hunyuan3D-Studio, a versatile, user-friendly production platform that simplifies the re-creation process of 3D assets. It allows both professional and amateur users to manipulate or even animate their meshes efficiently. We systematically evaluate our models, showing that Hunyuan3D 2.0 outperforms previous state-of-the-art models, both open-source and closed-source, in geometry details, condition alignment, texture quality, and more. Hunyuan3D 2.0 is publicly released in order to fill the gaps in the open-source 3D community for large-scale foundation generative models. The code and pre-trained weights of our models are available at: https://github.com/Tencent/Hunyuan3D-2

Tencent Hunyuan3D-1.0: A Unified Framework for Text-to-3D and Image-to-3D Generation

Nov 05, 2024

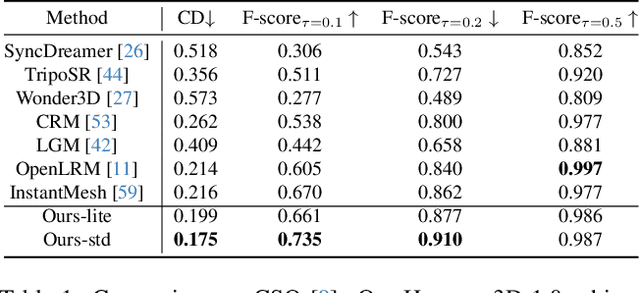

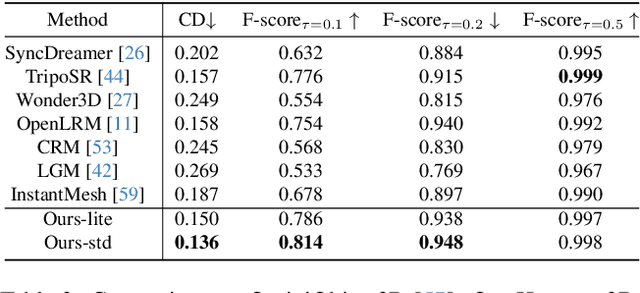

Abstract: While 3D generative models have greatly improved artists' workflows, the existing diffusion models for 3D generation suffer from slow generation and poor generalization. To address this issue, we propose a two-stage approach named Hunyuan3D-1.0, including a lite version and a standard version, both of which support text- and image-conditioned generation. In the first stage, we employ a multi-view diffusion model that efficiently generates multi-view RGB images in approximately 4 seconds. These multi-view images capture rich details of the 3D asset from different viewpoints, relaxing the task from single-view to multi-view reconstruction. In the second stage, we introduce a feed-forward reconstruction model that rapidly and faithfully reconstructs the 3D asset given the generated multi-view images in approximately 7 seconds. The reconstruction network learns to handle the noise and inconsistency introduced by the multi-view diffusion and leverages the available information from the condition image to efficiently recover the 3D structure. Our framework incorporates a text-to-image model, Hunyuan-DiT, making it a unified framework that supports both text- and image-conditioned 3D generation. Our standard version has 3x more parameters than our lite version and other existing models. Our Hunyuan3D-1.0 achieves an impressive balance between speed and quality, significantly reducing generation time while maintaining the quality and diversity of the produced assets.

MultiPull: Detailing Signed Distance Functions by Pulling Multi-Level Queries at Multi-Step

Nov 02, 2024

Abstract: Reconstructing a continuous surface from a raw 3D point cloud is a challenging task. Recent methods usually train neural networks to overfit on single point clouds to infer signed distance functions (SDFs). However, neural networks tend to smooth local details due to the lack of ground truth signed distances or normals, which limits the performance of overfitting-based methods in reconstruction tasks. To resolve this issue, we propose a novel method, named MultiPull, to learn multi-scale implicit fields from raw point clouds by optimizing accurate SDFs from coarse to fine. We achieve this by mapping 3D query points into a set of frequency features, which makes it possible to leverage multi-level features during optimization. Meanwhile, we introduce optimization constraints from the perspective of spatial distance and normal consistency, which play a key role in point cloud reconstruction based on multi-scale optimization strategies. Our experiments on widely used object and scene benchmarks demonstrate that our method outperforms the state-of-the-art methods in surface reconstruction.
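
The abstract does not spell out how query points are mapped to frequency features. As a minimal sketch, assuming the common sinusoidal (Fourier) encoding with power-of-two frequency bands rather than the authors' exact mapping, the idea can be illustrated as follows. The function name `frequency_features` and the parameter `num_levels` are hypothetical.

```python
import math


def frequency_features(point, num_levels=4):
    """Map a 3D point to sin/cos features at several frequency bands.

    Assumption: bands are pi * 2**level, a common choice in implicit
    surface models; the paper's actual mapping may differ.
    """
    features = []
    for level in range(num_levels):
        freq = (2.0 ** level) * math.pi
        for coord in point:
            features.append(math.sin(freq * coord))
            features.append(math.cos(freq * coord))
    return features


# One query point encoded at 3 levels: 3 levels * 3 coords * 2 = 18 values.
feats = frequency_features((0.5, 0.0, -0.25), num_levels=3)
```

Low levels vary slowly across space and suit coarse shape, while higher levels vary quickly and can carry fine detail, which is one way to read the coarse-to-fine use of multi-level features described above.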

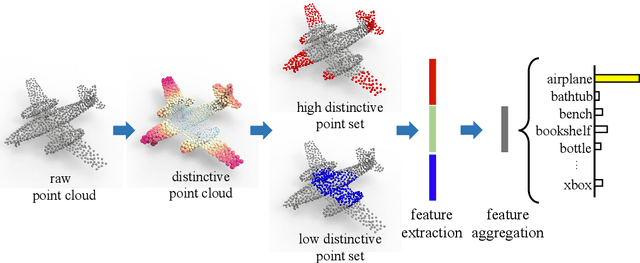

D-Net: Learning for Distinctive Point Clouds by Self-Attentive Point Searching and Learnable Feature Fusion

May 10, 2023

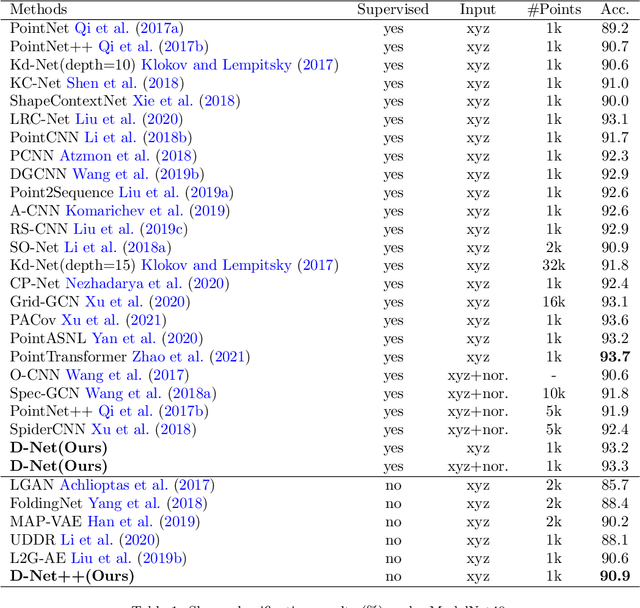

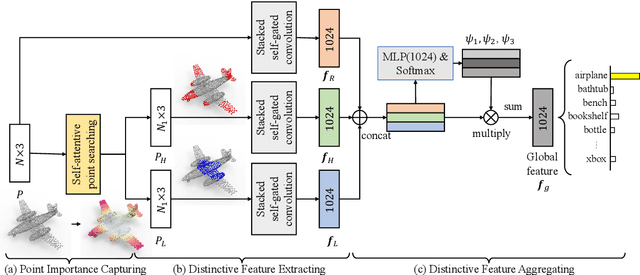

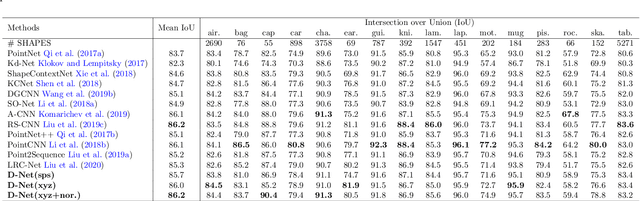

Abstract: Learning and selecting important points on a point cloud is crucial for point cloud understanding in various applications. Most early methods select the important points on 3D shapes by analyzing the intrinsic geometric properties of each individual shape, which fails to capture the importance of points that distinguish a shape from objects of other classes, i.e., the distinction of points. To address this problem, we propose D-Net (Distinctive Network) to learn for distinctive point clouds based on a self-attentive point searching and a learnable feature fusion. Specifically, in the self-attentive point searching, we first learn the distinction score for each point to reveal the distinction distribution of the point cloud. After ranking the learned distinction scores, we group a point cloud into a highly distinctive point set and a less distinctive one to enrich the fine-grained point cloud structure. To generate a compact feature representation for each distinctive point set, a stacked self-gated convolution is proposed to extract the distinctive features. Finally, we further introduce a learnable feature fusion mechanism to aggregate multiple distinctive features into a global point cloud representation in a channel-wise aggregation manner. The results also show that the learned distinction distribution of a point cloud is highly consistent with objects of the same class and different from objects of other classes. Extensive experiments on public datasets, including ModelNet and the ShapeNet part dataset, demonstrate the ability to learn for distinctive point clouds, which helps to achieve state-of-the-art performance in some shape understanding applications.
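
The ranking-and-grouping step described above can be sketched in a few lines. This is an illustration only: in D-Net the distinction scores are learned, whereas here they are given as plain numbers, and the function name `split_by_distinction` and the `high_fraction` split ratio are assumptions not taken from the abstract.

```python
def split_by_distinction(points, scores, high_fraction=0.5):
    """Rank points by score and split them into high- and low-distinction sets."""
    if len(points) != len(scores):
        raise ValueError("points and scores must have the same length")
    # Indices sorted from the most to the least distinctive point.
    ranked = sorted(range(len(points)), key=lambda i: scores[i], reverse=True)
    num_high = int(len(points) * high_fraction)
    high_set = [points[i] for i in ranked[:num_high]]
    low_set = [points[i] for i in ranked[num_high:]]
    return high_set, low_set


points = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
scores = [0.1, 0.9, 0.4, 0.7]  # stand-ins for learned distinction scores
high, low = split_by_distinction(points, scores)
```

Each of the two sets would then be passed to its own feature extractor before fusion.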

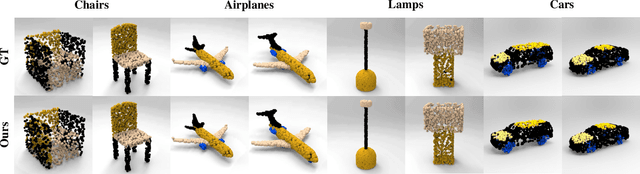

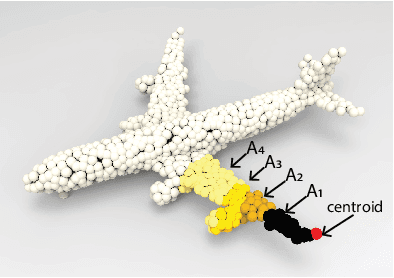

SPU-Net: Self-Supervised Point Cloud Upsampling by Coarse-to-Fine Reconstruction with Self-Projection Optimization

Dec 08, 2020

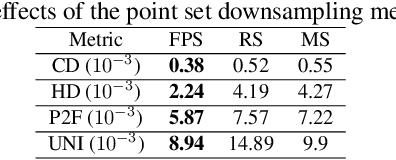

Abstract: The task of point cloud upsampling aims to acquire dense and uniform point sets from sparse and irregular point sets. Although significant progress has been made with deep learning models, they require ground-truth dense point sets as supervision, so they can only be trained on synthetic paired training data and are not suitable for training on real-scanned sparse data. Moreover, it is expensive and tedious to obtain large-scale paired sparse-dense point sets for training from real-scanned sparse data. To address this problem, we propose a self-supervised point cloud upsampling network, named SPU-Net, to capture the inherent upsampling patterns of points lying on the underlying object surface. Specifically, we propose a coarse-to-fine reconstruction framework, which contains two main components: point feature extraction and point feature expansion. In the point feature extraction, we integrate a self-attention module with a graph convolution network (GCN) to simultaneously capture context information inside and among local regions. In the point feature expansion, we introduce a hierarchically learnable folding strategy to generate the upsampled point sets with learnable 2D grids. Moreover, to further optimize the noisy points in the generated point sets, we propose a novel self-projection optimization associated with uniform and reconstruction terms, as a joint loss, to facilitate the self-supervised point cloud upsampling. We conduct various experiments on both synthetic and real-scanned datasets, and the results demonstrate that we achieve comparable performance to the state-of-the-art supervised methods.
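
To make the folding idea in the feature expansion step concrete, here is a rough sketch under stated assumptions: each point feature is duplicated `rate` times and each copy is tagged with a distinct 2D grid code, so that a later network can map the copies to different output points. The grid here is fixed and regular, whereas SPU-Net learns its grids and applies the strategy hierarchically; `expand_with_grid` and `rate` are hypothetical names.

```python
def expand_with_grid(features, rate=4):
    """Duplicate each feature `rate` times and append a 2D grid code to each copy."""
    side = int(round(rate ** 0.5))
    if side * side != rate:
        raise ValueError("rate must be a perfect square for a square grid")
    # Regular grid coordinates in [-1, 1]; a single cell collapses to 0.0.
    if side > 1:
        axis = [-1.0 + 2.0 * k / (side - 1) for k in range(side)]
    else:
        axis = [0.0]
    grid = [(u, v) for u in axis for v in axis]
    expanded = []
    for feature in features:
        for u, v in grid:
            expanded.append(list(feature) + [u, v])
    return expanded


sparse_features = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]
dense_features = expand_with_grid(sparse_features, rate=4)
```

With 2 input features and `rate=4`, the result holds 8 vectors of length 5; the grid code is what lets otherwise identical copies end up at different positions.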

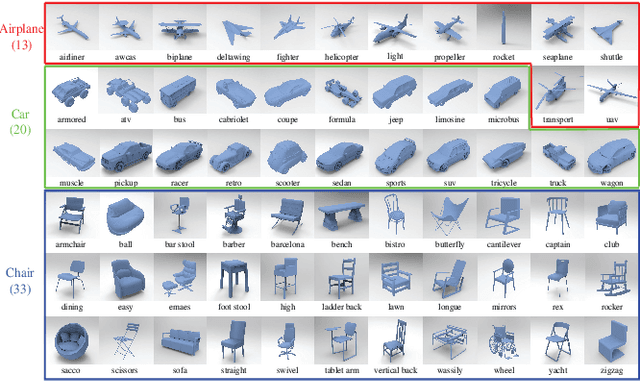

Fine-Grained 3D Shape Classification with Hierarchical Part-View Attentions

May 26, 2020

Abstract: Fine-grained 3D shape classification is an important and challenging research problem in shape understanding and analysis. However, due to the lack of a fine-grained 3D shape benchmark, fine-grained 3D shape classification has rarely been explored. To address this issue, we first introduce a new dataset of fine-grained 3D shapes, which consists of three categories: airplane, car, and chair. Each category consists of several subcategories at a fine-grained level. According to our experiments on this fine-grained dataset, we find that state-of-the-art methods are significantly limited by the small variance among subcategories in the same category. To resolve this problem, we further propose a novel fine-grained 3D shape classification method named FG3D-Net to capture the fine-grained local details of 3D shapes from multiple rendered views. Specifically, we first train a Region Proposal Network (RPN) to detect the generally semantic parts inside multiple views under the benchmark of generally semantic part detection. Then, we design a hierarchical part-view attention aggregation module to learn a global shape representation by aggregating generally semantic part features, which preserves the local details of 3D shapes. The part-view attention module leverages a part-level attention and a view-level attention to increase the discriminative ability of features, where the part-level attention highlights the important parts in each view while the view-level attention highlights the discriminative views among all the views from the same object. In addition, we integrate a Recurrent Neural Network (RNN) to capture the spatial relationships among sequential views from different viewpoints. Our results on the fine-grained 3D shape dataset show that our method outperforms other state-of-the-art methods.
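
The two-level aggregation described above (parts within a view, then views within a shape) can be sketched as two rounds of softmax-weighted averaging. This is a simplified illustration, not FG3D-Net itself: the attention scores are assumed to be given rather than learned, the RNN over views is omitted, and all function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(features, scores):
    """Weighted sum of feature vectors using softmax attention weights."""
    weights = softmax(scores)
    dim = len(features[0])
    return [sum(w * f[d] for w, f in zip(weights, features)) for d in range(dim)]


def part_view_aggregate(part_features, part_scores, view_scores):
    """Aggregate parts into one feature per view, then views into one shape feature."""
    view_features = [
        attend(parts, scores) for parts, scores in zip(part_features, part_scores)
    ]
    return attend(view_features, view_scores)


# Two views, each with two part features of dimension 2.
part_features = [[[1.0, 0.0], [3.0, 0.0]], [[0.0, 2.0], [0.0, 4.0]]]
part_scores = [[0.0, 0.0], [0.0, 0.0]]  # equal scores give a plain average
view_scores = [0.0, 0.0]
shape_feature = part_view_aggregate(part_features, part_scores, view_scores)
```

Raising one part's score shifts its view feature toward that part, and raising one view's score shifts the shape feature toward that view, which is the "highlighting" role the abstract attributes to each attention level.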

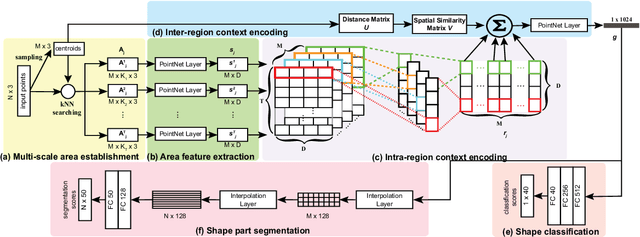

LRC-Net: Learning Discriminative Features on Point Clouds by Encoding Local Region Contexts

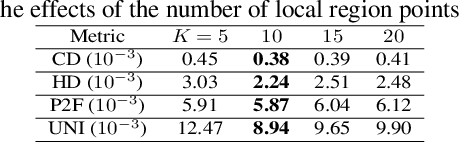

Mar 21, 2020

Abstract: Learning discriminative features directly on point clouds is still challenging in the understanding of 3D shapes. Recent methods usually partition point clouds into local region sets, then extract the local region features with a fixed-size CNN or MLP, and finally aggregate all individual local features into a global feature using simple max pooling. However, due to the irregularity and sparsity of sampled point clouds, it is hard to encode the fine-grained geometry of local regions and their spatial relationships when only using fixed-size filters and individual local feature integration, which limits the ability to learn discriminative features. To address this issue, we present a novel Local-Region-Context Network (LRC-Net) to learn discriminative features on point clouds by encoding the fine-grained contexts inside and among local regions simultaneously. LRC-Net consists of two main modules. The first module, named intra-region context encoding, is designed for capturing the geometric correlation inside each local region with a novel variable-size convolution filter. The second module, named inter-region context encoding, is proposed for integrating the spatial relationships among local regions based on spatial similarity measures. Experimental results show that LRC-Net is competitive with state-of-the-art methods in shape classification and shape segmentation applications.

Point2SpatialCapsule: Aggregating Features and Spatial Relationships of Local Regions on Point Clouds using Spatial-aware Capsules

Aug 29, 2019

Abstract: Learning discriminative shape representations directly on point clouds is still challenging in 3D shape analysis and understanding. Recent studies usually involve three steps: first splitting a point cloud into some local regions, then extracting the corresponding feature of each local region, and finally aggregating all individual local region features into a global feature as the shape representation using simple max pooling. However, such pooling-based feature aggregation methods do not adequately take the spatial relationships between local regions into account, which greatly limits the ability to learn discriminative shape representations. To address this issue, we propose a novel deep learning network, named Point2SpatialCapsule, for aggregating features and spatial relationships of local regions on point clouds, which aims to learn more discriminative shape representations. Compared with traditional max-pooling-based feature aggregation networks, Point2SpatialCapsule can explicitly learn not only geometric features of local regions but also spatial relationships among them. It consists of two modules. To resolve the disorder problem of local regions, the first module, named geometric feature aggregation, is designed to aggregate the local region features into the learnable cluster centers, which explicitly encodes the spatial locations from the original 3D space. The second module, named spatial relationship aggregation, is proposed for further aggregating clustered features and the spatial relationships among them in the feature space using the spatial-aware capsules developed in this paper. Compared to previous capsule-network-based methods, the feature routing on the spatial-aware capsules can learn more discriminative spatial relationships among local regions for point clouds, which establishes a direct mapping between log priors and the spatial locations through feature clusters.
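
The limitation of max pooling mentioned above is easy to see in a small example: channel-wise max pooling returns the same global feature however the local regions are ordered or arranged, so it carries no information about where each region sits. The sketch below uses made-up feature values and a hypothetical helper `max_pool`.

```python
def max_pool(region_features):
    """Channel-wise max over a list of equally sized local region features."""
    return [max(channel) for channel in zip(*region_features)]


# Three local regions with 3-channel features (illustrative values only).
regions = [
    [0.2, 0.9, 0.1],
    [0.7, 0.3, 0.4],
    [0.5, 0.6, 0.8],
]
pooled = max_pool(regions)

# Reordering the regions leaves the pooled feature unchanged.
shuffled = [regions[2], regions[0], regions[1]]
pooled_shuffled = max_pool(shuffled)
```

Both `pooled` and `pooled_shuffled` keep only the largest value per channel, which is why the abstract argues for an aggregation that also encodes spatial relationships.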

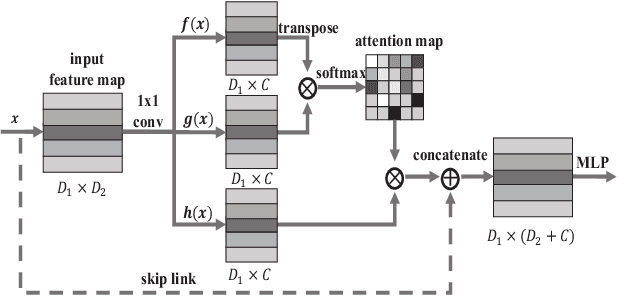

L2G Auto-encoder: Understanding Point Clouds by Local-to-Global Reconstruction with Hierarchical Self-Attention

Aug 02, 2019

Abstract: The auto-encoder is an important architecture for understanding point clouds through an encoding and decoding procedure of self-reconstruction. Current auto-encoders mainly focus on learning global structure by global shape reconstruction, while ignoring the learning of local structures. To resolve this issue, we propose the Local-to-Global auto-encoder (L2G-AE) to simultaneously learn the local and global structure of point clouds by local-to-global reconstruction. Specifically, L2G-AE employs an encoder to encode the geometry information of multiple scales in a local region at the same time. In addition, we introduce a novel hierarchical self-attention mechanism to highlight the important points, scales, and regions at different levels in the information aggregation of the encoder. Simultaneously, L2G-AE employs a recurrent neural network (RNN) as the decoder to reconstruct a sequence of scales in a local region, based on which the global point cloud is incrementally reconstructed. Our superior results in shape classification, retrieval, and upsampling show that L2G-AE can understand point clouds better than state-of-the-art methods.
