Wenhan Cao

One-Step Sampler for Boltzmann Distributions via Drifting

Mar 18, 2026Abstract:We present a drifting-based framework for amortized sampling of Boltzmann distributions defined by energy functions. The method trains a one-step neural generator by projecting samples along a Gaussian-smoothed score field from the current model distribution toward the target Boltzmann distribution. For targets specified only up to an unknown normalization constant, we derive a practical target-side drift from a smoothed energy and use two estimators: a local importance-sampling mean-shift estimator and a second-order curvature-corrected approximation. Combined with a mini-batch Gaussian mean-shift estimate of the sampler-side smoothed score, this yields a simple stop-gradient objective for stable one-step training. On a four-mode Gaussian-mixture Boltzmann target, our sampler achieves mean error $0.0754$, covariance error $0.0425$, and RBF MMD $0.0020$. Additional double-well and banana targets show that the same formulation also handles nonconvex and curved low-energy geometries. Overall, the results support drifting as an effective way to amortize iterative sampling from Boltzmann distributions into a single forward pass at test time.

CoINS: Counterfactual Interactive Navigation via Skill-Aware VLM

Jan 07, 2026Abstract:Recent Vision-Language Models (VLMs) have demonstrated significant potential in robotic planning. However, they typically function as semantic reasoners, lacking an intrinsic understanding of the specific robot's physical capabilities. This limitation is particularly critical in interactive navigation, where robots must actively modify cluttered environments to create traversable paths. Existing VLM-based navigators are predominantly confined to passive obstacle avoidance, failing to reason about when and how to interact with objects to clear blocked paths. To bridge this gap, we propose Counterfactual Interactive Navigation via Skill-aware VLM (CoINS), a hierarchical framework that integrates skill-aware reasoning and robust low-level execution. Specifically, we fine-tune a VLM, named InterNav-VLM, which incorporates skill affordance and concrete constraint parameters into the input context and grounds them into a metric-scale environmental representation. By internalizing the logic of counterfactual reasoning through fine-tuning on the proposed InterNav dataset, the model learns to implicitly evaluate the causal effects of object removal on navigation connectivity, thereby determining interaction necessity and target selection. To execute the generated high-level plans, we develop a comprehensive skill library through reinforcement learning, specifically introducing traversability-oriented strategies to manipulate diverse objects for path clearance. A systematic benchmark in Isaac Sim is proposed to evaluate both the reasoning and execution aspects of interactive navigation. Extensive simulations and real-world experiments demonstrate that CoINS significantly outperforms representative baselines, achieving a 17\% higher overall success rate and over 80\% improvement in complex long-horizon scenarios compared to the best-performing baseline

NANO-SLAM : Natural Gradient Gaussian Approximation for Vehicle SLAM

Apr 27, 2025

Abstract:Accurate localization is a challenging task for autonomous vehicles, particularly in GPS-denied environments such as urban canyons and tunnels. In these scenarios, simultaneous localization and mapping (SLAM) offers a more robust alternative to GPS-based positioning, enabling vehicles to determine their position using onboard sensors and surrounding environment's landmarks. Among various vehicle SLAM approaches, Rao-Blackwellized particle filter (RBPF) stands out as one of the most widely adopted methods due to its efficient solution with logarithmic complexity relative to the map size. RBPF approximates the posterior distribution of the vehicle pose using a set of Monte Carlo particles through two main steps: sampling and importance weighting. The key to effective sampling lies in solving a distribution that closely approximates the posterior, known as the sampling distribution, to accelerate convergence. Existing methods typically derive this distribution via linearization, which introduces significant approximation errors due to the inherent nonlinearity of the system. To address this limitation, we propose a novel vehicle SLAM method called \textit{N}atural Gr\textit{a}dient Gaussia\textit{n} Appr\textit{o}ximation (NANO)-SLAM, which avoids linearization errors by modeling the sampling distribution as the solution to an optimization problem over Gaussian parameters and solving it using natural gradient descent. This approach improves the accuracy of the sampling distribution and consequently enhances localization performance. Experimental results on the long-distance Sydney Victoria Park vehicle SLAM dataset show that NANO-SLAM achieves over 50\% improvement in localization accuracy compared to the most widely used vehicle SLAM algorithms, with minimal additional computational cost.

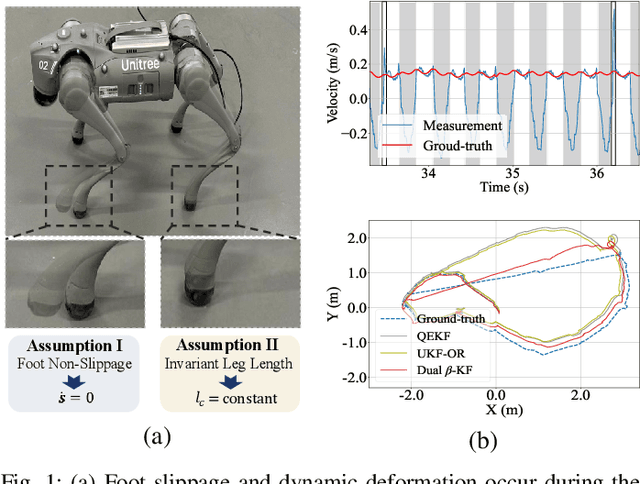

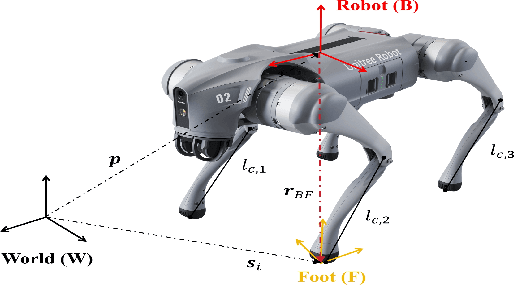

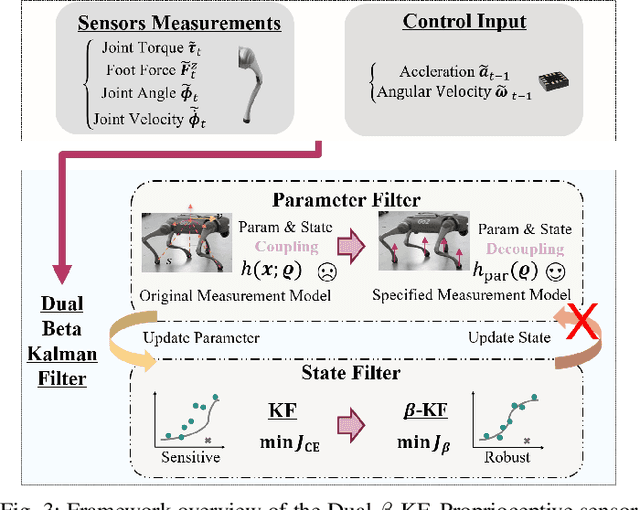

Robust State Estimation for Legged Robots with Dual Beta Kalman Filter

Nov 18, 2024

Abstract:Existing state estimation algorithms for legged robots that rely on proprioceptive sensors often overlook foot slippage and leg deformation in the physical world, leading to large estimation errors. To address this limitation, we propose a comprehensive measurement model that accounts for both foot slippage and variable leg length by analyzing the relative motion between foot contact points and the robot's body center. We show that leg length is an observable quantity, meaning that its value can be explicitly inferred by designing an auxiliary filter. To this end, we introduce a dual estimation framework that iteratively employs a parameter filter to estimate the leg length parameters and a state filter to estimate the robot's state. To prevent error accumulation in this iterative framework, we construct a partial measurement model for the parameter filter using the leg static equation. This approach ensures that leg length estimation relies solely on joint torques and foot contact forces, avoiding the influence of state estimation errors on the parameter estimation. Unlike leg length which can be directly estimated, foot slippage cannot be measured directly with the current sensor configuration. However, since foot slippage occurs at a low frequency, it can be treated as outliers in the measurement data. To mitigate the impact of these outliers, we propose the beta Kalman filter (beta KF), which redefines the estimation loss in canonical Kalman filtering using beta divergence. This divergence can assign low weights to outliers in an adaptive manner, thereby enhancing the robustness of the estimation algorithm. These techniques together form the dual beta-Kalman filter (Dual beta KF), a novel algorithm for robust state estimation in legged robots. Experimental results on the Unitree GO2 robot demonstrate that the Dual beta KF significantly outperforms state-of-the-art methods.

Collecting Larg-Scale Robotic Datasets on a High-Speed Mobile Platform

Aug 01, 2024Abstract:Mobile robotics datasets are essential for research on robotics, for example for research on Simultaneous Localization and Mapping (SLAM). Therefore the ShanghaiTech Mapping Robot was constructed, that features a multitude high-performance sensors and a 16-node cluster to collect all this data. That robot is based on a Clearpath Husky mobile base with a maximum speed of 1 meter per second. This is fine for indoor datasets, but to collect large-scale outdoor datasets a faster platform is needed. This system paper introduces our high-speed mobile platform for data collection. The mapping robot is secured on the rear-steered flatbed car with maximum field of view. Additionally two encoders collect odometry data from two of the car wheels and an external sensor plate houses a downlooking RGB and event camera. With this setup a dataset of more than 10km in the underground parking garage and the outside of our campus was collected and is published with this paper.

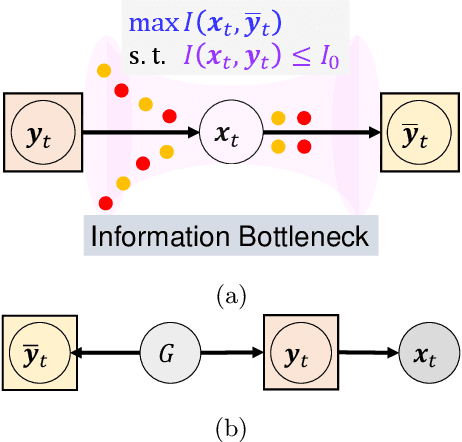

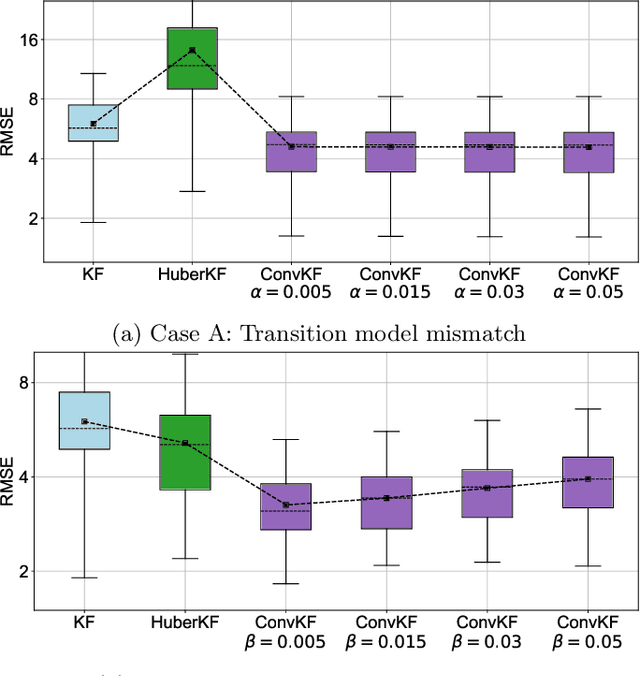

Convolutional Unscented Kalman Filter for Multi-Object Tracking with Outliers

Jun 03, 2024

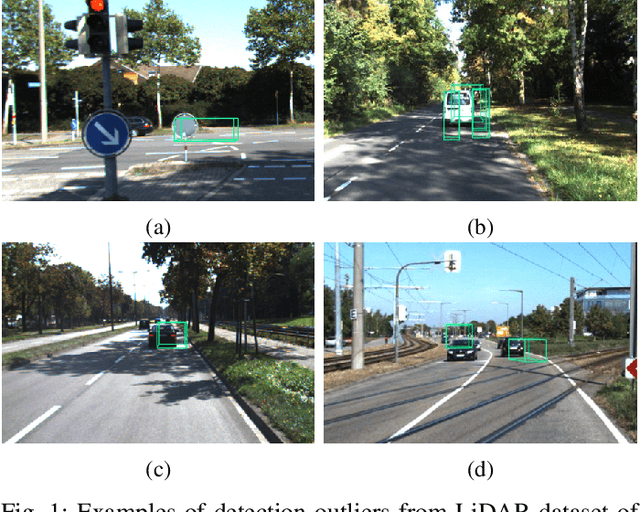

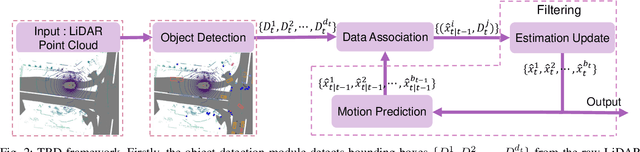

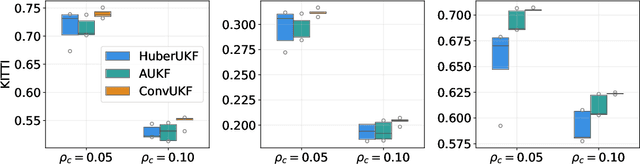

Abstract:Multi-object tracking (MOT) is an essential technique for navigation in autonomous driving. In tracking-by-detection systems, biases, false positives, and misses, which are referred to as outliers, are inevitable due to complex traffic scenarios. Recent tracking methods are based on filtering algorithms that overlook these outliers, leading to reduced tracking accuracy or even loss of the objects trajectory. To handle this challenge, we adopt a probabilistic perspective, regarding the generation of outliers as misspecification between the actual distribution of measurement data and the nominal measurement model used for filtering. We further demonstrate that, by designing a convolutional operation, we can mitigate this misspecification. Incorporating this operation into the widely used unscented Kalman filter (UKF) in commonly adopted tracking algorithms, we derive a variant of the UKF that is robust to outliers, called the convolutional UKF (ConvUKF). We show that ConvUKF maintains the Gaussian conjugate property, thus allowing for real-time tracking. We also prove that ConvUKF has a bounded tracking error in the presence of outliers, which implies robust stability. The experimental results on the KITTI and nuScenes datasets show improved accuracy compared to representative baseline algorithms for MOT tasks.

Convolutional Bayesian Filtering

Mar 30, 2024

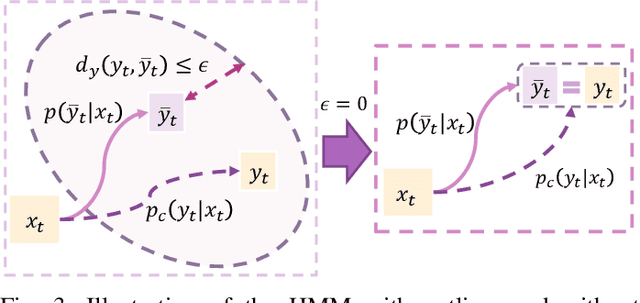

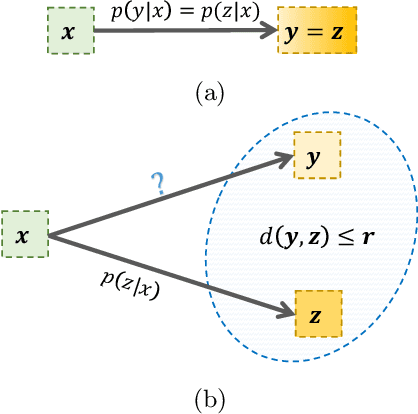

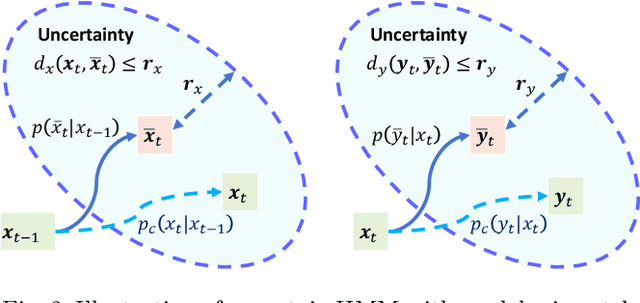

Abstract:Bayesian filtering serves as the mainstream framework of state estimation in dynamic systems. Its standard version utilizes total probability rule and Bayes' law alternatively, where how to define and compute conditional probability is critical to state distribution inference. Previously, the conditional probability is assumed to be exactly known, which represents a measure of the occurrence probability of one event, given the second event. In this paper, we find that by adding an additional event that stipulates an inequality condition, we can transform the conditional probability into a special integration that is analogous to convolution. Based on this transformation, we show that both transition probability and output probability can be generalized to convolutional forms, resulting in a more general filtering framework that we call convolutional Bayesian filtering. This new framework encompasses standard Bayesian filtering as a special case when the distance metric of the inequality condition is selected as Dirac delta function. It also allows for a more nuanced consideration of model mismatch by choosing different types of inequality conditions. For instance, when the distance metric is defined in a distributional sense, the transition probability and output probability can be approximated by simply rescaling them into fractional powers. Under this framework, a robust version of Kalman filter can be constructed by only altering the noise covariance matrix, while maintaining the conjugate nature of Gaussian distributions. Finally, we exemplify the effectiveness of our approach by reshaping classic filtering algorithms into convolutional versions, including Kalman filter, extended Kalman filter, unscented Kalman filter and particle filter.

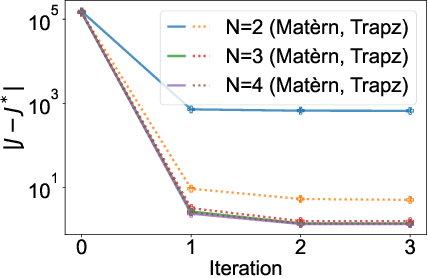

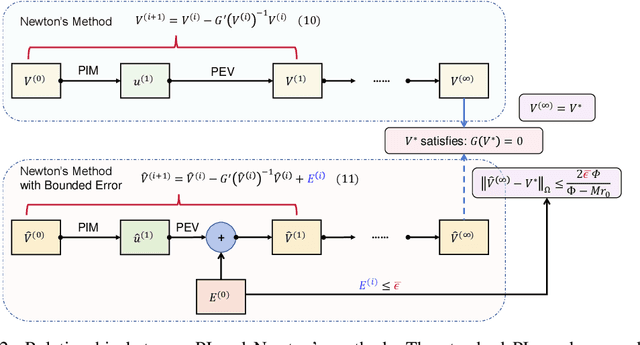

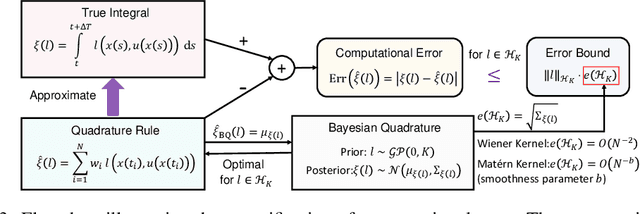

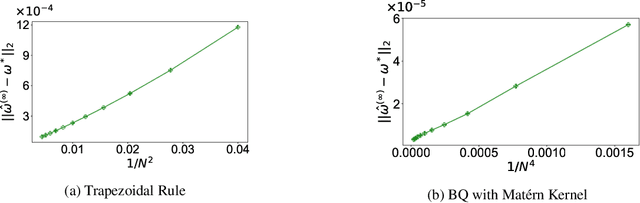

Impact of Computation in Integral Reinforcement Learning for Continuous-Time Control

Feb 27, 2024

Abstract:Integral reinforcement learning (IntRL) demands the precise computation of the utility function's integral at its policy evaluation (PEV) stage. This is achieved through quadrature rules, which are weighted sums of utility functions evaluated from state samples obtained in discrete time. Our research reveals a critical yet underexplored phenomenon: the choice of the computational method -- in this case, the quadrature rule -- can significantly impact control performance. This impact is traced back to the fact that computational errors introduced in the PEV stage can affect the policy iteration's convergence behavior, which in turn affects the learned controller. To elucidate how computation impacts control, we draw a parallel between IntRL's policy iteration and Newton's method applied to the Hamilton-Jacobi-Bellman equation. In this light, computational error in PEV manifests as an extra error term in each iteration of Newton's method, with its upper bound proportional to the computational error. Further, we demonstrate that when the utility function resides in a reproducing kernel Hilbert space (RKHS), the optimal quadrature is achievable by employing Bayesian quadrature with the RKHS-inducing kernel function. We prove that the local convergence rates for IntRL using the trapezoidal rule and Bayesian quadrature with a Mat\'ern kernel to be $O(N^{-2})$ and $O(N^{-b})$, where $N$ is the number of evenly-spaced samples and $b$ is the Mat\'ern kernel's smoothness parameter. These theoretical findings are finally validated by two canonical control tasks.

3D-Printed Hydraulic Fluidic Logic Circuitry for Soft Robots

Jan 30, 2024

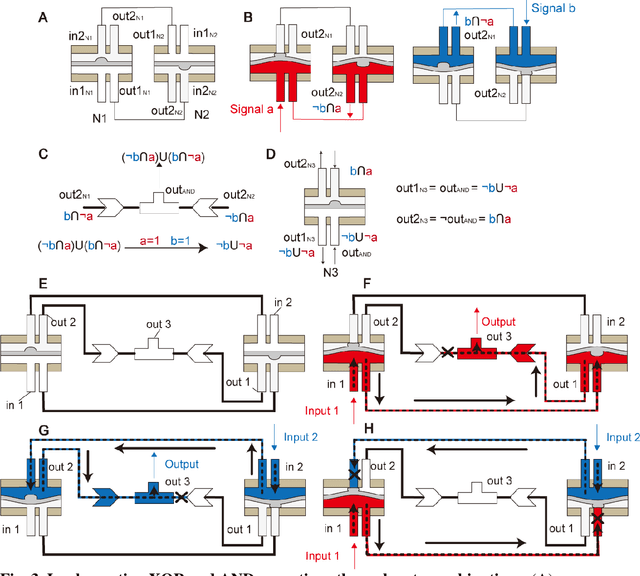

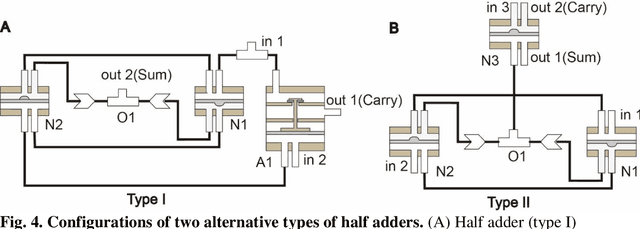

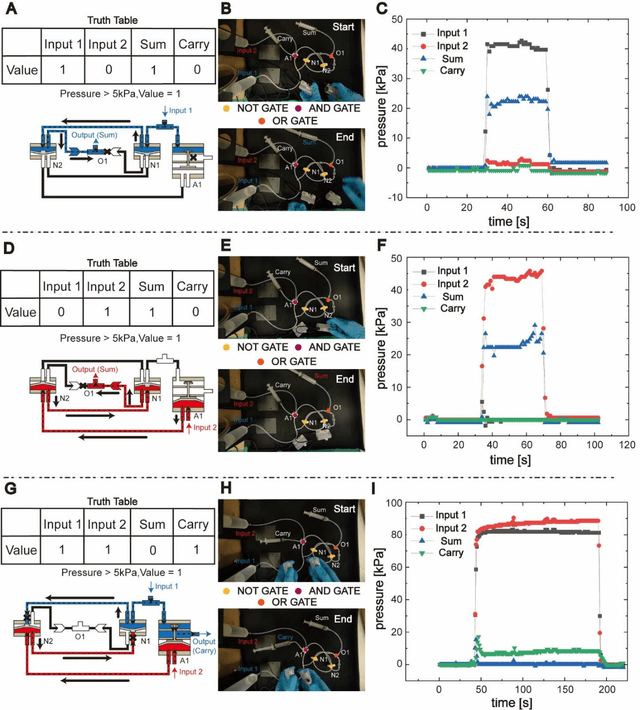

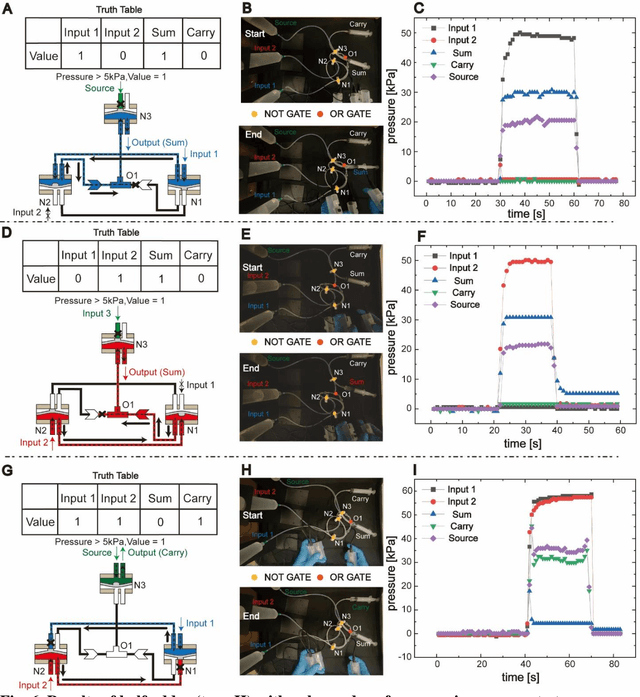

Abstract:Fluidic logic circuitry analogous to its electric counterpart could potentially provide soft robots with machine intelligence due to its supreme adaptability, dexterity, and seamless compatibility using state-of-the-art additive manufacturing processes. However, conventional microfluidic channel based circuitry suffers from limited driving force, while macroscopic pneumatic logic lacks timely responsivity and desirable accuracy. Producing heavy duty, highly responsive and integrated fluidic soft robotic circuitry for control and actuation purposes for biomedical applications has yet to be accomplished in a hydraulic manner. Here, we present a 3D printed hydraulic fluidic half-adder system, composing of three basic hydraulic fluidic logic building blocks: AND, OR, and NOT gates. Furthermore, a hydraulic soft robotic half-adder system is implemented using an XOR operation and modified dual NOT gate system based on an electrical oscillator structure. This half-adder system possesses binary arithmetic capability as a key component of arithmetic logic unit in modern computers. With slight modifications, it can realize the control over three different directions of deformation of a three degree-of-freedom soft actuation mechanism solely by changing the states of the two fluidic inputs. This hydraulic fluidic system utilizing a small number of inputs to control multiple distinct outputs, can alter the internal state of the circuit solely based on external inputs, holding significant promises for the development of microfluidics, fluidic logic, and intricate internal systems of untethered soft robots with machine intelligence.

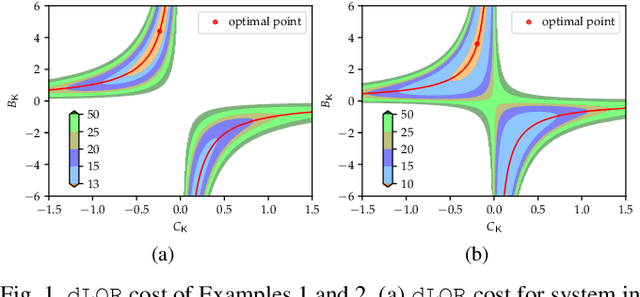

On the Optimization Landscape of Dynamic Output Feedback: A Case Study for Linear Quadratic Regulator

Sep 12, 2022

Abstract:The convergence of policy gradient algorithms in reinforcement learning hinges on the optimization landscape of the underlying optimal control problem. Theoretical insights into these algorithms can often be acquired from analyzing those of linear quadratic control. However, most of the existing literature only considers the optimization landscape for static full-state or output feedback policies (controllers). We investigate the more challenging case of dynamic output-feedback policies for linear quadratic regulation (abbreviated as dLQR), which is prevalent in practice but has a rather complicated optimization landscape. We first show how the dLQR cost varies with the coordinate transformation of the dynamic controller and then derive the optimal transformation for a given observable stabilizing controller. At the core of our results is the uniqueness of the stationary point of dLQR when it is observable, which is in a concise form of an observer-based controller with the optimal similarity transformation. These results shed light on designing efficient algorithms for general decision-making problems with partially observed information.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge